Digital marketing has shifted as the cost of customer acquisition continues to rise across major advertising platforms such as Google Ads and Meta. In this environment, converting traffic into leads or customers is no longer just a tactical advantage but a basic requirement for business sustainability. Even when marketing teams work carefully to establish ad-to-page relevance and craft compelling offers, many find that their conversion rates fall below industry benchmarks. Industry data suggests that while the average conversion rate across sectors hovers around 2.35%, the top 10% of performers achieve rates nearly five times higher by employing rigorous optimization strategies. The primary vehicle for this optimization is A/B testing, a controlled-experiment methodology that lets marketers move beyond intuition and rely on empirical data to improve page performance.

The Strategic Necessity of Conversion Rate Optimization

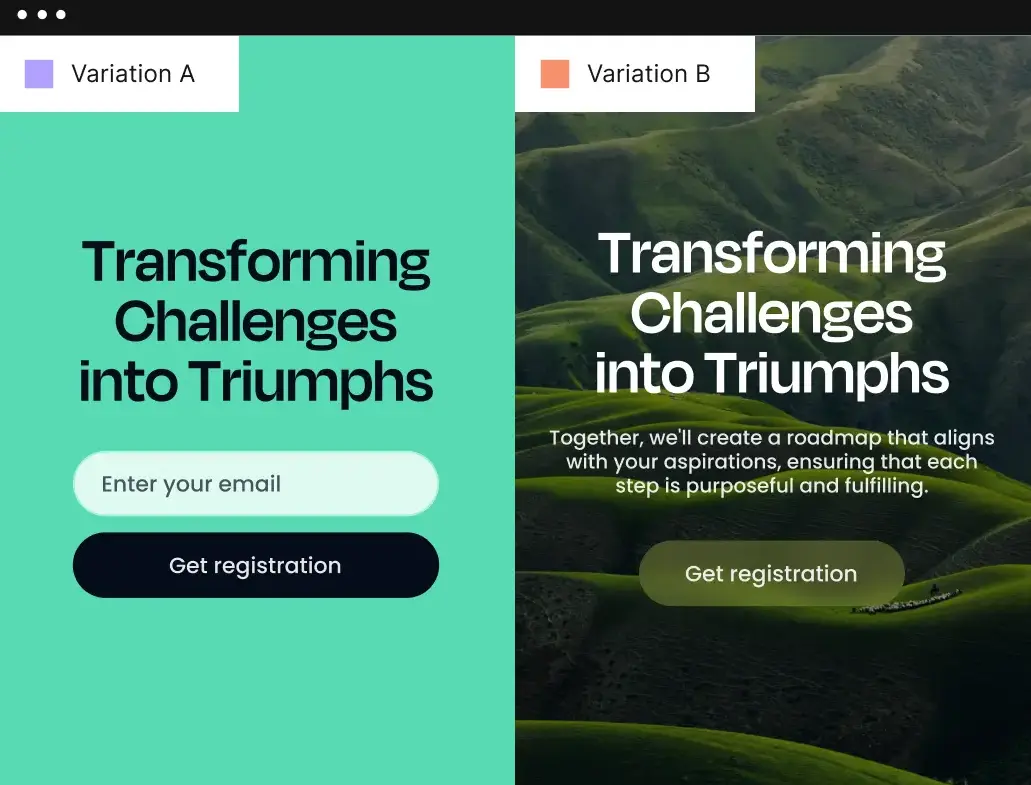

Conversion Rate Optimization (CRO) serves as the bridge between traffic generation and revenue. Creating an effective landing page is inherently complex, requiring the alignment of headlines, visual hierarchy, call-to-action (CTA) placement, and psychological triggers. When a page underperforms, the traditional response has often been to scrap the design entirely, which is costly and time-consuming. Modern marketing frameworks instead prioritize iterative testing. With A/B testing, organizations can isolate a single variable, such as one word in a headline or the color of a submission button, and measure its impact on user behavior.

A/B testing, also known as split testing, involves creating two or more versions of a digital asset, showing each version to a share of visitors, and comparing which performs better. This methodology provides insight into several critical areas: user preference, friction points within the user journey, and the specific messaging that resonates with different audience segments. As digital privacy regulations tighten and third-party cookies are phased out, optimizing first-party experiences on landing pages has become a focal point of the modern marketing stack.
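The traffic split described above can be sketched in a few lines. This is a minimal illustration, not the method of any tool named in this article: the function name `assign_variation`, the experiment label, and the visitor IDs are all hypothetical. It assumes each visitor has a stable identifier and hashes it so that the same visitor always sees the same version.

```python
import hashlib


def assign_variation(visitor_id, experiment, weights):
    """Deterministically map a visitor to a variation.

    `weights` maps variation names to relative traffic shares,
    e.g. {"control": 0.5, "variant": 0.5}. All names are illustrative.
    """
    if not weights:
        raise ValueError("at least one variation is required")

    # Hash the experiment name together with the visitor ID so the same
    # visitor can land in different buckets across different experiments.
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()

    # Turn the first 32 bits of the hash into a number in [0, 1).
    point = int(digest[:8], 16) / 0x100000000

    # Walk the cumulative traffic shares until the point falls in a bucket.
    total = sum(weights.values())
    cumulative = 0.0
    for name, weight in weights.items():
        cumulative += weight / total
        if point < cumulative:
            return name

    # Guard against floating-point rounding on the final bucket.
    return list(weights)[-1]


if __name__ == "__main__":
    shares = {"control": 0.5, "variant": 0.5}
    print(assign_variation("visitor-123", "headline-test", shares))
```

Because the assignment depends only on the hash, no per-visitor state needs to be stored, and a returning visitor keeps seeing the same version for the duration of the experiment.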

A Chronology of Experimentation Technology

The history of A/B testing has moved through three distinct eras. In the early 2000s, testing was a manual, developer-heavy process that required hard-coding different versions of pages and manually diverting server traffic. The second era saw the rise of client-side testing tools, which allowed marketers to use visual editors to "overlay" changes on a page using JavaScript. While this democratized testing, it often led to "flicker" effects and slower page load times, which could paradoxically harm conversion rates.

The current era is defined by server-side experimentation and artificial intelligence. Modern tools integrate directly with the server, so the variation is selected before the page is delivered and the visitor does not see a flicker. In addition, machine learning has moved testing from a static "winner-takes-all" model to dynamic traffic allocation, where algorithms automatically shift traffic toward the better-performing variation in real time, reducing the "regret" of conversions lost during the testing period.

Critical Frameworks for Evaluating Testing Platforms

For organizations looking to integrate a testing platform into their workflow, the selection process must be governed by several technical and operational criteria. These include the ease of integration with existing marketing stacks, the impact on page load speed, the sophistication of the analytics dashboard, and the level of technical debt the tool introduces.

- Deployment Methodology: Does the tool use client-side or server-side execution? Server-side is generally preferred for performance and SEO stability.

- AI Integration: Does the platform offer generative AI for copy variations or automated traffic steering?

- Data Depth: Does the tool provide qualitative data (like heatmaps) alongside quantitative metrics (like conversion rates)?

- Usability: Can a marketing manager deploy a test without significant intervention from the engineering or IT department?

Leading Solutions in the A/B Testing Market

1. Instapage: The Integration of Construction and Experimentation

Instapage has positioned itself at the top of the market by offering a unified ecosystem for building, testing, and optimizing landing pages. Unlike standalone testing tools that must be "bolted on" to an existing site, Instapage integrates experimentation directly into the creation process. This no-code platform uses server-side experimentation, which avoids the performance lag associated with older client-side testing technologies.

The platform’s "AI Experiments" feature represents a significant step forward in CRO. Traditional A/B testing requires a test to run until it reaches "statistical significance," the point at which the observed difference between variations is unlikely to be due to chance alone. Depending on traffic volume, this can take weeks or months. Instapage’s AI-driven dynamic traffic allocation tracks the progress of an experiment and automatically directs more traffic to the higher-performing variation as the test progresses. This reduces the ad spend that goes to a losing variation while the test is still collecting data.
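To make "statistical significance" concrete, here is a minimal sketch of one common way to check it in a traditional fixed-split test: a two-proportion z-test. This is a textbook method chosen for illustration, not a description of how Instapage or any other tool computes its results. The function name and the visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_a / conv_b are conversion counts; n_a / n_b are visitor counts.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b

    # Pooled rate under the null hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        # No variation in the data (all or no visitors converted).
        return 0.0, 1.0

    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 10,000 visitors per version.
    z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=260, n_b=10_000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A common convention is to call the result significant when the p-value falls below 0.05. With low traffic, the standard error shrinks slowly, which is why a fixed-split test can take weeks to reach that threshold.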

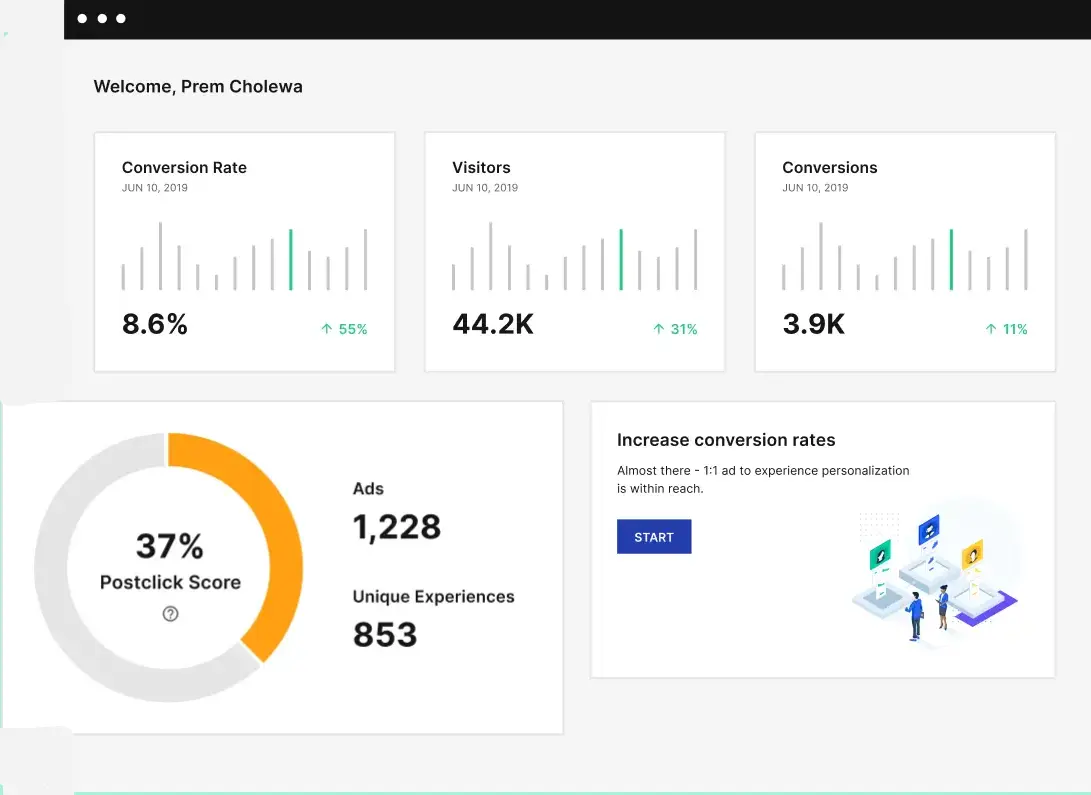

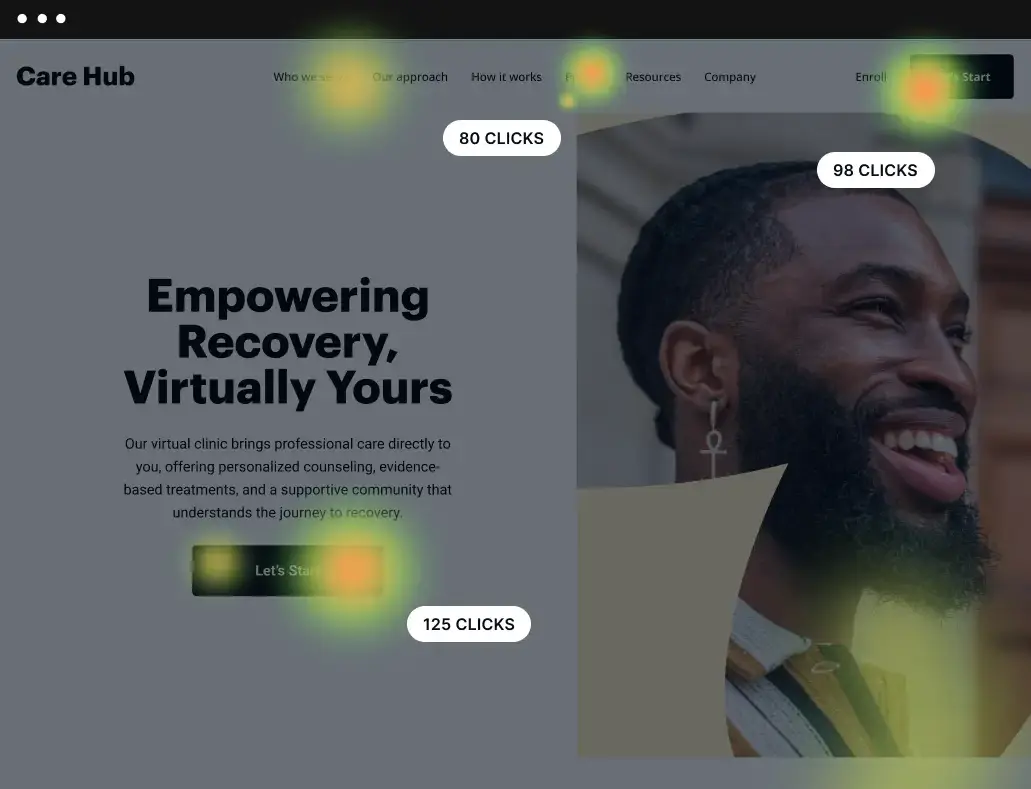

Furthermore, Instapage provides a comprehensive analytics suite that tracks visitors, conversions, cost-per-visitor, and cost-per-lead. To provide a holistic view of the user experience, the platform includes built-in heatmaps. These visual tools track mouse movement, clicks, and scroll depth, allowing marketers to see exactly where users are engaging and where they are dropping off. This qualitative data is essential for forming the hypotheses that drive future A/B tests.

2. VWO: Specialization in Split URL Testing

VWO (Visual Website Optimizer) remains a formidable player in the space, particularly for marketers who need to test radical departures in page design. While many tools focus on element-level changes, VWO excels in split URL testing. This allows a company to host two entirely different versions of a landing page on different URLs and split the traffic between them. This is particularly useful for rebranding efforts or when testing a completely different conversion funnel. VWO’s platform provides detailed probability metrics, helping teams understand not just which version won, but the likelihood that the winning version will continue to outperform in the future.
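One way to arrive at a "probability that a version is better" figure is a Bayesian comparison of the two conversion rates. The sketch below is an assumption-laden illustration of that general idea, not VWO's actual implementation: it assumes a uniform Beta(1, 1) prior on each rate and estimates the probability by Monte Carlo sampling. The function name and counts are hypothetical.

```python
import random


def probability_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Estimate P(rate_B > rate_A) under independent Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so repeated runs agree
    wins = 0
    for _ in range(draws):
        # Posterior for each rate: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


if __name__ == "__main__":
    # Hypothetical counts: 10,000 visitors per version.
    prob = probability_b_beats_a(conv_a=200, n_a=10_000, conv_b=260, n_b=10_000)
    print(f"Estimated probability that B beats A: {prob:.3f}")
```

Unlike a p-value, this number answers the question teams usually ask directly: given the data so far, how likely is it that the challenger is genuinely the better page?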

3. Optimizely: Enterprise-Grade Scalability

Optimizely has evolved into a comprehensive digital experience platform (DXP), catering largely to enterprise-level organizations. Its Web Experimentation tool is designed to bridge the gap between technical teams and general users. With a robust visual editor and extension templates, it reduces the reliance on developers for front-end changes. Optimizely’s strength lies in its ability to run experiments at the "network edge," which ensures a swift user experience regardless of the user’s geographic location. Their embedded AI capabilities also assist in generating copy variations for CTAs, making it easier to scale experimentation across thousands of pages.

4. GrowthBook: The Data-Centric, Open-Source Alternative

GrowthBook offers a different philosophy, focusing on transparency and avoiding vendor lock-in. As an open-source platform, it allows companies to maintain full control over their data. It integrates directly with major SQL data sources, such as BigQuery, Snowflake, and Redshift, as well as analytics tools like Mixpanel and Google Analytics. GrowthBook is favored by data science teams who want to run unlimited tests and apply complex statistical models to their results without the restrictions of a proprietary "black box" algorithm.

Case Study: The Impact of Systematic Testing at Verizon

The real-world efficacy of these tools is best illustrated by the experience of the Verizon Digital Media Services (VDMS) team. Faced with high conversion costs, the team utilized Instapage’s A/B testing features to validate page elements. By observing visitor behavior first-hand and experimenting with multiple variations in a controlled environment, Verizon was able to identify friction points that were previously invisible. The result was a reduction in cost per conversion by more than 50%. This case study highlights a critical reality: even minor adjustments in page architecture, when validated by data, can lead to massive improvements in ROI.

Technical Analysis: The Multi-Armed Bandit Problem

The shift from standard A/B testing to the dynamic traffic allocation seen in tools like Instapage is a response to what mathematicians call the "Multi-Armed Bandit" problem: how to balance exploring uncertain options against exploiting the one that currently looks best. In a standard test, if Version A is clearly outperforming Version B after three days but the test requires ten days to reach statistical significance, the marketer "loses" the additional conversions that Version A would have generated from the traffic sent to Version B during the remaining seven days. Dynamic allocation addresses this by gradually tapering off traffic to the apparent "loser" and shifting it to the apparent "winner" mid-test. This approach is becoming the standard for high-volume advertisers who cannot afford the opportunity cost of traditional testing.
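The dynamic allocation described above can be illustrated with Thompson sampling, one well-known bandit strategy. This is a simulation sketch under stated assumptions, not the algorithm used by Instapage or any other vendor, whose internals are not described here. The function name, the "true" conversion rates, and the visitor count are all hypothetical.

```python
import random


def thompson_sampling(true_rates, visitors, seed=0):
    """Simulate dynamic traffic allocation and return visitors sent to each version.

    `true_rates` are the (normally unknown) conversion rates used only to
    simulate visitor behavior; the algorithm itself never reads them directly.
    """
    rng = random.Random(seed)
    arms = len(true_rates)
    successes = [0] * arms  # conversions observed per version
    failures = [0] * arms   # non-conversions observed per version

    for _ in range(visitors):
        # Draw a plausible conversion rate for each version from its
        # Beta posterior, then send this visitor to the highest draw.
        samples = [
            rng.betavariate(1 + successes[i], 1 + failures[i])
            for i in range(arms)
        ]
        chosen = max(range(arms), key=samples.__getitem__)

        # Simulate whether this visitor converts.
        if rng.random() < true_rates[chosen]:
            successes[chosen] += 1
        else:
            failures[chosen] += 1

    return [s + f for s, f in zip(successes, failures)]


if __name__ == "__main__":
    traffic = thompson_sampling(true_rates=[0.02, 0.10], visitors=5_000)
    print("Visitors per version:", traffic)
```

Early on, both versions receive traffic because their posteriors overlap. As evidence accumulates, the weaker version wins fewer draws and its share of traffic tapers off without anyone having to stop the test manually.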

Broader Implications for the Marketing Industry

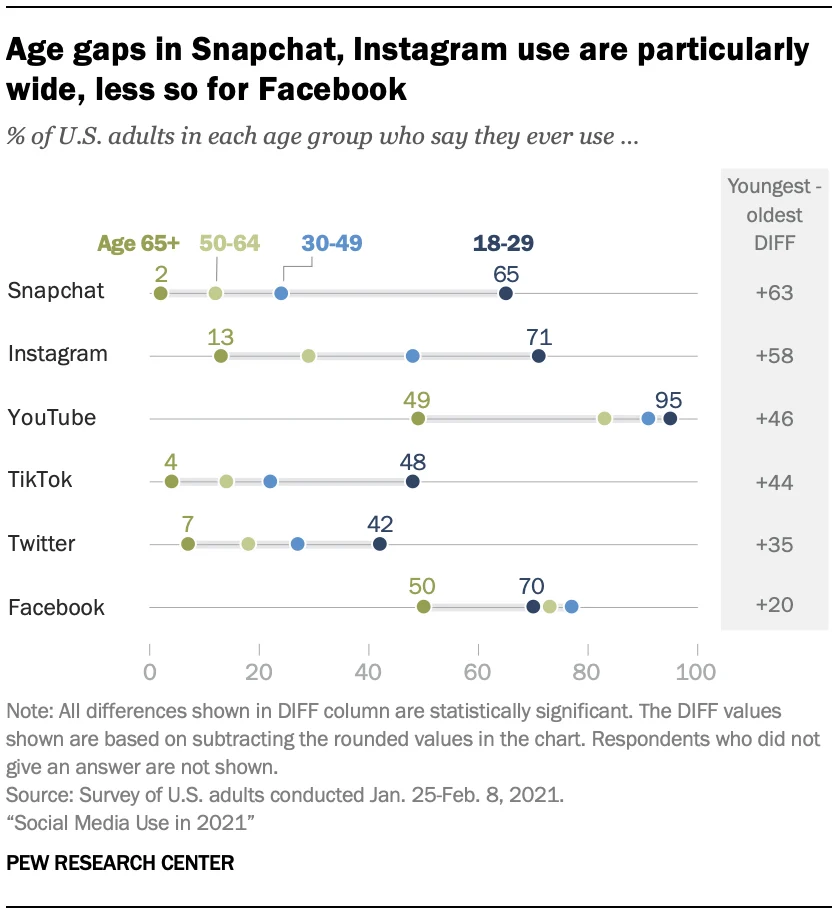

The democratization of high-level A/B testing tools signifies a shift in the marketing profession. The role of the "Creative Director" is increasingly being supplemented, or in some cases replaced, by the "Growth Engineer" or "Data Analyst." Decisions that were once made based on aesthetic preference are now made based on conversion data.

Moreover, the integration of AI into these tools suggests a future where landing pages are not just tested but hyper-personalized in real time. As AI learns which headlines work for users coming from specific ZIP codes or device types, the "static" landing page may become obsolete, replaced by a fluid, generative experience that optimizes itself for every individual visitor. For businesses, the message is clear: the cost of not testing is the highest cost of all. By adopting a culture of experimentation and using the right technology stack, organizations can ensure that their digital presence is an engine for growth rather than a drain on resources.