The digital marketing landscape has long been driven by the pursuit of optimization, yet a growing discrepancy between advertised software specifications and real-world performance is raising concerns among data scientists and web developers. In the competitive field of A/B testing (a method of comparing two versions of a webpage to determine which performs better), vendors frequently highlight "lightweight" script sizes as a primary selling point. Claims of snippets as small as 2.8 KB or 17 KB are common, suggesting that these tools will have a negligible impact on page load speeds. However, a comprehensive investigation into the execution footprints of leading experimentation platforms reveals that these initial figures often represent only a "loader" or "stub," and that the true payload required to run experiments can reach hundreds of kilobytes.

This gap between marketing claims and technical reality has significant implications for Core Web Vitals, search engine optimization (SEO), and the overall user experience. As organizations increasingly rely on client-side experimentation to drive conversions, understanding the architectural trade-offs of these tools has become a critical requirement for technical stakeholders.

The Discrepancy Between Advertised and Actual Script Sizes

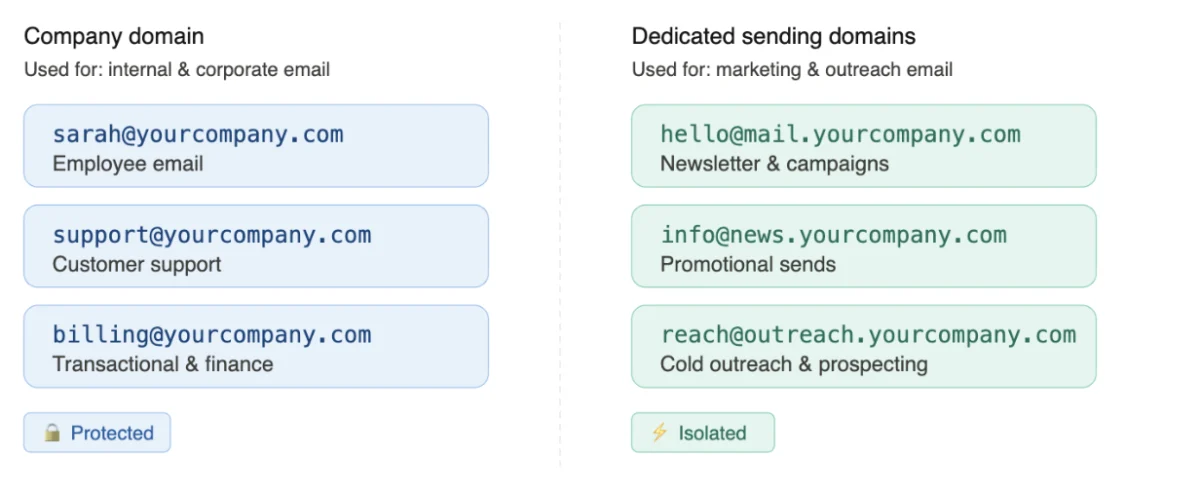

In the current software-as-a-service (SaaS) market, "snippet size" has become a shorthand for performance. The logic appears sound: a smaller script should download faster, leading to a quicker Time to Interactive (TTI). However, the technical reality of how modern A/B testing tools function is more complex. Most tools utilize a two-stage loading process. The first stage is the installation of a small "stub" or "loader" script on the website. This is the figure typically cited in marketing materials. The second stage involves this loader fetching the actual experimentation engine, configuration files, and variation code from a Content Delivery Network (CDN) or API after the page has begun to render.

A recent audit of production environments across several major platforms—including VWO, ABlyft, Mida.so, and Convert—highlights the extent of this payload deferral. For instance, while VWO advertises a 2.8 KB stub, direct measurements from live customer sites show a gzipped base SDK of at least 14.7 KB, with total execution payloads often climbing to approximately 254 KB once all campaign data is loaded. Similarly, ABlyft’s 13 KB claim was found to correspond to a gzipped SDK of 32 KB, which translates to an uncompressed footprint of roughly 168.5 KB.

These findings suggest that "smallest snippet size" is frequently a weak metric for evaluating the true performance impact of a testing tool. By deferring the payload, vendors can claim a small initial footprint, but the browser must still eventually download and execute the full weight of the code to deliver the experiment to the user.
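To put the gap in proportion, the short Python sketch below divides the measured figures by the advertised ones. It uses only the numbers quoted in the audit above; the dictionary layout and function names are illustrative assumptions. The "largest measured" values are not like-for-like: the VWO figure is a total execution payload, while the ABlyft figure is an uncompressed SDK size.

```python
# Figures quoted in the audit above, in KB. The field names are illustrative.
# "largest_measured" mixes measurement types: a total execution payload for
# VWO and an uncompressed SDK size for ABlyft.
AUDIT = {
    "VWO": {"advertised": 2.8, "measured_gzip": 14.7, "largest_measured": 254.0},
    "ABlyft": {"advertised": 13.0, "measured_gzip": 32.0, "largest_measured": 168.5},
}


def inflation(advertised_kb: float, actual_kb: float) -> float:
    """Return how many times larger the measured size is than the advertised one."""
    return actual_kb / advertised_kb


def summarize(audit: dict) -> dict:
    """Compute both inflation factors for every vendor in the audit table."""
    return {
        vendor: {
            "gzip_vs_advertised": inflation(kb["advertised"], kb["measured_gzip"]),
            "largest_vs_advertised": inflation(kb["advertised"], kb["largest_measured"]),
        }
        for vendor, kb in audit.items()
    }


for vendor, factors in summarize(AUDIT).items():
    print(
        f"{vendor}: gzipped SDK is {factors['gzip_vs_advertised']:.1f}x the claim, "
        f"largest measured figure is {factors['largest_vs_advertised']:.1f}x"
    )
```

On these numbers, the gzipped VWO SDK alone is roughly five times the advertised stub, and the fully loaded payload is roughly ninety times larger.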

Methodology of the Technical Investigation

To uncover the true execution cost of A/B testing scripts, researchers employed a multi-step investigative process designed to simulate real-world user conditions. The study moved beyond the static analysis of code snippets provided in documentation and instead focused on "in-the-wild" implementations.

The first phase involved direct measurement from production environments. Using command-line tools like curl, investigators captured the tracking scripts from live customer websites. This allowed for the measurement of both the gzipped transfer size (the compressed data sent over the network) and the uncompressed payload (the actual amount of JavaScript the browser must parse and execute).
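The two sizes described above can be approximated with Python's standard library. This is a minimal sketch, not the investigators' actual tooling: `measure_script` compresses locally, so its gzip figure only estimates what a server would send (servers may use a different compression level or Brotli). `fetch_script` is an assumed helper that requests a URL you supply with gzip encoding and reports the bytes actually received.

```python
import gzip
import urllib.request


def measure_script(raw_js: bytes) -> dict:
    """Compare the uncompressed size of a script with a local gzip estimate."""
    compressed = gzip.compress(raw_js, compresslevel=6)
    return {
        "uncompressed_bytes": len(raw_js),
        "gzip_bytes": len(compressed),
        "ratio": len(raw_js) / len(compressed),
    }


def fetch_script(url: str, timeout: float = 10.0) -> dict:
    """Fetch a script and report transfer size versus decoded size.

    urllib does not decompress responses automatically, so the length of the
    raw body is the compressed transfer size when the server honours gzip.
    """
    request = urllib.request.Request(url, headers={"Accept-Encoding": "gzip"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = response.read()
        encoding = response.headers.get("Content-Encoding", "")
    decoded = gzip.decompress(body) if encoding == "gzip" else body
    return {"transfer_bytes": len(body), "uncompressed_bytes": len(decoded)}


# Offline demonstration on a repetitive stand-in payload (not real vendor code).
sample = b"function applyVariation(el){el.classList.add('variant-b');}\n" * 200
result = measure_script(sample)
print(result["uncompressed_bytes"], result["gzip_bytes"])
```

The uncompressed figure is the one that matters for parse and execution cost, while the compressed figure matters for network transfer time.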

The second phase consisted of code-level analysis to trace the execution path. Researchers looked for patterns of "progressive injection," a technique where a script dynamically introduces additional scripts or external API calls at runtime. By monitoring the network waterfall in Browser Developer Tools, the team could identify secondary scripts, configuration files, and dynamically injected resources that are often hidden from the initial page source code.
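A rough first pass at spotting progressive injection is to scan a captured loader for the JavaScript constructs that typically pull in more code at runtime. The sketch below is an assumption about how such a scan might look, not the method used in the study; the pattern list is incomplete, the sample loader and its `example.com` URLs are made up, and minified or obfuscated code can easily evade simple regular expressions. A network waterfall remains the more reliable evidence.

```python
import re

# Constructs that commonly indicate a script is loading further resources.
INJECTION_PATTERNS = {
    "script_element": re.compile(r"createElement\(\s*['\"]script['\"]\s*\)"),
    "fetch_call": re.compile(r"\bfetch\s*\("),
    "xhr": re.compile(r"\bXMLHttpRequest\b"),
    "dynamic_import": re.compile(r"\bimport\s*\("),
}


def find_injection_hints(js_source: str) -> dict:
    """Count occurrences of each runtime-loading pattern in a script."""
    return {
        name: len(pattern.findall(js_source))
        for name, pattern in INJECTION_PATTERNS.items()
    }


# Hypothetical loader stub, written for illustration only.
loader = """
(function(){
  var s = document.createElement('script');
  s.src = 'https://cdn.example.com/engine.js';
  document.head.appendChild(s);
  fetch('https://api.example.com/config');
})();
"""

hints = find_injection_hints(loader)
print(hints)
# {'script_element': 1, 'fetch_call': 1, 'xhr': 0, 'dynamic_import': 0}
```

Any non-zero count is a prompt to open the network panel and see what the stub actually requests.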

Finally, the gathered data was benchmarked against official vendor documentation and third-party performance studies. This comparison aimed to identify where transparency was lacking and which architectural models provided the most predictable performance profiles.

Architectural Trade-offs: Embedded Bundles vs. Progressive Loading

The investigation categorized A/B testing tools into three primary architectural types, each with distinct advantages and disadvantages regarding performance and data reliability.

1. Embedded Bundles (The Upfront Model)

Platforms like Convert utilize an embedded bundle approach. In this model, the script delivered to the browser contains the full experimentation engine along with all active experiences and targeting logic in a single request. While this results in a larger initial snippet—typically around 93 KB to 110 KB gzipped—it eliminates the need for subsequent network requests during the page load process. The primary benefit is predictability; the browser knows exactly how much code it needs to handle from the start, and there is no risk of the experiment failing to load due to a delayed secondary request.

2. Stub and API Configuration (The Deferred Model)

This is the most common model among tools like VWO, Mida.so, and ABlyft. A lightweight loader is placed on the page, which then fetches the necessary configurations and variation code via API calls. While this allows for "small snippet" marketing claims, it introduces a "distributed payload" risk. If the secondary requests are delayed by network latency, the user may see the original version of the page before the variation is applied—a phenomenon known as "flicker" or Flash of Original Content (FOOC).
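The flicker risk in the deferred model comes down to timing: if the variation is ready only after the browser has painted the original content, the user sees the swap. The sketch below is a deliberately simplified model with made-up timings; the class and field names are assumptions, and real pages involve caching, parallel requests, and script execution time that this ignores.

```python
from dataclasses import dataclass


@dataclass
class DeferredLoad:
    loader_ms: float       # time until the stub has downloaded and run
    config_ms: float       # extra time for the secondary config/variation request
    first_paint_ms: float  # when the browser first paints the original content

    @property
    def variation_ready_ms(self) -> float:
        """The variation can only apply after both stages have finished."""
        return self.loader_ms + self.config_ms

    def flicker_window_ms(self) -> float:
        """How long the original content is visible before the variation applies."""
        return max(0.0, self.variation_ready_ms - self.first_paint_ms)


# Illustrative timings only, not measurements from any vendor.
fast = DeferredLoad(loader_ms=80.0, config_ms=120.0, first_paint_ms=400.0)
slow = DeferredLoad(loader_ms=80.0, config_ms=900.0, first_paint_ms=400.0)

print(fast.flicker_window_ms())  # 0.0
print(slow.flicker_window_ms())  # 580.0
```

The loader is the same size in both cases; only the latency of the secondary request changes, which is why snippet size alone says little about flicker.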

3. Feature Flagging and Server-Side Testing

Tools like Amplitude Experiment often focus on feature flagging. These systems return variant decisions rather than full DOM (Document Object Model) manipulations. While extremely lightweight, they are not directly comparable to traditional client-side A/B testing tools, as they require more substantial backend implementation and are generally used for product feature releases rather than visual UI testing.

Impact on Core Web Vitals and SEO

The timing and size of these scripts directly affect Google’s Core Web Vitals, a set of metrics used to measure the user experience of a webpage. Two metrics in particular are sensitive to A/B testing implementations: Largest Contentful Paint (LCP) and Cumulative Layout Shift (CLS).

LCP measures when the largest content element on the screen becomes visible. If an A/B testing script is large or relies on multiple deferred requests, it can delay the rendering of the page, thereby increasing the LCP time and potentially harming the site’s search engine ranking.

CLS measures the visual stability of a page. In "stub-based" architectures, if the variation code arrives after the page has already rendered, the sudden injection of new elements or style changes can cause the layout to jump. To mitigate this, many vendors use "anti-flicker" snippets that temporarily hide the entire page content until the experiment is ready. While this prevents layout shifts, it creates a "blank screen" effect that can frustrate users and further inflate LCP and other loading metrics.
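The trade-off of an anti-flicker snippet can be sketched as a small timing model. This assumes a simplified rule that hidden content cannot count toward LCP until the page is revealed, and that the snippet reveals the page either when the experiment is ready or when a timeout expires, whichever comes first. The function names and all timings are illustrative assumptions, not vendor behavior.

```python
def unhide_time_ms(experiment_ready_ms: float, timeout_ms: float) -> float:
    """The page is revealed when the experiment is ready or the timeout fires."""
    return min(experiment_ready_ms, timeout_ms)


def effective_lcp_ms(
    natural_lcp_ms: float, experiment_ready_ms: float, timeout_ms: float
) -> float:
    """LCP cannot occur before the page is revealed (simplified model)."""
    return max(natural_lcp_ms, unhide_time_ms(experiment_ready_ms, timeout_ms))


def flickers_anyway(experiment_ready_ms: float, timeout_ms: float) -> bool:
    """If the timeout fires first, the original content shows before the variation."""
    return experiment_ready_ms > timeout_ms


# Experiment arrives late but before the timeout: LCP is pushed from 1200 to 1800.
print(effective_lcp_ms(1200.0, 1800.0, 4000.0))  # 1800.0

# Experiment misses the timeout: LCP is pushed to 4000 and flicker still occurs.
print(effective_lcp_ms(1200.0, 6000.0, 4000.0))  # 4000.0
print(flickers_anyway(6000.0, 4000.0))           # True

# Experiment is ready early: hiding the page costs nothing in this model.
print(effective_lcp_ms(2500.0, 900.0, 4000.0))   # 2500.0
```

In the worst case the page pays the full timeout in delayed rendering and still shows the original content first.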

Industry Reactions and the Demand for Transparency

The discrepancy in reporting has sparked a debate within the conversion rate optimization (CRO) community. Many performance-focused engineers argue that the current industry standards for reporting script size are misleading. The lack of transparency makes it difficult for organizations to conduct an accurate cost-benefit analysis when choosing a testing vendor.

While vendors argue that progressive loading is necessary to manage complex experiment setups without blocking the initial render, critics point out that the total "weight" on the user’s device remains the same. The consensus among technical leads is shifting toward a "Total Payload" metric rather than "Initial Snippet Size." This shift encourages vendors to be more honest about the full resources required to run their software.

Furthermore, the investigation highlights that "zero performance drop" claims, such as those made by newer AI-driven testing platforms, are often impossible to verify without public disclosure of their payload data and execution logic. In an era where web performance is synonymous with revenue, the "black box" approach to script delivery is increasingly viewed with skepticism.

Broader Implications for the MarTech Ecosystem

The findings of this investigation serve as a cautionary tale for the broader Marketing Technology (MarTech) ecosystem. As websites become increasingly burdened by "tag bloat"—the accumulation of various tracking and optimization scripts—the cumulative effect on browser performance can be devastating.

For businesses, the lesson is clear: evaluating a tool based on a single marketing metric is insufficient. A holistic evaluation must include:

- Total Execution Payload: The sum of all JavaScript, CSS, and configuration data required for a test.

- Network Waterfall Analysis: Monitoring how many separate requests are made and their impact on the loading sequence.

- Execution Timing: Whether the tool applies changes before or after the initial DOM is ready.

- Flicker Mitigation Strategy: Assessing whether the tool uses "hide-the-page" tactics that may mask performance issues while hurting UX.
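The first two checks in this list can be partly automated from a HAR file exported from the browser's network panel. The sketch below sums the requests, transferred bytes, and uncompressed bytes attributable to a vendor. It relies on the standard HAR fields `request.url`, `response.bodySize`, and `response.content.size`; the host names and sizes in the sample are invented for illustration, and matching by exact host is an assumption that will miss vendors who serve from several domains.

```python
from urllib.parse import urlparse


def summarize_vendor_payload(har: dict, vendor_hosts: set) -> dict:
    """Total the requests and bytes in a HAR log that come from the given hosts."""
    requests = 0
    transfer_bytes = 0
    uncompressed_bytes = 0
    for entry in har["log"]["entries"]:
        host = urlparse(entry["request"]["url"]).hostname or ""
        if host not in vendor_hosts:
            continue
        requests += 1
        response = entry["response"]
        # HAR uses -1 for unknown sizes, so clamp negatives to zero.
        transfer_bytes += max(response.get("bodySize", -1), 0)
        uncompressed_bytes += max(response.get("content", {}).get("size", 0), 0)
    return {
        "requests": requests,
        "transfer_bytes": transfer_bytes,
        "uncompressed_bytes": uncompressed_bytes,
    }


# Hand-written sample in HAR shape; hosts and sizes are made up.
sample_har = {
    "log": {
        "entries": [
            {
                "request": {"url": "https://cdn.vendor.example/loader.js"},
                "response": {"bodySize": 2800, "content": {"size": 7000}},
            },
            {
                "request": {"url": "https://api.vendor.example/config.json"},
                "response": {"bodySize": 60000, "content": {"size": 250000}},
            },
            {
                "request": {"url": "https://www.shop.example/app.js"},
                "response": {"bodySize": 40000, "content": {"size": 120000}},
            },
        ]
    }
}

totals = summarize_vendor_payload(
    sample_har, {"cdn.vendor.example", "api.vendor.example"}
)
print(totals)
# {'requests': 2, 'transfer_bytes': 62800, 'uncompressed_bytes': 257000}
```

Running the same summary against each shortlisted tool, on the same page and network profile, gives a like-for-like "total payload" figure in place of the advertised snippet size.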

As the industry moves forward, the demand for "Performance-First" experimentation will likely force vendors to optimize their actual engines rather than just their initial loaders. For now, the responsibility lies with digital teams to look beyond the marketing snippets and analyze the true cost of the code they deploy on their digital storefronts. The ultimate goal of A/B testing is to improve the user experience and increase revenue; it is a profound irony if the very tools used to achieve this end up driving users away through degraded site performance.