In the rapidly evolving landscape of digital commerce and software development, experimentation has transitioned from a niche technical requirement to a core business strategy. Organizations across the globe are increasingly turning to A/B testing and Conversion Rate Optimization (CRO) to de-risk product launches and drive incremental revenue. However, a significant number of these programs stall before they can deliver meaningful results. The primary culprit, according to industry experts, is a phenomenon known as the "big test trap." This organizational hurdle occurs when teams prioritize massive, transformative experiments over small, iterative learnings, leading to development bottlenecks and a loss of institutional momentum.

Lucia van den Brink, the founder of the consultancy The Initial and a prominent voice in the experimentation space, recently addressed these challenges on the VWO Podcast. Her insights provide a roadmap for organizations to move beyond the initial excitement of experimentation into a phase of sustainable, high-velocity testing that balances strategic ambition with operational reality.

The Anatomy of the Big Test Trap

The narrative of a failing experimentation program often begins with a team receiving executive approval after months of internal advocacy. Driven by the need to prove the value of the program immediately, leadership and product teams often gravitate toward a "grand slam" approach. The logic appears sound on the surface: if the change is significant, such as a full website redesign or a new checkout flow, the resulting data should be clear and the impact substantial.

In practice, however, these large-scale projects often become "vaporware." Lucia van den Brink cites instances where teams spend three months in design reviews and another six months in development. A year into the program, the "first test" has yet to go live. By this point, the initial budget may be depleted, stakeholders have lost interest, and the experimentation program is declared a failure before it has truly begun.

The "big test trap" is fueled by several organizational factors:

- High Stakes Visibility: Large projects attract the attention of C-suite executives, creating a pressure cooker environment where failure is not seen as a learning opportunity but as a waste of resources.

- Resource Competition: Major experiments require significant engineering and design capacity, often putting the experimentation team at odds with the core product roadmap.

- Complexity of Attribution: When a test bundles many simultaneous changes into a single variant (such as a new UI, a new pricing model, and new copy), the result shows only whether the bundle as a whole performed better or worse. It cannot isolate which specific change drove the outcome.

A Chronology of Sustainable Growth: The Four-Step Framework

To counter these systemic failures, Van den Brink proposes a structured four-step framework designed to build habits, systems, and portfolio balance from the outset.

Step 1: Recognizing and Mitigating the Urge for Complexity

The first step in any successful program is acknowledging that the "big test" is often a distraction. Van den Brink highlights that companies almost universally start with a massive idea because of an implicit belief that impact is proportional to the size of the change.

She recalls a product owner whose first proposed experiment was a complete fraud detection system. Another client attempted to launch a full redesign of their primary landing page as their maiden test. While these projects are important, they are unsuitable for an initial experiment because they lack "velocity." In the early stages of a CRO program, the goal is not just to win, but to establish a workflow. By choosing smaller, more manageable tests, teams can navigate the technical hurdles of their testing platform and clear the path for more complex experiments later.

Step 2: Prioritizing High-Impact, Low-Effort Micro-Tests

The second phase of the framework focuses on the "small wins" strategy. Contrary to the belief that big changes drive big results, modest adjustments—such as altering the hierarchy of information or refining a call-to-action—can produce substantial conversion lifts with a fraction of the development time.

The primary advantage of small tests is their ability to foster a "learning culture." For example, a B2B SaaS team might spend their first three months building infrastructure rather than a single massive feature. This includes:

- Setting up tracking for micro-conversions (e.g., button clicks, scroll depth).

- Establishing a streamlined QA process for variations.

- Training team members on how to interpret statistical significance.

Once this infrastructure is in place, the team can run 20 tests in six months rather than one test in a year. This volume of data provides a much clearer picture of user behavior than any single large-scale overhaul ever could.
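To make the "interpret statistical significance" item above concrete, here is a minimal sketch of a two-sided, two-proportion z-test, one common way to compare conversion rates between a control and a variation. It is not the method of any particular testing platform. The function name and the visitor and conversion counts are hypothetical, chosen only for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates.

    conv_a / n_a: conversions and visitors in the control (A).
    conv_b / n_b: conversions and visitors in the variation (B).
    Returns (z, p_value). Assumes samples large enough for the
    normal approximation to hold.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: 4.0% vs 5.0% conversion on 5,000 visitors each.
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value says that a difference this large would be unlikely if the two versions truly converted at the same rate. It says nothing about whether the lift is large enough to matter commercially, which is a separate judgment for the team.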

Step 3: Strategic Portfolio Balancing

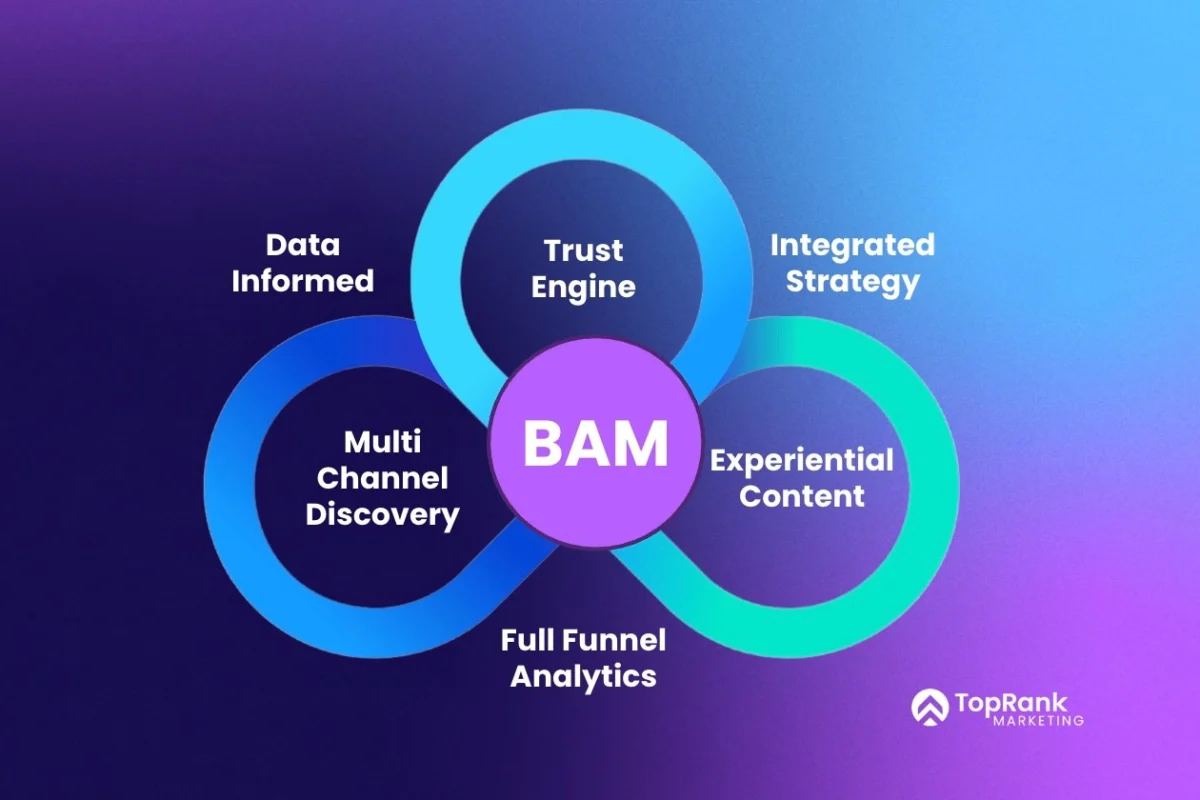

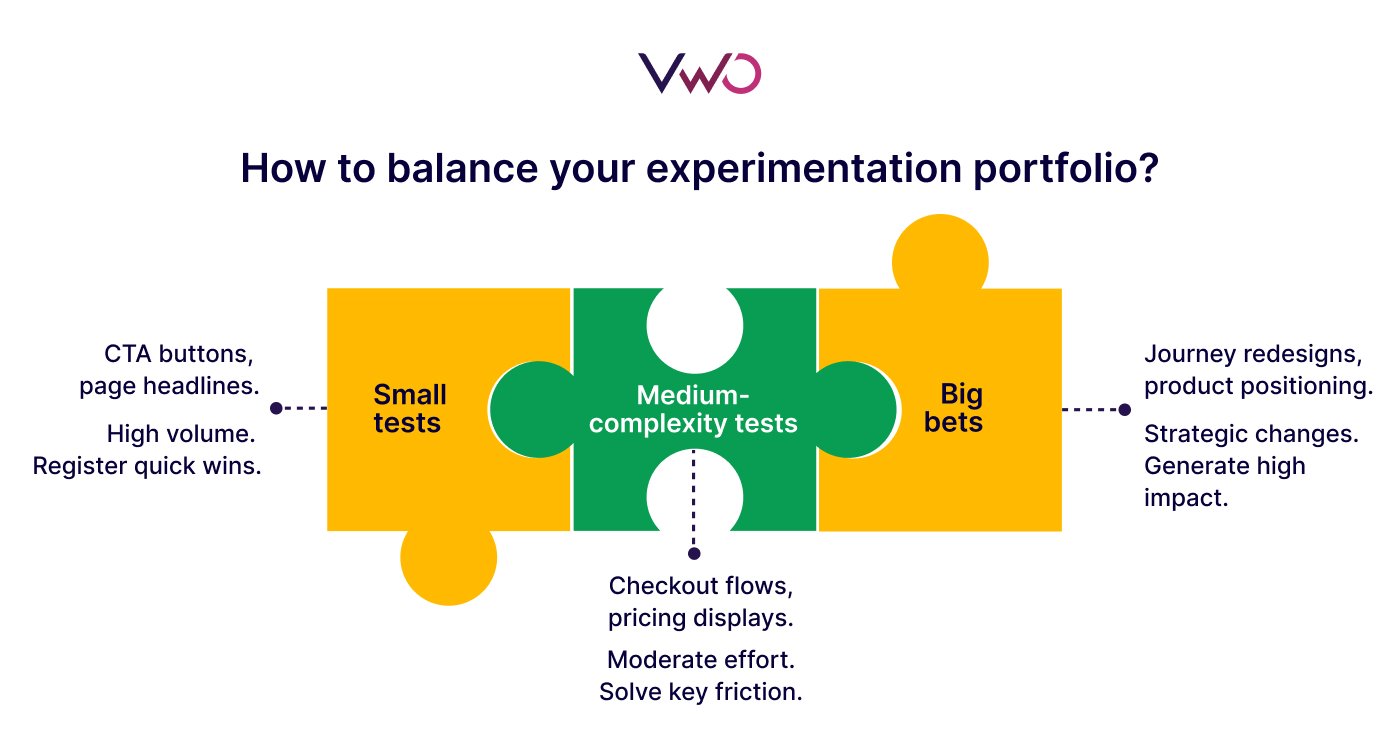

As a program matures, it must move beyond random testing into strategic portfolio management. Van den Brink suggests that an experimentation program should be managed like a financial investment portfolio, diversifying risk across different types of experiments.

A balanced portfolio typically includes:

- Low-Risk/Iterative Tests (70%): Small changes to copy, colors, or layouts that are easy to implement and provide steady learnings.

- Medium-Risk/Functional Tests (20%): Testing new features or significant changes to existing user flows.

- High-Risk/Strategic Tests (10%): "Big swings" such as new pricing tiers or fundamental shifts in the value proposition.

This 70/20/10 distribution ensures that the program continues to deliver value and insights even if the high-risk experiments fail to yield a positive result. It prevents the entire program’s success from being tied to a single "make-or-break" project.
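As a rough illustration of the arithmetic, the sketch below splits a planned number of tests across the three risk tiers. The function name, tier labels, and rounding rule (largest remainder) are assumptions made for this example and are not part of Van den Brink's framework.

```python
def allocate_portfolio(total_tests, shares=None):
    """Split a test budget across risk tiers by percentage share.

    shares maps a tier name to an integer percentage, and the
    percentages must sum to 100. Leftover tests caused by rounding go
    to the tiers with the largest remainders, so the counts always add
    up to total_tests.
    """
    if shares is None:
        shares = {"low_risk": 70, "medium_risk": 20, "high_risk": 10}
    if sum(shares.values()) != 100:
        raise ValueError("shares must sum to 100")

    counts, remainders = {}, {}
    for tier, pct in shares.items():
        # Integer arithmetic avoids floating-point rounding surprises.
        counts[tier], remainders[tier] = divmod(total_tests * pct, 100)

    leftover = total_tests - sum(counts.values())
    by_remainder = sorted(remainders, key=remainders.get, reverse=True)
    for tier in by_remainder[:leftover]:
        counts[tier] += 1
    return counts


print(allocate_portfolio(20))
# {'low_risk': 14, 'medium_risk': 4, 'high_risk': 2}
```

With 20 planned tests, the split leaves room for only two "big swings," which is the point: most of the program's output does not depend on them.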

Step 4: Validating Strategy Through Large-Scale Experiments

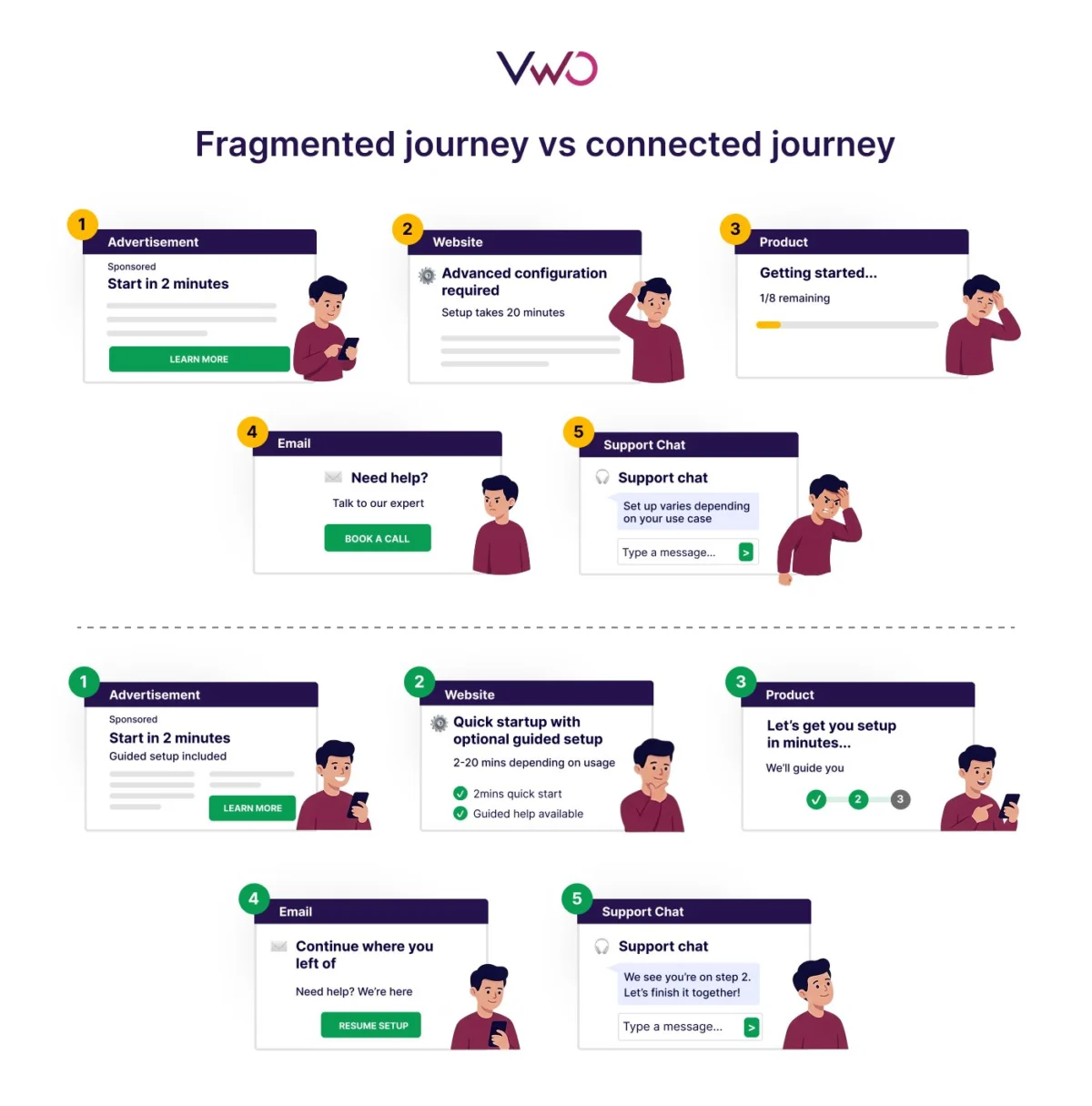

The final step in the framework is a paradigm shift in how big tests are viewed. Instead of using large experiments to explore what might work, they should be used to validate what smaller tests have already indicated.

Large-scale experiments remain vital for major strategic decisions, such as redesigning a core product flow or introducing a new subscription model. However, these should only be greenlit after a series of smaller tests have provided a high level of confidence in the direction. For instance, if several small tests indicate that users respond more favorably to "benefit-focused" messaging rather than "feature-focused" messaging, a subsequent large-scale redesign should lean heavily into that benefit-driven approach. This method reduces the risk of the big test failing and ensures that the engineering effort is backed by behavioral evidence.

Industry Context and Data-Driven Insights

The need for this iterative approach is backed by broader industry data. According to research published in the Harvard Business Review, companies like Microsoft and Google run thousands of experiments per year, but only about 10% to 20% of them result in positive changes. When only one or two in ten hypotheses pay off, a program that stakes everything on a single big test is far more likely to come up empty than to win.
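The arithmetic behind that claim can be sketched in a few lines. The example assumes, purely for simplicity, that every test has the same chance of winning and that tests are independent of one another. Real programs will not match these assumptions exactly, and the function name is hypothetical.

```python
def prob_at_least_one_win(win_rate, n_tests):
    """Chance that at least one of n_tests independent tests wins.

    Simplifying assumption: every test has the same win_rate and the
    outcomes are independent.
    """
    return 1 - (1 - win_rate) ** n_tests


# Compare one big test with a 20-test program at 10% and 20% win rates.
for win_rate in (0.10, 0.20):
    single = prob_at_least_one_win(win_rate, 1)
    many = prob_at_least_one_win(win_rate, 20)
    print(f"win rate {win_rate:.0%}: 1 test -> {single:.0%}, 20 tests -> {many:.0%}")
```

Under these assumptions, a single test at a 10% win rate succeeds one time in ten, while a run of 20 such tests produces at least one winner roughly 88% of the time.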

Furthermore, the sunk cost fallacy often plagues large experimentation projects. When a company spends six months developing a single A/B test, there is immense internal pressure to "win." This can lead to p-hacking (slicing the data until something looks significant) or to ignoring negative results in order to justify the investment. Conversely, small tests are "disposable." If a small test fails, the team has lost only a few days of work, making it easier to accept the data objectively and move on to the next hypothesis.

The Role of Technology in Scaling Programs

The emergence of AI-powered experimentation tools has further lowered the barrier to entry for high-velocity testing. Modern platforms, such as VWO, now offer features like AI copy generation and automated audience segmentation. These tools allow teams to create variations in minutes rather than days, directly supporting Van den Brink’s advocacy for speed and volume.

By utilizing AI to handle the "low-effort" portion of the portfolio, human designers and engineers can focus their energy on the "high-risk" strategic tests that require deeper creative and technical problem-solving. This synergy between human expertise and automated efficiency is the hallmark of a mature experimentation program.

Broader Implications for Corporate Culture

Beyond the technical and financial benefits, a sustainable experimentation program has a profound impact on organizational culture. It shifts the power dynamic from the "HiPPO" (Highest Paid Person’s Opinion) to a meritocracy of data.

When a team moves from running one test a year to five tests a month, the organization begins to view "failure" differently. A failed test is no longer a waste of budget; it is a saved investment in a feature that users didn’t actually want. This mindset encourages curiosity and innovation across all departments, from marketing to product development.

Lucia van den Brink’s framework serves as a reminder that the most successful digital products are not built through singular moments of genius, but through thousands of small, informed decisions. For organizations looking to scale their experimentation efforts, the message is clear: start small, build a system, balance your risks, and use your big swings to confirm a path you have already discovered through data. In the world of CRO, the tortoise—consistent, iterative, and persistent—frequently beats the hare.