A robust XML sitemap functions as an essential digital roadmap, meticulously guiding search engines like Google to all critical pages within a website. This structured file is not merely a convenience but a cornerstone of effective Search Engine Optimization (SEO), ensuring that even websites with less-than-perfect internal linking structures can have their vital content rapidly discovered and indexed. In an increasingly complex digital ecosystem, understanding the mechanics and strategic implementation of XML sitemaps is paramount for enhancing search visibility and ensuring content discoverability by the new generation of AI agents.

Understanding the Digital Roadmap: What are XML Sitemaps?

At its core, an XML sitemap is a file containing a comprehensive list of a website’s essential URLs, designed to inform search engine crawlers about all available content. Its primary purpose is to facilitate efficient crawling and indexing, thereby helping search engines comprehend the website’s architecture and prioritize important content. While HTML sitemaps exist primarily for human users, offering a navigable, hierarchical overview of a site’s structure, XML sitemaps are exclusively engineered for search engine consumption. They communicate directly with algorithms, providing a structured data feed that streamlines the discovery process.

The concept of sitemaps emerged in the mid-2000s as the web expanded rapidly. Google introduced the first version of the format in 2005, and in November 2006 Google, Yahoo, and Microsoft jointly announced support for a common Sitemaps Protocol, standardizing the format and establishing it as a key tool for webmasters. This initiative was a direct response to the escalating challenges of discovering and indexing the burgeoning volume of online content, particularly for large, dynamic, or newly launched websites.

The Anatomy of a Sitemap: Structure and Key Tags

An XML sitemap adheres to a standardized Extensible Markup Language (XML) format, making it easily readable and processable by search engines. This structured text file provides crucial metadata about each URL it lists, such as when a page was last modified, which helps search engines crawl sites more intelligently and efficiently.

A basic XML sitemap structure, containing a single URL, illustrates its simplicity and purpose:

    <?xml version="1.0" encoding="UTF-8"?>
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <loc>https://www.example.com/important-page/</loc>
        <lastmod>2024-01-01</lastmod>
        <changefreq>monthly</changefreq>
        <priority>0.8</priority>
      </url>
    </urlset>

Each URL entry is encapsulated within <url> tags, which themselves reside within the <urlset> container. The XML declaration (<?xml version="1.0" encoding="UTF-8"?>) specifies the XML version and character encoding.

Key tags within an XML sitemap include:

- <loc> (Mandatory): This tag specifies the absolute, canonical URL of the page intended for crawling and indexing. It is arguably the most critical component, directly informing search engines of the page’s location.

- <lastmod> (Optional but Recommended): This tag indicates the date of the page’s last significant modification. Google uses this value, provided it is consistently accurate, to determine when a page might need re-crawling. Accurate lastmod values can significantly improve crawl efficiency for frequently updated content like news articles or product listings.

- <changefreq> (Optional, largely ignored by Google): This tag suggests how frequently the content on a page is expected to change (e.g., "daily," "weekly," "monthly"). Although it remains part of the Sitemaps Protocol, Google has said it ignores this value, relying instead on its own algorithms and the lastmod tag to gauge update frequency.

- <priority> (Optional, largely ignored by Google): This tag offers a numerical suggestion (from 0.0 to 1.0) of a page’s relative importance compared to other pages on the same site. Similar to changefreq, Google has stated that it generally ignores this tag, preferring to determine page importance through its own ranking signals, such as internal linking and backlinks.

The evolution of Google’s stance on changefreq and priority highlights a broader trend: search engines increasingly prioritize real-world signals and intelligent algorithms over explicit hints from webmasters, with the exception of clear, factual data like the lastmod date.
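The structure described above can also be generated programmatically. The following is a minimal sketch in Python using only the standard library; the function name build_sitemap and the (url, last_modified) input shape are illustrative assumptions, not part of any particular tool. It emits only <loc> and <lastmod>, since those are the tags the text identifies as carrying weight.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as bytes for an iterable of (url, last_modified) pairs.

    `last_modified` is a datetime.date, or None to omit <lastmod>.
    (Hypothetical helper for illustration.)
    """
    # Serialize the sitemap namespace as the default xmlns, without a prefix.
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # ElementTree escapes characters such as & in the URL text.
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
        if last_modified is not None:
            # date.isoformat() yields YYYY-MM-DD, the format used in the example above.
            ET.SubElement(entry, f"{{{SITEMAP_NS}}}lastmod").text = last_modified.isoformat()
    # xml_declaration requires Python 3.8 or later.
    return ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)


if __name__ == "__main__":
    pages = [("https://www.example.com/important-page/", date(2024, 1, 1))]
    print(build_sitemap(pages).decode("utf-8"))
```

Taking last_modified from the real modification time of the content, rather than from the time the file is generated, keeps the value trustworthy.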

Navigating Vast Digital Landscapes: The Sitemap Index

For websites with a very large number of pages, a single XML sitemap file is not enough. The Sitemaps Protocol imposes limits: a single sitemap can contain a maximum of 50,000 URLs and must not exceed 50 MB in uncompressed size. To address this, the protocol provides the XML sitemap index.

A sitemap index file acts as a directory, listing multiple individual XML sitemap files rather than individual page URLs. This allows website owners to segment their sitemaps logically, for example, by content type (e.g., sitemap-pages.xml, sitemap-blogposts.xml, sitemap-products.xml) or by chronological archives. This organizational structure is particularly beneficial for large e-commerce sites, news portals, or content hubs that generate new pages daily.

The structure of a sitemap index file is similar to a regular sitemap, but it uses <sitemapindex> and <sitemap> tags:

    <?xml version="1.0" encoding="UTF-8"?>
    <sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <sitemap>
        <loc>https://www.example.com/sitemap-pages.xml</loc>
        <lastmod>2025-12-11</lastmod>
      </sitemap>
      <sitemap>
        <loc>https://www.example.com/sitemap-products.xml</loc>
        <lastmod>2025-12-11</lastmod>
      </sitemap>
    </sitemapindex>

Submitting a sitemap index to search engines allows them to discover and process all associated sitemaps from a single entry point, significantly simplifying the management of large websites and ensuring comprehensive content discovery.
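To make the 50,000-URL limit concrete, here is a rough Python sketch that splits a long URL list into sitemap-sized chunks and builds an index pointing at one file per chunk. The helper names chunk_urls and build_sitemap_index, and the sitemap-products-N.xml naming scheme, are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # per-file URL limit from the Sitemaps Protocol


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of URLs into consecutive lists of at most `size` items."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls, lastmod):
    """Return sitemap index XML as bytes, listing each child sitemap URL.

    `lastmod` is a YYYY-MM-DD string applied to every child entry
    (a simplification; real entries would carry per-file dates).
    """
    ET.register_namespace("", SITEMAP_NS)
    index = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for sitemap_url in sitemap_urls:
        entry = ET.SubElement(index, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = sitemap_url
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}lastmod").text = lastmod
    return ET.tostring(index, encoding="UTF-8", xml_declaration=True)


if __name__ == "__main__":
    # 120,000 hypothetical product URLs need three sitemap files.
    urls = [f"https://www.example.com/product/{n}/" for n in range(120_000)]
    chunks = chunk_urls(urls)
    children = [
        f"https://www.example.com/sitemap-products-{i}.xml"
        for i in range(1, len(chunks) + 1)
    ]
    print(build_sitemap_index(children, "2025-12-11").decode("utf-8"))
```

This sketch checks only the URL count. A complete generator would also confirm that each file stays under the 50 MB uncompressed size limit.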

Beyond Basic Discovery: The Multifaceted Benefits for SEO

While search engines can often discover content through internal links and backlinks, an XML sitemap provides a direct, explicit signal that offers numerous advantages:

- Improved Crawl Efficiency: Sitemaps optimize how search engine bots crawl a site. Instead of relying solely on link traversal, which can be inefficient for deep or complex structures, crawlers receive an explicit list of the URLs the site owner considers important. This is crucial for websites with large archives, dynamic content, or limited "crawl budget."

- Faster Indexing of New Content: For sites that frequently publish new content—such as news outlets, blogs, or e-commerce stores with daily product updates—including these new pages in a sitemap accelerates their discovery. This can lead to significantly faster indexing and appearance in search results, giving a competitive edge.

- Discovery of Orphan Pages: Orphan pages are those that exist on a website but are not linked from any other internal page. Without an XML sitemap, these pages are virtually invisible to search engine crawlers, even if they contain valuable content. Sitemaps act as a safety net, ensuring these pages are still discovered and indexed.

- Leveraging Additional Metadata: While some tags are largely ignored, the lastmod tag remains vital. It informs search engines precisely when content was updated, allowing them to re-crawl and re-evaluate pages more effectively, ensuring users always find the freshest information.

- Support for Specialized Content: XML sitemaps can be extended to include specific types of content, such as images, videos, and news articles, through specialized sitemap extensions (e.g., image sitemaps, video sitemaps, news sitemaps). These specialized sitemaps provide additional context that helps search engines surface media content in relevant search results, like Google Images or video search.

- Enhanced Understanding of Site Structure: A well-organized sitemap offers search engines a clear, high-level overview of a website’s hierarchy and the relationships between different sections. This structural clarity can contribute to better understanding and potentially improved relevance in search rankings.

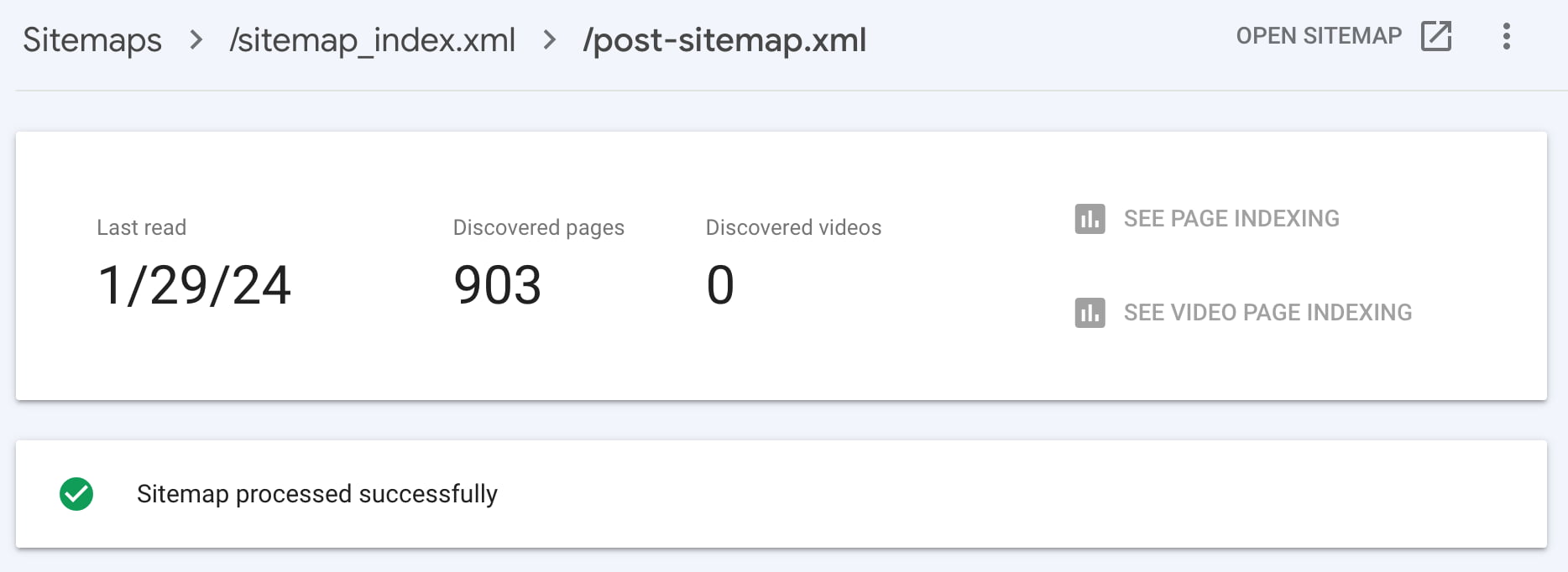

- Indexing Insights through Search Console: Submitting sitemaps to tools like Google Search Console (GSC) provides invaluable diagnostic information. Webmasters can monitor how many URLs are submitted versus how many are actually indexed, identify crawl issues, pinpoint indexing errors, and gain insights into Google’s interaction with their site. This feedback loop is critical for proactive SEO management.

- Support for Multilingual and Multinational Websites: For global websites, XML sitemaps can integrate hreflang annotations. These attributes signal to search engines that different language or regional versions of a page exist, helping them serve the most appropriate version to users based on their location and language preferences.
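As an illustration of the hreflang point in the list above, the sketch below builds sitemap entries in which every language version lists all of its alternates, itself included, as xhtml:link elements. The function name build_hreflang_sitemap and the example URLs are assumptions; the xhtml:link element with rel="alternate" is the form search engines document for sitemap-based hreflang annotations.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def build_hreflang_sitemap(alternates):
    """Return sitemap XML (str) for one page's language versions.

    `alternates` maps an hreflang code to the URL of that version,
    e.g. {"en": "...", "de": "..."}. (Hypothetical helper.)
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in alternates.values():
        entry = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
        # Each entry repeats the full set of alternates, including itself.
        for lang, href in alternates.items():
            ET.SubElement(
                entry,
                f"{{{XHTML_NS}}}link",
                {"rel": "alternate", "hreflang": lang, "href": href},
            )
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_hreflang_sitemap({
        "en": "https://www.example.com/en/page/",
        "de": "https://www.example.com/de/page/",
    }))
```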

Sitemaps in the Era of Artificial Intelligence

The rise of AI-powered search experiences, such as Google’s AI Overviews, Bing Copilot, and large language models (LLMs), introduces new dimensions to content discoverability. While these AI systems process and synthesize information in novel ways, their underlying data sources fundamentally rely on the traditional search index. This means that for content to be leveraged by AI agents—whether for generating summaries, answering queries, or populating knowledge panels—it must first be crawled and indexed by search engines.

In this context, XML sitemaps play an indirect but critical role. By ensuring efficient content discovery and indexing, sitemaps form the foundational layer for AI-driven information retrieval. If a page isn’t in the index, an AI system that draws on that index cannot retrieve it. The accuracy of the lastmod tag also becomes more important: AI-generated answers depend on fresh, up-to-date information, especially for rapidly evolving topics, and an accurate lastmod value helps search engines prioritize re-crawling recently updated pages so that the latest data is available.

Without a well-maintained sitemap, valuable content risks being missed or indexed slowly, diminishing its chances of appearing in traditional search results and, consequently, in AI-generated answers. Thus, while sitemaps do not directly "train" AI, they are indispensable for feeding the AI’s information pipeline, making them a silent but powerful enabler of content visibility in the age of intelligent search.

Automating Discoverability: The Yoast SEO Solution

Recognizing the critical importance of XML sitemaps, leading SEO plugins like Yoast SEO have automated their generation and management. This approach eliminates the manual burden on webmasters, who would otherwise need to create and continuously update these complex files. Yoast SEO, available in both free and premium versions, automatically constructs and maintains an XML sitemap index, which then points to individual sitemaps categorized by content type (e.g., posts, pages, categories, tags).

This automation ensures several key benefits:

- Real-time Updates: As content is published, updated, or removed, Yoast SEO automatically refreshes the sitemap index and relevant individual sitemaps, ensuring search engines always have an accurate, up-to-date overview of the site’s content.

- Intelligent Organization: The plugin intelligently groups URLs into separate sitemaps, adhering to the 50,000 URL limit per file and making the sitemap structure manageable for large sites.

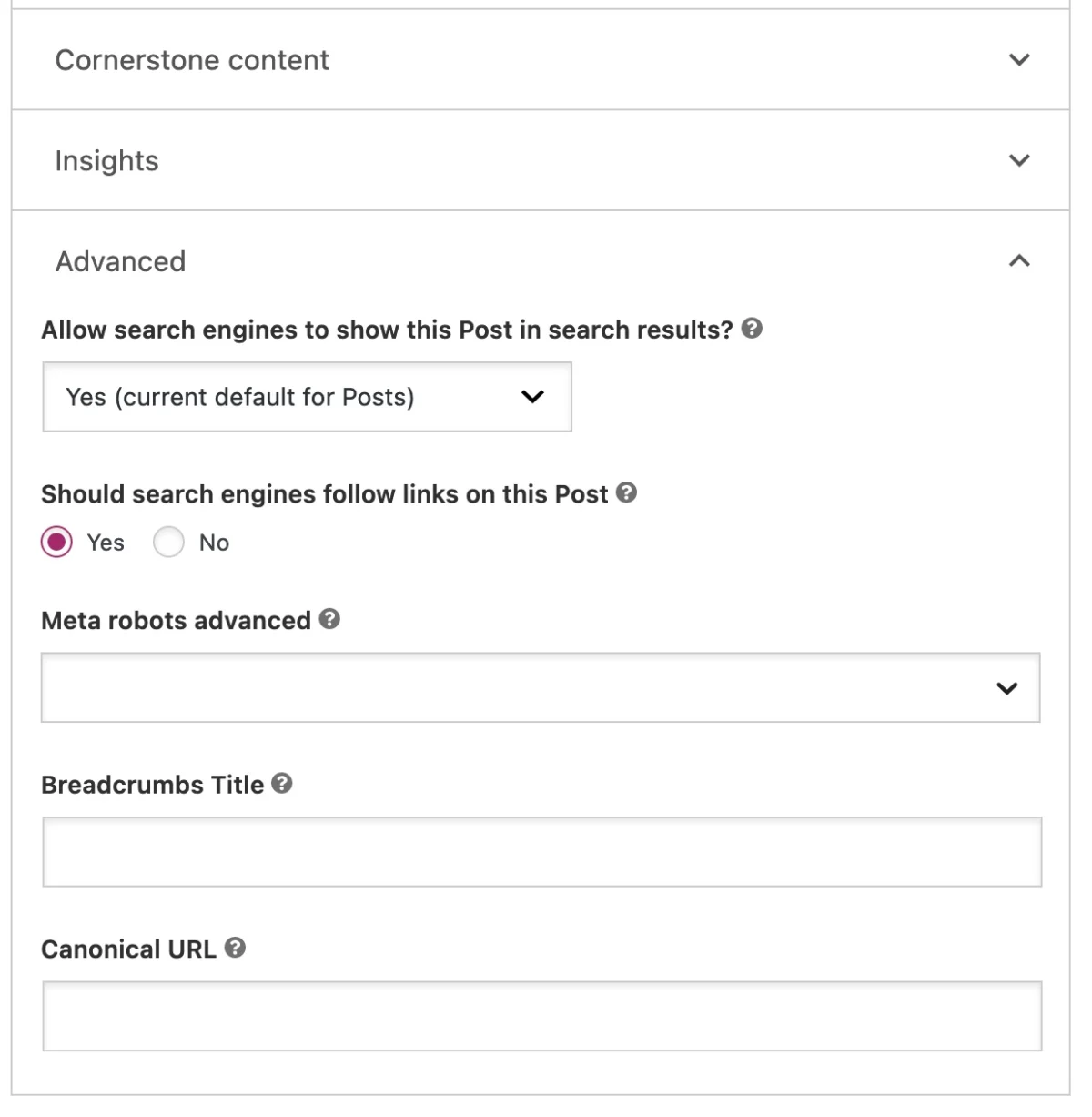

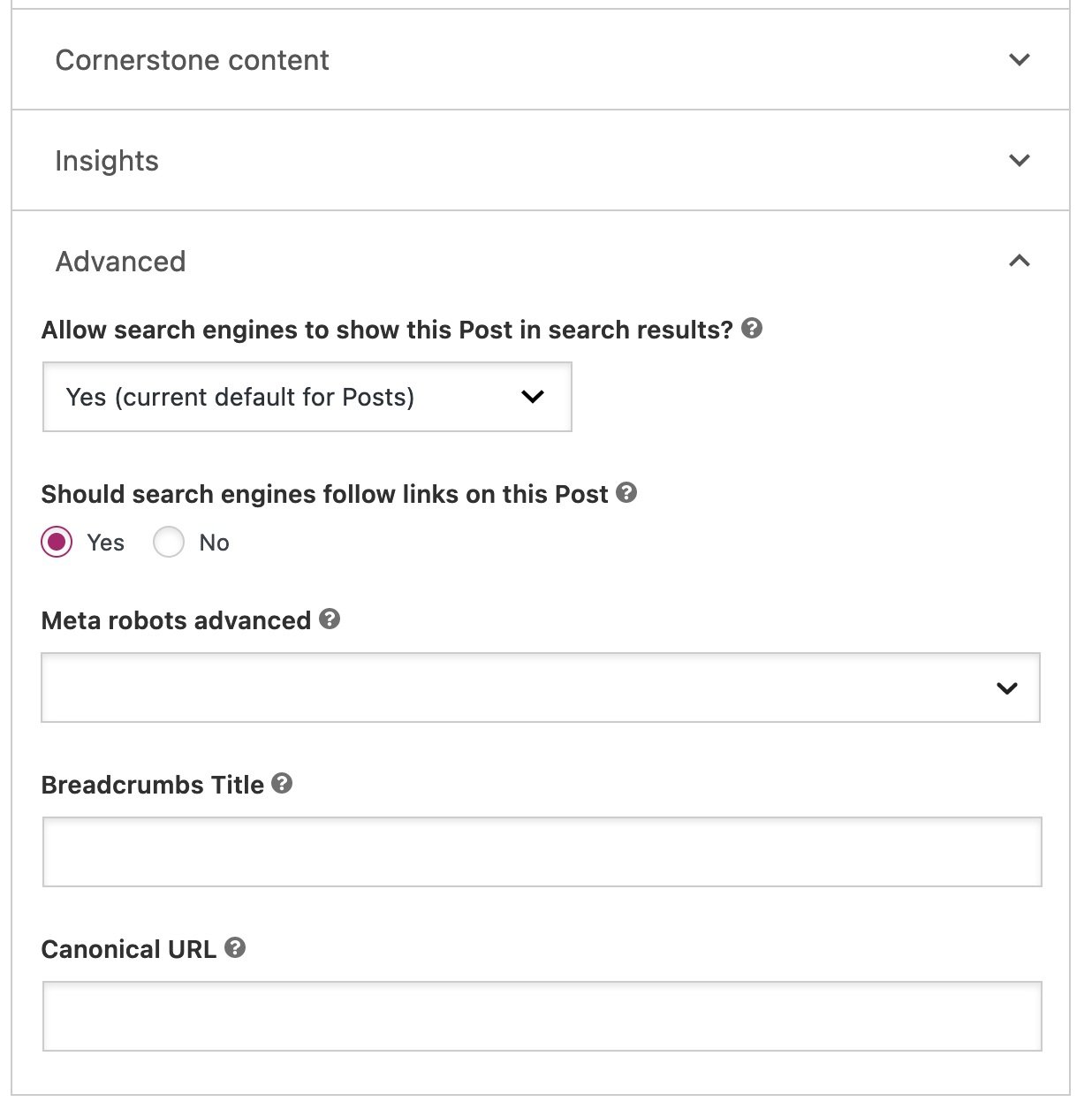

- Exclusion of Non-Indexable Content: Crucially, Yoast SEO automatically excludes pages marked as noindex from the sitemap. This keeps the sitemap clean and focused solely on content intended for search engine indexing, preventing crawlers from wasting resources on irrelevant pages. Webmasters retain full control, being able to toggle the "Allow search engines to show this content in search results?" option in the Yoast SEO sidebar for individual posts or pages.

- Developer Customization: For advanced users, Yoast SEO offers filters that allow further customization of sitemap behavior, such as programmatically excluding specific content types or adjusting the number of URLs per sitemap.

This automated approach ensures that even novice website owners can benefit from robust sitemap management without needing deep technical SEO knowledge, significantly lowering the barrier to entry for effective content discoverability.

Strategic Sitemap Management: Best Practices and Inclusions

Deciding which pages to include in an XML sitemap requires strategic thinking, prioritizing relevance and user experience. The guiding principle should be: if a URL provides value to a visitor and you want it to appear in search results, it belongs in the sitemap. Conversely, if a page offers little value or is not intended for public search, it should be set to noindex and consequently excluded from the sitemap.

- Essential Content: All primary content, such as core service pages, product pages, blog posts, and important informational articles, should always be included. These are the pages users are most likely to seek out.

- Orphan Pages (if valuable): If an orphan page holds significant value but lacks internal links, including it in the sitemap is crucial for its discovery. However, the ideal solution is to integrate it into the site’s internal linking structure.

- Exclusions for Thin or Duplicate Content: Pages with "thin content" (minimal value, low word count) or near-duplicate content should generally be excluded or set to noindex. Examples include thank-you pages after form submissions, login pages, or very sparse tag/category archives. For instance, a new blog might initially have tag archives with only one or two posts. These might be considered "thin" and could be excluded until they accumulate more content, or enriched with unique introductory text.

- Canonical URLs Only: The sitemap should only list canonical URLs. Any duplicate content or URLs with parameters that resolve to the same page should be handled with rel="canonical" tags and excluded from the sitemap to prevent confusion for search engines.

- Error Pages (404s): Error pages should never be included in a sitemap. A sitemap is a list of existing and valuable content.
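The inclusion rules above reduce to a simple predicate. The sketch below is one hedged way to express it in Python; the Page record and its fields are hypothetical stand-ins for whatever a crawler or CMS export provides, and a judgment such as "thin content" still needs human review.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """Hypothetical record describing one crawled URL."""
    url: str
    status_code: int      # HTTP status returned for the URL
    noindex: bool         # True if the page carries a noindex directive
    canonical_url: str    # target of the page's rel="canonical" tag


def belongs_in_sitemap(page):
    """Apply the list's rules: live page, indexable, and self-canonical."""
    return (
        page.status_code == 200
        and not page.noindex
        and page.canonical_url == page.url
    )


if __name__ == "__main__":
    pages = [
        Page("https://www.example.com/services/", 200, False,
             "https://www.example.com/services/"),
        Page("https://www.example.com/thank-you/", 200, True,
             "https://www.example.com/thank-you/"),
        Page("https://www.example.com/shoes/?sort=price", 200, False,
             "https://www.example.com/shoes/"),
        Page("https://www.example.com/old-page/", 404, False,
             "https://www.example.com/old-page/"),
    ]
    for page in pages:
        print(page.url, belongs_in_sitemap(page))
```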

Regularly auditing your sitemap and cross-referencing it with Google Search Console data is vital. A significant discrepancy between submitted and indexed URLs could indicate underlying crawlability or indexability issues that need immediate attention, such as server errors, noindex directives, or content quality problems.
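The cross-referencing step can be approximated with a set difference. The sketch below assumes you already have a list of indexed URLs from some export; how that list is obtained is outside its scope, and the function names are illustrative.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_text):
    """Return the set of <loc> URLs listed in a (non-index) sitemap."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", NS)
        if loc.text
    }


def submitted_not_indexed(xml_text, indexed_urls):
    """URLs present in the sitemap but missing from the indexed list."""
    return sorted(sitemap_locs(xml_text) - set(indexed_urls))


if __name__ == "__main__":
    sitemap = (
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        "<url><loc>https://www.example.com/a/</loc></url>"
        "<url><loc>https://www.example.com/b/</loc></url>"
        "</urlset>"
    )
    # Hypothetical indexed-URL export containing only one of the two pages.
    print(submitted_not_indexed(sitemap, ["https://www.example.com/a/"]))
```

Each URL in the result is a candidate for closer inspection, for example for server errors, noindex directives, or content quality problems.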

Addressing Common Queries: XML Sitemap FAQs

- What if Google Search Console reports errors in my XML sitemap? An invalid or improperly read XML sitemap in GSC requires immediate investigation. GSC usually provides specific error messages, such as "malformed XML" or "empty sitemap." Common causes include syntax errors, incorrect URLs, or sitemap files exceeding size/URL limits. Review the reported issue, correct the underlying problem, and resubmit the sitemap.

- How can I check if a website has an XML sitemap? The most common method is to append sitemap.xml to the root domain (e.g., example.com/sitemap.xml). Many sites, especially those using SEO plugins like Yoast, redirect this to a sitemap index file, often found at example.com/sitemap_index.xml. If neither works, it doesn’t necessarily mean there isn’t one; some sites use different filenames or paths, which might be specified in the robots.txt file.

- How do I update an XML sitemap? While manual creation and updating are possible, they are highly inefficient and error-prone. The best practice is to use an SEO plugin (like Yoast SEO) or a Content Management System (CMS) that automatically generates and updates sitemaps in real-time as content changes. This ensures accuracy and consistency without manual intervention.

- Can I use <priority> in my XML sitemap? Although the <priority> tag is part of the Sitemaps Protocol, Google has explicitly stated that it does not use this attribute to prioritize crawling or indexing. Webmasters should focus on strong internal linking, content quality, and relevant lastmod dates to signal importance to search engines.
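Two of the questions above lend themselves to a quick script: finding sitemap locations declared in robots.txt, and pre-checking a sitemap file for the kinds of problems Search Console reports. The following Python sketch uses only the standard library and works on content you have already downloaded. The function names and problem messages are illustrative, not Search Console's own wording.

```python
import urllib.robotparser
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50 MB, uncompressed


def sitemaps_from_robots(robots_txt):
    """Return sitemap URLs declared via 'Sitemap:' lines in robots.txt text."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() (Python 3.8+) returns None when no Sitemap lines exist.
    return parser.site_maps() or []


def check_sitemap(xml_bytes):
    """Return a list of human-readable problems; an empty list means none found."""
    problems = []
    if len(xml_bytes) > MAX_BYTES:
        problems.append("file exceeds 50 MB uncompressed")
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return problems + [f"malformed XML: {exc}"]
    # Collect every <loc>, whether the file is a sitemap or a sitemap index.
    locs = [el.text or "" for el in root.iter(f"{{{SITEMAP_NS}}}loc")]
    if not locs:
        problems.append("empty sitemap")
    if len(locs) > MAX_URLS:
        problems.append(f"{len(locs)} URLs exceeds the 50,000 limit")
    for loc in locs:
        parts = urlparse(loc.strip())
        if parts.scheme not in ("http", "https") or not parts.netloc:
            problems.append(f"not an absolute URL: {loc!r}")
    return problems


if __name__ == "__main__":
    robots = "User-agent: *\nSitemap: https://www.example.com/sitemap_index.xml\n"
    print(sitemaps_from_robots(robots))
    print(check_sitemap(b"<urlset><url>"))
```

A local check of this kind catches only structural problems. Search Console remains the source of truth for how Google actually read the file.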

The Unifying Force: Why Every Website Needs an XML Sitemap

While technically not a mandatory component for a website, the consensus among SEO professionals and search engine guidelines strongly advocates for the use of XML sitemaps across virtually all websites. Google’s documentation itself highlights their benefits for "really large websites," "websites with large archives," "new websites with just a few external links," and "websites which use rich media content."

However, the modern web environment suggests that every website, regardless of size or age, benefits from a well-structured XML sitemap. The sheer scale of the internet, with billions of pages vying for attention, makes efficient discoverability a constant challenge. Even small sites can have internal linking issues, and new sites desperately need a direct signal to Google to jumpstart their indexing process. Moreover, as search evolves to incorporate AI, ensuring that your content is readily available and up-to-date in the search index becomes an even more critical strategic imperative.

An XML sitemap acts as a reliable communication channel between a website and search engines, mitigating risks associated with overlooked content, improving crawl efficiency, and providing vital metadata. By providing this digital roadmap, webmasters empower search engines to accurately and comprehensively understand their online presence, ultimately leading to enhanced visibility, better user experience, and a stronger foundation for SEO success in an ever-changing digital landscape.