Digital marketing has reached a level of saturation where simply driving traffic to a website no longer guarantees commercial success. As customer acquisition costs (CAC) continue to rise across platforms like Google Ads and Meta, the efficiency of the landing page has become a primary determinant of a campaign’s return on investment (ROI). Creating a high-performing landing page remains one of the most significant challenges for modern marketing teams. It requires a delicate balance of ad-to-page relevance, compelling value propositions, and a seamless user experience that guides the visitor toward a specific action. Yet even when best practices are followed, conversion rates often fall below industry benchmarks. In such cases, industry experts suggest that rather than abandoning the campaign, marketers should use rigorous A/B testing to identify and remove friction points.

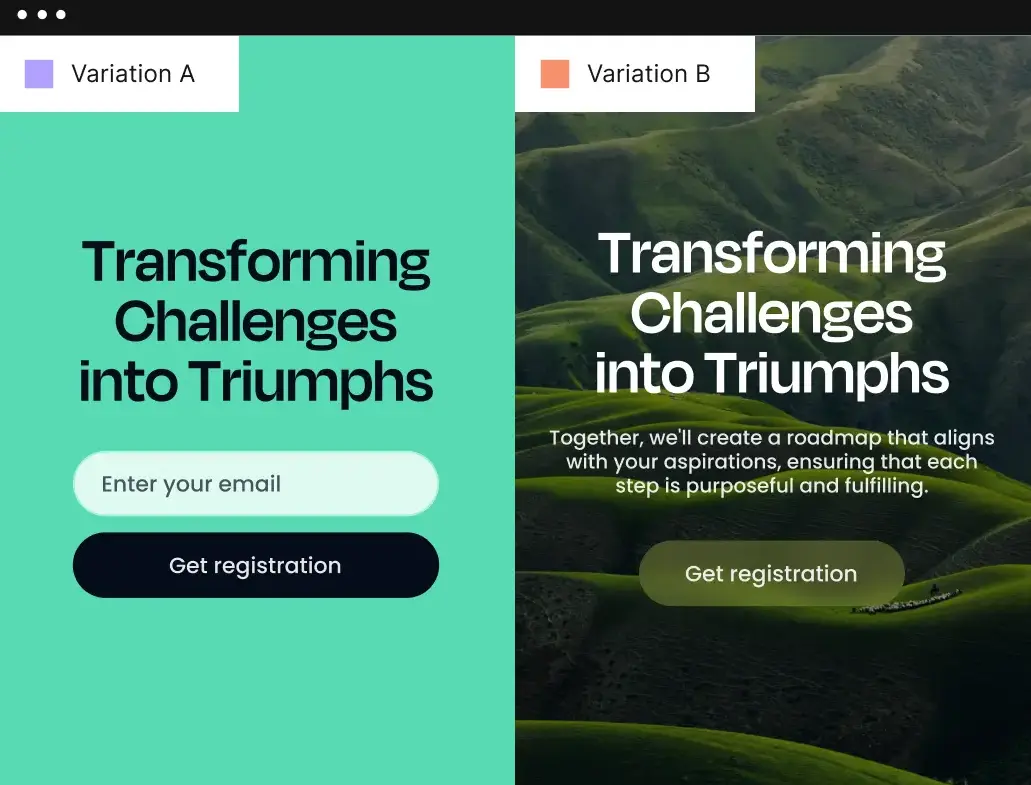

A/B testing, also known as split testing, is a methodical process of comparing two or more versions of a webpage to determine which one performs better. By isolating specific variables, such as headlines, call-to-action (CTA) buttons, imagery, or form lengths, marketers can gather empirical data on user behavior. This data-driven approach replaces guesswork with evidence, showing teams which elements resonate with their target audience and which hinder conversion. As the digital landscape shifts toward hyper-personalization, A/B testing has expanded from simple color changes to complex AI-driven experiments that dynamically allocate traffic based on real-time performance.

The Strategic Framework for Evaluating A/B Testing Platforms

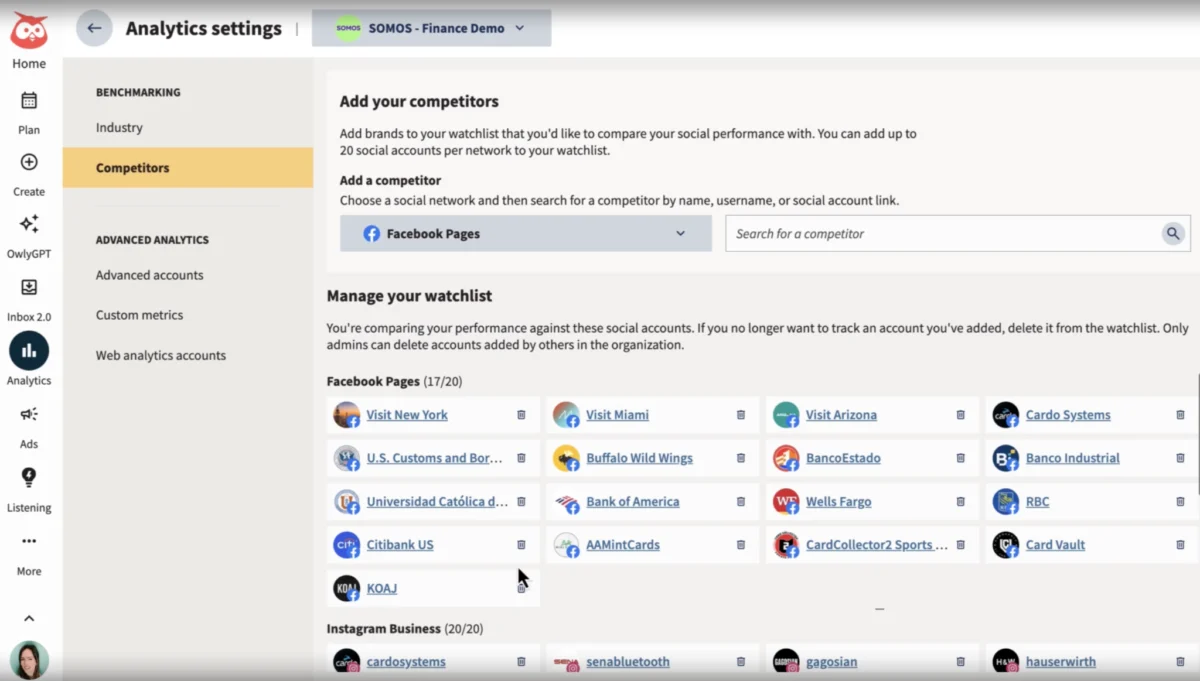

Selecting an A/B testing tool is a critical infrastructure decision for any growth-oriented organization. The market is crowded with platforms claiming to offer "expert-built" solutions, but their usefulness varies significantly with technical requirements and marketing goals. To evaluate these platforms effectively, organizations typically weigh several key criteria. First is ease of use, specifically whether the tool offers a no-code or low-code interface that lets marketing teams deploy tests without constant reliance on web developers. Second is depth of integration: an A/B testing tool must communicate seamlessly with the existing marketing stack, including CRM systems, analytics platforms such as Google Analytics 4 (GA4), and advertising platforms.

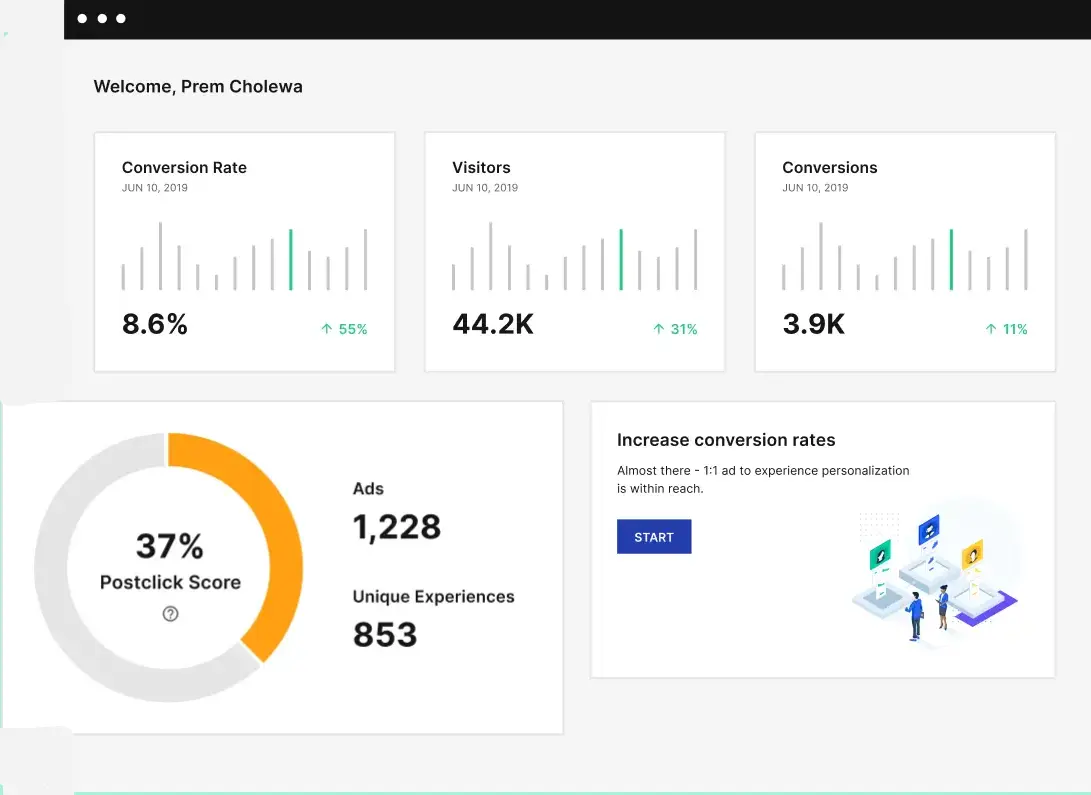

Furthermore, the sophistication of the analytics dashboard is paramount. A professional-grade tool should provide insights beyond simple click-through rates, offering data on cost per visitor, cost per lead, and statistical significance. Statistical significance indicates how unlikely the observed difference between variations would be if the variations actually performed the same. A significant result does not guarantee that the difference is real, but it gives teams a principled threshold (commonly p < 0.05) before they implement permanent changes. Finally, modern evaluations also consider AI capabilities, such as automated copy generation and dynamic traffic allocation, which can significantly accelerate the testing lifecycle.
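To make the significance check concrete, here is a minimal sketch of a two-proportion z-test, one common frequentist way to compare two conversion rates. It assumes independent visitors and samples large enough for the normal approximation to hold. The function name `two_proportion_z_test` is hypothetical, and commercial platforms may use other methods, such as Bayesian or sequential statistics.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis that both variations convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    # Two-sided p-value from the standard normal distribution: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Control: 200 conversions from 5,000 visitors; variation: 250 from 5,000.
    print(two_proportion_z_test(200, 5000, 250, 5000))
```

For that example, the z-score is roughly 2.4 and the two-sided p-value is roughly 0.016, below the conventional 0.05 threshold.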

A Comparative Analysis of Leading A/B Testing Solutions

1. Instapage and the Shift Toward AI-Driven Experimentation

Instapage has positioned itself as a leader in the landing page optimization space by integrating building, testing, and analytics into a single ecosystem. Unlike traditional setups that pair a separate landing page builder with a separate client-side testing script, Instapage uses server-side experimentation. This approach matters because it reduces "flicker," the brief moment when a visitor sees the original page before the variation loads, which can hurt the user experience and skew test results. When the server chooses the variation before sending the page, the browser receives that version directly, so there is no original content to swap out.
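A minimal sketch of server-side assignment, assuming the server has a stable visitor ID (for example, from a first-party cookie). The helpers `assign_variant` and `render_landing_page`, the experiment ID, and the HTML templates are hypothetical and do not represent Instapage’s implementation. Hashing the visitor ID makes assignment deterministic, so a returning visitor keeps seeing the same variation, and because the choice is made before the HTML is sent, the browser never renders the original first.

```python
import hashlib


def assign_variant(visitor_id: str, experiment_id: str, variants: list[str]) -> str:
    """Deterministically map a visitor to one variation (hypothetical helper)."""
    # Hash experiment + visitor so assignment is stable per visitor
    # and independent across different experiments.
    key = f"{experiment_id}:{visitor_id}".encode("utf-8")
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % 10_000
    # Equal split: each variation owns a contiguous slice of the 10,000 buckets.
    index = bucket * len(variants) // 10_000
    return variants[index]


def render_landing_page(visitor_id: str) -> str:
    """Pick the variation on the server, then return only that version's markup."""
    variant = assign_variant(visitor_id, "headline-test", ["control", "variant_b"])
    templates = {
        "control": "<h1>Launch landing pages faster</h1>",
        "variant_b": "<h1>Cut your cost per lead</h1>",
    }
    return templates[variant]
```

Using a cryptographic hash here is a convenience for an even, stable spread of buckets, not a security requirement; any well-distributed stable hash would serve.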

One of the most notable advancements in the Instapage platform is AI Experiments. This feature uses dynamic traffic allocation, a departure from traditional A/B testing. In a standard test, traffic is split in fixed proportions (typically 50/50) until a winner is declared. In contrast, AI Experiments use machine learning to monitor each variation’s conversion rate in real time, automatically sending more traffic to the better-performing version while the test is still running. This reduces "regret," the conversions lost when traffic is sent to an underperforming variation during the testing period.

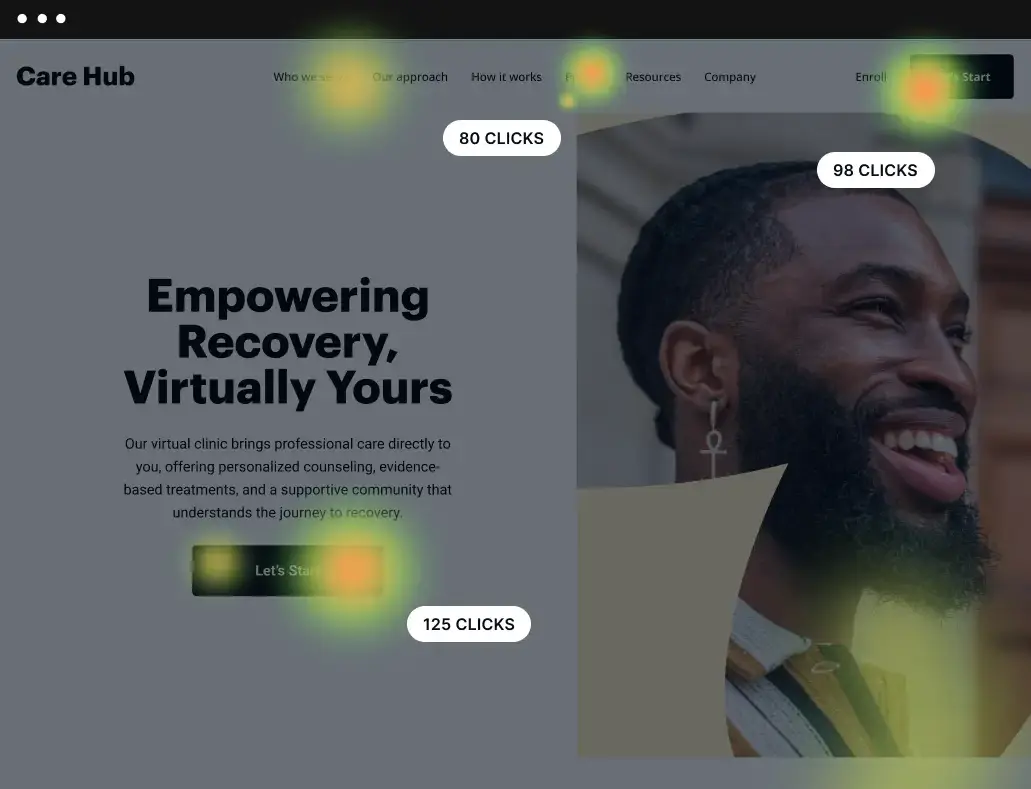

Case studies from major enterprises such as Verizon highlight the impact of this integrated approach. By using heatmaps to track mouse movement and scroll depth alongside A/B testing, teams have reportedly cut their cost per conversion by more than 50%. The ability to generate AI-driven headlines and CTAs further shortens the time needed to launch new experiments, supporting a high-velocity testing culture.

2. VWO: Multi-Metric and Split URL Testing

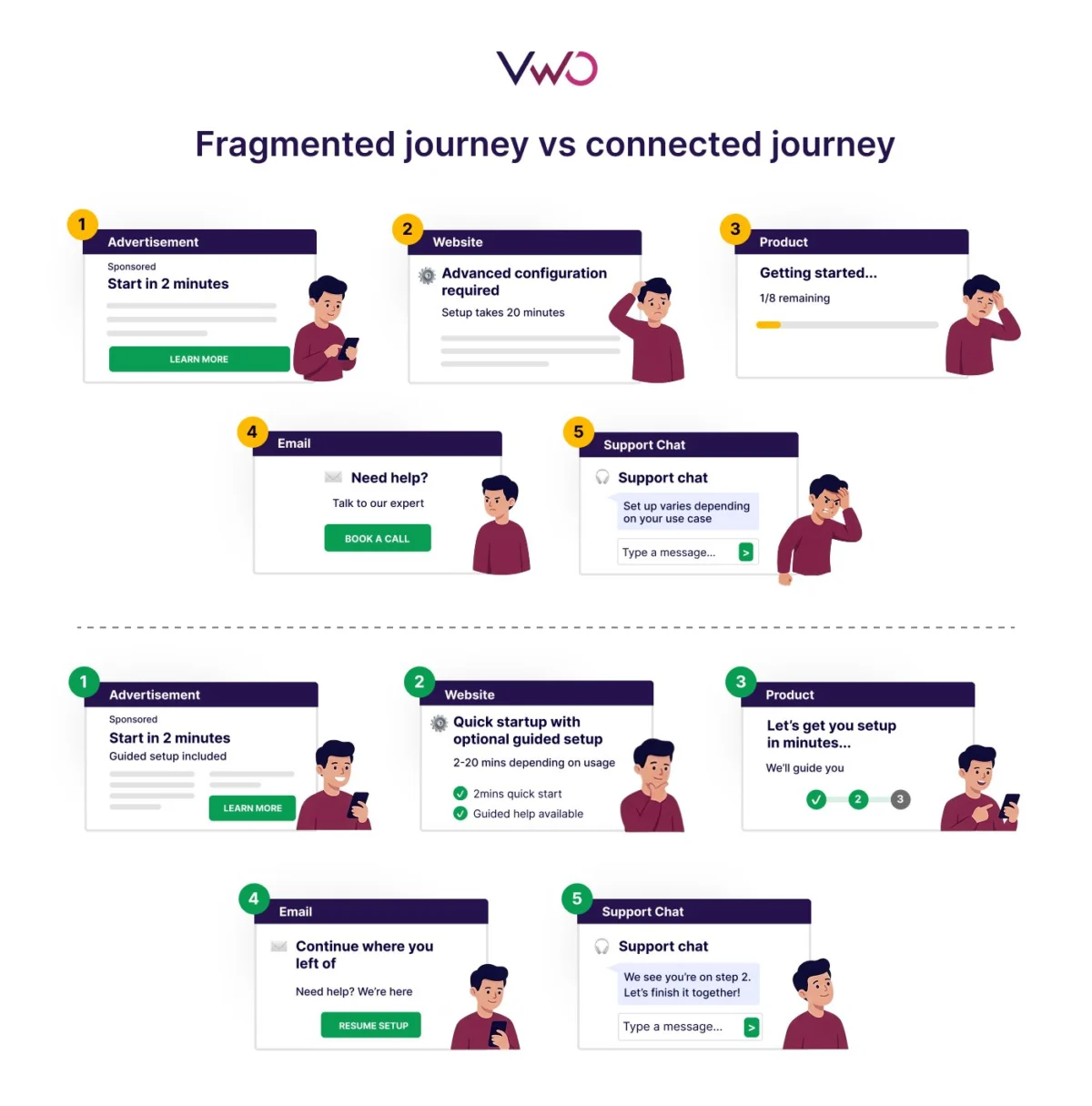

Visual Website Optimizer (VWO) remains a staple for marketers who need robust split URL testing. This method is particularly useful for testing radical design overhauls or entirely different page structures rather than minor element changes. Because the two versions of the page live at distinct URLs and VWO splits traffic between them, marketers get a clean comparison of entirely different user journeys.

VWO’s strength lies in its ability to track multiple metrics simultaneously across the entire marketing funnel. While many tools focus solely on the final conversion, VWO lets marketers see how a change on a landing page affects downstream behavior, such as engagement with subsequent pages or long-term retention. This holistic view guards against optimizing for a shallow metric, where a change increases initial clicks but ultimately produces lower-quality leads or fewer sales.
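As a generic illustration of multi-metric tracking (not VWO’s API), the sketch below computes per-variation rates for several funnel stages from visit records. The `Visit` fields and the four sample records are hypothetical, chosen to show how one variation can win on clicks while losing on sales.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Visit:
    variant: str
    clicked_cta: bool
    submitted_lead: bool
    purchased: bool


def funnel_metrics(visits: list[Visit]) -> dict[str, dict[str, float]]:
    """Per-variation rates for each funnel stage, each relative to that variation's visitors."""
    totals: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for visit in visits:
        t = totals[visit.variant]
        t["visitors"] += 1
        t["clicks"] += visit.clicked_cta  # bools add as 0 or 1
        t["leads"] += visit.submitted_lead
        t["sales"] += visit.purchased
    return {
        variant: {
            "click_rate": t["clicks"] / t["visitors"],
            "lead_rate": t["leads"] / t["visitors"],
            "sale_rate": t["sales"] / t["visitors"],
        }
        for variant, t in totals.items()
    }


# Hypothetical records: variation "b" wins on clicks but loses on sales.
sample = [
    Visit("a", True, True, True),
    Visit("a", False, False, False),
    Visit("b", True, True, False),
    Visit("b", True, False, False),
]
metrics = funnel_metrics(sample)
```

Judged on click rate alone, "b" looks like the winner; the sale rate tells the opposite story.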

3. Optimizely: Enterprise-Scale Low-Code Testing

Optimizely is often the choice for enterprise-level organizations that need high-performance experimentation at scale. The platform has evolved to offer "Web Experimentation" that can run at the network edge. Testing at the edge means the experiment logic executes on servers close to the user’s physical location rather than in the browser or at a distant origin server, which keeps added latency low and avoids flicker even for complex tests.

Optimizely’s visual editor is designed to reduce the "developer bottleneck," letting non-technical users target page elements and preview changes instantly. It also provides the depth technical teams require, such as extension templates and the ability to run server-side experiments across various applications. Its embedded AI capabilities are tuned for call-to-action messaging, suggesting variations based on historical performance data within specific industries.

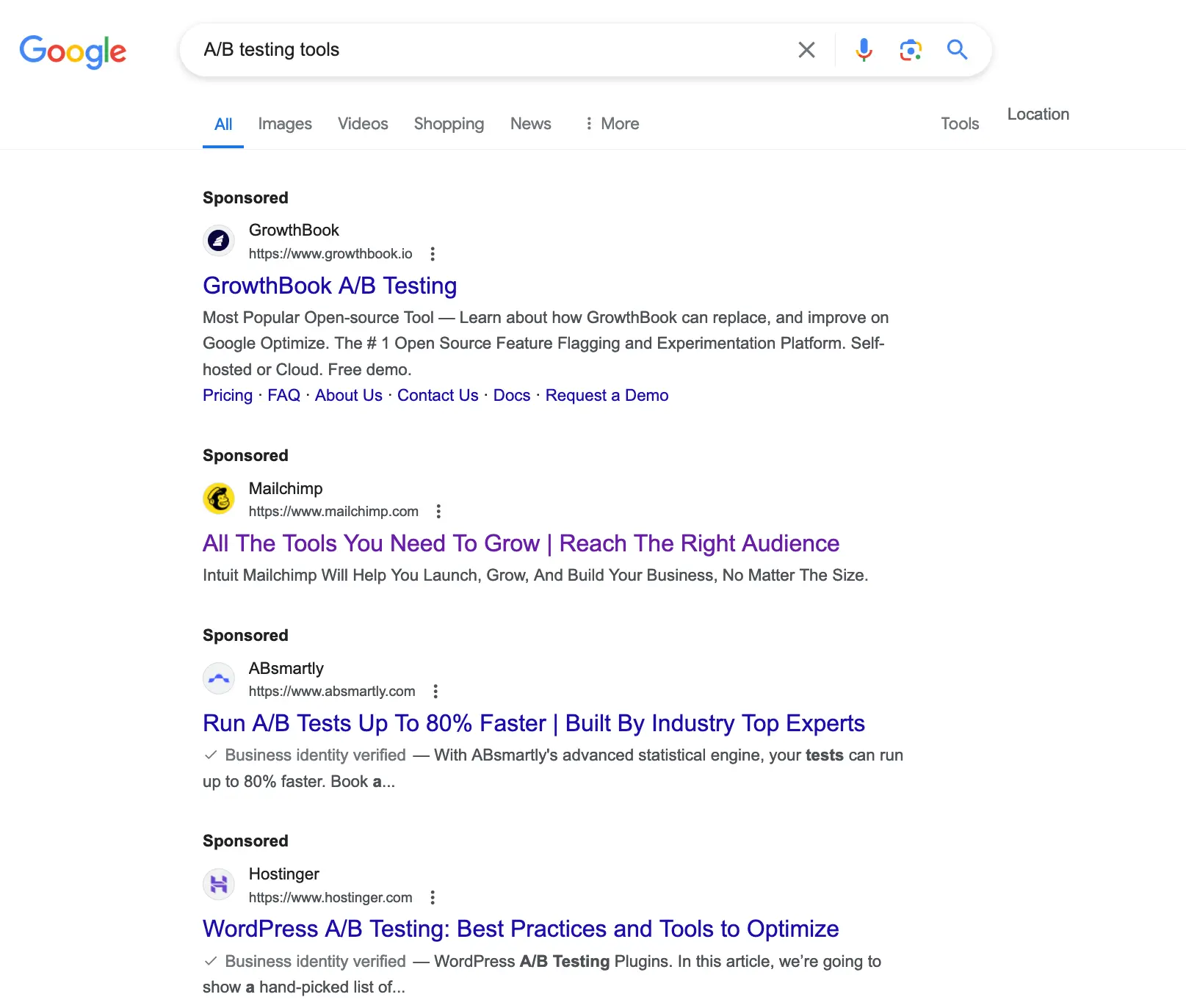

4. GrowthBook: The Data-Centric Open Source Alternative

GrowthBook represents a newer philosophy in the experimentation space, focusing on transparency and data sovereignty. As an open-source platform, it appeals to organizations that want to avoid "vendor lock-in" and maintain full control over their testing data. GrowthBook integrates directly with major SQL data warehouses such as Snowflake, BigQuery, and Redshift, as well as third-party analytics tools like Mixpanel.

This approach is particularly beneficial for data science teams who want to apply their own statistical models to experiment results. GrowthBook allows for unlimited A/B testing and includes feature flagging, which enables developers to toggle features on or off for specific user segments. This integration of product development and marketing optimization makes it a versatile tool for companies where the landing page and the product experience are closely intertwined.
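Feature flags with segment targeting can be sketched as follows. This is a hypothetical, simplified flag model, not GrowthBook’s SDK: a flag is on for a user only if it is enabled, the user’s attributes match every targeting rule, and the user’s stable hash falls inside the rollout percentage. The class, field, and method names are assumptions.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class FeatureFlag:
    """Hypothetical, simplified flag model (not GrowthBook's SDK)."""

    key: str
    enabled: bool = True
    # Attribute values a user must match exactly, e.g. {"plan": "enterprise"}.
    rules: dict[str, str] = field(default_factory=dict)
    # Share of eligible users (0 to 100) who get the feature.
    rollout_percent: float = 100.0

    def is_enabled_for(self, user_id: str, attributes: dict[str, str]) -> bool:
        if not self.enabled:
            return False
        # Segment targeting: every rule must match the user's attributes.
        if any(attributes.get(name) != value for name, value in self.rules.items()):
            return False
        # Stable hash to a position in [0, 100), so a user stays consistently in or out.
        digest = hashlib.sha256(f"{self.key}:{user_id}".encode("utf-8")).digest()
        position = int.from_bytes(digest[:8], "big") % 10_000 / 100
        return position < self.rollout_percent
```

For example, `FeatureFlag("new-pricing-banner", rules={"plan": "enterprise"}, rollout_percent=50.0)` would show the feature to about half of enterprise-plan users and to no one else, with each user’s result stable across visits.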

The Chronology of Optimization: From Static to Dynamic

The history of landing page optimization has moved through three distinct eras. In the early 2000s, the "Static Era" saw marketers creating single pages and making changes based on gut feeling or basic hit counters. There was little understanding of user psychology, and "testing" was largely anecdotal.

The "Statistical Era" began with the rise of tools like Google Website Optimizer (the precursor to Google Optimize) and the early versions of Optimizely. This period introduced the industry to the concept of statistical significance and the randomized controlled trial (RCT) for web design. Marketers began to understand the importance of sample sizes and the dangers of making decisions based on "noise" rather than "signal."
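The importance of sample size can be made concrete with the standard normal-approximation formula for comparing two proportions. The sketch below assumes a two-sided test at a 5% significance level with 80% power; these are conventional defaults, not requirements. `sample_size_per_variant` is a hypothetical helper name.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(
    baseline: float, expected: float, alpha: float = 0.05, power: float = 0.80
) -> int:
    """Visitors needed per variation to detect a change from baseline to expected."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)  # about 0.84 for 80% power
    p_bar = (baseline + expected) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_power * math.sqrt(baseline * (1 - baseline) + expected * (1 - expected))
    ) ** 2
    # Round up: a fractional visitor still means one more visitor is needed.
    return math.ceil(numerator / (expected - baseline) ** 2)
```

Under these defaults, detecting a lift from a 4% to a 5% conversion rate takes roughly 6,700 visitors per variation, while a lift from 4% to 6% needs fewer than 2,000. Stopping a test long before such numbers are reached is one way "noise" gets mistaken for "signal."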

Today, we are in the "AI and Personalization Era." The focus has shifted from finding the "one best page" for everyone to finding the "best page for this specific user." Modern tools now allow for audience-specific variations, where a visitor arriving from a LinkedIn ad for "Enterprise Solutions" sees a completely different headline and social proof than a visitor arriving from a Facebook ad for "Small Business Tools."
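Audience-specific variations like the LinkedIn and Facebook example can be sketched as simple rules keyed on UTM parameters in the landing URL. The mapping, campaign names, and copy below are hypothetical, and real personalization tools typically support far richer targeting than this.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical mapping from (utm_source, utm_campaign) to page content.
VARIANTS: dict[tuple[str, str], dict[str, str]] = {
    ("linkedin", "enterprise-solutions"): {
        "headline": "Experimentation built for enterprise teams",
        "social_proof": "enterprise customer logos",
    },
    ("facebook", "small-business-tools"): {
        "headline": "Launch a high-converting page this afternoon",
        "social_proof": "small-business testimonials",
    },
}
DEFAULT: dict[str, str] = {
    "headline": "Build landing pages that convert",
    "social_proof": "customer ratings",
}


def content_for(landing_url: str) -> dict[str, str]:
    """Choose headline and social proof from the visitor's traffic source."""
    params = parse_qs(urlparse(landing_url).query)
    # Normalize case so "Facebook" and "facebook" match the same rule.
    source = params.get("utm_source", [""])[0].lower()
    campaign = params.get("utm_campaign", [""])[0].lower()
    return VARIANTS.get((source, campaign), DEFAULT)
```

Visitors with no matching parameters fall back to the default page, which can itself remain under a standard A/B test.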

Supporting Data and Broader Implications for the Industry

The necessity of these tools is underscored by data on user expectations. Market research is frequently cited as showing that a one-second delay in page load time can reduce conversions by 7%. If that figure holds even approximately for a given site, the technical execution of A/B testing (such as avoiding flicker and using edge computing) is a business imperative rather than a technical preference. Industry surveys have also reported that companies taking a structured approach to conversion optimization are twice as likely to see a large increase in sales.

The broader implications of widespread A/B testing are significant. As more companies adopt these tools, the overall quality of the web arguably improves: friction is removed, messaging becomes clearer, and the "noise" of irrelevant advertising is reduced. However, this also raises the bar for entry. In a competitive landscape where every major player is optimizing its funnel, the baseline for a good landing page keeps rising.

Many in the tech community expect the next frontier to be privacy-first testing. As browsers restrict third-party cookies and regulations such as GDPR and CCPA tighten, A/B testing tools must find ways to provide accurate data without compromising user privacy. The shift toward server-side testing and first-party data integration is a direct response to these pressures.

In conclusion, A/B testing is no longer an optional luxury for digital marketers; it is a fundamental component of the modern growth stack. Whether through Instapage’s AI-driven dynamic allocation, VWO’s funnel-wide metrics, Optimizely’s enterprise-scale testing, or GrowthBook’s data flexibility, organizations have more power than ever to understand and influence the customer journey. Choosing to investigate what isn’t working, rather than throwing in the towel, often marks the difference between a failing campaign and a scalable business model. As AI continues to evolve, these experiments are likely to become faster and more accurate, making the pursuit of the "perfect" conversion rate a continuous, data-driven journey.