In the increasingly competitive digital marketplace, many marketing and product teams find themselves trapped in a cycle of testing low-impact ideas that fail to move the needle on core business metrics. Despite the proliferation of A/B testing tools, the primary challenge remains a lack of strategic focus. To address this, industry leaders have developed structured frameworks that steer Conversion Rate Optimization (CRO) roadmaps toward the initiatives most likely to produce measurable lift and revenue impact. One such methodology is the SHIP model, a four-phase cyclical process that prioritizes data-backed insights over subjective preferences.

The SHIP Model: A Cyclical Approach to Growth

The SHIP model provides a rhythmic structure to optimization projects, ensuring that every test performed is rooted in empirical evidence. Rather than viewing CRO as a series of disconnected experiments, this model treats it as a continuous loop where each phase informs the next, creating a compound effect on site revenue and organizational learning.

The acronym stands for:

- Scrutinize: Gathering data and identifying friction points.

- Hypothesize: Creating predictive statements based on research.

- Implement: Designing and launching the A/B test.

- Propagate: Analyzing results and applying learnings across the broader business.
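The cyclical ordering can be pictured as a tiny state machine. The following is a minimal Python sketch for illustration only, not part of the SHIP model itself; the `Phase` enum and the `next_phase` helper are hypothetical names.

```python
from enum import Enum


class Phase(Enum):
    """The four SHIP phases, declared in the order they run."""
    SCRUTINIZE = "Gather data and identify friction points"
    HYPOTHESIZE = "Write predictive statements based on research"
    IMPLEMENT = "Design and launch the A/B test"
    PROPAGATE = "Analyze results and apply learnings across the business"


# Enum iteration follows declaration order, so this list is the cycle.
CYCLE = list(Phase)


def next_phase(current: Phase) -> Phase:
    """Return the phase after `current`; Propagate wraps back to Scrutinize."""
    index = CYCLE.index(current)
    return CYCLE[(index + 1) % len(CYCLE)]


if __name__ == "__main__":
    phase = Phase.SCRUTINIZE
    for _ in range(5):
        print(phase.name)
        phase = next_phase(phase)
```

The wrap-around in `next_phase` is what makes the process a loop rather than a one-off sequence: learnings from Propagate feed the next round of Scrutinize.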

By following this rhythm, companies can avoid the "random testing" trap. Industry data suggests that only about one in eight A/B tests produces a statistically significant winner. Teams that run a structured research phase before testing report markedly higher success rates, often double that win rate.
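What counts as a "statistically significant winner" depends on the statistical test a team uses. One common choice for conversion rates is a pooled two-proportion z-test. The sketch below assumes that test, a 5% significance level, and a sample size fixed before the test started; the SHIP model does not prescribe any of these, and the function names are hypothetical.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Pooled two-proportion z-test of variant B against control A.

    Returns (z, two_sided_p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in conversions; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def is_significant_winner(conv_a: int, n_a: int, conv_b: int, n_b: int,
                          alpha: float = 0.05) -> bool:
    """True only if B beats A and the difference is significant at level alpha."""
    z, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    return z > 0 and p_value < alpha
```

For example, a control converting 200 of 10,000 visitors against a variant converting 260 of 10,000 clears the 5% threshold, whereas a variant converting 205 of 10,000 does not. The win-rate claim is simple arithmetic on top of this: at one winner in eight, a program running 40 tests would expect about five winners, and doubling the win rate would raise that to about ten.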

Phase 1: The Scrutinize Audit and Data Gathering

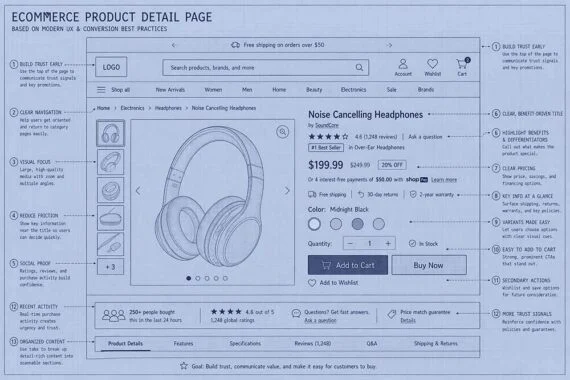

The Scrutinize phase is designed to uncover not just where visitors are dropping off, but why. This involves a combination of quantitative and qualitative research. Quantitative data, derived from tools like Google Analytics 4 (GA4), identifies high-exit pages and funnel leaks. Qualitative data, gathered through heatmaps, session recordings, and user surveys, provides the "why" behind the behavior.
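On the quantitative side, finding a "funnel leak" usually comes down to comparing how many users reach each consecutive step. The sketch below works on step counts exported from an analytics tool such as GA4; it does not call any GA4 API, and the step names and counts in the example are made up for illustration.

```python
from typing import List, Tuple

Step = Tuple[str, int]          # (step name, users who reached it)
Leak = Tuple[str, str, float]   # (from step, to step, drop-off rate)


def funnel_dropoff(steps: List[Step]) -> List[Leak]:
    """Drop-off rate between each pair of consecutive funnel steps."""
    leaks = []
    for (name_a, users_a), (name_b, users_b) in zip(steps, steps[1:]):
        # Share of users at step A who never reached step B.
        drop = 1 - users_b / users_a if users_a else 0.0
        leaks.append((name_a, name_b, drop))
    return leaks


def biggest_leak(steps: List[Step]) -> Leak:
    """The transition that loses the largest share of users."""
    return max(funnel_dropoff(steps), key=lambda leak: leak[2])


if __name__ == "__main__":
    # Hypothetical counts, not real data.
    funnel = [("product", 10_000), ("cart", 3_000), ("checkout", 1_500), ("purchase", 900)]
    for src, dst, rate in funnel_dropoff(funnel):
        print(f"{src} -> {dst}: {rate:.0%} drop-off")
    print("Biggest leak:", biggest_leak(funnel))
```

This only shows where users leave; the heatmaps, recordings, and surveys described above are still needed to explain why they leave.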

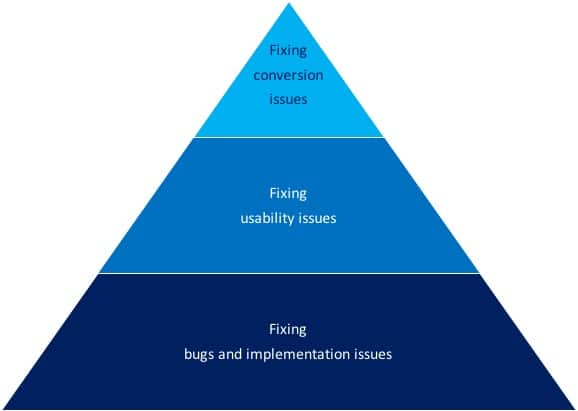

Marketers often view a website as a digital storefront, but a more accurate analogy is a sales conversation. When conversions stall, it indicates a breakdown in that conversation. The Scrutinize phase identifies the specific moment of friction. This audit typically reveals three categories of issues: bugs, usability flaws, and conversion blockers.

The Critical Distinction: Usability vs. Conversion

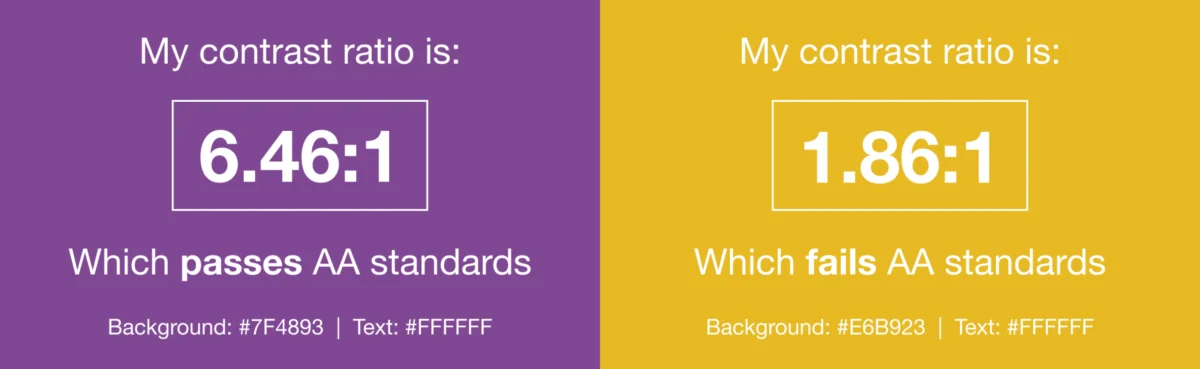

A common pitfall in CRO is the failure to distinguish between a user-friendly site and a high-converting site. While the two concepts are related, they are not identical.

Usability Issues refer to technical or design hurdles that prevent a user from completing a task. Examples include broken links, slow page load speeds, or a navigation menu that is difficult to use on mobile devices. These are "hygiene" factors; fixing them removes barriers but does not necessarily persuade a user to buy.

Conversion Issues are psychological barriers. A site may be perfectly usable, but if it fails to build trust, lacks a clear value proposition, or does not address user fears, the visitor will not convert. Conversion optimization focuses on persuasion, utilizing social proof, urgency, and clarity to motivate action. Experts note that while every usability issue is a conversion issue, the reverse is not true. A perfectly functional page can still have a 0% conversion rate if the offer is not compelling.

Engineering the Hypothesis: From Intuition to Science

Once research opportunities are identified during the Scrutinize phase, they must be translated into hypotheses. A hypothesis is a predictive statement that links a specific change to a measurable outcome.

The SHIP model distinguishes between an initial hypothesis and a concrete hypothesis. An initial hypothesis is a preliminary idea, such as "Adding social proof will increase trust." While useful for brainstorming, it lacks the specificity required for a rigorous experiment.

A concrete hypothesis follows a structured format: "Based on qualitative data from user polls showing a lack of brand trust, we believe that adding customer testimonials to the checkout page will address user uncertainty, resulting in a 10% increase in completed purchases." This level of detail ensures that the team understands exactly what is being tested and what the expected impact should be, allowing for more accurate post-test analysis.
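Because the concrete format has fixed slots, it can be captured as a small record so that every hypothesis in a backlog carries the same fields. This is a hypothetical Python sketch; the field names and the `was_met` helper are illustrative, and `was_met` compares point estimates only, saying nothing about statistical significance.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    evidence: str             # research finding that motivates the test
    change: str               # the specific change being tested
    mechanism: str            # why the change should work
    metric: str               # the measurable outcome
    expected_lift_pct: float  # predicted relative lift, in percent

    def render(self) -> str:
        """Fill the 'Based on ..., we believe that ..., resulting in ...' template."""
        return (
            f"Based on {self.evidence}, we believe that {self.change} "
            f"will {self.mechanism}, resulting in a "
            f"{self.expected_lift_pct:g}% increase in {self.metric}."
        )

    def was_met(self, control_rate: float, variant_rate: float) -> bool:
        """Post-test check: did the observed relative lift reach the prediction?"""
        observed_pct = (variant_rate - control_rate) / control_rate * 100
        return observed_pct >= self.expected_lift_pct


if __name__ == "__main__":
    checkout = Hypothesis(
        evidence="qualitative data from user polls showing a lack of brand trust",
        change="adding customer testimonials to the checkout page",
        mechanism="address user uncertainty",
        metric="completed purchases",
        expected_lift_pct=10,
    )
    print(checkout.render())
```

Rendering the example reproduces the checkout hypothesis quoted above, and storing the predicted lift as a number makes the post-test comparison explicit rather than a matter of memory.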

Comparative Analysis of Prioritization Frameworks

With dozens or even hundreds of potential testing ideas, prioritization becomes the most critical step in a CRO program. Several frameworks have emerged to help teams rank their ideas objectively.

The PIE Framework

Developed by WiderFunnel, the PIE framework evaluates ideas based on three criteria:

- Potential: How much improvement can be made on this page?

- Importance: How valuable is the traffic to this page? High-volume pages are prioritized.

- Ease: How easy is it to implement this test technically?

PIE is valued for its simplicity, but critics argue it can be overly subjective, because "Potential" is often a gut-feel estimate by the optimizer.
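PIE is commonly described as rating each criterion on a 1-10 scale and averaging the three ratings into one score. A minimal sketch under that assumption follows; the idea names and ratings are invented for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PieIdea:
    name: str
    potential: int   # 1-10: room for improvement on the page
    importance: int  # 1-10: value and volume of the page's traffic
    ease: int        # 1-10: higher means easier to implement

    def __post_init__(self) -> None:
        for value in (self.potential, self.importance, self.ease):
            if not 1 <= value <= 10:
                raise ValueError("PIE ratings are expected on a 1-10 scale")

    @property
    def score(self) -> float:
        """Unweighted mean of the three ratings."""
        return (self.potential + self.importance + self.ease) / 3


def rank_by_pie(ideas: List[PieIdea]) -> List[PieIdea]:
    """Highest PIE score first."""
    return sorted(ideas, key=lambda idea: idea.score, reverse=True)


if __name__ == "__main__":
    backlog = [
        PieIdea("Checkout testimonials", potential=8, importance=9, ease=4),
        PieIdea("Homepage hero copy", potential=5, importance=6, ease=9),
        PieIdea("Blog sidebar CTA", potential=9, importance=3, ease=8),
    ]
    for idea in rank_by_pie(backlog):
        print(f"{idea.score:.1f}  {idea.name}")
```

Because the mean weights all three ratings equally, a gut-feel Potential rating moves the final score exactly as much as the more measurable Importance rating, which is the subjectivity concern critics raise.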

The Hotwire Framework

Introduced by Pauline Marol, this model adds a layer of strategic alignment. It scores ideas based on the severity of the issue, the potential value of the fix, and how well the test aligns with broader business objectives. This ensures that the CRO team is not working in a vacuum but is contributing to the company’s long-term goals.
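The effect of adding a strategic-alignment criterion can be illustrated with a small scoring sketch. The source does not give Hotwire's scales or say how the three scores are combined, so the sketch below ASSUMES a 1-5 rating for each criterion and a plain sum; treat it as an illustration of the idea, not Hotwire's published formula. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import List

SCALE = range(1, 6)  # ASSUMPTION: 1-5 ratings, not taken from the published model


@dataclass
class HotwireIdea:
    name: str
    severity: int   # how serious the underlying issue is
    value: int      # potential value of fixing it
    alignment: int  # fit with broader business objectives

    def __post_init__(self) -> None:
        for rating in (self.severity, self.value, self.alignment):
            if rating not in SCALE:
                raise ValueError("ratings are assumed to be 1-5 in this sketch")

    @property
    def score(self) -> int:
        # ASSUMPTION: an unweighted sum; the real framework may weight criteria.
        return self.severity + self.value + self.alignment


def rank(ideas: List[HotwireIdea]) -> List[HotwireIdea]:
    """Highest combined score first."""
    return sorted(ideas, key=lambda idea: idea.score, reverse=True)


if __name__ == "__main__":
    backlog = [
        HotwireIdea("Redesign footer links", severity=4, value=4, alignment=1),
        HotwireIdea("Fix mobile navigation", severity=4, value=4, alignment=5),
    ]
    for idea in rank(backlog):
        print(idea.score, idea.name)
```

With severity and value held equal, the alignment rating alone decides the order, which is the kind of strategic tie-breaking the framework is meant to add.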

The PXL Framework

Created by CXL, the PXL framework attempts to remove subjectivity by using a binary scoring system. It asks specific questions: Is the change above the fold? Is it a change in motivation or just a change in color? Is it supported by data? By forcing "yes" or "no" answers, PXL provides a more objective ranking of ideas.
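Binary scoring of this kind reduces to counting "yes" answers. The sketch below uses only the three questions named in this section; CXL's actual PXL sheet asks more questions and gives some of them extra weight, so this illustrates the binary idea rather than reproducing the framework. The question keys are hypothetical identifiers.

```python
from typing import Dict, List, Tuple

# The three questions named in the text, keyed by hypothetical identifiers.
PXL_QUESTIONS = (
    "above_the_fold",      # Is the change above the fold?
    "changes_motivation",  # Does it change motivation, not just a color?
    "supported_by_data",   # Is it supported by data?
)


def pxl_score(answers: Dict[str, bool]) -> int:
    """Count the 'yes' answers; every question must be answered."""
    missing = [q for q in PXL_QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    return sum(1 for q in PXL_QUESTIONS if answers[q])


def rank_ideas(ideas: Dict[str, Dict[str, bool]]) -> List[Tuple[str, int]]:
    """Return (idea, score) pairs, highest score first."""
    scored = [(name, pxl_score(answers)) for name, answers in ideas.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    backlog = {
        "Button color swap": {"above_the_fold": True, "changes_motivation": False,
                              "supported_by_data": False},
        "Testimonials near CTA": {"above_the_fold": True, "changes_motivation": True,
                                  "supported_by_data": True},
    }
    print(rank_ideas(backlog))
```

Requiring an answer to every question is the point of the design: an idea cannot score well on the strength of one enthusiastic estimate, which is how a binary sheet limits subjectivity.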

The Invesp Weighted Model: A Data-First Approach

The Invesp prioritization framework builds upon these previous models by introducing a weighted average system. This model recognizes that a single problem can be solved in multiple ways, each with a different implementation cost and potential impact.

The Invesp model evaluates research opportunities based on 11 distinct criteria, including:

- Method of Discovery: Items found through multiple research methods (e.g., both analytics and user testing) receive a higher weight.

- Type of Change: Adding or removing elements is weighted more heavily than simply changing the location or color of an element.

- User Psychology: Tests that address "FUDs" (Fears, Uncertainties, and Doubts) are prioritized.

- Strategic Value: Alignment with the core business KPIs.

Under the Method of Discovery criterion, an item identified through four or five different research methods receives a score of 12, whereas an item found through only one method receives a score of 3. This ensures that the roadmap is driven by the strongest evidence rather than the loudest voice in the room.
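The scoring described above splits into two pieces: a per-criterion score and a weighted average across criteria. The source gives only two anchor points for Method of Discovery (one method scores 3; four or five methods score 12), so the sketch ASSUMES 3 points per method capped at 12, which matches both. The criterion weights and the other scores in the example are hypothetical, not Invesp's published values.

```python
from typing import Dict


def discovery_score(num_methods: int) -> int:
    """Method of Discovery score.

    Matches the two values in the text (1 method -> 3, 4 or 5 methods -> 12);
    the 3-points-per-method rule in between is an ASSUMPTION.
    """
    if num_methods < 1:
        raise ValueError("an item must be found by at least one research method")
    return min(3 * num_methods, 12)


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of criterion scores (weights need not sum to 1)."""
    total_weight = sum(weights[c] for c in scores)
    return sum(scores[c] * weights[c] for c in scores) / total_weight


if __name__ == "__main__":
    # HYPOTHETICAL weights and scores for two fixes to the same trust problem.
    weights = {"discovery": 3, "type_of_change": 2, "addresses_fud": 2, "strategic_value": 1}
    add_guarantee_badge = {"discovery": discovery_score(4), "type_of_change": 9,
                           "addresses_fud": 10, "strategic_value": 8}
    recolor_trust_icons = {"discovery": discovery_score(4), "type_of_change": 3,
                           "addresses_fud": 4, "strategic_value": 8}
    for name, scores in [("add badge", add_guarantee_badge),
                         ("recolor icons", recolor_trust_icons)]:
        print(f"{name}: {weighted_score(scores, weights):.2f}")
```

Both candidate fixes address the same problem, so they share a discovery score; the weighted average separates them on type of change and FUD relief, reflecting the model's point that one problem can have several solutions with different expected impact.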

Strategic Implications and Business Growth

The implementation of a structured CRO playbook has profound implications for business scalability. In the current economic climate, where Customer Acquisition Costs (CAC) are rising across platforms like Meta and Google, the ability to convert a higher percentage of existing traffic is a significant competitive advantage.

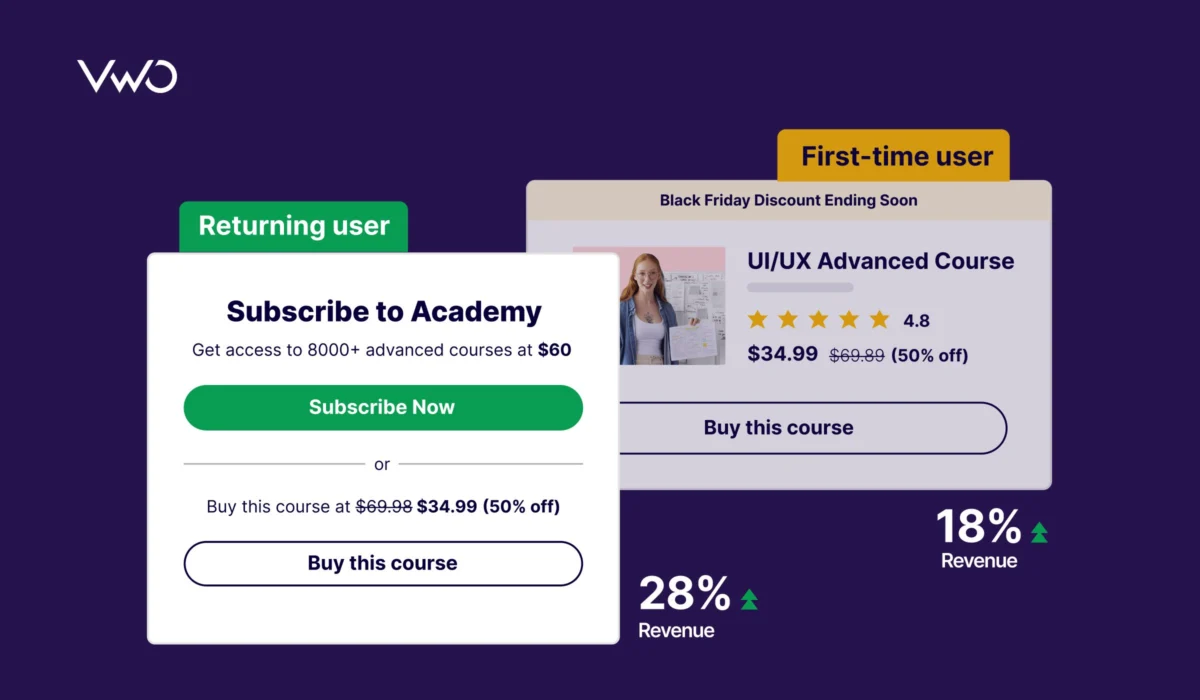

Data from recent industry reports indicates that companies that take a structured approach to conversion optimization are twice as likely to see a large increase in sales. Furthermore, the "Propagate" phase of the SHIP model ensures that learnings are not siloed. If a test proves that urgency-based messaging works on a product page, that insight can be applied to email marketing, paid search ads, and offline collateral.

However, experts caution that there is no "perfect" framework. The most successful organizations are those that adapt these models to their unique market conditions, competition, and internal culture. As Chris Goward, founder of WiderFunnel and creator of the PIE framework, has noted, the priority rating of a test page must depend on the unique business environment.

Timeline of CRO Evolution

The discipline of CRO has evolved significantly over the last two decades:

- Early 2000s: The "Wild West" era of A/B testing, characterized by simple button-color tests and "gut-feeling" changes.

- 2010-2015: The rise of formal frameworks like PIE and the introduction of user-friendly testing platforms like Optimizely and VWO.

- 2016-2021: The shift toward "Experience Optimization," where CRO became integrated with UX design and data science.

- 2022-Present: The era of AI-driven optimization and hyper-personalization, where frameworks like SHIP and PXL are used to manage complex, multi-channel experimentation programs.

Conclusion

A successful CRO program is not defined by the number of tests it runs, but by the quality of the insights it generates and the revenue it captures. By moving away from low-impact "tweaking" and toward a rigorous, research-based methodology like the SHIP model, businesses can transform their websites from static brochures into dynamic sales engines. Prioritization frameworks serve as the essential filter in this process, directing limited resources toward the opportunities with the highest potential for impact. As digital landscapes become more crowded, the precision offered by these strategic playbooks is likely to remain a key differentiator between market leaders and those struggling to maintain profitability.