The rapid proliferation of Large Language Models (LLMs) and generative artificial intelligence has necessitated a robust framework for content governance to prevent the dissemination of harmful, biased, or illicit material. OpenAI, a leader in the generative AI space, has addressed this requirement through the release and refinement of its moderation tools, most notably the omni-moderation-latest model. This model represents a significant technological leap from legacy systems, offering a multimodal approach that integrates both text and image analysis into a single, high-performance safety layer. By leveraging the architecture of GPT-4o, the Omni Moderation model provides developers with a sophisticated, zero-cost solution for identifying and filtering content that violates safety guidelines, ensuring that AI-driven applications remain secure for public and enterprise use.

The Evolution of AI Content Governance and Safety Layers

The journey toward reliable AI moderation has been iterative. In the early stages of LLM development, safety was often handled via manual "hard-coding" of banned words or simple keyword filters. However, as AI became more capable of understanding nuance, intent, and context, these primitive methods proved insufficient. OpenAI initially introduced the text-moderation-latest model, served through its moderation endpoint, to provide a more semantic approach to safety. While that model was effective for text, the rise of multimodal AI, capable of processing images, audio, and video, demanded a more comprehensive tool.

The introduction of the omni-moderation-latest model marks a pivotal point in this chronology. Built upon the foundations of GPT-4o, OpenAI’s flagship multimodal model, the Omni Moderation tool is designed to understand the interplay between different media types. In a modern digital environment where harmful content often takes the form of memes, doctored images, or text embedded within visuals, a text-only moderation tool is no longer a complete defense. The Omni model fills this gap by providing a unified interface for analyzing diverse data inputs, reflecting the industry’s broader shift toward holistic AI safety.

Technical Specifications and Multimodal Capabilities

The Omni Moderation API is designed to be both accessible and highly granular. Unlike calls to generative models, which are billed by token usage, requests to the moderation endpoint are free. This strategic decision encourages developers to prioritize safety without the burden of additional operational expenses.

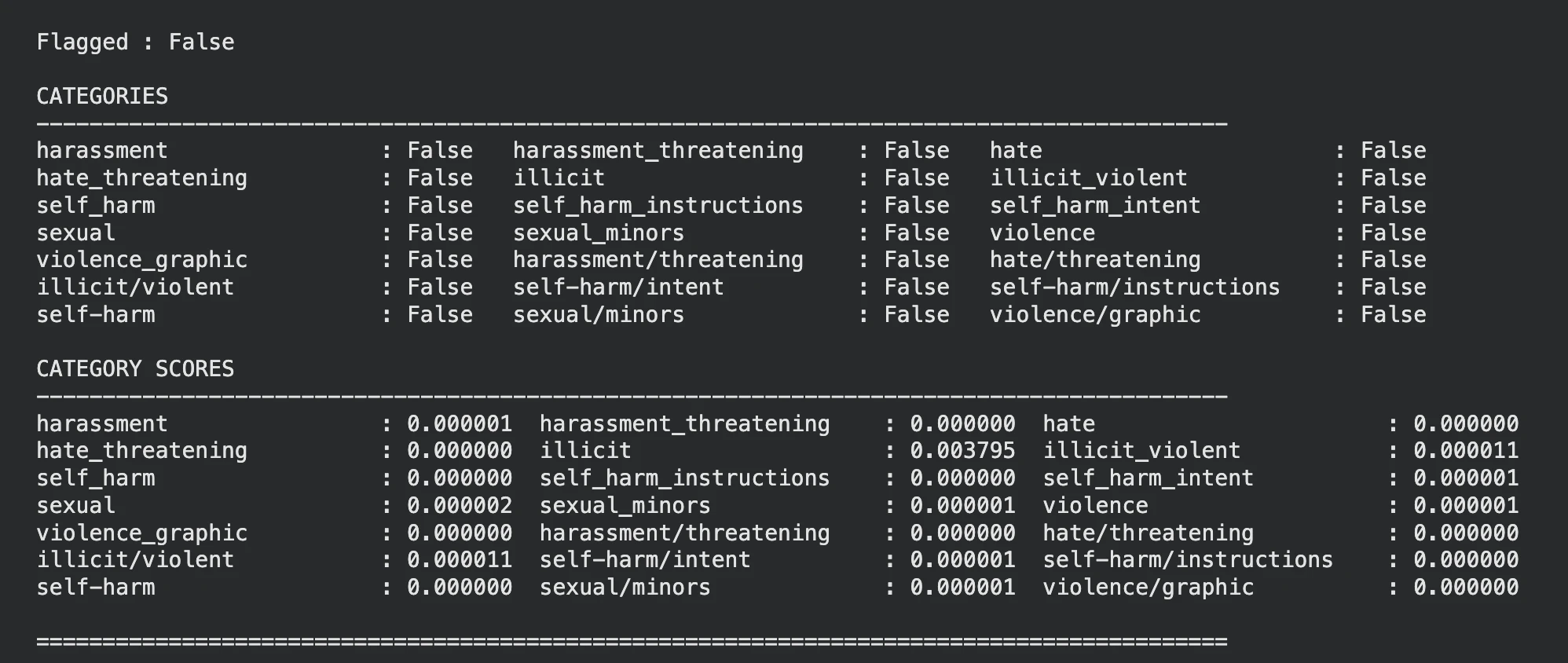

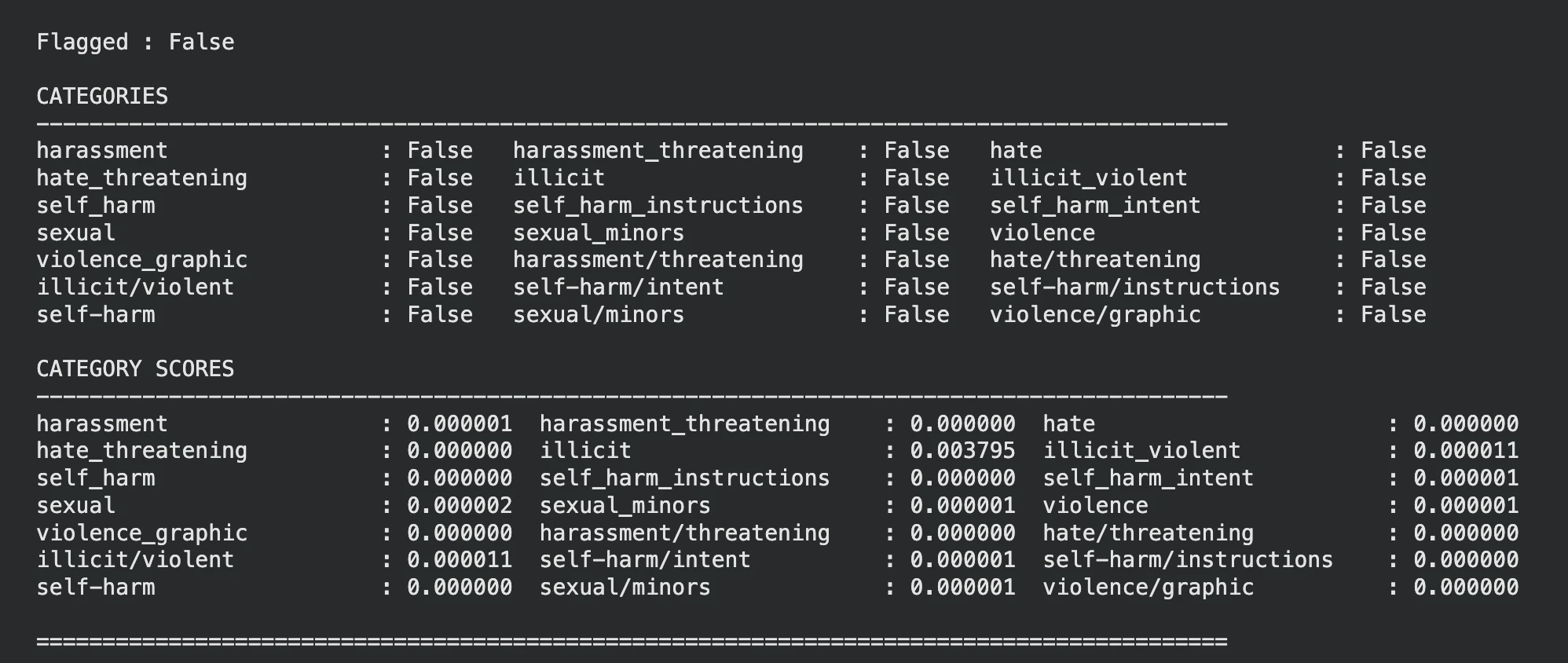

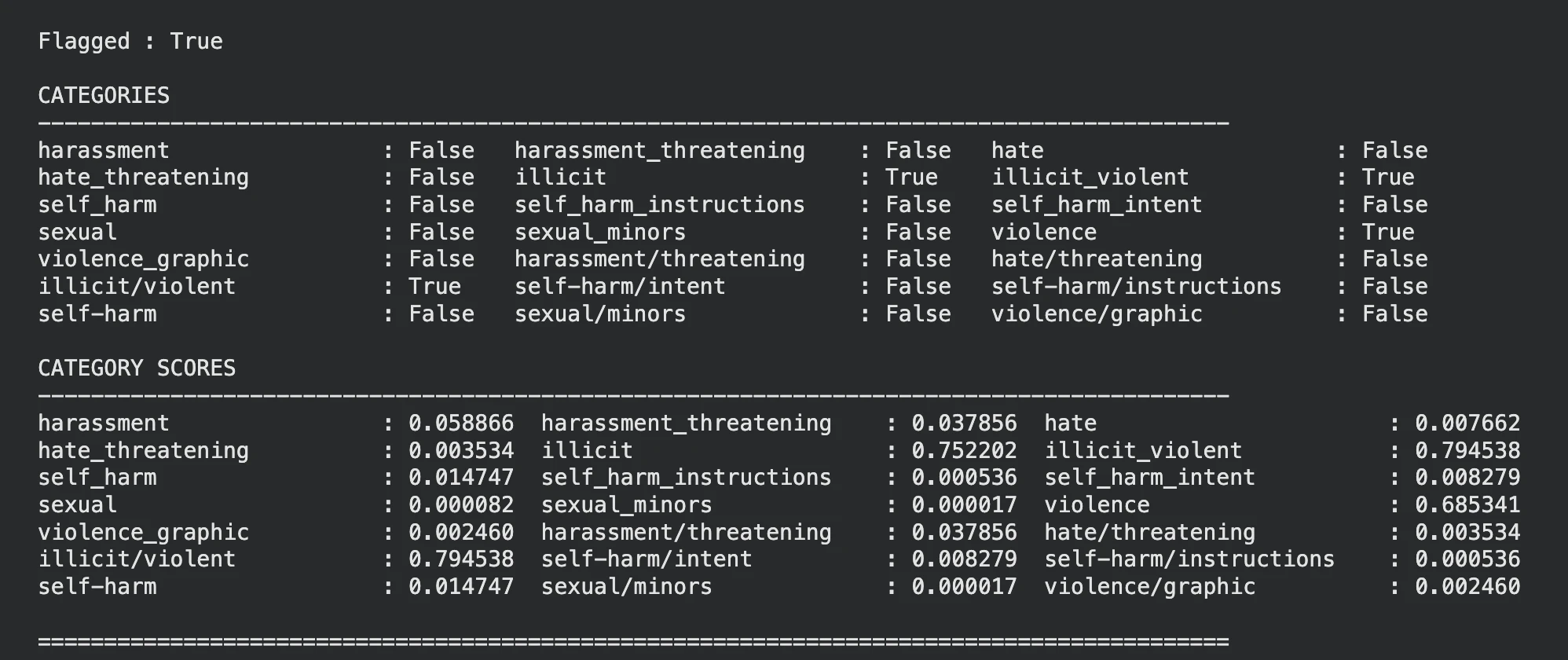

The model classifies input against several critical safety categories. These include, but are not limited to:

- Hate/Threatening: Content that expresses, incites, or promotes hate based on race, gender, religion, or other protected characteristics, specifically including threats of violence.

- Self-Harm: Content that encourages, provides instructions for, or promotes self-injury or suicide.

- Sexual/Minors: Sexually explicit content, including sexual material that involves minors.

- Violence/Graphic: Content that depicts death, physical injury, or extreme violence in graphic detail.

- Harassment: Content that harasses, intimidates, or demeans individuals.

The "Omni" aspect of the model allows image inputs, supplied as image URLs, to be checked alongside text strings. When an image is submitted, the model performs a visual analysis to determine whether the content depicts prohibited themes such as graphic violence, self-harm, or sexual content. Not every category is evaluated on images; some are applied to text only. This dual capability is essential for platforms that allow user-generated content, such as social media applications, educational forums, and customer support chatbots.
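Category coverage differs by input type. The sketch below encodes that split as it is understood from OpenAI's moderation guide at the time of writing; the grouping is an assumption that should be checked against the current documentation, and the helper name `applicable_categories` is purely illustrative.

```python
# Assumption: modality support per category, as understood from OpenAI's
# moderation guide at the time of writing. Verify before relying on it.
TEXT_AND_IMAGE = {
    "self-harm", "self-harm/intent", "self-harm/instructions",
    "sexual", "violence", "violence/graphic",
}
TEXT_ONLY = {
    "harassment", "harassment/threatening",
    "hate", "hate/threatening",
    "illicit", "illicit/violent",
    "sexual/minors",
}


def applicable_categories(input_type: str) -> list:
    """Return the categories that can be scored for a given input type."""
    if input_type == "text":
        return sorted(TEXT_AND_IMAGE | TEXT_ONLY)
    if input_type == "image":
        return sorted(TEXT_AND_IMAGE)
    raise ValueError(f"Unknown input type: {input_type!r}")


print(applicable_categories("image"))
```

A platform that accepts image uploads can use a lookup like this to decide which risks still need a separate control, for example a text check on captions.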

Chronology of OpenAI’s Safety Tooling

To understand the impact of the Omni model, it is necessary to examine the timeline of OpenAI’s safety releases:

- 2022: Initial Moderation API: OpenAI launched its first dedicated moderation endpoint, focusing on text-based classification to help developers align with the company’s usage policies.

- 2023: Refinement of Classifiers: The company updated its models to reduce false positives and improve detection in sensitive categories like self-harm and hate speech.

- May 2024: The Launch of GPT-4o: With the debut of GPT-4o, the infrastructure for multimodal understanding was established, allowing for simultaneous processing of text, audio, and images.

- Late 2024: Omni Moderation Model Release: The omni-moderation-latest model was introduced to the public, effectively deprecating older text-only models for new high-performance applications.

This progression highlights a move away from siloed safety checks toward an integrated "safety-by-design" philosophy, where the moderation tools are as sophisticated as the generative models they monitor.

Implementation and Developer Workflow

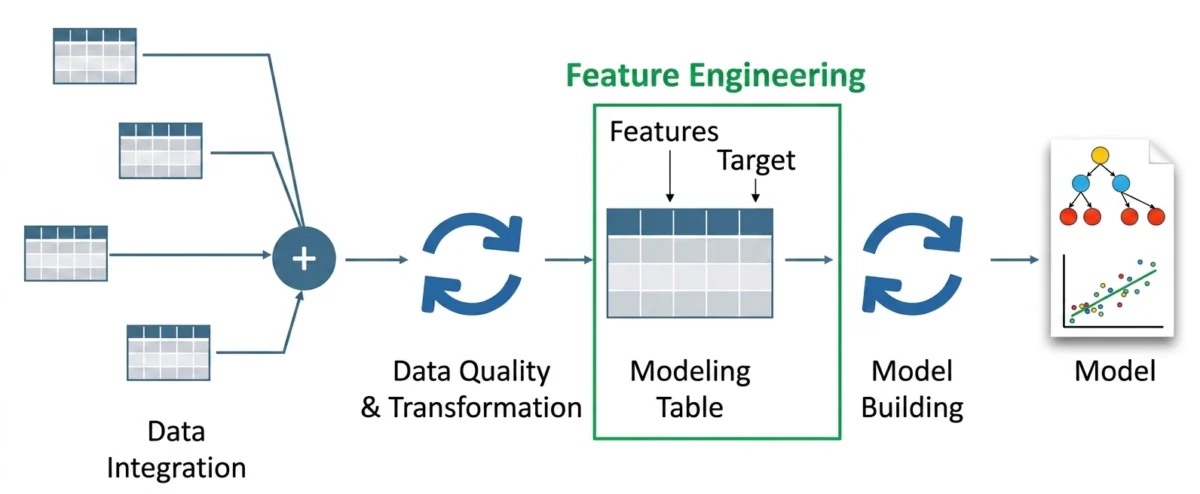

For developers seeking to integrate this safety layer, the process is streamlined to minimize friction. The prerequisite remains a standard OpenAI API key, which grants access to the free moderation endpoint. The workflow typically involves initializing an OpenAI client and sending a request to the moderations.create method.

In a practical environment, such as a Google Colab notebook or a production backend, the implementation follows a structured pattern. Developers set the model to omni-moderation-latest and provide the input. For text-only moderation, the input can be a simple string. For image moderation, the input is a list of typed objects in which each image is an entry of type image_url carrying the image's URL; a text entry can be included in the same list so that both are evaluated in one request.
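A minimal sketch of this request pattern, assuming the official `openai` Python package (version 1 or later) is installed and an `OPENAI_API_KEY` environment variable is set. The helper names `build_moderation_input` and `moderate` are illustrative and not part of the SDK, and the image URL in the usage comment is a placeholder.

```python
from typing import Optional


def build_moderation_input(text: Optional[str] = None,
                           image_url: Optional[str] = None) -> list:
    """Build the multimodal `input` list for a moderation request."""
    parts = []
    if text is not None:
        parts.append({"type": "text", "text": text})
    if image_url is not None:
        parts.append({"type": "image_url", "image_url": {"url": image_url}})
    if not parts:
        raise ValueError("Provide text, an image URL, or both.")
    return parts


def moderate(text: Optional[str] = None, image_url: Optional[str] = None):
    """Send one moderation request and return the first result object."""
    # Imported lazily so the payload helper above can be used offline.
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.moderations.create(
        model="omni-moderation-latest",
        input=build_moderation_input(text, image_url),
    )
    return response.results[0]


# Usage (performs a network call, so it is left commented out):
# result = moderate(text="Example user message",
#                   image_url="https://example.com/uploaded-image.png")
# print(result.flagged, result.category_scores)
```

Separating payload construction from the network call keeps the request shape easy to unit test without an API key.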

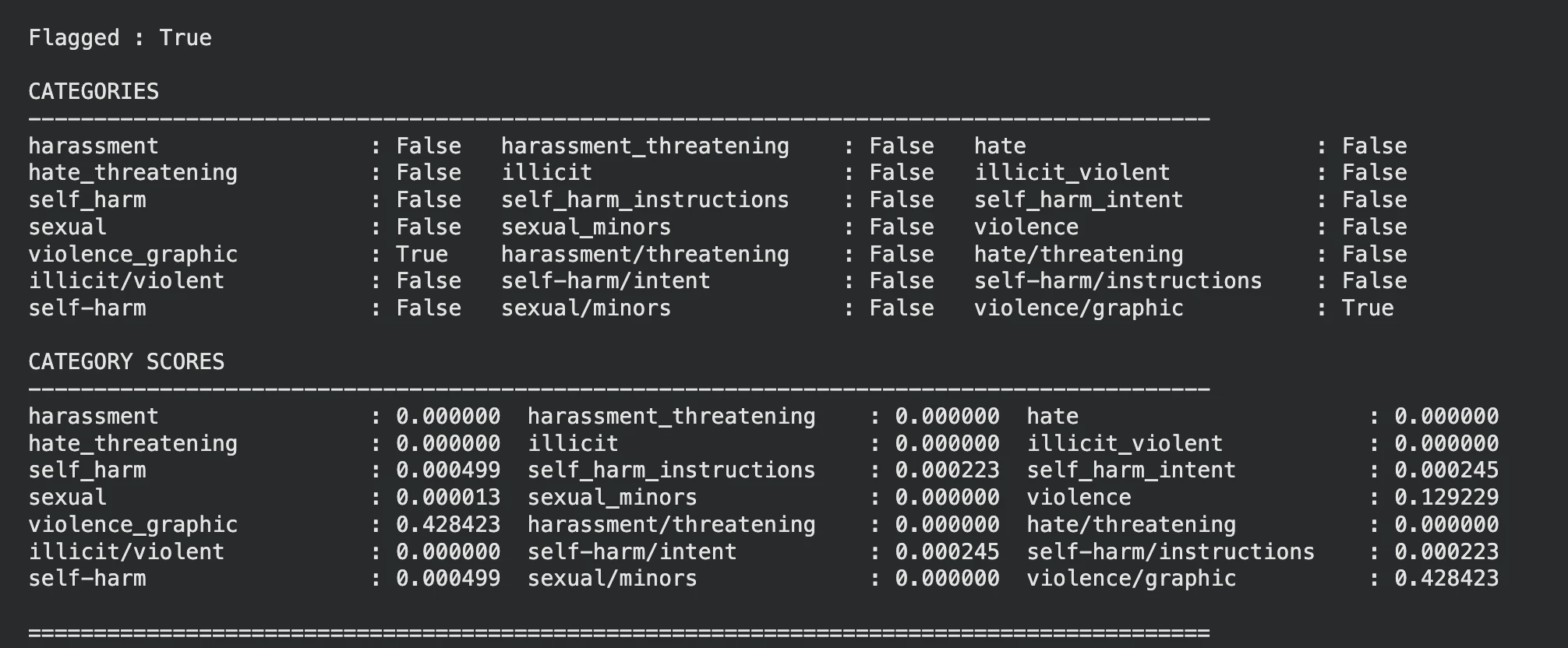

The response from the API is structured to provide both a binary "flagged" status and a detailed breakdown of "category scores." The binary status indicates whether the content exceeds OpenAI’s internal threshold for any given category. However, the category scores—represented as floating-point numbers between 0 and 1—offer developers the flexibility to set their own thresholds. A social media platform designed for children might implement a very low threshold for violence, whereas a news reporting tool might allow for higher scores in the same category to accommodate journalistic content.
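The custom-threshold idea can be sketched as follows. This is an illustration under stated assumptions: the threshold values, the `default` cutoff, and the example scores are all made up, and `categories_over_threshold` is a hypothetical helper that expects the category scores to have been copied from the API response into a plain dictionary.

```python
from typing import Dict, List

# Hypothetical per-product thresholds; the numbers are illustrative only.
CHILDRENS_APP_THRESHOLDS = {"violence": 0.05, "violence/graphic": 0.02}
NEWSROOM_THRESHOLDS = {"violence": 0.80, "violence/graphic": 0.60}


def categories_over_threshold(scores: Dict[str, float],
                              thresholds: Dict[str, float],
                              default: float = 0.5) -> List[str]:
    """Return the categories whose score meets or exceeds its threshold.

    Categories without an explicit threshold fall back to `default`.
    """
    return sorted(
        name for name, score in scores.items()
        if score >= thresholds.get(name, default)
    )


# Made-up scores standing in for the category scores of one API result.
example_scores = {"violence": 0.31, "violence/graphic": 0.04, "harassment": 0.01}

print(categories_over_threshold(example_scores, CHILDRENS_APP_THRESHOLDS))
# → ['violence', 'violence/graphic']
print(categories_over_threshold(example_scores, NEWSROOM_THRESHOLDS))
# → []
```

The same content is blocked by the stricter children's profile and allowed by the newsroom profile, which is the behavior the paragraph above describes.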

Comparative Analysis: OpenAI vs. Industry Alternatives

OpenAI is not alone in the content safety market, and a comparative analysis reveals the competitive landscape. Microsoft’s Azure AI Content Safety is perhaps the most direct competitor. Azure’s offering provides similar multimodal moderation but includes enterprise-grade features such as "Jailbreak Detection" and "Protected Material Detection," which identifies copyrighted content.

The Perspective API, developed by Google’s Jigsaw unit, remains a popular choice for text-based toxicity detection, particularly in the realm of online comments. However, Perspective has traditionally lacked the deep multimodal integration found in the Omni Moderation model. Meta has also contributed to this space with "Llama Guard," a model designed specifically for safeguarding human-AI conversations, though self-hosting it typically requires more local compute resources than OpenAI’s API-based approach.

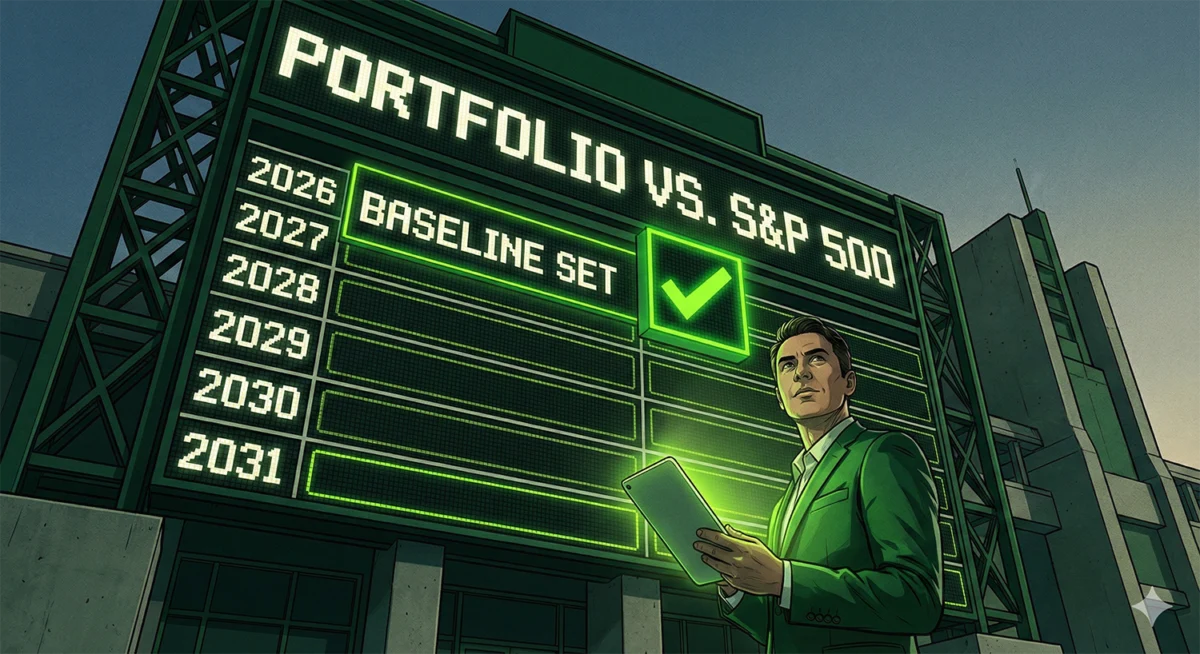

The primary advantage of OpenAI’s Omni Moderation model lies in its cost-to-performance ratio. By offering a GPT-4o-based tool for free, OpenAI has effectively set a new baseline for what developers expect from a safety API.

Broader Implications for AI Ethics and Regulation

The release of advanced moderation tools comes at a time of increasing regulatory scrutiny. The European Union’s AI Act and recent executive orders in the United States emphasize the responsibility of AI providers to mitigate risks. Tools like the Omni Moderation model provide a technical mechanism for companies to demonstrate compliance with these emerging "duty of care" standards.

Furthermore, the shift to multimodal moderation addresses the growing concern over "AI-generated harms." As deepfakes and AI-generated imagery become more realistic, the ability to automatically flag non-consensual or violent imagery is critical for maintaining digital trust. The Omni model acts as a frontline defense against the weaponization of generative AI.

However, industry experts note that automated moderation is not a panacea. There are ongoing discussions regarding "over-moderation" or "censorship," where AI might flag legitimate artistic expression or political speech due to a lack of nuanced cultural context. OpenAI addresses this by encouraging developers to use the category scores to fine-tune the model’s sensitivity to their specific use cases.

Potential Use Cases and Future Outlook

The applications for the Omni Moderation model are vast. In the corporate sector, it can be used to monitor internal communication channels to prevent workplace harassment. In the gaming industry, it can be integrated into chat systems to filter toxic behavior in real-time. Educational platforms can use it to ensure that student-AI interactions remain focused on learning and free from inappropriate content.

Looking forward, the evolution of moderation will likely move toward "context-aware" safety. Future iterations of the Omni model may be able to understand not just what is in an image or text, but the intent behind it—distinguishing, for example, between a medical photo of a wound and a depiction of senseless violence.

Conclusion

The omni-moderation-latest model represents a significant achievement in the field of AI safety. By providing a multimodal, high-accuracy, and cost-free tool, OpenAI has empowered developers to build safer and more reliable AI systems. As the digital landscape continues to evolve with more complex media types, the integration of such sophisticated moderation layers will be essential for the responsible growth of artificial intelligence. While challenges regarding nuance and cultural context remain, the move toward a unified, "omni" approach to safety is a clear indication of the industry’s commitment to mitigating the risks of the generative era. For any organization deploying LLM-based systems, the adoption of this safety layer is not merely a technical recommendation but a fundamental step toward ethical AI deployment.