Imagine a web where AI agents, not just humans, interact with websites. In the conventional web experience, people click, scroll, and search; AI agents can instead navigate, interpret, and even perform tasks autonomously on your site. This is not a futuristic concept; it is already unfolding. It marks the emergence of the agentic web, a profound architectural shift poised to redefine how we interact with digital information and services.

The Paradigm Shift: From Human-Centric to Agent-Ready Web

For decades, the internet has primarily served as a human interface. Users navigated websites by manually entering queries into search engines, clicking on results, comparing information across various pages, and painstakingly completing tasks such as filling out forms or making purchases. Even with advancements in search engine algorithms and user experience design, the fundamental interaction model remained largely unchanged: a human actively engaging with a passive digital environment.

This foundational model is now undergoing a radical transformation. The agentic web represents a monumental shift from a digital landscape designed exclusively for human users to one meticulously crafted for seamless interaction between both people and intelligent AI assistants. In this evolving paradigm, the laborious manual processes of researching products, comparing services, completing forms, and executing transactions are increasingly being delegated to sophisticated AI assistants. These agents possess the capability to autonomously search, interpret vast quantities of information, and act decisively on behalf of their human principals. Consequently, the user’s role evolves from that of an active navigator and executor to a higher-level decision-maker, guiding and overseeing the AI’s delegated actions. The shift is palpable: from searching to delegating.

Crucially, this evolution transcends mere "smarter chat interfaces." It signifies the rise of truly autonomous agents capable of interpreting complex user intent, comparing a multitude of options across diverse platforms, and executing concrete actions. Websites are no longer merely static pages to be visited; they are transforming into dynamic endpoints to be queried, understood, and acted upon by intelligent systems. For this vision to materialize at scale, intelligence cannot be confined to a singular assistant or a closed platform. It necessitates a distributed model where systems can communicate with other systems frictionlessly. This mandates a web that is inherently machine-readable, robustly interoperable, and fundamentally built for sophisticated agent-to-agent interaction. The agentic web is not a distant prediction; it is an architectural overhaul already well underway, reshaping the very fabric of our digital world.

The Foundation of Interoperability: Protocol Thinking

If the agentic web’s essence lies in intelligent systems interacting autonomously with websites, then the fundamental question becomes: how do these disparate systems achieve mutual understanding? The answer, unequivocally, lies not in visual design or superficial aesthetics, but in robust infrastructure and shared communication standards.

The internet’s unparalleled success has always been predicated on a foundation of shared communication rules and protocols. From the inception of the World Wide Web, standards like HTTP (Hypertext Transfer Protocol) enabled browsers to request and receive web pages, forming the bedrock of client-server communication. Later, RSS (Really Simple Syndication) emerged to facilitate the distribution of content updates, allowing information to flow automatically. More recently, structured data vocabularies, such as those defined by Schema.org, have empowered search engines to interpret the semantic meaning of content, moving beyond mere keywords to understand entities, relationships, and context. These are not mere "features"; they are foundational agreements that enable large-scale coordination, interoperability, and the seamless exchange of information across a globally distributed network.

Now, this same fundamental logic is being applied to the burgeoning domain of AI agents. In the agentic web, AI agents will not emulate human browsing behavior by visually clicking buttons or scanning pages for information. Instead, they will engage in a more direct, programmatic interaction: sending precisely formulated requests, interpreting structured responses, comparing a multitude of options programmatically, and completing tasks with machine precision. For this complex interplay to function effectively across potentially millions of diverse websites, communication cannot be improvised or ad-hoc; it must be rigorously standardized.

This is precisely where "protocol thinking" becomes not just advantageous, but absolutely essential. Protocol thinking involves designing websites and their underlying data structures in a manner that makes them predictably understandable for machines. Rather than requiring developers to build bespoke, custom integrations for every new AI assistant or platform that emerges, websites will expose a consistent, standardized interaction layer. This means agents do not need to "learn" every unique interface; instead, they rely on a common set of shared rules and communication protocols.

As emphasized in contemporary discussions surrounding distributed intelligence, the ultimate objective is not to centralize control within a single, all-powerful chatbot or AI. Rather, intelligence must be distributed across the vast expanse of the web. Systems require a streamlined, simplified mechanism to communicate effectively without needing to delve into the intricate technical specifics of every individual tool or service they connect to. This level of seamless interaction is only achievable when there exists a common ground, a universal language for machine-to-machine communication.

In practical terms, this entails:

- Standardized APIs: Providing well-documented, machine-readable interfaces for data access and task execution.

- Rich Structured Data: Embedding semantic markup (like Schema.org) that explicitly defines the entities, actions, and relationships on a page, enabling AI to understand content contextually.

- Common Interaction Models: Developing patterns for how agents initiate, manage, and complete tasks, regardless of the specific website or service.

- Interoperable Data Formats: Ensuring that data exchanged between agents and websites is in universally understood formats, preventing data silos.

Protocols are the architects of this shared language, building the essential infrastructure for the agentic web to flourish.
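To make the "rich structured data" item more concrete, here is a minimal sketch of what Schema.org markup looks like when serialized as JSON-LD. The product, price, and URL are invented for illustration, and the helper names `product_schema` and `to_jsonld` are hypothetical; only the Schema.org types and properties (`Product`, `Offer`, `price`, `priceCurrency`, `availability`) come from the public vocabulary.

```python
import json


def product_schema(name: str, price: str, currency: str, url: str) -> dict:
    """Describe a product with Schema.org types (illustrative values only)."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
        },
    }


def to_jsonld(schema: dict) -> str:
    """Serialize the description as the JSON-LD a page would embed."""
    return json.dumps(schema, indent=2)


if __name__ == "__main__":
    # Hypothetical product on a placeholder domain.
    schema = product_schema(
        "Classic Mayonnaise", "3.49", "USD",
        "https://example.com/products/classic-mayonnaise",
    )
    print(to_jsonld(schema))
```

A page would typically embed this output in a `script` element of type `application/ld+json`, which gives an agent explicit fields to read instead of prose to interpret.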

Microsoft’s Vision: Introducing NLWeb

Recognizing the critical need for standardized communication in this evolving digital landscape, Microsoft unveiled NLWeb. First introduced in May 2025 as an open project, NLWeb was designed with a singular, ambitious goal: to simplify the process for websites to offer rich, natural language interfaces using their own proprietary data and preferred AI models. Later, in November 2025, at the widely anticipated Microsoft Ignite event, Microsoft presented NLWeb again, this time alongside its initial enterprise offering through Microsoft Foundry, signaling its readiness for broader adoption.

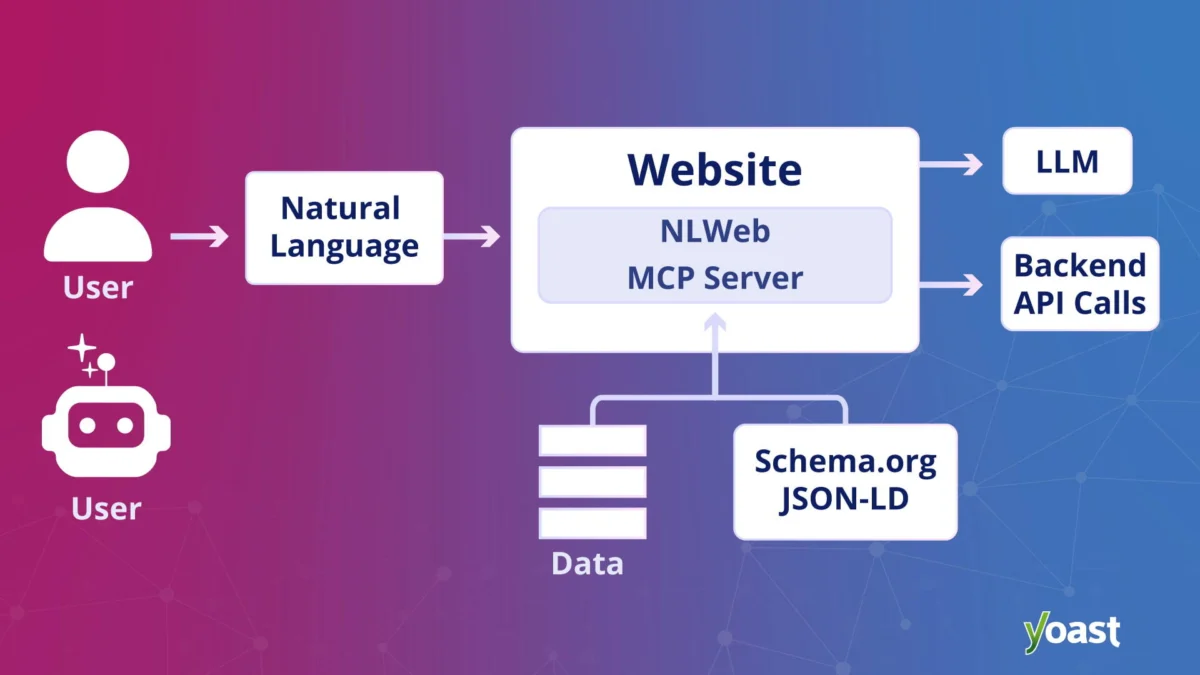

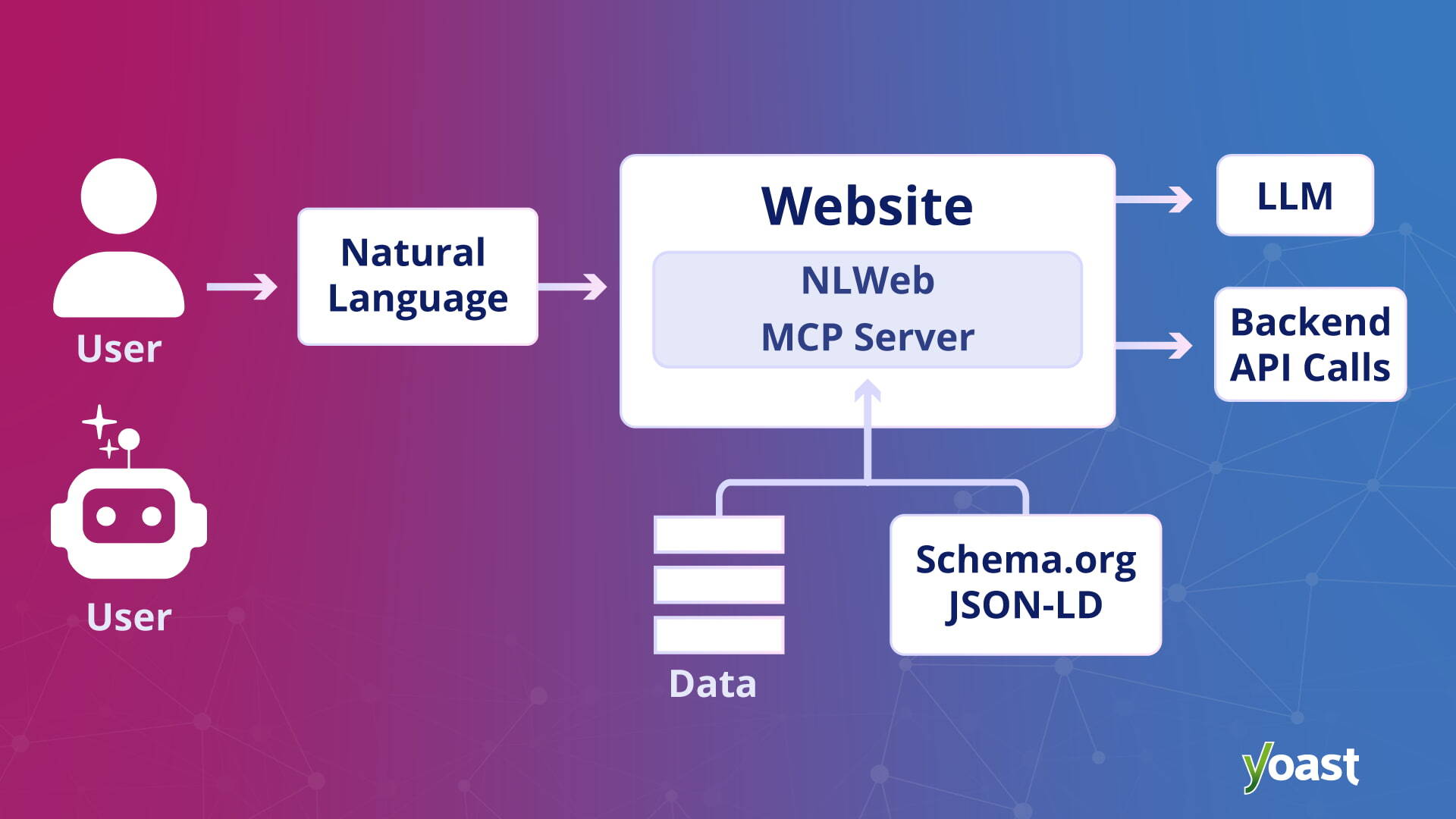

At its fundamental core, NLWeb aims to empower any website to function with the agility and responsiveness of a sophisticated AI application. Instead of users manually navigating through a labyrinth of pages, both human users and AI agents can directly query a site’s content using intuitive natural language. For instance, a user might ask, "What are the return policies for electronics bought last month?" or an agent might query, "Find the best flight deals from London to New York for July," and the website, through NLWeb, would provide a direct, concise answer drawn from its own data.

However, NLWeb is significantly more than just a conversational overlay. Each instance of NLWeb also functions as a Model Context Protocol (MCP) server. This technical detail means that when a website enables NLWeb, it becomes discoverable and accessible to other agents operating within the MCP ecosystem. In simpler terms, AI agents no longer require cumbersome, custom-built integrations for every individual website. If a website supports NLWeb, agents can recognize its capabilities and interact with it in a standardized, predictable manner. This removes a major barrier to widespread agent adoption and fosters a more interconnected digital environment.

NLWeb intelligently builds upon existing, widely adopted web formats that websites already leverage, such as Schema.org for structured data and RSS for content syndication. It combines this meticulously structured data with the advanced capabilities of large language models (LLMs) to dynamically generate coherent and accurate natural language responses. This innovative approach allows websites to expose their valuable content in a format that is not only comprehensible to human users but also perfectly digestible and actionable for AI agents.

Importantly, NLWeb is designed to be technology agnostic. Site owners retain complete freedom to choose their preferred underlying infrastructure, AI models, and database solutions. The overarching objective is to foster broad interoperability, not to enforce platform lock-in. In many respects, NLWeb is strategically positioned to fulfill a role in the agentic web analogous to what HTML (Hypertext Markup Language) accomplished for the early internet. It provides a shared, universal communication layer that enables agents to query websites directly, moving beyond the limitations of traditional web crawling or visual interface emulation. This marks a significant leap towards a more intelligent and automated web.

NLWeb’s Distinctive Approach to AI Interaction

A critical differentiator for NLWeb lies in how its methodology compares with standard Large Language Model (LLM) citations. In typical LLM scenarios, the model first generates an answer through probabilistic inference and then attempts to attach sources or citations after the fact. While impressive, this approach carries a risk of inaccuracies known as "hallucinations," where the model invents plausible-sounding but factually incorrect information. Because the response is probabilistic, it is not guaranteed to be grounded in verifiable facts.

NLWeb operates on a fundamentally different principle. It strategically treats the language model not as an inventor of facts, but as a highly intelligent retrieval layer. Instead of concocting answers, NLWeb is engineered to pull verified objects, data points, and factual information directly from the website’s own structured data. This raw, authoritative data is then presented in natural language by the LLM.

This distinction is paramount. It means that responses generated via NLWeb are grounded in the publisher’s own, authoritative data from the very outset. This reduces the propensity for hallucination and gives site owners considerably more control over how their content and brand are represented to both human users and AI agents. By prioritizing direct data retrieval over probabilistic generation, NLWeb offers a more reliable, accurate, and trustworthy interaction model for the agentic web.
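The retrieve-first idea can be sketched in a few lines. This is not NLWeb's implementation: the catalog is invented, the keyword-overlap scoring stands in for whatever retrieval a real deployment uses, and a plain string template stands in for the language model that would phrase the answer. What the sketch does show is the ordering: objects are fetched from the site's own data first, and the answer is assembled only from their fields.

```python
import re

# Hypothetical structured objects, as a site might hold them.
CATALOG = [
    {"@type": "Product", "name": "Classic Mayonnaise",
     "description": "Egg-based mayonnaise in a 400 g jar",
     "url": "https://example.com/classic-mayonnaise"},
    {"@type": "Product", "name": "Vegan Mayonnaise",
     "description": "Plant-based mayonnaise without egg",
     "url": "https://example.com/vegan-mayonnaise"},
    {"@type": "Product", "name": "Tomato Ketchup",
     "description": "Tomato ketchup in a squeezable bottle",
     "url": "https://example.com/tomato-ketchup"},
]


def tokens(text: str) -> set[str]:
    """Lowercase word set used for the toy overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, catalog: list[dict], limit: int = 2) -> list[dict]:
    """Return the catalog objects that share the most words with the query."""
    wanted = tokens(query)
    scored = []
    for item in catalog:
        overlap = len(wanted & tokens(item["name"] + " " + item["description"]))
        if overlap:
            scored.append((overlap, item))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [item for _, item in scored[:limit]]


def answer(query: str, catalog: list[dict]) -> str:
    """Phrase an answer using only fields of the retrieved objects."""
    items = retrieve(query, catalog)
    if not items:
        # Nothing retrieved means nothing is asserted.
        return "No matching items found in the site data."
    return "\n".join(
        f"{item['name']}: {item['description']} ({item['url']})"
        for item in items
    )


if __name__ == "__main__":
    print(answer("vegan mayonnaise", CATALOG))
```

When nothing is retrieved, the function says so instead of producing a guess, which is the behavior that keeps answers tied to the publisher's data.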

Implications for Search Engine Optimization (SEO) Professionals

As the web inexorably evolves to accommodate and empower AI agents, SEO professionals are confronting a new, existential question: how does one maintain visibility and influence when answers are increasingly generated and synthesized by AI, rather than merely ranked and listed in traditional search results? This query gained significant traction during Microsoft’s Ignite event, where a consultant eloquently described a client’s dilemma: a mayonnaise brand owner seeking to ensure their product appeared when an AI assistant was queried about mayonnaise. The question, seemingly simple, illuminated a profound shift: if AI systems generate authoritative answers instead of presenting a list of search results, what does the future of optimization truly entail?

This is where the paradigm shift for SEO becomes strikingly real. The agentic web is not a replacement for the open web; it is an additional, sophisticated layer built on top of it. Traditional search engines will continue to index pages, and conventional rankings will retain their importance for direct human browsing. However, intelligent systems now possess the capability to directly query websites, synthesize information across multiple sources, and generate highly condensed, tailored responses.

For SEOs, this fundamentally redefines the role and strategic purpose of a website. It is no longer sufficient to conceive of a website merely as a collection of pages designed to attract human visitors and secure high rankings. Instead, websites must be strategically treated as sophisticated data endpoints, purpose-built to be queried, understood, and acted upon by intelligent systems.

This mandates that structured data, meticulously organized information architecture, and machine-readable content transition from being mere "enhancements" for rich results to becoming the absolute foundation of visibility. These elements are the prerequisites that enable AI systems to even interpret, select, and leverage a website’s content in the first place. An absence of well-defined structured data means an AI agent may simply bypass a site, unable to effectively process its offerings. The ability to be "understood" by machines becomes the new frontier of discoverability.
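A small sketch shows why structured data matters so much to a machine reader. Assuming a page embeds Schema.org markup as JSON-LD in a `script` element of type `application/ld+json` (a common convention), an agent can pull out typed objects with nothing more than the Python standard library. The sample page and the names `JsonLdExtractor` and `extract_jsonld` are made up for this example.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every JSON-LD script block."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.items: list = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # Skip malformed markup rather than guess at it.


def extract_jsonld(html: str) -> list:
    """Return all JSON-LD objects found in an HTML document."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.items


# Hypothetical page used only for this demonstration.
PAGE = """<html><head><title>Classic Mayonnaise</title>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Classic Mayonnaise"}
</script></head><body><h1>Classic Mayonnaise</h1></body></html>"""

if __name__ == "__main__":
    for item in extract_jsonld(PAGE):
        print(item["@type"], "-", item["name"])
```

A page without such markup yields an empty list here, which mirrors the situation described above: the content may be perfectly readable to a person while offering an agent nothing explicit to work with.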

Broader Business and User Implications

The advent of the agentic web carries profound implications extending far beyond the realm of SEO, impacting businesses and end-users alike. For businesses, the shift offers unprecedented opportunities for automation and enhanced efficiency. Imagine customer service agents seamlessly retrieving complex product information or processing returns through direct agent-to-website communication, freeing up human staff for more nuanced interactions. Personalized marketing can reach new heights, with agents capable of understanding individual preferences and proactively presenting relevant offers by querying various vendor sites. Supply chain management could be optimized as agents autonomously track inventory, compare supplier prices, and even initiate reorders. This paradigm promises a significant reduction in operational friction and cost, fostering a new era of digital commerce and service delivery.

For end-users, the agentic web promises a dramatically more convenient and efficient online experience. The tedious chore of navigating multiple websites, comparing features, and filling out forms could become a relic of the past. Instead, users can delegate these tasks to their AI assistants, receiving synthesized answers and executed actions without ever needing to open a browser tab. This translates to substantial time savings and a frictionless user journey, empowering individuals to achieve their goals with minimal effort.

However, this transformative potential also brings challenges. Data privacy and security become paramount concerns. How will personal data be handled when agents are autonomously interacting with numerous sites? Robust protocols and regulatory frameworks will be essential to prevent misuse and ensure user consent. Ethical AI considerations also come to the fore; who is responsible if an agent makes an error, and how do we ensure fairness and transparency in agentic decision-making? The development of the agentic web will require ongoing collaboration between technologists, policymakers, and ethicists to navigate these complex questions.

Yoast’s Role in the Agentic Transition for WordPress

In a significant development accompanying the NLWeb announcement, Microsoft prominently highlighted Yoast as a key partner dedicated to bringing these advanced agentic search capabilities to the vast WordPress ecosystem. This collaboration, detailed in an official press announcement regarding "Yoast and Microsoft’s NLWeb integration," underscores the practical efforts being made to bridge the gap between cutting-edge AI protocols and everyday website management.

For the millions of WordPress site owners worldwide, abstract concepts such as "infrastructure," "endpoints," and "protocols" can often seem daunting and technically impenetrable. This is precisely where proactive preparation and strategic partnerships become invaluable. While Yoast does not automatically deploy NLWeb for its users, its core functionalities lay the critical groundwork. Specifically, the schema aggregation feature found in Yoast SEO, Yoast SEO Premium, Yoast WooCommerce SEO, and Yoast SEO AI+ plays a pivotal role. This feature meticulously organizes and structures a site’s content using standardized schema markup. Crucially, when site owners enable the relevant Yoast feature, there is no discernible visual alteration on the front end of their website. The transformative change occurs beneath the surface, within the underlying data structure.

In essence, Yoast strategically maps and organizes structured data, significantly reducing the technical effort and complexity otherwise required to build and integrate NLWeb on top of existing WordPress sites. Yoast empowers publishers by completing much of the foundational groundwork, ensuring their content is inherently machine-readable and semantically rich. This pre-processing makes a WordPress site "agent-ready," simplifying its integration into the NLWeb and broader agentic ecosystem.

The agentic web is not merely a fleeting trend to be chased; it is a fundamental evolution of the internet. For WordPress users, and indeed all website owners, it is about ensuring that their valuable content remains discoverable, understandable, and ultimately usable in a rapidly evolving digital world where intelligent systems increasingly act autonomously on behalf of human users. Yoast’s collaboration with Microsoft on NLWeb represents a proactive step towards empowering website owners to participate effectively in this future, emphasizing that visibility in this new layer hinges on clarity, interoperability, and robust data infrastructure.

The Road Ahead: Challenges and Opportunities

The journey towards a fully realized agentic web is complex, fraught with both immense opportunities and significant challenges. The technical infrastructure, while progressing rapidly with initiatives like NLWeb, still requires broader adoption and further standardization across the industry. Interoperability, while a core tenet, will demand ongoing collaboration between diverse technology companies to ensure seamless communication channels are established and maintained.

Beyond the technicalities, ethical considerations surrounding AI autonomy, data governance, and the potential for algorithmic bias will necessitate careful deliberation and robust regulatory frameworks. Ensuring that agents act in the best interests of their users, while respecting privacy and security, will be paramount. The potential for misinformation or manipulation through agentic interactions also poses a risk that needs proactive mitigation.

However, the opportunities presented by the agentic web are truly transformative. It promises a future where online interactions are exponentially more efficient, personalized, and proactive. From automating complex personal tasks to revolutionizing business operations, the ability for AI agents to intelligently navigate and act upon the web could unlock unprecedented levels of productivity and convenience. Content creators and businesses that embrace this shift by prioritizing structured, machine-readable data will be best positioned to thrive. The agentic web is not just an evolution of technology; it is a fundamental redefinition of our digital relationship, promising a future where intelligence is distributed, and interaction is seamless.