XML sitemaps serve as a fundamental navigational tool for search engines, acting as a directory that guides Google and other crawlers to the important pages within a website. This digital roadmap supports search engine optimization (SEO) by helping essential content get discovered and indexed quickly, even when a website’s internal linking structure is not flawless. Understanding the mechanics and strategic implementation of XML sitemaps is valuable for any website owner aiming to improve their online visibility and ensure their content is surfaced effectively, including by emerging AI agents.

Understanding XML Sitemaps: The Digital Roadmap

An XML sitemap is a specialized file, formatted in Extensible Markup Language (XML), that systematically lists a website’s vital pages. Its primary function is to enable search engines to efficiently locate and crawl the pages a site owner considers important. Beyond mere listing, sitemaps can carry metadata, such as each page’s last modification date, that helps search engines decide what to crawl and when, and so spend their crawl budget more effectively. This structured approach is particularly advantageous for large, complex, or newly launched websites, where organic discovery through traditional linking might be slow or incomplete.

Historically, as the internet grew in complexity and scale, search engines faced increasing challenges in comprehensively cataloging the vast amount of information available. The Sitemap Protocol, initially introduced by Google in 2005 and subsequently adopted by Yahoo and Microsoft (whose search engine is now Bing) in 2006, standardized the way webmasters could communicate directly with search engines about their site’s content. This protocol provided a streamlined method for publishers to declare all pages they wished to be indexed, complementing the existing process of discovering content via hyperlinks. Before sitemaps, search engine bots relied almost exclusively on following internal and external links, a method that often left "orphan pages" (pages with no incoming links) undiscovered. The introduction of sitemaps marked a significant step in giving webmasters greater control over their site’s indexability.

While XML sitemaps are the most common and specifically designed for search engines, it is worth noting that other sitemap formats exist, such as HTML sitemaps. HTML sitemaps are primarily intended for human users, offering a hierarchical, navigable list of pages to enhance user experience and site navigation. In contrast, XML sitemaps are machine-readable files, optimized solely for search engine bots, providing metadata that goes beyond simple URL listings.

The Anatomy of an XML Sitemap

An XML sitemap adheres to a strict, standardized format that search engines can readily parse and interpret. It is a plain text file written in XML, allowing for clear and rapid understanding of a website’s URLs and their associated attributes, such as the last modification date.

A basic XML sitemap containing a single URL would appear as follows:

    <?xml version="1.0" encoding="UTF-8"?>
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <loc>https://www.example.com/example-page/</loc>
        <lastmod>2024-01-01</lastmod>
      </url>
    </urlset>

Each URL within a sitemap is encapsulated by specific XML tags that convey important information to search engines. While some of these tags are mandatory for a valid sitemap, others are optional but can provide valuable signals.

- <?xml ... ?>: This mandatory XML declaration states the XML version and the character encoding used in the sitemap file. The protocol requires the file to be UTF-8 encoded.

- <urlset>: Also mandatory, this acts as the root element and container for the entire sitemap. It references the sitemap protocol standard and encloses all individual URL entries.

- <url>: A mandatory tag that represents a single URL entry. Every page intended for discovery must be nested within its own <url> tag.

- <loc>: This mandatory tag specifies the full, canonical URL of the page that the webmaster wants search engines to crawl and index. It should always contain the absolute URL, including the protocol (http:// or https://).

- <lastmod>: An optional but highly recommended tag, <lastmod> indicates the date of the page’s last significant modification. This timestamp helps search engines determine when a page might need to be re-crawled, thus optimizing crawl efficiency. Google has said it uses this value when it is consistently and verifiably accurate.

- <changefreq>: This optional tag suggests how frequently the content on a page is expected to change (e.g., hourly, daily, weekly, monthly). While part of the sitemaps.org protocol, major search engines like Google and Bing have stated that they generally ignore this tag as a direct signal for crawl frequency.

- <priority>: Another optional tag, <priority> was intended to suggest the relative importance of a page compared to others on the same site, using a scale from 0.0 to 1.0. As with <changefreq>, Google has stated that it ignores this tag when prioritizing content in search results or scheduling crawls. The actual importance of a page is determined by various other SEO signals, including internal linking and external backlinks.

It is critical for webmasters to understand that while <changefreq> and <priority> are technically valid within the sitemap protocol, their utility for influencing Google’s crawling and ranking decisions is negligible. Focus should be placed on ensuring accurate <loc> and <lastmod> values, alongside a robust internal linking strategy.
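As an illustration of the structure described above, the following is a minimal sketch that generates a sitemap containing only <loc> and <lastmod> values, using Python’s standard library. The function name `build_sitemap` and the sample URL are assumptions made for this example; a real site would typically rely on its CMS or an SEO plugin to produce the file.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML (bytes) for an iterable of (url, last_modified) pairs.

    `build_sitemap` is a hypothetical helper written for this sketch.
    """
    # Serialize the sitemap namespace as the default namespace (no prefix).
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, last_modified in pages:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        # <lastmod> is optional; emit it only when a date is known.
        if last_modified is not None:
            ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = last_modified.isoformat()
    # xml_declaration requires Python 3.8 or newer.
    return ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)


sitemap_xml = build_sitemap([
    ("https://www.example.com/example-page/", date(2024, 1, 1)),
])
print(sitemap_xml.decode("utf-8"))
```

The sketch deliberately omits <changefreq> and <priority>, in line with the guidance above that accurate <loc> and <lastmod> values matter most.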

XML Sitemap Index: Managing Scale

For websites with a vast number of URLs, or those wishing to categorize their sitemap entries by content type, an XML sitemap index becomes indispensable. A sitemap index is essentially a sitemap of sitemaps. Instead of listing individual page URLs, it lists multiple individual XML sitemap files. This acts as a directory, pointing search engines to several distinct sitemaps.

The Sitemap Protocol imposes limits: a single sitemap file can contain a maximum of 50,000 URLs and must not exceed 50 MB in uncompressed size. Websites that surpass these thresholds are required to segment their URLs into multiple sitemap files. The sitemap index then serves as a central point of submission to search engines, allowing them to discover and process all associated sitemaps efficiently.
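To make these limits concrete, here is a small sketch of how a URL list might be split into groups that each fit within one sitemap file. The helper names `chunk_urls` and `within_size_limit` and the 120,000 product URLs are hypothetical, chosen only to illustrate the arithmetic.

```python
from itertools import islice

MAX_URLS_PER_SITEMAP = 50_000          # protocol limit on URLs per file
MAX_SITEMAP_BYTES = 50 * 1024 * 1024   # protocol limit on uncompressed size (50 MB)


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Yield successive lists of at most `size` URLs."""
    iterator = iter(urls)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def within_size_limit(xml_bytes):
    """Check a serialized sitemap against the uncompressed size limit."""
    return len(xml_bytes) <= MAX_SITEMAP_BYTES


# Hypothetical site with 120,000 product URLs.
all_urls = [f"https://www.example.com/products/{i}/" for i in range(120_000)]
chunks = list(chunk_urls(all_urls))
print([len(chunk) for chunk in chunks])  # [50000, 50000, 20000]
```

In this example the site needs three sitemap files. Because long URLs can push a file past the size limit before it reaches 50,000 entries, checking the serialized size as well is a sensible precaution.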

Common use cases for sitemap indexes include:

- Large Websites: E-commerce sites with thousands of product pages, news sites with extensive archives, or large content platforms.

- Content Type Segmentation: Organizing URLs by categories such as pages, blog posts, product listings, image galleries, or video content. This can improve organization and help identify issues specific to certain content types.

- Multilingual Sites: Separate sitemaps for different language versions of content.

The structure of a sitemap index file differs from an individual sitemap:

    <?xml version="1.0" encoding="UTF-8"?>
    <sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <sitemap>
        <loc>https://www.example.com/sitemap-pages.xml</loc>
        <lastmod>2025-12-11</lastmod>
      </sitemap>
      <sitemap>
        <loc>https://www.example.com/sitemap-products.xml</loc>
        <lastmod>2025-12-11</lastmod>
      </sitemap>
    </sitemapindex>

In this example, the sitemap index directs search engines to two separate sitemap files: one for pages and another for products. This modular approach significantly aids search engines in efficiently navigating and indexing expansive digital properties. In essence, an XML sitemap assists search engines in discovering individual pages, while a sitemap index facilitates the discovery of multiple sitemap files.
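A sitemap index of this shape can also be produced programmatically. The sketch below assumes the child sitemaps already exist at the listed URLs; `build_sitemap_index` is a hypothetical helper name, and the file names and dates simply mirror the example in this section.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap_index(sitemaps):
    """Return sitemap index XML (bytes) for (sitemap_url, last_modified) pairs."""
    ET.register_namespace("", SITEMAP_NS)
    index = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for loc, last_modified in sitemaps:
        # Each child sitemap gets a <sitemap> entry rather than a <url> entry.
        entry = ET.SubElement(index, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = loc
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}lastmod").text = last_modified.isoformat()
    return ET.tostring(index, encoding="UTF-8", xml_declaration=True)


index_xml = build_sitemap_index([
    ("https://www.example.com/sitemap-pages.xml", date(2025, 12, 11)),
    ("https://www.example.com/sitemap-products.xml", date(2025, 12, 11)),
])
print(index_xml.decode("utf-8"))
```

Only the index URL then needs to be submitted to search engines; the individual sitemap files are discovered through it.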

The Indispensable Role of XML Sitemaps in Modern SEO

While a website can technically be indexed without an XML sitemap, since search engines can discover pages through internal and external links, the benefits of having one are substantial, and sitemaps are widely recommended across the SEO industry.

1. Improved Crawl Efficiency and Budget Management:

Search engines operate with a "crawl budget," which is the number of URLs a search engine bot will crawl on a site within a given timeframe. For large or frequently updated websites, efficiently managing this budget is crucial. Sitemaps provide a clear, explicit list of important URLs, guiding crawlers directly to valuable content and reducing the time spent discovering less significant pages. This helps essential content get crawled regularly, so that important updates are less likely to be missed.

2. Accelerated Content Discovery and Indexing:

When new pages or significant updates are published, including them in a sitemap signals their existence directly to search engines. This can dramatically reduce the time it takes for new content to be discovered and indexed, a critical advantage for news outlets, blogs, or e-commerce sites with dynamic product inventories. Faster indexing translates to quicker visibility in search results.

3. Bridging the Gap: Unearthing Orphan Pages:

Orphan pages are a common SEO challenge. These are pages that exist on a website but are not linked from any other internal page. Without an internal link, search engine crawlers, which primarily navigate by following links, might never discover these pages. An XML sitemap acts as a safety net, explicitly listing these URLs and ensuring they are found, crawled, and potentially indexed, thus maximizing the website’s indexable content.
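One way to spot orphan pages is to compare the URLs listed in the sitemap with the URLs that receive at least one internal link. The following sketch assumes the internal link graph has already been collected (for example, by a site crawler); `find_orphans` and the sample URLs are hypothetical.

```python
def find_orphans(sitemap_urls, internal_links):
    """Return sitemap URLs that no other page on the site links to.

    `internal_links` maps each page URL to the list of URLs it links to.
    """
    linked = {
        target
        for source, targets in internal_links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(set(sitemap_urls) - linked)


# Hypothetical data: the landing page is in the sitemap but never linked.
sitemap_urls = [
    "https://www.example.com/",
    "https://www.example.com/blog/",
    "https://www.example.com/landing/spring-sale/",
]
internal_links = {
    "https://www.example.com/": ["https://www.example.com/blog/"],
    "https://www.example.com/blog/": ["https://www.example.com/"],
}
print(find_orphans(sitemap_urls, internal_links))
# ['https://www.example.com/landing/spring-sale/']
```

Pages flagged this way are still discoverable through the sitemap, but adding internal links to them is usually the more durable fix.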

4. Rich Media and Specialized Content Support:

XML sitemaps can be extended to include specialized information about various types of media, such as images, videos, and news articles. Dedicated sitemaps for these content types (e.g., Image Sitemaps, Video Sitemaps, News Sitemaps) provide search engines with specific metadata. For instance, a video sitemap can include details like video duration, rating, and content maturity. This specialized information helps search engines understand the content better and surface it in specific search verticals like Google Images or video search results, expanding a website’s reach.
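As a rough sketch of what such an extension looks like, the code below builds a video sitemap entry carrying duration, rating, and a family-friendly flag. The tag names and the video namespace follow Google’s video sitemap extension as commonly documented and should be checked against the current documentation; `build_video_sitemap` and all URLs and values are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
# Namespace of Google's video sitemap extension (assumed current; verify before use).
VIDEO_NS = "http://www.google.com/schemas/sitemap-video/1.1"


def build_video_sitemap(entries):
    """Return sitemap XML (bytes) for (page_url, video_metadata_dict) pairs."""
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("video", VIDEO_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, video in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        video_el = ET.SubElement(url, f"{{{VIDEO_NS}}}video")
        # Each metadata key becomes a <video:...> child element.
        for tag, value in video.items():
            ET.SubElement(video_el, f"{{{VIDEO_NS}}}{tag}").text = str(value)
    return ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)


video_xml = build_video_sitemap([
    ("https://www.example.com/videos/sitemap-tutorial/", {
        "thumbnail_loc": "https://www.example.com/thumbs/sitemap-tutorial.jpg",
        "title": "Sitemap tutorial",
        "description": "A short walkthrough of XML sitemaps.",
        "content_loc": "https://www.example.com/media/sitemap-tutorial.mp4",
        "duration": 600,            # seconds
        "rating": 4.5,              # scale of 0.0 to 5.0
        "family_friendly": "yes",   # content maturity flag
    }),
])
print(video_xml.decode("utf-8"))
```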

5. Global Reach: Supporting Multilingual (Hreflang) Implementations:

For websites targeting multiple languages or geographical regions, XML sitemaps offer a robust mechanism for implementing hreflang annotations. These annotations inform search engines about alternate language versions of pages, ensuring that users in different locations or speaking different languages are served the most appropriate content version. Including hreflang in sitemaps makes it easier for search engines to correctly identify and serve localized content, and reduces the risk that near-identical regional versions are treated as duplicates of one another.
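In a sitemap, hreflang annotations are expressed as xhtml:link elements nested inside each <url> entry, with every language version listing all of its alternates, itself included. The sketch below shows one way to generate that structure; `build_hreflang_sitemap` and the English and German URLs are assumptions made for the example.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def build_hreflang_sitemap(page_groups):
    """Return sitemap XML (bytes) with hreflang alternates.

    `page_groups` is a list of dicts, each mapping an hreflang code to the
    URL of that language version of the same page.
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for alternates in page_groups:
        # Every language version gets its own <url> entry...
        for page_url in alternates.values():
            url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
            ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
            # ...and each entry lists all versions, including itself.
            for lang, href in alternates.items():
                ET.SubElement(
                    url,
                    f"{{{XHTML_NS}}}link",
                    {"rel": "alternate", "hreflang": lang, "href": href},
                )
    return ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)


hreflang_xml = build_hreflang_sitemap([
    {
        "en": "https://www.example.com/en/pricing/",
        "de": "https://www.example.com/de/preise/",
    },
])
print(hreflang_xml.decode("utf-8"))
```

Generating the annotations from a single mapping per page keeps them reciprocal, which is the property that most often breaks when hreflang is maintained by hand.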

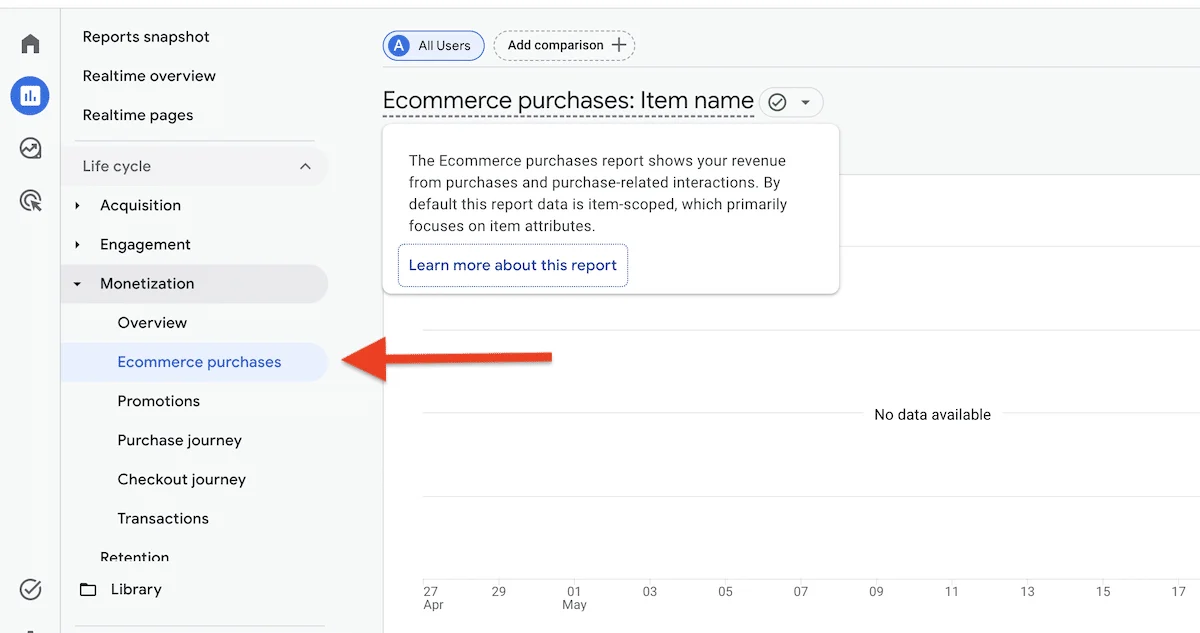

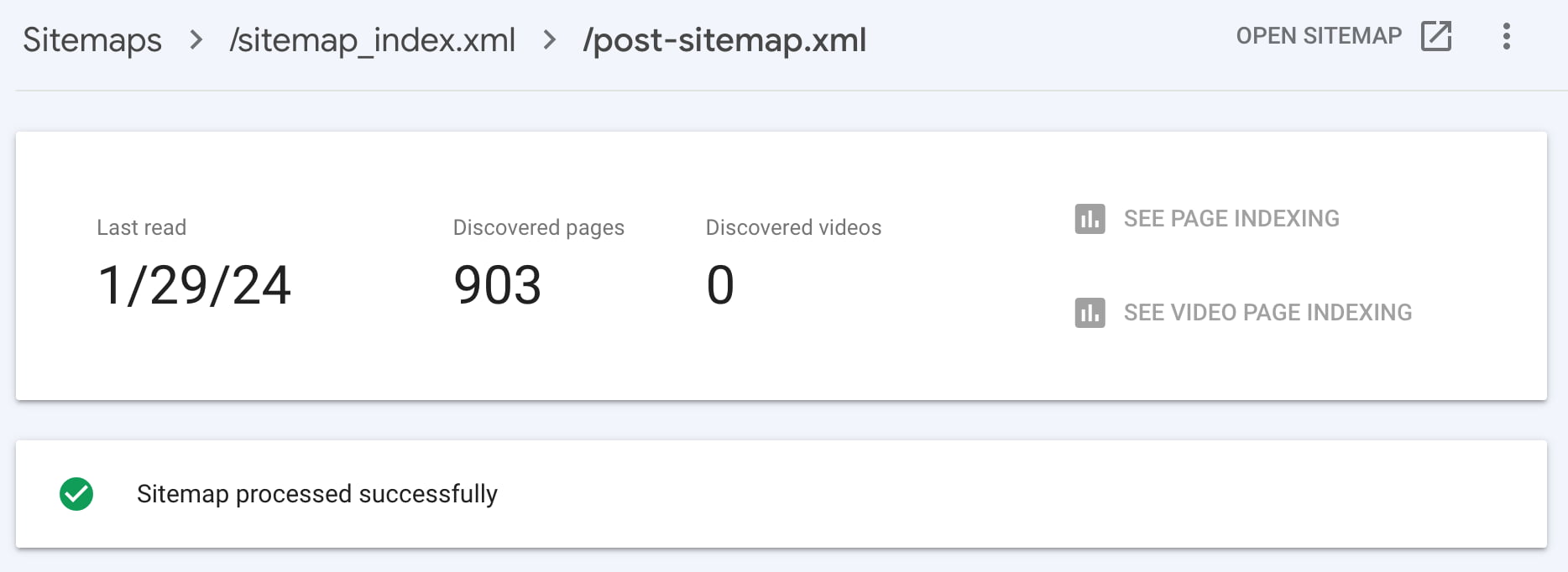

6. Analytical Insights via Search Console:

Submitting an XML sitemap to tools like Google Search Console (GSC) provides invaluable insights into a website’s indexing status. GSC reports show how many URLs from the sitemap have been submitted, how many have been indexed, and any crawling or indexing errors encountered. This data empowers webmasters to proactively identify and address issues that might hinder their content’s visibility, such as server errors, broken links, or content quality problems. The ability to monitor "submitted vs. indexed" counts is a direct feedback loop on a site’s health.

The AI Search Frontier: Sitemaps’ Indirect Influence

The advent of AI-powered search experiences, such as Google’s AI Overviews and Bing Copilot, has introduced new layers to content discoverability. While these AI agents generate summarized answers or interactive experiences, they fundamentally rely on the underlying traditional search index to retrieve information. This means that for a website’s content to be considered by an AI agent, it must first be successfully crawled and indexed by the search engine.

This is precisely where XML sitemaps maintain their critical role. By ensuring that important URLs are discoverable and indexed, sitemaps indirectly contribute to content eligibility for AI-generated answers. Moreover, the <lastmod> tag becomes particularly significant in the AI era. AI systems prioritize fresh, up-to-date information to provide accurate and relevant summaries. An accurate <lastmod> value signals to search engines that a page has