In an era increasingly defined by artificial intelligence, the trustworthiness of data has become the bedrock of organizational success. Data quality management (DQM) is not merely a technical undertaking but a fundamental organizational discipline encompassing a collection of practices, tools, and processes designed to ensure data is accurate, complete, consistent, timely, unique, and valid. This holistic approach covers everything from data profiling and cleansing to rigorous governance, validation, enrichment, and continuous monitoring. Without a robust DQM framework, organizations risk building their entire operational and strategic edifice on a shaky foundation, undermining critical business decisions and jeopardizing their competitive standing.

The Evolving Landscape of Data: A New Imperative for Quality

The past two decades have witnessed an exponential surge in data generation, driven by pervasive digitalization, the proliferation of connected devices, and the adoption of cloud computing. This "Big Data" phenomenon, characterized by its immense volume, rapid velocity, and diverse variety, has transformed how businesses operate, offering unprecedented opportunities for insight and innovation. However, it has simultaneously introduced complex challenges for data integrity. The sheer scale and speed at which data is collected, processed, and utilized make manual quality control virtually impossible, rendering organizations vulnerable to errors that can propagate rapidly through interconnected systems.

The advent of artificial intelligence and machine learning has further amplified the criticality of data quality. AI models, whether used for predictive analytics, personalized customer experiences, or automating complex workflows, learn from the data they are fed. The principle of "garbage in, garbage out" has never been more relevant; flawed, biased, or incomplete data will inevitably lead to erroneous, biased, or ineffective AI outputs. According to a 2022 Gartner report, poor data quality costs organizations an average of $12.9 million annually, a figure projected to rise as AI adoption expands. This financial drain underscores that DQM is no longer a "nice-to-have" but a strategic imperative directly impacting a company’s bottom line and future viability.

The Insidious Nature of Poor Data Quality

Data quality issues are often insidious, developing gradually and silently before manifesting as significant operational and strategic hurdles. A customer relationship management (CRM) system, designed to be the single source of truth for customer information, serves as a prime example. CRMs are fed by myriad data streams, including direct imports, third-party integrations, manual entry by sales and service teams, and automated capture systems. Each of these entry points introduces variables and potential risks. Errors can creep in through typos, inconsistent formatting, outdated information, or duplicate records, creating a ripple effect that extends far beyond the immediate data point.

Detecting these issues is particularly challenging for several reasons. Minor discrepancies often go unnoticed at first. As problems accumulate, the integrity of the data erodes and its reliability diminishes. The sheer volume of data further complicates detection; manually checking massive datasets for inconsistencies is a monumental, if not impossible, task. CDO Trends highlights this challenge, noting that 57 percent of data practitioners identify maintaining data quality as their biggest hurdle during data analysis. Issues often hide in plain sight, masked by data silos, a lack of standardized data entry protocols, and insufficient validation mechanisms at the point of data capture.

Quantifying the Cost: The Business Impact of Flawed Data

The business impact of poor data quality is multifaceted and severe, affecting every aspect of an organization from daily operations to long-term strategic planning. In sales, outdated contact information leads to wasted time for representatives chasing non-existent leads or contacting current customers as if they were new prospects, eroding efficiency and potentially damaging customer relationships. Marketing efforts suffer from inaccurate segmentation, resulting in irrelevant campaigns, wasted ad spend, and diminished return on investment.

Operationally, corrupted reports and analytics produce flawed insights that skew forecasts and performance analyses, leading to misinformed strategies and resource misallocation. Validity's State of CRM Data Management in 2025 report reveals that 37 percent of teams report losing revenue directly due to poor data quality. The damage extends beyond direct revenue to hidden costs such as increased operational overhead for data remediation, fines for violating data privacy regulations (e.g., GDPR, CCPA), and the intangible cost of damaged brand reputation and customer trust.

Beyond financial and operational ramifications, poor data quality significantly hampers customer experience. Missing or incorrect customer information can lead to slow service, broken personalization attempts, and awkward interactions, ultimately driving customer churn. When businesses cannot trust their data, their ability to compete effectively diminishes, ceding ground to more data-mature rivals.

Pillars of Data Quality: Defining Excellence

At the core of effective data quality management are six foundational elements that collectively determine the trustworthiness and utility of data:

- Accuracy: Data must correctly reflect the real-world facts or events it represents. Inaccurate data can lead to erroneous conclusions and poor decisions.

- Completeness: All necessary data attributes must be present. Incomplete records can hinder analysis, prevent proper segmentation, and lead to missed opportunities.

- Consistency: Data should be uniform across all systems and applications. Inconsistent data, such as different spellings of a customer’s name in various databases, creates confusion and undermines a unified view.

- Timeliness: Data must be available when needed and reflect the current state of affairs. Outdated data can render insights irrelevant and actions ineffective.

- Uniqueness: There should be no duplicate records for the same entity within the dataset. Duplicates inflate counts, waste resources, and distort analysis.

- Validity: Data must conform to predefined rules, formats, and ranges. For example, a phone number field should contain only digits and any permitted formatting characters, and should adhere to a defined length.
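
Several of these dimensions can be checked mechanically, one record at a time. The following is a minimal sketch in Python, not a definitive implementation: the field names, the 10-digit phone rule, and the 365-day freshness window are all assumptions chosen for illustration. Accuracy and consistency are left out because they generally require comparison against an external reference or a second system.

```python
import re
from datetime import date

# Hypothetical schema and rules, chosen only for illustration.
REQUIRED_FIELDS = ("name", "email", "phone", "updated")
PHONE_RE = re.compile(r"\d{10}")  # assumed rule: exactly 10 digits
MAX_AGE_DAYS = 365                # assumed freshness window


def check_record(record, seen_emails, today):
    """Return the names of the quality dimensions this record violates."""
    problems = []
    # Completeness: every required field must be present and non-empty.
    if any(not record.get(field) for field in REQUIRED_FIELDS):
        problems.append("completeness")
    # Validity: the phone number must match the assumed format rule.
    if not PHONE_RE.fullmatch(record.get("phone") or ""):
        problems.append("validity")
    # Timeliness: the record must have been updated within the window.
    updated = record.get("updated")
    if updated is None or (today - updated).days > MAX_AGE_DAYS:
        problems.append("timeliness")
    # Uniqueness: the email (case-insensitive) must not have been seen before.
    email = (record.get("email") or "").strip().lower()
    if email in seen_emails:
        problems.append("uniqueness")
    elif email:
        seen_emails.add(email)
    return problems


seen = set()
first = {"name": "Ada", "email": "ada@example.com",
         "phone": "5551234567", "updated": date(2025, 1, 1)}
second = {"name": "Ada L", "email": "ADA@example.com",
          "phone": "555-1234", "updated": date(2020, 1, 1)}
print(check_record(first, seen, date(2025, 6, 1)))
print(check_record(second, seen, date(2025, 6, 1)))
```

The first record passes every check. The second reuses the same email in a different case, has a malformed phone number, and is years out of date, so it is flagged on three dimensions.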

To effectively monitor and improve these qualities, organizations must track key data quality metrics. While the specific metrics may vary, five commonly monitored areas provide critical insight:

- Error Rate: The percentage of data records containing errors or inaccuracies.

- Completeness Rate (or Fill Rate): The percentage of required fields that contain data.

- Consistency Score: A measure of how uniform data is across different sources or within a single dataset.

- Timeliness Index: How current the data is relative to a defined update cycle or event.

- Deduplication Rate: The percentage of duplicate records identified and resolved.

These metrics serve as critical indicators, highlighting areas of weakness and guiding remediation efforts, ensuring data remains practical, trustworthy, and actionable.
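
As a rough illustration of how three of these metrics might be computed, the sketch below uses plain Python. The function names, the sample records, and the validity rule are hypothetical. The deduplication rate is interpreted here as the share of identified duplicates that have been resolved, which is one possible reading of the definition above.

```python
def completeness_rate(records, required_fields):
    """Percentage of required fields, across all records, that hold a value."""
    total = len(records) * len(required_fields)
    if total == 0:
        return 0.0
    filled = sum(
        1
        for record in records
        for field in required_fields
        if record.get(field) not in (None, "")
    )
    return 100.0 * filled / total


def error_rate(records, is_valid):
    """Percentage of records that fail the supplied validity check."""
    if not records:
        return 0.0
    errors = sum(1 for record in records if not is_valid(record))
    return 100.0 * errors / len(records)


def deduplication_rate(duplicates_found, duplicates_resolved):
    """Percentage of identified duplicates that have been resolved (assumed reading)."""
    if duplicates_found == 0:
        return 100.0
    return 100.0 * duplicates_resolved / duplicates_found


# Hypothetical sample data.
records = [
    {"email": "a@example.com", "phone": "5551234567"},
    {"email": "b@example.com", "phone": "5550000000"},
    {"email": "c@example.com", "phone": "12"},
    {"email": "d@example.com", "phone": None},
]


def has_valid_phone(record):
    # Assumed rule: a phone number is valid when it has exactly 10 characters.
    return len(record.get("phone") or "") == 10


print(completeness_rate(records, ("email", "phone")))  # 87.5
print(error_rate(records, has_valid_phone))            # 50.0
print(deduplication_rate(40, 30))                      # 75.0
```

Tracking these values per system and per time period, rather than as a single global number, makes it easier to see where quality is degrading.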

Crafting a Robust Data Quality Management Strategy

Building an effective DQM strategy requires a structured, systematic approach, moving beyond reactive fixes to proactive prevention.

- Conduct a Comprehensive Data Audit: The initial step involves a thorough assessment of existing data assets to identify current quality levels, pinpoint sources of errors, and understand the impact of poor data. This audit should cover all critical business systems, including CRMs, ERPs, and marketing platforms.

- Define Clear Data Standards and Policies: Establish explicit rules for data entry, formatting, validation, and retention. Develop a centralized data dictionary that defines key terms, data types, and acceptable values. These standards form the bedrock of consistent data quality across the organization.

- Implement a Data Governance Framework: Assign clear ownership and accountability for data domains. Appoint data stewards who are responsible for the quality of specific datasets, enforcing standards, and mediating data-related issues. This framework ensures that data quality is an ongoing organizational discipline, not just an IT task.

- Leverage Data Quality Tools and Technology: Automate data quality processes wherever possible. Solutions designed for data profiling, cleansing, deduplication, standardization, and validation can process large volumes of data efficiently, preventing errors at the point of entry and correcting existing issues at scale.

- Foster a Data-Centric Culture: Data quality is a shared responsibility. Educate employees across all departments on the importance of accurate data and their role in maintaining it. Provide training on data entry best practices and the use of DQM tools.

- Establish Continuous Monitoring and Improvement: DQM is not a one-time project but an ongoing cycle. Regularly monitor data quality metrics, conduct periodic audits, and adapt policies and processes as data needs evolve and new systems are introduced.
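
The standardization and deduplication that such tools automate can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how any particular product works: the field names are hypothetical, records are matched on normalized email only, and the earliest non-empty value wins when duplicates are merged. Commercial tools typically add fuzzy matching and configurable survivorship rules.

```python
import re


def normalize(record):
    """Return a standardized copy: trimmed name, lowercase email, digits-only phone."""
    return {
        "name": " ".join((record.get("name") or "").split()),
        "email": (record.get("email") or "").strip().lower(),
        "phone": re.sub(r"\D", "", record.get("phone") or ""),
    }


def deduplicate(records):
    """Merge records that share a normalized email; earlier non-empty values win."""
    merged = {}     # normalized email -> surviving record (insertion-ordered)
    unmatched = []  # records without an email cannot be matched safely
    for record in map(normalize, records):
        key = record["email"]
        if not key:
            unmatched.append(record)
            continue
        if key not in merged:
            merged[key] = record
            continue
        # Fill gaps in the surviving record from the duplicate.
        for field, value in record.items():
            if not merged[key][field]:
                merged[key][field] = value
    return list(merged.values()) + unmatched


raw = [
    {"name": "Ada  Lovelace", "email": " Ada@Example.com ", "phone": ""},
    {"name": "Ada Lovelace", "email": "ada@example.com", "phone": "(555) 123-4567"},
    {"name": "Grace Hopper", "email": "grace@example.com", "phone": "555.000.1111"},
]
for row in deduplicate(raw):
    print(row)
```

The two Ada Lovelace entries collapse into one record that keeps the phone number from the second entry, which shows why standardization has to run before matching.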

A Chronology of DQM Program Implementation

While every organization’s data quality journey is unique, a general timeline for implementing a comprehensive DQM program typically involves several distinct phases:

- Phase 1: Discovery and Assessment (Weeks 1-4): This initial phase focuses on understanding the current state. It includes a comprehensive data audit across critical systems, stakeholder interviews to identify pain points and business needs, defining the scope of the DQM initiative, and establishing baseline data quality metrics.

- Phase 2: Strategy and Governance Definition (Weeks 5-8): Based on the assessment, this phase involves developing clear data quality standards, policies, and rules. It includes establishing a data governance framework, identifying and appointing data stewards, and selecting appropriate data quality tools or platforms.

- Phase 3: Implementation and Remediation (Months 3-6): This is the execution phase where selected DQM tools are integrated into existing systems. Initial data cleansing efforts are undertaken to address historical quality issues (e.g., deduplication, standardization, validation). Data migration activities, if required, are also managed with strict quality controls.

- Phase 4: Automation and Continuous Monitoring (Months 7-12+): The final phase focuses on embedding DQM into daily operations. Automated data quality checks are configured at data entry points and during data integration processes. Ongoing monitoring dashboards are established, and regular reporting mechanisms are put in place. This phase also includes continuous improvement loops, adapting the strategy based on feedback and evolving business requirements.
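
The automated checks described in Phase 4 often take the form of a quality gate: a batch is loaded only if its measured metrics stay within agreed limits. The Python sketch below assumes hypothetical metric names and thresholds; real limits would come from the standards defined in Phase 2.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    metric: str
    limit: float
    higher_is_better: bool


# Assumed targets, for illustration only.
RULES = [
    Rule("completeness_rate", 95.0, higher_is_better=True),
    Rule("error_rate", 2.0, higher_is_better=False),
]


def failed_rules(metrics, rules):
    """Return the rules the measured metrics violate; a missing metric counts as a failure."""
    failures = []
    for rule in rules:
        value = metrics.get(rule.metric)
        if value is None:
            failures.append(rule)
        elif rule.higher_is_better and value < rule.limit:
            failures.append(rule)
        elif not rule.higher_is_better and value > rule.limit:
            failures.append(rule)
    return failures


def gate(metrics, rules=RULES):
    """Return True when the batch may be loaded, False when it should be held back."""
    return not failed_rules(metrics, rules)


print(gate({"completeness_rate": 98.0, "error_rate": 1.0}))  # True
print(gate({"completeness_rate": 90.0, "error_rate": 1.0}))  # False
```

Treating a missing metric as a failure is a deliberate choice in this sketch: a check that silently passes when no measurement arrives would hide exactly the kind of gradual degradation the monitoring is meant to catch.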

Real-World Impact: Case Studies in Action

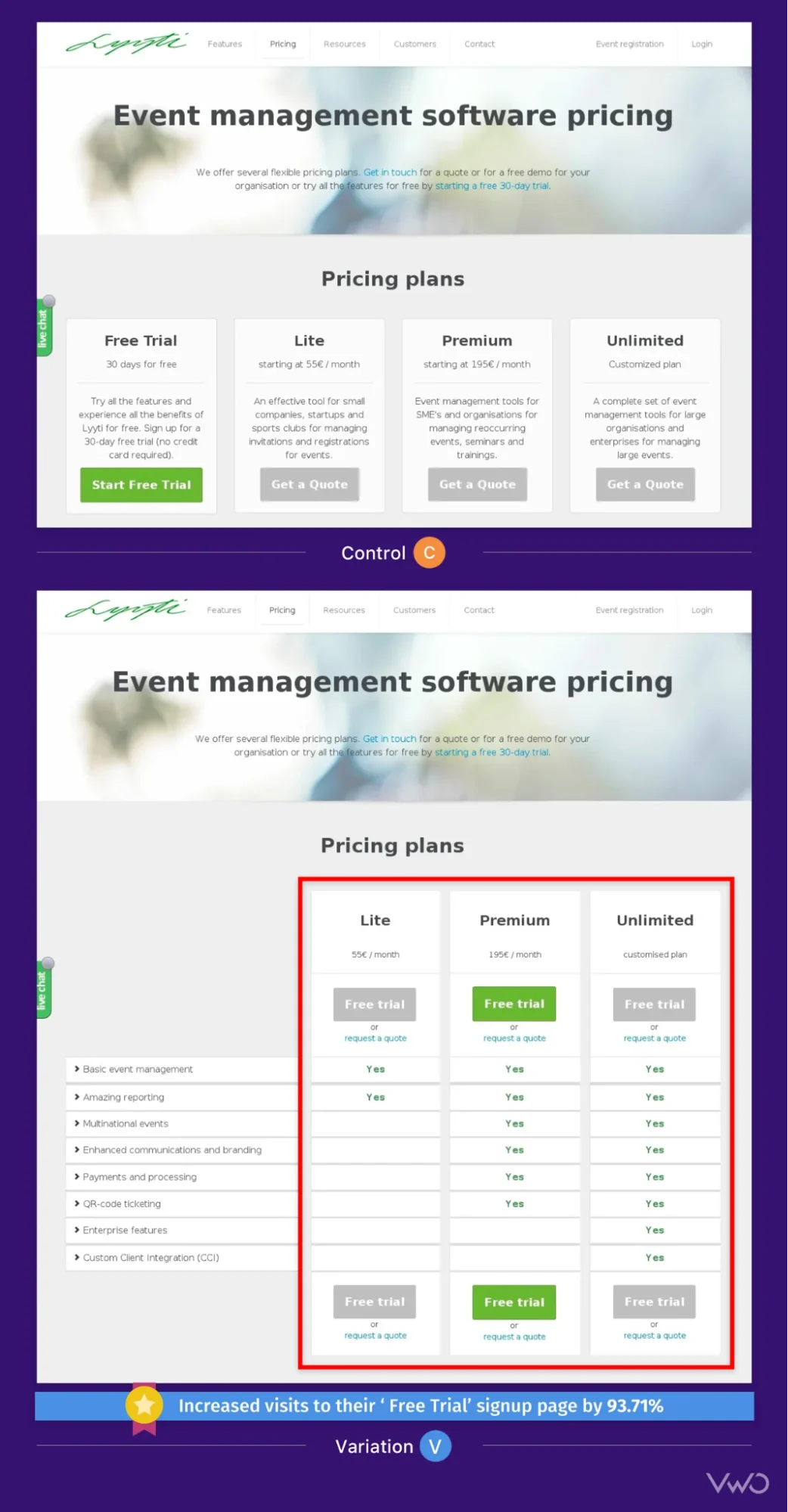

The tangible benefits of a robust DQM strategy are evident in organizations that have successfully implemented such programs. For instance, BARBRI, an educational company, faced a significant challenge with system integrations flooding its Salesforce CRM with thousands of duplicate records monthly. This led to sales representatives wasting valuable time contacting the same prospects multiple times. By implementing an automated data cleansing process, BARBRI transformed what was once a days-long manual effort into minutes, freeing their team to focus on sales enablement and strategic outreach.

Similarly, Thornburg Investment Management struggled with a constant influx of duplicates and unstandardized data from various third-party sources. Following the adoption of a dedicated data quality solution, Thornburg reported recovering approximately 120 hours of manual data management time per week. This significant saving was akin to adding a full-time developer to their team without the associated hiring costs, dramatically improving operational efficiency.

908 Devices, a company where Salesforce data underpins everything from daily sales activities to executive decision-making within platforms like Tableau, recognized data quality as a competitive advantage. By making data quality management the backbone of their data projects, handling deduplication, standardization, and record management at scale, they empowered their teams to operate with speed and agility without compromising data accuracy. These examples underscore that effective DQM is not merely about fixing problems but about enabling growth and strategic advantage.

Frequently Asked Questions about Data Quality Management

- What is data quality management? Data quality management is an ongoing organizational discipline that ensures data is accurate, consistent, reliable, and complete. It integrates technology, processes, and people to maintain data integrity across an organization, ensuring data is clean, standardized, and ready for critical business decisions and campaigns.

- Why is data quality management important? Data is central to every business decision. Poor data leads to tangible consequences, including lost revenue, compliance failures, and flawed decision-making. Organizations with mature data quality practices gain a competitive advantage, enabling them to move faster, serve customers better, and allocate resources more effectively.

- How do you implement data quality management? Implementation typically begins with a data quality audit to identify key issues, followed by defining organizational data quality rules and standards. Appointing data stewards for critical data domains and enforcing entry standards is crucial. Finally, implementing automated tools for ongoing data cleansing and maintenance ensures sustained data quality.

- What are the main challenges in data quality management? The primary challenges include inconsistent, inaccurate, incomplete, and duplicate data, often stemming from poor data hygiene practices and a lack of standardization. Unaddressed, these issues compound into data silos, inefficient processes, and accountability gaps across the organization.

- How much does poor data quality cost businesses? Poor data quality costs businesses more than most realize. Gartner estimates the average cost at $12.9 million per organization annually, and 37 percent of teams in Validity's State of CRM Data Management in 2025 report say they have lost revenue directly because of poor data. Direct costs include wasted marketing spend on invalid contacts and potential data compliance fines. Indirect, harder-to-quantify costs include lost revenue opportunities, flawed decision-making, failed AI projects, and customer churn from irrelevant communications.

- How is AI changing data quality management? AI is only as effective as the data it learns from, significantly raising the stakes for data quality. As organizations increasingly rely on AI for decisions and automated workflows, the margin for error in underlying data shrinks. This shift elevates data quality from a reactive, manual task to a proactive, real-time discipline, where automated tools are essential to prevent problems before they impact AI systems.

The Future of Data Quality: AI-Driven Imperatives

The future of data quality management is inextricably linked with the advancement of artificial intelligence. As AI models become more sophisticated and deeply embedded in business processes, the demand for impeccably clean and reliable data will only intensify. Organizations will increasingly leverage AI itself to enhance DQM, employing machine learning for predictive data quality, anomaly detection, and automated data governance. This proactive approach will allow businesses to identify and rectify data issues in real time, preventing them from impacting critical AI-driven operations.
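
To give a sense of what anomaly detection on a quality metric involves, the sketch below flags a daily fill rate that deviates sharply from its recent history. It is a plain z-score test, used here as a simple statistical stand-in for the machine-learning approaches mentioned above; the function name, the threshold of 3.0, and the sample values are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag `latest` if it lies more than z_threshold standard deviations from the history mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unusual.
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical daily completeness rates (percent) for one CRM field.
fill_rates = [97.0, 98.0, 97.5, 98.5, 97.0, 98.0]

print(is_anomalous(fill_rates, 97.8))  # False
print(is_anomalous(fill_rates, 80.0))  # True
```

A sudden drop like the second case might indicate that an integration has started sending empty values, which is the kind of issue worth catching before it reaches downstream models.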

Ultimately, data cleanliness is not an end goal but a continuous journey. Quality data is the lifeblood of efficient operations, accurate reporting, and superior business outcomes. In an increasingly data-driven and AI-powered world, investing in robust data quality management is not just a best practice; it is a strategic imperative for any organization aiming to build trust, drive innovation, and sustain competitive advantage.