Imagine a web ecosystem where not just humans but also AI agents communicate with websites, going beyond traditional browsing. Unlike conventional web experiences, where people click, scroll, and search, AI agents can navigate, interpret, and even perform tasks autonomously on your site. This is not a futuristic concept; it is already unfolding, and it is known as the agentic web. The shift from a web designed primarily for human interaction to one that accommodates both human users and intelligent AI assistants is poised to redefine digital engagement, search, and content visibility.

The Paradigm Shift: From Human-Centric to Agent-Centric Web

For decades, the internet has operated on a foundational premise: humans are the primary navigators. Users manually searched, clicked through results, compared information across various sources, and painstakingly completed tasks, whether it was booking a flight, purchasing a product, or filling out a complex form. Even with the continuous evolution of search engines, the core interaction model remained largely static: a user initiating a query, receiving a list of links, and then actively engaging with those links. This established pattern, while effective for its time, presents inherent limitations in an increasingly complex and data-rich digital landscape.

The agentic web heralds a profound departure from this human-centric paradigm. It represents an architectural reorientation, moving towards a web infrastructure designed to facilitate interaction not only between people and websites but also between intelligent AI agents and web resources. In this evolving model, the burden of manual research, comparative analysis, form completion, and transaction execution will increasingly be delegated to sophisticated AI assistants. These agents, empowered by advanced algorithms and access to vast datasets, can autonomously search, interpret nuanced information, synthesize insights, and execute actions on behalf of their human counterparts. The user’s role thus transforms from an active navigator and executor to a strategic decision-maker, entrusting the intricate legwork to their digital delegates. The shift is from searching to delegating, from passive consumption to active, automated execution.

Crucially, this evolution extends far beyond the capabilities of smarter chat interfaces. While conversational AI has made significant strides, the agentic web envisions truly autonomous agents capable of interpreting complex search intent, discerning optimal options from a multitude of choices, and executing a series of actions across different web endpoints. Websites, in this new reality, cease to be mere pages to be visited and visually scanned. Instead, they become intelligent endpoints to be queried, programmatically accessed, and acted upon by machine intelligence.

For this vision to materialize at a global scale, intelligence cannot be confined to a singular assistant or a proprietary, closed platform. It necessitates a distributed architecture where diverse systems can communicate and interact without friction. This demands a web that is inherently machine-readable, universally interoperable, and explicitly engineered for seamless agent-to-agent interaction. This is not a speculative forecast; it is an architectural transformation actively underway, driven by the rapid advancements in artificial intelligence and the growing demand for automated efficiency.

The Foundation of Interoperability: Protocol Thinking and the Infrastructure of Agentic Web Communication

The fundamental question underpinning the agentic web’s viability is simple yet profound: how do these intelligent systems understand and interact with each other across the vast, disparate landscape of the internet? The answer, as history has repeatedly demonstrated in the evolution of the web, lies not in intricate visual design or individual platform-specific integrations, but in robust, standardized infrastructure – specifically, in "protocol thinking."

The web’s enduring success has always been predicated on shared communication rules and agreed-upon standards. The Hypertext Transfer Protocol (HTTP) lets browsers request and receive web pages, the Simple Mail Transfer Protocol (SMTP) carries email, and Really Simple Syndication (RSS) gives publishers a common feed format for content distribution. These are not mere features. They are protocols and standards: universal agreements that facilitate large-scale coordination and communication across diverse systems and geographies. Shared structured data vocabularies, such as Schema.org, have further enhanced this by providing a common language for search engines to interpret the meaning and context of web content, moving beyond mere keywords to semantic understanding.

This same logic is now being applied to the realm of AI agents. In the agentic web, agents will not mimic human browsing behaviors, such as clicking buttons or visually parsing page layouts. Instead, they will send programmatic requests, interpret structured responses, compare options based on defined criteria, and execute tasks directly. For such operations to function consistently and reliably across millions of websites globally, communication cannot be improvised or subject to individual interpretations. It must be standardized, predictable, and universally understood.

This is precisely where protocol thinking becomes indispensable. It mandates designing websites and web services in a manner that is inherently predictable and intelligible for machines. Rather than requiring developers to build custom integrations for every conceivable AI assistant, chatbot, or platform, websites must expose a consistent, machine-readable interaction layer. Agents, in turn, do not need to "learn" every unique interface; they simply rely on these shared, pre-defined rules and protocols to communicate effectively.
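To make the idea concrete, here is a minimal sketch of what "shared rules" buy an agent. It assumes, purely for illustration, that three hypothetical sites all return offers in one agreed JSON shape (`price`, `currency`, `direct`); the site names, field names, and values are invented and not part of any real standard.

```python
import json
from typing import Optional

# Hypothetical responses from three sites that share one response shape.
RESPONSES = {
    "airline-a.example": '{"price": 520.0, "currency": "USD", "direct": true}',
    "airline-b.example": '{"price": 470.0, "currency": "USD", "direct": true}',
    "airline-c.example": '{"price": 390.0, "currency": "USD", "direct": false}',
}


def pick_best(responses: dict[str, str], max_price: float) -> Optional[str]:
    """Return the site with the cheapest direct offer within budget, or None."""
    eligible = []
    for site, body in responses.items():
        offer = json.loads(body)  # the same parsing code works for every site
        if offer["direct"] and offer["price"] <= max_price:
            eligible.append((offer["price"], site))
    # Tuples sort by price first, so min() yields the cheapest eligible offer.
    return min(eligible)[1] if eligible else None


best_site = pick_best(RESPONSES, max_price=500.0)
print(best_site)  # airline-b.example
```

Because every response follows the same shape, the agent needs one parser and one comparison rule rather than a custom integration per site.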

As proponents of distributed intelligence emphasize, the goal is not to centralize control within a single omniscient chatbot. The intelligence must be decentralized, residing across various agents and systems. For this distributed intelligence to thrive, systems require a simplified, standardized method to communicate without needing to understand the intricate technical specificities of every tool or service they interact with. This is only achievable when there is a common ground – a universal language of interaction.

In practical terms, this means embracing:

- Structured Data: Extending the use of standards like Schema.org to encompass not just content description but also actionable functions and services offered by a website.

- APIs (Application Programming Interfaces): Designing well-documented, standardized APIs that allow agents to programmatically query and interact with website functionalities.

- Semantic Web Technologies: Leveraging ontologies and linked data to provide rich, machine-interpretable meaning to web content and services.

- Standardized Request/Response Formats: Establishing common formats for agents to send requests and for websites to return structured data, ensuring consistency across the ecosystem.
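As one illustration of structured data that describes an action rather than just content, the sketch below builds Schema.org JSON-LD for a `WebSite` with a `SearchAction`. The site name, URL, and `/search?q=` path are hypothetical; the `potentialAction`, `EntryPoint`, `urlTemplate`, and `query-input` terms come from the Schema.org vocabulary.

```python
import json


def website_markup(name: str, base_url: str) -> dict:
    """Build JSON-LD describing a site and the search action it offers."""
    return {
        "@context": "https://schema.org",
        "@type": "WebSite",
        "name": name,
        "url": base_url,
        "potentialAction": {
            "@type": "SearchAction",
            "target": {
                "@type": "EntryPoint",
                # Doubled braces keep a literal {search_term_string} placeholder.
                "urlTemplate": f"{base_url}/search?q={{search_term_string}}",
            },
            "query-input": "required name=search_term_string",
        },
    }


markup = website_markup("Example Shop", "https://shop.example")
# Serialized form, ready to embed in a <script type="application/ld+json"> tag.
json_ld = json.dumps(markup, indent=2)
print(json_ld)
```

The markup tells a machine reader not only what the site is, but which request pattern it can use to query it.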

Protocols are the bedrock that creates this shared language, enabling the agentic web to scale and fulfill its transformative potential. Without them, the agentic web would devolve into a chaotic collection of incompatible systems, undermining its core promise of seamless, autonomous interaction.

NLWeb: Microsoft’s Vision for Agentic Communication and a New Web Frontier

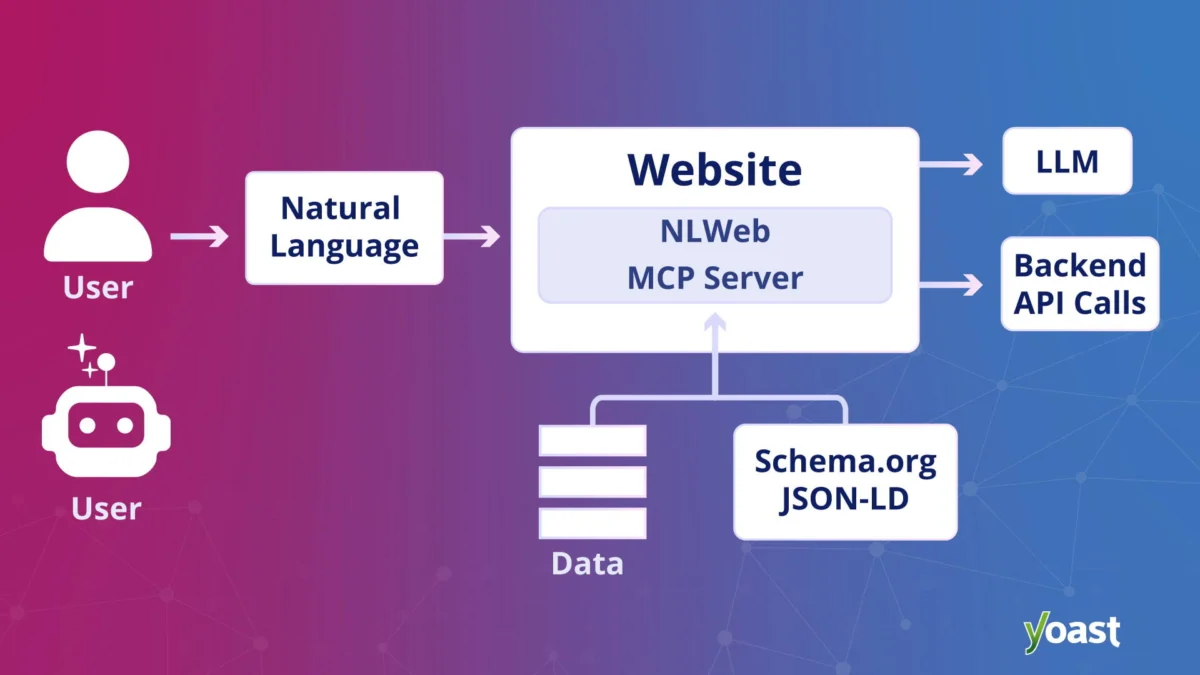

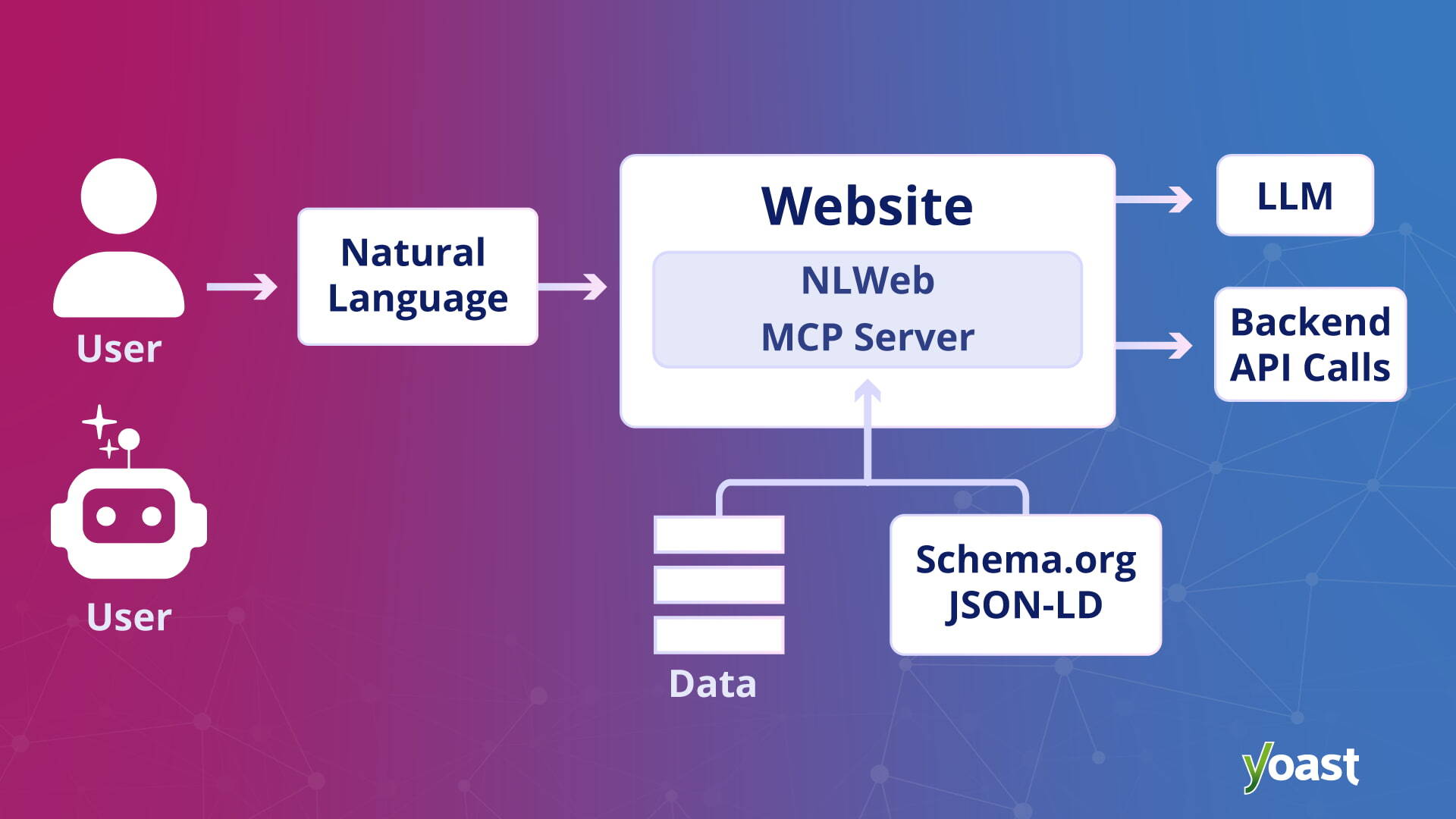

In response to the burgeoning demands of the agentic web, Microsoft introduced NLWeb, an open project designed to simplify the creation of rich natural language interfaces for websites, leveraging their own data and preferred AI models. First unveiled in May 2025, NLWeb made a significant return in November 2025 at Microsoft Ignite, where it was presented alongside its first enterprise offering through Microsoft Foundry, signaling its strategic importance within Microsoft’s broader AI ecosystem.

At its core, NLWeb aims to empower any website to function effectively as an AI application. Instead of users or agents having to manually navigate through pages, menus, and links, NLWeb allows direct querying of a site’s content using natural language. This transforms the user experience, making information retrieval and task execution dramatically more intuitive and efficient. For instance, a user might ask a travel site, "Find me a direct flight to Paris next Tuesday under $500," and the NLWeb-enabled site would directly process this, rather than requiring multiple manual searches and filters.
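A rough sketch of what such a direct query could look like on the wire follows. It assumes the site exposes an `/ask` endpoint that accepts a `query` parameter, which is how the NLWeb project is commonly described; treat the path, the parameter name, and the `travel.example` host as assumptions to verify against the project's documentation. The code only builds the URL and sends nothing.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def build_ask_url(site_base: str, question: str) -> str:
    """Build a natural language query URL (assumed /ask endpoint, not verified)."""
    params = urlencode({"query": question})  # percent-encodes spaces, $, commas
    return f"{site_base.rstrip('/')}/ask?{params}"


question = "Find me a direct flight to Paris next Tuesday under $500"
ask_url = build_ask_url("https://travel.example/", question)

# Decoding the query string recovers the original question unchanged.
decoded = parse_qs(urlsplit(ask_url).query)["query"][0]
print(ask_url)
```

The point of the sketch is that the whole request is one sentence in one parameter, with no site-specific filters or form fields for the caller to learn.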

However, NLWeb is more than just a conversational overlay. A pivotal aspect of its architecture is that every NLWeb instance also functions as a Model Context Protocol (MCP) server. This design choice is crucial for the interoperability demanded by the agentic web. When a website integrates NLWeb, it inherently becomes discoverable and accessible to other AI agents operating within the broader MCP ecosystem. This eliminates the need for bespoke, custom integrations for every individual AI assistant or platform. Instead, if a website supports NLWeb, agents can recognize its capabilities and interact with it in a standardized, protocol-driven manner. This fosters a more open and distributed ecosystem, where agents can fluidly move between different NLWeb-enabled sites, aggregating information and performing tasks without proprietary barriers.
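For readers unfamiliar with MCP, the sketch below shows the general shape of a tool invocation. MCP messages are JSON-RPC 2.0, and tools are invoked with the `tools/call` method; the tool name `ask` and its `query` argument are assumptions made for this illustration and should be checked against what a given server actually advertises.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a caller-chosen id


def mcp_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request that invokes a tool on an MCP server."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "ask" and "query" are hypothetical names used only for this example.
message = mcp_tool_call("ask", {"query": "direct flights to Paris under $500"})
wire_format = json.dumps(message)
print(wire_format)
```

An agent that can produce this one message shape can, in principle, address any server that speaks the protocol, which is the interoperability benefit described above.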

NLWeb intelligently builds upon established web formats that are already widely adopted, such as Schema.org for structured data and RSS for content syndication. By combining this existing structured data with the analytical power of large language models (LLMs), NLWeb can generate highly relevant and accurate natural language responses. This approach ensures that websites can expose their content in a format that is simultaneously comprehensible to human users and precisely interpretable by AI agents.

A key design principle of NLWeb is its technology agnosticism. Site owners retain the freedom to choose their preferred infrastructure, AI models, and database solutions. This commitment to interoperability over platform lock-in is vital for fostering widespread adoption and ensuring that the agentic web remains open and accessible, rather than fragmenting into proprietary silos. In many respects, NLWeb is positioned to play a role in the agentic web akin to that which HTML played for the early internet: providing a shared, foundational communication layer that enables agents to query websites directly, moving beyond the limitations of traditional crawling and visual interfaces.

Distinction from Traditional LLM Citations: Grounding in Publisher Data

A critical differentiator for NLWeb lies in its approach to information retrieval and response generation, particularly when compared to standard LLM citation practices. In conventional LLM interactions, the model typically generates an answer first, often based on its vast training data, and then attempts to append sources or citations to support that probabilistic response. This methodology, while powerful, inherently carries the risk of introducing inaccuracies or "hallucinations" – instances where the AI generates plausible but factually incorrect information – because the answer precedes the verification.

NLWeb operates on a fundamentally different principle. It conceptualizes the language model not as an inventor of answers, but as a highly intelligent retrieval layer. Instead of fabricating responses, NLWeb is engineered to pull verified "objects" – discrete pieces of information, data points, or functionalities – directly from the website’s own structured data. These verified objects are then presented to the user or agent in natural language.

This distinction is paramount. It signifies that NLWeb responses are "grounded" in the publisher’s own, authoritative data from the outset. This significantly mitigates the risk of hallucination and provides site owners with a far greater degree of control over how their content is represented and interpreted by AI systems. It ensures that the information conveyed is accurate, reliable, and consistent with the source, fostering trust and maintaining brand integrity in the agentic web environment. This grounding mechanism is a cornerstone of NLWeb’s design, ensuring accuracy and accountability in AI-driven interactions.
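The retrieve-then-phrase pattern can be sketched in a few lines. This is a toy stand-in, not NLWeb's implementation: it substitutes simple keyword overlap for whatever retrieval and ranking a real deployment uses, and the catalog entries are invented. What it does show is the key property: every fact in the answer is copied from a stored object, and when nothing matches, the code says so instead of inventing a reply.

```python
import re

# Hypothetical publisher data, shaped like Schema.org Product objects.
CATALOG = [
    {"@type": "Product", "name": "Classic Mayonnaise",
     "description": "Egg-based mayonnaise in a glass jar",
     "offers": {"price": "3.99", "priceCurrency": "USD"}},
    {"@type": "Product", "name": "Vegan Mayo",
     "description": "Egg-free plant-based spread",
     "offers": {"price": "4.49", "priceCurrency": "USD"}},
]


def tokens(text: str) -> set[str]:
    """Lowercase a string and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, objects: list[dict]) -> list[dict]:
    """Return objects sharing at least one word with the query, best first."""
    wanted = tokens(query)
    scored = []
    for obj in objects:
        overlap = len(wanted & tokens(obj["name"] + " " + obj["description"]))
        if overlap:
            scored.append((overlap, obj))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [obj for _, obj in scored]


def answer(query: str, objects: list[dict]) -> str:
    """Phrase a reply using only fields taken from retrieved objects."""
    hits = retrieve(query, objects)
    if not hits:
        return "No matching items in the site's data."
    return "; ".join(
        f"{h['name']} ({h['offers']['price']} {h['offers']['priceCurrency']})"
        for h in hits
    )


print(answer("vegan mayo", CATALOG))  # Vegan Mayo (4.49 USD)
print(answer("ketchup", CATALOG))     # No matching items in the site's data.
```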

Implications for Search Engine Optimization (SEO) Professionals

The advent of the agentic web presents both profound challenges and unprecedented opportunities for SEO professionals. As the web evolves to support sophisticated AI agents, a new and pressing question emerges: how does one ensure visibility and discoverability when answers are increasingly generated and synthesized by AI, rather than merely ranked as a list of links?

A vivid illustration of this challenge arose during Microsoft’s Ignite event. A consultant described a client in the food industry, specializing in products like mayonnaise. The client’s concern was straightforward: how could their brand appear prominently when a user asked an AI assistant a natural language question about mayonnaise? This seemingly simple query exposed a deeper paradigm shift. If AI systems are designed to generate direct, synthesized answers instead of presenting a traditional list of search results, the very definition of "optimization" must evolve.

This shift underscores a crucial point: the agentic web is not a replacement for the open web, but rather an additional, intelligent layer built on top of it. Traditional search engines will continue to index pages, and rankings for human-driven queries will still hold significance. However, the introduction of intelligent systems capable of directly querying websites, comparing information across multiple sources, and generating synthesized responses adds a new dimension to visibility.

For SEO professionals, this fundamentally alters the website’s role and the strategies required for success. It is no longer sufficient to think solely in terms of pages designed for human visits and clicks. Websites must now be conceptualized as intelligent endpoints, meticulously structured and optimized to be queried, understood, and acted upon by machine intelligence.

This necessitates a renewed emphasis on several core SEO principles, elevating them from "best practices" to foundational requirements:

- Structured Data: Comprehensive and accurate implementation of Schema.org markup is no longer just an enhancement for rich results; it becomes the essential language through which AI systems interpret, categorize, and select your content. This includes not just basic entity markup but also action-oriented schema for services and products.

- Clean Information Architecture (IA): A logical, hierarchical, and easily navigable site structure, both for humans and machines, becomes paramount. AI agents need to efficiently map and understand the relationships between different pieces of content and functionalities on your site.

- Machine-Readable Content: Content must be clear, factual, and free of unnecessary jargon and ambiguity. It must be written with both human readability and machine interpretability in mind, ensuring that AI can accurately extract key facts and insights.

- Semantic SEO: Moving beyond keyword stuffing, SEOs must focus on optimizing for concepts, entities, and user intent. This means understanding the broader context of user queries and ensuring content provides comprehensive, authoritative answers.

- Interoperability: Actively preparing websites to interact with protocols like NLWeb and other agentic standards, ensuring they can seamlessly provide data and services to autonomous agents.
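One practical way to act on the structured data point is to audit markup for gaps before an agent ever sees it. The sketch below checks a Schema.org node against a small checklist. The checklist itself is hypothetical, chosen for illustration rather than taken from any official requirement, and the product data is invented; the code also assumes each `@type` is a single string.

```python
# Hypothetical checklist; a real one should follow the consuming system's docs.
EXPECTED = {
    "Product": ("name", "description", "brand", "offers"),
    "Offer": ("price", "priceCurrency", "availability"),
}


def missing_properties(node: dict) -> list[str]:
    """List expected properties absent from a node and its nested nodes."""
    expected = EXPECTED.get(node.get("@type"), ())
    missing = [prop for prop in expected if prop not in node]
    # Recurse into nested typed nodes, prefixing gaps with the parent key.
    for key, value in node.items():
        if isinstance(value, dict) and "@type" in value:
            missing += [f"{key}.{prop}" for prop in missing_properties(value)]
    return missing


product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Classic Mayonnaise",
    "brand": {"@type": "Brand", "name": "Example Foods"},
    "offers": {"@type": "Offer", "price": "3.99", "priceCurrency": "USD"},
}

gaps = missing_properties(product)
print(gaps)  # ['description', 'offers.availability']
```

Running a check like this across a catalog gives a concrete to-do list, which is more actionable than a general instruction to "add more schema."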

The key takeaway for SEOs is clear: because the agentic web adds a layer to the open web rather than replacing it, websites must now serve two audiences. To remain visible and competitive, SEO professionals must ensure their websites are not only structured for human consumption but are also meticulously organized, accessible, and ready to be queried and understood by intelligent systems. Visibility in this new layer depends on clarity, interoperability, and robust infrastructure, making foundational SEO practices more critical than ever before. The future of SEO will blend traditional ranking strategies with advanced semantic understanding and agent-centric optimization.

Yoast’s Strategic Partnership and WordPress Integration: Democratizing Agentic Capabilities

In a significant development for the broader web ecosystem, Microsoft highlighted Yoast as a key partner in bringing agentic search capabilities to the vast WordPress community during the NLWeb announcement. This collaboration, detailed in the official press announcement "Yoast and Microsoft’s NLWeb integration," underscores the practical efforts to democratize access to the agentic web.

For the millions of WordPress site owners worldwide, concepts such as infrastructure, endpoints, and communication protocols can often seem abstract and technically daunting. This is precisely where the strategic preparation facilitated by tools like Yoast SEO becomes invaluable. While Yoast does not automatically deploy NLWeb for its users, its robust schema aggregation feature, available in Yoast SEO, Yoast SEO Premium, Yoast WooCommerce SEO, and Yoast SEO AI+, plays a critical preparatory role. This feature systematically organizes and structures website content, rendering it significantly easier to build and implement NLWeb on top of it. When site owners enable these relevant Yoast features, there is no visual change on the front end of their website. The transformation occurs beneath the surface, in the underlying data structure.

In essence, Yoast actively maps and organizes structured data, dramatically reducing the technical effort and complexity required to integrate NLWeb. By doing so, Yoast helps publishers complete much of the foundational groundwork necessary for agentic web readiness. This partnership is crucial because WordPress powers over 43% of all websites, making it a critical gateway for widespread adoption of agentic capabilities. By simplifying the structural requirements, Yoast enables a vast segment of the internet to prepare for a future where AI agents interact directly with website content.
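To see what "beneath the surface" means in practice, the sketch below pulls JSON-LD out of a page the way a simple machine reader might. It assumes the page embeds its structured data as a single `@graph` inside a `<script type="application/ld+json">` tag; that layout and the sample page are assumptions for illustration, not a description of any plugin's exact output.

```python
import json
from html.parser import HTMLParser

# Hypothetical page; the @graph layout is an assumption for this example.
PAGE = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@graph": [
  {"@type": "WebSite", "@id": "https://blog.example/#website", "name": "Example Blog"},
  {"@type": "Article", "headline": "Hello", "isPartOf": {"@id": "https://blog.example/#website"}}
]}
</script></head><body><h1>Hello</h1></body></html>"""


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every JSON-LD script tag."""

    def __init__(self) -> None:
        super().__init__()
        self._inside = False
        self._buffer: list[str] = []
        self.documents: list[dict] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside, self._buffer = True, []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self._inside = False
            self.documents.append(json.loads("".join(self._buffer)))


def graph_nodes(html: str) -> list[dict]:
    """Return all nodes, flattening @graph (assumes top-level JSON objects)."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    nodes: list[dict] = []
    for doc in parser.documents:
        nodes.extend(doc.get("@graph", [doc]))
    return nodes


nodes = graph_nodes(PAGE)
types = [node["@type"] for node in nodes]
print(types)  # ['WebSite', 'Article']
```

Nothing in the visible page changes, yet a machine reader recovers typed, linked objects from it, which is the kind of groundwork the paragraph above describes.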

This collaboration signifies that the agentic web is not merely a distant technological aspiration for large enterprises, but a tangible evolution for everyday website owners. It is about ensuring that content remains discoverable, understandable, and actionable in an increasingly automated world where intelligent systems are progressively acting on behalf of users. The partnership between Microsoft and Yoast illustrates a concerted effort to build the necessary bridges between cutting-edge AI protocols and widely used content management systems, accelerating the transition to a truly agentic internet.

Broader Industry Context and Future Outlook

The emergence of the agentic web, spearheaded by initiatives like Microsoft’s NLWeb, is part of a broader, industry-wide movement towards more intelligent and automated digital interactions. Major technology players are investing heavily in AI agent capabilities, from Google’s continued advancements in conversational AI and search understanding to OpenAI’s development of agentic functionalities that can interact with various applications. This widespread effort signifies that the shift towards agent-driven interactions is not a single company’s vision but a collective trajectory for the future of the internet.

The implications of the agentic web extend far beyond SEO and web development. It promises to revolutionize user experience, making digital tasks more efficient and seamless. Imagine a personal AI assistant that can autonomously research and book the best vacation package, manage financial transactions, or even coordinate complex projects by interacting directly with various web services. This level of automation has the potential to free up significant human time and cognitive load.

However, this transformation also introduces new challenges. Data privacy and security become paramount, as AI agents will handle sensitive user information and execute actions on their users’ behalf. Robust authentication, authorization, and data governance frameworks will be essential to build and maintain user trust. Ethical questions surrounding AI autonomy, accountability, and potential biases in agent decision-making will also demand careful attention and regulatory oversight. The risk of AI hallucinations, while mitigated by NLWeb’s grounding approach, remains a broader industry concern that requires ongoing technological and ethical solutions.

Furthermore, the economic impact could be substantial. New business models centered around agent services and agent-to-agent transactions may emerge, creating new opportunities for innovation and revenue generation. Content creators and publishers will need to adapt their strategies, focusing on creating highly structured, verifiable, and actionable content that can be readily consumed and utilized by AI agents, rather than solely optimizing for human eyeballs.

The agentic web, powered by protocols like NLWeb, represents a pivotal moment in the internet’s evolution. It marks a transition from a passive information repository to an active, intelligent, and highly automated ecosystem. While the journey will undoubtedly involve overcoming technical, ethical, and societal hurdles, the vision of a web where intelligence is distributed and interactions are seamless holds the promise of a dramatically more efficient, personalized, and powerful digital future. The foundation is being laid, and the digital landscape is poised for its next profound transformation.