In the digital landscape of 2026, the barrier to entry for website and application experimentation has reached an all-time low, driven by the proliferation of sophisticated AI-driven tools that can generate hypotheses, write front-end code, and summarize statistical results in a matter of seconds. As the cost of executing an A/B test approaches zero, the competitive advantage for modern enterprises has shifted away from the mere ability to run tests and toward the discipline of rigor, transparency, and data triangulation. Marketing leaders are increasingly finding that while AI can accelerate the pace of testing, the quality of the insights derived depends entirely on the human-led processes that govern the experimentation lifecycle.

The Evolution of Experimentation Rigor

The transition from 2024 to 2026 marked a pivotal era in how digital growth teams operate. Previously, A/B testing was often viewed as a specialized tactical function, frequently siloed within growth or product teams. Today, experimentation is embedded across the entire marketing ecosystem. This shift is exemplified by the adoption of frameworks such as HubSpot’s Loop marketing system, which integrates continuous testing into every stage of the customer journey.

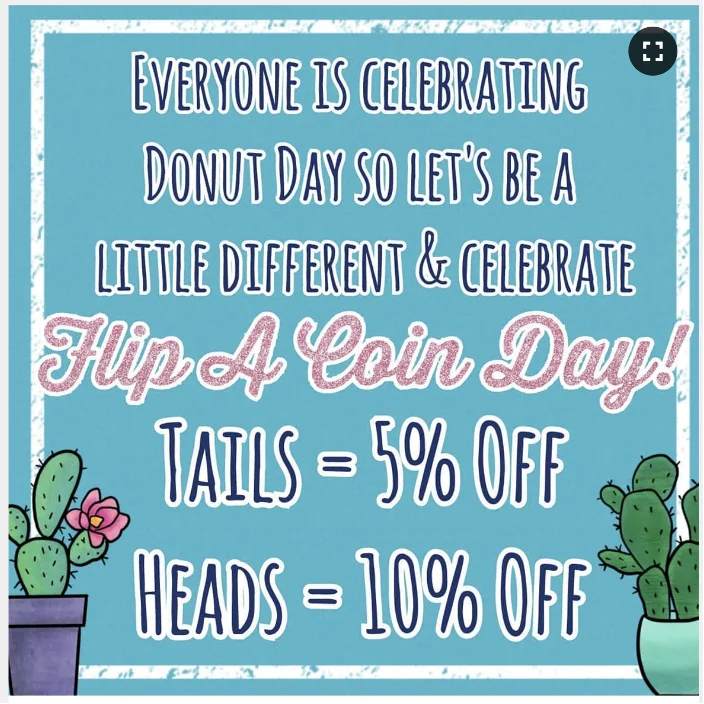

However, this democratization of testing has introduced a significant risk: the "Garbage In, Garbage Out" (GIGO) effect. In an era where AI can generate hundreds of test variants instantly, building hypotheses on shallow research or unvalidated AI-generated buyer personas has become a primary concern for Conversion Rate Optimization (CRO) experts. Industry data suggests that tests based on robust qualitative research are twice as likely to reach statistical significance as those based on gut feeling or unverified AI outputs.

Strategic Decision-Making: When to Deploy a Test

A critical component of the 2026 experimentation standard is knowing when a randomized controlled trial is the appropriate tool. Experts suggest that A/B testing should be reserved for scenarios where the risk of a "wrong" decision is high and the potential for learning is significant.

Criteria for Launching an Experiment

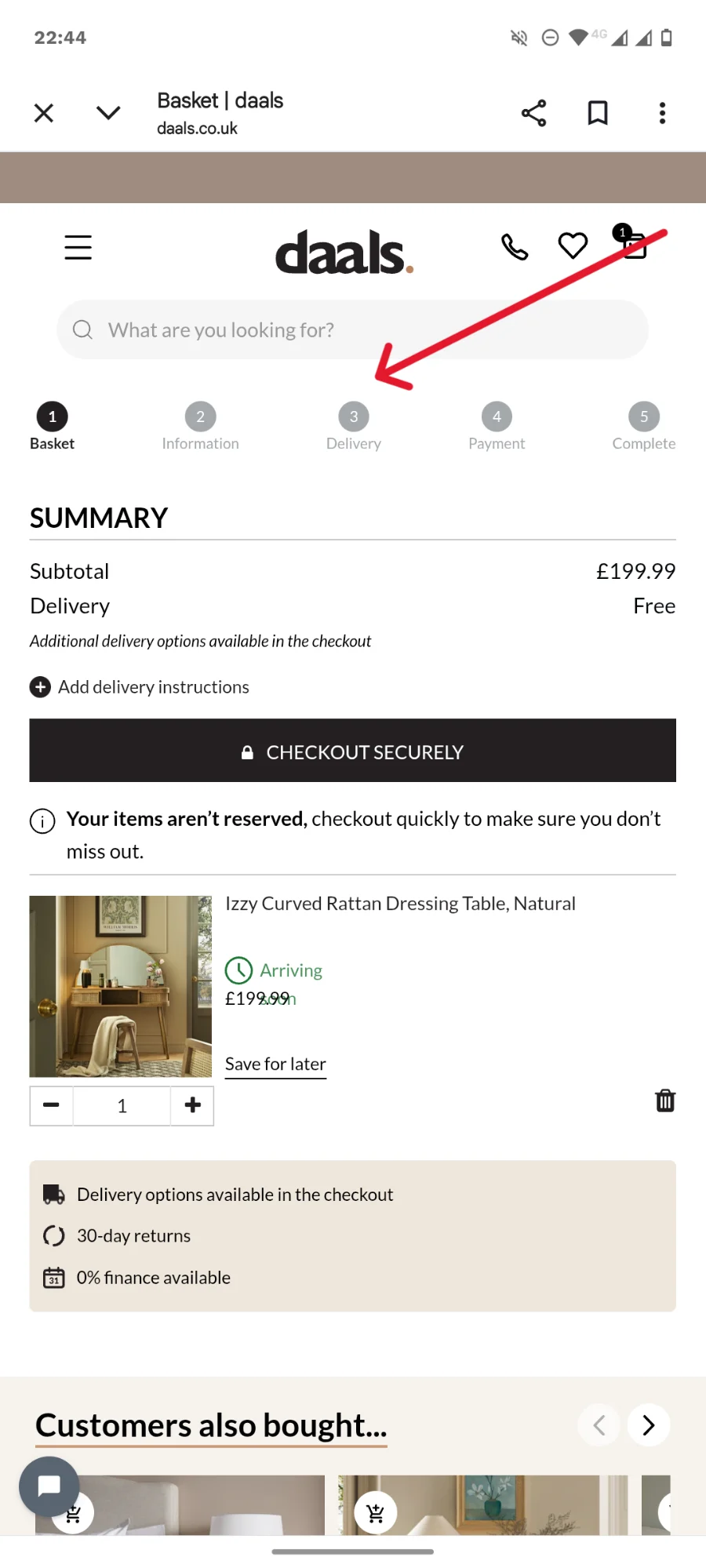

Successful experimentation programs in 2026 prioritize testing when there is sufficient traffic to reach a meaningful conclusion within a reasonable timeframe, typically two to four weeks. Tests are deemed necessary when a change is controversial or high-risk, or when qualitative sources such as heatmaps and user recordings point to conflicting conclusions about user behavior. Furthermore, testing is essential when the goal is to quantify the specific impact of a new feature on North Star metrics, such as Lifetime Value (LTV) or Average Order Value (AOV).
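
Whether a page has "sufficient traffic" can be estimated before launch. The sketch below is one common approximation, not a method prescribed by the experts quoted here: it assumes a two-sided two-proportion z-test on a conversion rate with an even traffic split. The function names, the 5% significance level, and the 80% power default are illustrative assumptions.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per arm for a two-sided two-proportion z-test.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%
    relative_lift: smallest relative improvement worth detecting, e.g. 0.10 for +10%
    alpha and power are assumed defaults, not values taken from the article.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_complete(n_per_arm, weekly_visitors, arms=2):
    """Whole weeks needed to enroll every arm, assuming an even traffic split."""
    return math.ceil(n_per_arm * arms / weekly_visitors)


if __name__ == "__main__":
    n = sample_size_per_arm(baseline_rate=0.05, relative_lift=0.10)
    print("visitors per arm:", n)
    print("weeks at 20,000 visitors/week:", weeks_to_complete(n, 20_000))
```

If the estimated duration falls well outside the two-to-four-week window, that is a signal to test a bolder change, choose a higher-traffic page, or skip the test.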

Scenarios to Avoid Testing

Conversely, running a test can be counterproductive in several scenarios. For instance, low-traffic pages often lack the statistical power to detect anything but very large effects, leading to "flat" results that waste time. Additionally, if a change is a "no-brainer" fix, such as repairing a broken checkout button or updating outdated legal compliance text, the consensus among lead experimenters is to ship the change immediately rather than delay it for the sake of a test.

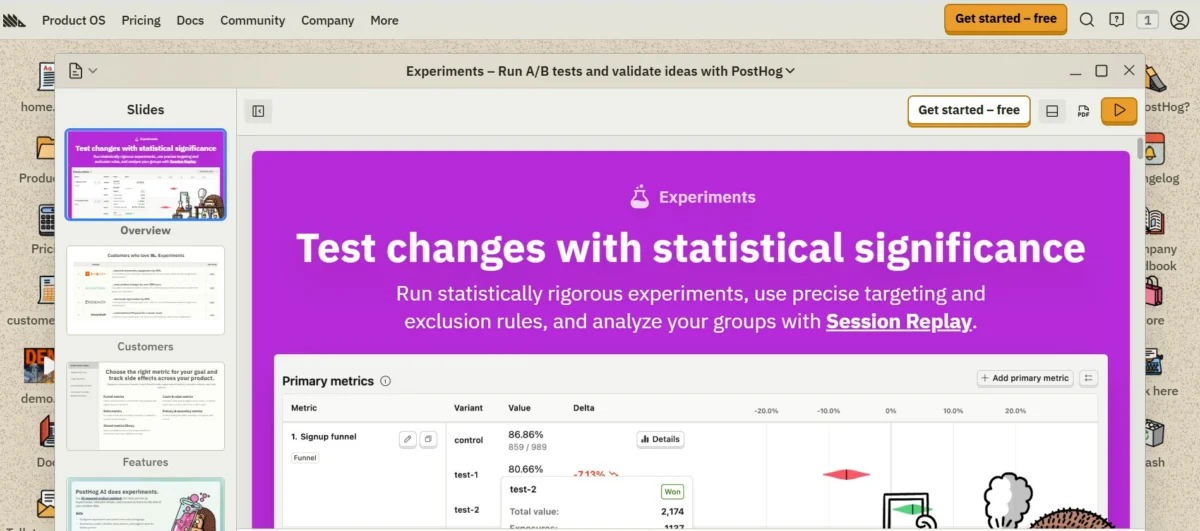

The Modern Experimentation Stack and the Role of AI

A 2026 survey of over 100 experimentation professionals, including in-house teams at Fortune 500 companies and independent consultants, revealed a significant shift in what users value in their testing platforms. While flashy AI features initially dominated the market, current demand is focused on prompt-led workflows, automated documentation, and deeper access to raw data.

Marcella Sullivan, a prominent CRO expert, notes that the most effective platforms today are those that reduce manual administrative labor while maintaining high levels of transparency. The survey indicated that 83.3% of experimenters use generative AI tools like ChatGPT or Claude on a daily basis. The primary use cases include:

- Data Synthesis: Analyzing massive sets of qualitative customer feedback to identify recurring pain points.

- Coding Support: Generating CSS and JavaScript for test variants, which has reduced the reliance on dedicated front-end developers for simple tests.

- Ideation: Treating AI as a "sparring partner" to challenge existing hypotheses and suggest alternative executions.

Despite this high adoption rate, the industry remains wary of "AI hallucinations"—instances where models fabricate data or misinterpret statistical causality. To mitigate this, leading organizations maintain a "human-in-the-loop" policy, where every AI-generated insight or code snippet must be validated by a human specialist before going live.

The ALARM Protocol: A Framework for Quality

To ensure that experiments yield defensible insights, many teams have adopted the ALARM protocol, a structured framework developed to challenge assumptions and identify risks before a single visitor is enrolled in a test.

- Alternative Executions: Instead of settling for the first design idea, teams must explore at least two or three different ways to manifest a hypothesis.

- Loss Factors: Identifying why a test might fail—such as low visibility of the element or competing calls to action—allows teams to refine the execution before launch.

- Audience and Area: Precision in targeting is paramount. Experimenters must define exactly which segments (e.g., returning mobile users from organic search) will see the test to avoid diluting the results.

- Rigor: Variants should apply psychological principles, such as cognitive load reduction or social proof, so that each one is rooted in proven behavioral science.

- MDE & MVE: Determining the Minimum Detectable Effect (MDE) helps teams understand if they have the traffic to even see a result, while the Minimum Viable Experiment (MVE) focuses on building the simplest version of the test to prove the concept.
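
The MDE step of the protocol can be made concrete with a short calculation. The following is a minimal sketch under stated assumptions: a two-sided two-proportion z-test, equal arm sizes, and the baseline variance used for both arms (a common simplification). The function name and defaults are hypothetical and are not part of the ALARM protocol itself.

```python
import math
from statistics import NormalDist


def minimum_detectable_effect(baseline_rate, n_per_arm, alpha=0.05, power=0.80):
    """Approximate the smallest lift a test can reliably detect.

    Returns (absolute_mde, relative_mde). Assumes both arms have roughly the
    baseline variance, which is reasonable when the expected lift is small.
    """
    z_total = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    # Standard error of the difference between two equally sized arms.
    std_error = math.sqrt(2 * baseline_rate * (1 - baseline_rate) / n_per_arm)
    absolute_mde = z_total * std_error
    return absolute_mde, absolute_mde / baseline_rate


if __name__ == "__main__":
    absolute, relative = minimum_detectable_effect(baseline_rate=0.05, n_per_arm=10_000)
    print(f"absolute MDE: {absolute:.4f}")
    print(f"relative MDE: {relative:.1%}")
```

Because the MDE shrinks with the square root of the sample size, quadrupling traffic only halves the smallest detectable lift, which is why low-traffic tests tend to detect only large effects.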

Statistical Methodologies: Frequentist vs. Bayesian

The debate between Frequentist and Bayesian statistics remains a central topic in 2026. Frequentist testing, the traditional bedrock of A/B testing, relies on sample sizes fixed in advance and on p-values to control long-run error rates, meaning how often false positives and false negatives occur across many experiments. It is highly regarded for its ability to "de-risk" decisions in a robust, albeit sometimes unintuitive, manner.

Bayesian statistics, however, has gained significant ground because its output is more intuitive. It reports the probability that a variant is better than the control given the observed data and a prior, which is often easier for stakeholders to grasp. In 2026, many enterprise-grade tools offer both, allowing teams to use Frequentist methods for high-stakes, "go/no-go" product decisions and Bayesian methods for rapid marketing iterations where the cost of being slightly wrong is lower than the cost of waiting for a larger sample size.
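
To illustrate how the two readouts differ on the same data, here is a hedged sketch rather than the implementation of any particular testing platform. The Frequentist side is a pooled two-proportion z-test; the Bayesian side assumes uniform Beta(1, 1) priors and estimates the probability by Monte Carlo sampling. The function names and the example counts are made up for illustration.

```python
import math
import random
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test.

    Assumes at least one conversion and one non-conversion overall,
    otherwise the standard error is zero.
    """
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A) under assumed Beta(1, 1) priors."""
    rng = random.Random(seed)  # seeded so repeated runs give the same estimate
    wins = 0
    for _ in range(draws):
        sample_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        sample_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += sample_b > sample_a
    return wins / draws


if __name__ == "__main__":
    # Illustrative counts only: 500/10,000 control vs 600/10,000 variant.
    print("p-value:", two_proportion_p_value(500, 10_000, 600, 10_000))
    print("P(B > A):", prob_b_beats_a(500, 10_000, 600, 10_000))
```

The p-value answers "how surprising is this data if there is no real difference?", while the Bayesian figure answers "how likely is it that the variant is better?". The two numbers are not interchangeable, so a team should decide which question it is asking before the test starts.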

Data Triangulation and Defensible Insights

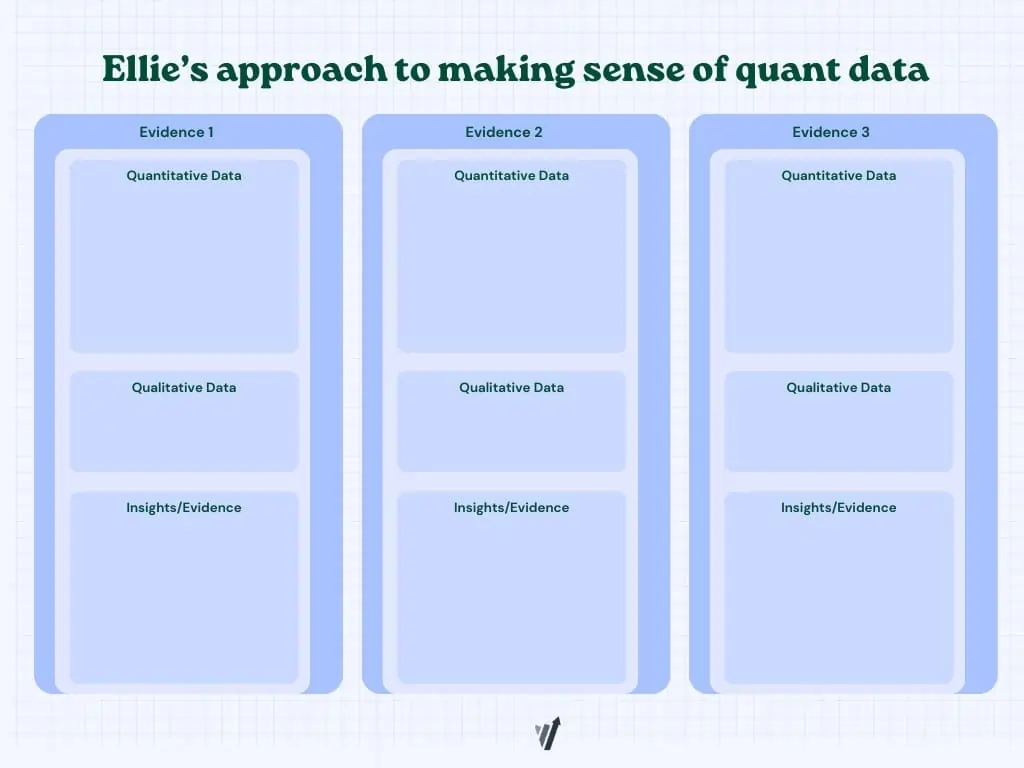

One of the most significant advancements in recent years is the move toward data triangulation. As Ellie Hughes, Head of Consulting at Eclipse Group, suggests, the goal is to take a "quantitative approach to qualitative data and a qualitative approach to quantitative data."

This involves synthesizing three distinct data streams:

- Quantitative Data: Analytics from tools like Google Analytics 4 or server-side logs that show what is happening.

- Qualitative Data: Insights from user interviews, heatmaps, and session replays that explain why it is happening.

- Heuristic Analysis: Expert reviews based on usability principles and past experiment history.

By triangulating these sources, experimenters can move past simple "winner vs. loser" declarations. For example, if a test shows a lift in conversion but heatmaps show users are struggling with a new form field, the team knows the "win" might be fragile and requires further iteration.

The Future Outlook: 2027 and Beyond

Looking toward 2027, the trajectory of experimentation suggests a move toward hyper-personalization at scale. The "DNA" of testing is evolving from static A/B tests into dynamic, server-side experimentation where AI agents continuously optimize small segments of the user base in real time.

However, the core tenets of the discipline remain unchanged. The broader implication for businesses is that as tools become more automated, the human role shifts from "executor" to "orchestrator." The ability to ask the right questions, maintain a clean data environment, and foster a culture of transparent documentation will be the factors that distinguish market leaders from those who are simply "noise-testing."

In conclusion, the experimentation landscape of 2026 is defined by a paradox: while it has never been easier to run a test, it has never been more difficult to run a good test. By adhering to rigorous protocols like ALARM, embracing data triangulation, and maintaining a healthy skepticism of AI-generated outputs, organizations can ensure that their experimentation programs drive genuine, sustainable growth rather than just temporary statistical fluctuations.