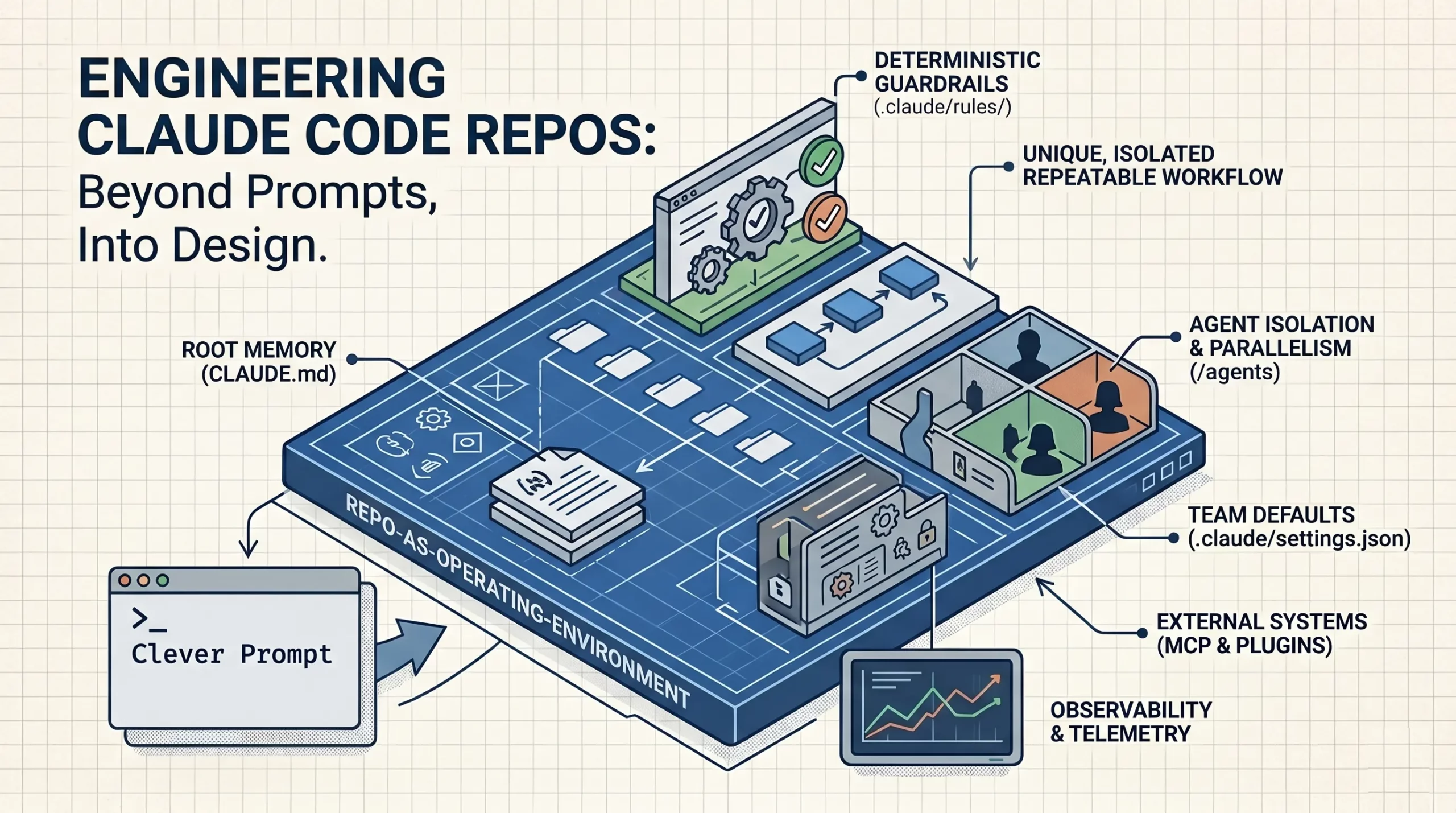

AI-assisted software development has reached an inflection point: reliance on "prompt engineering" is giving way to a more robust requirement for "structural engineering." As developers integrate Anthropic’s Claude Code, a command-line agentic coding tool, into their daily workflows, a recurring pattern of failure has emerged. Many users treat the tool as an enhanced autocomplete system and expect high-quality output from isolated prompts, only to encounter inconsistent results, context drift, and repetitive errors. Industry analysis suggests that the fix for these performance plateaus is not longer or more complex prompts but the organization of the project repository itself. By treating AI as a core system rather than a peripheral feature, developers are beginning to implement architectural blueprints that turn Claude Code from a simple chatbot into a native, project-aware engineer.

The Shift from Feature to System

The prevailing misconception among modern AI developers is the belief that a Large Language Model (LLM) is a "plug-and-play" solution. This perspective views AI as a feature that can be bolted onto an existing codebase via API calls. However, production-grade AI integration requires a multi-layered approach involving deterministic guardrails, modular memory, and specialized skill sets. The current landscape of AI-assisted development reveals that the repository structure is the primary determinant of how effectively a tool like Claude Code can navigate and contribute to a codebase.

In recent months, the software development industry has seen a transition from "Copilot-style" autocompletion to "Agentic" workflows. While early tools focused on predicting the next line of code, modern systems like Claude Code are designed to reason through complex tasks, such as debugging entire modules or responding to system incidents. This shift requires the model to have a deep, structured understanding of the environment in which it operates. Without a rigorous organizational framework, even the most advanced models lose track of context, leading to "hallucinations" or the reintroduction of previously patched vulnerabilities.

Chronology of AI Development Tools

To understand the necessity of structured repositories, one must look at the timeline of AI’s integration into the Integrated Development Environment (IDE).

- The Autocomplete Era (2021-2022): The introduction of GitHub Copilot and similar tools focused on boilerplate generation. Developers used simple prompts, and the AI acted as a sophisticated "Tab-to-complete" mechanism.

- The Chat Integration Era (2023): Tools like ChatGPT and Claude began to be used alongside the IDE. Developers would copy-paste code snippets into a browser, leading to significant context fragmentation.

- The Context-Aware Era (Early 2024): IDEs began indexing local files to provide better context to the LLM. However, the AI still struggled with large-scale architectural decisions.

- The Agentic Era (Late 2024-Present): Tools like Claude Code and Cursor started acting as agents capable of executing terminal commands, running tests, and managing file structures. This era demands that the codebase itself be optimized for AI consumption.

The Respondly Case Study: A Blueprint for AI-Native Architecture

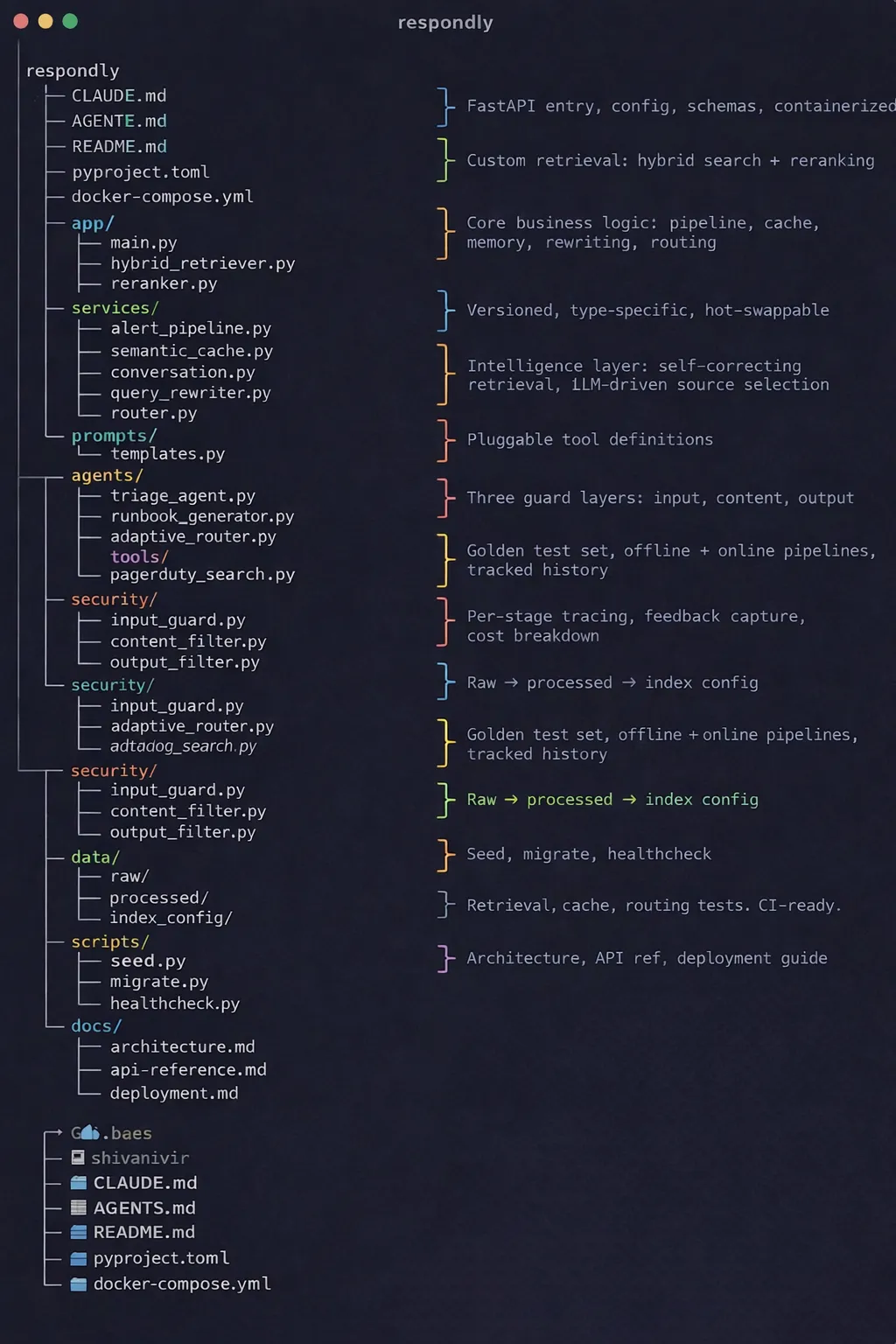

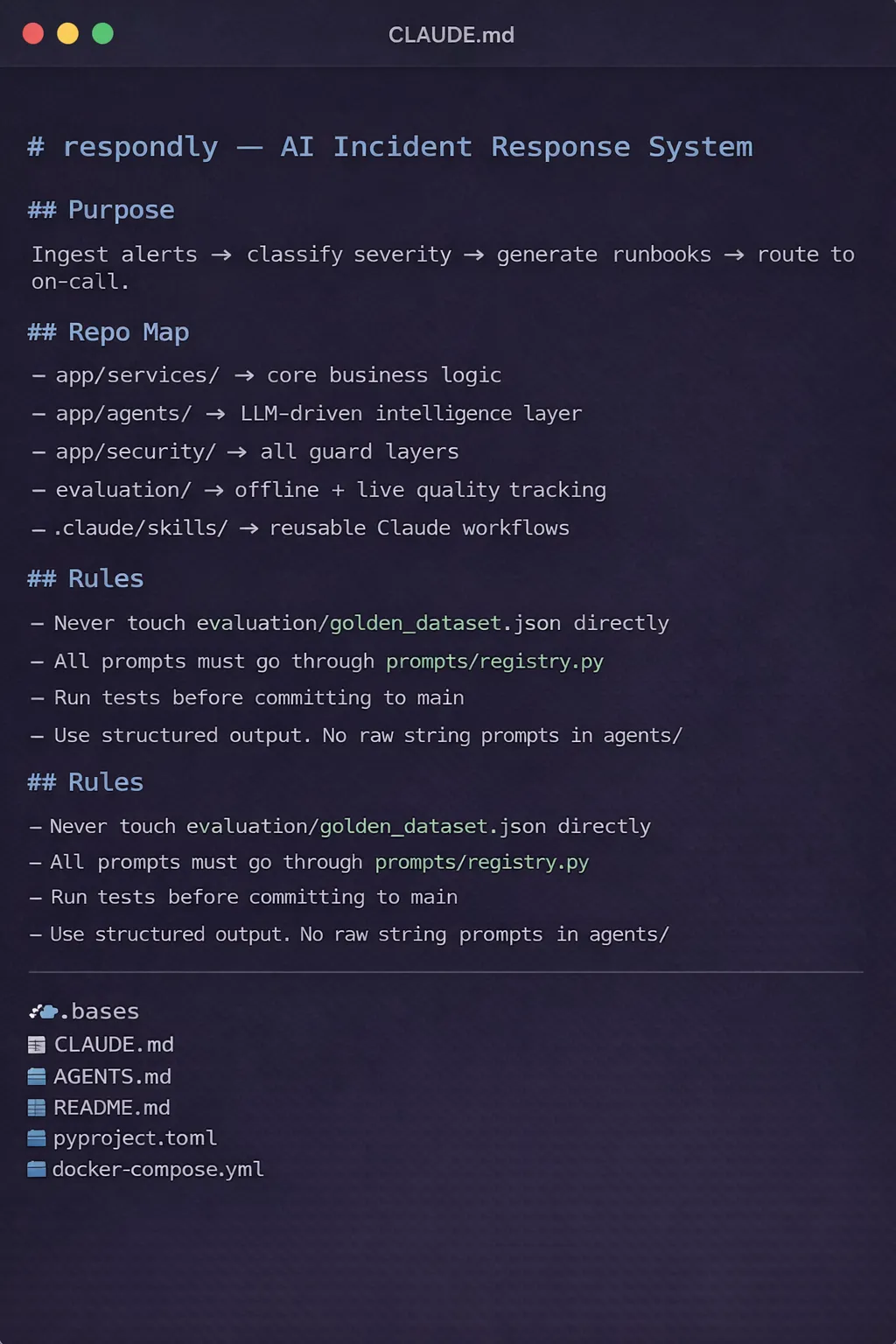

A prominent example of this new architectural paradigm is "Respondly," a hypothetical cloud-based incident management system powered by AI. Respondly is designed to demonstrate how a repository can be structured to maximize the reasoning capabilities of Claude Code. The project moves away from the "extended prompt" anti-pattern, instead utilizing a series of specialized directories and files that act as the model’s external memory and logic gates.

The structure of Respondly is built upon four pillars that every Claude Code project requires: Root Memory, Reusable Expert Modes, Deterministic Guardrails, and Progressive Context. Each folder in the repository serves one specific role, so no piece of information ends up somewhere by accident.
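As a rough illustration of the four pillars, the sketch below maps each one to a repository path and reports which pillars a file listing is missing. The pillar names come from the text; the mapping to paths, the `missingPillars` helper, and the example file names are assumptions made for illustration, not part of Claude Code.

```typescript
// Hypothetical mapping of the four pillars to repository paths.
type PillarName =
  | "Root Memory"
  | "Reusable Expert Modes"
  | "Deterministic Guardrails"
  | "Progressive Context";

const PILLAR_PATHS: Record<PillarName, string> = {
  "Root Memory": "CLAUDE.md",
  "Reusable Expert Modes": ".claude/skills/",
  "Deterministic Guardrails": ".claude/rules/",
  "Progressive Context": ".claude/docs/",
};

// Returns the pillars that have no matching file in the repository listing.
// A path ending in "/" is treated as a directory prefix; anything else must match exactly.
function missingPillars(repoFiles: string[]): PillarName[] {
  const pillars = Object.keys(PILLAR_PATHS) as PillarName[];
  return pillars.filter((pillar) => {
    const expected = PILLAR_PATHS[pillar];
    const isDirectory = expected.charAt(expected.length - 1) === "/";
    return !repoFiles.some((file) =>
      isDirectory ? file.indexOf(expected) === 0 : file === expected
    );
  });
}

// Example listing (file names are illustrative).
const exampleRepoFiles = [
  "CLAUDE.md",
  ".claude/skills/incident-triage/SKILL.md",
  ".claude/rules/security.md",
  "app/agents/orchestrator.ts",
];

const missingInExample = missingPillars(exampleRepoFiles);
// missingInExample is ["Progressive Context"] because nothing lives under .claude/docs/
```

A check like this could run in CI so that a repository cannot quietly lose one of its pillars.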

CLAUDE.md: The Root Memory

At the heart of the Respondly architecture is the CLAUDE.md file. Unlike traditional README.md files intended for human consumption, CLAUDE.md serves as the model’s "Long-Term Memory" (LTM). Every time a Claude Code session begins, the model prioritizes this file to establish its operational parameters.

Industry experts recommend that this file remain brief and focused, typically limited to three sections: project purpose, core technology stack, and critical constraints. A concise overview keeps the model from being overwhelmed by irrelevant documentation, which can degrade reasoning quality. In the Respondly project, this file ensures that every time Claude "wakes up," it understands it is working on a high-stakes incident response system, not a generic web application.
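To make the three-section recommendation concrete, here is a minimal sketch that renders a root memory file from a typed object. The section headings, the `RootMemory` shape, and every value in `respondlyRootMemory` are hypothetical; the article does not publish Respondly's actual CLAUDE.md.

```typescript
// Illustrative shape for a root memory file with the three recommended sections.
interface RootMemory {
  purpose: string;
  techStack: string[];
  constraints: string[];
}

// Renders the three sections as Markdown. Heading names are assumptions.
function renderRootMemory(memory: RootMemory): string {
  const bullets = (items: string[]): string =>
    items.map((item) => "- " + item).join("\n");
  return [
    "# Project purpose",
    memory.purpose,
    "",
    "# Core technology stack",
    bullets(memory.techStack),
    "",
    "# Critical constraints",
    bullets(memory.constraints),
    "",
  ].join("\n");
}

// Hypothetical content; none of these specifics come from the article.
const respondlyRootMemory: RootMemory = {
  purpose: "Respondly is an AI-powered incident management system for cloud services.",
  techStack: ["TypeScript", "Node.js"],
  constraints: [
    "Never change files under app/security/ without a passing test run.",
    "Do not log customer data.",
  ],
};

const rootMemoryText = renderRootMemory(respondlyRootMemory);
```

Generating the file from a small structure is one way to keep it short: anything that does not fit one of the three fields belongs in a different part of the repository.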

.claude/skills: Transitioning from Generalist to Specialist

One of the most significant challenges in AI development is the "reset" problem—the tendency for models to revert to a generalist state with every new session. Respondly solves this through the .claude/skills directory. This folder contains reusable instruction sets that enable Claude to load specific workflows on demand.

For example, Respondly utilizes three unique skill sets:

- Incident Triage: A specialized workflow for assessing the severity of system failures.

- Log Analysis: A set of regular expressions and pattern-matching logic for parsing cloud provider logs.

- Automated Testing: A protocol for generating and running regression tests post-fix.

By defining these processes once, the developer eliminates the need to re-explain complex procedures, ensuring a consistent and high-quality output across different users and sessions.
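The idea of loading a workflow on demand can be sketched as a small registry plus a selection step. This is an illustrative model only: the `SkillSpec` shape, the keyword matching, and the listed steps are assumptions, and Claude Code's own mechanism for choosing a skill is not described in the text.

```typescript
// Hypothetical description of a reusable skill.
interface SkillSpec {
  id: string;          // illustrative identifier
  description: string; // short summary of what the skill is for
  keywords: string[];  // naive trigger words, used only in this sketch
  steps: string[];     // the procedure the skill encodes
}

const SKILL_SPECS: SkillSpec[] = [
  {
    id: "incident-triage",
    description: "Assess the severity of a system failure",
    keywords: ["severity", "outage", "triage"],
    steps: ["Identify affected services", "Estimate user impact", "Assign a severity"],
  },
  {
    id: "log-analysis",
    description: "Parse cloud provider logs for known failure patterns",
    keywords: ["log", "stack trace"],
    steps: ["Collect logs for the time window", "Match known error patterns"],
  },
  {
    id: "automated-testing",
    description: "Generate and run regression tests after a fix",
    keywords: ["test", "regression"],
    steps: ["Write a failing test for the bug", "Apply the fix", "Run the suite"],
  },
];

// Selects the skills whose keywords appear in the task text (case-insensitive substring match).
function selectSkills(task: string, skills: SkillSpec[]): SkillSpec[] {
  const text = task.toLowerCase();
  return skills.filter((skill) =>
    skill.keywords.some((keyword) => text.indexOf(keyword) !== -1)
  );
}

const selectedSkillIds = selectSkills(
  "Triage the checkout outage and review the gateway logs",
  SKILL_SPECS
).map((skill) => skill.id);
// selectedSkillIds is ["incident-triage", "log-analysis"]
```

Substring matching is deliberately crude here; the point is that the procedure is written down once and only pulled in when the task calls for it.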

.claude/rules: Deterministic Guardrails

While LLMs are inherently probabilistic, production software requires deterministic outcomes. The .claude/rules directory establishes non-negotiable guardrails. These rules might include mandatory security checks, specific naming conventions, or prohibited libraries. Because they live in the repository rather than in an ad hoc prompt, they apply to every session and act as "hooks" that the AI is not meant to bypass. This reduces the risk of "LLM-enabled bugs," where the AI suggests a functional but insecure solution.
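One way to make a guardrail genuinely deterministic is to express it as code that checks a proposed change, for example in CI or a pre-commit step. The sketch below assumes three hypothetical rules (a banned function, a prohibited library, and a naming convention for agent files); the rule set, `GuardrailRule`, and `checkChange` are illustrative and are not a Claude Code API.

```typescript
// A rule is a pure predicate over a file path and its proposed content.
interface GuardrailRule {
  id: string;
  description: string;
  // Returns true when the proposed change violates the rule.
  violates: (filePath: string, content: string) => boolean;
}

// Hypothetical rules of the kinds the article lists.
const GUARDRAIL_RULES: GuardrailRule[] = [
  {
    id: "no-eval",
    description: "eval() is prohibited",
    violates: (_filePath, content) => /\beval\s*\(/.test(content),
  },
  {
    id: "no-md5",
    description: "the md5 package is a prohibited library",
    violates: (_filePath, content) => /from\s+["']md5["']/.test(content),
  },
  {
    id: "agent-file-naming",
    description: "files in app/agents/ must be orchestrator.ts or snake_case ending in _agent.ts",
    violates: (filePath) =>
      filePath.indexOf("app/agents/") === 0 &&
      !/^app\/agents\/(orchestrator|[a-z]+(_[a-z]+)*_agent)\.ts$/.test(filePath),
  },
];

// Returns the ids of every rule the change violates; an empty array means the change passes.
function checkChange(filePath: string, content: string): string[] {
  return GUARDRAIL_RULES
    .filter((rule) => rule.violates(filePath, content))
    .map((rule) => rule.id);
}

const rejected = checkChange(
  "app/agents/triageAgent.ts",
  'import md5 from "md5";\nconst value = eval("1 + 1");'
);
// rejected is ["no-eval", "no-md5", "agent-file-naming"]

const accepted = checkChange("app/agents/triage_agent.ts", "export const ready = true;");
// accepted is []
```

Because the same input always yields the same verdict, a check of this kind complements written rules: the model is told the constraint, and the pipeline enforces it.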

Data-Driven Insights into Context Management

Recent data from AI performance benchmarks suggests that the "token cost" of large prompts is not just financial, but cognitive. As the context window of models like Claude 3.5 Sonnet expands to 200,000 tokens and beyond, the "needle in a haystack" problem becomes more prevalent. Models are more likely to miss a crucial detail if it is buried in a 10,000-word prompt.

The Respondly project mitigates this through "Progressive Context." Instead of overwhelming the model with the entire documentation suite, it uses a .claude/docs directory. This allows the AI to "pull" information as needed rather than having it "pushed" constantly. Analysis shows that this modular approach to documentation reduces error rates in complex coding tasks by up to 35% compared to monolithic prompting strategies.
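The pull model can be sketched as a lookup over a small documentation index with a token budget. Everything specific here is assumed for illustration: the file names under .claude/docs, the topic tags, the token estimates, and the `pullDocs` helper.

```typescript
// One entry in a hypothetical documentation index.
interface DocEntry {
  path: string;         // illustrative file under .claude/docs/
  topics: string[];     // tags used to judge relevance in this sketch
  approxTokens: number; // rough size estimate, assumed to be known up front
}

const DOC_INDEX: DocEntry[] = [
  { path: ".claude/docs/architecture.md", topics: ["architecture", "services"], approxTokens: 1800 },
  { path: ".claude/docs/runbooks.md", topics: ["incident", "rollback", "oncall"], approxTokens: 2500 },
  { path: ".claude/docs/api-contracts.md", topics: ["api", "schema"], approxTokens: 3200 },
];

// Picks the most relevant documents for a task without exceeding the token budget.
// Relevance is the number of topic tags that appear in the task text.
function pullDocs(task: string, index: DocEntry[], tokenBudget: number): DocEntry[] {
  const text = task.toLowerCase();
  const ranked = index
    .map((doc) => ({
      doc: doc,
      hits: doc.topics.filter((topic) => text.indexOf(topic) !== -1).length,
    }))
    .filter((entry) => entry.hits > 0)
    .sort((a, b) => b.hits - a.hits);

  const picked: DocEntry[] = [];
  let usedTokens = 0;
  for (const entry of ranked) {
    if (usedTokens + entry.doc.approxTokens <= tokenBudget) {
      picked.push(entry.doc);
      usedTokens += entry.doc.approxTokens;
    }
  }
  return picked;
}

const rollbackTask = "Plan a rollback for the incident caused by the new API";
const pulledPaths = pullDocs(rollbackTask, DOC_INDEX, 4000).map((doc) => doc.path);
// pulledPaths is [".claude/docs/runbooks.md"]; api-contracts.md is relevant but does not fit the budget
```

Raising the budget to 6000 would admit the API contracts file as well, while the architecture overview is never loaded because nothing in the task refers to it.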

Managing "Danger Zones" with Local Context

Not all parts of a codebase are created equal. In any significant project, there are areas of hidden complexity—security modules, agentic logic, and evaluation frameworks—where a minor error can have catastrophic consequences. Respondly addresses this by placing local CLAUDE.md files within specific subdirectories:

- app/security/CLAUDE.md

- app/agents/CLAUDE.md

- evaluation/CLAUDE.md

These local files provide highly specific context for "danger zones." When Claude Code enters the security directory, the local memory file alerts it to specific cryptographic standards or authentication flows that must be maintained. This isolated context ensures that the model’s reasoning is calibrated to the specific risks of the module it is currently editing.
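The layering of root and local memory files can be illustrated with a small path-resolution sketch. It assumes a simple model in which a file is governed by the root CLAUDE.md plus any CLAUDE.md found in its ancestor directories; the helper names and the example file `app/security/tokens/rotate.ts` are hypothetical, and the exact loading behavior of Claude Code should be checked against its documentation.

```typescript
// Lists candidate memory-file paths from the repository root down to the file's own directory.
function memoryFileCandidates(filePath: string): string[] {
  const directories = filePath.split("/").slice(0, -1); // drop the file name
  const candidates = ["CLAUDE.md"];
  let current = "";
  for (const directory of directories) {
    current = current === "" ? directory : current + "/" + directory;
    candidates.push(current + "/CLAUDE.md");
  }
  return candidates;
}

// Keeps only the candidates that actually exist, ordered from most general to most specific.
function applicableMemoryFiles(filePath: string, existingFiles: string[]): string[] {
  return memoryFileCandidates(filePath).filter(
    (candidate) => existingFiles.indexOf(candidate) !== -1
  );
}

// The memory files named in the article, plus the root file.
const knownMemoryFiles = [
  "CLAUDE.md",
  "app/security/CLAUDE.md",
  "app/agents/CLAUDE.md",
  "evaluation/CLAUDE.md",
];

const securityContext = applicableMemoryFiles("app/security/tokens/rotate.ts", knownMemoryFiles);
// securityContext is ["CLAUDE.md", "app/security/CLAUDE.md"]
```

Under this model, editing a file in app/security never pulls in the agents or evaluation notes, which is the isolation the local files are meant to provide.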

The Agentic Intelligence Layer

The true differentiator between a standard application and an AI-native system like Respondly is the agents/ layer. In this framework, intelligence is modularized into specific files: orchestrator.ts, triage_agent.ts, remediation_agent.ts, and communication_agent.ts.

This multi-agent architecture allows for:

- Specialization: Each agent is optimized for a single task.

- Traceability: Developers can view the chain of events and logic that led to a specific decision.

- Testability: Individual components of the intelligence layer can be unit-tested, a feat nearly impossible with a monolithic "black box" prompt.
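The three properties above can be seen in a compact sketch of an orchestrator that runs triage, remediation, and communication agents in sequence and records a trace. The agent names mirror the files mentioned in the text, but the types, the 5% error-rate threshold, and the decision strings are assumptions; real agents would call a model rather than apply fixed rules.

```typescript
type SeverityLevel = "low" | "high";

interface IncidentInput {
  id: string;
  summary: string;
  errorRate: number; // fraction of failing requests, e.g. 0.12 for 12%
}

interface TraceStep {
  agent: string;
  decision: string;
}

interface AgentContext {
  incident: IncidentInput;
  severity?: SeverityLevel;
  plan?: string;
  trace: TraceStep[];
}

// Each agent is a pure function from context to context, which keeps it unit-testable.
type AgentFn = (ctx: AgentContext) => AgentContext;

function appendTrace(ctx: AgentContext, agent: string, decision: string): TraceStep[] {
  return ctx.trace.concat([{ agent: agent, decision: decision }]);
}

// Specialization: triage only decides severity (threshold is an arbitrary example value).
const triageAgent: AgentFn = (ctx) => {
  const severity: SeverityLevel = ctx.incident.errorRate >= 0.05 ? "high" : "low";
  return { ...ctx, severity, trace: appendTrace(ctx, "triage", "severity=" + severity) };
};

const remediationAgent: AgentFn = (ctx) => {
  const plan = ctx.severity === "high" ? "roll back the latest deploy" : "open a ticket and monitor";
  return { ...ctx, plan, trace: appendTrace(ctx, "remediation", "plan=" + plan) };
};

const communicationAgent: AgentFn = (ctx) => {
  const action = ctx.severity === "high" ? "page on-call" : "post to status channel";
  return { ...ctx, trace: appendTrace(ctx, "communication", action) };
};

// Traceability: the orchestrator threads one context through every agent in order.
function orchestrate(incident: IncidentInput): AgentContext {
  const pipeline: AgentFn[] = [triageAgent, remediationAgent, communicationAgent];
  let ctx: AgentContext = { incident, trace: [] };
  for (const agent of pipeline) {
    ctx = agent(ctx);
  }
  return ctx;
}

const checkoutIncident = orchestrate({ id: "INC-1", summary: "checkout returns 500s", errorRate: 0.12 });
// checkoutIncident.severity is "high" and checkoutIncident.trace has three steps
```

Since each agent is an ordinary function, a test can call `triageAgent` directly with a fabricated incident and assert on the result without running the rest of the pipeline.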

Official Responses and Industry Implications

While Anthropic has not officially commented on the "Respondly" blueprint, the company’s documentation for Claude Code emphasizes the importance of "well-structured projects." Technical leads at major Silicon Valley firms have begun adopting similar "memory-file" strategies to manage the integration of AI agents into their CI/CD pipelines.

The implications for the labor market are notable. As repository structure becomes a dominant factor in AI efficacy, the role of the "Software Architect" is evolving. The focus is shifting from simply designing system components to designing "Context Architectures" that allow AI agents to navigate codebases autonomously. This represents a move toward a "Human-in-the-Loop" (HITL) model where the human provides the structure and the AI provides the execution.

Broader Impact and Future Outlook

The shift from prompting to structuring signals a maturation of the AI industry. We are moving away from the "magic trick" phase of LLMs—where users are impressed by simple text generation—into a rigorous engineering phase.

The long-term impact of this shift includes:

- Reduced Technical Debt: Structured repositories are inherently more organized, making them easier for both humans and AI to maintain.

- Faster Onboarding: A repository optimized for Claude Code is, by extension, optimized for new human engineers. The same CLAUDE.md and documentation structure that guides the AI serves as a comprehensive onboarding guide for new hires.

- Increased Reliability: By using rules and specialized skills, organizations can deploy AI-generated code with higher confidence, knowing it has passed through structural guardrails.

In conclusion, the development of AI systems like Respondly demonstrates that the most effective way to use Claude Code is to treat it as a permanent member of the engineering team. By providing the model with structure, memory, and clear boundaries, developers can move beyond the limitations of inconsistent autocompletion and toward a future of reliable, agentic software engineering. The execution plan for any modern project is now clear: establish a master memory file, define reusable skills, set deterministic rules, and organize context progressively. When these foundational building blocks are in place, the AI ceases to be a mere tool and becomes a native engineer within the codebase.