Memory serves as the fundamental architecture that dictates how human beings process reality and how artificial intelligence (AI) agents execute autonomous actions. In the current landscape of large language model (LLM) development, the transition from "stateless" chat interfaces to "stateful" autonomous agents represents a significant leap in computational capability. Without a dedicated memory layer, an AI agent is restricted to responding only to immediate input; however, with the integration of memory patterns, these systems can maintain context, recall historical actions, and iteratively refine their knowledge base. As AI engineering increasingly draws from cognitive science, the industry is witnessing a shift toward sophisticated memory architectures that categorize information into short-term, episodic, semantic, and long-term storage, each presenting unique trade-offs in retention, retrieval speed, and system control.

The Architectural Necessity of Agent Memory

In technical terms, agent memory is the structural capacity of an AI system to store information, retrieve it when a prompt calls for it, and use past experiences to improve future outputs. This capability is not inherent to the foundational models themselves. Most standard LLMs, by design, do not retain information across separate sessions; they operate primarily within a fixed context window. Consequently, developers must engineer memory as a separate design layer that wraps around the model. This layer functions as a decision-making engine that determines what data is worth saving, how it should be indexed, and the precise conditions under which it should be surfaced.
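As a rough illustration of memory as a wrapper layer, the sketch below puts a small class around a stateless model call and makes the three decisions described above: what to save, how to index it, and when to surface it. Everything here is an assumption made for the example: the `MemoryLayer` class, the "remember:" trigger, the first-word index, and the `echo_model` stand-in are hypothetical and do not come from any particular framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class MemoryLayer:
    """Wraps a stateless model call with save / index / surface decisions."""
    model: Callable[[str], str]                           # stand-in for an LLM call
    store: dict[str, str] = field(default_factory=dict)   # index key -> saved note

    def should_save(self, text: str) -> bool:
        # Hypothetical policy: keep only statements the user flags explicitly.
        return text.lower().startswith("remember:")

    def surface(self, query: str) -> list[str]:
        # Surface a note when its index key appears in the query.
        return [note for key, note in self.store.items() if key in query.lower()]

    def ask(self, user_input: str) -> str:
        if self.should_save(user_input):
            note = user_input.split(":", 1)[1].strip()
            key = note.split()[0].lower()                  # naive index: first word
            self.store[key] = note
            return "noted"
        context = self.surface(user_input)
        prompt = "\n".join(context + [user_input])
        return self.model(prompt)


def echo_model(prompt: str) -> str:
    """Fake model that returns its prompt, so the injected context is visible."""
    return prompt


layer = MemoryLayer(model=echo_model)
layer.ask("remember: deployments are frozen on weekends")
reply = layer.ask("Can I ship deployments on Saturday?")
print(reply)
```

The model itself never changes; only the prompt it receives does. A production layer would replace the keyword trigger and first-word index with learned extraction and proper retrieval.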

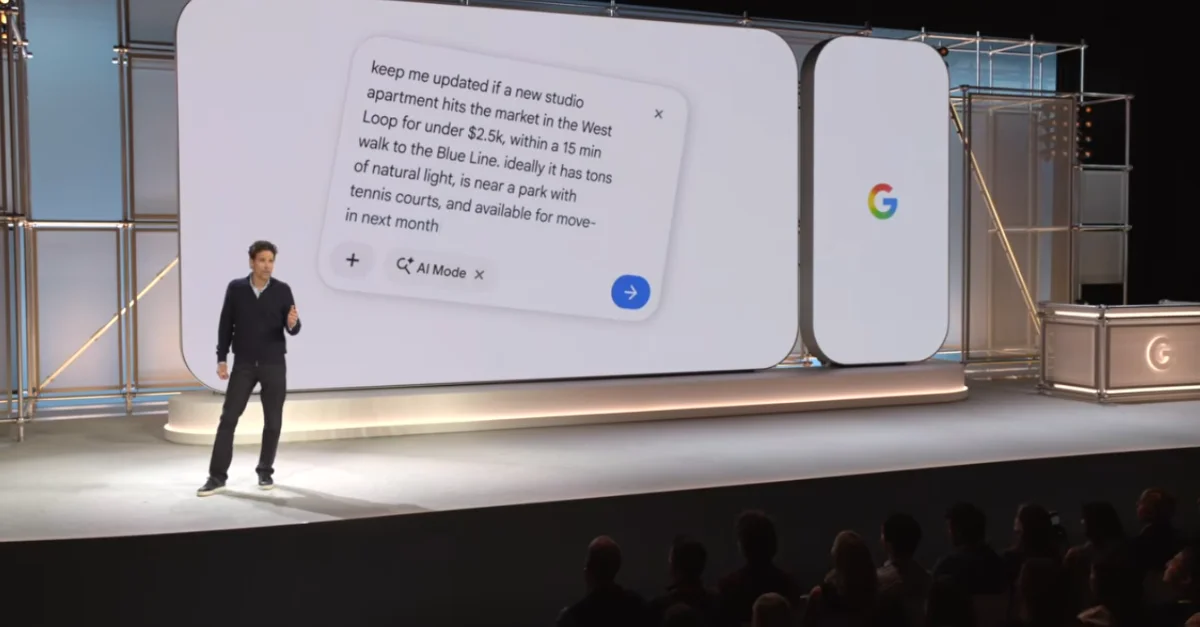

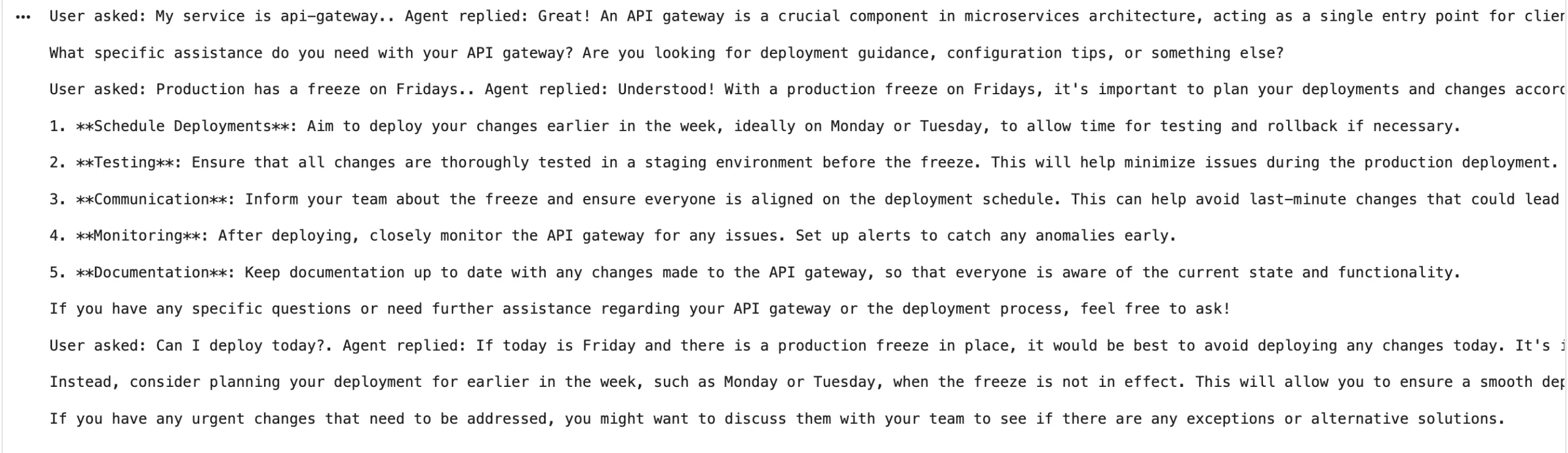

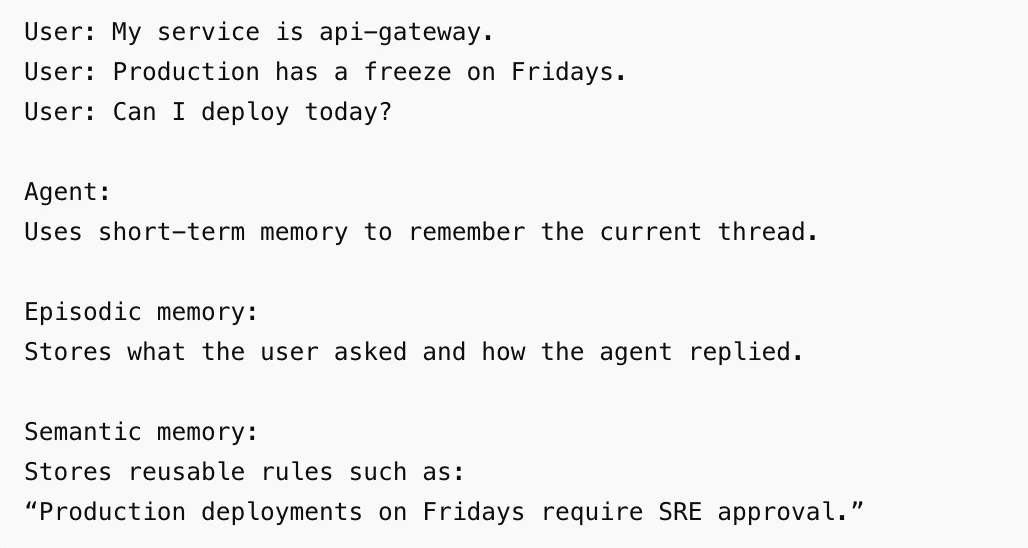

In a rudimentary deployment, memory might consist solely of the last few exchanges in a chat history. However, in enterprise-grade autonomous agents, the scope expands to include user preferences, multi-step task histories, tool execution logs, and an archive of past errors. For instance, a technical support agent might remember that a specific user manages a particular cloud service and that deployments to that service are prohibited on weekends. When the user later asks for a status update or a deployment action, the agent utilizes this stored "semantic" and "episodic" data to provide a response that is not only accurate but also contextually aware of organizational constraints.

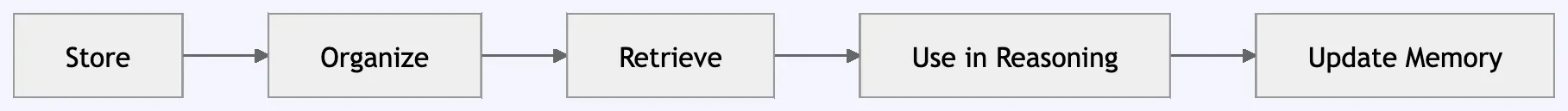

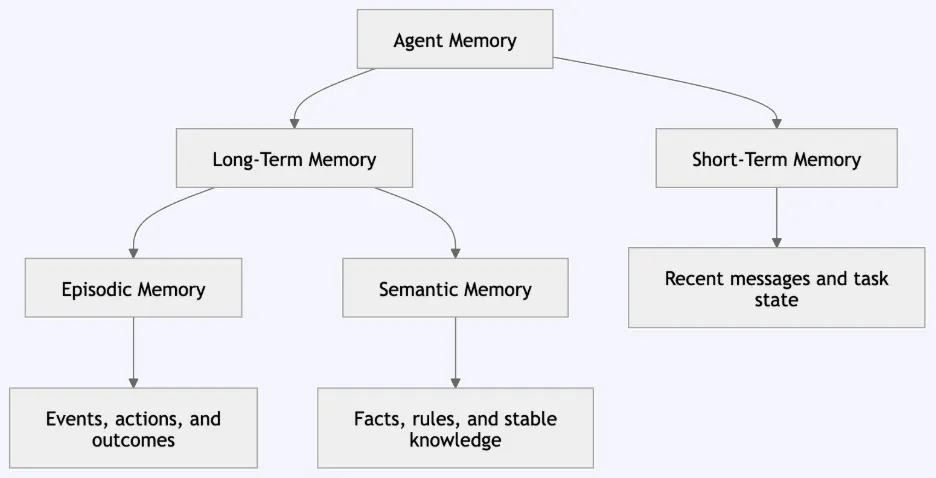

Categorizing Memory Types: From Cognitive Theory to Engineering

To build robust AI agents, engineers have adopted a taxonomy from cognitive science, dividing memory into four distinct functional areas. This stratification ensures that the system remains efficient, avoiding the "noise" that occurs when an agent attempts to process every past interaction simultaneously.

Short-Term Memory

Short-term memory in AI agents is often referred to as the "active context." It involves the immediate retention of the current conversation thread. In framework implementations like LangGraph, this is managed through "checkpointers" that save the state of a thread. The primary objective is to maintain a fluid conversation flow, ensuring the agent understands what "it" refers to when a user follows up on a previous statement.
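The idea of thread-scoped active context can be sketched in plain Python. This is not LangGraph's checkpointer API; the `ThreadBuffer` class and the window size are hypothetical, chosen only to show state keyed by a thread identifier with a bounded number of recent turns.

```python
from collections import deque

MAX_TURNS = 3  # assumed window size for the example


class ThreadBuffer:
    """Keeps the most recent turns of each conversation thread."""

    def __init__(self, max_turns: int = MAX_TURNS):
        self._max_turns = max_turns
        self._threads: dict[str, deque] = {}

    def append(self, thread_id: str, role: str, text: str) -> None:
        # Each thread gets its own bounded deque; old turns fall off the front.
        turns = self._threads.setdefault(thread_id, deque(maxlen=self._max_turns))
        turns.append((role, text))

    def context(self, thread_id: str) -> list[tuple[str, str]]:
        # Unknown threads simply have no context yet.
        return list(self._threads.get(thread_id, ()))


buffer = ThreadBuffer()
buffer.append("thread-a", "user", "The API gateway is timing out.")
buffer.append("thread-a", "agent", "Which region is affected?")
buffer.append("thread-a", "user", "Can you restart it?")
buffer.append("thread-b", "user", "Unrelated question.")

# The window for thread-a still holds the turn that "it" refers to.
window = buffer.context("thread-a")
print(window)
```

Because the window is bounded, a referent that scrolls out of it is lost, which is one reason short-term state is usually paired with the longer-lived memory types described next.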

Episodic Memory

Episodic memory focuses on specific events and their outcomes. It is a chronological log of interactions. If a user asks, "What did we decide about the API gateway yesterday?" the agent queries its episodic memory to find the specific event timestamped with that discussion. This provides traceability and allows the agent to learn from the success or failure of past sequences of actions.
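A minimal sketch of such a log, assuming each episode carries a timestamp, a topic, and an outcome, might look like the following. The `Episode` and `EpisodicLog` names and the sample entries are invented for illustration; a real system would likely back this with a database rather than a list.

```python
from dataclasses import dataclass
from datetime import date, datetime, timedelta


@dataclass(frozen=True)
class Episode:
    timestamp: datetime
    topic: str
    outcome: str


class EpisodicLog:
    """Chronological record of what happened and how it turned out."""

    def __init__(self) -> None:
        self._episodes: list[Episode] = []

    def record(self, episode: Episode) -> None:
        self._episodes.append(episode)
        self._episodes.sort(key=lambda e: e.timestamp)   # keep chronological order

    def on_day(self, day: date, topic: str) -> list[Episode]:
        # Match by calendar day and a case-insensitive topic substring.
        return [
            e for e in self._episodes
            if e.timestamp.date() == day and topic.lower() in e.topic.lower()
        ]


log = EpisodicLog()
log.record(Episode(datetime(2024, 5, 2, 9, 0), "CI pipeline", "switched to nightly builds"))
log.record(Episode(datetime(2024, 5, 1, 15, 30), "API gateway", "agreed to add rate limiting"))

today = date(2024, 5, 2)                      # fixed date so the example is repeatable
yesterday = today - timedelta(days=1)
hits = log.on_day(yesterday, "api gateway")   # "What did we decide yesterday?"
print(hits[0].outcome)
```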

Semantic Memory

Semantic memory represents the storage of facts, rules, and general concepts that remain stable over time. Unlike episodic memory, which is a record of when something happened, semantic memory is a record of what is true. Examples include a user’s coding preferences, corporate compliance policies, or technical documentation. This information is typically retrieved via similarity searches in vector databases.
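To make the similarity-search step concrete, here is a toy version that uses word-count vectors and cosine similarity instead of learned embeddings and a vector database. The `embed`, `cosine`, and `search` helpers and the three sample facts are assumptions for the example; production systems would typically use an embedding model and an approximate nearest-neighbour index.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


FACTS = [
    "The user prefers Python with type hints",
    "Deployments to the billing service are prohibited on weekends",
    "All API keys must be rotated every 90 days",
]


def search(query: str, facts: list[str], k: int = 1) -> list[str]:
    """Return the k stored facts most similar to the query."""
    q = embed(query)
    ranked = sorted(facts, key=lambda f: cosine(q, embed(f)), reverse=True)
    return ranked[:k]


top = search("Can I run deployments on weekends?", FACTS)
print(top)
```

Word overlap is a crude proxy for meaning; it is used here only so the ranking logic is visible without external dependencies.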

Long-Term Memory

Long-term memory acts as the overarching repository that persists across sessions for every user the agent serves. It bridges the gap between episodic events and semantic facts, ensuring that once an agent learns a piece of information, it remains accessible indefinitely unless specifically purged. This is the cornerstone of personalization in modern AI applications.
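Persistence across sessions, plus an explicit purge, can be sketched with Python's built-in `sqlite3` module. The table layout, the function names, and the temporary database path are assumptions for the example; the two connections stand in for two separate agent sessions.

```python
import os
import sqlite3
import tempfile


def open_store(path: str) -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memory ("
        "user_id TEXT, key TEXT, value TEXT, PRIMARY KEY (user_id, key))"
    )
    return conn


def remember(conn: sqlite3.Connection, user_id: str, key: str, value: str) -> None:
    conn.execute("INSERT OR REPLACE INTO memory VALUES (?, ?, ?)", (user_id, key, value))
    conn.commit()


def recall(conn: sqlite3.Connection, user_id: str, key: str):
    row = conn.execute(
        "SELECT value FROM memory WHERE user_id = ? AND key = ?", (user_id, key)
    ).fetchone()
    return row[0] if row else None


def purge(conn: sqlite3.Connection, user_id: str) -> None:
    conn.execute("DELETE FROM memory WHERE user_id = ?", (user_id,))
    conn.commit()


db_path = os.path.join(tempfile.mkdtemp(), "agent_memory.db")

session_one = open_store(db_path)             # first session learns a preference
remember(session_one, "user-1", "editor", "vim")
session_one.close()

session_two = open_store(db_path)             # a later session still sees it
recalled = recall(session_two, "user-1", "editor")
purge(session_two, "user-1")                  # until it is explicitly purged
after_purge = recall(session_two, "user-1", "editor")
session_two.close()
print(recalled, after_purge)
```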

A Chronology of Memory Integration in AI Development

The journey toward stateful AI has evolved rapidly over the last decade. Understanding this timeline provides context for the current complexity of agent memory patterns.

- 2014–2017: The Era of Recurrent Neural Networks (RNNs). Sequence models of this period relied on RNNs, most notably LSTMs (Long Short-Term Memory networks). While they had a form of internal memory, it was limited and prone to "forgetting" long-range dependencies.

- 2018–2022: The Transformer Revolution. The Transformer architecture, introduced in 2017, and its attention mechanism allowed models to attend to all parts of an input simultaneously. However, these models remained stateless; memory was limited to what could fit in the prompt.

- 2023: The Rise of RAG. Retrieval-Augmented Generation (RAG) became the industry standard for giving AI access to external "memory" by fetching documents from a database.

- 2024–Present: The Shift to Agentic Memory. Developers began moving beyond simple document retrieval toward "Agentic Memory," where the agent actively decides what to write to its own memory and how to update its internal knowledge graph based on its experiences.

Technical Frameworks: Implementing Memory with LangGraph

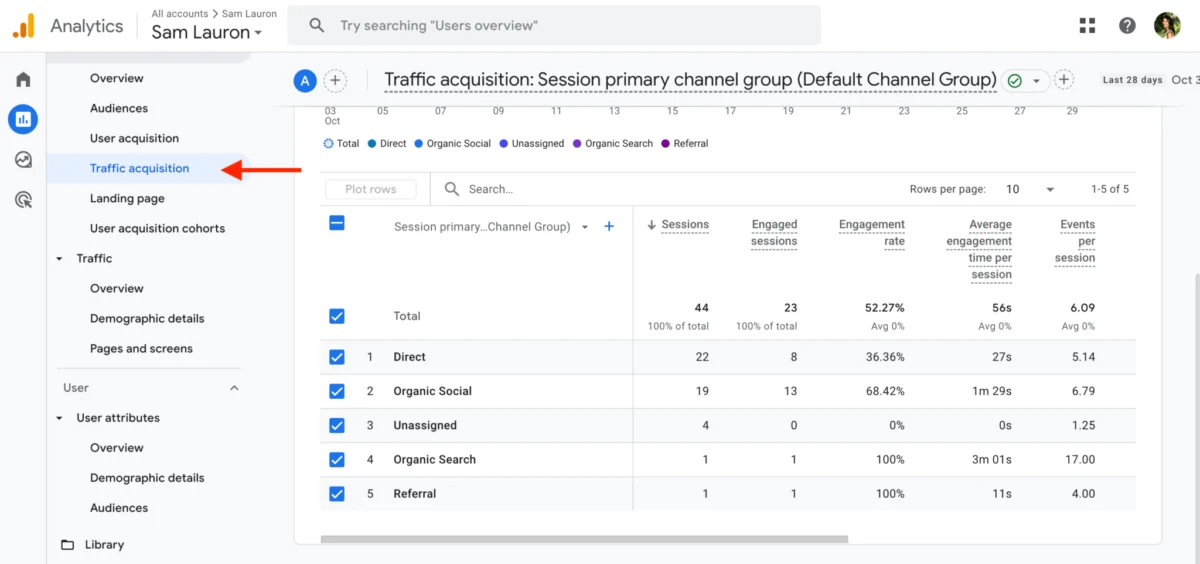

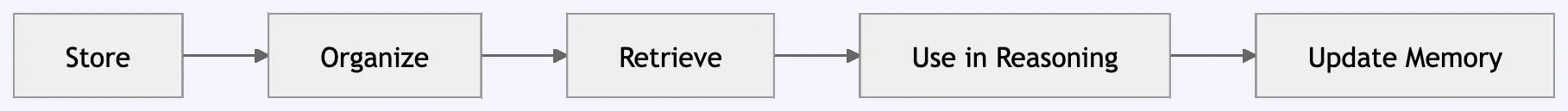

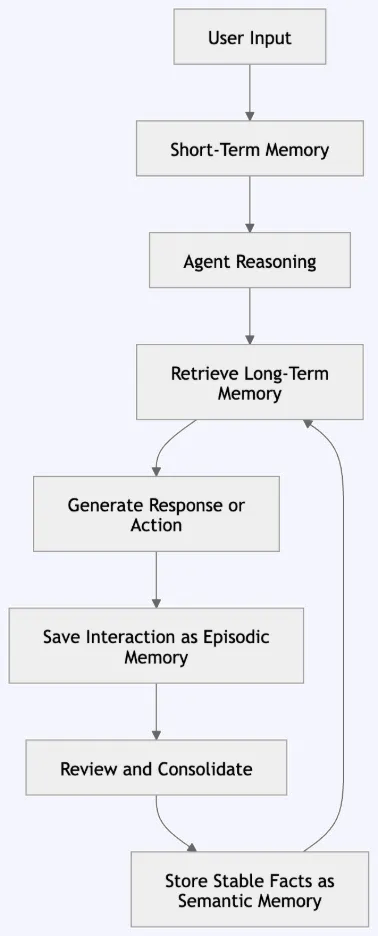

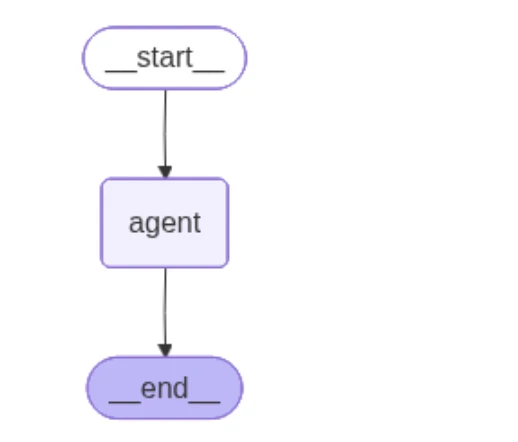

Current industry standards for building these systems often involve frameworks like LangGraph, which allow for the creation of cyclic graphs representing agent workflows. A typical memory pipeline in these systems follows a structured data flow:

- Input Reception: The user submits a query.

- Short-Term Retrieval: The agent identifies the current thread state.

- Memory Search: The system performs a similarity search in a vector store (Semantic Memory) and a lookup in a historical log (Episodic Memory).

- Reasoning: The LLM synthesizes the user input with the retrieved memories.

- Execution and Response: The agent acts or replies.

- Memory Writing: The interaction is logged as an episode, and any new facts are extracted and saved as semantic updates.

In practice, this requires a dual-storage strategy. Short-term state is often kept in a low-latency store, either an in-memory database such as Redis or a PostgreSQL-backed checkpointer when durability matters more than raw speed. Semantic facts are stored in vector stores such as Chroma, FAISS, or Pinecone, which support high-dimensional similarity searches.
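The six-step data flow above can be traced in a framework-free sketch. This is not LangGraph code: `AgentMemory`, `run_turn`, the "note:" fact extractor, the keyword-overlap search, and `fake_llm` are all hypothetical stand-ins, with plain Python containers playing the role of the checkpointer, the vector store, and the episode log.

```python
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    threads: dict = field(default_factory=dict)    # short-term: thread_id -> turns
    facts: list = field(default_factory=list)      # semantic memory
    episodes: list = field(default_factory=list)   # episodic memory


def fake_llm(prompt: str) -> str:
    """Stand-in for a model call; reports how much context it received."""
    return f"model saw {len(prompt.splitlines())} line(s)"


def run_turn(memory: AgentMemory, thread_id: str, user_input: str) -> str:
    # 1. Input reception: user_input arrives as an argument.
    # 2. Short-term retrieval: load the state of the current thread.
    history = memory.threads.setdefault(thread_id, [])
    # 3. Memory search: keyword overlap stands in for vector similarity.
    words = set(user_input.lower().split())
    facts = [f for f in memory.facts if words & set(f.lower().split())]
    past = [
        f"earlier: {e['input']} -> {e['reply']}"
        for e in memory.episodes
        if e["thread_id"] != thread_id and words & set(e["input"].lower().split())
    ]
    # 4. Reasoning: combine retrieved memories with the new input.
    prompt = "\n".join(facts + past + history + [user_input])
    # 5. Execution and response.
    reply = fake_llm(prompt)
    # 6. Memory writing: log the episode, then extract any new fact.
    history.append(user_input)
    memory.episodes.append({"thread_id": thread_id, "input": user_input, "reply": reply})
    if user_input.lower().startswith("note:"):
        memory.facts.append(user_input.split(":", 1)[1].strip())
    return reply


memory = AgentMemory()
first = run_turn(memory, "t1", "Note: deploys are frozen on weekends")
second = run_turn(memory, "t2", "Can I run deploys today?")
print(first, "|", second)
```

In the second turn the prompt grows from one line to three: the stored fact and the earlier episode from another thread are both retrieved and placed ahead of the new question.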

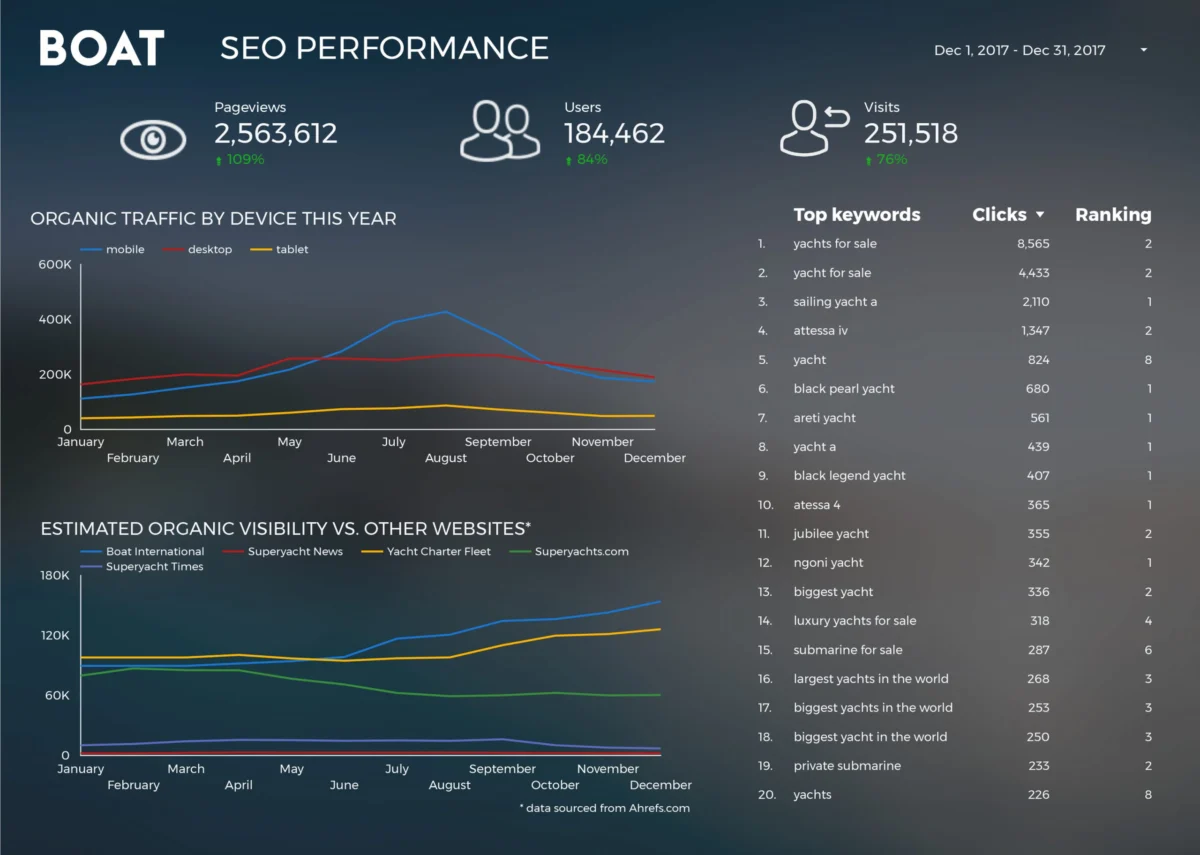

Industry Perspectives and Supporting Data

Market analysts at firms like Gartner and Forrester have noted that "memory-augmented agents" are becoming a key differentiator in the SaaS sector. According to recent developer surveys, nearly 65% of engineers working on production-level AI agents cite "context management" and "memory persistence" as their primary technical challenges.

"The challenge is no longer just getting the model to understand the prompt," says one senior AI architect from a leading cloud provider. "The challenge is ensuring the agent has the right ‘mental model’ of the user’s history without hallucinating past events. We are essentially building a digital subconscious for these models."

Supporting data suggests that agents equipped with episodic and semantic memory layers show a 40% improvement in task completion rates for complex, multi-day workflows compared to stateless models. This is primarily because these agents do not require the user to repeat instructions or re-upload context, significantly reducing "prompt fatigue."

Security, Privacy, and Governance in AI Memory

As agents gain the ability to remember, they also gain the ability to store sensitive information, leading to significant concerns regarding data governance and privacy. Memory systems must be designed with strict "multi-tenancy" in mind. A cross-tenant leak, where User A’s data is retrieved to answer User B’s query, is a catastrophic failure in an enterprise environment.

The industry is currently coalescing around several "Golden Rules" for safe agent memory:

- Strict User Separation: Memory must be partitioned by unique user IDs and organization IDs within the storage namespace.

- Selective Retention: Agents should be programmed to ignore sensitive strings, such as passwords, API keys, or personally identifiable information (PII), during the "Memory Writing" phase.

- The Right to Erasure: In compliance with GDPR and CCPA, memory systems must support granular deletion, allowing users to wipe their "episodic" or "semantic" history without breaking the agent’s core functionality.

- Memory Validation: Critical facts saved to semantic memory should undergo a validation step (either human-in-the-loop verification or cross-referencing with a "source of truth") to prevent "memory poisoning," where an agent learns and repeats a mistake.
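Three of the rules above (user separation, selective retention, and erasure) can be combined in a short sketch. The `TenantMemory` class and the `SENSITIVE` pattern are assumptions made for the example; in particular, the regular expression is deliberately simple and would miss most real-world secrets and PII.

```python
import re
from collections import defaultdict

# Assumed pattern for the example; real detectors need to be far more thorough.
SENSITIVE = re.compile(r"(password|api[_ -]?key|secret)\s*[:=]\s*\S+", re.IGNORECASE)


class TenantMemory:
    """Memory partitioned by (org_id, user_id), with write filtering and erasure."""

    def __init__(self) -> None:
        self._data = defaultdict(list)   # (org_id, user_id) -> list of notes

    def write(self, org_id: str, user_id: str, text: str) -> bool:
        # Selective retention: refuse to store text that looks like a secret.
        if SENSITIVE.search(text):
            return False
        self._data[(org_id, user_id)].append(text)
        return True

    def read(self, org_id: str, user_id: str) -> list[str]:
        # Strict separation: a lookup only ever touches the caller's namespace.
        return list(self._data.get((org_id, user_id), []))

    def erase(self, org_id: str, user_id: str) -> int:
        # Right to erasure: drop one user's namespace, return how many notes went.
        return len(self._data.pop((org_id, user_id), []))


mem = TenantMemory()
mem.write("acme", "alice", "Prefers weekly status reports")
stored = mem.write("acme", "alice", "my password: hunter2")   # rejected
mem.write("acme", "bob", "Owns the billing service")

alice_view = mem.read("acme", "alice")
removed = mem.erase("acme", "alice")
print(stored, alice_view, removed, mem.read("acme", "bob"))
```

Erasing one user's namespace leaves every other namespace untouched, which is the property that lets deletion happen without breaking the agent for anyone else.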

Broader Impact and Future Implications

The implications of advanced AI memory extend far beyond simple chatbots. In the field of autonomous software engineering, agents with memory can track the evolution of a codebase, remembering why a specific architectural decision was made months prior. In healthcare, an AI assistant could maintain a long-term episodic record of a patient’s symptoms and treatments, offering insights that a human practitioner might miss over years of disparate appointments.

However, the cost of memory remains a factor. Storing and embedding millions of "episodes" requires significant computational resources. As the volume of data grows, the industry will likely move toward "Memory Summarization" techniques, where an agent periodically compresses its episodic history into a set of core semantic facts, discarding the raw logs while retaining the learned wisdom.
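One way to picture this kind of compression is a function that folds older episodes into compact facts and keeps only the newest raw entries. The sketch below uses simple counting; a real system would more plausibly ask a model to write the summary. The `summarize` function, the fact format, and the sample episodes are all hypothetical.

```python
from collections import Counter


def summarize(episodes: list[dict], keep_recent: int = 2) -> tuple[list[str], list[dict]]:
    """Fold all but the newest `keep_recent` episodes into per-topic facts."""
    split = max(len(episodes) - keep_recent, 0)
    old, recent = episodes[:split], episodes[split:]
    # Count how often each (topic, outcome) pair occurred in the old episodes.
    tallies = Counter((e["topic"], e["outcome"]) for e in old)
    facts = [f"{topic}: {outcome} x{n}" for (topic, outcome), n in sorted(tallies.items())]
    return facts, recent


episodes = [
    {"topic": "deploy", "outcome": "failed"},
    {"topic": "deploy", "outcome": "failed"},
    {"topic": "deploy", "outcome": "succeeded"},
    {"topic": "backup", "outcome": "succeeded"},
]

facts, remaining = summarize(episodes, keep_recent=1)
print(facts)       # compact semantic facts derived from the old episodes
print(remaining)   # raw episodes still kept verbatim
```

The trade-off is that the raw detail of the discarded episodes cannot be recovered afterwards, so the cutoff and the summary format need to be chosen with later questions in mind.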

In conclusion, agent memory is the bridge between a static tool and a dynamic collaborator. By effectively integrating short-term, episodic, semantic, and long-term layers, developers are creating systems that do not just process data, but actually "learn" from their environment. As these architectures mature, the focus will shift from the mere capacity to remember to the sophisticated ability to forget the irrelevant, ensuring that AI remains a sharp, focused, and reliable extension of human intent.