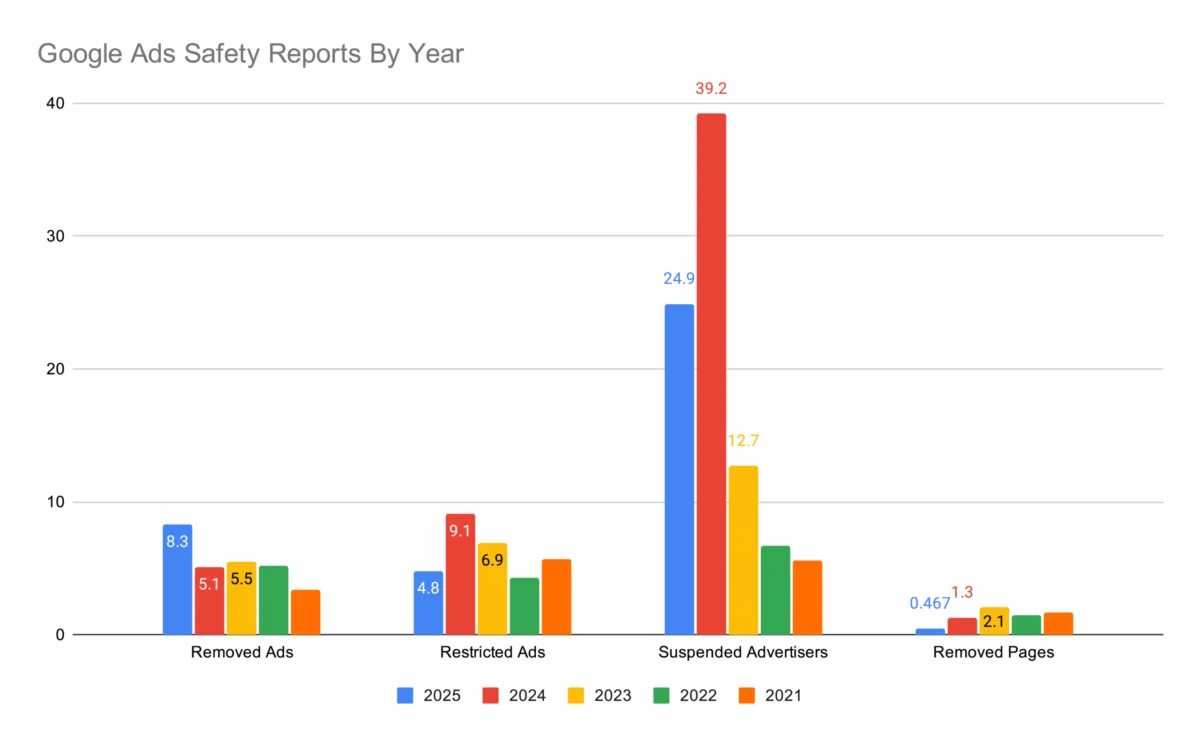

Mountain View, California – Google has released its annual Ads Safety Report for 2025, detailing an unprecedented surge in its enforcement actions against malicious and policy-violating advertisements. The report highlights a staggering 8.3 billion ads removed from its platforms, marking a substantial increase of nearly 63% compared to the 5.1 billion ads removed in the previous year. A critical aspect of this year’s findings is Google’s assertion that 99% of these problematic ads were detected and blocked before they ever reached a user, a feat attributed largely to advancements in artificial intelligence, particularly the integration of its Gemini AI model. This robust pre-serving removal rate underscores an intensified commitment to user safety and platform integrity in the rapidly evolving landscape of digital advertising.

The annual safety report serves as a transparent account of Google’s efforts to combat abuse across its vast advertising network, which includes Search, YouTube, Display Network, and various other properties. The substantial leap in removed ads from 5.1 billion in the preceding report to 8.3 billion in the current cycle reflects not only an escalation in malicious activity but also Google’s enhanced capabilities in identifying and neutralizing such threats. This continuous cat-and-mouse game between platform security teams and bad actors necessitates constant innovation, and the 2025 report clearly positions AI as a frontline defense mechanism.
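The headline figures can be checked with simple arithmetic. The sketch below uses only the numbers quoted above, and the variable and function names are illustrative. The count of ads removed after serving is an inference, not a figure from the report: it assumes the 99% pre-serving rate applies uniformly to the 8.3 billion total.

```python
# Figures as quoted in the article, in billions of ads removed.
removed_prev = 5.1       # previous report
removed_2025 = 8.3       # 2025 report
pre_serving_rate = 0.99  # share blocked before reaching a user


def pct_increase(old: float, new: float) -> float:
    """Return the percentage increase from old to new."""
    return (new - old) / old * 100


increase = pct_increase(removed_prev, removed_2025)

# Assumption: the 99% rate applies to the full 8.3 billion total.
after_serving_millions = removed_2025 * (1 - pre_serving_rate) * 1000

print(f"Year-over-year increase: {increase:.1f}%")
print(f"Implied removals after serving: {after_serving_millions:.0f} million")
```

Under that assumption, a 99% pre-serving rate would still leave roughly 83 million ads that were removed only after some users could have seen them.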

The Escalating Scale of Ad Abuse and AI’s Pivotal Role

The year-over-year increase of approximately 63% in ad removals is a stark indicator of the dynamic and challenging environment Google navigates. This growth signifies a continuous adaptation by malicious advertisers, who relentlessly develop new tactics to circumvent detection systems. These tactics range from sophisticated cloaking techniques that present legitimate content to reviewers but malicious content to users, to rapidly deploying new campaigns designed to exploit emerging trends or vulnerabilities. The sheer volume of ads processed daily across Google’s ecosystem demands automated solutions that can operate at scale and speed.

Enter Gemini, Google’s advanced AI model, which is credited with playing a pivotal role in achieving the 99% pre-serving removal rate. Traditional ad review processes, which relied heavily on human moderation and rule-based systems, struggled to keep pace with the volume and sophistication of modern ad fraud. AI-driven systems like Gemini can analyze vast datasets of ad creatives, landing pages, advertiser behavior, and user interactions in real time. They are trained to identify subtle patterns, anomalies, and contextual clues that indicate policy violations, even in ads designed to evade detection. This predictive capability allows the system to flag and remove ads before they can inflict harm, safeguarding users from scams, malware, inappropriate content, and misinformation. The shift towards AI-first detection signifies a major strategic pivot, enabling Google to move from reactive moderation to proactive prevention.

Beyond Ad Removals: A Multifaceted Enforcement Strategy

While the removal of 8.3 billion ads is a headline figure, Google’s safety report details a much broader, multi-pronged approach to maintaining a clean advertising ecosystem. The company’s enforcement actions extend far beyond individual ad units, targeting the sources of abuse across its network.

In 2025, Google suspended approximately 25 million advertiser accounts. This measure is crucial for disrupting persistent bad actors who might attempt to launch numerous campaigns or create new accounts after previous violations. By suspending the advertiser account itself, Google aims to cut off the supply of malicious ads at its root, preventing repeat offenses and sending a strong signal to those contemplating policy breaches. This strategy helps to foster a more accountable environment for legitimate advertisers.

Furthermore, Google restricted 4.8 billion ads, indicating a nuanced approach in which some ads are not removed outright but are limited in their reach or targeting due to minor policy infringements or content sensitivity. This allows more granular control over ad exposure. The report also notes that 480 million web pages were blocked or restricted from showing ads. This action targets the landing pages and content hosted by advertisers, ensuring that even if an ad slips through, the destination content adheres to Google’s policies. This prevents advertisers from using compliant ads to drive traffic to illicit or harmful websites.

Complementing these actions, Google took enforcement actions against 245,000 publisher sites. Publishers host Google ads on their properties, and if their content violates policies (e.g., hate speech, misinformation, copyrighted material), Google can restrict their ability to monetize through its ad network. This puts pressure on publishers to maintain high content standards, thereby protecting advertisers from appearing alongside unsuitable content and protecting users from harmful information.

To keep pace with the evolving tactics of bad actors and emerging digital threats, Google made over 35 policy updates in 2025. These updates are a critical component of Google’s proactive defense. As new forms of fraud, scams, and objectionable content emerge (e.g., AI-generated misinformation, new types of financial scams, or novel deceptive health claims), Google must continually refine its policies to address these specific challenges. These updates ensure that the platform’s rules remain relevant and effective, providing clear guidelines for advertisers and enabling Google’s automated systems to adapt to new forms of abuse.

Contextualizing the Threat Landscape: A Global Challenge

The challenges highlighted in Google’s report are not isolated to a single region or type of content; they represent a global and pervasive threat to the integrity of the internet. Online ad fraud is a multibillion-dollar industry, with sophisticated criminal enterprises actively seeking to exploit digital advertising platforms for financial gain, data theft, or the spread of propaganda. These bad actors operate across borders, leveraging advanced technologies to launch coordinated attacks.

Common categories of violations targeted by Google’s safety measures include:

- Deceptive Practices: Misleading claims, phishing scams designed to steal personal information, fake product promotions, and "get-rich-quick" schemes.

- Dangerous Products and Services: Ads for illegal drugs, unapproved pharmaceuticals, weapons, or other items that pose a direct threat to safety.

- Counterfeits and Trademark Infringement: Ads selling fake versions of branded goods or infringing on intellectual property.

- Inappropriate Content: Ads promoting hate speech, discriminatory practices, violence, or sexually explicit material.

- Malware and Unwanted Software: Ads that lead to sites distributing viruses, ransomware, or unwanted browser extensions.

- Misinformation: Ads promoting false or misleading information, particularly concerning sensitive topics like health, politics, or public safety.

The economic stakes are immense. Ad fraud not only costs advertisers billions in wasted spend but also erodes user trust in legitimate advertising and online content. For Google, maintaining a safe environment is paramount to protecting its revenue streams, preserving its reputation as a reliable advertising partner, and ensuring the long-term viability of its ecosystem.

Google’s Proactive Defense Mechanisms: The Human-AI Synergy

While AI, spearheaded by Gemini, is at the forefront of Google’s ad safety efforts, the company’s approach is best described as a human-AI synergy. Machine learning models excel at identifying patterns, scaling detection, and operating with speed. They can process billions of data points in moments, far exceeding human capabilities. However, AI still requires human oversight and input.

Human policy experts are crucial for defining the nuanced rules that AI systems learn from, interpreting complex cases where context is vital, and adapting policies as new threats emerge. These teams analyze trends in abuse, provide feedback to improve AI models, and handle appeals or cases that require subjective judgment. The constant feedback loop between human reviewers and AI systems is what drives continuous improvement in Google’s detection capabilities. This collaborative model ensures that while automation handles the vast majority of routine violations, human intelligence guides the strategic direction and handles the most challenging ethical and policy dilemmas.

Google also invests in sophisticated threat intelligence operations, working to understand the motivations and methods of bad actors. This includes tracking botnets, identifying fraudulent networks, and collaborating with industry partners and law enforcement agencies when appropriate. This intelligence-gathering helps to anticipate future threats and build more robust defenses.

Implications for the Digital Advertising Ecosystem

The findings of Google’s 2025 Ads Safety Report carry significant implications for various stakeholders within the digital advertising ecosystem.

- For Advertisers: A cleaner ad environment means legitimate businesses can operate with greater confidence. Reduced competition from fraudulent ads can lead to better campaign performance and more accurate attribution of marketing spend. However, it also means advertisers must be meticulously compliant with Google’s evolving policies. Businesses that adhere to best practices will thrive, while those attempting shortcuts or deceptive tactics face swift and decisive enforcement. The emphasis on pre-serving removal also means that advertisers must ensure their campaigns are fully compliant before launch, as the window for detection has become incredibly narrow.

- For Users: The primary beneficiaries are internet users. Fewer malicious ads translate to a safer, less frustrating online experience. Users are less likely to encounter scams, phishing attempts, malware, or inappropriate content, which helps rebuild trust in online advertising and content platforms. This improved trust can lead to greater engagement with legitimate ads and a more positive overall perception of Google’s services.

- For Google: The report reinforces Google’s position as a leader in online safety and content moderation. By proactively tackling ad abuse, Google protects its brand reputation, maintains the integrity of its platforms, and safeguards its substantial advertising revenue, which forms the bedrock of its business model. Demonstrating a strong commitment to safety is also critical for navigating increasing regulatory scrutiny globally regarding online content and data privacy.

Challenges and the Road Ahead

Despite the impressive figures, the battle against ad abuse remains an ongoing "arms race." Bad actors are continuously innovating, leveraging new technologies like advanced AI to create more convincing deepfakes, sophisticated cloaking techniques, and highly personalized scams. The challenge for Google and other platforms is to stay one step ahead, continually evolving their detection methods and policy frameworks.

Emerging threats, such as AI-generated misinformation and highly personalized deceptive content, will undoubtedly test the limits of current detection systems. The sheer volume of content and ads generated globally makes comprehensive human review impossible, placing an even greater reliance on advanced AI. Google will need to ensure its AI models are not only effective at identifying existing threats but also capable of adapting to entirely new forms of abuse without inadvertently suppressing legitimate advertising.

The balance between robust enforcement and supporting legitimate business remains a delicate act. Overly aggressive systems could inadvertently flag compliant ads, causing frustration for advertisers. Therefore, continuous refinement of AI models and policy interpretation is essential.

In conclusion, Google’s 2025 Ads Safety Report paints a clear picture of an escalating struggle against online ad abuse, met with an equally escalating response from the tech giant. The removal of 8.3 billion ads, particularly the 99% blocked before serving, showcases the transformative power of AI in enhancing platform safety. As the digital landscape continues to evolve, Google’s commitment to continuous innovation in policy, technology, and human expertise will be critical in shaping a safer and more trustworthy environment for advertisers and users worldwide.