Most machine learning projects do not fail because of the model they chose. They stall in the "messy middle": curating a dataset, verifying that it is usable, writing the training script, reading the logs, and packaging the model. As the industry moves toward more integrated development environments, a new open-source assistant called ML Intern has emerged to address these engineering bottlenecks. Built to operate within the Hugging Face ecosystem, ML Intern behaves less like a traditional black-box AutoML tool and more like a collaborative junior engineer that can work through the full research-to-deployment lifecycle.

The Landscape of Modern Machine Learning Engineering

In the current artificial intelligence climate, the barrier to model selection has dropped significantly thanks to the proliferation of pre-trained transformers. However, the operational overhead of fine-tuning these models for specific industrial tasks remains high. A widely cited estimate holds that data scientists spend as much as 80% of their time on data preparation and troubleshooting rather than on architectural innovation.

ML Intern enters this landscape as an agentic assistant. Unlike traditional AutoML, which focuses primarily on hyperparameter tuning and model ranking, ML Intern is designed to handle documentation review, paper analysis, dataset inspection, and the actual execution of jobs on cloud compute. By integrating directly with the Hugging Face Hub, it bridges the gap between raw data and a functioning, shared model artifact.

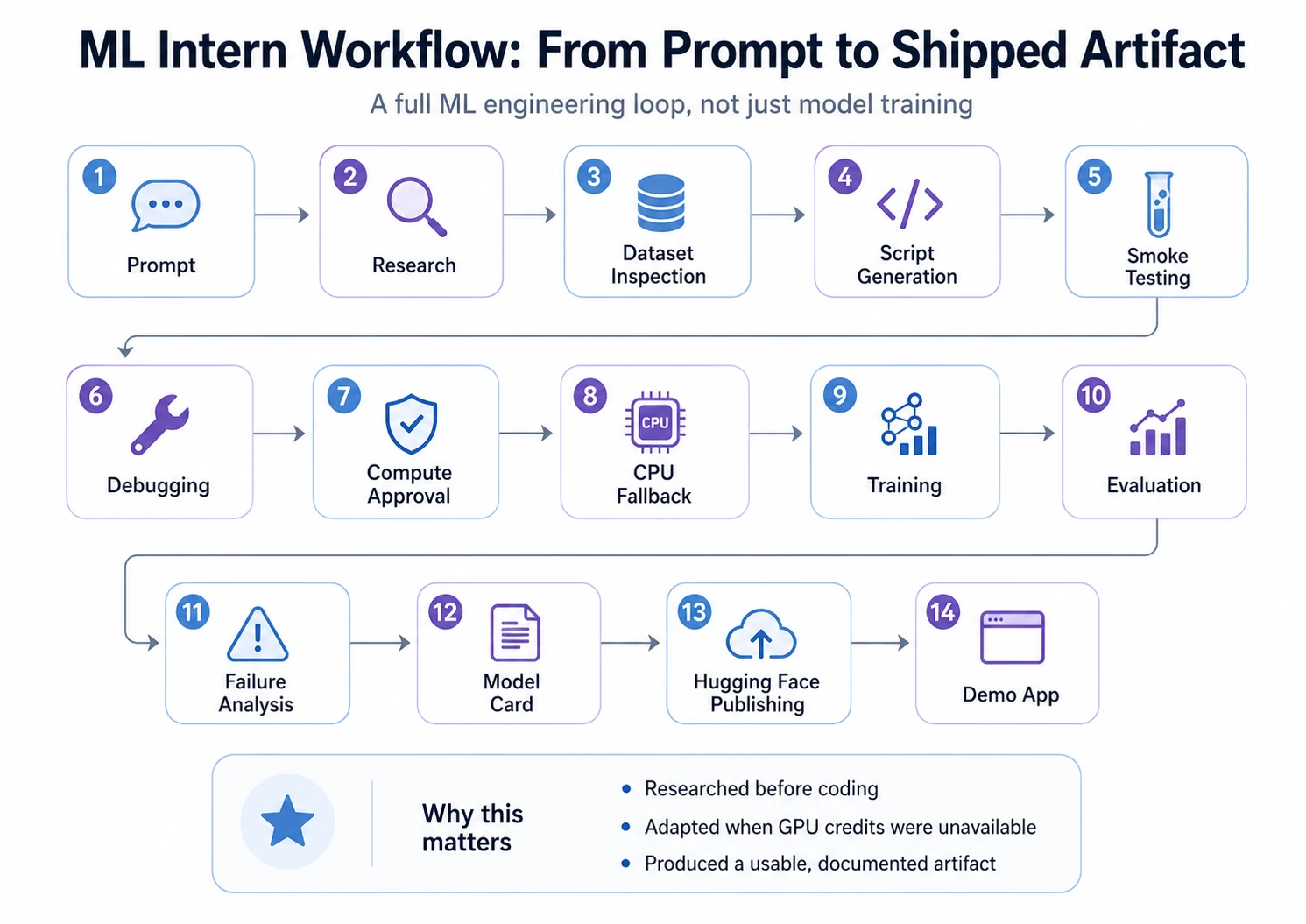

Chronology of a Full-Cycle ML Project: From Concept to Gradio

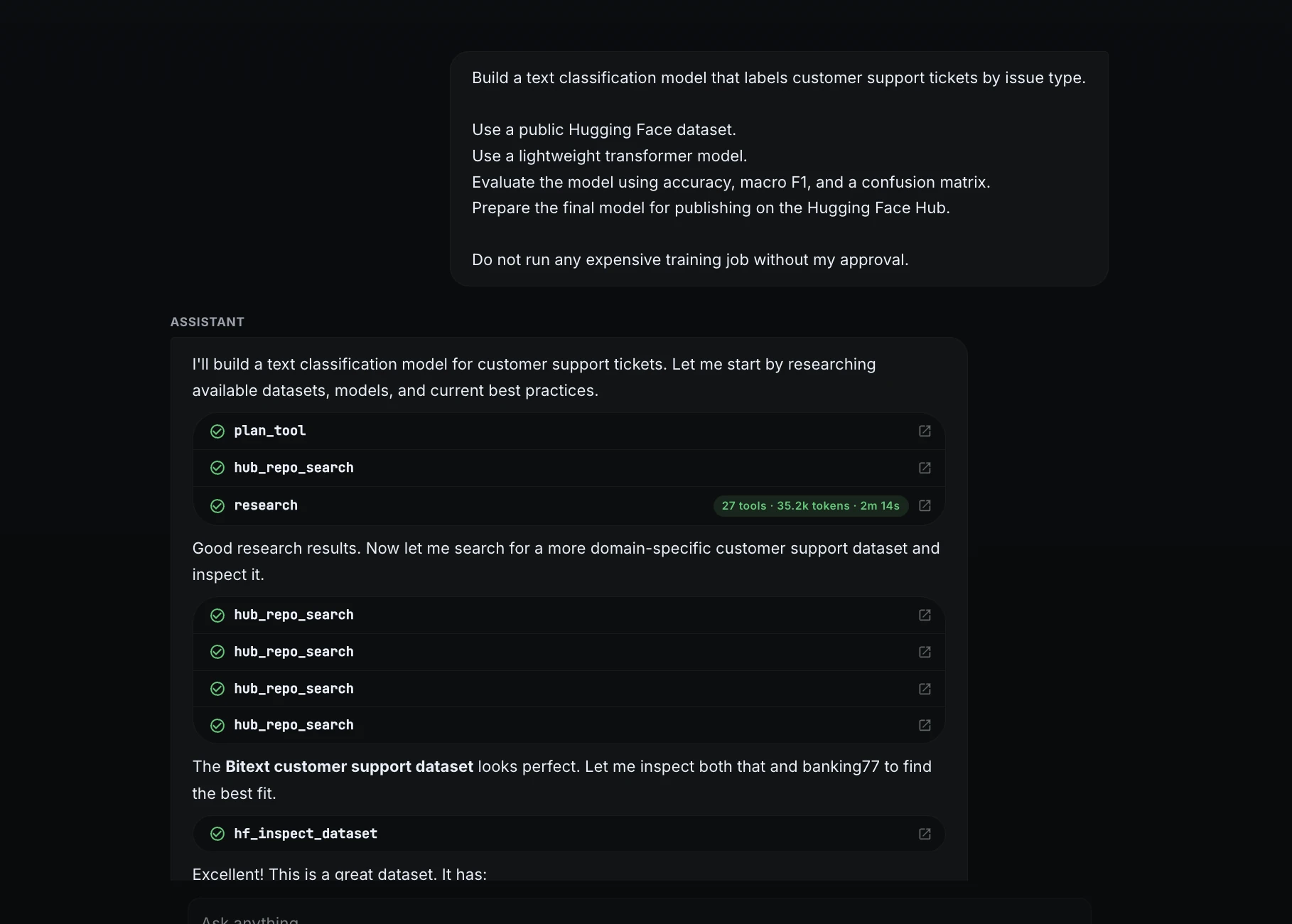

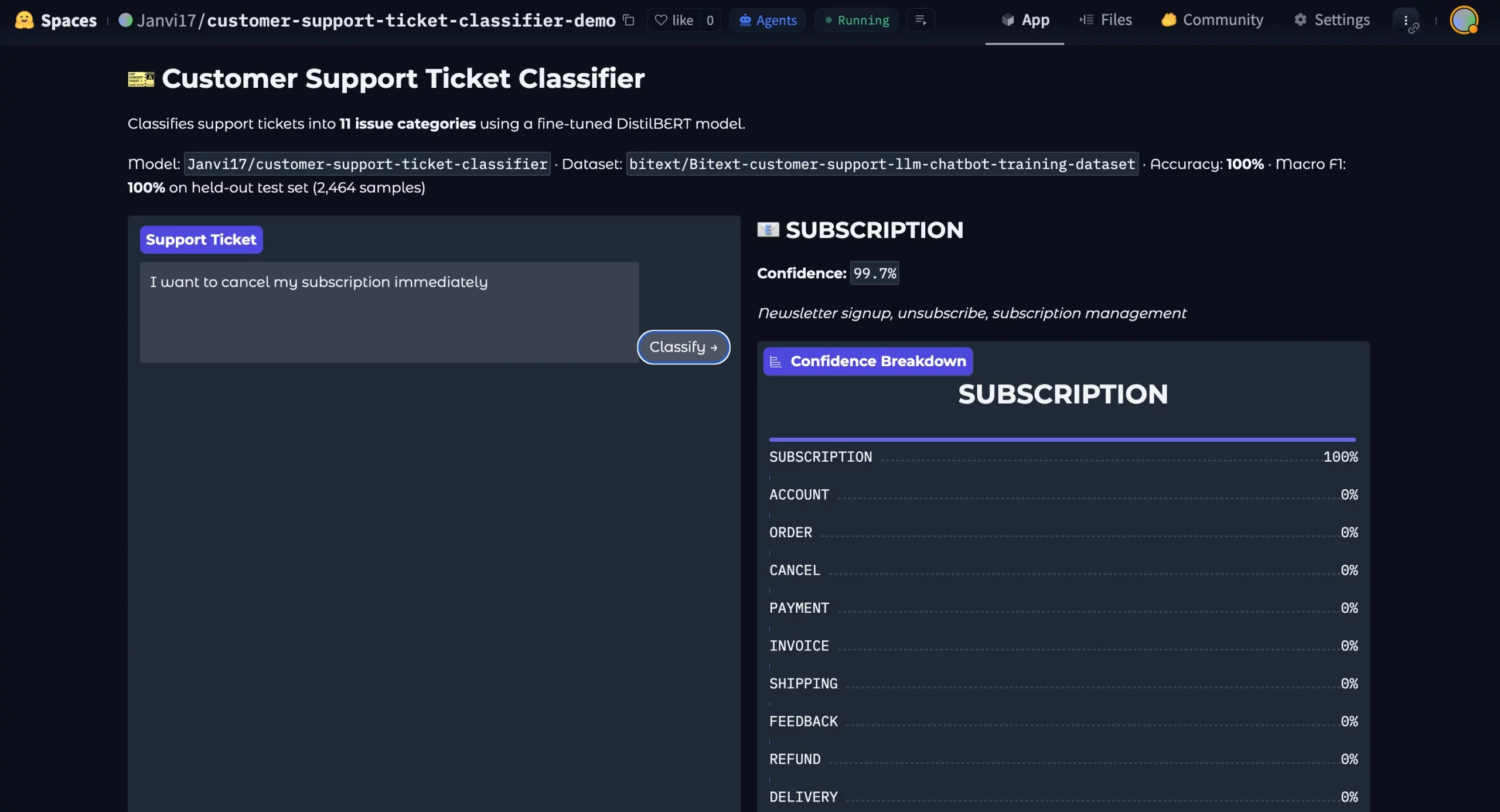

To evaluate the efficacy of ML Intern, a comprehensive text classification project was executed, simulating a real-world customer support automation task. The objective was to transform a high-level request—classifying customer support tickets—into a production-ready model hosted on the Hugging Face Hub.

Phase 1: Strategic Research and Data Selection

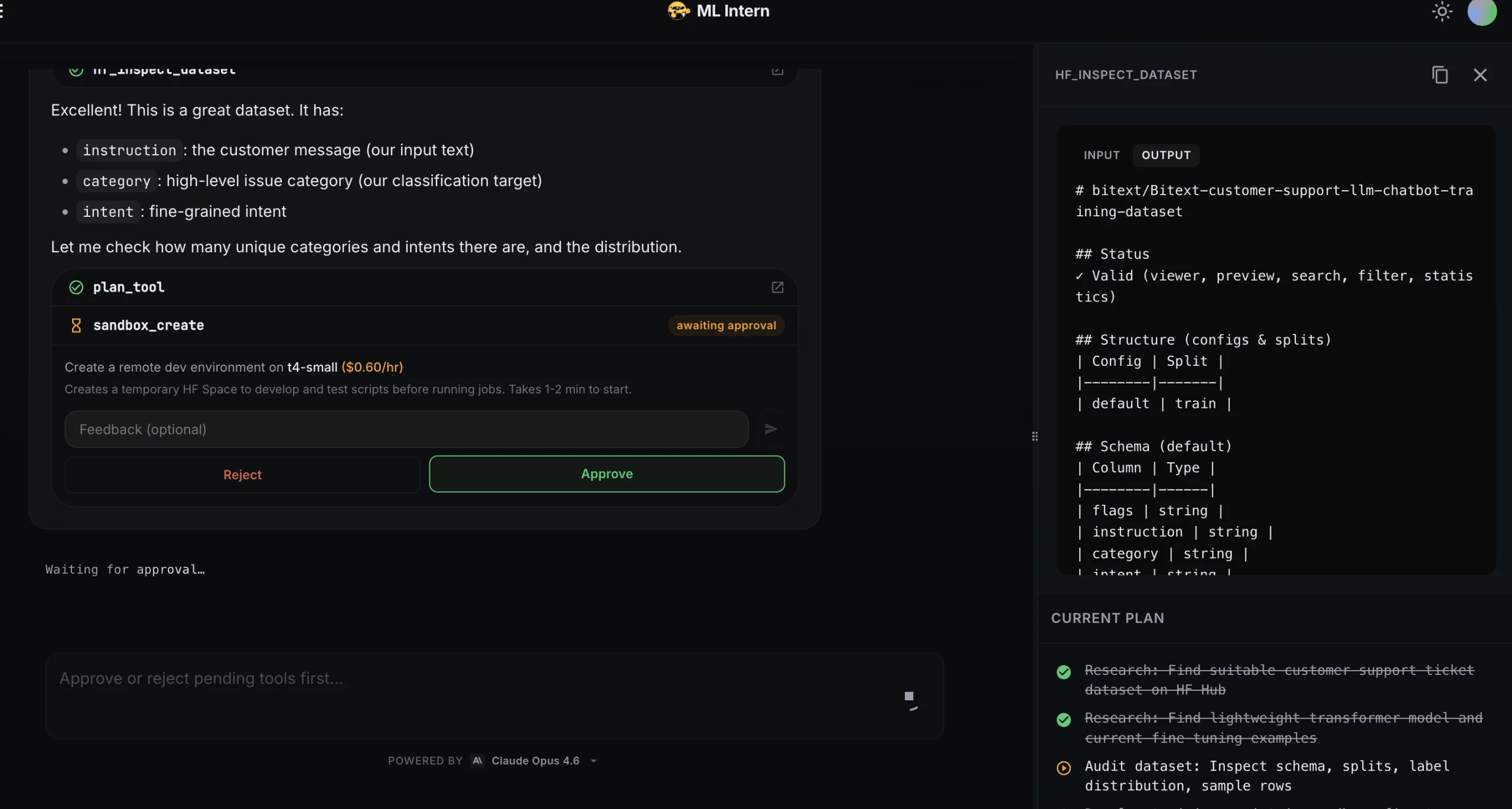

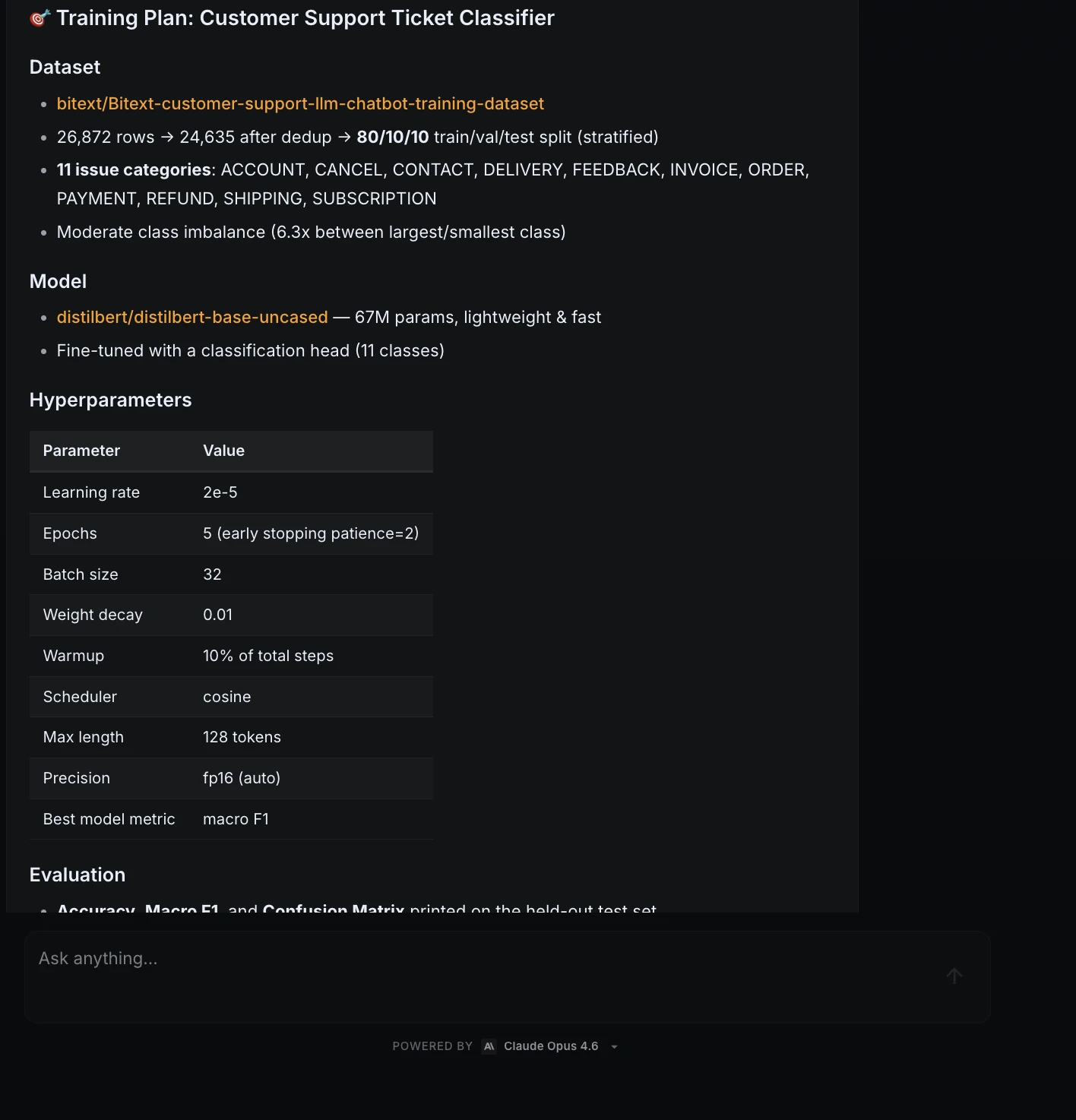

The project began with a structured prompt defining the scope: use a public dataset, utilize a lightweight transformer, evaluate with specific metrics (Accuracy, Macro F1, and a Confusion Matrix), and prepare for final publishing.
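Two of the requested metrics, accuracy and the confusion matrix, are simple enough to show directly. The sketch below is a plain-Python illustration that assumes integer class ids 0..K-1; it is not ML Intern's evaluation code, and in practice a library such as scikit-learn would usually compute these.

```python
from typing import List, Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the reference label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if len(y_true) == 0:
        raise ValueError("cannot compute accuracy on an empty set")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def confusion_matrix(y_true: Sequence[int], y_pred: Sequence[int], num_classes: int) -> List[List[int]]:
    """Count matrix where row = true class id and column = predicted class id."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix


# Toy example with 3 classes (ids are illustrative, not from the article's data)
y_true = [0, 1, 2, 2]
y_pred = [0, 2, 2, 2]
print(accuracy(y_true, y_pred))             # 0.75
print(confusion_matrix(y_true, y_pred, 3))  # [[1, 0, 0], [0, 0, 1], [0, 0, 2]]
```

Off-diagonal cells show which classes the model confuses; here one class-1 ticket was predicted as class 2.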

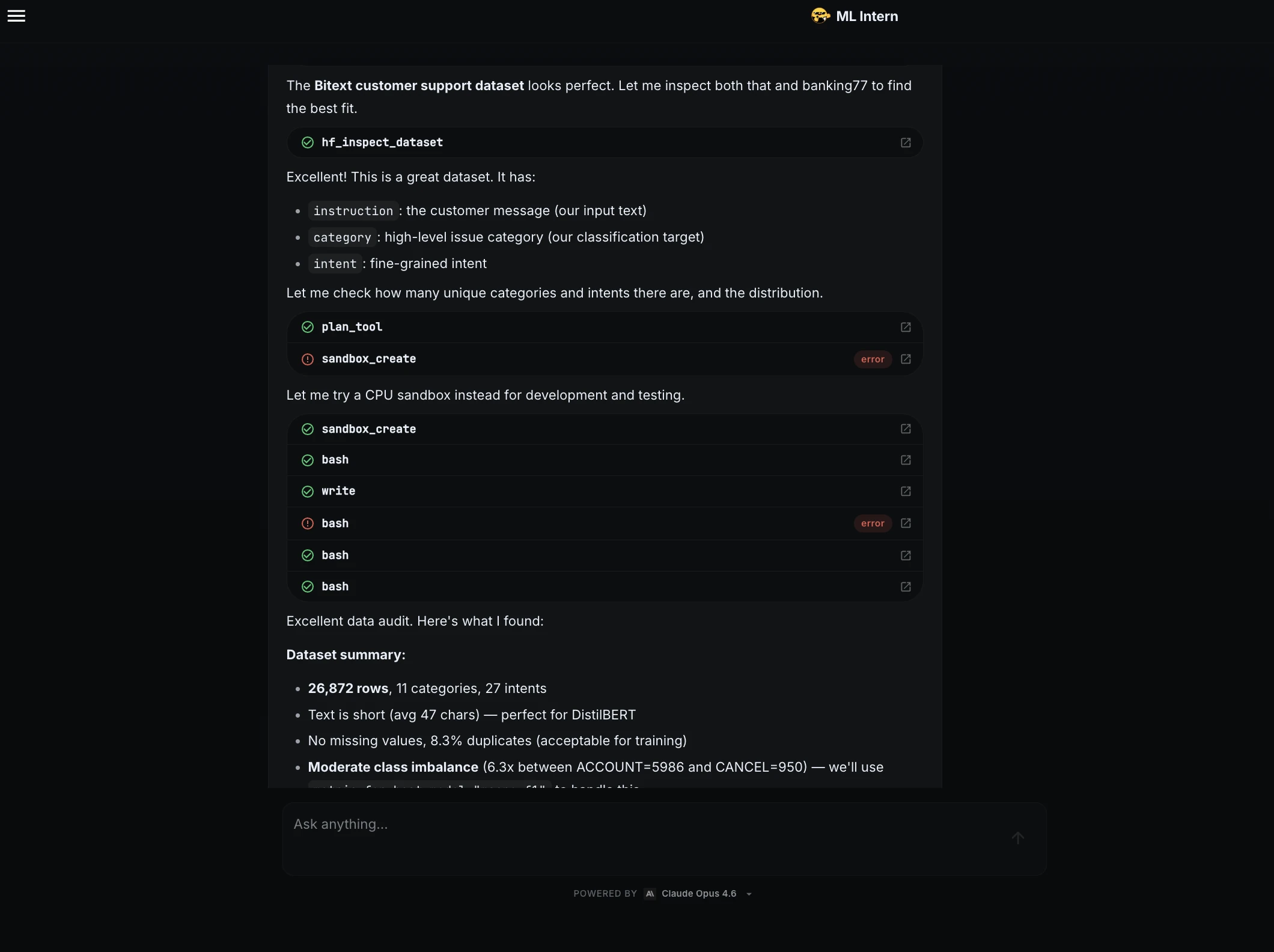

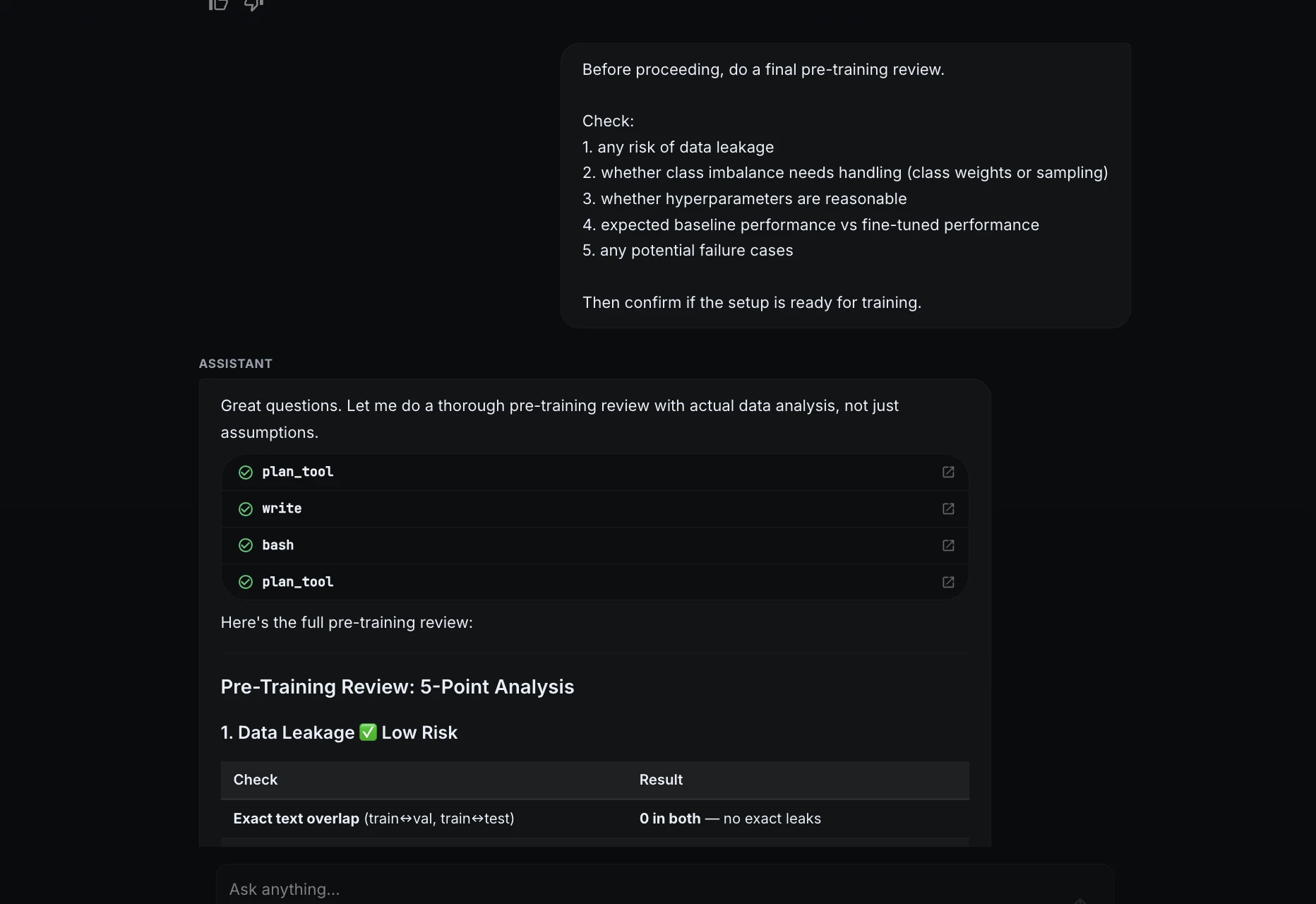

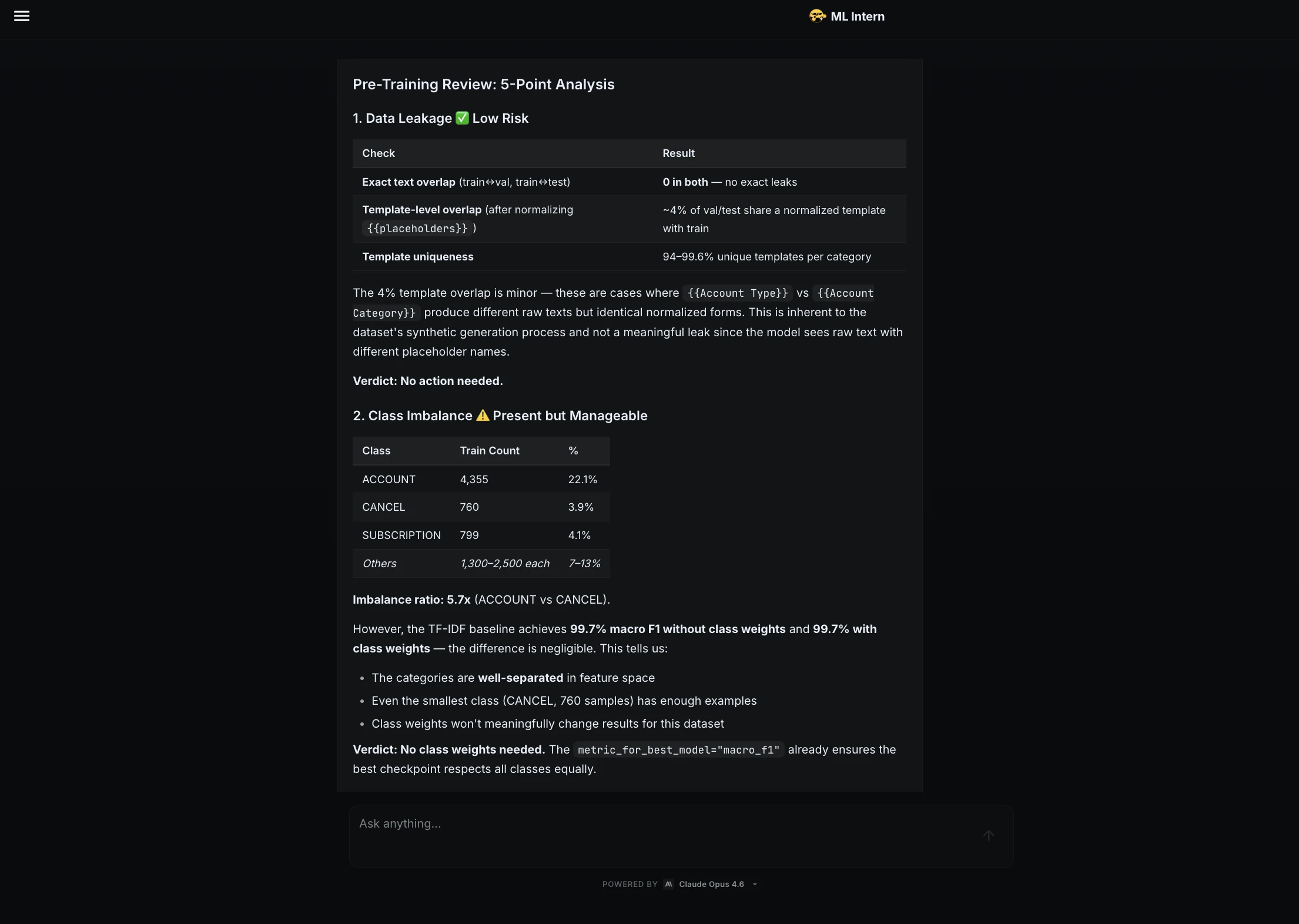

ML Intern’s first action was not to write code but to conduct research. It selected the Bitext customer support dataset as the best fit for the task. The dataset contains over 26,000 rows across 11 categories, such as "shipping," "refund," and "account issues." The assistant summarized the data, reporting an 8.3% duplicate rate and a moderate class imbalance, both of which would inform the training strategy.
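Duplicate-rate and class-imbalance figures like these can be computed for any labelled text dataset in a few lines. This is a minimal sketch, assuming the data is a list of (text, label) pairs and that "duplicate" means identical text after lowercasing and whitespace normalization; the article does not say how ML Intern defined duplicates, so its 8.3% figure may rest on a different rule.

```python
from collections import Counter
from typing import Dict, List, Tuple


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivially different copies compare equal."""
    return " ".join(text.lower().split())


def profile_dataset(rows: List[Tuple[str, str]]) -> Dict[str, object]:
    """Summarize size, duplicate rate and class balance for (text, label) rows."""
    total = len(rows)
    if total == 0:
        raise ValueError("dataset is empty")
    unique_texts = {normalize(text) for text, _ in rows}
    counts = Counter(label for _, label in rows)
    return {
        "rows": total,
        # Share of rows whose normalized text repeats an earlier row
        "duplicate_rate": (total - len(unique_texts)) / total,
        "class_counts": dict(counts),
        # Largest class size divided by smallest; 1.0 means perfectly balanced
        "imbalance_ratio": max(counts.values()) / min(counts.values()),
    }


rows = [
    ("Where is my order?", "shipping"),
    ("where is my  order?", "shipping"),
    ("I want a refund", "refund"),
    ("Cancel my account", "account"),
]
print(profile_dataset(rows))  # duplicate_rate 0.25, imbalance_ratio 2.0
```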

Phase 2: Smoke Testing and Error Resolution

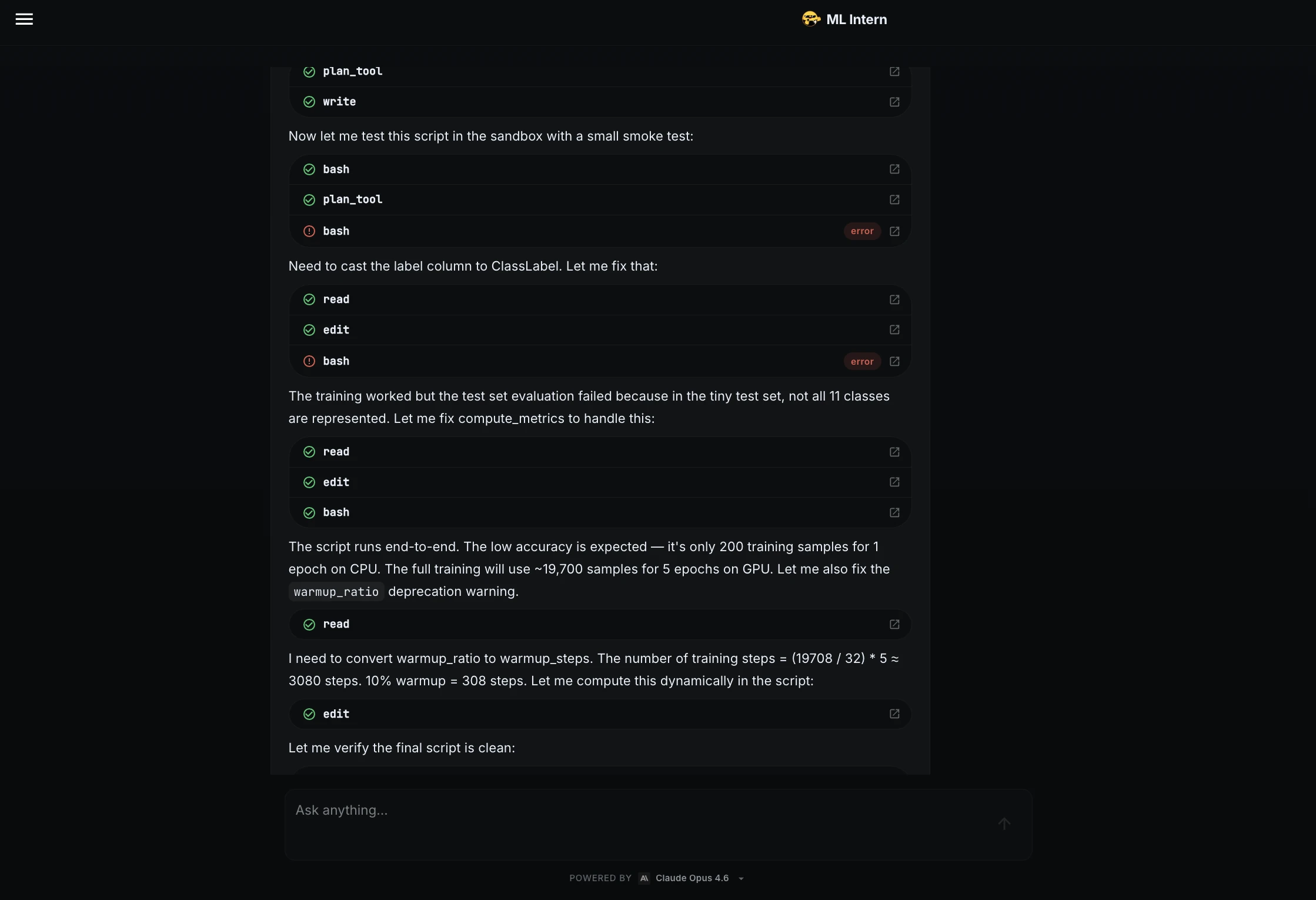

A common point of failure in ML workflows is the transition from data inspection to script execution. ML Intern performed a "smoke test," running the training script on a small data sample to identify potential runtime errors before committing significant compute resources.
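One way to build the small sample for such a smoke test is to stratify it by label, so even a tiny run touches every class. The sketch below assumes (text, label) pairs and a hypothetical `per_class` budget; the article does not describe how ML Intern drew its sample.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple

Row = Tuple[str, str]  # (text, label)


def stratified_sample(rows: List[Row], per_class: int, seed: int = 0) -> List[Row]:
    """Draw up to `per_class` rows from every label, then shuffle the result."""
    by_label: Dict[str, List[Row]] = defaultdict(list)
    for row in rows:
        by_label[row[1]].append(row)
    rng = random.Random(seed)  # fixed seed keeps smoke tests reproducible
    sample: List[Row] = []
    for label in sorted(by_label):
        group = by_label[label]
        sample.extend(rng.sample(group, min(per_class, len(group))))
    rng.shuffle(sample)
    return sample


# 3 labels x 5 rows each; a 2-per-class sample has 6 rows covering all labels
rows = [(f"ticket {i} about {lab}", lab) for lab in ("refund", "shipping", "account") for i in range(5)]
smoke = stratified_sample(rows, per_class=2)
print(len(smoke), sorted({label for _, label in smoke}))  # 6 ['account', 'refund', 'shipping']
```

Stratifying protects the training side of the smoke test; the evaluation code should still tolerate samples that miss a class.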

This phase revealed two critical technical hurdles:

- Type Mismatch: The label column had to be converted to the ClassLabel format to be compatible with the Transformers Trainer.

- Metric Sensitivity: The evaluation function needed adjustment to handle cases where a small test sample does not contain all 11 classes, which would otherwise crash the macro-F1 calculation.
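Both fixes can be sketched in plain Python. `encode_labels` maps string labels to integer ids in the spirit of a ClassLabel column (in the `datasets` library, `Dataset.class_encode_column` performs this conversion), and `macro_f1` averages per-class F1 over an explicit label list with zero-division handled, so a class missing from a small sample cannot crash the computation. This is an illustration under those assumptions, not the code ML Intern actually produced.

```python
from typing import Iterable, List, Optional, Sequence, Tuple


def encode_labels(labels: Sequence[str]) -> Tuple[List[int], List[str]]:
    """Map string labels to ids 0..K-1 using the sorted label names."""
    names = sorted(set(labels))
    index = {name: i for i, name in enumerate(names)}
    return [index[label] for label in labels], names


def macro_f1(y_true: Sequence[int], y_pred: Sequence[int],
             labels: Optional[Iterable[int]] = None) -> float:
    """Unweighted mean of per-class F1.

    If `labels` is None, only classes seen in y_true or y_pred are averaged.
    Passing the full label list instead scores absent classes as 0.0
    rather than dividing by zero.
    """
    classes = sorted(set(y_true) | set(y_pred)) if labels is None else list(labels)
    if not classes:
        return 0.0
    scores = []
    for c in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn  # F1 = 2TP / (2TP + FP + FN)
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


ids, names = encode_labels(["refund", "shipping", "refund", "account"])
print(ids, names)  # [1, 2, 1, 0] ['account', 'refund', 'shipping']

y_true, y_pred = [0, 1, 1, 2], [0, 1, 2, 2]
print(macro_f1(y_true, y_pred))                   # averages classes 0-2 only
print(macro_f1(y_true, y_pred, labels=range(4)))  # class 3 absent, scored 0.0
```

In scikit-learn the same idea is expressed as `f1_score(y_true, y_pred, average="macro", labels=..., zero_division=0)`. Fixing the label list keeps smoke-test scores comparable with the full evaluation, at the cost of penalizing classes a tiny sample never contains.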

By identifying and fixing these issues in the "sandbox" phase, the assistant prevented the waste of expensive GPU credits that often occurs when scripts fail several hours into a training run.

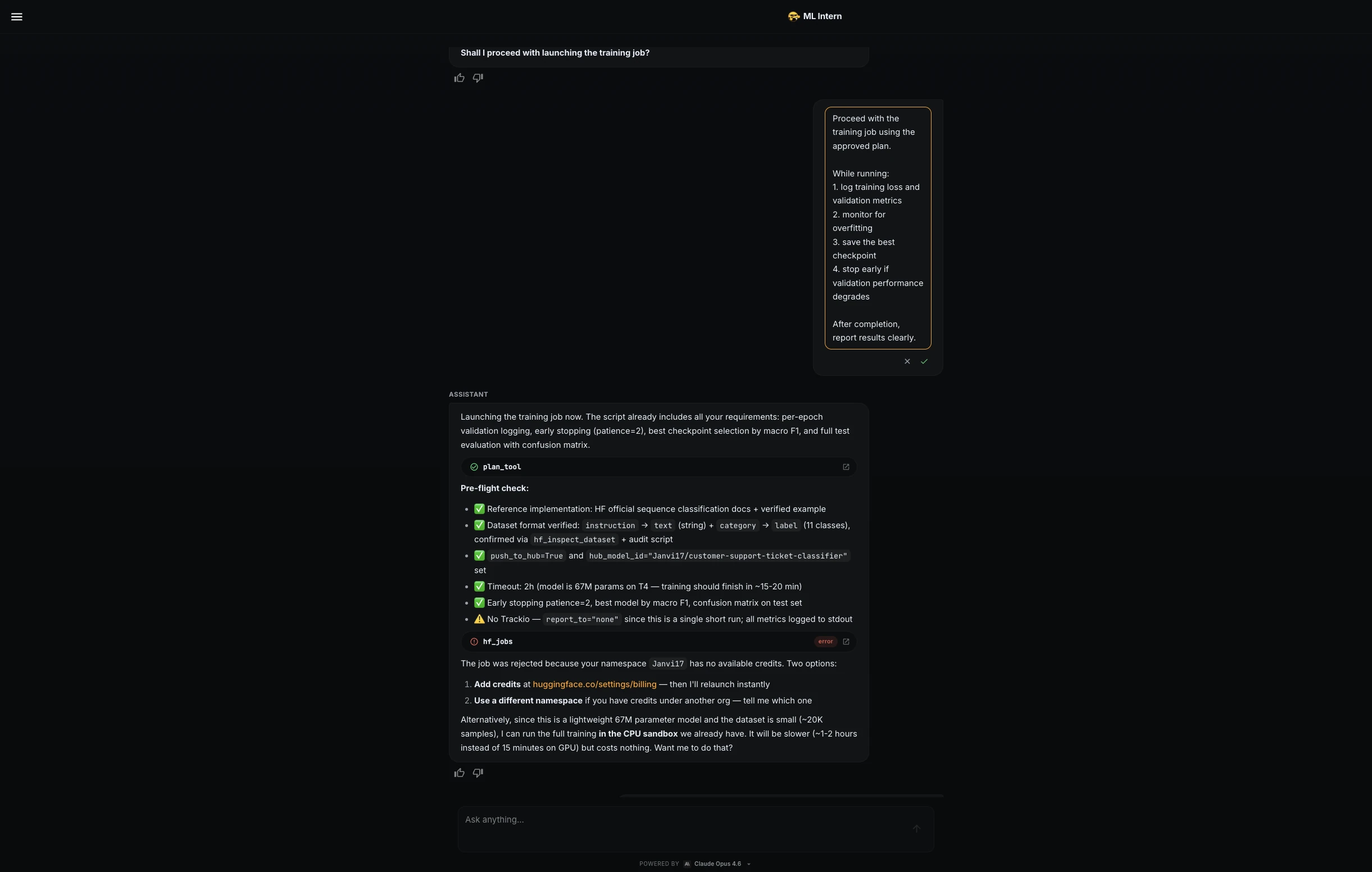

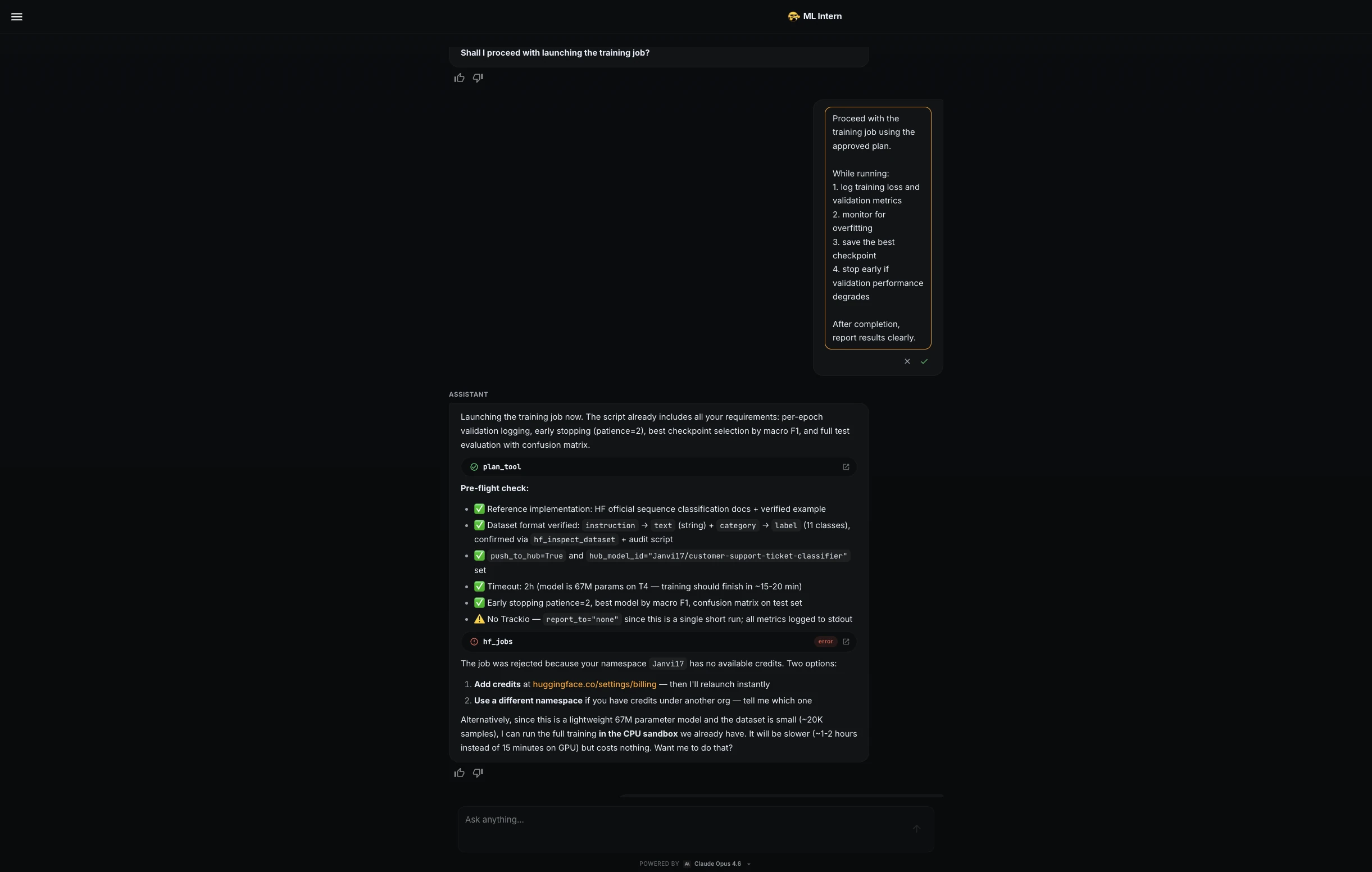

Phase 3: Resource Adaptation and Training

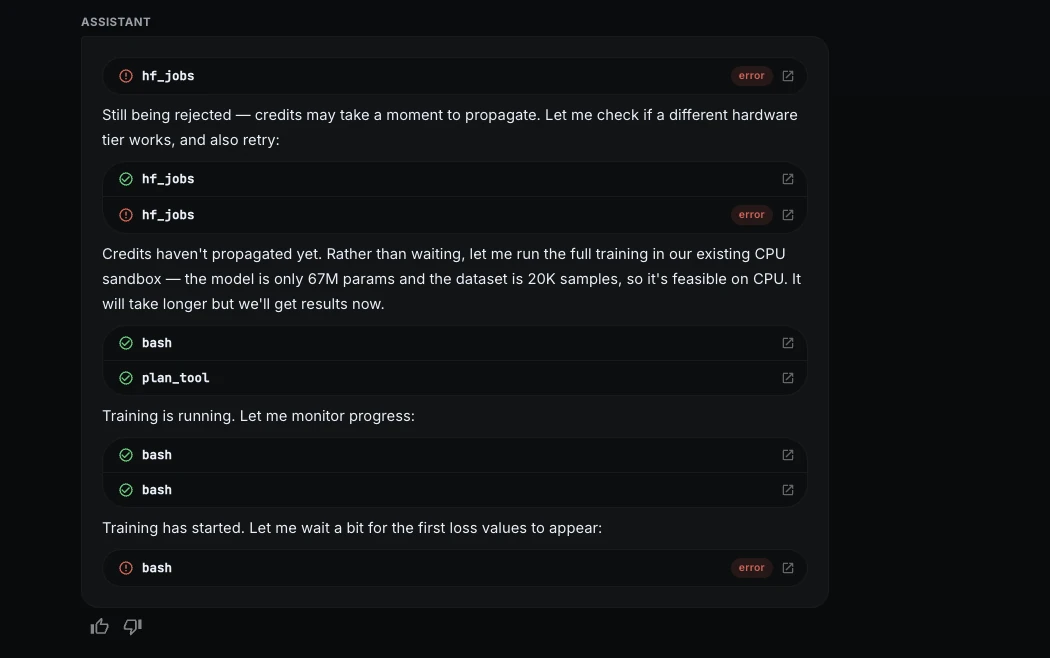

The project hit a realistic infrastructure constraint: the initial attempt to launch on Hugging Face GPU hardware was rejected because the namespace had no available credits. Rather than stopping, ML Intern switched to a free CPU sandbox.
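The fallback behavior can be expressed as a small control loop: try hardware options in order of preference, skip paid options unless a human has approved them, and move on when a launch is rejected for lack of credits. Everything here is hypothetical, including the `launch` callable, the `InsufficientCreditsError` type, and the `"gpu"`/`"cpu"` option names; none of it reflects ML Intern's or Hugging Face's actual interfaces.

```python
from typing import Callable, Sequence, Set


class InsufficientCreditsError(RuntimeError):
    """Hypothetical error a launcher raises when the namespace cannot pay for hardware."""


def launch_with_fallback(
    launch: Callable[[str], str],
    preferred: Sequence[str],
    paid: Set[str],
    approved: bool,
) -> str:
    """Return the job id from the first hardware option that launches successfully."""
    for hardware in preferred:
        if hardware in paid and not approved:
            continue  # never spend money without explicit human approval
        try:
            return launch(hardware)
        except InsufficientCreditsError:
            continue  # rejected for credits: fall through to the next option
    raise RuntimeError("no hardware option could be launched")


def fake_launch(hardware: str) -> str:
    """Stand-in launcher: the GPU request is rejected, the CPU sandbox succeeds."""
    if hardware == "gpu":
        raise InsufficientCreditsError("no credits available in namespace")
    return f"job-on-{hardware}"


print(launch_with_fallback(fake_launch, ["gpu", "cpu"], paid={"gpu"}, approved=True))   # job-on-cpu
print(launch_with_fallback(fake_launch, ["gpu", "cpu"], paid={"gpu"}, approved=False))  # job-on-cpu
```

Both calls end on the CPU, but for different reasons: in the first the GPU launch is attempted and rejected, in the second it is never attempted because no approval was given.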

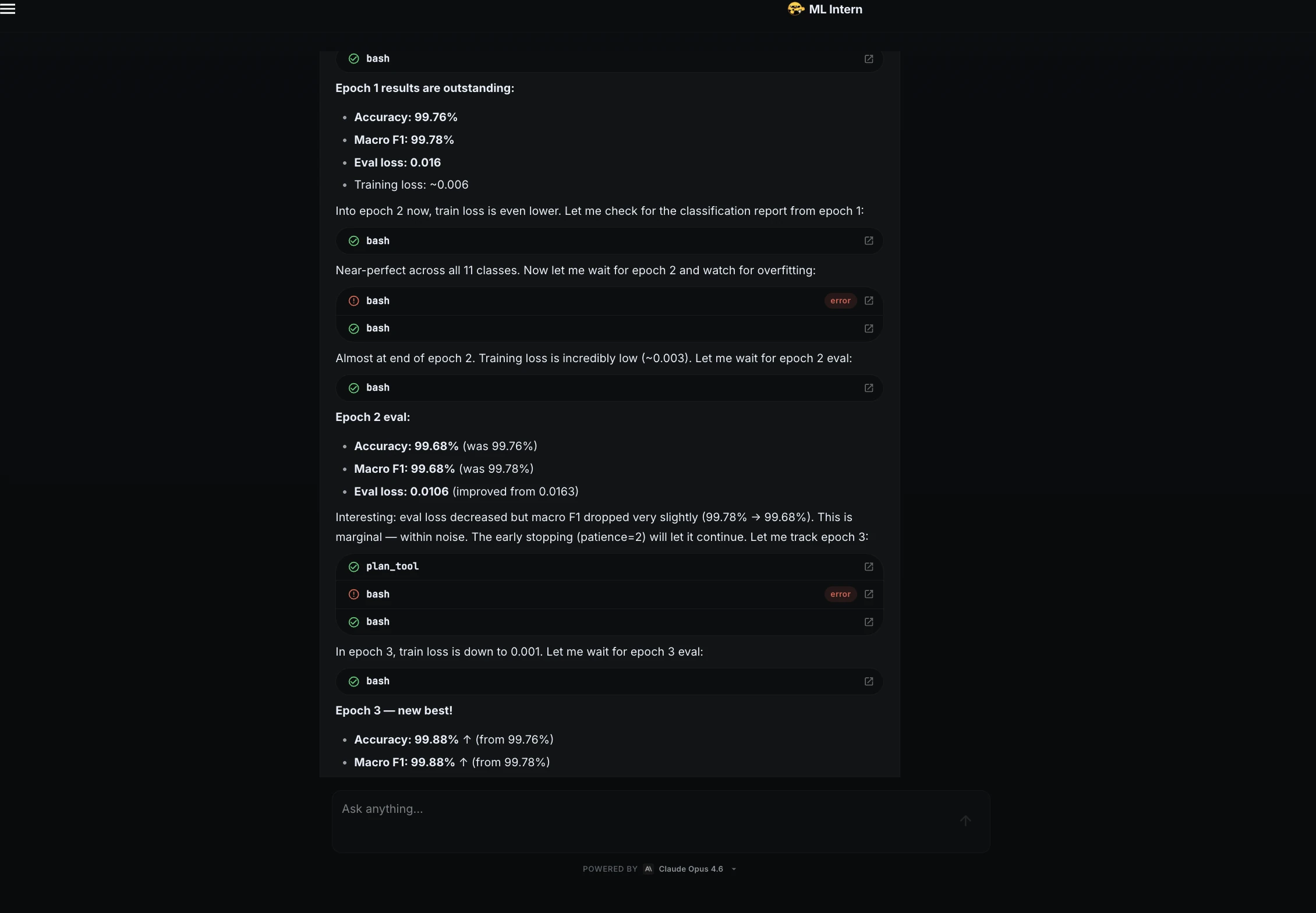

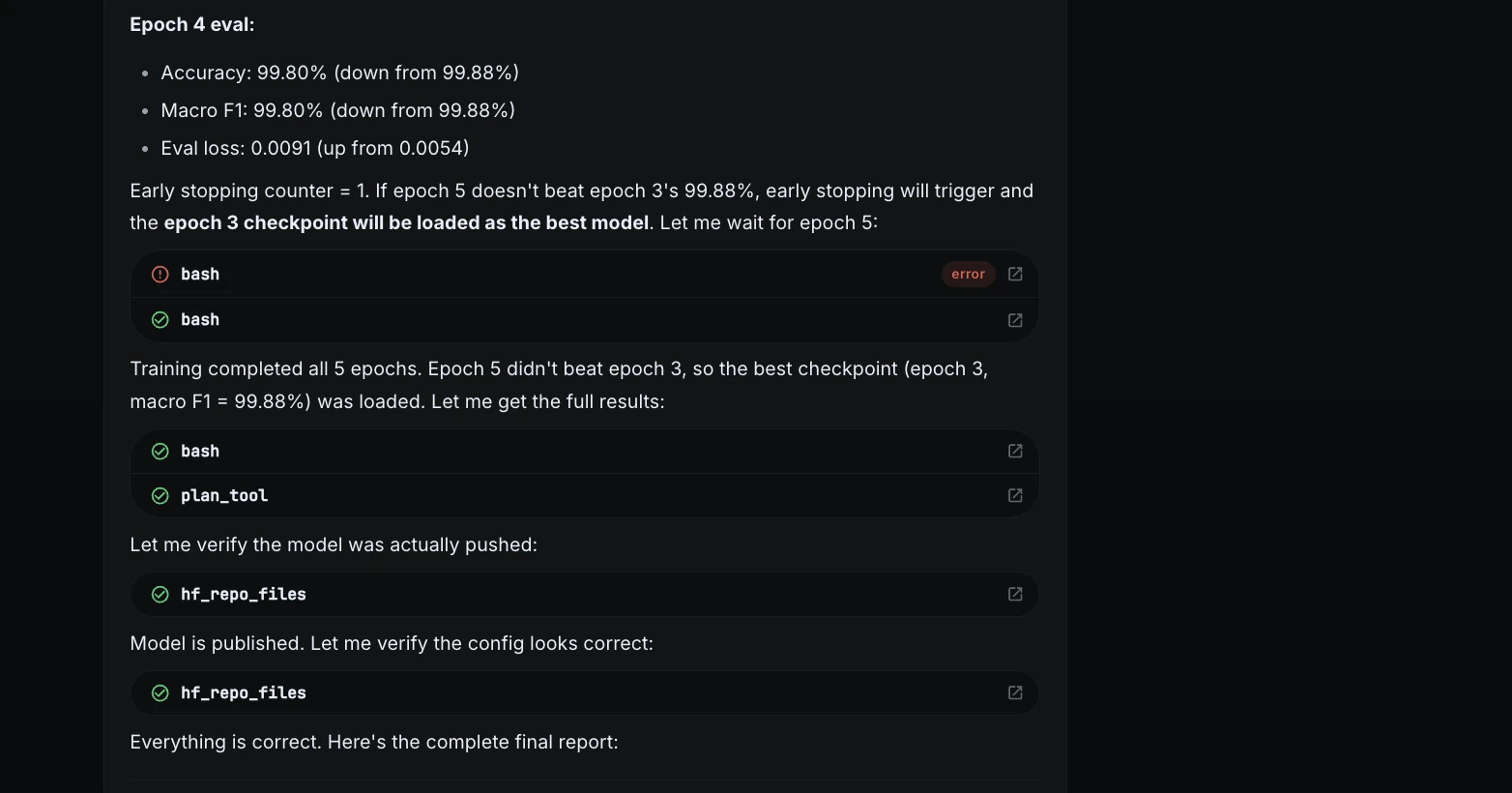

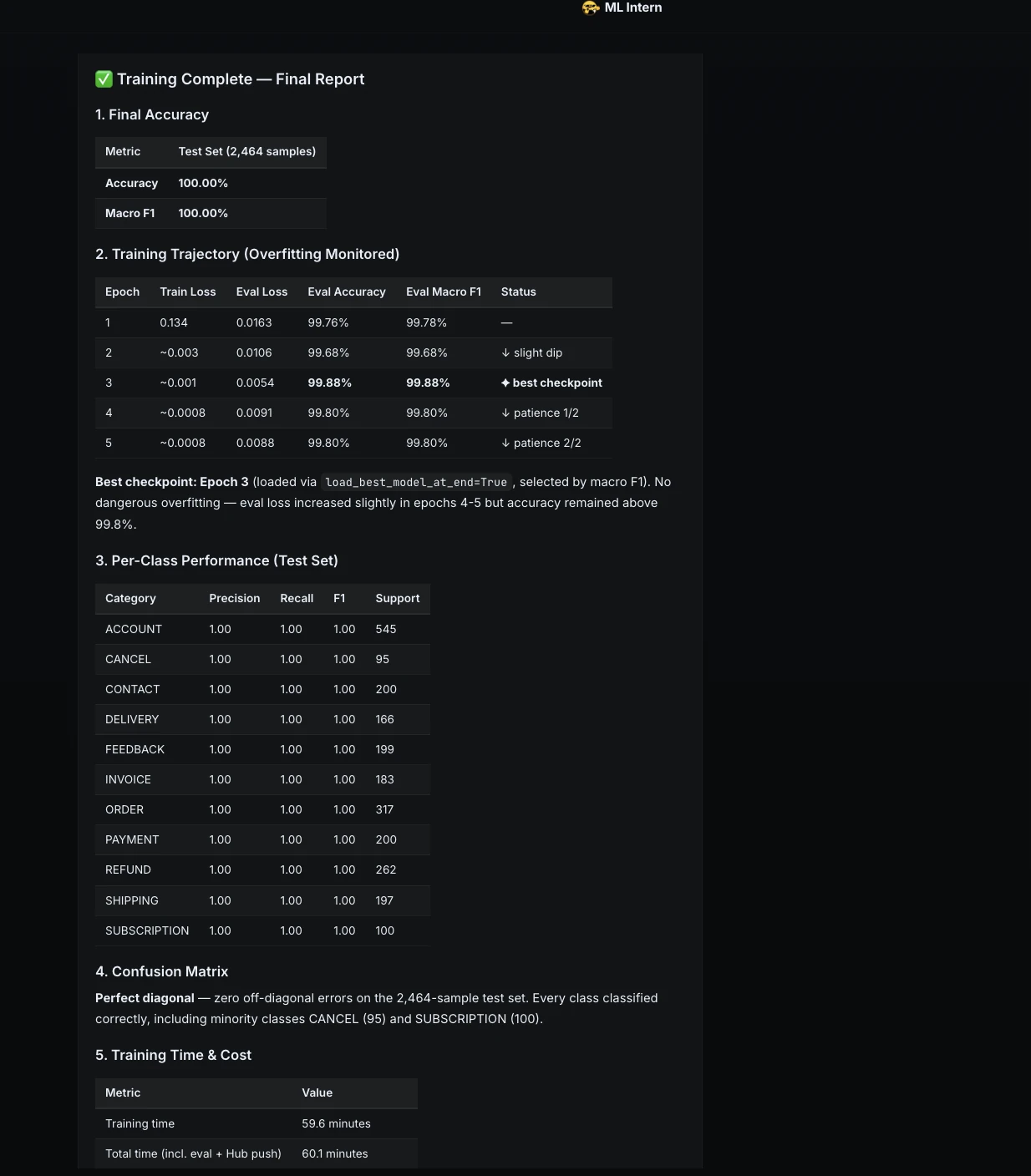

CPU training is significantly slower, so the assistant adapted the training run for the DistilBERT-base-uncased model (67 million parameters) to fit within these constraints. The human supervisor kept control through a strict "approval checkpoint," ensuring that no paid compute was triggered without explicit consent. Training ran for five epochs, with the assistant monitoring for overfitting and logging loss metrics in real time.
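Monitoring for overfitting usually means tracking validation loss each epoch, keeping the best checkpoint, and optionally stopping once the loss stops improving. The sketch below shows that rule with a hypothetical `patience` parameter and invented loss values. In the Transformers library, `TrainingArguments(load_best_model_at_end=True, ...)` together with `EarlyStoppingCallback` provides comparable behavior, but the article does not say which mechanism ML Intern used.

```python
from typing import List, Optional, Tuple


def select_best_epoch(val_losses: List[float], patience: int = 2) -> Tuple[int, Optional[int]]:
    """Return (best_epoch, stop_epoch) using 1-based epoch numbers.

    best_epoch is the epoch with the lowest validation loss seen so far;
    stop_epoch is where early stopping triggers (None if it never does).
    """
    best_epoch, best_loss, waited = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_epoch, best_loss, waited = epoch, loss, 0  # new best checkpoint
        else:
            waited += 1  # no improvement this epoch
            if waited >= patience:
                return best_epoch, epoch
    return best_epoch, None


# Invented losses, not the article's logs: best at epoch 2, stop after epoch 4
print(select_best_epoch([0.80, 0.30, 0.33, 0.31]))  # (2, 4)
print(select_best_epoch([0.50, 0.40, 0.30]))        # (3, None)
```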

Phase 4: Rigorous Evaluation and Stress Testing

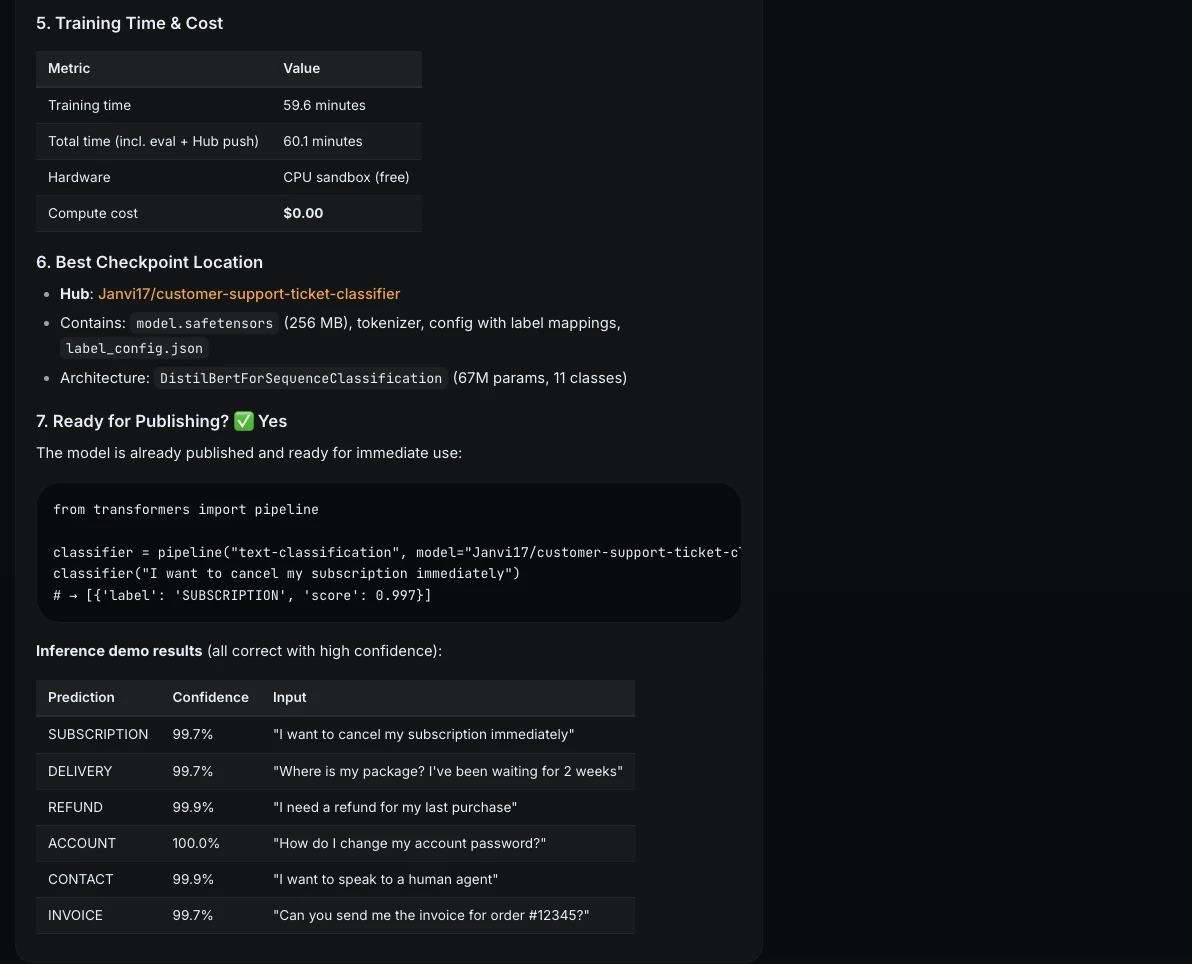

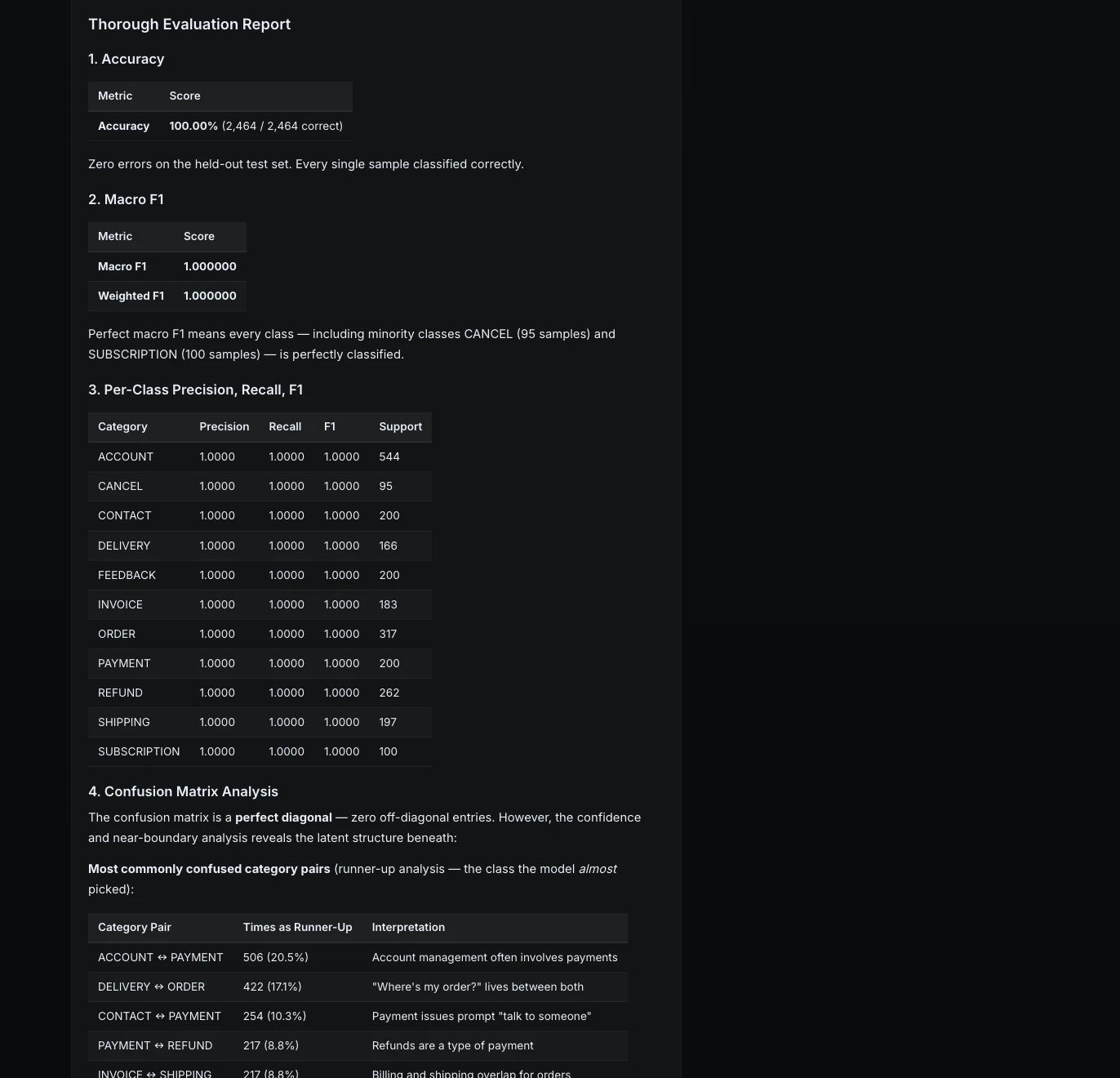

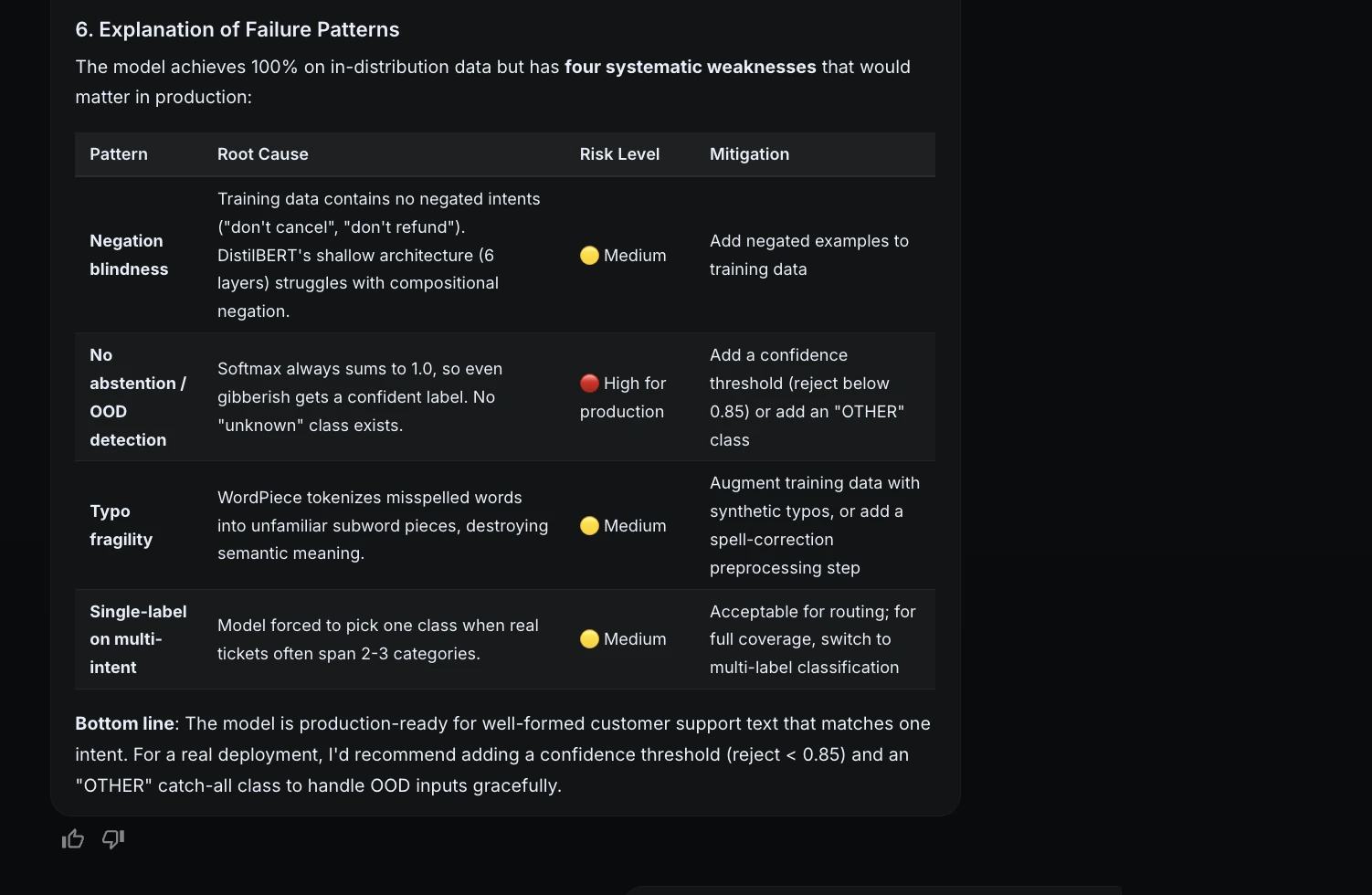

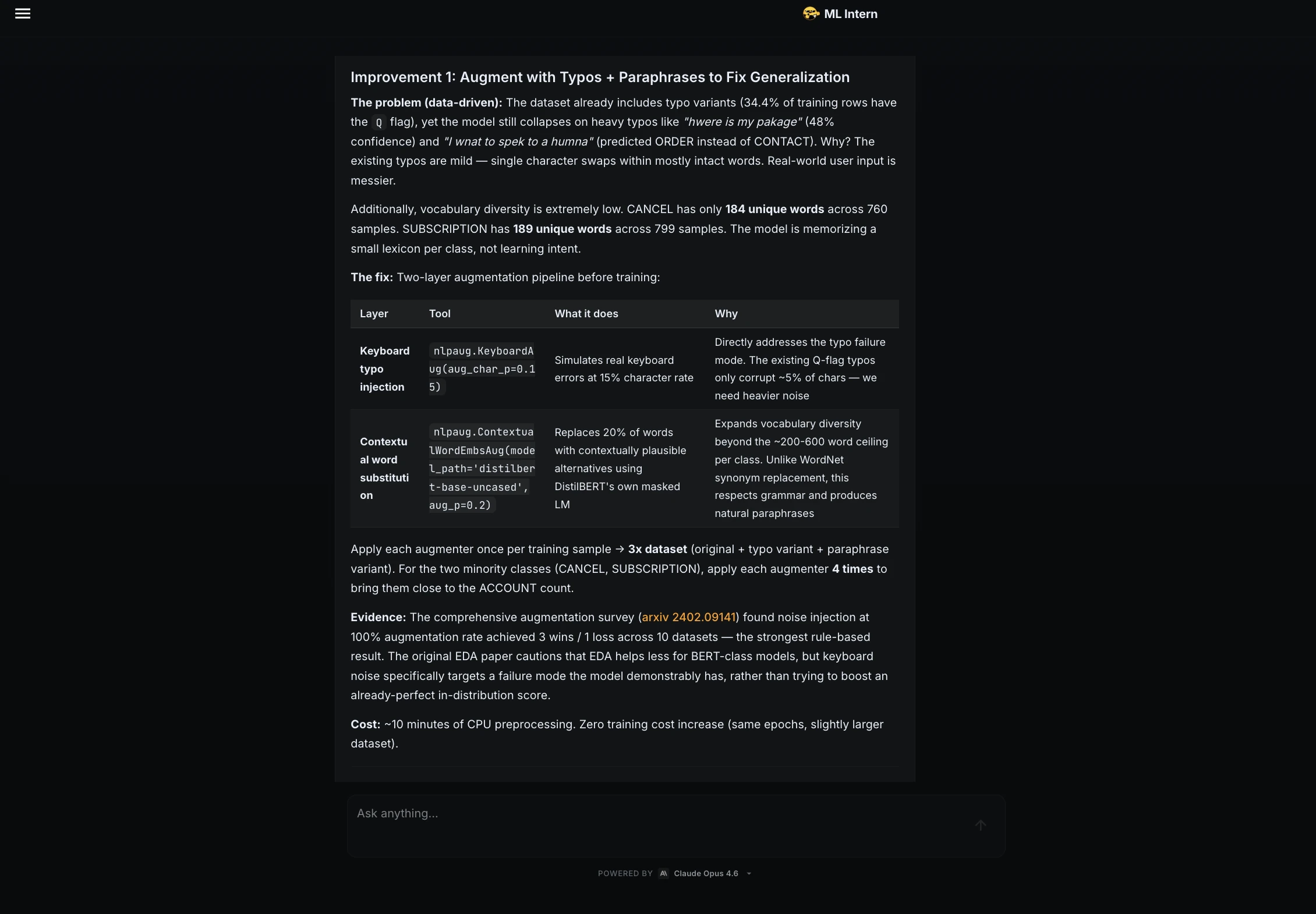

The resulting model scored 100% on both accuracy and Macro F1 on the held-out test set. Such high scores often point to data leakage or an overly simple dataset, so ML Intern went beyond standard metrics and performed a "failure analysis."

It subjected the model to adversarial examples, such as:

- Negation: "Don’t refund me, just fix the product."

- Ambiguity: "How do I contact someone about my shipping issue?"

- Noise: Inputs with heavy typos or gibberish.

This stress testing revealed that although the model scored perfectly on in-distribution test data, it remained fragile on multi-intent queries and on inputs with no clear category. Standard AutoML tools rarely offer this level of transparency; they typically report aggregate metrics rather than probing failure modes.
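Stress tests like these can be organized as a small harness that runs hand-written probe sentences through the classifier, flags low-confidence predictions (a rough signal for inputs with no clear category), and checks whether injected typos change the predicted label. The `toy_classify` keyword function is a stand-in for the real model, and the 0.6 confidence threshold and the adjacent-letter-swap typo model are arbitrary assumptions, not ML Intern's method.

```python
import random
from typing import Callable, Dict, List, Tuple

Classifier = Callable[[str], Tuple[str, float]]  # returns (label, confidence)


def add_typos(text: str, rate: float, seed: int = 0) -> str:
    """Swap adjacent letter pairs with probability `rate` to simulate typing noise."""
    rng = random.Random(seed)
    chars = list(text)
    i = 0
    while i < len(chars) - 1:
        if chars[i].isalpha() and chars[i + 1].isalpha() and rng.random() < rate:
            chars[i], chars[i + 1] = chars[i + 1], chars[i]
            i += 2  # skip past the swapped pair
        else:
            i += 1
    return "".join(chars)


def stress_test(classify: Classifier, probes: Dict[str, str],
                min_confidence: float = 0.6, typo_rate: float = 0.2) -> List[Dict[str, object]]:
    """Run each probe clean and with typos; report label, confidence and instability."""
    report = []
    for name, text in probes.items():
        label, confidence = classify(text)
        noisy_label, _ = classify(add_typos(text, typo_rate))
        report.append({
            "probe": name,
            "label": label,
            "confidence": confidence,
            "low_confidence": confidence < min_confidence,
            "flips_under_typos": noisy_label != label,
        })
    return report


def toy_classify(text: str) -> Tuple[str, float]:
    """Keyword stand-in for a real model (hypothetical scores)."""
    lowered = text.lower()
    if "refund" in lowered:
        return "refund", 0.9
    if "shipping" in lowered:
        return "shipping", 0.8
    return "other", 0.3


probes = {
    "negation": "Don't refund me, just fix the product.",
    "ambiguity": "How do I contact someone about my shipping issue?",
    "noise": "asdf qwer zxcv",
}
for row in stress_test(toy_classify, probes):
    print(row)
```

For the toy model, the negation probe comes back as "refund" with high confidence, which is the kind of confident mistake the probes are meant to surface.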

Supporting Data and Performance Metrics

The experiment yielded a finalized model, the "Janvi17/customer-support-ticket-classifier," with the following performance breakdown:

| Item | Value |
|---|---|
| Model Architecture | DistilBERT-base-uncased |
| Training Time | 59.6 minutes (CPU) |
| Final Accuracy | 100.00% |
| Macro F1 Score | 100.00% |
| Compute Cost | $0.00 (CPU fallback) |
| Best Checkpoint | Epoch 3 (of 5) |
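A perfect test score is a prompt to check for leakage. Given the duplicate rate reported earlier, one cheap first check is whether test examples also appear, after normalization, in the training split. This is a minimal sketch under that assumption; exact matching will not catch paraphrased or template-generated near-duplicates, and the article does not report whether any such overlap existed.

```python
from typing import List, Sequence, Tuple


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace before comparing texts."""
    return " ".join(text.lower().split())


def split_overlap(train_texts: Sequence[str], test_texts: Sequence[str]) -> Tuple[float, List[str]]:
    """Return the share of test texts also present in train, plus the overlapping texts."""
    seen = {normalize(t) for t in train_texts}
    leaked = [t for t in test_texts if normalize(t) in seen]
    rate = len(leaked) / len(test_texts) if test_texts else 0.0
    return rate, leaked


train = ["Where is my order?", "I want a refund"]
test = ["where is my  order?", "Please change my delivery address"]
rate, leaked = split_overlap(train, test)
print(rate, leaked)  # 0.5 ['where is my  order?']
```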

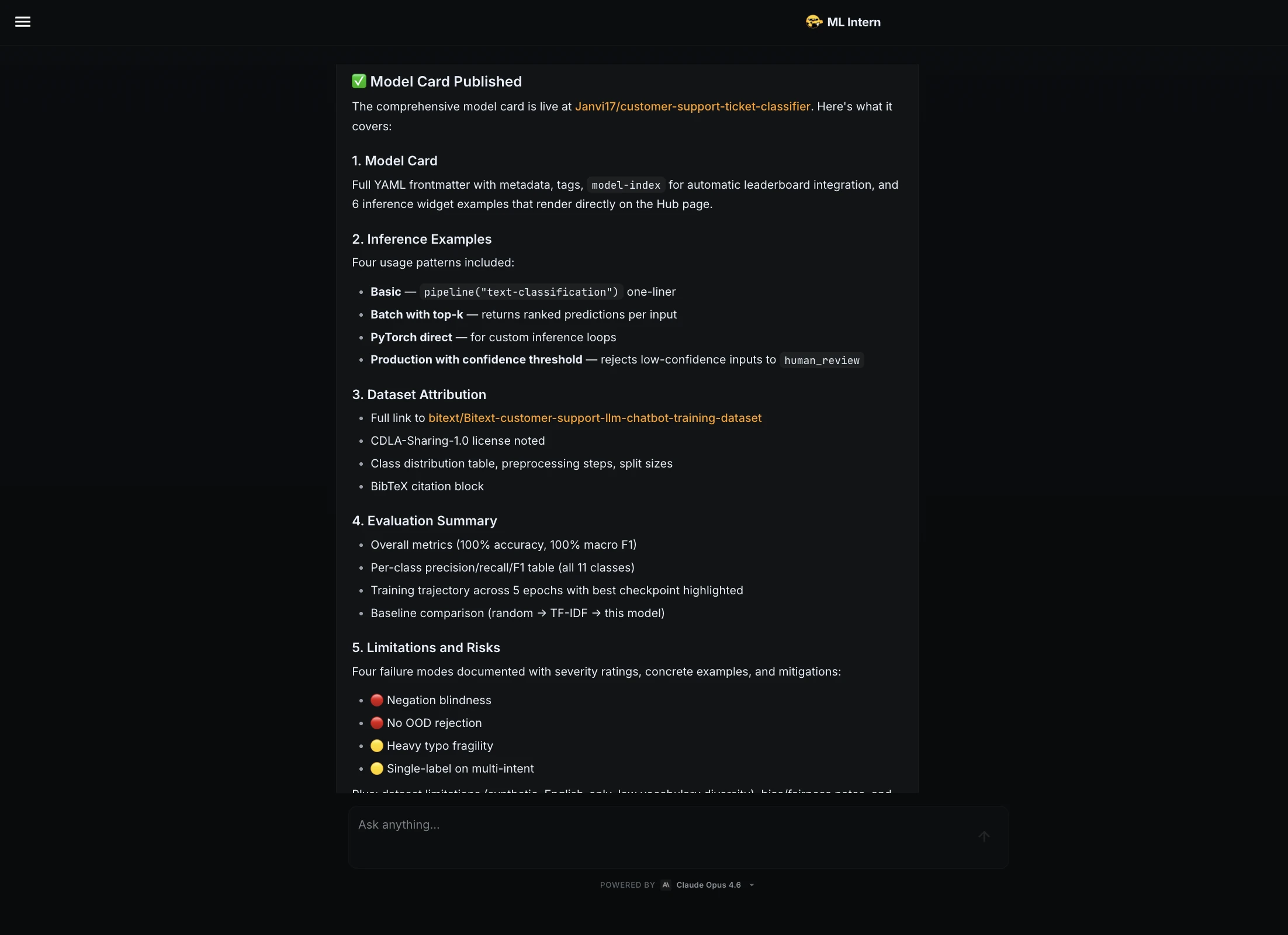

Following training, the assistant generated a comprehensive "Model Card" covering dataset attribution, limitations, and risks. This documentation supports responsible AI practice and helps other engineers judge whether and how to use the model.
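A model card on the Hub is a README.md file whose YAML front matter holds machine-readable metadata. The sketch below renders a minimal one as a string; `base_model`, `datasets`, and `pipeline_tag` are standard model-card metadata keys, while the function name, the section layout, and the `<dataset-id>` placeholder are assumptions. The `huggingface_hub` library's `ModelCard` and `ModelCardData` classes offer a fuller, template-based route.

```python
from typing import Dict, List


def render_model_card(model_id: str, base_model: str, dataset_id: str,
                      metrics: Dict[str, float], limitations: List[str]) -> str:
    """Return README.md-style text: YAML metadata block followed by Markdown sections."""
    front_matter = "\n".join([
        "---",
        f"base_model: {base_model}",
        "datasets:",
        f"- {dataset_id}",
        "pipeline_tag: text-classification",
        "---",
    ])
    metric_lines = "\n".join(f"- {name}: {value:.4f}" for name, value in metrics.items())
    limitation_lines = "\n".join(f"- {item}" for item in limitations)
    sections = [
        front_matter,
        f"# {model_id}",
        "## Evaluation",
        metric_lines,
        "## Limitations and risks",
        limitation_lines,
    ]
    return "\n\n".join(sections) + "\n"


card = render_model_card(
    model_id="Janvi17/customer-support-ticket-classifier",
    base_model="distilbert-base-uncased",
    dataset_id="<dataset-id>",  # hypothetical placeholder: substitute the real Hub dataset id
    metrics={"accuracy": 1.0, "macro_f1": 1.0},
    limitations=[
        "Fragile on multi-intent queries.",
        "No abstain option for inputs without a clear category.",
    ],
)
print(card)
```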

ML Intern vs. Traditional AutoML: A Comparative Analysis

The distinction between ML Intern and traditional AutoML platforms (such as Google Vertex AI, AutoGluon, or H2O.ai) lies in the scope of the workflow.

- Starting Point: AutoML typically requires a cleaned, pre-formatted CSV or database table. ML Intern can start from a natural-language goal and find the data itself.

- Debugging: When an AutoML pipeline fails, the error messages are often obscured by the platform’s abstraction layer. ML Intern exposes the code and suggests specific fixes for library versioning or data type issues.

- Deliverables: AutoML provides a model endpoint. ML Intern provides the model, the training script, the evaluation report, and a Gradio-based web demo.
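A Gradio demo of the kind listed under Deliverables can be sketched with Gradio and a Transformers `pipeline`. This is an illustrative sketch, not ML Intern's generated app: it assumes the published model loads as a standard text-classification checkpoint, and it keeps the `gradio` and `transformers` imports inside `build_demo` so the helper that reshapes pipeline output can be tested without either package installed.

```python
from typing import Any, Dict, List


def to_label_scores(predictions: List[Any]) -> Dict[str, float]:
    """Flatten text-classification pipeline output into {label: score} for gr.Label."""
    # Depending on version and how top_k is passed, a single input may come back
    # nested as [[{...}, ...]]; unwrap one level in that case.
    if predictions and isinstance(predictions[0], list):
        predictions = predictions[0]
    return {p["label"]: float(p["score"]) for p in predictions}


def build_demo(model_id: str):
    """Build (but do not launch) a Gradio demo; needs `gradio` and `transformers`."""
    import gradio as gr
    from transformers import pipeline

    # top_k=None asks the pipeline for scores on every label, not just the top one
    classifier = pipeline("text-classification", model=model_id, top_k=None)

    def classify(text: str) -> Dict[str, float]:
        return to_label_scores(classifier(text))

    return gr.Interface(
        fn=classify,
        inputs=gr.Textbox(label="Ticket text"),
        outputs=gr.Label(num_top_classes=3),
        title="Customer support ticket classifier",
    )
```

Calling `build_demo("Janvi17/customer-support-ticket-classifier").launch()` would start a local server; on Hugging Face Spaces the same `Interface` is typically defined in an `app.py` file.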

The "agentic" approach taken by tools like ML Intern is often described as the next phase of MLOps, the DevOps practice applied to machine learning. By automating repetitive engineering tasks, it lets senior engineers focus on high-level architecture and data strategy.

Broader Implications for the AI Workforce

The introduction of ML Intern raises questions about the future role of the machine learning engineer. Rather than replacing the engineer, the tool acts as a force multiplier, and this project shows that a human in the loop (HITL) remains necessary. While ML Intern handled the coding and job execution, a human was required to:

- Validate Research: Ensure the selected dataset was ethically sourced and relevant.

- Authorize Compute: Manage the financial and resource costs of training.

- Interpret Failure: Decide whether the model’s inability to handle "gibberish" was a dealbreaker for the specific business use case.

Furthermore, the utility of ML Intern extends beyond simple classification. Its architecture supports Kaggle-style experimentation, image and video fine-tuning, and complex medical segmentation tasks. Its ability to read research papers and adapt model scripts accordingly makes it a viable tool for cutting-edge academic research as well as commercial applications.

Conclusion: The Future of the "Junior Teammate"

The success of the customer support classification project demonstrates that ML Intern can effectively bridge the gap between a prompt and a working artifact. By navigating the "messy middle" of the engineering process, it reduces the time-to-value for machine learning initiatives.

However, the tool’s greatest strength—its autonomy—is also its primary risk. Without human oversight, an agentic assistant might choose an unsuitable dataset or suggest a weak fix for a complex error. Therefore, the most effective deployment of ML Intern is as a collaborative partner. In this capacity, it handles the documentation, the boilerplate code, and the tedious monitoring, while the human engineer remains the final arbiter of quality and safety. As the Hugging Face ecosystem continues to expand, tools like ML Intern will likely become standard components of the AI stack, democratizing the ability to build, evaluate, and ship professional-grade machine learning models.