XML sitemaps serve as critical navigational guides for search engines, acting as comprehensive roadmaps that lead algorithms to all important pages on a website. These structured files are invaluable for Search Engine Optimization (SEO), enabling Google and other search engines to efficiently locate and understand essential content, even when a website’s internal linking structure may not be perfectly optimized. Beyond traditional search, XML sitemaps play an indirect yet crucial role in surfacing content for emerging AI-powered agents and generative search experiences. This article delves into the fundamental nature of XML sitemaps, their structural components, historical context, myriad benefits for discoverability, and their evolving relevance in the age of artificial intelligence.

What are XML Sitemaps? A Foundational Overview

At its core, an XML sitemap is a text file written in Extensible Markup Language (XML) that lists a website’s essential pages. Its primary purpose is to help search engine crawlers find, access, and index these pages efficiently. More than just a list, it helps search engines understand the website’s overall structure, hierarchy, and content priorities. While webmasters might encounter various sitemap formats, an XML sitemap is built for machine readability. HTML sitemaps, by contrast, are designed to help users navigate a site through a clear, hierarchical list of pages.

XML sitemaps include vital metadata for each URL, offering search engines deeper insights into the content. This metadata can specify the canonical URL (<loc>), the date of the page’s last significant modification (<lastmod>), and, historically, suggested change frequency (<changefreq>) and relative importance (<priority>). Search engines leverage this information to crawl websites more intelligently and efficiently. This is particularly crucial for websites that are large, newly launched with limited external links, or possess complex navigation structures that might otherwise hinder comprehensive discovery.

For instance, a simple XML sitemap for a single URL might appear as follows:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://www.yoast.com/wordpress-seo/</loc>
    <lastmod>2024-01-01</lastmod>
  </url>
</urlset>
```

Each URL within a sitemap is encapsulated by specific XML tags. The <urlset> tag is the container for the entire sitemap and declares the sitemap protocol namespace. Within it, each <url> tag represents a single page entry. The <loc> tag is mandatory and specifies the full canonical URL. The <lastmod> tag, while optional, indicates the last modification date, signaling to search engines when re-crawling might be beneficial. Historically, <changefreq> and <priority> tags were used to suggest how often content changes and its relative importance. However, Google has stated that it ignores these two tags and relies on <lastmod> for freshness signals, provided the dates are consistently accurate.
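To make the tag structure concrete, here is a minimal Python sketch (standard library only, Python 3.8+) that builds a <urlset> from a list of URLs and optional modification dates. It emits only <loc> and <lastmod>, in line with the guidance above; the function names build_urlset and to_xml_bytes are illustrative assumptions, not part of any library.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_urlset(entries):
    """Build a <urlset> element from (loc, lastmod) pairs.

    `entries` is an iterable of (absolute_url, datetime.date or None).
    Only <loc> and the optional <lastmod> are written, since Google
    ignores <changefreq> and <priority>.
    """
    # Declaring xmlns as a plain attribute keeps the output free of
    # ElementTree's auto-generated "ns0:" prefixes.
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # "&" is escaped on output
        if lastmod is not None:
            # W3C Datetime format; a plain YYYY-MM-DD date is valid.
            ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    return urlset


def to_xml_bytes(root):
    """Serialize with an XML declaration (xml_declaration needs 3.8+)."""
    return ET.tostring(root, encoding="UTF-8", xml_declaration=True)


if __name__ == "__main__":
    print(to_xml_bytes(build_urlset([
        ("https://www.example.com/", date(2024, 1, 1)),
        ("https://www.example.com/about/", None),
    ])).decode("utf-8"))
```

ElementTree escapes characters such as & automatically, which the protocol requires for URLs that contain query strings.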

The Evolution of the Sitemap Protocol

Before the widespread adoption of sitemaps, search engine discovery relied almost entirely on the intricate web of internal and external links. Crawlers would navigate from page to page, following hyperlinks, a process that could be inefficient for large or poorly linked sites. The XML sitemap protocol was initially introduced by Google in June 2005, marking a significant shift in how webmasters could communicate directly with search engines. This innovation provided a standardized, machine-readable format for listing URLs, giving site owners a proactive tool to ensure their content was seen.

The protocol gained broader industry acceptance in November 2006 when Google, Yahoo, and Microsoft (for Live Search, now Bing) jointly announced their support for the Sitemaps 0.9 protocol. This collaboration solidified XML sitemaps as a universal standard for web content discovery. This chronology underscores the protocol’s foundational role, evolving from a Google-specific tool to an industry-wide best practice, reflecting the growing complexity of the web and the continuous need for efficient indexing mechanisms.

XML Sitemap Index: Managing Scale

For websites with an extensive number of pages, a single XML sitemap can quickly become unwieldy. The sitemap protocol limits each sitemap file to at most 50,000 URLs and at most 50 MB (52,428,800 bytes) uncompressed. To work within these limits, the protocol also defines an XML sitemap index.

A sitemap index file acts as a directory, listing multiple individual XML sitemap files rather than individual page URLs. This structure is particularly beneficial for large-scale websites, such as e-commerce platforms with millions of products, news archives, or vast content repositories. Site owners can organize their sitemaps by content type (e.g., separate sitemaps for articles, product pages, categories, or images), by date, or by other logical groupings. Submitting a sitemap index to search engines allows them to discover and process all associated sitemaps from a single point of entry, streamlining the crawling process.

Here’s an example of a sitemap index file:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap>
    <loc>https://www.example.com/sitemap-pages.xml</loc>
    <lastmod>2025-12-11</lastmod>
  </sitemap>
  <sitemap>
    <loc>https://www.example.com/sitemap-products.xml</loc>
    <lastmod>2025-12-11</lastmod>
  </sitemap>
</sitemapindex>
```

This structure ensures efficient discovery and crawling, preventing any single sitemap from exceeding technical limits and improving overall site management.
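As a sketch of how a generator might stay within the per-file limits, the Python below (standard library, Python 3.8+) splits a URL list into chunks of at most 50,000, writes one <urlset> per chunk, and lists the resulting files in a <sitemapindex>. The file names and helper functions are hypothetical, and a real generator would record each child file’s <lastmod> from its own content rather than one shared date.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000          # protocol limit per sitemap file
MAX_BYTES_PER_SITEMAP = 52_428_800     # 50 MB, uncompressed


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of URLs into consecutive chunks of at most `size`."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_urlset_bytes(urls):
    """Serialize one child sitemap and enforce the size limit."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = loc
    data = ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)
    if len(data) > MAX_BYTES_PER_SITEMAP:
        raise ValueError("sitemap exceeds 50 MB; use a smaller chunk size")
    return data


def build_sitemap_set(urls, base_url, lastmod, size=MAX_URLS_PER_SITEMAP):
    """Return {filename: bytes} for the child sitemaps plus the index.

    Names like 'sitemap-1.xml' and 'sitemap_index.xml' are illustrative
    choices, not protocol requirements. `lastmod` is a W3C date string
    applied to every index entry in this simplified sketch.
    """
    files = {}
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for n, chunk in enumerate(chunk_urls(urls, size), start=1):
        name = f"sitemap-{n}.xml"
        files[name] = build_urlset_bytes(chunk)
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url}/{name}"
        ET.SubElement(entry, "lastmod").text = lastmod
    files["sitemap_index.xml"] = ET.tostring(
        index, encoding="UTF-8", xml_declaration=True)
    return files
```

Only the index file then needs to be submitted; search engines discover the child sitemaps through its <loc> entries.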

Why XML Sitemaps Are Indispensable for SEO

While search engines can indeed discover pages through internal links and backlinks, relying solely on these methods can be insufficient for optimal SEO. XML sitemaps offer a multitude of benefits that make them an indispensable component of any robust SEO strategy:

- Improved Crawl Efficiency: Sitemaps help a website make better use of its "crawl budget", the number of pages a search engine crawler will process on a site within a given timeframe. By explicitly listing important URLs, sitemaps point crawlers directly to valuable content and help them prioritize it over less important or non-indexable pages. This is particularly advantageous for large, dynamic websites where content updates frequently.

- Faster Indexing of New and Updated Content: For websites that publish content regularly, such as news outlets, blogs, or e-commerce stores with frequently changing product listings, sitemaps accelerate the discovery process. Including new or updated pages in the sitemap helps search engines find them sooner, leading to quicker indexing and appearance in search results. Data suggests that content included in a sitemap can be indexed up to 50% faster than content discovered purely through linking.

- Discovery of Orphan Pages: Orphan pages are those that exist on a website but are not linked to from any other internal page. Since crawlers typically follow links, orphan pages can be missed entirely. An XML sitemap acts as a safety net, ensuring these valuable but isolated pages are still discovered and considered for indexing.

- Additional Metadata Signals: The <lastmod> tag is a powerful signal. It informs search engines when a page was last meaningfully updated, helping them determine when to re-crawl the page for fresh content. This is crucial for maintaining content relevance and accuracy in search results.

- Support for Specialized Content: Beyond standard web pages, sitemaps can be extended to include specialized content types like images, videos, or news articles. Image sitemaps, for example, list the image URLs that appear on each page, helping images get discovered for Google Images (Google deprecated the optional caption, title, geo-location, and license image tags in 2022). Video sitemaps can specify details such as a video’s title, description, thumbnail, and duration, improving visibility in video search results.

- Better Understanding of Site Structure: A well-organized sitemap provides search engines with a clear, logical overview of a website’s architecture. This can help algorithms understand the relationships between different sections, content types, and individual pages, contributing to a more accurate representation in search results.

- Indexing Insights Through Search Console: Submitting sitemaps to tools like Google Search Console (GSC) provides invaluable diagnostic data. Webmasters can monitor how many URLs submitted via the sitemap have been discovered and indexed, identify potential crawl issues, and pinpoint indexing errors, allowing for proactive problem-solving.

- Support for Multilingual and Multi-regional Websites: For global websites targeting various languages or regions, XML sitemaps can include hreflang annotations. These annotations tell search engines which alternate language versions of a page exist, helping ensure that users in different locations receive the most appropriate language version of the content.
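To illustrate the hreflang point above, the following Python sketch builds sitemap entries in which every language version lists all of its alternates, including itself, using <xhtml:link rel="alternate"> elements. The example URLs, language codes, and the build_hreflang_urlset name are assumptions; the namespace declarations are written as plain attributes so the output uses the conventional xhtml: prefix.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def build_hreflang_urlset(alternates):
    """Build a <urlset> where each language version lists every version.

    `alternates` maps hreflang codes (e.g. "en", "de", "x-default") to
    absolute URLs. Each distinct URL gets one <url> entry that repeats
    the full set of alternate links, including a link to itself.
    """
    urlset = ET.Element("urlset", {"xmlns": SITEMAP_NS, "xmlns:xhtml": XHTML_NS})
    # dict.fromkeys removes duplicate URLs (e.g. x-default) but keeps order.
    for loc in dict.fromkeys(alternates.values()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        for lang, href in alternates.items():
            ET.SubElement(url, "xhtml:link",
                          rel="alternate", hreflang=lang, href=href)
    return urlset


if __name__ == "__main__":
    tree = build_hreflang_urlset({
        "en": "https://www.example.com/en/",
        "de": "https://www.example.com/de/",
        "x-default": "https://www.example.com/en/",
    })
    print(ET.tostring(tree, encoding="unicode"))
```

Repeating the full set of alternates in every entry reflects the reciprocal-link requirement for hreflang: each version should point to all the others.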

XML Sitemaps and the Future of AI Search

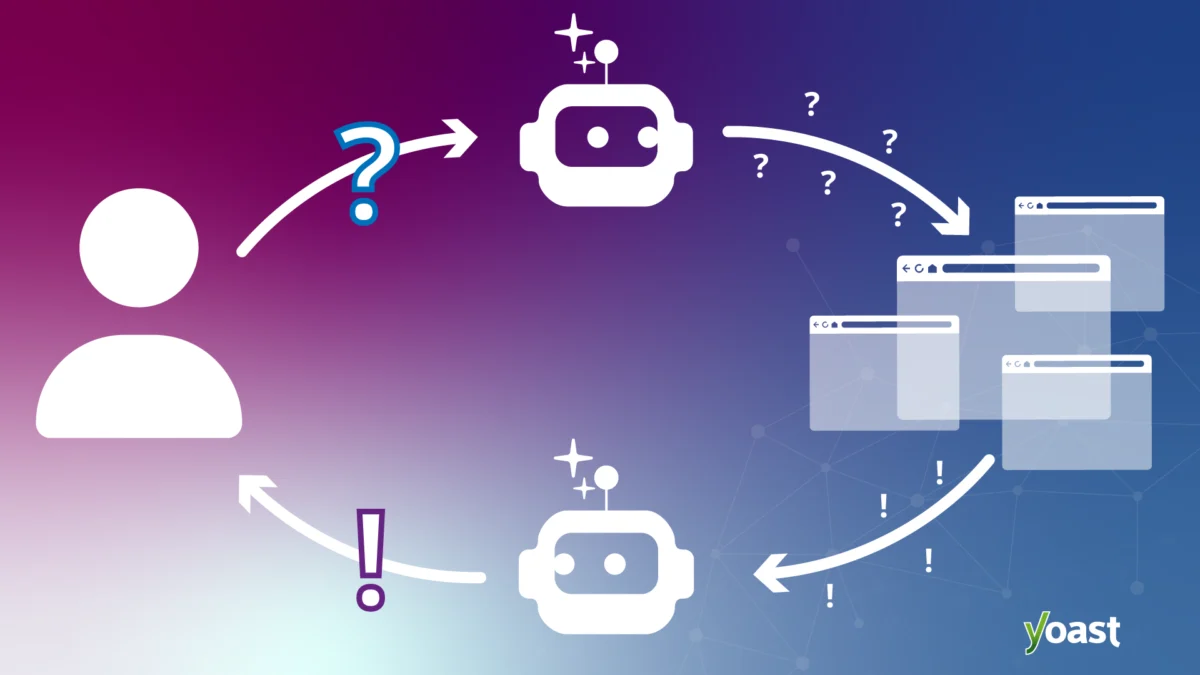

The rise of AI-powered search experiences, such as Google’s AI Overviews, Bing Copilot, and other generative AI tools, has introduced new layers of complexity to content discoverability. While these systems process and synthesize information in advanced ways, many of them still draw on traditional search indexes. This means that for content to be considered by such AI features, it typically must first be crawled and indexed by the underlying search engine.

In this context, XML sitemaps continue to play an indirect but important role. By facilitating the efficient discovery and indexing of content, sitemaps help make a website’s pages available in the search indexes these AI features draw on. An accurate <lastmod> value is particularly useful here, as AI systems often favor fresh, up-to-date information when generating answers. If a page isn’t indexed, or if its freshness isn’t clearly signaled, its chances of contributing to an AI-generated answer diminish.

Therefore, while an XML sitemap alone won’t guarantee content appears in AI answers, it serves as a foundational step. It supports discovery and indexing and signals freshness, all of which help content be considered and potentially used by AI search features. Neglecting sitemap optimization could inadvertently reduce a website’s visibility in this evolving search landscape.

Practical Implementation: Leveraging Automation Tools

Manually creating and maintaining XML sitemaps for dynamic websites, especially those with frequently changing content, is a time-consuming and error-prone endeavor. This is where automation tools become invaluable. Solutions like Yoast SEO, a widely used plugin for WordPress, automatically generate and manage XML sitemaps.

Yoast SEO’s approach ensures that as content is published, updated, or removed, the sitemap index and individual sitemaps are updated automatically. As a result, whenever a search engine fetches the sitemap, it receives the most current overview of the pages intended for crawling and indexing. The plugin organizes sitemaps by content type, creating separate sitemaps for posts, pages, and other public content types, all accessible via a central sitemap index. This automated process significantly reduces the manual workload for webmasters and minimizes the risk of outdated or incomplete sitemaps.

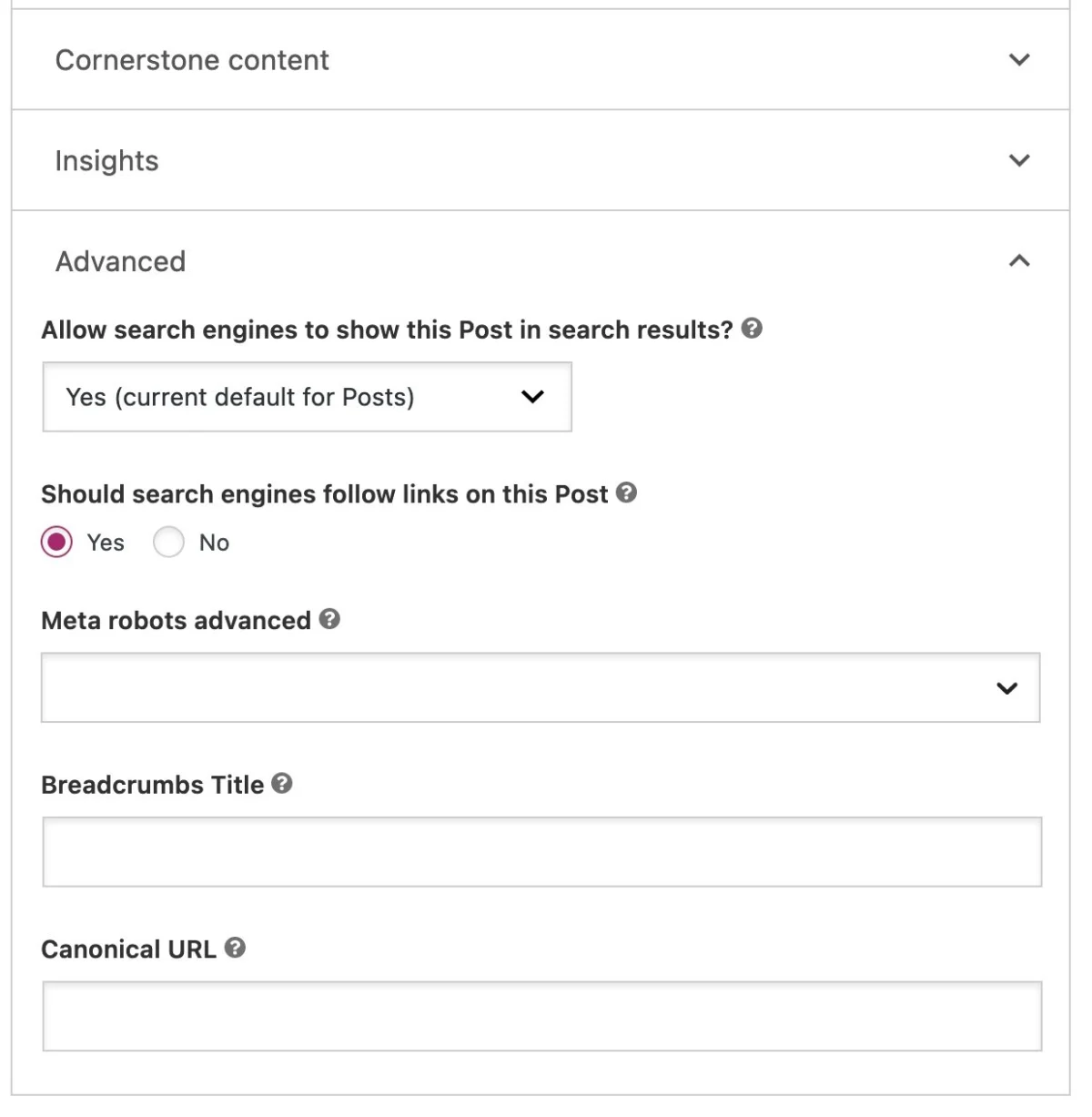

A key advantage of automated sitemap generation is its ability to adhere to SEO best practices by default. Yoast SEO, for example, automatically excludes content marked as "noindex" from the sitemap. This keeps the sitemap clean and focused exclusively on URLs that should appear in search results, so crawlers are not pointed toward pages intentionally hidden from public search.

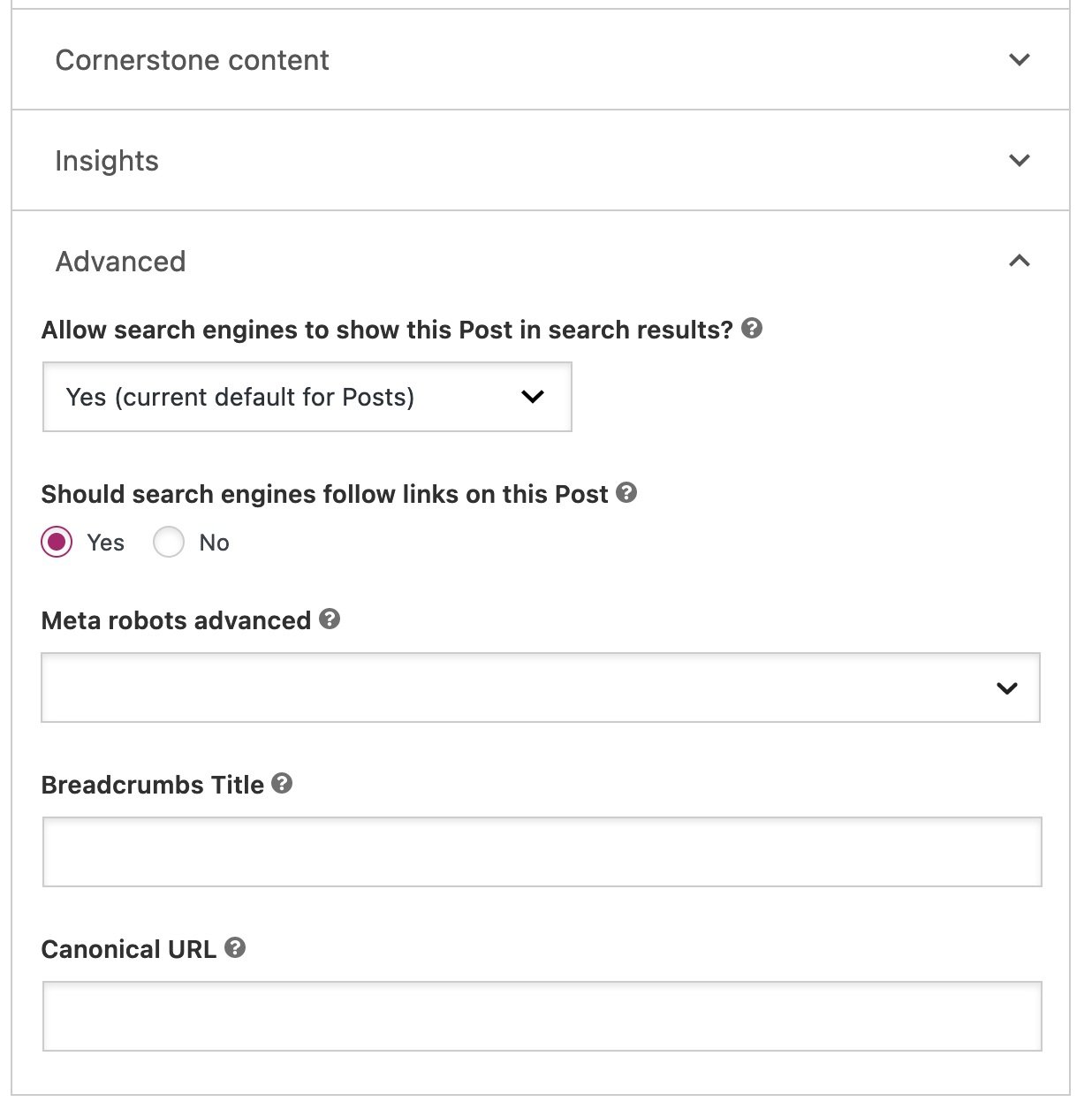

Webmasters also retain granular control over what appears in their sitemap. Through settings within the plugin, individual posts or pages can be easily excluded by toggling the "Allow search engines to show this content in search results?" option. When set to "No," the content is noindexed and automatically removed from the XML sitemap. For more advanced scenarios, developers can utilize filters to customize sitemap behavior, such as limiting the number of URLs per sitemap or programmatically excluding specific content types based on custom logic. This blend of automation and control empowers website owners to maintain an optimized and accurate sitemap effortlessly.

Strategic Content Inclusion: What Belongs in Your XML Sitemap?

The decision of which pages to include in an XML sitemap is strategic and should align with a website’s SEO objectives. The guiding principle is simple: only include high-quality, canonical, and indexable pages that you want visitors to find through search engines.

Pages that should generally be excluded from a sitemap include:

- Noindexed pages: Pages explicitly marked with a noindex tag.

- Duplicate content: Pages that are near-identical copies of other pages.

- Broken pages (404s): Pages that no longer exist.

- Pages blocked by robots.txt: If a page is disallowed by robots.txt, it should also not be in the sitemap.

- Parameter URLs: Pages with URL parameters that create duplicate content issues.

- Thin content: Pages with very little unique or valuable content.

- Thank you pages: Confirmation pages after form submissions, which typically offer no value in search results.

Consider a new blog as an example. Initially, all blog posts and main pages would be prime candidates for inclusion in the sitemap, as their discoverability is paramount. However, certain archive pages, like tag or category archives with only one or two posts, might qualify as "thin content." While these pages could potentially become valuable landing pages in the future, if they currently offer minimal value to a user, it might be strategic to noindex them and exclude them from the sitemap until they are enriched with more content or unique value. The key is to evaluate each page’s relevance and value from a search engine and user perspective. Leaving a page out of the sitemap does not guarantee it won’t be indexed if Google can find it via internal links; therefore, for explicit exclusion, a noindex tag is essential.
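The exclusion rules above can be expressed as a simple filter. This Python sketch assumes a hypothetical Page record holding each URL’s HTTP status, noindex flag, declared canonical URL, and a manual thin-content flag (which could also cover thank-you pages), and uses the standard library’s urllib.robotparser to check robots.txt. Treating every URL with a query string as a duplicate is a simplifying assumption; some sites serve legitimate canonical pages with parameters.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser


@dataclass
class Page:
    # Hypothetical page record; field names are assumptions for this sketch.
    url: str
    status: int           # HTTP status the URL returns
    noindex: bool         # page carries a noindex directive
    canonical: str        # URL declared in rel="canonical"
    thin: bool = False    # flagged by your own content review


def sitemap_candidates(pages, robots_txt, user_agent="Googlebot"):
    """Return the URLs that pass the inclusion rules, in input order."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    kept = []
    for p in pages:
        if p.status != 200:                          # 404s, redirects, errors
            continue
        if p.noindex or p.thin:                      # noindexed or thin pages
            continue
        if p.canonical != p.url:                     # duplicates of another URL
            continue
        if urlsplit(p.url).query:                    # parameter URLs
            continue
        if not robots.can_fetch(user_agent, p.url):  # blocked by robots.txt
            continue
        kept.append(p.url)
    return kept
```

Checking the canonical URL catches many duplicates cheaply; detecting near-identical content without a declared canonical would need a separate comparison step.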

Submitting and Monitoring Your Sitemap

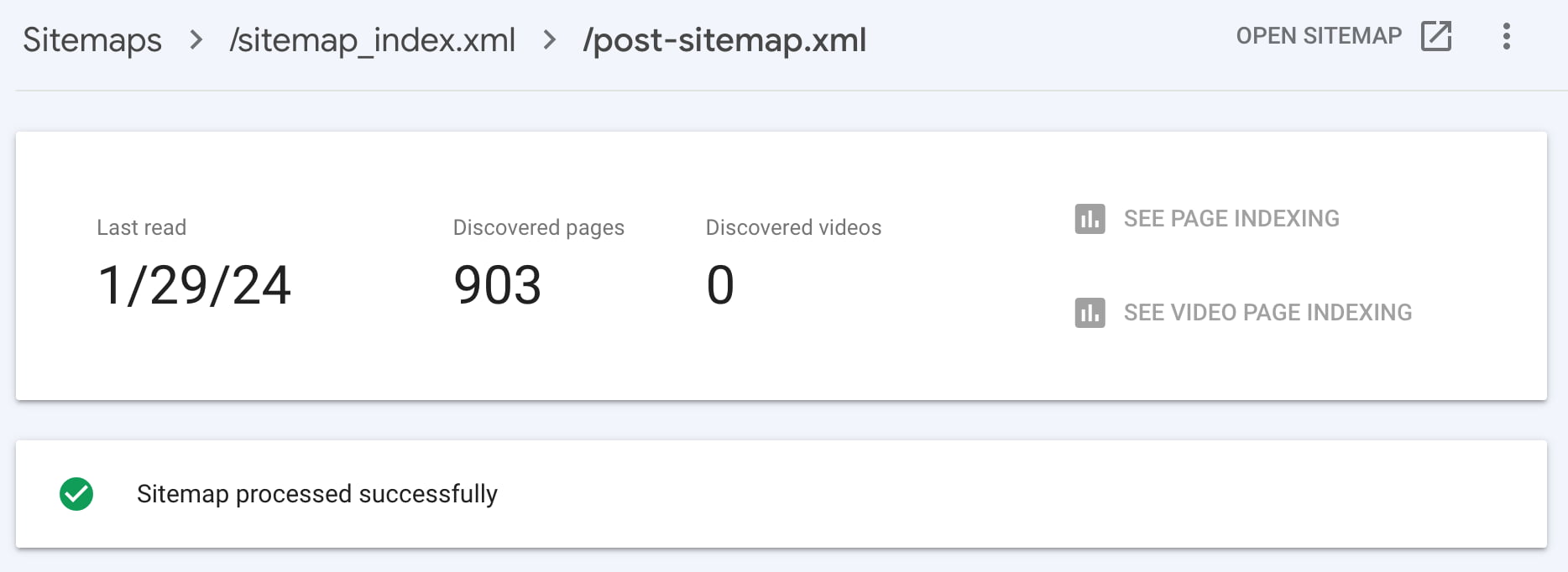

Once an XML sitemap is generated and optimized, the next critical step is to submit it to search engines and monitor its performance. Google Search Console (GSC) is the primary tool for this purpose. Within GSC, webmasters can navigate to the "Sitemaps" section and submit their sitemap index URL (e.g., example.com/sitemap_index.xml). Bing Webmaster Tools offers a similar functionality for submission to Bing.

After submission, GSC’s Sitemaps report shows how many URLs Google discovered in your sitemap, and the Page indexing report can be filtered to that sitemap to show how many of those URLs are indexed. A significant discrepancy between these figures warrants investigation. This could signal underlying issues such as:

- Crawl errors: Pages that Google tried to crawl but encountered an error (e.g., 404, server error).

- Indexing errors: Pages that were crawled but deemed unsuitable for indexing (e.g., noindex tag, duplicate content, thin content).

- Canonicalization issues: Google choosing a different canonical URL than the one submitted.

- Quality issues: Google perceiving the content as low quality or irrelevant.

Monitoring these metrics regularly allows webmasters to identify and rectify problems, ensuring that all important content is discoverable and indexed. If discrepancies arise, further investigation into specific page statuses using GSC’s "Page Indexing" report is recommended, alongside reviewing internal linking strategies to bolster the discoverability of unindexed but important pages.
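One way to quantify the gap described above is to compare the sitemap’s own URL list with a list of indexed URLs you have exported. The Python sketch below assumes you already have that list (for example, from a Page indexing report export); it does not call any Search Console API, and the function names are illustrative.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_bytes):
    """Extract every <loc> value from a <urlset> sitemap."""
    root = ET.fromstring(xml_bytes)
    return [(loc.text or "").strip()
            for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def indexing_gap(xml_bytes, indexed_urls):
    """Return (share of sitemap URLs indexed, URLs not yet indexed).

    `indexed_urls` is any iterable of URLs you know to be indexed,
    taken from your own export; an empty sitemap counts as fully covered.
    """
    submitted = sitemap_locs(xml_bytes)
    indexed = set(indexed_urls)
    missing = [u for u in submitted if u not in indexed]
    if not submitted:
        return 1.0, missing
    return (len(submitted) - len(missing)) / len(submitted), missing
```

The returned list of missing URLs is a starting point for the per-page checks in the Page indexing report rather than a diagnosis in itself.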

Addressing Common Concerns: XML Sitemap FAQs

What happens if Google Search Console reports errors in an XML sitemap?

An XML sitemap with reported errors indicates that Google encountered issues while processing the file. These errors could range from syntax issues (malformed XML) to accessibility problems (sitemap not reachable) or content issues (e.g., including noindex pages). It is crucial to review the specific error messages provided in GSC. Common fixes include validating the XML syntax, ensuring the sitemap URL is correct and accessible, and verifying that all listed URLs are valid and should be indexed. Promptly addressing these errors ensures the sitemap effectively serves its purpose.
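Before resubmitting, a quick local check can catch several common syntax and content problems. The Python sketch below (standard library only) flags malformed XML, a wrong root element or namespace, missing or relative <loc> values, and files over the 50,000-URL limit. The check_sitemap name is hypothetical, and this is a partial check under these assumptions, not a substitute for the errors GSC reports or full schema validation.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def check_sitemap(xml_bytes, max_urls=50_000):
    """Return a list of human-readable problems found in a <urlset> file."""
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return [f"malformed XML: {exc}"]
    # A missing or wrong namespace shows up as an unexpected root tag.
    if root.tag != f"{{{NS}}}urlset":
        return [f"unexpected root element {root.tag!r}"]
    problems = []
    urls = root.findall(f"{{{NS}}}url")
    if len(urls) > max_urls:
        problems.append(f"{len(urls)} URLs exceeds the {max_urls} limit")
    for i, url in enumerate(urls, start=1):
        loc = url.find(f"{{{NS}}}loc")
        if loc is None or not (loc.text or "").strip():
            problems.append(f"entry {i}: missing <loc>")
            continue
        parts = urlsplit(loc.text.strip())
        if parts.scheme not in ("http", "https") or not parts.netloc:
            problems.append(f"entry {i}: <loc> is not an absolute URL")
    return problems
```

An empty list means only that these particular checks passed; it says nothing about whether the listed pages are indexable.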

How can I check if a website has an XML sitemap?

The most common way to check for an XML sitemap is by appending /sitemap.xml to the website’s root domain (e.g., https://www.example.com/sitemap.xml). Many websites, especially those using SEO plugins like Yoast SEO, will redirect this URL to a sitemap index, often found at /sitemap_index.xml. If neither of these works, check the website’s robots.txt file (e.g., https://www.example.com/robots.txt), as the sitemap URL is often declared there using the Sitemap: directive.
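The lookup order described above can be sketched in Python using the standard library’s RobotFileParser, whose site_maps() method (Python 3.8+) returns the URLs declared with Sitemap: directives. The sketch assumes you have already downloaded robots.txt; the sitemap_locations name is illustrative, and it only builds the list of candidate URLs to try without fetching them.

```python
from urllib.robotparser import RobotFileParser


def sitemap_locations(origin, robots_txt):
    """List sitemap URLs to try for a site, declared ones first.

    `origin` is the scheme and host, e.g. "https://www.example.com";
    `robots_txt` is the already-downloaded text of /robots.txt.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    declared = parser.site_maps() or []  # None when no Sitemap: lines exist
    fallbacks = [f"{origin}/sitemap.xml", f"{origin}/sitemap_index.xml"]
    # dict.fromkeys drops duplicates while preserving order.
    return list(dict.fromkeys(declared + fallbacks))
```

Putting declared URLs first reflects that a Sitemap: directive states the site owner’s intent, while /sitemap.xml and /sitemap_index.xml are only common conventions.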

What is the best way to update an XML sitemap?

While manual updates or static sitemap generators exist, they are inefficient for dynamic websites. The most effective and recommended method is to use an SEO plugin or content management system (CMS) feature that automatically generates and updates the XML sitemap in real-time. For WordPress users, plugins like Yoast SEO handle this process seamlessly. When new content is published, existing content is updated, or pages are removed, the sitemap is automatically adjusted, ensuring it always reflects the current state of the website.

Do <priority> and <changefreq> tags still matter in XML sitemaps?

For Google, largely no. Google has stated that it ignores the <priority> and <changefreq> values in XML sitemaps. In the past, these tags were intended to suggest the relative importance of a page and how frequently its content was expected to change. Google instead determines these factors from other signals, such as internal linking and how often a page’s content actually changes. Webmasters should focus their efforts on the <loc> and <lastmod> tags, as an accurate <lastmod> remains a valuable signal for content freshness.

Conclusion

XML sitemaps are far from a mere technical formality; they are a fundamental component of effective search engine optimization. By providing a direct, machine-readable roadmap of a website’s critical content, they significantly enhance discoverability, accelerate indexing, and improve crawl efficiency. In an increasingly complex digital landscape, where content must not only cater to traditional search algorithms but also inform emerging AI-powered agents, the strategic implementation and diligent maintenance of XML sitemaps are more crucial than ever. Most websites, regardless of size or age, benefit from a well-structured sitemap that clearly communicates their most valuable pages to the world’s leading search engines. Webmasters are encouraged to regularly review and optimize their XML sitemaps, using tools like Google Search Console to confirm that their important pages are being discovered and indexed.