Your data is only as powerful as it is trustworthy, especially in the age of artificial intelligence. In an increasingly data-driven world, the integrity of information is no longer merely a technical concern; it has become a foundational pillar of business strategy, competitive advantage, and operational efficiency. Organizations worldwide are grappling with an explosion of data from myriad channels and systems, which makes the disciplined practice of data quality management (DQM) more critical than ever. DQM encompasses the practices, tools, and processes that ensure data is accurate, complete, consistent, timely, unique, and valid, providing a reliable foundation for sound decisions and for advanced technologies such as AI.

The Foundational Role of Data Quality in the AI Era

The rapid proliferation of artificial intelligence, machine learning, and advanced analytics has dramatically raised the stakes for data quality. AI models learn from the data they are fed. If that data is flawed, biased, or incomplete, their outputs (predictive insights, automated decisions, personalized customer interactions) will inherit and perpetuate those imperfections: the familiar "garbage in, garbage out" problem. This makes DQM not just a best practice but a prerequisite for any enterprise that wants to use AI for innovation and growth.

Data quality management extends beyond simple data cleansing; it is a holistic discipline covering everything from initial data profiling and validation to ongoing monitoring, enrichment, and robust governance frameworks. It transforms raw, potentially chaotic information into a trusted organizational asset. Without a solid foundation of high-quality data, businesses risk building their entire operational and strategic edifice on shifting sands, jeopardizing everything from customer satisfaction to regulatory compliance and market position.

At its heart, DQM focuses on six core elements that collectively determine data trustworthiness:

- Accuracy: Ensuring data reflects the true state of affairs, free from errors.

- Completeness: Verifying that all necessary data points are present.

- Consistency: Maintaining uniformity across different systems and datasets.

- Timeliness: Confirming data is up-to-date and available when needed.

- Uniqueness: Eliminating duplicate records to provide a single, definitive view.

- Validity: Ensuring data conforms to defined formats, types, and business rules.

These qualities are the benchmarks against which data’s reliability is measured. A shortfall in any one area can compromise the utility of an entire dataset, leading to misinformed strategies and unforeseen operational bottlenecks.

The Insidious Spread of Poor Data: A CRM Case Study

A prime example of how poor data quality can propagate and inflict widespread damage is the Customer Relationship Management (CRM) system. Designed to be the definitive "single source of truth" for customer information, a CRM is constantly fed by diverse data streams: manual entries, system integrations, bulk imports, and automated capture tools. Each of these entry points is a potential source of errors.

Consider the ripple effect of inaccurate or incomplete CRM data:

- Skewed Customer Segmentation: Marketing campaigns may target the wrong demographics, leading to wasted spend and low conversion rates.

- Slowed Operations: Sales representatives waste valuable time chasing outdated contacts, dealing with duplicate records, or struggling to find complete customer histories. This directly impacts productivity and morale.

- Corrupted Reporting and Analytics: Management reports and analytics forecasts become unreliable, leading to misinformed strategic decisions. If leaders are basing investments or market entries on flawed insights, the competitive liability can be immense.

- Damaged Customer Relationships: Incorrect contact information, duplicate profiles, or inconsistent interaction histories lead to disjointed customer experiences, repetitive inquiries, and a perceived lack of understanding, ultimately eroding trust and loyalty.

When businesses operate at scale, these aren’t minor inconveniences. They become significant competitive handicaps. The goal of DQM is not merely to react to data problems but to proactively prevent them, safeguarding against decision-making failures and compliance breaches. Clean data is not a luxury; it is the fundamental distinction between a system that empowers a business and one that actively undermines it.

Unmasking the Hidden Costs: Business Impact of Flawed Data

The financial and operational ramifications of poor data quality are pervasive and often underestimated. While some costs are direct and easily quantified, others are subtle, eroding efficiency and opportunity over time. Validity’s State of CRM Data Management in 2025 report found that 37 percent of teams lose revenue as a direct consequence of poor data quality. Significant as it is, this figure likely represents only a fraction of the total impact.

Beyond direct revenue loss, the business impact includes:

- Operational Inefficiencies: By some estimates, employees spend 30-40% of their time on non-value-added work caused by poor data, such as verifying information, merging duplicates, and correcting errors. At that rate, a substantial share of an organization’s workforce is effectively diverted to manual data remediation.

- Wasted Marketing Spend: Inaccurate customer data sends campaigns to invalid contacts, resulting in bounced emails, misdirected advertisements, and ineffective personalization. Every bad lead consumes budget with no chance of yielding results, and those costs add up quickly.

- Suboptimal Decision-Making: Flawed data leads to flawed insights. Strategic decisions about product development, market expansion, resource allocation, and competitive positioning are compromised, often resulting in missed opportunities or costly missteps. Gartner, for example, has estimated that poor data quality costs organizations an average of $15 million annually, primarily through lost productivity and erroneous decisions.

- Eroded Customer Experience and Churn: Missing or incorrect customer information leads to slow service, irrelevant communications, and awkward interactions. Imagine a sales rep contacting a loyal customer as if they were a new lead, or a support agent unable to access a customer’s full interaction history. Such experiences damage brand perception, reduce customer satisfaction, and accelerate churn.

- Compliance Risks and Fines: Data privacy regulations like GDPR and CCPA mandate accurate and well-managed personal data. Non-compliance due to poor data quality can lead to substantial fines, legal challenges, and severe reputational damage.

- Failed AI and Automation Projects: As noted earlier, AI systems are only as intelligent as their training data. Poor data quality can introduce bias, reduce predictive accuracy, and undermine automated workflows, wasting significant investments in AI technologies.

The Challenge of Detection: Why Bad Data Persists

Data quality issues are often insidious, developing gradually and silently. A few scattered errors may not be immediately obvious, but as problems accumulate, the underlying cracks in the data infrastructure become increasingly apparent. This challenge is further exacerbated by the exponential growth in data volume, velocity, and variety. Analyzing vast datasets to pinpoint quality issues is a major obstacle, with CDO Trends noting that 57 percent of data practitioners identify maintaining data quality as their biggest challenge during data analysis.

Several factors contribute to the difficulty in detecting data quality problems:

- Data Silos: Information is often fragmented across different departmental systems, making a holistic view difficult and fostering inconsistencies.

- Lack of Data Ownership: Without clear accountability for data quality within specific domains, issues can go unaddressed.

- Complex Data Ecosystems: Modern enterprises use a multitude of integrated applications, each potentially introducing or altering data, making it hard to trace the origin of errors.

- Manual Entry Errors: Human mistakes during data input are a perennial source of inaccuracy and incompleteness.

- Insufficient Data Profiling Tools: Many organizations lack the sophisticated tools needed to automatically scan, analyze, and report on data quality metrics at scale.

- Dynamic Data Landscapes: Data is constantly changing. What is accurate today might be outdated tomorrow, requiring continuous monitoring.

Measuring What Matters: Key Data Quality Metrics

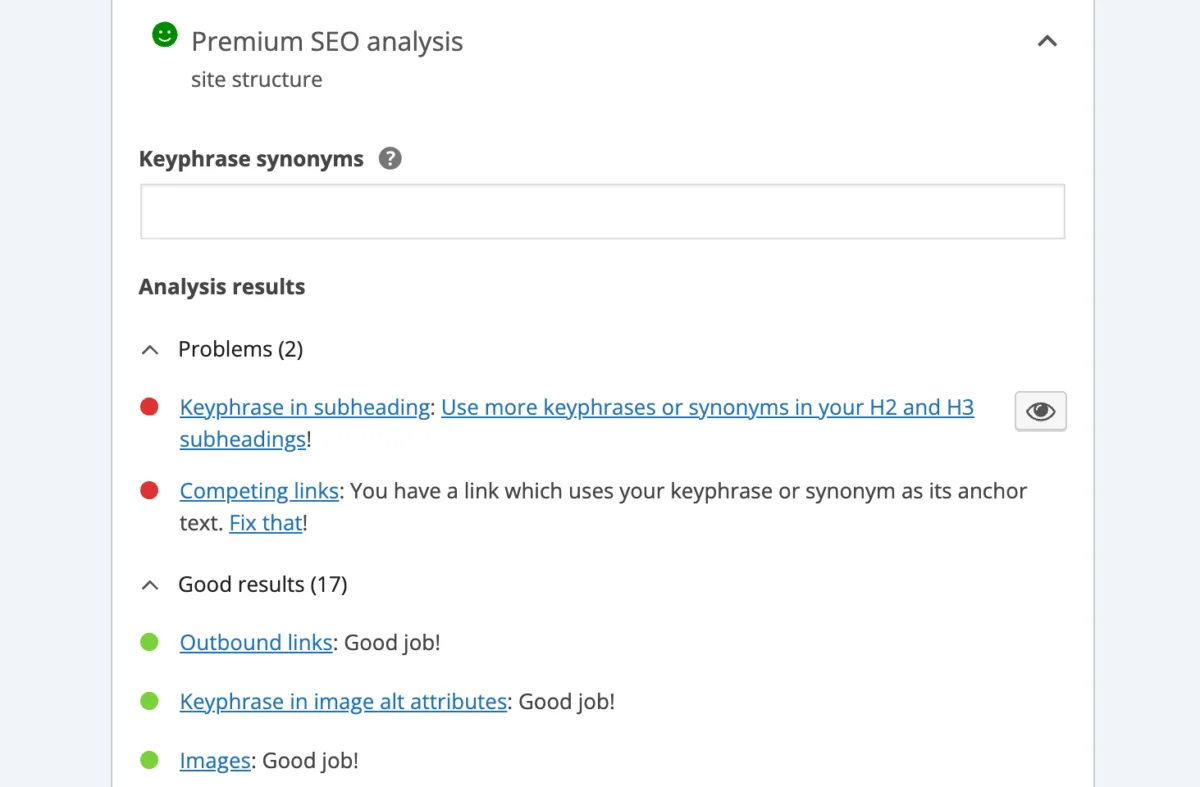

To effectively oversee and improve data quality, organizations must move beyond anecdotal evidence and track concrete metrics. These five key data quality metrics provide actionable insights into the trustworthiness and utility of your data, guiding improvement efforts:

- Data Accuracy Rate: Measures the percentage of data records that are correct and reflect reality. This often involves comparing data against a trusted source or validating against business rules.

- Data Completeness Rate: Indicates the percentage of required data fields that contain values, as opposed to being blank or null.

- Data Consistency Rate: Evaluates the degree to which data values are uniform across different systems or datasets where they should be identical.

- Data Timeliness Rate: Assesses how current the data is, typically as the percentage of records updated within the window the business requires, or as the average age of records since their last update.

- Data Uniqueness Rate: Measures the percentage of records that are distinct; a rate below 100 percent signals duplicate entries.

By regularly monitoring these metrics, businesses can gain a clear understanding of their data’s health, identify areas of concern, and quantify the impact of their DQM initiatives.
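To make these definitions concrete, here is a minimal Python sketch of how each rate might be computed over records held as plain dictionaries. The field names (`email`, `country`, `last_updated`), the matching keys, the trusted reference table, and the 90-day freshness window are hypothetical assumptions for illustration; real definitions should follow the data quality requirements agreed with stakeholders.

```python
from datetime import datetime, timedelta

# Records are plain dicts. Field names such as "email", "country", and
# "last_updated" are hypothetical; substitute your own schema.


def _filled(value):
    """Treat None and blank strings as missing values."""
    return value is not None and str(value).strip() != ""


def completeness_rate(records, required_fields):
    """Percentage of required field slots that actually contain a value."""
    slots = len(records) * len(required_fields)
    if slots == 0:
        return 100.0
    filled = sum(_filled(r.get(f)) for r in records for f in required_fields)
    return 100.0 * filled / slots


def uniqueness_rate(records, key):
    """Percentage of keyed records that are distinct after trimming and lowercasing."""
    keys = [str(r[key]).strip().lower() for r in records if _filled(r.get(key))]
    if not keys:
        return 100.0
    return 100.0 * len(set(keys)) / len(keys)


def timeliness_rate(records, now, max_age):
    """Percentage of records whose last update falls within max_age of now."""
    if not records:
        return 100.0
    fresh = sum(now - r["last_updated"] <= max_age for r in records)
    return 100.0 * fresh / len(records)


def consistency_rate(system_a, system_b, key, field):
    """Percentage of records present in both systems whose field values agree."""
    b_by_key = {r[key]: r for r in system_b}
    shared = [r for r in system_a if r[key] in b_by_key]
    if not shared:
        return 100.0
    agree = sum(r.get(field) == b_by_key[r[key]].get(field) for r in shared)
    return 100.0 * agree / len(shared)


def accuracy_rate(records, reference, key, field):
    """Percentage of records whose field matches a trusted reference source."""
    checked = [r for r in records if r[key] in reference]
    if not checked:
        return 100.0
    correct = sum(r.get(field) == reference[r[key]] for r in checked)
    return 100.0 * correct / len(checked)


# Illustrative data only: a CRM extract, an ERP extract, and a trusted reference.
now = datetime(2025, 1, 31)
crm = [
    {"id": 1, "email": "ana@example.com", "country": "US", "last_updated": datetime(2025, 1, 30)},
    {"id": 2, "email": "ANA@example.com ", "country": "", "last_updated": datetime(2024, 6, 1)},
    {"id": 3, "email": "bo@example.com", "country": "DE", "last_updated": datetime(2025, 1, 2)},
]
erp = [{"id": 1, "country": "US"}, {"id": 3, "country": "FR"}]
reference_country = {1: "US", 2: "US", 3: "DE"}

print(f"completeness: {completeness_rate(crm, ('email', 'country')):.1f}%")  # 83.3%
print(f"uniqueness:   {uniqueness_rate(crm, 'email'):.1f}%")  # 66.7%
print(f"timeliness:   {timeliness_rate(crm, now, timedelta(days=90)):.1f}%")  # 66.7%
print(f"consistency:  {consistency_rate(crm, erp, 'id', 'country'):.1f}%")  # 50.0%
print(f"accuracy:     {accuracy_rate(crm, reference_country, 'id', 'country'):.1f}%")  # 66.7%
```

Note that the uniqueness check only catches the duplicate because the key is normalized first; comparing raw values would treat `"ANA@example.com "` as a different customer.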

Building a Robust Data Quality Strategy: A Phased Approach

Implementing a successful data quality management program requires a structured, strategic approach, moving beyond ad-hoc fixes to systemic improvements. While every organization’s journey is unique, a general timeline and set of phases can guide the process:

Phase 1: Assessment and Discovery (Weeks 1-4)

- Conduct a Comprehensive Data Audit: Begin by profiling existing data to identify current quality issues (duplicates, inaccuracies, inconsistencies). This involves analyzing data sources, mapping data flows, and identifying critical data elements. Tools that can quickly scan and report on data health are invaluable here.

- Define Data Quality Requirements: Engage with key stakeholders across departments (sales, marketing, operations, finance) to understand their data needs and the impact of poor data on their functions. Clearly define what "good quality data" means for different data types and business processes.

- Identify Root Causes: Determine why data quality issues are occurring. Is it manual entry, faulty integrations, lack of validation rules, or inconsistent processes?
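A data audit usually starts with profiling. The sketch below, written in plain Python without any profiling library, shows the kind of per-field summary such tools produce: missing values, distinct values, and the most common value "shapes", which quickly expose inconsistent formats. The record layout and the `phone` field are hypothetical; dedicated tools compute far richer statistics.

```python
import re
from collections import Counter


def value_pattern(value):
    """Reduce a value to its shape: letters become 'A', digits become '9'."""
    shape = re.sub(r"[A-Za-z]", "A", str(value))
    return re.sub(r"[0-9]", "9", shape)


def profile_field(records, field, top_n=3):
    """Summarize one field: missing count, distinct count, common values and shapes."""
    values = [r.get(field) for r in records]
    present = [v for v in values if v is not None and str(v).strip() != ""]
    return {
        "field": field,
        "rows": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        "top_values": Counter(present).most_common(top_n),
        "top_patterns": Counter(value_pattern(v) for v in present).most_common(top_n),
    }


# Hypothetical contact records with inconsistently formatted phone numbers.
contacts = [
    {"phone": "555-0100"},
    {"phone": "555-0101"},
    {"phone": "(555) 0102"},
    {"phone": ""},
    {},
]
for key, value in profile_field(contacts, "phone").items():
    print(f"{key}: {value}")
```

Here the pattern counts immediately show two competing phone formats (`999-9999` and `(999) 9999`), the kind of inconsistency a root-cause review would then trace back to a specific entry point or integration.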

Phase 2: Strategy and Governance (Weeks 5-8)

- Establish Data Quality Standards and Rules: Based on the audit and requirements, define clear, measurable data quality rules and standards. This includes naming conventions, data formats, acceptable values, and validation logic.

- Develop a Data Governance Framework: Appoint data stewards responsible for specific data domains. Define roles, responsibilities, and accountability for data quality across the organization. This framework should outline policies for data creation, usage, storage, and retirement.

- Select Appropriate Tools: Evaluate and select data quality management tools (like DemandTools) that can automate profiling, cleansing, validation, and monitoring tasks.
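Data quality rules are easiest to review and maintain when they are expressed as data rather than scattered through application code. The following Python sketch shows one way to do that. The `Rule` structure, the field names, the email pattern, and the allowed country and stage values are hypothetical examples, not a standard. The same rulebook can also serve as a gate at the point of data entry.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    field: str
    description: str
    check: Callable[[object], bool]  # returns True when the value passes


def required(value):
    return value is not None and str(value).strip() != ""


def matches(pattern):
    """Format rule; blank values are left to the `required` rule."""
    regex = re.compile(pattern)
    return lambda value: not required(value) or regex.fullmatch(str(value)) is not None


def one_of(*allowed):
    """Allowed-values rule; blank values are left to the `required` rule."""
    return lambda value: not required(value) or value in allowed


# Hypothetical rulebook. The email pattern is deliberately loose, not RFC 5322.
RULES = [
    Rule("email", "email is required", required),
    Rule("email", "email must look like name@domain.tld",
         matches(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}")),
    Rule("country", "country must be a supported ISO 3166-1 alpha-2 code",
         one_of("US", "GB", "DE", "FR")),
    Rule("stage", "stage must be a defined pipeline stage",
         one_of("Lead", "Qualified", "Customer")),
]


def validate(record, rules=RULES):
    """Return the description of every rule the record violates."""
    return [rule.description for rule in rules if not rule.check(record.get(rule.field))]


def accept(record, rules=RULES):
    """Point-of-entry gate: refuse the record if any rule fails."""
    errors = validate(record, rules)
    if errors:
        raise ValueError("; ".join(errors))
    return record


print(validate({"email": "ana@example.com", "country": "US", "stage": "Lead"}))  # []
print(validate({"email": "not-an-email", "country": "XX", "stage": "Lead"}))
```

Keeping "is it present?" and "is it well-formed?" as separate rules means each violation message points at exactly one problem, which makes the resulting reports easier for data stewards to act on.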

Phase 3: Implementation and Automation (Months 2-6)

- Data Cleansing and Remediation: Execute initial data cleansing efforts using selected tools to correct historical errors, merge duplicates, standardize formats, and fill in missing information. Prioritize critical datasets first.

- Implement Validation Rules: Embed data validation rules at the point of data entry in all relevant systems (e.g., CRM, ERP) to prevent bad data from entering the system.

- Automate Data Quality Processes: Configure automated routines for ongoing deduplication, standardization, enrichment, and monitoring. This shifts DQM from a reactive, manual task to a proactive, automated discipline.

- Integrate DQM into Workflows: Ensure data quality checks are built into routine business processes, such as lead generation, customer onboarding, and data migrations.
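Initial remediation typically pairs standardization with duplicate merging. The Python sketch below standardizes a few common fields and then merges records that share a normalized email address, using one simple survivorship rule: for each field, the most recently updated non-blank value wins. The field names, the country lookup table, the `updated` date field, and the survivorship rule itself are illustrative assumptions; dedicated tools typically let you configure their own matching and merge rules.

```python
from datetime import date

# Hypothetical lookup table used to standardize free-text country values.
COUNTRY_CODES = {"united states": "US", "usa": "US", "u.s.": "US",
                 "germany": "DE", "deutschland": "DE"}


def standardize(record):
    """Return a copy of the record with common formats normalized."""
    r = dict(record)
    if r.get("email"):
        r["email"] = r["email"].strip().lower()
    if r.get("name"):
        r["name"] = " ".join(r["name"].split()).title()  # naive: "McDonald" -> "Mcdonald"
    if r.get("country"):
        c = r["country"].strip()
        r["country"] = COUNTRY_CODES.get(c.lower(), c.upper())
    if r.get("phone"):
        r["phone"] = "".join(ch for ch in r["phone"] if ch.isdigit())
    return r


def merge_duplicates(records, key="email"):
    """Group standardized records by key and merge each group into one survivor."""
    groups, unmatched = {}, []
    for r in map(standardize, records):
        if r.get(key):
            groups.setdefault(r[key], []).append(r)
        else:
            unmatched.append(r)  # no key: cannot be matched safely, keep as-is
    merged = []
    for group in groups.values():
        group.sort(key=lambda r: r["updated"])  # oldest first
        survivor = {}
        for r in group:  # newer records overwrite older values...
            for field, value in r.items():
                if value not in (None, ""):  # ...but never with blanks
                    survivor[field] = value
        merged.append(survivor)
    return merged + unmatched


rows = [
    {"email": " Ana@Example.com", "name": "ana  lopez", "country": "USA",
     "phone": "(555) 010-0100", "updated": date(2024, 1, 5)},
    {"email": "ana@example.com", "name": "Ana Lopez", "country": "",
     "phone": "", "updated": date(2025, 1, 10)},
    {"email": "bo@example.com", "name": "bo chen", "country": "germany",
     "phone": None, "updated": date(2024, 3, 1)},
]
for record in merge_duplicates(rows):
    print(record)
```

The merged Ana record keeps the country and phone number from the older entry because the newer entry left those fields blank; a "newest record wins outright" rule would have silently discarded them.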

Phase 4: Monitoring and Continuous Improvement (Ongoing)

- Continuous Monitoring and Reporting: Establish dashboards and reports that track key data quality metrics in real time. Regularly review these metrics to identify new issues or trends.

- Regular Audits and Reviews: Periodically re-audit data sources and review governance policies to adapt to evolving business needs and data landscapes.

- Foster a Data-Driven Culture: Educate employees on the importance of data quality and their role in maintaining it. Promote data literacy and accountability across the organization.

- Feedback Loops: Create mechanisms for users to report data quality issues and for the DQM team to address them promptly.
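Continuous monitoring can be as simple as comparing each run's metric snapshot with agreed targets and with the previous run. This Python sketch assumes the metrics are already computed as percentages; the metric names, the thresholds, and the two-point drop tolerance are hypothetical values to be replaced with your own requirements.

```python
# Hypothetical targets, in percent; replace with your own requirements.
THRESHOLDS = {"completeness": 95.0, "uniqueness": 99.0, "timeliness": 90.0}


def check_metrics(current, previous=None, thresholds=THRESHOLDS, max_drop=2.0):
    """Return alert messages for missing metrics, misses against target, and sharp drops."""
    alerts = []
    for metric, target in thresholds.items():
        value = current.get(metric)
        if value is None:
            alerts.append(f"{metric}: not measured")
            continue
        if value < target:
            alerts.append(f"{metric}: {value:.1f}% is below target {target:.1f}%")
        if previous and metric in previous and previous[metric] - value > max_drop:
            alerts.append(f"{metric}: dropped {previous[metric] - value:.1f} points since last run")
    return alerts


last_week = {"completeness": 99.0, "uniqueness": 97.6, "timeliness": 91.0}
this_week = {"completeness": 96.0, "uniqueness": 97.5, "timeliness": 91.0}
for alert in check_metrics(this_week, last_week):
    print(alert)
# completeness: dropped 3.0 points since last run
# uniqueness: 97.5% is below target 99.0%
```

Checking the trend as well as the target matters: completeness is still above its 95 percent target here, but a three-point drop in one week is an early signal that something upstream, such as a new integration, has started producing incomplete records.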

Data Quality Management in Action: Real Customer Results

The theoretical benefits of data quality management are substantial, but its true value is best demonstrated through practical application and tangible results. Organizations leveraging robust DQM solutions like DemandTools have transformed their data challenges into competitive advantages.

- BARBRI: Merging 6,000 Duplicate Records a Month, Automatically. System integrations can inadvertently flood CRMs with duplicate records, leading to inefficiencies. BARBRI faced this challenge, with sales representatives repeatedly contacting the same prospects. By implementing DemandTools, the company automated the entire data cleansing process, cutting a task that once took days of manual work down to minutes. This freed the team to focus on sales enablement, significantly boosting productivity and eliminating wasted effort.

- Thornburg Investment Management: 120 Hours Saved Every Week. Thornburg battled a constant influx of duplicates and unstandardized data from various third-party sources, and managing it manually required immense effort. After integrating DemandTools, the firm recovered 120 hours of manual data management time per week. This efficiency gain was akin to adding a full-time developer to the team without the overhead, demonstrating the profound impact of automation on operational capacity.

- 908 Devices: Making Data Quality a Competitive Advantage. At 908 Devices, Salesforce data is the lifeblood of the business, informing everything from daily sales activities to executive-level strategic decisions made in platforms like Tableau. Recognizing data quality as paramount, the company made DemandTools the backbone of its data strategy. The solution handles deduplication, standardization, and record management at scale, enabling the team to operate with agility and with confidence that its underlying data is accurate and reliable.

These examples underscore a crucial point: DQM is not merely about fixing problems but about unlocking potential, optimizing resources, and creating a strategic differentiator in a data-saturated market.

The Future of Data Quality: AI as Both Driver and Beneficiary

The symbiotic relationship between AI and data quality is becoming increasingly apparent. AI is not just making DQM more urgent; it is also offering new avenues for improving it. Advanced AI and machine learning algorithms can be deployed to:

- Automate Data Profiling: AI can quickly identify patterns, anomalies, and potential quality issues in massive datasets, far beyond human capacity.

- Predict Data Errors: Machine learning models can learn from historical data errors to predict where new errors are likely to occur, enabling proactive prevention.

- Contextual Data Enrichment: AI can intelligently enrich data by inferring missing values or adding relevant external information based on context.

- Smart Deduplication: AI-powered matching algorithms can identify duplicates even with variations in spelling or format, offering more sophisticated cleansing capabilities.

This shift is transforming data quality from a reactive, manual chore into a real-time, proactive discipline. As organizations rely more on AI to drive decisions and automate workflows, the margin for error in underlying data shrinks dramatically. This pushes DQM to the forefront of strategic planning, where tools that can manage data quality at scale are indispensable for staying ahead of problems before they surface.
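Production matching engines, AI-based or otherwise, are considerably more sophisticated, but the core idea of tolerant duplicate matching can be illustrated with a simple non-ML baseline. The Python sketch below uses the standard library's `difflib.SequenceMatcher` to score pairs of normalized names. This is plain string similarity, not a learned model, and the 0.85 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(name):
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in name)
    return " ".join(cleaned.split())


def similarity(a, b):
    """Similarity ratio in [0, 1] between two normalized names."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def likely_duplicates(names, threshold=0.85):
    """Return (i, j, score) for every pair of names scoring at or above threshold."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(names), 2):
        score = similarity(a, b)
        if score >= threshold:
            pairs.append((i, j, round(score, 3)))
    return pairs


names = ["Jon Smith", "John Smith", "Acme Corp.", "ACME Corp", "Globex Inc"]
print(likely_duplicates(names))  # [(0, 1, 0.947), (2, 3, 1.0)]
```

Two limitations show why smarter matching is valuable. First, comparing every pair is quadratic in the number of records, so at scale matchers usually group records into blocks first (for example, by postal code or email domain) and compare only within each block. Second, "Acme Corp." and "Acme Corporation" score only 0.72 here, below the threshold, because character overlap alone cannot recognize abbreviations.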

Strategic Imperative: Partnering for Data Excellence

Data cleanliness is no longer an option but a strategic imperative. Quality data is essential for efficient operations, accurate reporting, regulatory compliance, and ultimately, superior business outcomes. Organizations that invest in mature data quality practices gain a significant competitive advantage: they move faster, serve customers better, optimize resource allocation, and innovate with greater confidence.

For over two decades, solutions like DemandTools from Validity have empowered thousands of organizations to take control of their Salesforce data. By providing flexible, powerful capabilities to standardize fields, eliminate duplicates, update records, and prevent bad data from entering systems, such tools ensure data integrity at scale. This allows businesses of all sizes to clean, manage, and maintain high-quality data that drives better operations, more intelligent decisions, and stronger customer relationships.

In an era where data is at the core of every business decision, safeguarding its quality is paramount. It is the difference between a system that propels your business forward and one that holds it back. By embracing a comprehensive data quality management strategy and leveraging purpose-built tools, organizations can build a robust, trustworthy data foundation, ensuring their data assets are ready for the challenges and opportunities of the AI age.