The digital landscape of 2026 presents a stark contrast between a vast majority of static websites and a small, highly sophisticated tier of data-driven organizations. Recent industry analysis and original data from global experimentation platforms reveal that despite the ubiquity of e-commerce and digital services, structured A/B testing remains a rarity. Fewer than 0.2% of all active websites currently engage in formal experimentation, a statistic that underscores a massive divide in digital maturity. Of the 1.1 to 1.2 billion websites worldwide, only about 2.2 million use dedicated A/B testing or personalization tools. This small group, however, accounts for a disproportionate share of digital innovation and revenue growth, particularly among high-traffic enterprises, where adoption rates are significantly higher.
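The headline share follows directly from the two counts quoted above. A minimal sketch of the arithmetic, using only the figures in the text (the function and constant names are illustrative):

```python
# Figures quoted in the text above.
SITES_WITH_TESTING_TOOLS = 2_200_000
TOTAL_SITES_LOW = 1_100_000_000
TOTAL_SITES_HIGH = 1_200_000_000


def share_percent(part: int, whole: int) -> float:
    """Return part as a percentage of whole."""
    return 100 * part / whole


# The smaller total gives the larger share, and vice versa.
upper_share = share_percent(SITES_WITH_TESTING_TOOLS, TOTAL_SITES_LOW)
lower_share = share_percent(SITES_WITH_TESTING_TOOLS, TOTAL_SITES_HIGH)
print(f"Share of sites experimenting: {lower_share:.2f}% to {upper_share:.2f}%")
```

Depending on which estimate of the total is used, the share lands between roughly 0.18% and 0.20%.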

The Digital Divide: Adoption Rates by Traffic Tier

The adoption of Conversion Rate Optimization (CRO) tools rises with traffic volume and business scale. While the global average is below 0.2%, the data shifts dramatically when examining the web's highest-traffic sites. Among the top 10,000 websites by traffic, 32% employ at least one A/B testing or personalization platform. This figure tapers as traffic decreases: 20.95% of the top 100,000 sites and 11.5% of the top one million sites are active experimenters.
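Because each tier contains the one above it, the quoted percentages can be broken down into adoption rates for each rank band. The sketch below assumes the tiers are nested in this way and that the percentages apply to the full tier size. Both are assumptions, not details confirmed by the underlying data.

```python
# Cumulative figures quoted in the text: (top-N sites, share using a testing tool).
TIERS = [(10_000, 0.32), (100_000, 0.2095), (1_000_000, 0.115)]


def band_adoption(tiers):
    """Return (first_rank, last_rank, adopters_in_band, band_rate) for each rank band.

    Assumes the tiers are nested, so adopters in a band are the difference
    between consecutive cumulative counts.
    """
    bands = []
    prev_size, prev_adopters = 0, 0
    for size, rate in tiers:
        adopters = round(size * rate)
        in_band = adopters - prev_adopters
        band_size = size - prev_size
        bands.append((prev_size + 1, size, in_band, in_band / band_size))
        prev_size, prev_adopters = size, adopters
    return bands


bands = band_adoption(TIERS)
for first, last, adopters, rate in bands:
    print(f"ranks {first:>9,} to {last:>9,}: {adopters:>7,} sites ({rate:.1%})")
```

Under these assumptions, sites ranked between 10,001 and 100,000 adopt at just under 20%, and those between 100,001 and one million at about 10.5%, which makes the taper slightly steeper than the cumulative figures suggest.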

Market analysts suggest that these figures may actually underrepresent the true scale of experimentation. Large-scale enterprises increasingly favor server-side testing and custom-built internal platforms to avoid the performance "flicker" associated with traditional client-side tools. These bespoke systems often evade detection by standard technology trackers like BuiltWith. Industry sectors leading this charge include Retail and E-commerce, which represent 27% of all practitioners, followed by Technology and SaaS at 23%, and Finance and Insurance at 13%. These sectors are characterized by high-stakes metrics such as Average Order Value (AOV), Monthly Recurring Revenue (MRR), and Lifetime Value (LTV), where even a 1% improvement in conversion can result in millions of dollars in additional revenue.
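To see how a 1% improvement can reach seven figures, consider a rough calculation. Every input below is hypothetical (traffic, conversion rate, and order value are illustrative, not taken from the data), and the 1% is treated as a relative lift in conversion rate, which the text does not specify.

```python
def incremental_annual_revenue(
    monthly_sessions: float,
    conversion_rate: float,
    average_order_value: float,
    relative_lift: float,
) -> float:
    """Extra yearly revenue from a relative lift in conversion rate.

    Assumes traffic and average order value stay constant all year.
    """
    baseline_annual = monthly_sessions * conversion_rate * average_order_value * 12
    return baseline_annual * relative_lift


# Hypothetical retailer: 5M sessions per month, 2.5% conversion, $80 AOV.
extra = incremental_annual_revenue(
    monthly_sessions=5_000_000,
    conversion_rate=0.025,
    average_order_value=80.0,
    relative_lift=0.01,  # a 1% relative improvement, e.g. 2.5% to 2.525%
)
print(f"Incremental annual revenue: ${extra:,.0f}")
```

For this hypothetical retailer the baseline is $120 million a year, so a 1% relative lift is worth about $1.2 million. Smaller sites would see proportionally smaller gains.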

A Five-Year Retrospective: The Evolution of Experimentation Maturity

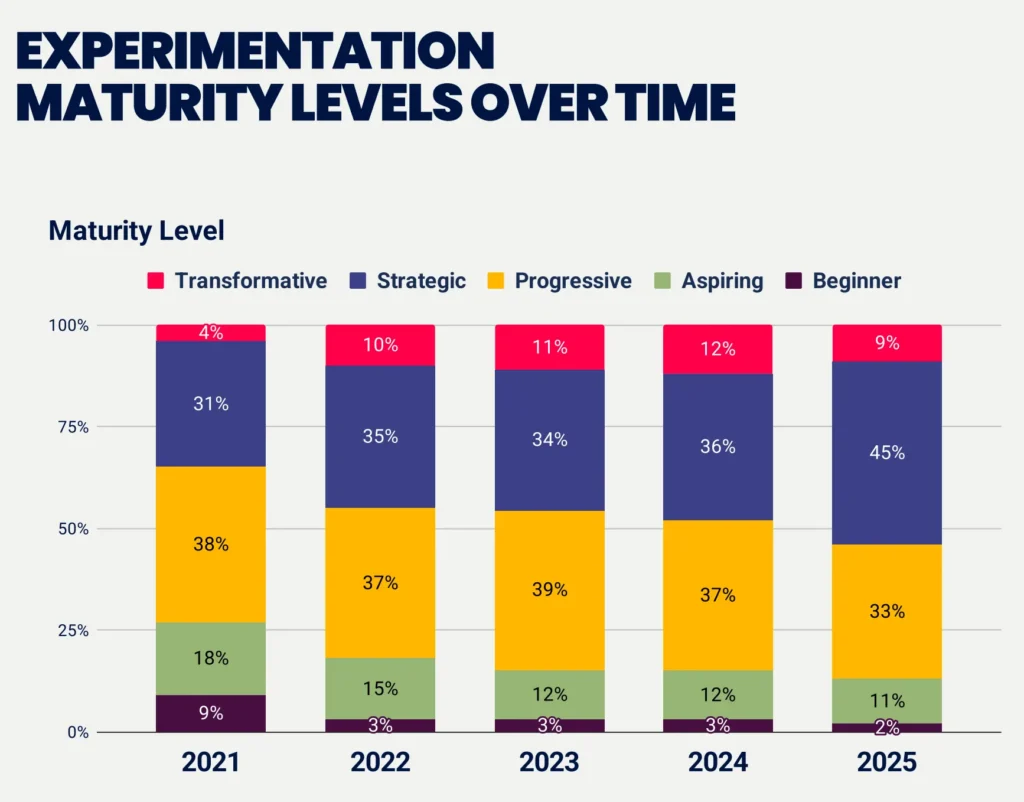

The journey toward a "culture of experimentation" has seen significant progress over the last half-decade. In 2021, the experimentation landscape was dominated by "beginners" and "aspirants." By 2026, the industry has matured considerably. According to the 2025 Experimentation Maturity Program Report by Speero, 54% of surveyed companies now operate at "strategic" or "transformative" levels, a substantial increase from the 35% reported in 2021.

This shift indicates that the "progressive middle" is shrinking as companies either commit to deep experimentation or abandon the practice due to a lack of resources. Beginner-level programs have dropped from 9% to a mere 2%. However, the "transformative" level—defined by seamless cross-team collaboration, executive-level sponsorship, and the democratization of data—remains elusive, achieved by only 10% of organizations. For many, the hurdle is not the technology, but the organizational structure. Approximately 33% of beginner-level firms have been testing for less than a year, while a staggering 67% of these firms do not track the duration of their programs at all, suggesting a lack of formalization that prevents them from reaching the higher tiers of maturity.

Statistical Rigor and Methodological Trends

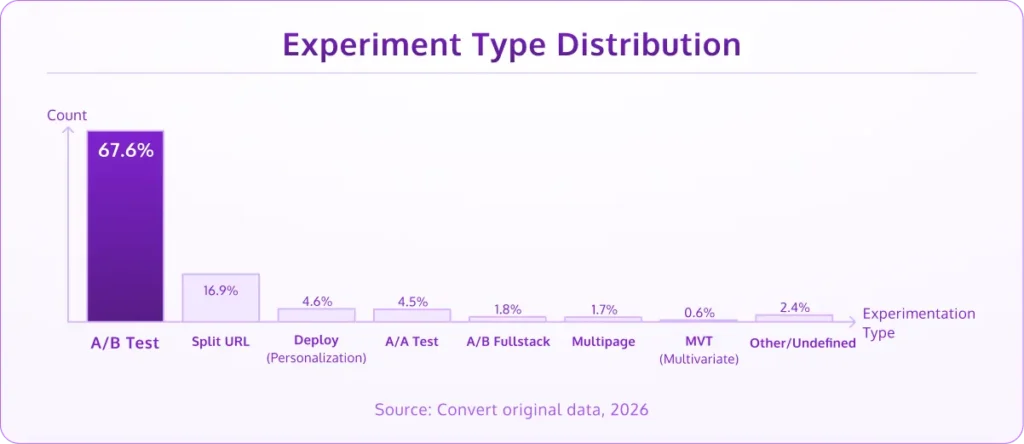

Data from experiments run throughout 2025 and early 2026 shows that the classic A/B test remains the industry workhorse, accounting for 67.6% of all experiments. This is followed by split URL tests at 16.9%, which are typically utilized for major structural overhauls or comparing entirely different landing page designs.

Despite the hype surrounding advanced algorithms, Multi-Armed Bandit (MAB) testing accounts for less than 3% of experiments. While MAB is efficient for short-term promotions where "earning while learning" is critical, the majority of CRO professionals prefer traditional fixed-horizon testing for its statistical clarity. Multivariate Testing (MVT) also remains a niche methodology, used in less than 1% of cases because of the immense traffic needed to reach statistical significance across multiple variable combinations.
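The "earning while learning" idea can be illustrated with Thompson sampling, one common bandit strategy. This is a minimal simulation sketch, not how any particular testing platform implements MAB. The conversion rates, round count, and seed are hypothetical values chosen for illustration.

```python
import random


def thompson_sampling(true_rates, rounds, seed=0):
    """Simulate a Bernoulli bandit and return how often each arm was shown.

    Each arm keeps a Beta(successes + 1, failures + 1) belief about its
    conversion rate. On every visit we draw one sample per arm and show
    the arm with the highest draw.
    """
    rng = random.Random(seed)
    n_arms = len(true_rates)
    successes = [0] * n_arms
    failures = [0] * n_arms
    pulls = [0] * n_arms

    for _ in range(rounds):
        draws = [
            rng.betavariate(successes[i] + 1, failures[i] + 1)
            for i in range(n_arms)
        ]
        arm = max(range(n_arms), key=draws.__getitem__)
        pulls[arm] += 1
        # Simulated visitor converts with the arm's (hidden) true rate.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return pulls


# Hypothetical variants: control converts at 5%, challenger at 15%.
pulls = thompson_sampling([0.05, 0.15], rounds=5000)
print(f"Traffic per variant: {pulls}")
```

Traffic drifts toward the stronger variant as evidence accumulates, which is why bandits suit short promotions. The trade-off is that the weaker arm ends up with little data, which makes a clean fixed-horizon significance statement harder to produce.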

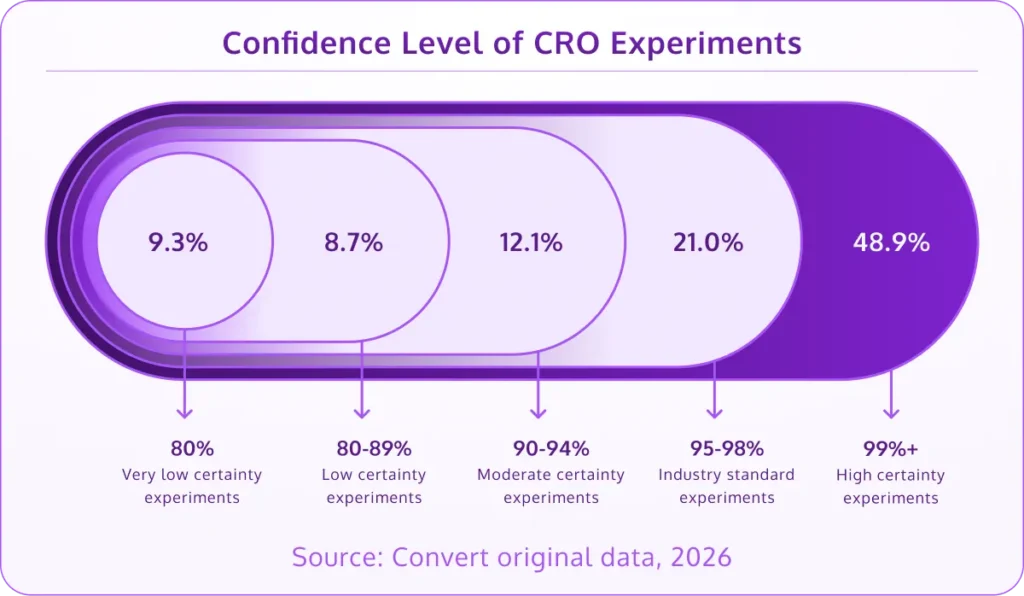

The industry has, however, seen a marked increase in statistical rigor. Approximately 70% of CRO teams now run their tests to at least a 95% confidence level, with nearly half (49%) pushing for 99% confidence to minimize the risk of false positives. Conversely, 18% of tests are still concluded with less than 90% confidence; these are often treated as directional indicators rather than definitive proof for major deployments.
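For readers who want to see what a "confidence level" means in practice, here is a sketch using a pooled two-proportion z-test, one standard way to compare conversion rates. Testing platforms may use different methods (Bayesian or sequential, for example), and the visitor and conversion counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def ab_confidence(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Return 1 minus the two-sided p-value of a pooled two-proportion z-test."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return 1 - p_value


# Hypothetical test: 2.0% vs 2.5% conversion on 10,000 visitors each.
confidence = ab_confidence(conv_a=200, n_a=10_000, conv_b=250, n_b=10_000)
print(f"Confidence: {confidence:.1%}")
print("Clears 95%:", confidence >= 0.95)
print("Clears 99%:", confidence >= 0.99)
```

In this example the result clears the 95% bar but falls short of 99%, so whether it counts as a winner depends entirely on the threshold the team set in advance.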

The Reality of Results: Lift Distribution and Twyman’s Law

The expectation of "massive wins" often clashes with the reality of compounding gains. Analysis of completed tests shows that about 40% of successful A/B tests deliver a lift of 20% or less, and 84% deliver a lift of 50% or less. The distribution of winning results is as follows:

- 0-10% lift: 21.6% of tests

- 11-20% lift: 18.6% of tests

- 21-30% lift: 15.7% of tests

- 31-40% lift: 14.7% of tests

- 41-50% lift: 13.7% of tests

A notable 7.8% of tests report improvements exceeding 100%. While these results are celebrated, seasoned practitioners point to Twyman’s Law: "Any statistic that looks interesting or different is usually wrong." Such extreme outliers are often the result of data tracking errors, seasonality, or novelty effects. High-performing teams treat these "mega-wins" with skepticism, often re-running the experiment to validate the findings before full-scale implementation.
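The lift buckets listed above can be accumulated to show how quickly the distribution fills up. The sketch uses only the quoted shares. The 51-100% bucket is not reported in the text, so its share is inferred here on the assumption that all buckets sum to 100%.

```python
# Shares of winning tests per lift bucket, as quoted in the text.
REPORTED_BUCKETS = [
    ("0-10%", 21.6),
    ("11-20%", 18.6),
    ("21-30%", 15.7),
    ("31-40%", 14.7),
    ("41-50%", 13.7),
]
OVER_100_SHARE = 7.8


def cumulative_shares(buckets):
    """Return (label, running_total) pairs, rounded to one decimal place."""
    total = 0.0
    result = []
    for label, share in buckets:
        total += share
        result.append((label, round(total, 1)))
    return result


cumulative = cumulative_shares(REPORTED_BUCKETS)
for label, running in cumulative:
    print(f"up to {label.split('-')[1]:>4} lift: {running:.1f}% of winning tests")

# Inferred, not reported: whatever is left between 50% and 100% lift.
inferred_51_to_100 = round(100 - cumulative[-1][1] - OVER_100_SHARE, 1)
print(f"51-100% lift (inferred): {inferred_51_to_100:.1f}%")
```

Read this way, the results exceeding 100% are about as common as the whole 51-100% range, which is one more reason to treat them with the skepticism Twyman's Law recommends.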

The Agency Ecosystem and the Impact of Artificial Intelligence

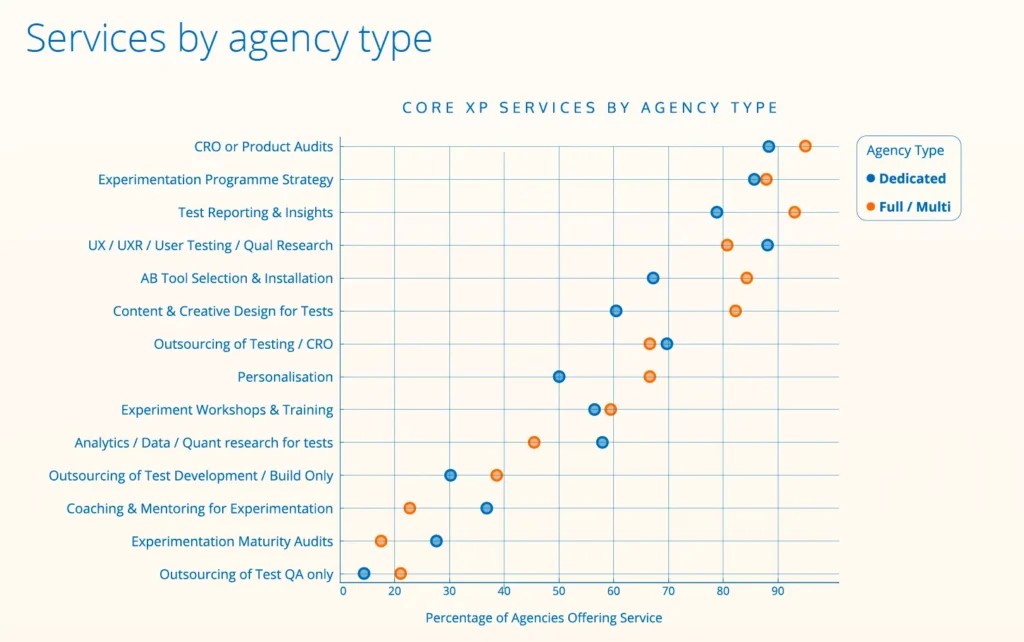

The service provider landscape has expanded to meet the demand for specialized expertise. There are currently 237 identified experimentation agencies worldwide. Of these, 55% are dedicated exclusively to experimentation, while 43% are multi-service firms. The ecosystem is characterized by small, highly experienced teams; over half of these agencies employ fewer than five people, but 90% of them have been in operation for over five years.

The most significant shift in the agency world is the integration of Artificial Intelligence (AI). Currently, 76% of agencies report that AI is a core component of their workflow. Usage is higher among smaller, newer agencies (81%) than among established firms (70%). AI is primarily used for:

- Research and Analysis: Processing vast amounts of qualitative user feedback.

- Hypothesis Formation: Generating test ideas based on historical data patterns.

- Coding: Accelerating the creation of experiment variations.

- Reporting: Automating client summaries and data visualization.

As AI automates the tactical execution of tests, agencies are pivoting toward strategic enablement. Approximately 60% of agencies report that their primary stakeholders are shifting from marketing departments to product teams. This move toward "product-led experimentation" signals a change in how businesses view CRO—not just as a way to improve ad copy, but as a fundamental method for feature validation and product development.

Organizational Challenges and the "Invisible Work" Gap

Despite the proven ROI of experimentation, internal cultural hurdles persist. A significant 58% of companies lack a clear prioritization framework (such as ICE or PXL), leading to wasted resources on low-impact tests. Furthermore, 52% of businesses admit to having no formal Quality Assurance (QA) process for their experiments, a lapse that can lead to broken user experiences and corrupted data.
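A prioritization framework does not need to be elaborate. The sketch below scores a backlog with ICE (Impact, Confidence, Ease), rating each factor from 1 to 10 and multiplying the three. Some teams average the factors instead, so the multiplication is an assumption, and the test ideas and ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TestIdea:
    name: str
    impact: int      # expected effect on the target metric, 1-10
    confidence: int  # strength of supporting evidence, 1-10
    ease: int        # how cheap it is to build and run, 1-10

    @property
    def ice_score(self) -> int:
        # One common convention: multiply the three ratings.
        return self.impact * self.confidence * self.ease


def prioritize(ideas):
    """Return ideas sorted from highest to lowest ICE score."""
    return sorted(ideas, key=lambda idea: idea.ice_score, reverse=True)


# Hypothetical backlog with illustrative ratings.
backlog = [
    TestIdea("Change button color", impact=2, confidence=3, ease=10),
    TestIdea("Shorten checkout form", impact=8, confidence=7, ease=6),
    TestIdea("Add reviews to product page", impact=7, confidence=6, ease=4),
]

ranked = prioritize(backlog)
for idea in ranked:
    print(f"{idea.ice_score:>4}  {idea.name}")
```

Even a rough scoring pass like this makes the trade-offs explicit: the easiest test in the backlog ranks last because its expected impact is low.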

A critical disconnect exists between the effort expended and the recognition received. While 63% of employees feel their company culture encourages testing, only 47% feel their efforts are properly recognized by leadership. This 16-point gap highlights the difficulty of communicating the value of "failed" tests, which provide essential learning even if they do not result in immediate revenue lift. This is compounded by the fact that only 26% of companies have a senior management sponsor who is directly accountable for experimentation growth.

Strategic Implications for 2026 and Beyond

The data suggests that the future of digital competition will be defined by the ability to personalize at scale. Effective personalization can yield a 5-15% revenue lift and a 10-30% improvement in marketing ROI. With 71% of consumers now expecting personalized interactions, the transition from general A/B testing to sophisticated personalization is the next frontier for the roughly 0.2% of websites that experiment.

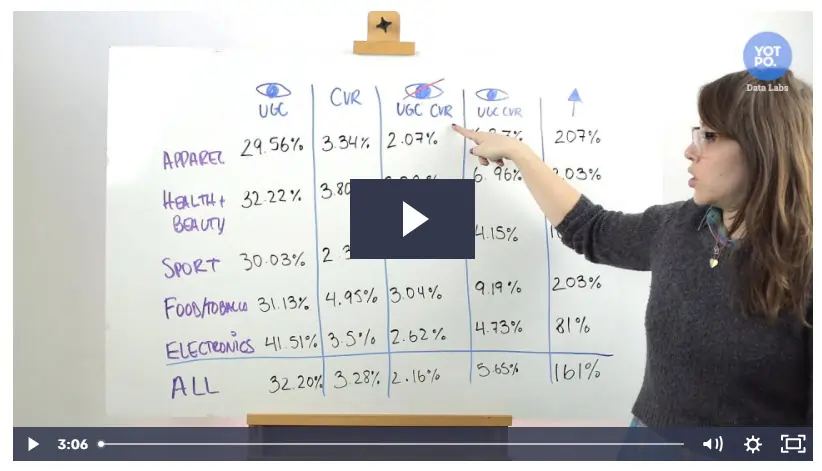

Furthermore, the "low-hanging fruit" of CRO remains relevant. Research from the Baymard Institute indicates that the average e-commerce checkout still contains 11.3 form fields, despite evidence that eight is the optimal maximum. Reducing friction in the checkout process, incorporating User-Generated Content (which can boost conversions by 161% in certain sectors), and ensuring mobile-first optimization remain the foundational tactics that drive results.

In conclusion, the experimentation landscape of 2026 is one of high specialization and significant untapped potential. While the barrier to entry—technical knowledge, traffic requirements, and cultural buy-in—remains high, the rewards for the few who navigate these challenges are substantial. As AI continues to lower the technical hurdles for execution, the true competitive advantage will shift toward those who can master the strategy, prioritization, and cultural integration of experimentation within the broader business framework. Organizations that fail to adopt these practices risk falling into the 99.8% of websites that remain static in an increasingly dynamic digital economy.