Digital optimization has reached a critical crossroads where the convenience of simple split testing often masks deeper structural deficiencies in product strategy and user experience. For many modern marketing and product teams, the journey into experimentation begins with a modest success—a headline change that nudges conversions or a button color that increases click-through rates. However, what starts as a data-driven tool often devolves into a decision-making crutch. As teams become increasingly dependent on A/B testing for every minor adjustment, they risk losing sight of the broader business objectives, focusing instead on incremental gains while ignoring the fundamental health of the user journey.

The current landscape of Conversion Rate Optimization (CRO) suggests that while A/B testing is a foundational element of the digital toolkit, its over-application is a symptom of low organizational maturity. High-growth enterprises are shifting their focus from "winning" individual tests to building comprehensive experimentation programs that prioritize long-term profitability and deep user research over short-term session lifts.

The Evolution of the Experimentation Culture

The rise of A/B testing as the default methodology for digital decision-making can be traced back to the early 2000s, popularized by tech giants like Microsoft, Google, and Amazon. A landmark moment in this chronology occurred within Microsoft’s Bing team, where a simple experiment merging two ad title lines into a single, longer headline resulted in a click-through rate increase that generated over $100 million in additional annual revenue. Such high-profile success stories normalized the "test everything" mindset across the industry.

The democratization of this technology followed shortly thereafter. The emergence of experimentation platforms like FigPii and Optimizely lowered the barrier to entry, allowing non-technical marketing staff to launch variants without the need for complex statistical pipelines or data science intervention. This ease of use, while beneficial for scaling, created a culture where "experimentation" became synonymous with "running a simple A/B test."

Industry data underscores this trend. According to recent surveys, approximately 77% of all digital experiments remain simple A/B tests involving only two variants. Despite the availability of multivariate and multi-treatment designs, the majority of teams default to the simplest possible approach, often at the expense of deeper insight.

The Statistical Reality and the Traffic Barrier

One of the most significant challenges facing the modern experimentation program is the lack of statistical power. For an A/B test to provide a reliable answer, it requires a substantial volume of traffic and conversions to distinguish a genuine behavioral shift from random noise. To detect a modest relative lift of 1% or 2% with statistical confidence, a site often needs hundreds of thousands of visitors per variant, and it can need more than a million when the baseline conversion rate is only a few percent.
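The traffic requirement can be estimated before a test launches. The sketch below uses the standard normal-approximation formula for comparing two proportions. The function name, the 3% baseline, the 5% significance level, and the 80% power target are illustrative assumptions, not figures from any particular platform.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed in each arm of a two-sided two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)      # conversion rate if the lift is real
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # critical value for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical 3% baseline conversion rate; smaller lifts need far more traffic.
for lift in (0.02, 0.05, 0.10):
    n = visitors_per_variant(baseline_rate=0.03, relative_lift=lift)
    print(f"{lift:.0%} relative lift: about {n:,} visitors per variant")
```

Because the required sample grows with the inverse square of the effect size, halving the detectable lift roughly quadruples the traffic needed.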

For the average e-commerce brand, this requirement creates a functional bottleneck. Even companies generating one to two million sessions per month often have to run a test for six to twelve weeks before it has collected enough data to support a conclusion. This delay hinders organizational agility and often leads to three common failure modes:

- Premature Termination: Ending tests before they reach the planned sample size, often at the first sign of a significant result, which inflates the rate of "false positives."

- Inconclusive Results: Running tests on low-traffic pages where the data never reaches a definitive conclusion.

- The "Flat" Test Trap: Focusing on micro-changes that are too small to ever move the needle significantly, regardless of traffic volume.
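The first failure mode in the list above can be illustrated with a small simulation. The sketch below runs A/A tests, where both arms share the same true conversion rate, and compares how often a "winner" appears when the team checks after every batch of traffic versus only at the planned end. The batch size, number of looks, conversion rate, and seed are all hypothetical choices made for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(runs=400, looks=10, batch=200, rate=0.10, alpha=0.05, seed=7):
    """Return (false-positive rate with peeking, false-positive rate at the planned end)."""
    rng = random.Random(seed)
    crossed_at_any_look = 0
    significant_at_end = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        crossed = False
        for _ in range(looks):
            # Both arms draw from the same true rate, so any "win" is noise.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            p = p_value(conv_a, n, conv_b, n)
            crossed = crossed or p < alpha
        crossed_at_any_look += crossed
        significant_at_end += p < alpha  # p from the final look only
    return crossed_at_any_look / runs, significant_at_end / runs


peeking_rate, fixed_rate = simulate_aa_tests()
print(f"False positives when stopping at the first significant look: {peeking_rate:.1%}")
print(f"False positives when analyzing once at the planned end:      {fixed_rate:.1%}")
```

Since no real difference exists between the arms, every significant result here is a false positive; repeated looks give noise more chances to cross the threshold.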

Beyond the "What": Addressing the "Why" Through Behavioral Analysis

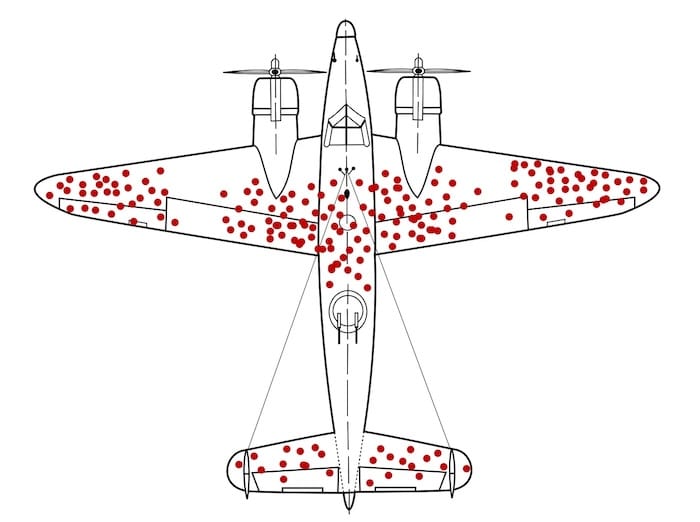

A fundamental limitation of A/B testing is its inability to explain the underlying cause of user behavior. While a test can show that Variant B outperformed Variant A, it offers no insight into the psychological or functional triggers behind that outcome. Relying on test results alone also invites a form of "survivorship bias" in digital data, a concept famously illustrated by World War II statistician Abraham Wald.

During the war, the military analyzed bullet holes in returning aircraft to determine where to add armor. Wald realized that the military was only looking at the "survivors"—the planes that were hit but made it back. The most critical data lay in the planes that did not return because they were hit in vulnerable areas like the engine. In e-commerce, A/B tests often focus on the "survivors" (the converters) while ignoring the silent majority who dropped out of the funnel. Without qualitative research—such as heatmaps, session recordings, and customer surveys—teams end up "optimizing the bullet holes" rather than fixing the fundamental vulnerabilities that cause users to abandon the site entirely.

Short-Term Wins vs. Long-Term Business Health

The metrics traditionally used to measure A/B testing success are often at odds with long-term business viability. Currently, over 90% of experiments focus on five primary metrics: CTA clicks, revenue, checkout completion, registration, and add-to-cart actions. CTA clicks alone account for nearly 35% of all primary metrics tracked in digital experiments.

However, high-maturity teams have observed a "decay" in the value of these short-term metrics. A variant that increases "add-to-cart" rates through aggressive prompts may simultaneously decrease the Average Order Value (AOV) or lead to a higher rate of returns. Furthermore, short-term lifts in conversion can negatively impact Customer Lifetime Value (LTV) if the tactic involves misleading messaging or "dark patterns" that erode brand trust.
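A simple way to catch this kind of trade-off is to report guardrail metrics next to the primary metric. The sketch below uses invented numbers and a hypothetical `VariantResult` class to show a variant that wins on conversion rate while losing on net revenue per visitor once lower order values and higher returns are counted.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    visitors: int
    orders: int
    revenue: float            # gross revenue from those orders
    returned_revenue: float   # revenue later refunded through returns

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    @property
    def average_order_value(self) -> float:
        return self.revenue / self.orders

    @property
    def net_revenue_per_visitor(self) -> float:
        return (self.revenue - self.returned_revenue) / self.visitors


# Hypothetical results, invented purely for illustration.
control = VariantResult("control", visitors=10_000, orders=300,
                        revenue=24_000.0, returned_revenue=1_200.0)
variant = VariantResult("aggressive prompt", visitors=10_000, orders=330,
                        revenue=23_100.0, returned_revenue=2_310.0)

for result in (control, variant):
    print(f"{result.name}: conversion {result.conversion_rate:.2%}, "
          f"AOV {result.average_order_value:.2f}, "
          f"net revenue per visitor {result.net_revenue_per_visitor:.3f}")
```

In a real program each of these metrics would also need its own significance check; the point here is only that a single primary metric can hide an opposing movement elsewhere.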

The "Jam Experiment," a classic study in behavioral economics, serves as a warning for modern testers. In that study, a display with 24 varieties of jam attracted more interest, but only 3% of the shoppers who stopped went on to buy, whereas a display with only 6 varieties led to a 30% purchase rate. This "paradox of choice" demonstrates how a variant that wins on an engagement metric (attracting more people) can ultimately fail on a conversion metric. High-maturity programs now prioritize "Holdout Groups" (segments of users who are never exposed to experiments over a long period) to measure the true incremental impact of their optimization efforts on long-term retention and profit.
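One common way to keep a holdout group stable over many months is to derive membership from a hash of the user ID, so the same user always lands in the same group without any stored state. The sketch below is a minimal illustration; the salt string, the 5% holdout size, and the function name are assumptions, not a description of any specific platform's behavior.

```python
import hashlib


def in_holdout(user_id: str, holdout_percent: float = 5.0,
               salt: str = "global-holdout") -> bool:
    """Deterministically place roughly holdout_percent of users in the holdout."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000   # stable bucket in the range 0..9999
    return bucket < holdout_percent * 100


# Hypothetical user IDs: membership is stable across calls and close to the target share.
users = [f"user-{i}" for i in range(20_000)]
share = sum(in_holdout(u) for u in users) / len(users)
print(f"Share of users held out: {share:.2%}")
```

Users flagged by `in_holdout` would be excluded from every experiment for the measurement period, then compared with everyone else on retention and profit.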

Frameworks of High-Maturity Experimentation Programs

Organizations that have successfully moved beyond the "A/B testing trap" share several common characteristics in their approach to growth.

1. Diversified Methodology

Mature teams treat A/B testing as one tool among many. They utilize a broader toolkit that includes:

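- Sequential Tests: For faster decision-making on high-impact changes, with results checked at planned interim points under stopping rules that keep the false-positive rate in check.

- Switchback Tests: Often used in marketplaces (like delivery apps), where the whole market alternates between treatment and control over time windows to prevent interference between the two groups.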

- Quasi-Experiments: For situations where random assignment is impossible, such as changes to physical retail locations or regional pricing models.

- Holdout Groups: To measure the cumulative effect of all experiments over a six-to-twelve-month period.
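For the quasi-experiment case in the list above, one widely used estimator is difference-in-differences. It is named here only as an illustration; it is not presented as the method any particular team uses. The sketch compares the change in a region that received a pricing change with the change in a comparable region that did not, using invented conversion rates. It assumes both regions would have followed parallel trends without the change.

```python
def difference_in_differences(treated_before: float, treated_after: float,
                              control_before: float, control_after: float) -> float:
    """Change in the treated group minus the change in the comparison group."""
    return (treated_after - treated_before) - (control_after - control_before)


# Hypothetical conversion rates before and after a regional pricing change.
effect = difference_in_differences(
    treated_before=0.040, treated_after=0.046,   # region with the new pricing
    control_before=0.041, control_after=0.043,   # comparable region, old pricing
)
print(f"Estimated effect: {effect * 100:.2f} percentage points")
```

Subtracting the comparison region's change removes shifts that affected both regions, such as seasonality, which a simple before-and-after comparison would wrongly credit to the pricing change.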

2. Research-Driven Hypotheses

Rather than testing random ideas from a backlog, high-maturity teams ground every experiment in evidence. A robust hypothesis now follows a strict four-part structure:

- Evidence: "Because session recordings show users hesitate at the shipping stage…"

- Belief: "…we believe uncertainty about delivery dates is reducing completion…"

- Action: "…so we will implement a real-time delivery estimator on the product page…"

- Outcome: "…and we expect a 5% increase in mobile checkout completion."

3. Testing "Big Levers"

Instead of focusing on cosmetic UI changes, advanced programs test fundamental business drivers. This includes experimenting with pricing elasticity, subscription models, value proposition clarity, and information architecture. For example, rather than testing the color of a "Buy Now" button, a high-maturity team might test the entire sequence of how product benefits are communicated, potentially reordering the entire page layout based on user motivation levels.

Analysis of Implications for the Digital Economy

The shift away from superficial A/B testing toward a more holistic experimentation model has profound implications for the digital economy. As customer acquisition cost (CAC) continues to rise across platforms like Meta and Google, the ability to maximize the value of existing traffic becomes a competitive necessity rather than a luxury.

Companies that fail to evolve their CRO maturity will likely find themselves trapped in a cycle of "local maxima"—achieving the best possible version of a fundamentally flawed experience. In contrast, organizations that integrate qualitative research with sophisticated experimental designs will be better positioned to adapt to changing consumer behaviors.

The industry response is already visible. Leading CRO firms are moving away from "test execution" services toward "experimentation auditing" and "program building." This shift reflects a growing recognition that the value of experimentation lies not in the "win" itself, but in the institutional knowledge gained and the long-term compounding of incremental improvements. Ultimately, the goal of a modern experimentation program is to move the organization from a culture of "opinion-based guessing" to one of "evidence-based certainty," where every digital change is a calculated step toward sustainable growth.