In the hyper-competitive landscape of digital product development, the pressure to innovate often leads to a paradoxical outcome: the creation of features that no one wants or, worse, features that actively damage the brand. According to the Feature Adoption Report by Pendo, an industry-standard benchmark, approximately 80 percent of shipped software features are rarely or never used following their initial launch. This statistic highlights a systemic inefficiency in the technology sector, where billions of dollars in engineering capital are diverted into "zombie features" that clutter user interfaces without delivering sustained value.

The consequences of these failures extend far beyond wasted developer hours. A botched feature release can trigger immediate revenue contraction, erode years of painstakingly built user trust, and provide competitors with an opening to capture market share. From the high-stakes world of algorithmic trading to the ubiquity of mobile operating systems, the history of technology is littered with cautionary tales of releases that went spectacularly wrong. By analyzing these failures, product teams can identify the structural vulnerabilities in their release cycles and implement safety checkpoints to mitigate risk.

A Chronology of Disaster: High-Profile Feature Failures

To understand the mechanics of a failed release, one must examine the specific instances where established giants faltered. These examples illustrate that failure is rarely the result of a single bug, but rather a breakdown in the processes governing deployment, user psychology, and market validation.

1. The 45-Minute Collapse: Knight Capital’s Trading Error (2012)

In August 2012, Knight Capital Group was a dominant force in American equities, responsible for roughly 17 percent of the trade volume on the New York Stock Exchange (NYSE). Its downfall was precipitated by a botched software update intended to prepare its systems for the NYSE’s new Retail Liquidity Program.

The Timeline of the Event:

- July 2012: Knight Capital’s engineering team develops updates for SMARS, an automated routing system for orders. The update repurposes a flag that had previously activated "Power Peg," a legacy order-handling function that Knight had stopped using years earlier but never removed from the codebase.

- July 31, 2012: An engineer manually deploys the new code to eight production servers. Crucially, the update is only successfully applied to seven of the eight servers.

- August 1, 2012, 9:30 AM: As the opening bell rings, the eighth server, still running the old "Power Peg" code, treats the repurposed flag as an instruction to run that dormant logic. It begins sending a continuous stream of orders into the market, buying and selling millions of shares at a frantic pace without recognizing that the original orders had already been filled.

- August 1, 2012, 10:15 AM: After 45 minutes of chaos, the system is finally shut down. In that window, Knight executed 4 million trades across 154 stocks.

The Fallout:

The error resulted in a realized loss of $440 million, a figure that exceeded the company’s entire cash reserves at the time. Despite a $400 million emergency infusion from a consortium of investors, Knight Capital was forced into a merger with Getco LLC less than a year later. The Securities and Exchange Commission (SEC) later fined the firm $12 million, citing a lack of adequate "kill switches" and failure to implement automated deployment checks.
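One form such an automated deployment check could take is sketched below in Python. It assumes each server can report the build identifier it is running. The host names, build labels, and function names are hypothetical and are not taken from Knight's systems.

```python
from typing import List, Mapping


def find_version_drift(reported: Mapping[str, str], expected: str) -> List[str]:
    """Return the hosts whose reported build differs from the expected one."""
    return sorted(host for host, build in reported.items() if build != expected)


def safe_to_activate(reported: Mapping[str, str], expected: str) -> bool:
    """Allow activation only if at least one host reported and none has drifted."""
    return bool(reported) and not find_version_drift(reported, expected)


# Hypothetical fleet: eight servers, one of which missed the update.
fleet = {f"router-{i}": "build-new" for i in range(1, 9)}
fleet["router-8"] = "build-old"

print(find_version_drift(fleet, "build-new"))  # ['router-8']
print(safe_to_activate(fleet, "build-new"))    # False
```

A gate like this would run before the new behavior is switched on, so a partially applied update blocks activation instead of surfacing in production.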

2. The Cultural Mismatch: LinkedIn Stories (2020–2021)

While Knight Capital’s failure was technical, LinkedIn’s foray into ephemeral content was a failure of product-market fit. In 2020, following the success of the "Stories" format on Snapchat, Instagram, and Facebook, LinkedIn launched its own version of the feature.

The Logic and the Reality:

LinkedIn’s leadership aimed to lower the barrier to entry for sharing, hoping to encourage casual, frequent interactions among professionals. However, the move ignored the fundamental psychology of the platform. Users view LinkedIn as a digital CV and a repository of professional credibility; they generally prefer "evergreen" content that builds their professional brand over 24-hour snippets.

By September 2021, LinkedIn officially discontinued the feature. Liz Li, LinkedIn’s Senior Director of Product, noted in a post-mortem that users wanted their videos to "live on their profile, not disappear." The failure served as a reminder that mimicking a competitor’s feature without considering the unique context of one’s own audience is a recipe for low adoption.

3. The Navigational Crisis: The Apple Maps Launch (2012)

In September 2012, Apple made the strategic decision to replace Google Maps as the default navigation app on iOS 6. The goal was to exert more control over the user experience and the valuable location data associated with it.

The Consequences of Inadequate Validation:

Upon release, the product was riddled with inaccuracies. It famously directed drivers in Australia into the middle of a dangerous national park, "melted" images of iconic landmarks like the Brooklyn Bridge in its 3D Flyover mode, and lacked basic public transit information for major global cities.

The public backlash was immediate and severe. Apple’s stock price dropped by 4.5 percent, wiping out approximately $30 billion in market capitalization in the days following the launch. The crisis forced CEO Tim Cook to issue a rare public apology, even suggesting that users download rival apps like MapQuest or Google Maps while Apple fixed its product. The executive in charge of the project, Scott Forstall, was subsequently ousted from the company.

Categorizing the Five Modes of Feature Failure

Industry analysts have identified five recurring patterns that characterize failed releases. Recognizing these early can prevent a company from proceeding with a doomed launch.

- Adoption Failures: These occur when a feature is functionally sound but remains unused. This is often due to poor discoverability, high friction in the user journey, or a solution that addresses a problem the user does not actually have.

- Perception Failures: This involves "improvements" that users perceive as regressions. When a UI overhaul disrupts the "muscle memory" of power users, the resulting friction can lead to vocal dissatisfaction, even if the new system is technically faster.

- Performance Failures: A feature may provide value but at the cost of the overall system’s health. For example, a new AI-driven recommendation engine might provide better suggestions but slow down page load times by 500ms, leading to a net drop in conversion.

- Monetization Failures: These updates inadvertently cannibalize revenue. A common example is introducing a free tier feature that is so robust it encourages existing paid subscribers to downgrade.

- Trust Failures: These are the most damaging, often involving privacy or security. When a feature shares user data in an unexpected way or introduces a vulnerability, the resulting loss of confidence can be permanent.

The Safety Checkpoint Framework: A Strategic Path Forward

To avoid the pitfalls experienced by Knight Capital, LinkedIn, and Apple, modern product teams are adopting a rigorous framework centered on "Feature Experimentation." This approach treats every release not as a finished product, but as a hypothesis to be tested.

Checkpoint 1: Progressive Exposure

Instead of a "big bang" release to 100 percent of the user base, teams use feature flags to roll out updates to a small, controlled segment (e.g., 1 percent or 5 percent). This limits the "blast radius" if a critical bug or negative user reaction occurs.
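A percentage rollout is commonly implemented by hashing each user into a stable bucket and comparing that bucket to the current rollout threshold. The sketch below is a minimal illustration of that idea, not the API of any particular feature-flag product. The flag name and the function names are assumptions made for the example.

```python
import hashlib


def rollout_bucket(flag_name: str, user_id: str) -> float:
    """Map a (flag, user) pair to a stable value in the range [0, 100)."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    # Interpret the first 32 bits of the hash as an integer and scale it.
    return int(digest[:8], 16) / 0x100000000 * 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: float) -> bool:
    """Return True if this user falls inside the current rollout percentage."""
    return rollout_bucket(flag_name, user_id) < rollout_percent


# Hypothetical flag rolled out to 5 percent of users.
exposed = [u for u in (f"user-{i}" for i in range(1000))
           if is_enabled("new-checkout", u, 5)]
print(f"{len(exposed)} of 1000 users see the feature")
```

Because the bucket depends only on the flag name and user ID, a user who sees the feature at 5 percent still sees it when the rollout widens to 50 percent, and including the flag name in the hash keeps the same users from always being first in line for every experiment.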

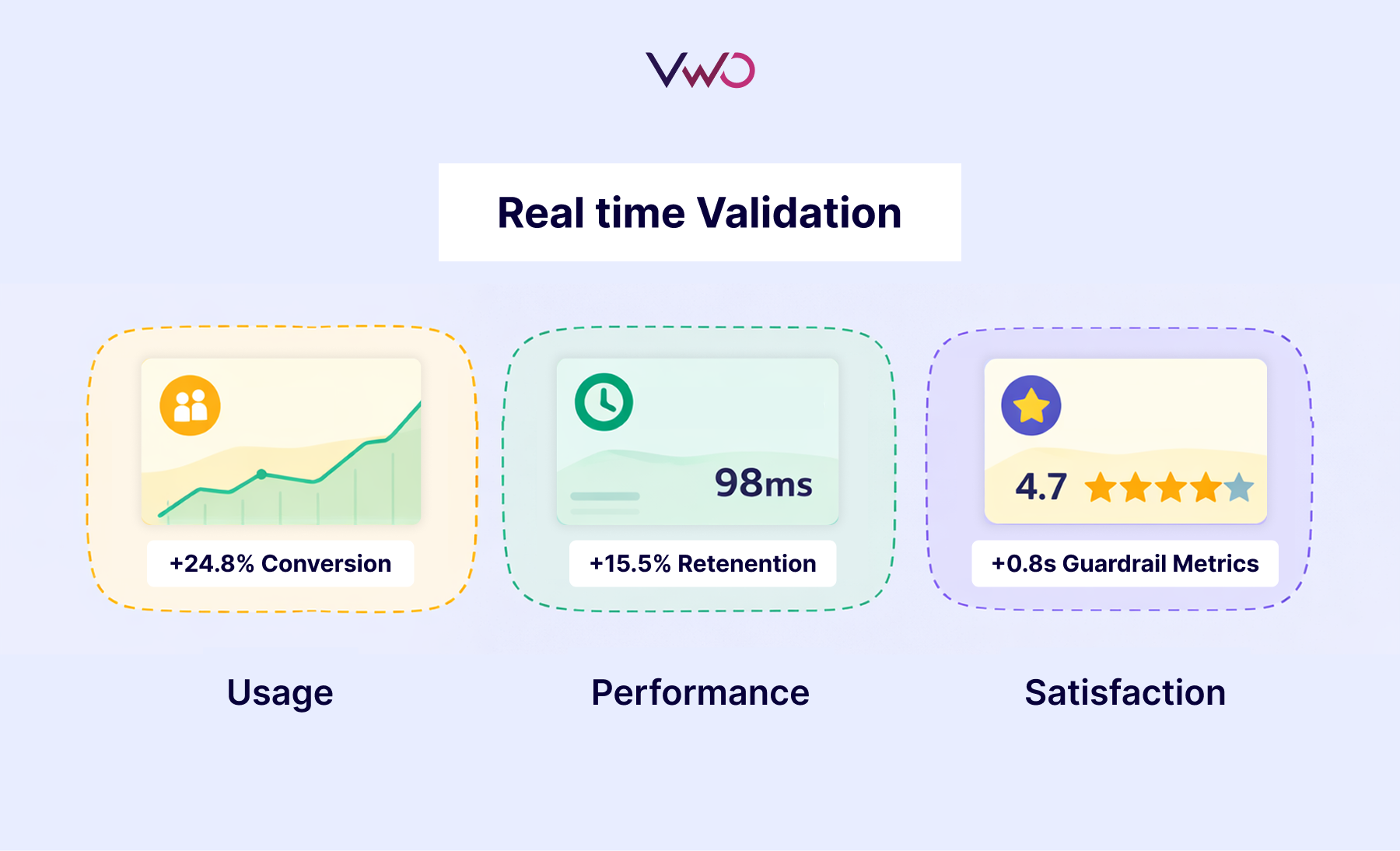

Checkpoint 2: Real-Time Validation

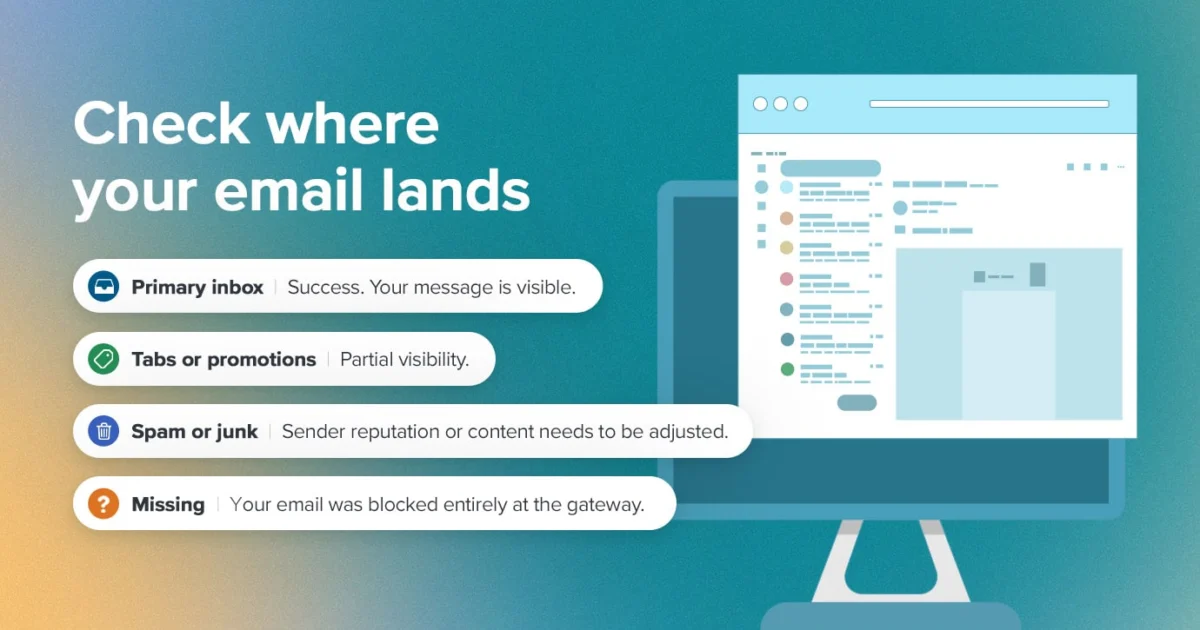

Monitoring must go beyond simple "uptime." Teams should track three distinct categories of metrics:

- Primary Metrics: Does the feature do what it was intended to (e.g., increase click-through rate)?

- Secondary Metrics: How does it affect related behaviors (e.g., does it increase time on site)?

- Guardrail Metrics: Does it harm the system (e.g., does it increase error rates or latency)?
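The guardrail idea can be expressed as a simple automated check: compare each observed metric against its baseline plus an allowed margin, and flag anything that exceeds it. The following is a minimal sketch under assumed metric names and thresholds. A real team would choose its own metrics and limits.

```python
from dataclasses import dataclass
from typing import Iterable, List, Mapping


@dataclass(frozen=True)
class Guardrail:
    name: str
    baseline: float
    max_relative_increase: float  # 0.10 allows a 10 percent rise over baseline


def breached_guardrails(guardrails: Iterable[Guardrail],
                        observed: Mapping[str, float]) -> List[str]:
    """Return the names of guardrail metrics that exceed their allowed limit."""
    breached = []
    for g in guardrails:
        limit = g.baseline * (1 + g.max_relative_increase)
        value = observed.get(g.name)
        # A missing metric counts as a breach: no data is not evidence of health.
        if value is None or value > limit:
            breached.append(g.name)
    return breached


# Hypothetical guardrails and readings from the exposed cohort.
guardrails = [
    Guardrail("error_rate", baseline=0.010, max_relative_increase=0.50),
    Guardrail("p95_latency_ms", baseline=200.0, max_relative_increase=0.10),
]
observed = {"error_rate": 0.012, "p95_latency_ms": 260.0}

print(breached_guardrails(guardrails, observed))  # ['p95_latency_ms']
```

In this example the feature could be meeting its primary metric while still failing the release, because latency has risen well past the allowed margin.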

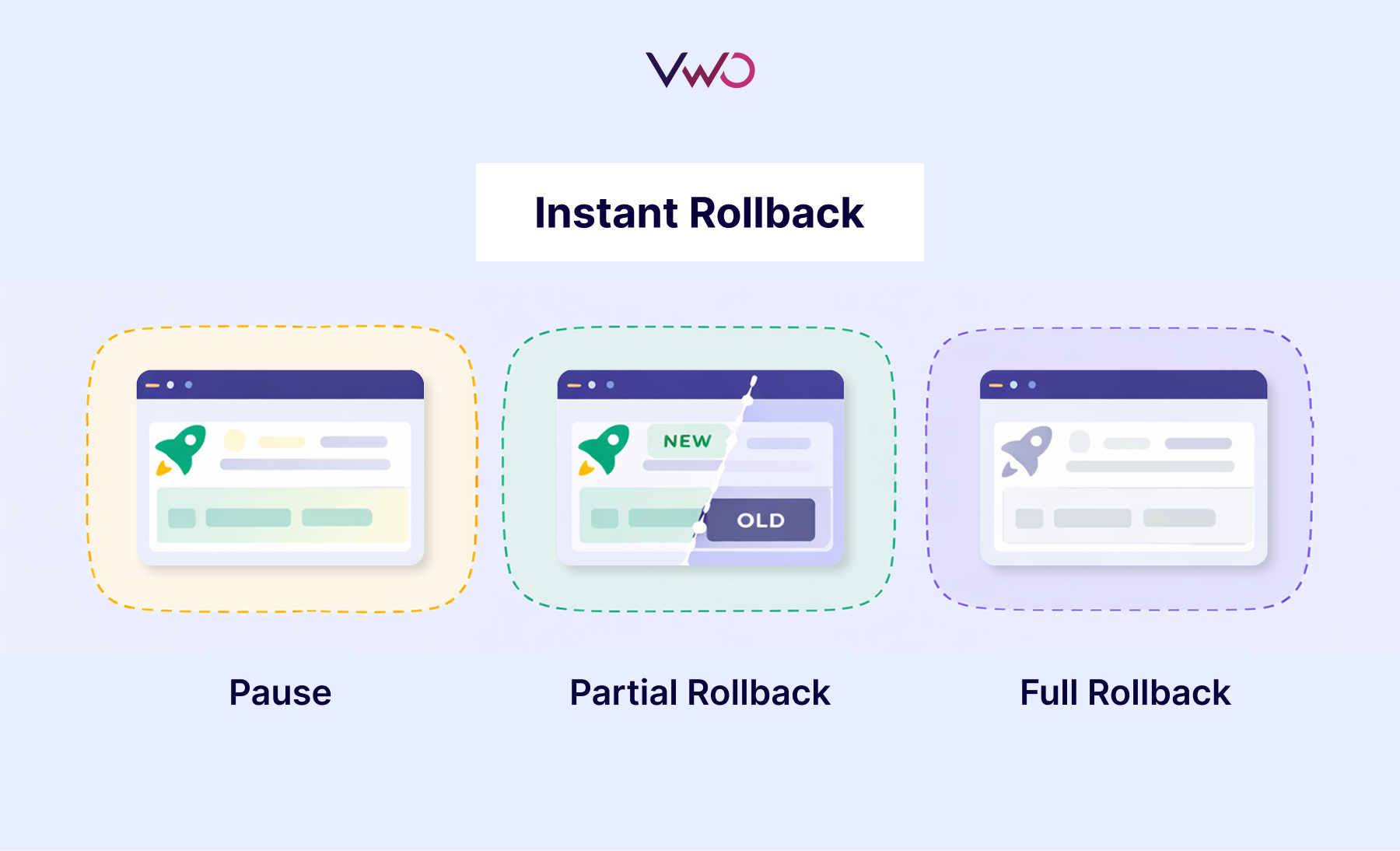

Checkpoint 3: The Instant Rollback

A "kill switch" is a prerequisite for any high-stakes release. As the Knight Capital disaster showed, the ability to disable a feature instantly, without a full code redeployment, can be the difference between a contained incident and insolvency.
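A kill switch can be sketched as a flag that the code consults on every call, so that flipping it changes behavior on the next request. The class below is an in-process stand-in for what would normally be a shared configuration store. All names are hypothetical.

```python
import threading
from typing import Callable, Set, TypeVar

T = TypeVar("T")


class KillSwitch:
    """In-process stand-in for a shared flag store that is read at call time."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._disabled: Set[str] = set()

    def disable(self, feature: str) -> None:
        with self._lock:
            self._disabled.add(feature)

    def enable(self, feature: str) -> None:
        with self._lock:
            self._disabled.discard(feature)

    def is_active(self, feature: str) -> bool:
        with self._lock:
            return feature not in self._disabled


def run_feature(switch: KillSwitch, feature: str,
                new_path: Callable[[], T], fallback: Callable[[], T]) -> T:
    # The switch is checked on every call, so disabling the feature takes
    # effect immediately and does not require redeploying code.
    if switch.is_active(feature):
        return new_path()
    return fallback()


switch = KillSwitch()
print(run_feature(switch, "smart-routing", lambda: "new", lambda: "legacy"))  # new
switch.disable("smart-routing")
print(run_feature(switch, "smart-routing", lambda: "new", lambda: "legacy"))  # legacy
```

The important design choice is that the fallback path stays deployed and tested alongside the new one, so turning the feature off is a configuration change, not an emergency release.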

Checkpoint 4: Controlled A/B Testing

Validation should be based on evidence, not intuition. By running variations of a feature against a control group, teams can measure the exact delta in user behavior, ensuring that a feature is actually an improvement before it is fully committed to the codebase.
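To make "measuring the delta" concrete, the sketch below compares conversion rates between a control group and a variant using a two-proportion z-test, one common choice among several. The conversion counts are invented for illustration, and the function name is an assumption.

```python
from math import sqrt
from statistics import NormalDist
from typing import Dict


def compare_conversion(control_conv: int, control_n: int,
                       variant_conv: int, variant_n: int) -> Dict[str, float]:
    """Two-proportion z-test on conversion rates (two-sided p-value)."""
    p_control = control_conv / control_n
    p_variant = variant_conv / variant_n
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (control_conv + variant_conv) / (control_n + variant_n)
    std_err = sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    if std_err == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_variant - p_control) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {"delta": p_variant - p_control, "z": z, "p_value": p_value}


# Invented numbers: 500 of 10,000 control users convert, 560 of 10,000 variant users.
result = compare_conversion(500, 10_000, 560, 10_000)
print(result)
```

With these invented numbers the variant lifts conversion by 0.6 percentage points, yet the p-value comes out just under 0.06, so a team using the conventional 0.05 threshold would keep the experiment running before committing to the feature.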

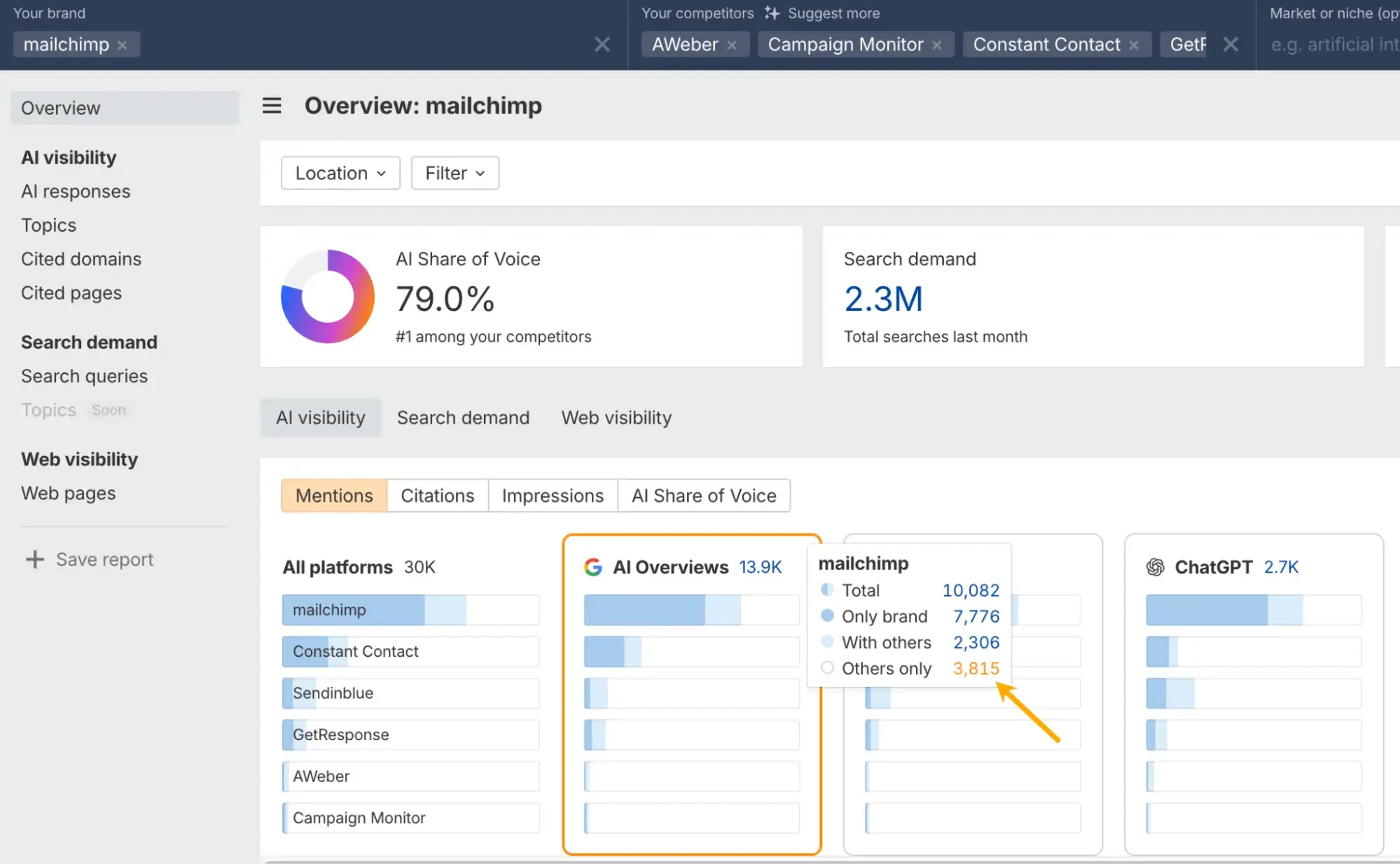

Checkpoint 5: Geographic and Segment Personalization

As demonstrated by the Apple Maps failure, what works in one region or for one user demographic may fail in another. Modern release tools allow for "targeting," where features are enabled only for specific cohorts where they are proven to be effective.
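Targeting of this kind usually reduces to evaluating a rule against user attributes before the feature is shown. The following sketch assumes two attributes, country and plan, purely for illustration. The class and field names are hypothetical and do not correspond to a specific release tool.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class User:
    user_id: str
    country: str
    plan: str


@dataclass(frozen=True)
class TargetingRule:
    # An empty set means "no restriction on this attribute".
    countries: FrozenSet[str] = frozenset()
    plans: FrozenSet[str] = frozenset()

    def matches(self, user: User) -> bool:
        country_ok = not self.countries or user.country in self.countries
        plan_ok = not self.plans or user.plan in self.plans
        return country_ok and plan_ok


# Hypothetical rule: enable only in regions where the data has been validated.
validated_regions = TargetingRule(countries=frozenset({"US", "CA"}))

print(validated_regions.matches(User("u1", "US", "free")))  # True
print(validated_regions.matches(User("u2", "AU", "free")))  # False
```

Expanding the launch then means adding a country to the rule once its data has been validated, not shipping a new build.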

Broader Impact and Industry Implications

The shift from "shipping code" to "managing releases" represents a fundamental evolution in software engineering. The traditional model of long development cycles followed by massive launches is being replaced by continuous delivery and experimentation.

The economic implications are significant. Companies that master these safety checkpoints can innovate faster because the cost of failure is minimized. Conversely, firms that continue to rely on manual deployments and "gut feeling" product decisions risk becoming the next Knight Capital. In an era where software is the primary interface between a business and its customers, the release process is no longer just a technical task; it is a core pillar of corporate risk management and strategic growth. By treating feature releases with the same rigor as financial audits or safety inspections, the technology industry can finally begin to shrink the 80 percent share of features that go rarely or never used, ensuring that the software of tomorrow is both valuable and resilient.