Digital marketing has shifted from a discipline of grand, singular launches to one of continuous, granular refinement. As global advertising budgets face scrutiny amid fluctuating economic conditions, the traditional "set-and-forget" approach to A/B testing is being replaced by iterative testing: a methodology that prioritizes small, evidence-based improvements over time. This process, rooted in software development and agile management, allows marketing teams to adapt to shifting consumer behaviors, reduce budget waste, and raise conversion rates through a perpetual cycle of experimentation.

The Evolution of Marketing Optimization: From Intuition to Iteration

Historically, marketing campaigns were designed as fixed assets. A creative team would develop a campaign, launch it, and measure its success post-mortem. While A/B testing introduced a scientific element to this process—allowing marketers to compare two versions of a landing page—it often remained a one-time event. Marketers would crown a "winner" and move on to the next project, often leaving potential gains on the table.

Iterative testing fundamentally changes this timeline. Instead of viewing a test as a terminal point, it treats every experiment as a data source for the next hypothesis. This shift is critical as user behavior becomes increasingly volatile. According to recent industry benchmarks, the efficacy of marketing copy and design is no longer static; what resonated with a target audience six months ago may fail today due to changes in market saturation, competitor strategies, or platform algorithms.

Key Data Points: The Case for Simplicity and Accessibility

Data from the 2024 Conversion Benchmark Report underscores the necessity of this iterative approach. One of the most significant findings in the report reveals a stark correlation between reading level and conversion performance. Landing pages written at a 5th-to-7th-grade reading level—prioritizing clarity over professional jargon—convert at an average rate of 11.1%. This is more than double the conversion rate of pages written at a professional or academic level.

Furthermore, the report highlights a growing "Mobile-Desktop Paradox." While mobile devices now account for approximately 83% of all landing page visits, desktop environments continue to convert 8% better on average. For marketers, this data point is not a signal to abandon mobile, but rather a prompt for iterative testing. By isolating mobile-specific friction points—such as form length, load times, and button placement—teams can incrementally close the conversion gap.

Additional research indicates that word complexity has a correlation of -24.3% with conversion rates: as copy becomes more complex, conversions tend to fall. This suggests that as marketers attempt to sound more authoritative or "technical," they inadvertently alienate their audience. Iterative testing allows a brand to systematically strip away complexity, testing simplified headlines and bullet points against their original "professional" copy to find the optimal balance for their specific niche.
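One way to make "reading level" measurable before a test is to score each copy variant with a readability formula. The sketch below uses the Flesch-Kincaid grade level, which is an assumption here: the report may rely on a different measure. The syllable counter is a rough vowel-group heuristic, and the two sample strings are invented for illustration.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count runs of consecutive vowels, with a floor of 1."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # Treat a trailing "e" (as in "make") as silent, except in "-le" endings.
    if lowered.endswith("e") and not lowered.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Approximate U.S. school grade needed to read the text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * len(words) / len(sentences)
            + 11.8 * syllables / len(words)
            - 15.59)


# Hypothetical copy variants, not taken from any real landing page.
simple_copy = "Start your free trial. No card needed."
complex_copy = ("Leverage our comprehensive enterprise platform to "
                "operationalize multifaceted organizational initiatives.")

for label, copy in [("simple", simple_copy), ("complex", complex_copy)]:
    print(f"{label}: grade {flesch_kincaid_grade(copy):.1f}")
```

A score like this works best as a screening signal for which variants to test. It does not predict conversions on its own, and very short snippets can produce scores below zero.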

The Six-Step Framework for Iterative Success

To implement a successful iterative testing program, organizations must move away from haphazard guessing and adopt a structured, six-step framework designed for speed and clarity.

1. Hypothesis Formation

The foundation of any iterative test is a laser-focused hypothesis. Broad goals, such as "improving the page," are replaced with specific, measurable predictions. For example, a marketer might hypothesize that "changing the call-to-action (CTA) from ‘Sign Up Now’ to ‘Start My Free Trial’ will increase click-through rates by 10% by emphasizing the value proposition." By testing a single variable, the team ensures that the resulting data is actionable and unambiguous.
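A hypothesis of this kind can be written down as a small structured record so that every test states its variable, metric, and expected lift up front. The following is a minimal sketch. The `Hypothesis` class, its field names, and the 5% baseline click-through rate are illustrative assumptions, not part of any particular testing platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    variable: str                  # the single element being changed
    control: str                   # current version
    variant: str                   # proposed version
    metric: str                    # what will be measured
    expected_relative_lift: float  # 0.10 means a 10% relative increase
    rationale: str                 # why the change should work

    def target_rate(self, baseline_rate: float) -> float:
        """Rate the variant must reach for the prediction to hold."""
        return baseline_rate * (1 + self.expected_relative_lift)

    def statement(self) -> str:
        return (
            f"Changing the {self.variable} from '{self.control}' to "
            f"'{self.variant}' will increase {self.metric} by "
            f"{self.expected_relative_lift:.0%} because {self.rationale}."
        )


cta_test = Hypothesis(
    variable="call-to-action",
    control="Sign Up Now",
    variant="Start My Free Trial",
    metric="click-through rate",
    expected_relative_lift=0.10,
    rationale="it emphasizes the value proposition",
)

print(cta_test.statement())
# With an assumed 5% baseline, a 10% relative lift means reaching 5.5%.
print(f"Target rate: {cta_test.target_rate(0.05):.2%}")
```

Recording the lift as a relative figure keeps the prediction unambiguous: a 10% increase on a 5% baseline is a target of 5.5%, not 15%.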

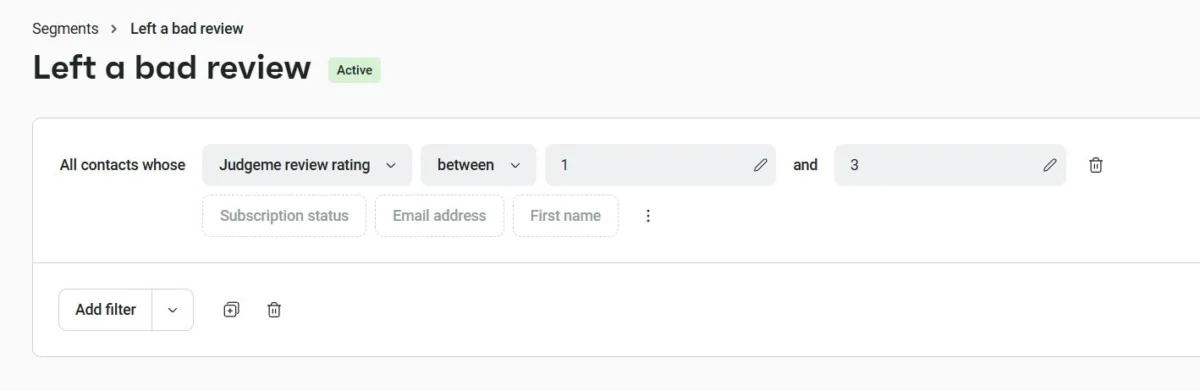

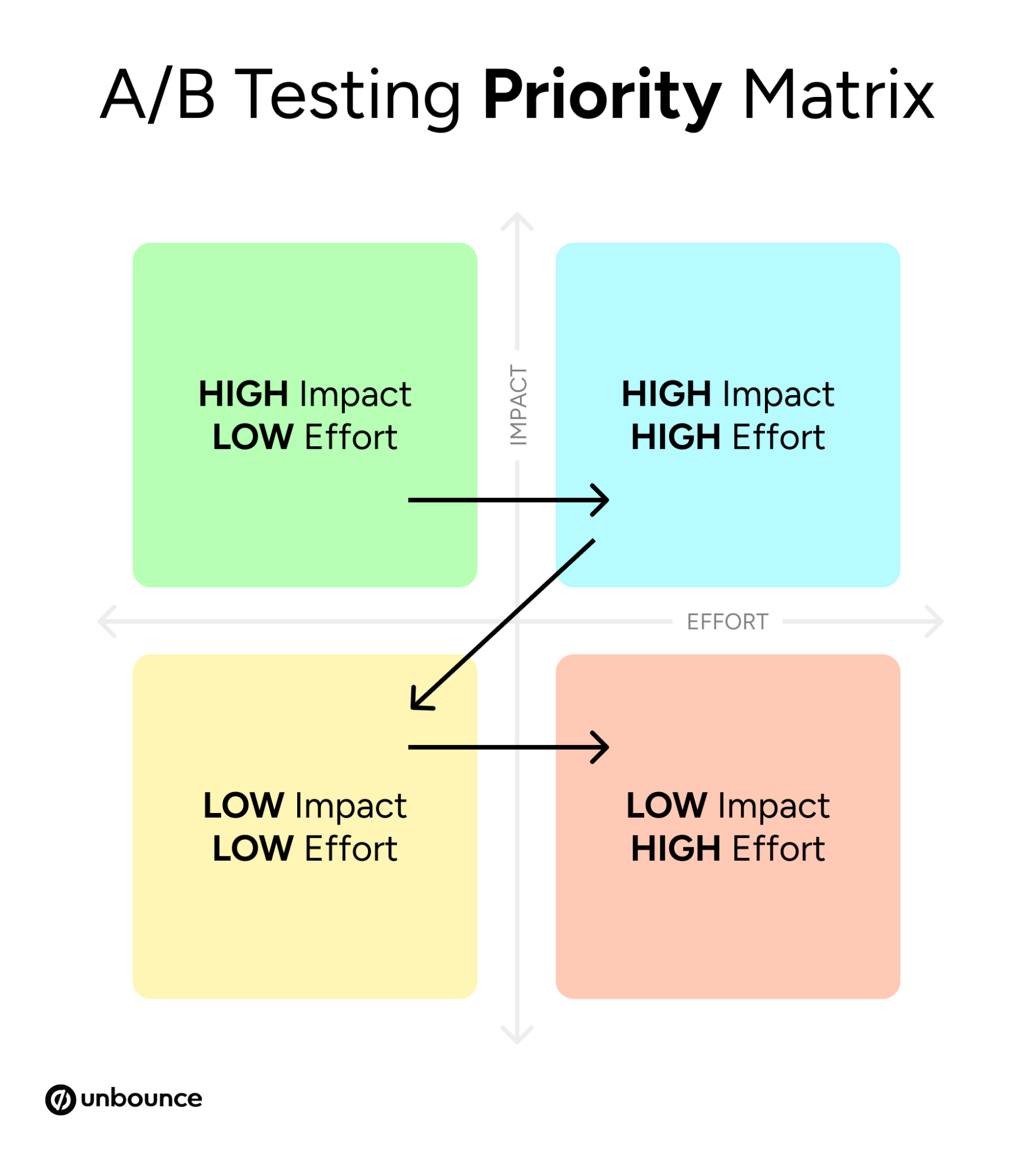

2. Prioritization via the Impact-Effort Matrix

In a high-velocity marketing environment, not all tests are worth the resources they require. Strategic teams utilize a 2×2 matrix to categorize potential experiments:

- Quick Wins: High impact, low effort (e.g., changing a headline).

- Big Bets: High impact, high effort (e.g., redesigning the entire checkout flow).

- Fill-ins: Low impact, low effort (e.g., changing a button color).

- Time Sinks: Low impact, high effort (e.g., custom animations).

Prioritizing "Quick Wins" allows teams to build momentum and prove the ROI of the testing program early in the cycle.
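The matrix above can be sketched as a small classifier over a test backlog. The 1-to-10 scoring scale, the midpoint threshold of 5, and the scores assigned to each idea are assumptions made for illustration. In practice a team would supply its own estimates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentIdea:
    name: str
    impact: int  # team estimate, 1 (low) to 10 (high)
    effort: int  # team estimate, 1 (low) to 10 (high)


# Quadrants in the order they should be worked through.
QUADRANT_ORDER = ["Quick Win", "Big Bet", "Fill-in", "Time Sink"]


def quadrant(idea: ExperimentIdea, threshold: int = 5) -> str:
    """Place an idea in the 2x2 matrix; scores above the threshold are 'high'."""
    high_impact = idea.impact > threshold
    high_effort = idea.effort > threshold
    if high_impact and not high_effort:
        return "Quick Win"
    if high_impact and high_effort:
        return "Big Bet"
    if not high_impact and not high_effort:
        return "Fill-in"
    return "Time Sink"


def prioritize(ideas: list[ExperimentIdea]) -> list[ExperimentIdea]:
    """Sort by quadrant first, then by higher impact and lower effort."""
    return sorted(
        ideas,
        key=lambda i: (QUADRANT_ORDER.index(quadrant(i)), -i.impact, i.effort),
    )


# Hypothetical scores for the four examples given in the list above.
backlog = [
    ExperimentIdea("Custom animations", impact=2, effort=8),
    ExperimentIdea("Change button color", impact=2, effort=1),
    ExperimentIdea("Redesign checkout flow", impact=9, effort=9),
    ExperimentIdea("Change headline", impact=8, effort=2),
]

for idea in prioritize(backlog):
    print(f"{quadrant(idea):<10} {idea.name}")
```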

3. Development of Minimal Viable Variants

The iterative design philosophy discourages massive overhauls. Instead, marketers are encouraged to build "minimal but testable" variations. This involves duplicating a control page and making isolated changes. Using modern A/B testing platforms, teams can execute these changes without the need for extensive developer intervention, significantly reducing the "time-to-market" for each experiment.

4. Data Collection and Statistical Validation

A common pitfall in digital marketing is "early peeking": terminating a test as soon as one variant shows a slight lead. To avoid making decisions based on random noise, tests must reach statistical significance. As a rule of thumb, this means at least 100 conversions per variant; the number of visits needed to get there depends on the baseline conversion rate. Patience is required to ensure that the data reflects a genuine shift in user preference rather than a temporary fluctuation.
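To see why stopping early is risky, it helps to run the numbers. The sketch below applies a pooled two-proportion z-test, one common way to check significance for conversion rates. Testing platforms may use other methods, and the visit and conversion counts here are invented for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each variant needs at least one visit")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; cannot compute z")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical data: the same 12% vs 18% split at two sample sizes.
z_early, p_early = two_proportion_z_test(12, 100, 18, 100)
z_late, p_late = two_proportion_z_test(120, 1000, 180, 1000)

print(f"After 100 visits each:  z = {z_early:.2f}, p = {p_early:.3f}")
print(f"After 1000 visits each: z = {z_late:.2f}, p = {p_late:.5f}")
```

The observed rates are identical in both cases, yet only the larger sample clears the conventional 0.05 threshold. Checking the p-value repeatedly and stopping at the first significant reading also inflates the false-positive rate, so the sample size is best fixed before the test starts.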

5. Analysis and Extraction of Insights

Once a test concludes, the focus shifts from "who won" to "why they won." If a simpler headline outperformed a complex one, the insight is not just about that specific page; it is about the audience’s preference for clarity. This insight can then be institutionalized across other channels, such as email marketing, social media ads, and sales scripts.

6. Scaling and Institutionalizing Learnings

The final step is the "loop" in the iterative cycle. Successful learnings are scaled across the organization, while failed tests are documented as valuable data points on what does not work. This institutional memory prevents different teams from repeating the same mistakes and ensures that the brand’s digital presence is always evolving toward higher efficiency.

Institutional Responses and Professional Perspectives

Industry experts suggest that the move toward iteration is as much a cultural shift as it is a technical one. Josh Gallant, founder of Backstage SEO and a prominent voice in SaaS growth, notes that many marketing failures are not "massive flops," but rather "slow leaks" that drain budgets over months. "Iterative testing plugs those leaks," Gallant argues, suggesting that consistent "base hits" (small wins) are more sustainable for long-term growth than attempting to hit "home runs" with every campaign launch.

From an organizational standpoint, this approach requires breaking down silos. When customer support teams share common pain points or sales teams report frequent objections, those insights become the raw material for the next round of marketing tests. This collaborative environment ensures that the testing backlog is fueled by real-world user data rather than internal hunches.

The Role of Artificial Intelligence and Automation

The future of iterative testing is increasingly intertwined with machine learning. Tools such as "Smart Traffic" algorithms are now capable of optimizing campaigns in real time. Unlike traditional A/B tests that eventually funnel all traffic to a single winner, AI-driven tools can route specific visitors to the variant most likely to convert based on their device, location, and past behavior.

This automation does not replace the marketer but rather elevates their role. Instead of manually monitoring data for weeks, marketers can focus on high-level strategy and creative hypothesis generation, leaving the technical execution of traffic routing to automated systems. This accelerates the feedback loop, with some tools beginning to optimize after as few as 50 visits.
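The general idea of routing visitors by context can be illustrated with Thompson sampling, a standard multi-armed bandit technique, run separately for each visitor segment. This is a generic sketch and not a description of how "Smart Traffic" or any commercial tool works internally. The class name, the segments, and the simulated conversion rates are all assumptions.

```python
import random
from collections import Counter, defaultdict


class SegmentedThompsonRouter:
    """Per-segment Thompson sampling over landing page variants."""

    def __init__(self, variants, seed=None):
        self.variants = list(variants)
        self.rng = random.Random(seed)
        # Beta(1, 1) prior per (segment, variant): [successes + 1, failures + 1].
        self.stats = defaultdict(lambda: [1, 1])

    def choose(self, segment):
        """Sample a plausible conversion rate per variant; serve the highest."""
        def sampled_rate(variant):
            alpha, beta = self.stats[(segment, variant)]
            return self.rng.betavariate(alpha, beta)
        return max(self.variants, key=sampled_rate)

    def record(self, segment, variant, converted):
        """Update the posterior for the variant that was shown."""
        self.stats[(segment, variant)][0 if converted else 1] += 1


# Simulated "true" conversion rates, invented for this demonstration:
# variant B suits mobile visitors, variant A suits desktop visitors.
TRUE_RATES = {
    ("mobile", "A"): 0.05, ("mobile", "B"): 0.20,
    ("desktop", "A"): 0.20, ("desktop", "B"): 0.05,
}

router = SegmentedThompsonRouter(["A", "B"], seed=42)
visitors = random.Random(7)
served = Counter()

for _ in range(6000):
    segment = visitors.choice(["mobile", "desktop"])
    variant = router.choose(segment)
    converted = visitors.random() < TRUE_RATES[(segment, variant)]
    router.record(segment, variant, converted)
    served[(segment, variant)] += 1

for key in sorted(served):
    print(key, served[key])
```

Unlike a fixed 50/50 split, the router shifts traffic toward the stronger variant in each segment as evidence accumulates, while still occasionally exploring the weaker one.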

Broader Implications for the Digital Economy

The broader impact of the iterative testing movement is a more efficient and user-centric internet. As brands move away from intrusive, "guesswork" marketing and toward data-backed experiences, user satisfaction generally increases. When a landing page provides the exact information a user needs in a format they prefer, the friction of the digital economy is reduced.

Furthermore, for small to medium-sized enterprises (SMEs), iterative testing levels the playing field. While large corporations may have the budget for massive brand awareness campaigns, SMEs can achieve high ROI by being more agile: testing faster, learning more quickly, and optimizing their limited spend with surgical precision.

Conclusion: The Path Forward

Iterative testing represents the maturation of digital marketing. By acknowledging that perfection is a moving target, organizations can commit to a path of constant improvement. The goal is no longer to launch the "perfect" campaign, but to build a marketing engine that is smarter today than it was yesterday. In an era defined by rapid technological change and shifting consumer expectations, the ability to iterate is not just a competitive advantage—it is a requirement for survival. Through disciplined experimentation, clear data analysis, and cross-functional collaboration, brands can transform their marketing from a static expense into a dynamic, self-optimizing asset.