The landscape of digital experimentation has undergone a radical transformation over the last decade, shifting from a niche growth tactic used by Silicon Valley giants to a foundational pillar of modern business operations. By 2026, the barrier to entry for running A/B tests has effectively vanished. With the maturation of generative artificial intelligence (GenAI) and automated testing platforms, executing an experiment has become a low-cost commodity. AI systems can now ideate hypotheses, design UI variants, write production-ready code, and synthesize complex statistical results in seconds. However, this ease of execution has created a new challenge: the dilution of quality. In an era where anyone can test anything, an enterprise's competitive advantage no longer lies in the number of tests it runs, but in the rigor, transparency, and disciplined decision-making frameworks that govern its experimentation process.

The Shift Toward Systematic Experimentation

The transition into 2026 has seen a move away from "instinct-based" marketing toward what industry leaders call "Experimentation-Led Growth" (ELG). As customer journeys fragment across digital touchpoints, from AR interfaces to decentralized social platforms, one-off campaigns are no longer sufficient. Companies are now embedding experimentation directly into their core marketing systems. A prominent example is HubSpot’s "Loop framework," which treats every marketing interaction as a continuous feedback loop rather than a static campaign.

Experts argue that while frameworks like Loop provide a roadmap, the industry at large is grappling with the "democratization paradox." When testing becomes too easy, the risk of "Garbage In, Garbage Out" (GIGO) rises sharply: if the inputs, such as the initial hypothesis or the underlying data, are flawed, the resulting business decisions will be equally compromised.

The Chronology of Experimentation: From 2020 to 2026

The path to the current state of experimentation can be traced through six years of technological and methodological change:

- 2020–2022: The Tooling Explosion. The market saw a surge in "no-code" testing tools, allowing non-technical marketers to launch tests without developer intervention.

- 2023–2024: The AI Integration Phase. Large Language Models (LLMs) began assisting in copy generation and basic data analysis, though human oversight remained heavy.

- 2025: The Automation Pivot. Platforms began offering "autonomous testing," where AI could identify underperforming pages and suggest optimizations automatically.

- 2026: The Rigor Revolution. Organizations realized that automated "wins" were often statistical noise. The focus shifted back to human-centric strategy, data triangulation, and ethical AI usage.

The GIGO Effect and the Importance of Mental Models

In 2026, the most significant risk to a testing program is the GIGO effect. This phenomenon occurs when hypotheses are built on shallow research, messy data tracking, or unvalidated AI outputs. A common failure mode in the current market involves the use of AI-generated buyer personas. While these personas can be generated instantly, they often lack the nuance of real-world customer behavior. If a company optimizes its website for a synthetic persona that does not reflect its actual audience, the "successful" test results will fail to translate into actual revenue.

To combat this, leading experimenters are adopting specific mental models. One, drawn from Richard Feynman's well-known description of the scientific method, holds that if a guess disagrees with experiment, it is wrong, regardless of how "smart" the AI that generated it might be. Optimization is also increasingly viewed not as a quest for "wins," but as a search for "why." Understanding the psychological drivers behind a user’s action is now considered more valuable than a 2% lift in click-through rate.

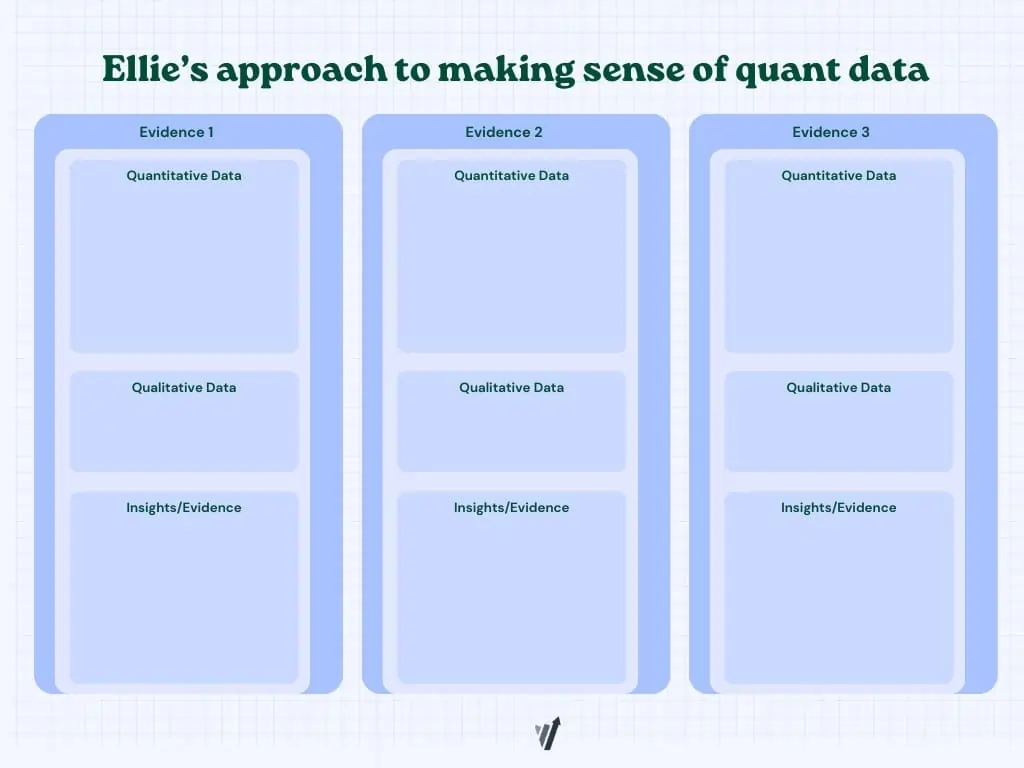

Data Triangulation: Reaching Defensible Insights

No single data source in 2026 is considered sufficient to justify a high-stakes experiment. The industry has moved toward "triangulation," a method of synthesizing various data types to ensure a hypothesis is robust. Ellie Hughes, Head of Consulting at the Eclipse Group, describes this as taking a "quantitative approach to qualitative data and a qualitative approach to quantitative data."

Triangulation typically involves three pillars of evidence:

- Quantitative Data: Heatmaps, clickstream data, and funnel analytics that show where users are dropping off.

- Qualitative Data: Session recordings, user interviews, and surveys that explain why users are behaving that way.

- Heuristic Evaluation: Expert reviews based on psychological principles such as cognitive load, social proof, and clarity of value propositions.

By reconciling contradictory findings across these sources, teams can avoid the trap of testing "best practices" that don’t apply to their specific context.

The Statistical Backbone: Frequentist vs. Bayesian Frameworks

The debate between Frequentist and Bayesian statistics remains a central theme in 2026. Frequentist methods, which rely on p-values and sample sizes fixed in advance, remain the gold standard for clinical-level rigor, while Bayesian statistics have gained favor because their output (for example, "a 95% probability that B beats A") is easier for stakeholders to interpret than a p-value.
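
To make the contrast concrete, the sketch below analyzes the same hypothetical result both ways: a pooled two-proportion z-test for the Frequentist p-value, and a Monte Carlo estimate of the posterior probability that B beats A. It assumes uniform Beta(1, 1) priors and invented counts (500 vs. 560 conversions out of 10,000 visitors per arm); the function names are illustrative and not taken from any testing platform.

```python
import math
import random
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Frequentist view: two-sided pooled z-test for a difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=50_000, seed=0):
    """Bayesian view: Monte Carlo estimate of P(rate_B > rate_A).

    Assumes independent uniform Beta(1, 1) priors, so each posterior is
    Beta(1 + conversions, 1 + non-conversions).
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Hypothetical result: 10,000 visitors per arm, 500 vs. 560 conversions.
z, p = two_proportion_z_test(500, 10_000, 560, 10_000)
prob = prob_b_beats_a(500, 10_000, 560, 10_000)
print(f"Frequentist: z = {z:.2f}, two-sided p = {p:.3f}")
print(f"Bayesian:    P(B beats A) = {prob:.3f}")
```

With these counts the z-test gives p ≈ 0.06, which misses the conventional 0.05 threshold, while the posterior probability that B is better comes out around 0.97. Neither number is wrong; they answer different questions, which is why each needs careful interpretation.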

Frequentist testing is often favored by large enterprises with high traffic volumes, as it provides a robust framework for de-risking decisions through strict control of the false-positive (Type I) error rate. That control, however, assumes the results are analyzed once, at the planned sample size. "Peeking" (repeatedly checking results before the test is finished and stopping as soon as they look significant) inflates the false-positive rate well above the nominal significance level, typically 5%. Bayesian testing, conversely, updates the probability of a variant being "better" as data flows in, making it more flexible for fast-moving startups. Experts warn, though, that Bayesian analysis is not a "magic bullet": its results depend on the chosen prior, and the "probability of being best" still requires careful interpretation.
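
The cost of peeking is easy to demonstrate by simulation. The sketch below runs hypothetical A/A tests, in which both arms share the same true conversion rate so every "significant" result is a false positive, and compares a team that checks the p-value after every batch of traffic and stops at the first p < 0.05 with a team that looks only once, at the planned end. The batch size, number of looks, and 10% conversion rate are arbitrary assumptions chosen to keep the simulation fast.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided pooled two-proportion z-test p-value."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # both arms all-or-nothing: no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa(n_experiments=500, looks=20, batch=100, rate=0.10, alpha=0.05, seed=42):
    """Run A/A tests (no true difference) and return the false-positive rate
    with peeking at every interim look and with a single fixed-horizon look."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _ in range(n_experiments):
        conv_a = conv_b = n = 0
        stopped_early = False
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            if not stopped_early and p_value(conv_a, n, conv_b, n) < alpha:
                stopped_early = True  # a "peeker" would declare a winner here
        peeking_hits += stopped_early
        fixed_hits += p_value(conv_a, n, conv_b, n) < alpha  # planned final look only
    return peeking_hits / n_experiments, fixed_hits / n_experiments


peeking_rate, fixed_rate = simulate_aa()
print(f"False-positive rate with peeking:   {peeking_rate:.1%}")
print(f"False-positive rate, fixed horizon: {fixed_rate:.1%}")
```

The fixed-horizon rate should land near the nominal 5%, while the peeking rate comes out several times higher. Sequential testing designs, such as alpha-spending boundaries, exist precisely to allow interim looks without this inflation.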

The ALARM Protocol for High-Quality Variants

To ensure that variants are designed to maximize learning, many top-tier CRO (Conversion Rate Optimization) teams have adopted the ALARM protocol. Developed by GAIN Conversion, this framework forces experimenters to pressure-test their ideas before they go live:

- Alternative Executions: Challenging the team to find at least three different ways to test the same hypothesis.

- Loss Factors: Identifying why the test might fail (e.g., low visibility or confusing messaging).

- Audience and Area: Defining exactly who should see the test and where it should occur to avoid diluting the results.

- Rigor: Applying psychological principles to ensure the variant is scientifically sound.

- MDE & MVE: Determining the Minimum Detectable Effect (the smallest lift the test is powered to detect reliably, which sets the required sample size) and the Minimum Viable Experiment (the simplest version of the test).
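
The MDE feeds directly into the sample size. The sketch below uses the standard normal-approximation formula for a two-sided, two-proportion z-test; the 5% baseline, 10% relative MDE, 0.05 significance level, and 80% power are illustrative assumptions, and `sample_size_per_arm` is a hypothetical helper rather than any tool's API. It also shows why the "Audience and Area" step matters: if only a fraction of the analyzed users actually encounter the change, the effect is diluted across everyone measured.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline, relative_mde, alpha=0.05, power=0.80):
    """Approximate visitors needed per arm for a two-sided two-proportion z-test.

    baseline      -- control conversion rate, e.g. 0.05
    relative_mde  -- smallest relative lift the test should reliably detect, e.g. 0.10
    """
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: 5% baseline, 10% relative MDE.
full = sample_size_per_arm(0.05, 0.10)

# Dilution: if only 20% of analyzed users see the change (and share the same
# baseline), a 10% lift among them is a 0.10 * 0.20 = 2% lift across everyone.
diluted = sample_size_per_arm(0.05, 0.10 * 0.20)

print(f"Exposed users only: {full:,} per arm")
print(f"Diluted audience:   {diluted:,} per arm")
```

Under these assumptions the undiluted test needs roughly 31,000 visitors per arm, while analyzing everyone when only 20% of users see the change pushes the requirement past 700,000 per arm. Restricting the analysis to users who actually reached the tested area avoids that penalty.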

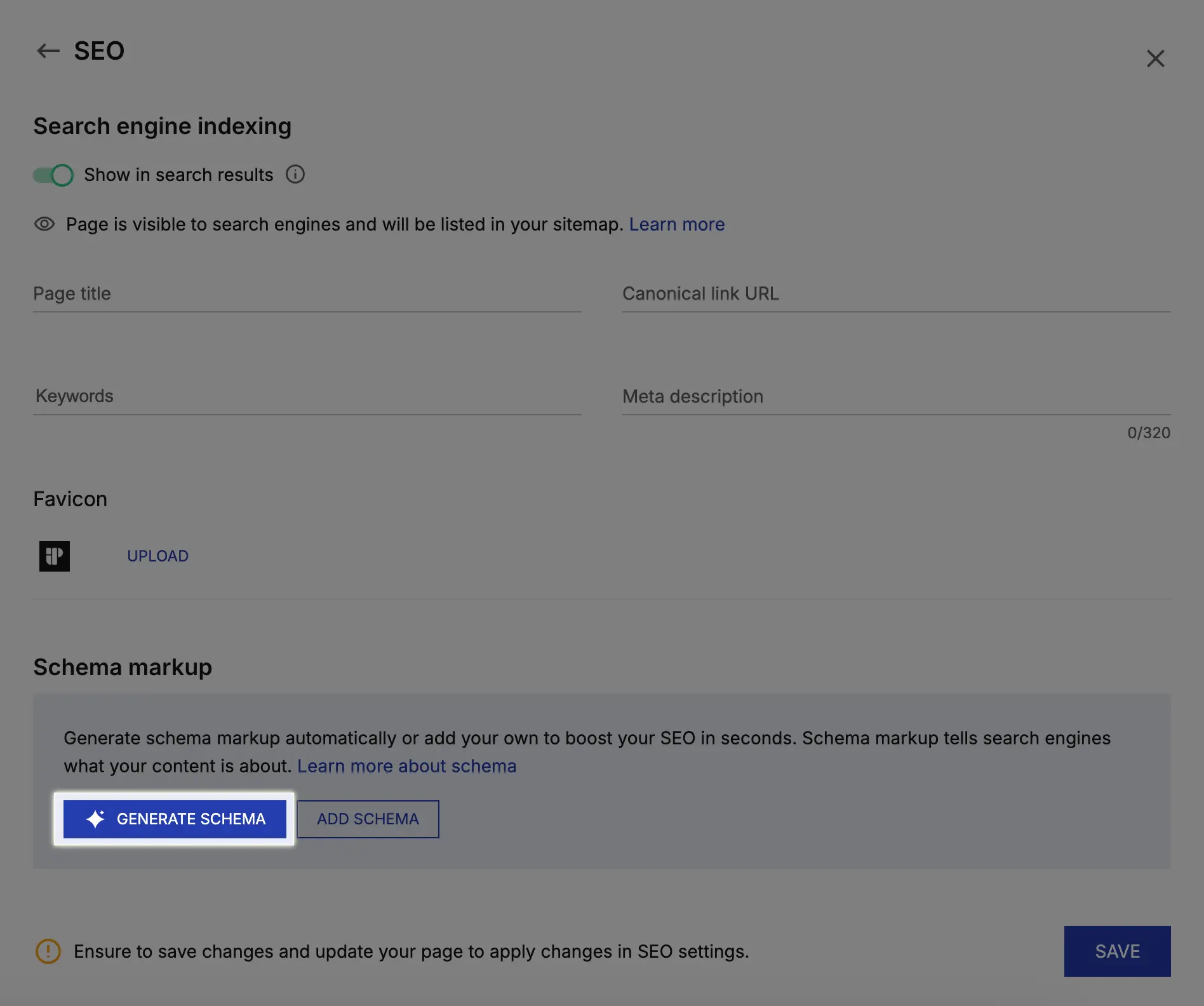

The Role of Generative AI: Adoption and Hallucinations

A recent survey conducted by Marcella Sullivan, a prominent CRO expert, revealed that 83.3% of experimenters now use GenAI tools like ChatGPT and Claude on a daily basis. The primary use cases include analyzing large qualitative data sets, generating low-fidelity prototypes, and writing custom JavaScript for test variants.

Despite this high adoption rate, "AI hallucinations" remain a significant threat. These occur when a model produces fabricated or unsupported insights that nonetheless sound confident. In the context of A/B testing, an AI might "detect" a pattern in data that is actually just random noise, such as a "winning" segment that appears only because dozens of segments were compared. To mitigate this, the prevailing practice is a "Human-in-the-Loop" (HITL) requirement: while AI can draft variants and summarize data, human judgment is required to declare a winner and to approve any code that goes into production.
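
One common way noise masquerades as insight is the multiple-comparisons problem: an automated analysis that slices results into many segments will often find one that looks significant. The sketch below repeats a hypothetical scan of A/A data (no true difference anywhere) split into 20 segments and counts how often at least one segment clears p < 0.05, with and without a Bonferroni correction. The segment count, sample sizes, and conversion rate are arbitrary assumptions.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided pooled two-proportion z-test p-value."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def segment_scan(segments=20, users=300, rate=0.20, alpha=0.05, reps=200, seed=7):
    """Repeat an automated scan of A/A data split into segments; return how often
    at least one segment looks significant, uncorrected and Bonferroni-corrected."""
    rng = random.Random(seed)
    naive_any = corrected_any = 0
    for _ in range(reps):
        p_values = []
        for _ in range(segments):
            conv_a = sum(rng.random() < rate for _ in range(users))
            conv_b = sum(rng.random() < rate for _ in range(users))
            p_values.append(p_value(conv_a, users, conv_b, users))
        smallest = min(p_values)
        naive_any += smallest < alpha
        corrected_any += smallest < alpha / segments  # Bonferroni threshold
    return naive_any / reps, corrected_any / reps


naive_rate, corrected_rate = segment_scan()
print(f"Scans with at least one spurious 'insight': {naive_rate:.0%}")
print(f"Same, after Bonferroni correction:          {corrected_rate:.0%}")
```

For 20 independent comparisons, the chance of at least one false positive is 1 - 0.95^20 ≈ 0.64, and the uncorrected rate in the simulation should land close to that; dividing the threshold by the number of comparisons brings it back near 5%. A reviewer asking "how many slices did the model look at?" is the practical version of this correction.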

Broader Impact and Future Implications for 2027

As we look toward 2027, the experimentation industry is bracing for several major shifts. First, the decline of client-side testing is accelerating. Driven by privacy regulations and the need for better site performance (client-side scripts can slow page rendering and cause a visible "flicker" as the variant loads), server-side testing, where the experiment is managed on the company’s servers rather than in the user’s browser, is becoming the default. This also allows deeper testing of product features and backend algorithms.
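
Server-side testing typically relies on deterministic assignment: the server hashes a stable user identifier together with an experiment identifier, so the same user always lands in the same variant without any browser-side script. The sketch below is one minimal way to do that, assuming SHA-256 hashing into 10,000 buckets and a simple weight split; `assign_variant` and the identifiers are hypothetical, not the API of any experimentation platform.

```python
import hashlib


def assign_variant(user_id: str, experiment_id: str,
                   variants=("control", "treatment"), weights=(50, 50)) -> str:
    """Deterministically map a user to a variant on the server (hypothetical helper)."""
    # Salt with the experiment ID so assignments are independent across experiments.
    key = f"{experiment_id}:{user_id}".encode("utf-8")
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % 10_000  # 0..9999
    total = sum(weights)
    threshold = 0
    for variant, weight in zip(variants, weights):
        threshold += weight * 10_000 // total  # cumulative bucket boundary
        if bucket < threshold:
            return variant
    return variants[-1]  # catches buckets left over by integer rounding


# The same user gets the same variant on every request, with no client-side state.
print(assign_variant("user-123", "checkout-cta"))
```

Salting the hash with the experiment ID keeps assignments independent across experiments, so a user in the treatment group of one test is not systematically placed in the treatment group of the next.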

Second, "continuous experimentation" is replacing the traditional "test-and-learn" cycle. In this model, AI-driven systems continuously shift traffic toward the best-performing variants in real time (the multi-armed bandit approach), effectively blurring the line between a "test" and "personalization."
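
Continuous allocation of this kind is usually implemented as a multi-armed bandit. The sketch below simulates Thompson sampling with Beta-Bernoulli posteriors: for each visitor it draws a plausible conversion rate for every variant from its posterior and serves the variant with the highest draw. The three "true" conversion rates are hypothetical and known only to the simulator; a production system would also need to handle delayed conversions and shifting traffic, which this sketch ignores.

```python
import random


def thompson_sampling(true_rates, visitors=20_000, seed=1):
    """Simulate Beta-Bernoulli Thompson sampling; returns visitors served per variant."""
    rng = random.Random(seed)
    k = len(true_rates)
    successes = [0] * k
    failures = [0] * k
    for _ in range(visitors):
        # Draw one plausible conversion rate per variant from its Beta(1+s, 1+f) posterior.
        samples = [rng.betavariate(1 + successes[i], 1 + failures[i]) for i in range(k)]
        arm = max(range(k), key=samples.__getitem__)  # serve the highest draw
        # The simulator (not the algorithm) knows the true rate and draws the outcome.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [successes[i] + failures[i] for i in range(k)]


# Hypothetical variants with true conversion rates of 3%, 6%, and 4.5%.
traffic = thompson_sampling([0.030, 0.060, 0.045])
print(traffic)
```

The design trade-off mirrors the blurring described above: the bandit sends most traffic to the leading variant and so loses less revenue during the test, but because allocation depends on earlier outcomes, it yields a less precise and harder-to-interpret estimate of how much better that variant is than a fixed 50/50 split would.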

The broader economic implication of these trends is a widening gap between companies that treat experimentation as a core competency and those that treat it as a peripheral task. Organizations that invest in clean data, rigorous statistical frameworks, and human-AI collaboration are seeing significantly higher returns on their digital investments than those relying on automated "quick wins."

In conclusion, the state of A/B testing in 2026 is defined by a return to fundamentals. While the tools have become more sophisticated, the core requirement remains the same: a relentless focus on the truth, backed by data that has been scrutinized, triangulated, and validated through a disciplined process. The future belongs not to the fastest testers, but to the most rigorous ones.