The digital landscape is undergoing a profound transformation, with search engine optimization (SEO) at the forefront of this shift. As highlighted in the April 2026 SEO Update by Yoast, featuring experts Carolyn Shelby and Alex Moss, the month saw significant advancements in Agentic AI, stringent Google spam and core updates, and a definitive declaration that simply producing more content is no longer a viable strategy – and can even be detrimental. This comprehensive recap delves into the critical developments shaping the future of search, emphasizing Google’s pivot towards an AI-agent manager model and the renewed importance of high-quality, authoritative content.

The discussions underscored a pivotal moment for webmasters, content creators, and digital marketers. Google’s continuous integration of artificial intelligence is not merely an enhancement but a fundamental restructuring of how information is accessed and processed online. This paradigm shift demands a re-evaluation of traditional SEO tactics, pushing industry professionals to adapt to a web increasingly defined by AI-driven interactions and sophisticated content evaluation algorithms. The April 2026 update served as a crucial bellwether, signaling a future where adaptability, authenticity, and technical acumen in an AI-first environment will dictate digital success.

Chronology of Key Developments: March-April 2026

The period leading up to and including April 2026 was marked by a flurry of critical updates and announcements from Google, underscoring a concerted effort to refine search quality and integrate advanced AI capabilities. These developments were not isolated events but rather pieces of a larger strategy aimed at creating a more helpful, efficient, and intelligent search ecosystem.

March 2026: The Double-Edged Rollout

The month began with Google initiating two significant updates: the March 2026 spam update and the March 2026 core update. These rollouts, which concluded in April, were widely anticipated and had substantial ramifications across the web. The spam update specifically targeted manipulative practices and low-value content, building on Google’s long-standing commitment to improving search results hygiene. This included a renewed focus on identifying and penalizing content created solely for ranking purposes, doorway pages, and sites engaging in aggressive ad placements or cloaking. Initial reports from the SEO community indicated significant volatility, with many sites experiencing drastic shifts in rankings, both positive and negative, as Google’s algorithms became more adept at discerning genuine value from manufactured content.

Concurrently, the March 2026 core update represented a broad adjustment to Google’s ranking algorithms. While the specifics of core updates are rarely disclosed, their purpose is generally to improve the overall relevance and quality of search results. Industry analysts speculated that this update further emphasized factors like E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness), user experience signals, and the helpfulness of content. The combination of these two updates sent a clear message: Google was intensifying its efforts to reward high-quality, user-centric content while aggressively demoting anything perceived as spammy or unhelpful, regardless of how technically optimized it might be. This period saw many webmasters scrambling to audit their content strategies and technical SEO practices in light of the new enforcement levels.

April 2026: The AI Agent Acceleration

Following the foundational shifts of March, April 2026 brought a surge of AI-centric news, cementing Google’s direction towards an "AI agent manager." The introduction of a new Google-agent user agent was a critical signal, indicating more explicit support for AI-driven crawling and interaction. This wasn’t merely a new bot but a dedicated agent designed to understand and interact with the web in a more sophisticated, task-oriented manner, moving beyond traditional indexing.

Simultaneously, proposals like WebMCP (Web Model Context Protocol) gained traction, aiming to standardize how AI agents interact with websites. This initiative reflects a broader industry recognition of the need for clear protocols as AI agents become more prevalent, preventing abuse and ensuring controlled access to web resources. Google leadership, in various public statements throughout the month, reiterated their vision of search evolving into an "AI agent manager," where users delegate tasks to AI, and the AI, in turn, orchestrates interactions with various web services and information sources to fulfill those tasks. This evolving vision marks a significant departure from the traditional "10 blue links" model, promising a more interactive and personalized search experience.

These chronological developments collectively painted a picture of a search ecosystem in rapid flux, driven by Google’s dual objectives: enhancing search quality through rigorous content enforcement and pioneering the next generation of AI-powered interaction.

The Rise of Agentic AI in Search

A cornerstone of the April 2026 SEO Update was the extensive discussion surrounding Agentic AI and its burgeoning role in Google Search. The introduction of a new Google-agent user agent marks a significant milestone, moving beyond the conventional Googlebot, which primarily crawls and indexes content to answer human search queries. This specialized agent suggests a future where Google’s AI systems will not merely fetch information but actively engage with websites to perform tasks, synthesize answers, and manage complex queries on behalf of users.

Background and Context: The concept of "agentic AI" refers to autonomous AI systems capable of understanding goals, planning actions, executing them, and learning from the outcomes. For Google, this translates into search evolving from a directory of information to a proactive assistant. Historically, Google has gradually integrated AI through features like RankBrain, BERT, and MUM, which enhance understanding of language and context. However, the explicit Google-agent user agent signals a more direct and interactive role for AI. This is a strategic move by Google to stay ahead in the rapidly evolving AI landscape, competing with other AI-powered assistants and platforms.
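Before any protocol work, a practical first step is checking whether agent traffic reaches a site at all. The sketch below tallies requests by crawler family from access-log lines in the common "combined" format, where the user agent is the last double-quoted field. It assumes the new agent's user-agent string contains the token `Google-Agent`; that token and the sample log lines are illustrative assumptions, so confirm the exact string in Google’s crawler documentation. Because user-agent strings are easy to spoof, Google also documents verifying its crawlers with a reverse DNS lookup before trusting a match.

```python
import re
from collections import Counter

# (substring to look for, label). "google-agent" is an ASSUMED token for the
# new agent; check Google's crawler documentation for the real string.
# It is checked first in case the agent's string also mentions Googlebot.
AGENT_TOKENS = [
    ("google-agent", "Google-Agent (assumed token)"),
    ("googlebot", "Googlebot"),
]

# In the combined log format, the user agent is the last double-quoted field.
QUOTED_FIELD = re.compile(r'"([^"]*)"')


def classify(line: str) -> str:
    """Return a crawler label for one combined-format access-log line."""
    fields = QUOTED_FIELD.findall(line)
    if not fields:
        return "unparsed"
    user_agent = fields[-1].lower()
    for token, label in AGENT_TOKENS:
        if token in user_agent:
            return label
    return "other"


def tally(lines):
    """Count access-log lines per crawler label."""
    return Counter(classify(line) for line in lines)


if __name__ == "__main__":
    # Illustrative log lines; the Google-Agent user-agent string is made up.
    sample = [
        '66.249.66.1 - - [10/Apr/2026:10:00:00 +0000] "GET /menu HTTP/1.1" 200 512 "-" '
        '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
        '203.0.113.7 - - [10/Apr/2026:10:00:05 +0000] "GET /book HTTP/1.1" 200 128 "-" '
        '"Mozilla/5.0 (compatible; Google-Agent)"',
        '198.51.100.2 - - [10/Apr/2026:10:00:09 +0000] "GET / HTTP/1.1" 200 2048 "-" '
        '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    ]
    for label, count in tally(sample).most_common():
        print(f"{label}: {count}")
```

A zero count for the agent label means either no such traffic or a different token than the one assumed here.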

Implications of an "AI Agent Manager": Google’s CEO has articulated a vision where search becomes an "AI agent manager." This implies that users will increasingly pose complex questions or tasks directly to Google’s AI, which will then orchestrate a series of interactions with various online resources—websites, APIs, databases—to fulfill the request. For example, instead of searching for "restaurants near me" and then navigating through multiple sites, an AI agent might directly book a table based on user preferences and availability, drawing information from relevant platforms. This fundamental shift redefines the user’s interaction with the web, making search more transactional and less purely informational.

Standardizing AI Interaction (WebMCP): The emergence of proposals like WebMCP (Web Model Context Protocol) is a direct response to this evolving AI landscape. Just as robots.txt governs crawler access, WebMCP aims to provide a standardized framework for websites to declare how AI agents can interact with their content, what data they can access, and what actions they are permitted to perform. This is crucial for maintaining website control, preventing unintended AI interactions, and ensuring data privacy. Without such protocols, the proliferation of AI agents could lead to uncontrolled data scraping, misinterpretation of content, and security vulnerabilities. WebMCP seeks to establish a clear contract between websites and AI agents, fostering a more structured and responsible AI-driven web.
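The robots.txt comparison is a useful baseline, because robots.txt is the per-agent access mechanism sites already have today. The sketch below uses Python's standard `urllib.robotparser` to check what a given agent token may fetch. The `Google-Agent` group is a hypothetical example, and whether any particular agent honors robots.txt is up to its operator. One caveat: `robotparser` applies the first matching rule within a group, whereas Google and RFC 9309 use the most specific (longest) matching rule, so the `Allow` line is placed before the broader `Disallow` to make both interpretations agree.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt. "Google-Agent" is an ASSUMED token used for
# illustration. Allow precedes Disallow so first-match (robotparser) and
# longest-match (RFC 9309) evaluation give the same answers.
ROBOTS_TXT = """\
User-agent: Google-Agent
Allow: /menu/
Disallow: /

User-agent: *
Disallow: /checkout/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text directly, without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rp = build_parser(ROBOTS_TXT)
    for agent, url in [
        ("Google-Agent", "https://example.com/menu/today"),
        ("Google-Agent", "https://example.com/account"),
        ("Googlebot", "https://example.com/checkout/pay"),
        ("Googlebot", "https://example.com/account"),
    ]:
        print(f"{agent:13} {url:35} allowed={rp.can_fetch(agent, url)}")
```

Agents without a dedicated group fall back to the `*` group, which is why Googlebot is blocked only from `/checkout/` here.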

Actionable Takeaway: Webmasters and developers must begin to think about their websites as not just human-facing interfaces but also as API-like endpoints for AI agents. This involves:

- Enhanced Structured Data: Moving beyond basic schema markup to provide highly granular, unambiguous data that AI agents can easily parse and act upon.

- API Readiness: Considering how critical functionalities or data points could be exposed via APIs for trusted AI agent interaction.

- Agent Permissions: Implementing or preparing for protocols like WebMCP to explicitly define what AI agents are allowed to do on a site.

- Content Atomization: Breaking down content into easily digestible, self-contained units that AI agents can readily extract and synthesize.
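Structured data and content atomization meet naturally in schema.org markup: each self-contained question-and-answer unit can be expressed as its own `Question` with an `acceptedAnswer`. The sketch below serializes such units into a `FAQPage` JSON-LD block using only the standard library. The Q&A pairs are placeholders, and `FAQPage` is one schema.org type among many. Note that Google limited FAQ rich results to well-known government and health sites in 2023, so the point here is unambiguous, machine-readable structure rather than a guaranteed rich result.

```python
import json


def faq_jsonld(pairs):
    """Serialize (question, answer) pairs as a schema.org FAQPage JSON-LD object."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data) -> str:
    """Wrap JSON-LD in the <script> element a page embeds it with."""
    # Escape "</" so answer text cannot prematurely close the script element;
    # "<\/" is still valid JSON and decodes back to "</".
    payload = json.dumps(data, ensure_ascii=False, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    units = [  # placeholder content units
        ("Do you take reservations?", "Yes, for parties of up to eight."),
        ("Is there parking?", "Street parking only."),
    ]
    print(script_tag(faq_jsonld(units)))
```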

The move towards Agentic AI is not a distant future but an immediate reality. Websites that fail to adapt their technical infrastructure and content strategy to accommodate these intelligent agents risk being bypassed in the evolving search ecosystem.

Google’s AI Capabilities and Efficiency: TurboQuant and Task-Based Search

Beyond the architectural shift towards agentic AI, Google continues to push the boundaries of AI capabilities and efficiency, as evidenced by the introduction of TurboQuant and the expansion of task-based features in AI Mode. These innovations collectively aim to make AI faster, more powerful, and more seamlessly integrated into the user experience.

TurboQuant: Redefining AI Efficiency: Google’s announcement of TurboQuant represents a significant leap in AI model compression. AI models, particularly large language models (LLMs), are notoriously resource-intensive, requiring substantial computational power and memory. TurboQuant, a novel approach to quantization, allows for extreme compression of these models without a proportional loss in performance.

- Background: Quantization in AI involves reducing the precision of numerical values used in a model (e.g., from 32-bit floating-point numbers to 8-bit integers). This makes models smaller and faster, but traditionally, aggressive compression can degrade accuracy.

- Significance: TurboQuant’s breakthrough means Google can deploy more sophisticated AI models across a wider range of devices and services, from data centers to edge devices, with reduced latency and lower operational costs. This efficiency is critical for scaling AI services to billions of users and for powering complex agentic AI systems that perform multiple operations in real-time. It enables Google to run more powerful AI processes on more queries, more frequently, making the entire search infrastructure more responsive and intelligent.
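The quantization idea in the background bullet can be made concrete. The sketch below applies plain symmetric per-tensor int8 quantization to a list of floats: one scale factor maps the largest magnitude to 127, each value is rounded to an integer code, and dequantizing multiplies the codes back by the scale. This is the textbook baseline that bullet describes, not TurboQuant itself, whose method is not detailed here; the weights are made-up values.

```python
def quantize_int8(values):
    """Symmetric per-tensor int8 quantization. Returns (codes, scale)."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # A single scale maps the largest magnitude to 127; avoid dividing by zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the symmetric int8 range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize_int8(codes, scale):
    """Approximately reconstruct the original values from int8 codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.82, -1.27, 0.003, 0.5, -0.049]  # made-up example values
    codes, scale = quantize_int8(weights)
    restored = dequantize_int8(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    # Stored as float32, each weight takes 4 bytes; as an int8 code it takes 1,
    # plus one shared scale. Rounding error per value is at most scale / 2.
    print("codes:", codes)
    print(f"scale: {scale:.5f}, worst error: {worst:.5f}")
```

Note how the small value 0.003 collapses to code 0: with one scale for the whole tensor, values far below the maximum lose the most relative precision, which is why more aggressive schemes need smarter techniques to avoid the accuracy loss mentioned above.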

Expanding Task-Based Features in AI Mode: Concurrent with efficiency gains, Google is actively expanding task-based features within its AI Mode search experiences. This development directly aligns with the "AI agent manager" vision, moving users from simply retrieving information to directly accomplishing tasks through search.

- Context: AI Mode in search is designed to provide more interactive, conversational, and direct answers, often synthesizing information from multiple sources. Task-based features allow users to express intentions like "plan a trip," "compare products," or "summarize a document," and the AI then executes these tasks, potentially interacting with third-party services.

- Implications for User Behavior: As AI becomes more adept at handling complex tasks, user expectations will shift dramatically. Users will anticipate that search can not only provide answers but also facilitate actions. This means less navigating through search results pages and more direct engagement with AI-generated solutions. This shift will further reduce clicks to traditional websites for many informational and transactional queries, especially those that AI can confidently fulfill.

Actionable Takeaway: Businesses and content creators need to anticipate a future where AI handles more direct user interactions.

- Optimize for Direct Answers and Actions: Ensure your content is structured to provide clear, concise answers to common questions and supports direct calls to action that an AI agent could understand and execute.

- Focus on Value Beyond Information: If AI is providing summary answers, websites must offer deeper insights, unique tools, community features, or specific transactional capabilities that differentiate them.

- Prepare for API Integration: As task-based search grows, services that can integrate directly with Google’s AI (e.g., booking systems, e-commerce APIs) will gain a significant advantage.

- Monitor AI-Generated Content: Understand how AI systems are synthesizing information related to your brand and industry, and ensure your authoritative content is being correctly represented.
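One established way to describe an action in machine-readable form is schema.org's `potentialAction`. The sketch below marks up a restaurant with a `ReserveAction` whose `target` is an `EntryPoint` URL template, echoing the table-booking example earlier in this recap. The restaurant, URLs, and template variables are hypothetical, and nothing here implies that Google’s AI Mode consumes this particular markup; it only shows how an action can be declared unambiguously.

```python
import json


def restaurant_with_reservation(name, url, booking_template):
    """Build schema.org Restaurant JSON-LD that declares a ReserveAction."""
    return {
        "@context": "https://schema.org",
        "@type": "Restaurant",
        "name": name,
        "url": url,
        "potentialAction": {
            "@type": "ReserveAction",
            "target": {
                "@type": "EntryPoint",
                # urlTemplate is an RFC 6570 URI template; the variable names
                # used in the demo below are hypothetical.
                "urlTemplate": booking_template,
                "actionPlatform": [
                    "https://schema.org/DesktopWebPlatform",
                    "https://schema.org/MobileWebPlatform",
                ],
            },
            "result": {"@type": "FoodEstablishmentReservation", "name": "Table reservation"},
        },
    }


if __name__ == "__main__":
    data = restaurant_with_reservation(
        "Example Bistro",  # hypothetical business
        "https://bistro.example/",
        "https://bistro.example/book?date={date}&party={party_size}",
    )
    print(json.dumps(data, indent=2))
```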

The synergy between increased AI efficiency and expanded task-based functionalities signifies a profound evolution in how users interact with the digital world, demanding a proactive and adaptive approach from anyone seeking visibility online.

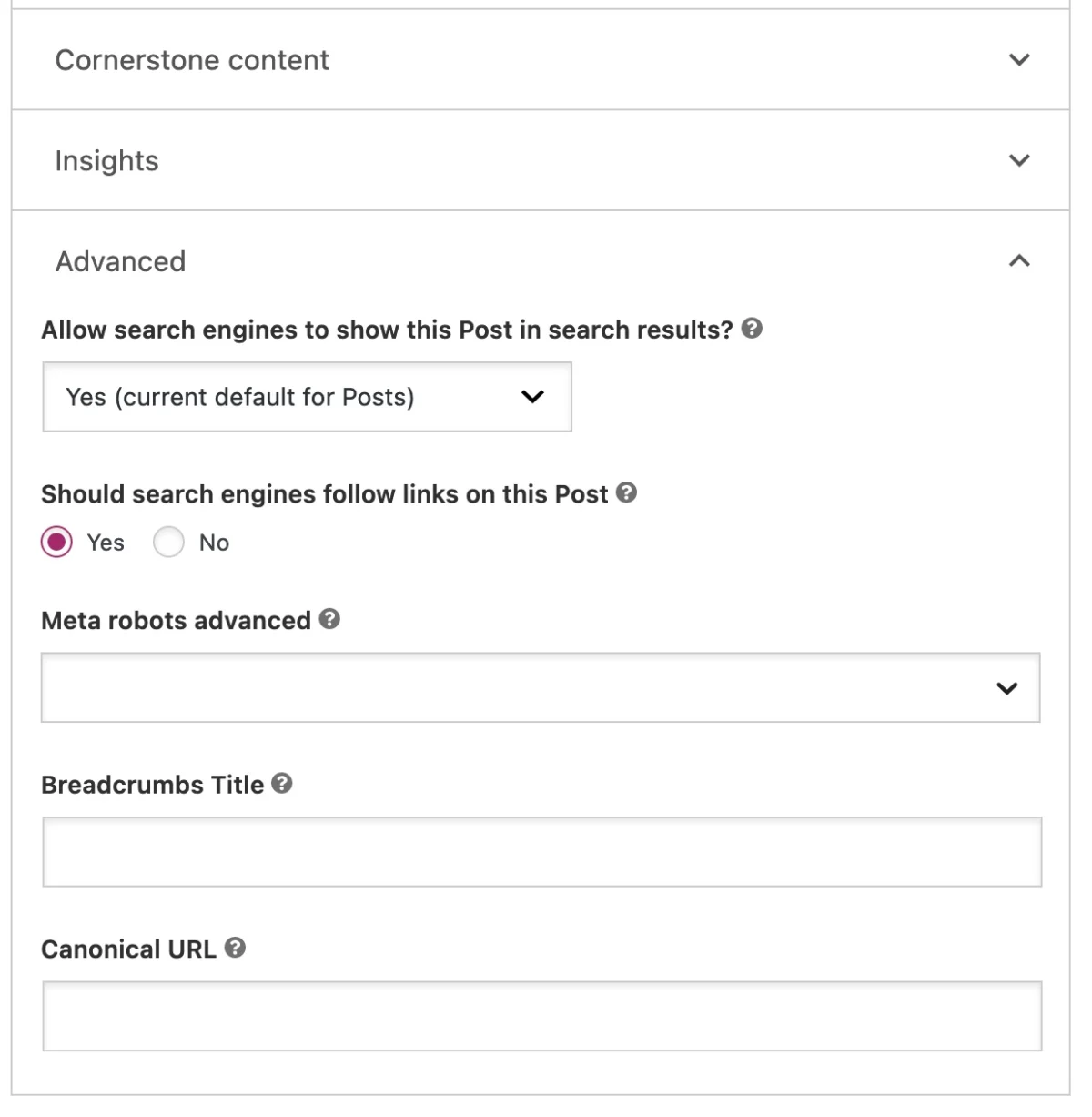

Structured Data and Documentation for AI-First Search

The evolution towards AI-first search necessitates a parallel evolution in how content is structured and interpreted. Google’s recent updates to structured data and documentation reflect this need, aiming to provide clearer signals for AI systems and ensure content authenticity.

AI Bot Labels for Forum and Q&A Structured Data: A significant update involved adding AI bot labels to forum and Q&A structured data. This move addresses the growing concern over the proliferation of AI-generated content in user-contributed sections of websites.

- Background: As generative AI tools become more accessible, distinguishing between human and AI-generated contributions in forums, comment sections, and Q&A platforms has become increasingly challenging. This can erode trust, dilute the quality of discussions, and make it difficult for search engines to ascertain the genuine voice of a community.

- Significance: By allowing webmasters to explicitly label AI-generated contributions, Google aims to provide its algorithms with crucial context. This helps search engines to:

  - Assess Content Authenticity: Differentiate between genuine human interactions and automated responses.

  - Improve Quality Signals: Potentially prioritize human-generated content for certain types of queries where authenticity and personal experience are paramount.

  - Combat Misinformation: Identify and filter out AI-generated content used for spam or malicious purposes.

  - Enhance Transparency: Give users and search engines a clearer understanding of the origin of the information.

This update underscores Google’s commitment to quality and transparency, especially as AI blurs the lines of content creation.

"Read More" Deep Link Best Practices: Google also updated its documentation with "read more" deep link best practices. While seemingly minor, this update holds significant weight in an AI-driven search environment.

- Context: Deep links guide users directly to specific sections within a page, enhancing user experience. In the context of AI-generated summaries and direct answers, these links become critical for users who wish to delve deeper into a topic or verify the source of information provided by an AI.

- Significance: As AI systems synthesize answers, they often present a condensed version of information. Providing clear, semantically relevant "read more" deep links ensures that:

  - Users Can Easily Access the Original Context: If an AI answers a query, a deep link allows the user to click through to the precise section of the source page that provided that information.

  - AI Systems Can Better Understand Content Structure: Clear deep linking practices help AI agents understand the hierarchical organization of content within a page, improving their ability to extract relevant snippets.

  - Attribution and Authority Are Maintained: It reinforces the connection between the AI-generated summary and its authoritative source, a crucial aspect of building trust and validating information.
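A deep link can target a section by its `id` (`#pricing`) or, in browsers that support the URL Fragment Text Directives specification, a specific passage via `#:~:text=`. The sketch below builds both kinds of link. It illustrates the URL mechanics only and is not a description of how Google constructs its "read more" links; the page URL, section id, and passage are hypothetical.

```python
from urllib.parse import quote


def encode_text_directive(text: str) -> str:
    """Percent-encode a passage for use in a :~:text= directive."""
    # quote() with safe="" escapes "&" and "," (directive syntax) but leaves
    # "-" alone because it is unreserved; "-" is also directive syntax in
    # text fragments, so it is escaped explicitly.
    return quote(text, safe="").replace("-", "%2D")


def deep_link(page_url: str, section_id: str = "", passage: str = "") -> str:
    """Build a link to a section anchor, a text passage, or both."""
    base = page_url.split("#", 1)[0]  # drop any existing fragment
    fragment = section_id
    if passage:
        fragment += ":~:text=" + encode_text_directive(passage)
    return f"{base}#{fragment}" if fragment else base


if __name__ == "__main__":
    url = "https://example.com/guide"  # hypothetical page
    print(deep_link(url, "pricing"))
    print(deep_link(url, "pricing", "Plans start at $10, billed monthly"))
```

Combining both forms means browsers without text-fragment support still land on the section anchor.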

Actionable Takeaway:

- Implement AI Bot Labels: For any website with user-generated content, diligently apply AI bot labels to clearly distinguish automated contributions. This is a matter of transparency and trust.

- Master Structured Data: Go beyond basic schema.org markup. Utilize all relevant structured data types to provide comprehensive context about your content, authors, and organization. Ensure accuracy and completeness.

- Optimize Internal Linking and Anchors: Pay meticulous attention to internal linking, using descriptive anchor text and ensuring that every section of your content that could be an atomic answer has a direct, stable URL. This can improve the chances of your content being used for deep links by AI.

- Review Documentation: Regularly review Google’s official developer documentation for updates on structured data and best practices, as these will continually evolve with AI advancements.
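For the "direct, stable URL" per section recommended above, one common approach is to derive each heading's `id` from its text and keep it unique within the page. The sketch below is a simple slugifier; its rules (ASCII folding, hyphens, numeric suffixes for repeats) are a design choice, not a Google requirement.

```python
import re
import unicodedata


def slugify(heading: str) -> str:
    """Turn a heading into a lowercase, hyphen-separated ASCII slug."""
    # Fold accented characters to ASCII and drop anything that cannot be folded.
    ascii_text = unicodedata.normalize("NFKD", heading).encode("ascii", "ignore").decode()
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")
    return slug or "section"


def assign_ids(headings):
    """Give every heading a unique id, suffixing repeats with -2, -3, ..."""
    used = set()
    ids = []
    for heading in headings:
        base = slugify(heading)
        candidate, n = base, 1
        # Keep incrementing until the id is unused, so "FAQ", "FAQ", "FAQ 2"
        # cannot collide on "faq-2".
        while candidate in used:
            n += 1
            candidate = f"{base}-{n}"
        used.add(candidate)
        ids.append(candidate)
    return ids


if __name__ == "__main__":
    print(assign_ids(["Pricing & Plans", "Café Menu", "FAQ", "FAQ"]))
```

Once published, an id should stay fixed even if the heading is later reworded; regenerating it from new text would break every existing deep link to that section.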

These updates emphasize that technical cleanliness, clear content labeling, and meticulous structural optimization are no longer just good practices but essential requirements for visibility and trust in an AI-first search ecosystem.

Core Updates, Spam Policies, and Enforcement Tighten

The digital ecosystem witnessed a significant crackdown on low-quality and manipulative content in March and April 2026, as Google completed its March 2026 spam update and core update. These updates, coupled with new spam policies, signaled a renewed and intensified commitment from Google to improve search quality and user experience.

The March 2026 Spam Update: This update specifically targeted tactics designed to manipulate search rankings without providing genuine value to users. Google’s focus extended to:

- Scaled Content Abuse: The use of automation to generate large volumes of low-quality, unoriginal content primarily for SEO purposes. This includes AI-generated content that lacks expertise, originality, or helpfulness.

- Site Reputation Abuse: Instances where reputable sites host low-value, third-party content (often from affiliates or advertisers) to leverage their domain authority for ranking purposes.

- Expired Domain Abuse: The practice of purchasing expired domains with strong backlink profiles to host new, often unrelated, low-quality content.

- Back Button Hijacking: A newly addressed tactic in which a site manipulates the browser history or uses deceptive redirects so that the back button no longer returns users to the search results, trapping them on the site. This specific policy update highlights Google’s granular approach to identifying and penalizing user-hostile experiences.

The spam update was a clear declaration that Google’s algorithms are becoming increasingly sophisticated at identifying and penalizing these nuanced forms of manipulation. The impact was significant, with many sites that relied on such tactics experiencing sharp declines in visibility.

The March 2026 Core Update: Running concurrently with the spam update, the core update brought broader changes to Google’s ranking algorithms. While less specific than a spam update, core updates generally aim to improve the overall relevance, helpfulness, and trustworthiness of search results. Industry analysis suggested that this update further emphasized:

- E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness): Content from demonstrated experts, backed by real-world experience, and hosted on authoritative, trustworthy domains received a boost.

- User Experience: Sites offering a superior user experience, including fast loading times, intuitive navigation, and minimal intrusive elements, were favored.

- Originality and Depth: Content that provided unique insights, original research, or comprehensive coverage beyond what could be found elsewhere was rewarded.

Improved Spam Reporting Tools: In conjunction with these updates, Google also improved its spam reporting tools, empowering users and webmasters to more easily flag egregious violations. This crowdsourced feedback mechanism provides Google with additional data points to refine its algorithms and expedite manual actions against problematic sites.

Implications: The tightening of core updates and spam policies underscores a pivotal shift. The era of low-cost, scaled, and manipulative content is definitively over. Google’s enforcement is becoming more granular, targeting not just technical manipulation but also the intent and quality of content. This signifies Google’s continued commitment to providing a clean, reliable, and user-centric search experience, particularly as AI becomes more integrated into content creation.

Actionable Takeaway:

- Prioritize Genuine Value: Every piece of content must serve a clear, helpful purpose for the user. Focus on originality, depth, and unique insights that demonstrate real expertise and experience.

- Conduct Content Audits: Regularly audit existing content to identify and remove or significantly improve low-value, thin, or duplicate pages.

- Adhere to Google’s Guidelines: Thoroughly understand and strictly adhere to Google Search Essentials (formerly the Webmaster Guidelines), paying close attention to new or updated spam policies.

- Focus on E-E-A-T: Invest in building demonstrable expertise and authority for your authors and your brand. Showcase credentials, provide author bios, and ensure content is accurate and well-researched.

- Enhance User Experience: Ensure your website is fast, mobile-friendly, easy to navigate, and free from intrusive ads or deceptive practices like back button hijacking.
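The content-audit step can be partly automated to build a review list. The sketch below flags pages that are short (a rough proxy for thin content) or that share most of their three-word shingles with another page (a rough proxy for duplication), using Jaccard similarity. The default 300-word and 0.8 thresholds are arbitrary assumptions, since Google publishes no such cutoffs, and the usage example lowers the word threshold to fit its short placeholder texts. Treat the output as a list for human review, not a verdict on quality.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles (overlapping word sequences) in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |A & B| / |A | B|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def audit(pages: dict, min_words: int = 300, dup_threshold: float = 0.8):
    """Return (thin_urls, near_duplicate_pairs) for a {url: text} mapping."""
    thin = [url for url, text in pages.items() if len(text.split()) < min_words]
    sigs = {url: shingles(text) for url, text in pages.items()}
    dupes = []
    for u, v in combinations(sorted(pages), 2):
        score = jaccard(sigs[u], sigs[v])
        if score >= dup_threshold:
            dupes.append((u, v, round(score, 2)))
    return thin, dupes


if __name__ == "__main__":
    guide = ("Our guide explains how to choose running shoes by checking fit, cushioning, "
             "drop, weight and price before you buy a pair for road or trail running.")
    pages = {  # placeholder pages
        "/shoes-guide": guide,
        "/shoes-guide-copy": guide + " Updated for spring.",
        "/about": "We are a small running shop.",
    }
    thin, dupes = audit(pages, min_words=20)
    print("thin:", thin)
    print("near duplicates:", dupes)
```

Comparing every pair is quadratic in the number of pages; for large sites, techniques such as MinHash are the usual way to approximate the same comparison.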

These stringent updates demand a fundamental re-evaluation of content strategies, emphasizing that quality, authenticity, and a user-first approach are now non-negotiable for sustainable SEO success.

Platforms and Tools Expand AI-Driven Workflows

The integration of AI is not confined to Google’s search algorithms; it is rapidly permeating the entire digital ecosystem, embedding directly into content creation, website management, and monetization workflows. April 2026 saw several key platforms and tool providers launch or preview new AI-driven capabilities, signaling a broad industry shift.

AI in Content Management Systems (CMS):

- Elementor’s Angie: Elementor, a popular WordPress website builder, introduced "Angie, an agentic AI for WordPress." This represents a significant step towards democratizing AI-powered website management. Angie aims to assist users with various tasks, from generating content ideas and writing copy to optimizing layouts and managing website elements, all within the WordPress environment. This integration streamlines workflows for millions of WordPress users, making advanced AI capabilities accessible without extensive coding knowledge.

- Cloudflare’s EmDash: Cloudflare, known for its web performance and security services, introduced EmDash as a WordPress alternative, specifically designed for "agent readiness standards." While details are still emerging, EmDash appears to be a headless CMS or content delivery platform built from the ground up to interact seamlessly with AI agents. This signifies a proactive approach to building infrastructure that is optimized for the future of agentic AI, potentially offering faster content delivery and more robust API access for AI systems than traditional CMS platforms.

Advanced AI Model Releases:

- Anthropic’s Claude Design and Mythos: Anthropic, a leading AI research company, released "Claude Design" and previewed "Mythos." Claude Design likely refers to tools or frameworks for designing more effective and safer AI systems, possibly for applications in creative fields or complex problem-solving. Mythos, still in preview, hints at next-generation AI models that could offer unprecedented capabilities in reasoning, knowledge synthesis, or even creative generation, further pushing the boundaries of what AI can achieve. These developments from Anthropic underscore the rapid pace of fundamental AI research and its potential applications across various industries.

OpenAI’s Commercial AI Integrations:

- AdsBot and ChatGPT Ad Manager: OpenAI, the creator of ChatGPT, was observed testing an "AdsBot" and introduced a ChatGPT ad manager interface. This indicates OpenAI’s move into directly facilitating advertising and monetization through its AI models. AdsBot likely assists in generating ad copy, optimizing campaigns, or even interacting with ad platforms. The ChatGPT ad manager interface suggests a user-friendly way for businesses to leverage ChatGPT’s capabilities for advertising-related tasks, such as creating targeted messages, analyzing ad performance, or automating campaign adjustments. This development points to a future where AI not only helps create content but also directly manages its commercialization.

Implications: These diverse platform and tool advancements collectively illustrate that AI is no longer just a backend technology; it’s becoming an integral part of frontend applications, content creation tools, and even monetization strategies. This embedding of AI directly into workflows has several profound implications:

- Democratization of AI: Advanced AI capabilities are becoming accessible to a broader audience, from individual bloggers to large enterprises, without requiring deep AI expertise.

- Increased Efficiency: AI-driven tools can significantly accelerate content creation, optimization, and management processes, freeing up human resources for more strategic tasks.

- New Competition: The ease of AI content generation could lead to an explosion of content, intensifying the need for genuine quality and originality to stand out.

- Ethical Considerations: The widespread use of AI in content creation and advertising raises new ethical questions regarding authenticity, bias, and responsible use.

Actionable Takeaway:

- Embrace AI Tools Judiciously: Explore and integrate AI tools into your workflows for efficiency gains, but always maintain human oversight to ensure quality, accuracy, and adherence to brand voice.

- Stay Informed on Platform AI: Keep abreast of AI features offered by your CMS (e.g., WordPress, Shopify), hosting providers, and other digital tools.

- Understand AI Monetization: Investigate how AI is shaping advertising and monetization models. Consider how your content and products can be optimized for AI-driven ad platforms.

- Develop AI Literacy: Equip your team with the knowledge to effectively use and critically evaluate AI-generated content and insights.

The pervasive integration of AI into digital workflows signifies a transformative period, demanding continuous learning and strategic adaptation to leverage these powerful tools effectively and ethically.

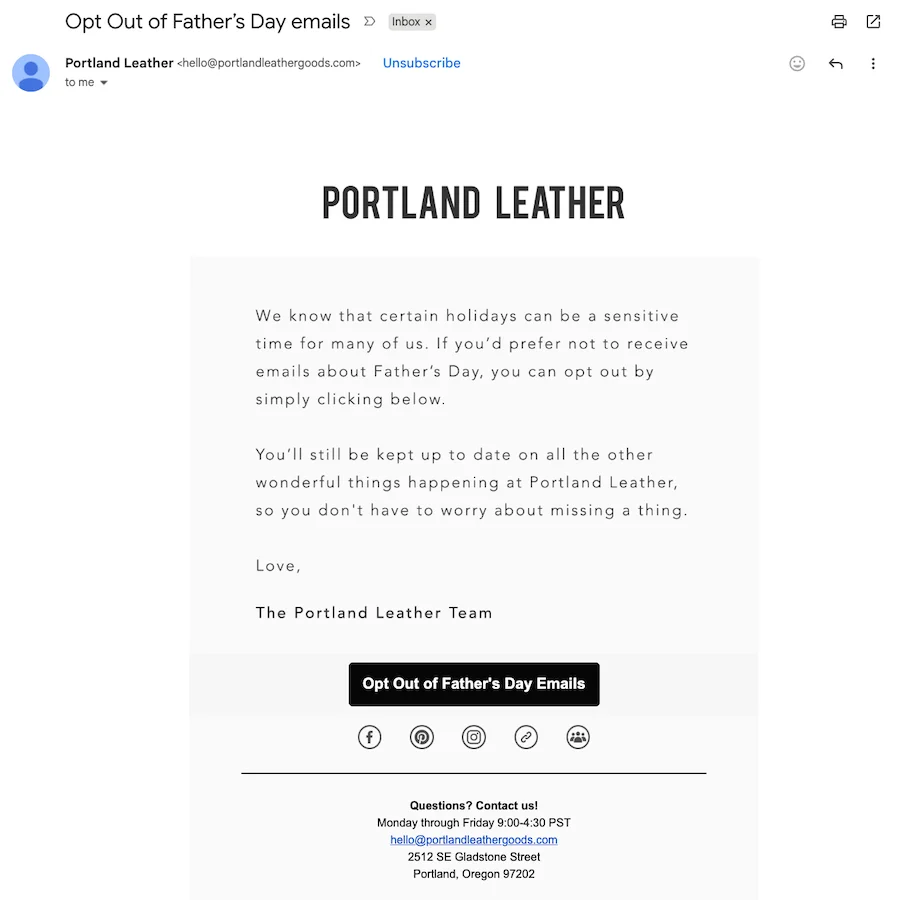

Authority, Trust, and Content Quality Remain Central

Amidst the rapid advancements in AI and the tightening of spam policies, one fundamental truth resonated throughout the April 2026 SEO Update: the paramount importance of authority, trust, and content quality. Google reinforced that "commodity content does not perform well," a statement that carries even greater weight in an AI-driven search landscape.

The Failure of Commodity Content:

- Definition: Commodity content refers to easily replicable, generic, or superficial information that offers little unique value, perspective, or depth. It often relies on rephrasing existing information, lacks original research, or is generated at scale without genuine expertise.

- Why it Fails: In an era where AI systems can quickly synthesize vast amounts of information, commodity content is redundant. Google’s algorithms, now significantly enhanced by AI, are highly effective at identifying and devaluing content that merely rehashes what’s already available. Such content fails to demonstrate E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) and provides no compelling reason for a user to choose it over another source or an AI-generated summary. The March 2026 core and spam updates specifically targeted this type of low-value content, leading to significant demotions for sites relying on it.

The Enduring Power of Authority, Freshness, and First-Party Signals: Broader industry analysis consistently highlights the enduring importance of authority, freshness, and first-party signals. These elements are not just ranking factors; they are foundational to building trust, both with users and with search engines.

- Authority: Content from sources demonstrating clear expertise and authoritativeness in their field is heavily favored. This includes content written by recognized experts, backed by credible research, or published by reputable organizations. As AI systems synthesize answers, they rely more heavily on trusted, differentiated sources to ensure the accuracy and reliability of the information they present.

- Freshness: For topics where information changes rapidly (e.g., news, technology, current events), freshness remains critical. Google prioritizes recently updated or published content to ensure users receive the most current information. This doesn’t mean constantly updating every page, but rather ensuring that time-sensitive content is regularly reviewed and refreshed.

- First-Party Signals: These are unique insights, data, or experiences that originate directly from a brand or individual. Examples include proprietary research, unique customer data, original photography, exclusive interviews, or genuine user-generated content (e.g., reviews, forum discussions). First-party signals are inherently difficult for AI to replicate or synthesize from other sources, making them a powerful differentiator. They provide a unique value proposition that commodity content cannot match.

Implications: In an AI-driven world, where information is readily available and synthesized, the premium on truly valuable, trustworthy, and unique content has never been higher. Websites that invest in genuine expertise, original contributions, and a distinct voice will stand out. Those that continue to churn out generic content will find themselves increasingly marginalized by both Google’s algorithms and sophisticated AI agents.

Actionable Takeaway:

- Invest in Expertise: Commission content from genuine experts, showcase their credentials, and ensure your content reflects real-world experience.

- Cultivate Originality: Prioritize original research, unique data, exclusive insights, and distinct perspectives. Avoid simply rephrasing or aggregating existing information.

- Build Brand Authority: Focus on becoming a recognized leader or trusted source in your niche. This involves consistent publication of high-quality content, thought leadership, and positive brand mentions.

- Leverage First-Party Data: Use your unique customer data, internal research, or proprietary insights to create content that no one else can replicate.

- Regularly Update Evergreen Content: Ensure that your foundational, high-value content remains accurate and up-to-date, demonstrating its ongoing relevance and freshness.
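For the review cadence in the last takeaway, a sitemap's `<lastmod>` dates are a convenient starting inventory. The sketch below parses a sitemap with the standard library and lists URLs not modified within a given number of days. The sample sitemap and the 365-day cutoff are assumptions, and `<lastmod>` only reflects what the site itself reports, so the output is a review queue, not proof that a page is out of date.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timedelta, timezone

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap used for the demo and tests.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/guide</loc><lastmod>2024-01-10</lastmod></url>
  <url><loc>https://example.com/news</loc><lastmod>2026-04-02T09:30:00Z</lastmod></url>
  <url><loc>https://example.com/about</loc></url>
</urlset>"""


def parse_lastmod(value: str) -> datetime:
    """Parse a <lastmod> value; date-only values are treated as midnight UTC.

    Handles full dates and datetimes; year-only or year-month values raise ValueError.
    """
    parsed = datetime.fromisoformat(value.strip().replace("Z", "+00:00"))
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def stale_urls(sitemap_xml: str, max_age_days: int, now: datetime):
    """Return (loc, lastmod) pairs older than max_age_days; entries without lastmod are skipped."""
    root = ET.fromstring(sitemap_xml)
    cutoff = now - timedelta(days=max_age_days)
    stale = []
    for entry in root.findall("sm:url", SITEMAP_NS):
        loc = (entry.findtext("sm:loc", default="", namespaces=SITEMAP_NS) or "").strip()
        lastmod = (entry.findtext("sm:lastmod", default="", namespaces=SITEMAP_NS) or "").strip()
        if loc and lastmod and parse_lastmod(lastmod) < cutoff:
            stale.append((loc, lastmod))
    return stale


if __name__ == "__main__":
    today = datetime.now(timezone.utc)
    for loc, lastmod in stale_urls(SAMPLE_SITEMAP, max_age_days=365, now=today):
        print(f"review: {loc} (last modified {lastmod})")
```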

The message is unequivocal: in the age of AI, quality, authenticity, and demonstrated authority are the bedrock of digital success. Websites that prioritize these attributes will be the ones that AI systems trust and reference, securing their position as valuable sources in the evolving web.

Measurement and Reporting Shifting Toward AI Visibility

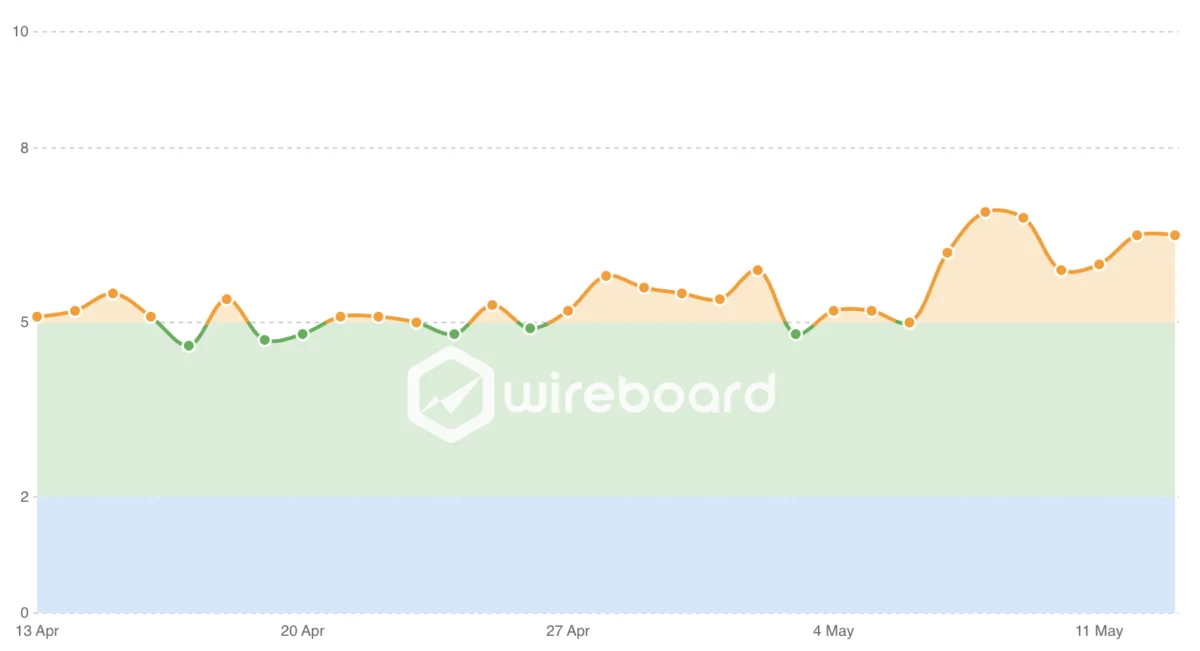

As the search landscape transforms into an AI-first environment, traditional SEO metrics are becoming increasingly insufficient to gauge true online success. The April 2026 SEO Update highlighted a critical shift in measurement and reporting: the move towards "AI visibility."

Why Traditional Rankings Are Less Relevant: For decades, the holy grail of SEO has been ranking #1 on Google for target keywords. However, with the rise of AI-generated answers, direct task completion, and rich snippets that often provide full answers without a click, a #1 ranking no longer guarantees traffic or influence. If an AI agent synthesizes an answer or performs a task directly, the user may never even see the traditional search results page, let alone click on a link.
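One simple way to start tracking "AI visibility" is to record, for a fixed set of prompts, which URLs an AI answer cites, and then compute the share of prompts whose answer cites your domain. The sketch below does only that arithmetic. How citations are collected (manually or with a monitoring tool) is outside its scope, the prompts and URLs are invented placeholders, and matching on the exact hostname (ignoring a leading `www.`) is a simplifying choice that does not count subdomains.

```python
from urllib.parse import urlparse


def cited_domains(urls):
    """Normalize cited URLs to bare hostnames, dropping a leading 'www.'."""
    hosts = set()
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        hosts.add(host[4:] if host.startswith("www.") else host)
    hosts.discard("")  # ignore entries that did not parse as URLs
    return hosts


def citation_share(observations, domain):
    """Fraction of tracked prompts whose AI answer cites `domain`.

    `observations` maps each prompt to the list of URLs its answer cited.
    """
    if not observations:
        return 0.0
    hits = sum(domain in cited_domains(urls) for urls in observations.values())
    return hits / len(observations)


if __name__ == "__main__":
    observations = {  # hypothetical tracking data
        "best trail running shoes": ["https://www.example.com/shoes-guide", "https://other.example/review"],
        "how to lace running shoes": ["https://other.example/lacing"],
        "running shoe size chart": ["https://example.com/sizes"],
        "are carbon plates worth it": [],
    }
    print(f"example.com cited in {citation_share(observations, 'example.com'):.0%} of tracked prompts")
```

Tracking the same prompt set over time makes the share comparable from month to month, which a single snapshot cannot do.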