The landscape of digital commerce has shifted from a focus on mere presence to a rigorous pursuit of optimization. For modern enterprises, the question is no longer whether to conduct A/B testing, but rather how to structure the experimentation engine that drives it. The primary distinction between A/B testing services and software tools lies in the locus of control and expertise: services provide expert-led, turnkey experimentation programs, while software tools empower internal teams to build, manage, and scale their own testing ecosystems. This strategic choice dictates not only the speed of initial results but also the long-term accumulation of institutional knowledge and data sovereignty.

The Fundamental Dichotomy: Ownership vs. Execution

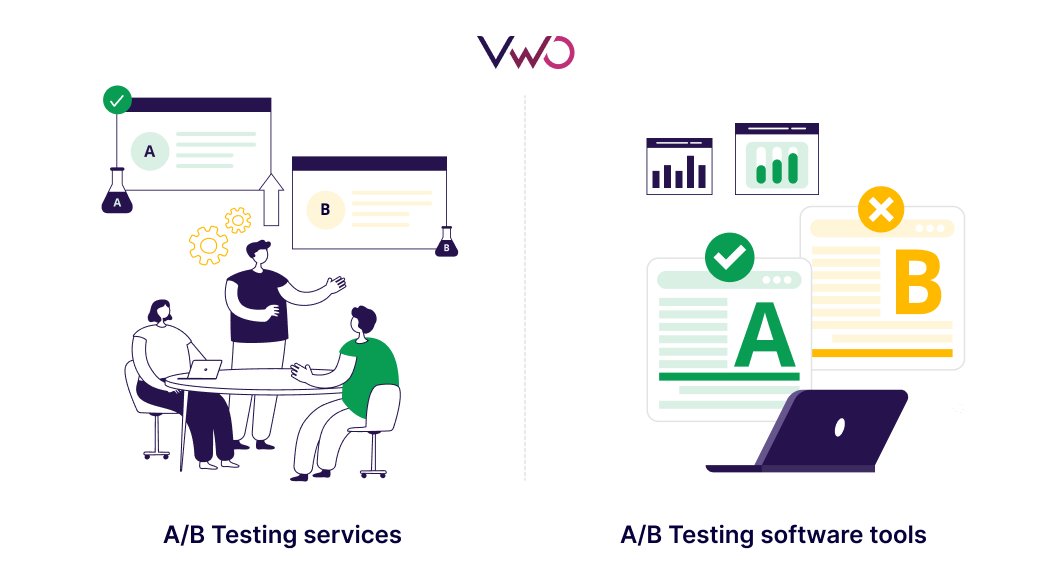

In the current digital economy, conversion rate optimization (CRO) has evolved from a tactical marketing function into a core product development strategy. Organizations must choose between two distinct paths. A/B testing software, such as VWO, Optimizely, or Adobe Target, provides the technological infrastructure for organizations to run experiments directly. This model requires an internal commitment to hiring or training specialists who can design hypotheses, implement code variations, and interpret complex statistical data. When owned internally, experimentation becomes a continuous loop that informs marketing, engineering, and product roadmaps.

Conversely, A/B testing services—typically offered by specialized CRO agencies—deliver a comprehensive outcome. The agency assumes responsibility for the entire lifecycle of the experiment, from identifying friction points in the user journey to the final QA of the test code. This model is frequently adopted by organizations that prioritize immediate ROI and lack the current internal bandwidth to manage a sophisticated testing platform. While the service model offers a faster "time-to-first-test," it often leaves a gap in institutional learning, as the expertise remains with the external vendor.

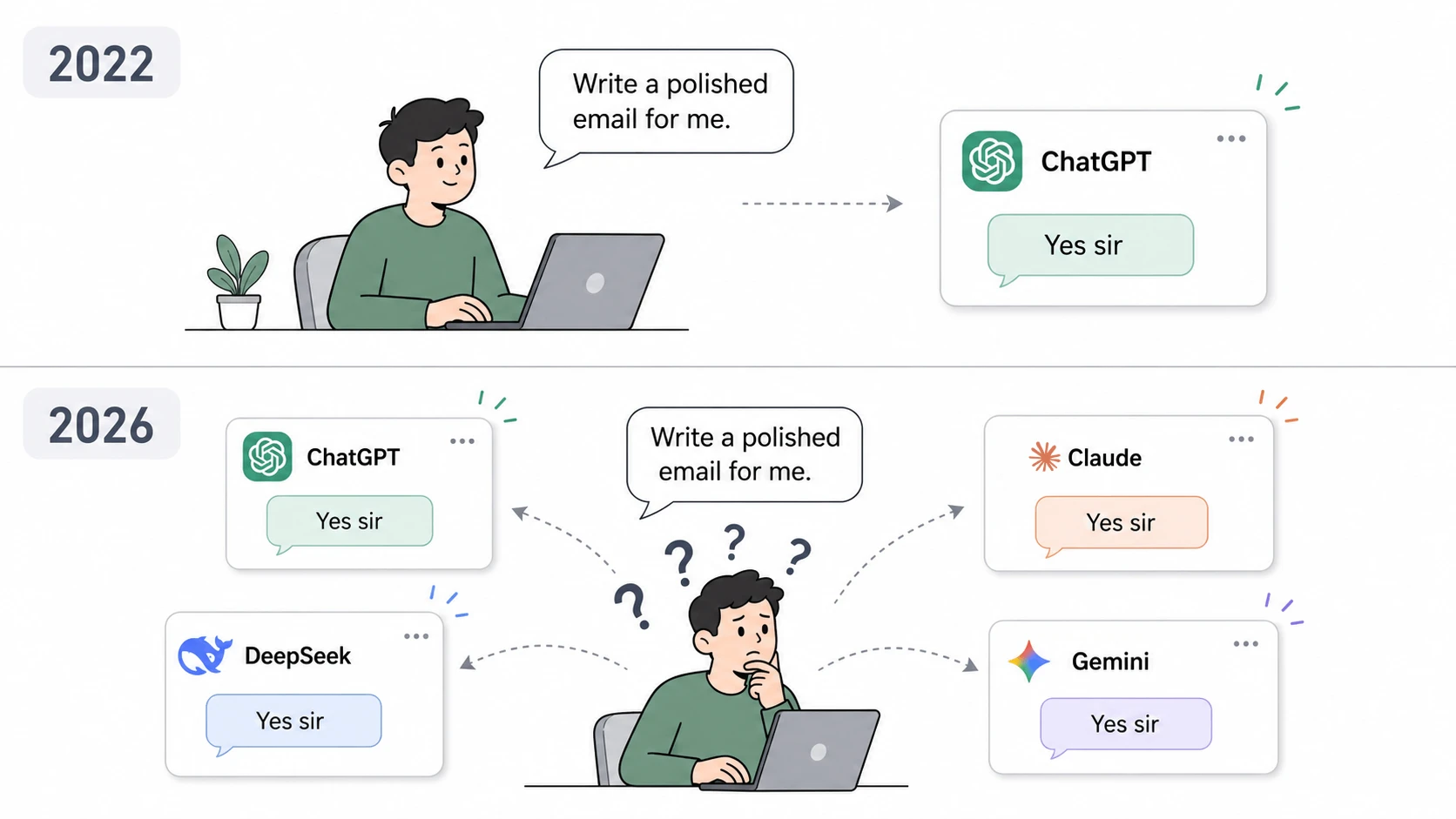

The Evolution of Experimentation: A Chronological Perspective

The journey toward experimentation maturity usually follows a predictable timeline. Understanding where an organization sits on this chronology is vital for choosing the right investment model.

- The Ad-Hoc Phase: Early-stage companies often start with basic, sporadic tests using free or low-cost tools. At this stage, there is rarely a dedicated team or a formal process.

- The Agency Partnership Phase: As organizations recognize the value of data-driven decisions but face a "talent gap," they often hire an A/B testing service. This period is marked by rapid gains as experts pick the "low-hanging fruit" on landing pages and checkout flows.

- The Tool Adoption and Internalization Phase: Once the ROI of experimentation is proven, mature organizations often transition toward owning their tech stack. They invest in enterprise-grade software tools to integrate testing into their CI/CD (Continuous Integration/Continuous Deployment) pipelines.

- The Center of Excellence (CoE) Phase: High-maturity organizations build internal "Centers of Excellence" where software tools are used across every department. At this stage, experimentation is a cultural norm, not just a marketing project.

Comparative Analysis: Strategic Decision Factors

The choice between a service and a tool is rarely binary; it is a calculation based on several high-stakes variables.

1. Data Sovereignty and Governance

For organizations in highly regulated sectors such as FinTech, healthcare, or insurance, data ownership is non-negotiable. Software tools allow these entities to keep experimental data within their own secure environments, which simplifies compliance with regulations such as GDPR, CCPA, and HIPAA. Agencies generally use compliant tools as well, but routing data through a third party can complicate governance and unified reporting.

2. Scalability and Velocity

In the software model, scalability is limited mainly by internal team capacity and by the traffic available to each test. Once a platform is embedded into the web and mobile architecture, teams can run dozens of simultaneous tests across different product layers, from UI changes to backend algorithm adjustments. Services, however, are often constrained by the "scope of work" defined in a contract. Scaling a program with an agency typically requires an increase in retainer fees and is subject to the agency's personnel bandwidth.
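Running many tests at once depends on assigning each user to a variant independently for every experiment. The sketch below shows one common way to do this, deterministic hash-based bucketing. It is an illustration only: the function name `assign_variant`, the experiment keys, and the user IDs are hypothetical and do not reflect how any particular platform named in this article implements assignment.

```python
import hashlib


def assign_variant(user_id: str, experiment_key: str,
                   variants=("control", "treatment")) -> str:
    """Deterministically map a user to a variant for one experiment.

    Hypothetical helper for illustration; not a vendor API.
    """
    # Including the experiment key in the hashed string means the same user
    # can land in different buckets for different experiments.
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode("utf-8")).hexdigest()
    # Use the first 8 hex characters (32 bits) as an integer bucket source.
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # The same user always gets the same variant within an experiment.
    for key in ("checkout_button", "pricing_layout"):
        print(key, assign_variant("user-42", key))
```

Because the assignment is a pure function of the user ID and the experiment key, no lookup table is needed, and a returning visitor sees the same variant on every visit.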

3. The Cost of Curiosity

The financial structures of these two models differ significantly. Software tools usually operate on a subscription basis, often tied to the volume of monthly tracked users (MTU). As an internal team becomes more efficient, the "cost per experiment" effectively drops, because the same subscription fee is spread across more tests. Agency services are typically priced as a premium retainer. While this provides a predictable OpEx (Operating Expenditure), the cost remains high regardless of how many experiments are run, as the organization is paying for specialized human capital.
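The arithmetic behind a falling "cost per experiment" can be sketched in a few lines. The figures below are hypothetical placeholders chosen for round numbers, not real vendor or agency pricing, and the sketch ignores internal salaries for simplicity.

```python
def cost_per_experiment(annual_cost: float, experiments_per_year: int) -> float:
    """Spread a fixed annual cost across the experiments actually run."""
    if experiments_per_year <= 0:
        raise ValueError("experiments_per_year must be positive")
    return annual_cost / experiments_per_year


# Hypothetical annual subscription fee, for illustration only.
SOFTWARE_SUBSCRIPTION = 60_000.0

if __name__ == "__main__":
    # As the internal team runs more tests, the per-test share of the fee falls.
    for experiments in (12, 24, 48):
        per_test = cost_per_experiment(SOFTWARE_SUBSCRIPTION, experiments)
        print(f"{experiments} experiments/year: {per_test:,.0f} per experiment")
```

The same function applies to a retainer. The difference is that the number of experiments under a retainer is usually capped by the contracted scope, so the denominator cannot grow as freely.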

The Rise of AI-Powered Internal Experimentation

A significant factor tipping the scales toward software tools in recent years is the integration of Artificial Intelligence (AI). Modern platforms have introduced AI-driven features—such as VWO’s Copilot—that lower the barrier to entry for internal teams. AI can now assist in generating test hypotheses based on historical data, automatically creating code variations via natural language commands, and summarizing thousands of session recordings into actionable insights.

These advancements address one of the traditional "cons" of software tools: the steep learning curve. By automating the more tedious aspects of experimentation, AI allows smaller internal teams to perform at the level of a full-scale agency, effectively democratizing high-level CRO.

Supporting Data: The Impact of Ownership on Conversion

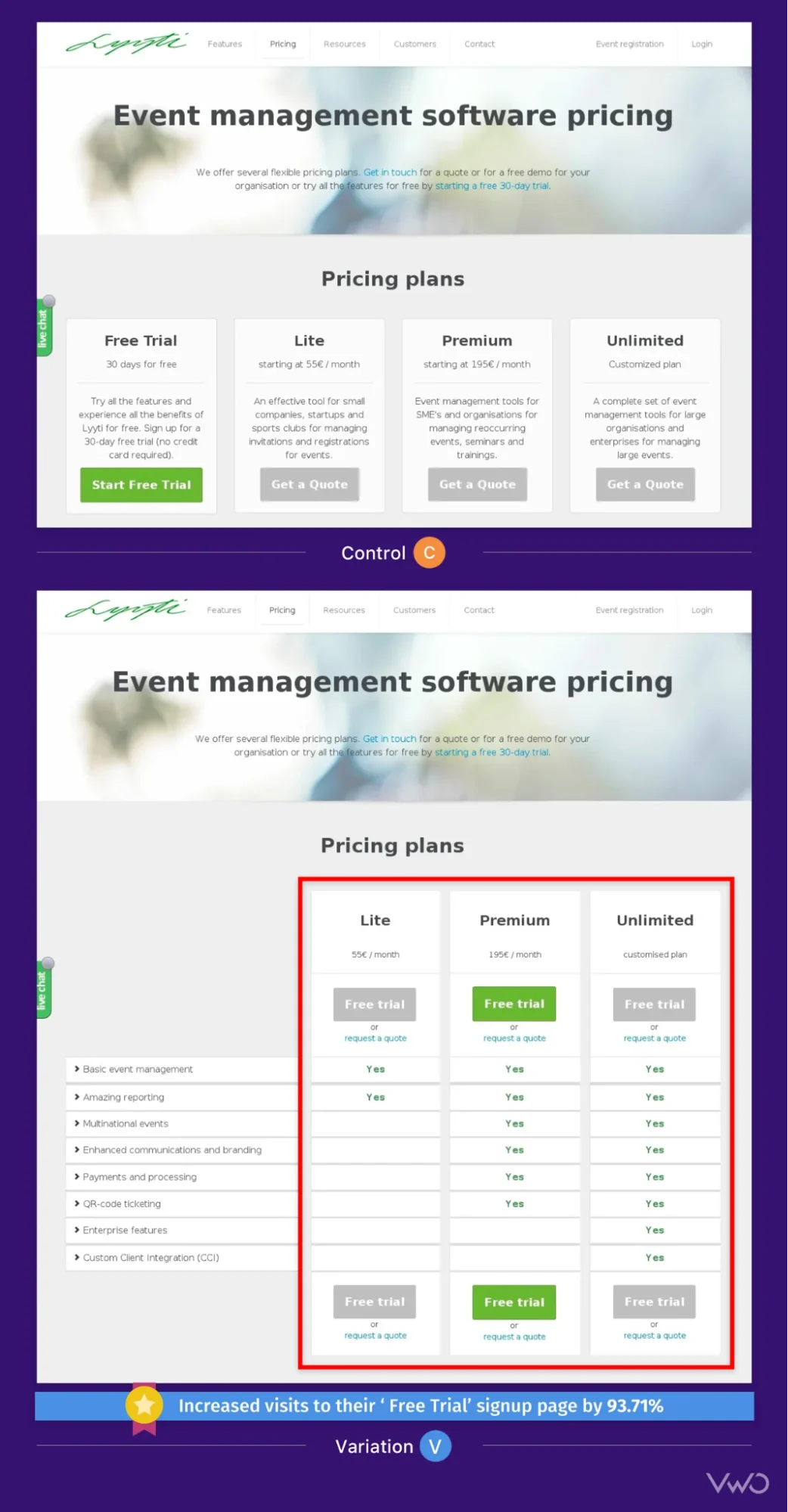

The tangible benefits of choosing the right model are best illustrated through real-world outcomes. Consider the case of Vandebron, a Dutch green energy provider. The company faced a common challenge: friction in the registration process. By utilizing a unified software platform that combined behavioral analytics with A/B testing, their internal team identified a specific usability issue in the date-of-birth input field.

By testing a simplified input method internally, they achieved a 16.3% increase in sign-up conversions. This success was not just about the test itself, but about the speed with which the internal team could identify a problem using heatmaps and immediately launch a corrective experiment without waiting for an external agency’s sprint cycle.
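For readers less familiar with how such a figure is derived, the sketch below shows how a relative lift is computed and how a two-proportion z-test can check whether a difference is likely to be more than noise. The visitor and conversion counts are hypothetical, chosen only to produce a lift of the same size as the one reported; they are not Vandebron's data, and the case study does not state which statistical method was used.

```python
import math


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Relative change of the variant's conversion rate over the control's."""
    return (variant_rate - control_rate) / control_rate


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 10,000 visitors per arm (not real case-study data).
    control_conv, control_n = 1_000, 10_000
    variant_conv, variant_n = 1_163, 10_000

    lift = relative_lift(control_conv / control_n, variant_conv / variant_n)
    z, p = two_proportion_z_test(control_conv, control_n, variant_conv, variant_n)
    print(f"relative lift: {lift:.1%}, z = {z:.2f}, p = {p:.4f}")
```

A fixed-sample z-test is only one option. Many commercial platforms use sequential or Bayesian methods instead, so results from a tool's dashboard may not match this calculation exactly.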

Official Perspectives and Industry Reactions

The shift toward internal ownership is echoed by industry leaders. Lucia van den Brink, Founder of The Initial, noted in a recent industry podcast that the ownership of experimentation fundamentally changes the morale and output of a product team. "It empowers teams to do their best work," van den Brink stated. "It gives designers, developers, and product teams the power to verify their ideas and have influence in deciding what to do next."

This sentiment is increasingly common among Chief Product Officers (CPOs) who view experimentation not as a "service" to be bought, but as a "capability" to be built. The prevailing view is that while agencies are excellent for jump-starting a program, the ultimate goal should be a self-sustaining internal culture of testing.

The Hybrid Model: A Pragmatic Middle Ground

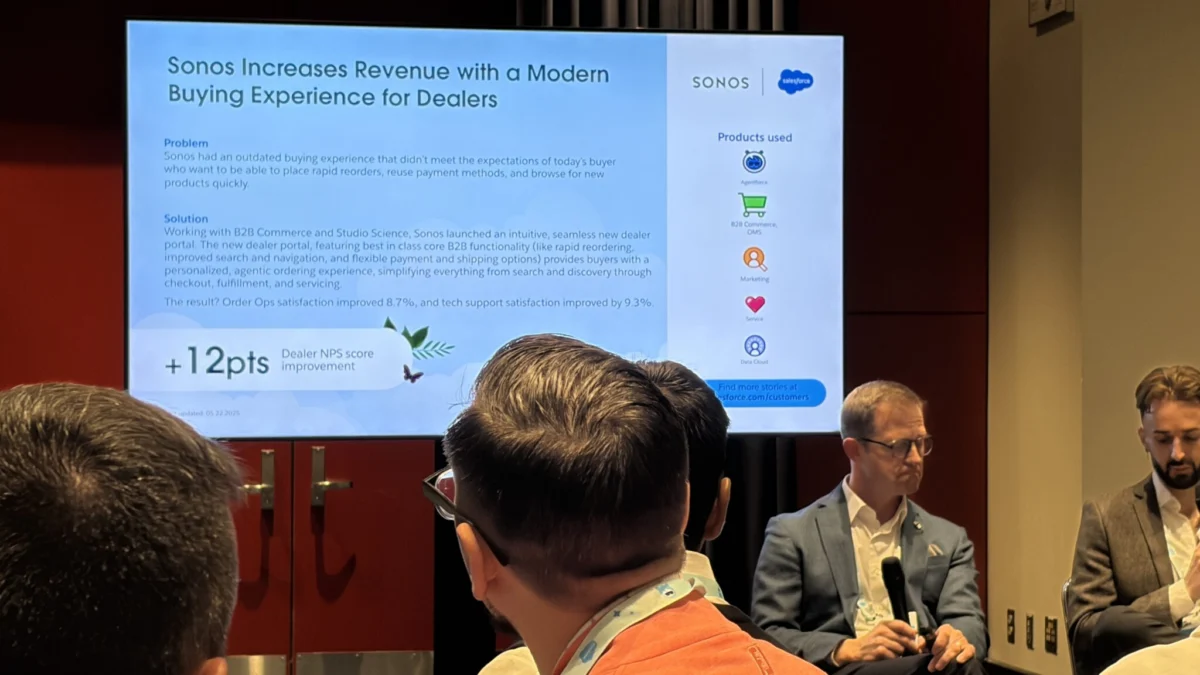

Many Fortune 500 companies have moved toward a hybrid approach to mitigate the risks of both models. In this scenario, the organization purchases and owns the software tool (ensuring data ownership and long-term infrastructure) but hires an agency to act as an extension of their team.

The agency provides the high-level strategy and technical execution in the short term, while simultaneously training the internal staff. This "train-the-trainer" approach allows for immediate results while systematically building the organization’s internal experimentation maturity. This model is particularly effective for companies that have high traffic volume but are undergoing a rapid digital transformation.

Broader Impact and Future Implications

The decision between A/B testing services and software tools will likely shape the competitive landscape of the next decade. As AI and machine learning continue to evolve, the "software" path will become increasingly autonomous, potentially reducing the need for traditional agency execution. However, the need for high-level strategic thinking—deciding what to test and why—will remain a human-centric requirement.

Organizations that invest in software tools today are essentially building a "data moat." By collecting years of experimental data and behavioral insights internally, they create a proprietary knowledge base that competitors cannot easily replicate. On the other hand, organizations that rely solely on external services must ensure they have robust knowledge-transfer protocols in place to avoid "expertise drain" should the agency partnership end.

In conclusion, for companies seeking to de-risk every product release and turn user behavior into a competitive advantage, the choice depends on their stage of maturity. While services offer a fast track to optimization, software tools provide the foundation for a truly data-driven organization. The most successful enterprises will be those that view experimentation not as a project with a finish line, but as a permanent, evolving infrastructure for innovation.