The landscape of software development is undergoing a fundamental transformation as "vibe coding," a term popularized by AI researcher and OpenAI co-founder Andrej Karpathy, moves from a niche experimental practice to a mainstream business strategy. This paradigm shift allows individuals with little to no formal programming experience to build and deploy entire applications using agentic AI tools like Claude Code and OpenAI Codex. However, as the barrier to entry for software creation falls, industry experts are sounding the alarm regarding a burgeoning crisis of security vulnerabilities, technical debt, and data privacy risks that many small and medium-sized businesses (SMBs) are ill-equipped to manage.

The Rise of Vibe Coding: A Chronology of AI Integration

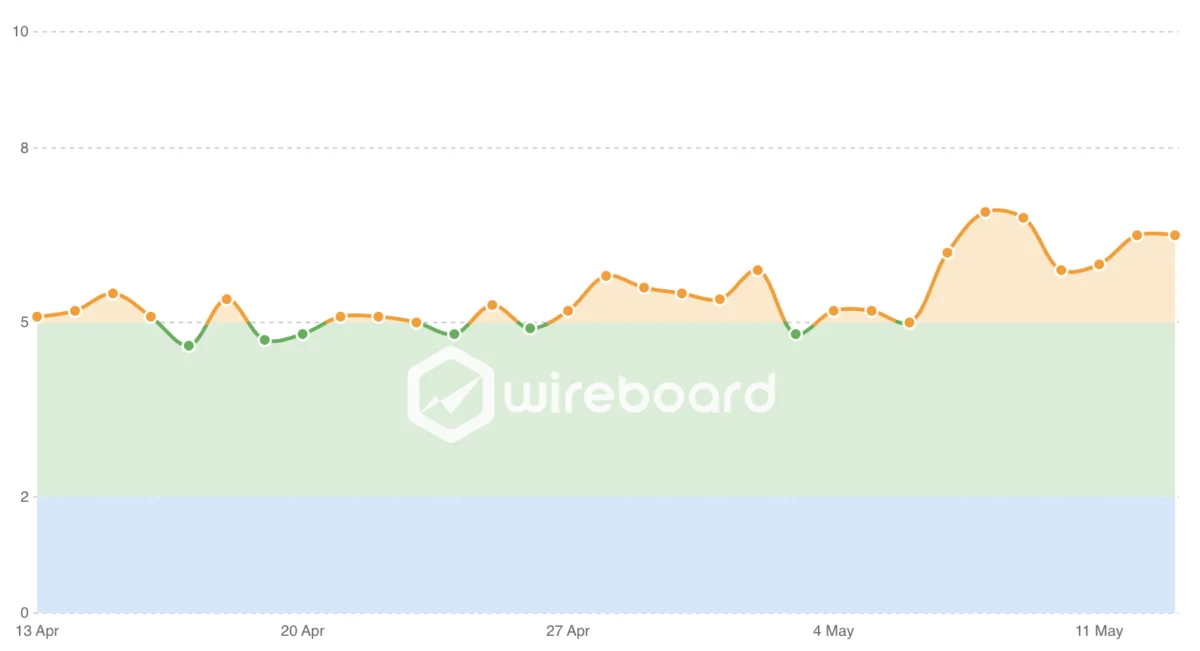

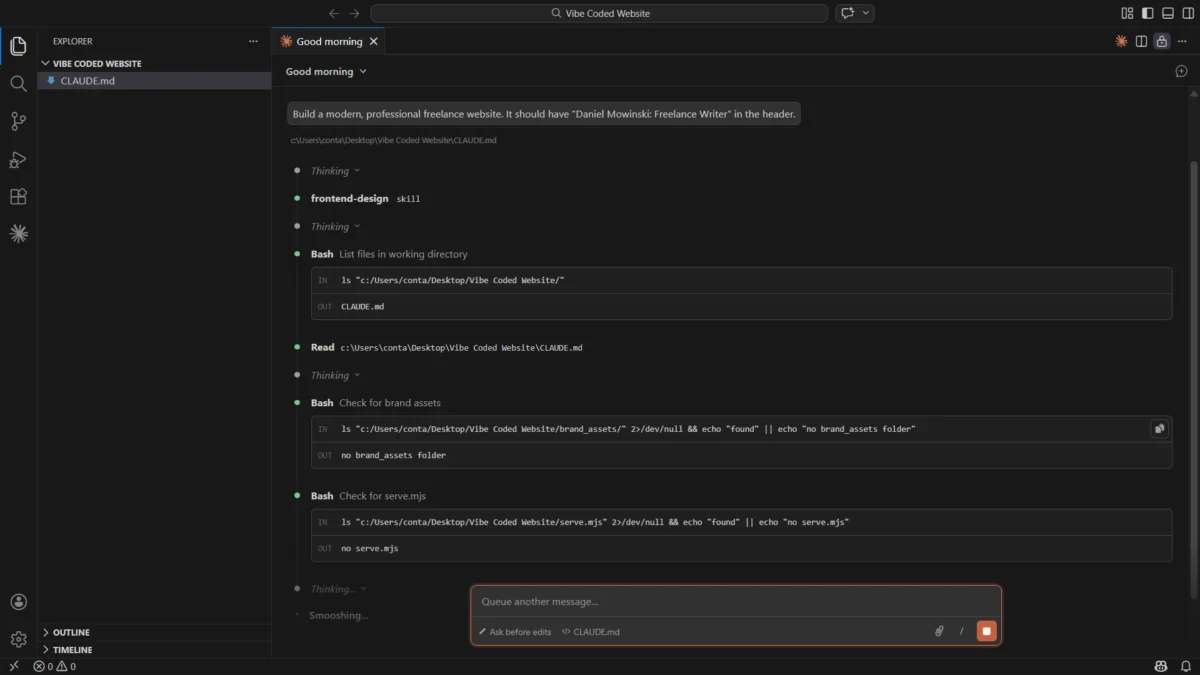

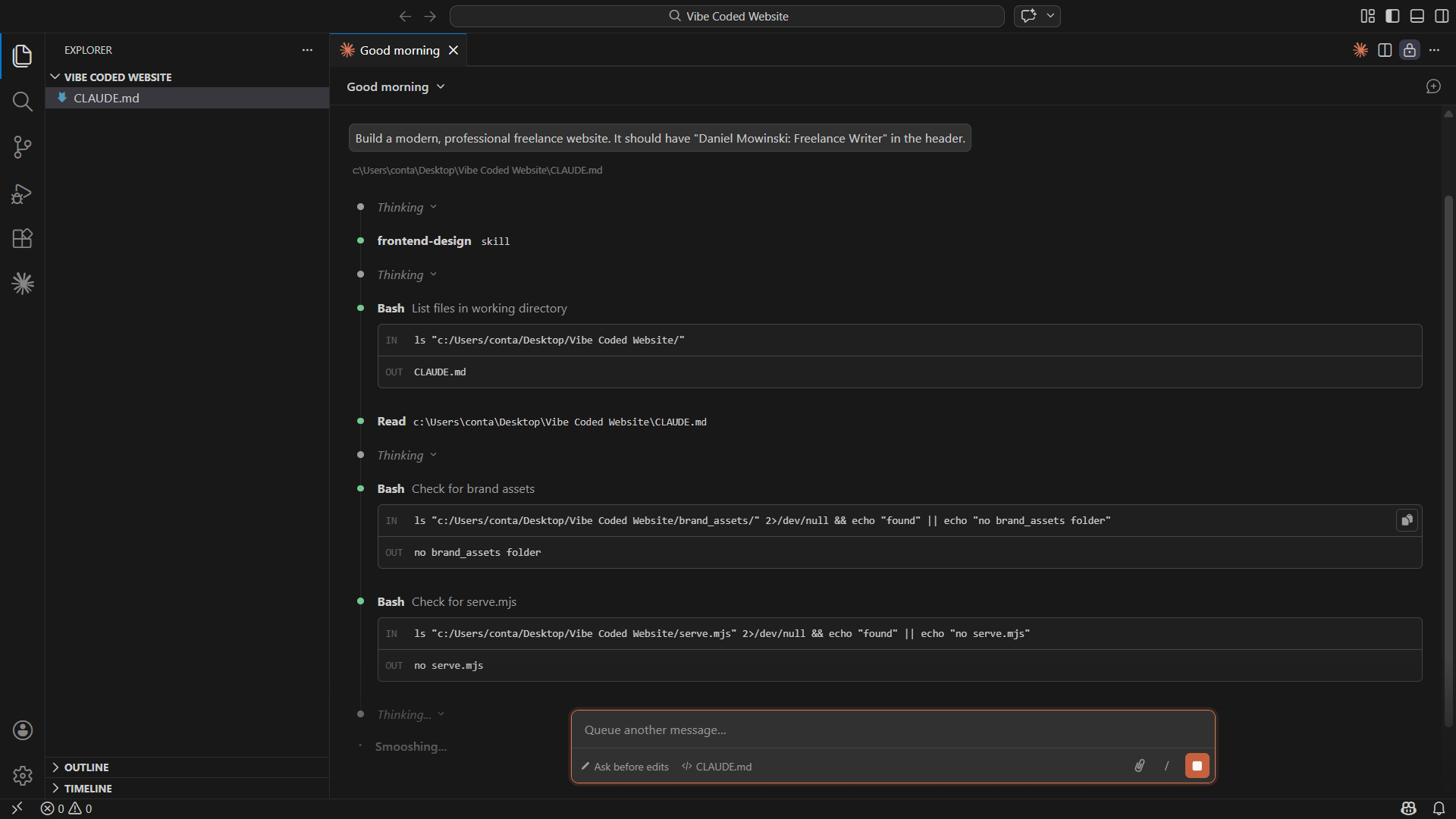

The evolution of AI-assisted development has moved with unprecedented velocity over the last 24 months. In late 2024, the primary use of Large Language Models (LLMs) in programming was restricted to "copilots" that suggested snippets of code. By early 2025, the release of sophisticated agentic tools transformed the workflow. These agents no longer just suggest code; they access local file systems, execute terminal commands, interact with GitHub repositories, and manage multi-stage deployment pipelines.

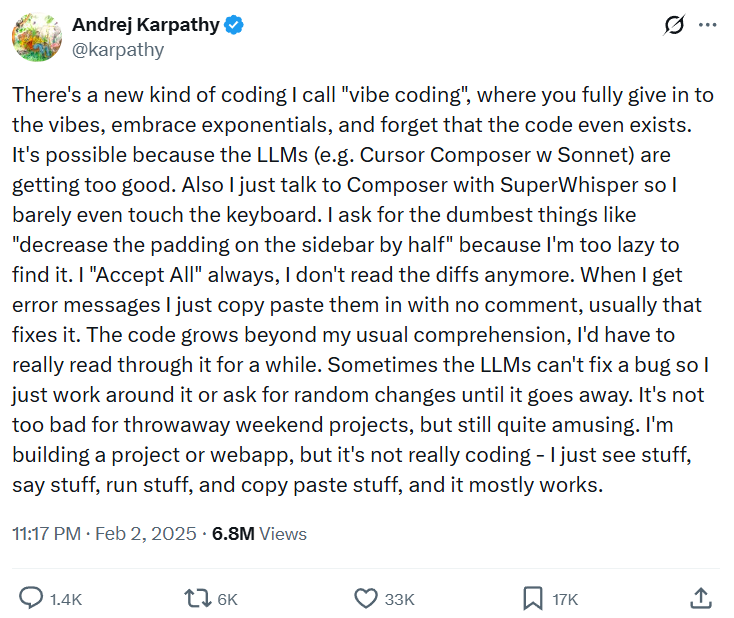

In February 2025, Andrej Karpathy described this phenomenon on social media as "vibe coding," noting that developers were increasingly moving away from writing syntax and toward "vibing" with the AI—essentially providing high-level instructions and reviewing the visual or functional output rather than the underlying logic. This trend reached a fever pitch in March 2025, when the startup accelerator Y Combinator reported that 25% of the companies in its winter cohort featured codebases that were 95% or more AI-generated.

By mid-2025, the "vibe coding" movement had trickled down to non-technical business owners. Reports from the U.S. Chamber of Commerce indicated that one in five small businesses were utilizing generative AI coding tools to build internal dashboards, customer-facing websites, and basic booking systems. However, this democratization of software creation was met with a harsh reality check in early 2026 with the "OpenClaw Crisis," a series of high-profile data breaches involving autonomous AI agents that exposed sensitive medical and financial records due to improperly secured API gateways.

Statistical Analysis of the AI Coding Landscape

Data from the 2025 AI Engineering Trends report by Jellyfish highlights the scale of this shift. Currently, 67% of software engineers utilize AI tools in their daily workflows, and 14% of all pull requests—proposed changes to a codebase—are now generated autonomously by AI agents. For SMBs, the motivation is primarily economic; a survey by Pax8 found that 62% of small business leaders believe AI adoption is the only way to remain competitive in a high-inflation environment where traditional development costs are prohibitive.

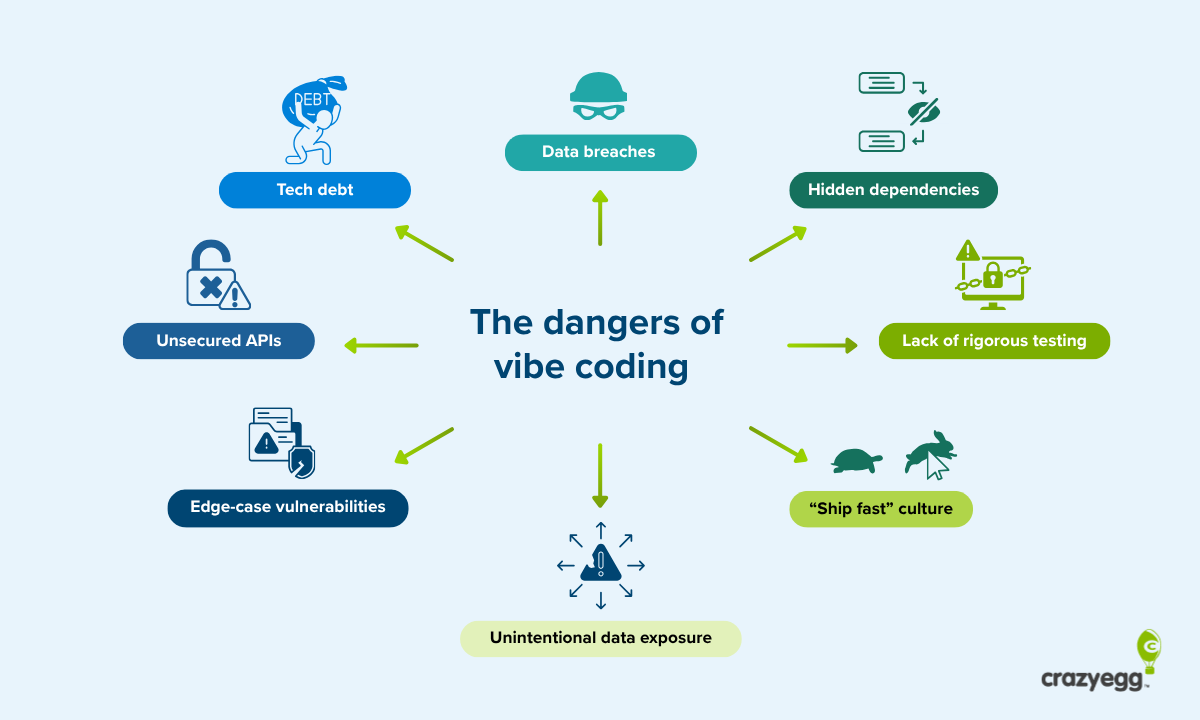

Despite the efficiency gains, the security implications are stark. A comprehensive survey of 5,600 publicly available applications built using vibe-coding platforms in late 2025 uncovered over 2,000 significant vulnerabilities. The study, conducted by the security firm Escape, found that many of these apps lacked basic authentication protocols or stored sensitive user data in plain text, leaving that data readable by anyone who gained access to the underlying storage.

The Consumption of the Implementation Phase

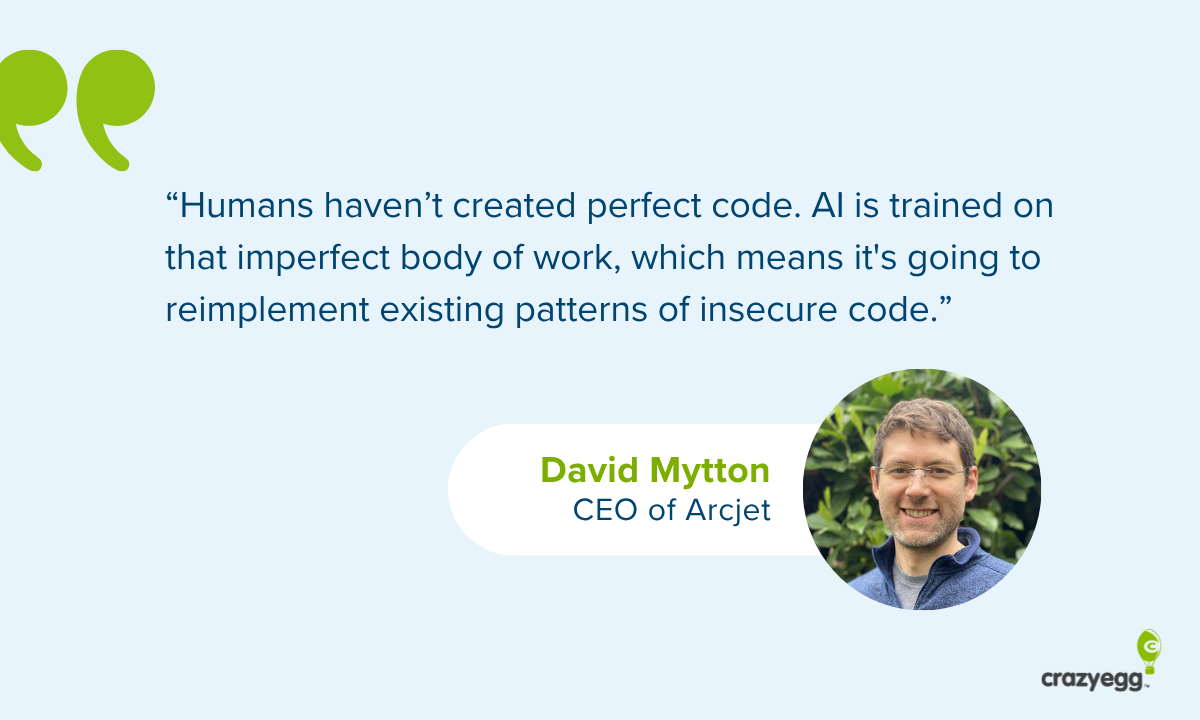

David Mytton, CEO of the security platform Arcjet and a researcher at the University of Oxford, suggests that AI has fundamentally altered the traditional three-phase development lifecycle: planning, implementation, and testing. Historically, humans managed all three phases, with the "middle" implementation phase—the actual writing of code—representing the bulk of the value and effort.

"The middle phase is no longer run by humans," Mytton notes. "This delivers incredible speed and lowers costs, but it removes the human oversight necessary to catch edge cases." According to Mytton, experienced engineers are often skeptical of these tools because they understand that AI is trained on an "imperfect body of work." Because humans have historically written insecure code, AI models frequently replicate those same insecure patterns, such as failing to sanitize user inputs or utilizing deprecated cryptographic libraries.

Mytton argues that while AI is excellent at generating functional code, it struggles with the nuances of security protocols. "It’s rare that there is one single issue like failing to implement authentication," he explains. "Instead, there is often a chain of multiple small vulnerabilities that, when exploited together, allow a hacker to gain system access. AI currently lacks the sophisticated reasoning required to identify these subtle bugs."
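One of the insecure patterns mentioned above, failing to sanitize user inputs, can be illustrated with a minimal sketch. The table, function names, and payload below are hypothetical and exist only for illustration; the sketch uses Python's standard `sqlite3` module and assumes a simple customer lookup.

```python
import sqlite3

def make_demo_db():
    # Hypothetical in-memory table used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO customers (name) VALUES (?)",
                     [("alice",), ("bob",)])
    return conn

def find_customer_unsafe(conn, name):
    # Insecure pattern: user input is concatenated into the SQL text,
    # so the input can change the meaning of the query.
    query = "SELECT id, name FROM customers WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()

def find_customer_safe(conn, name):
    # Safer pattern: a placeholder keeps the input as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM customers WHERE name = ?", (name,)
    ).fetchall()

conn = make_demo_db()
payload = "x' OR '1'='1"  # a classic injection string

leaked = find_customer_unsafe(conn, payload)   # returns every row
blocked = find_customer_safe(conn, payload)    # returns no rows
```

Both functions look equally "functional" when tested with an ordinary name, which is why output-only review tends to miss the difference.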

The "Semi-Developer" and the Hidden Cost of Tech Debt

The risks are not limited to malicious attacks; they also encompass the long-term viability of the software itself. Amy Gottler, PhD, a consultant specializing in Technology Enhanced Learning, has observed the rise of "semi-developers"—professionals who have a surface-level understanding of HTML or CSS and use AI to build complex systems.

"The issue is that people don’t know what they don’t know," Gottler says. She cites the example of a small business owner, such as a hair salon proprietor, who uses AI to build a custom booking system to save on subscription fees. Without an understanding of database encryption, that owner might unknowingly create a system where customer passwords and credit card details are stored without protection.

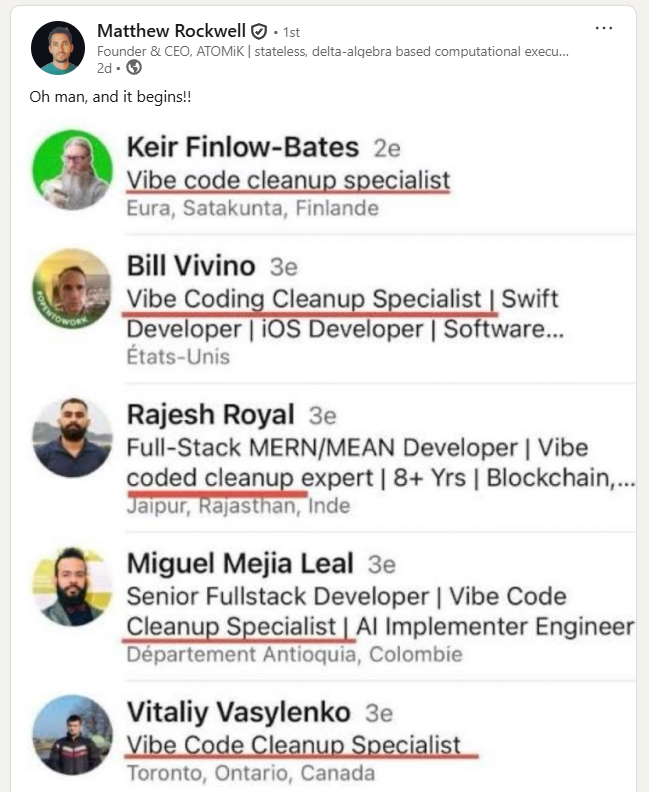
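To make the booking-system example concrete, the sketch below shows one common way to avoid storing passwords in readable form: a salted, slow hash from Python's standard library. The function names and the iteration count are assumptions chosen for illustration, not a recommendation from the experts quoted here, and card details are a separate matter that is generally better left to a payment provider than stored at all.

```python
import hashlib
import hmac
import os

# Assumed work factor for this sketch; tune it for your hardware
# and for current security guidance.
ITERATIONS = 600_000

def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest). Store both; never store the password itself."""
    salt = os.urandom(16)  # unique random salt per password
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return salt, digest

def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest and compare it in constant time."""
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return hmac.compare_digest(candidate, expected)

salt, digest = hash_password("correct horse")
ok = verify_password("correct horse", salt, digest)
bad = verify_password("wrong guess", salt, digest)
```

If the database leaks, an attacker obtains salts and digests rather than the passwords themselves, and each guess costs them a full round of hashing.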

Furthermore, Gottler warns of "tech debt," where AI-generated features appear to work initially but break when the system is updated. Because the "vibe coder" does not understand the broader context of the codebase or its hidden dependencies, a minor update can trigger a cascade of failures. "In attempting to make a system go further, they can unknowingly introduce cross-stack compatibility issues," Gottler explains. This often leads to the emergence of "vibe code cleanup specialists"—a new category of developer whose sole job is to untangle and secure the "spaghetti code" generated by AI.

The Infrastructure Deficit and the "Two-Way Gateway" Problem

Matthew Rockwell, founder of the hardware architecture firm ATOMiK, points to a fundamental misunderstanding of how data moves in modern applications. Many vibe-coded apps rely on APIs and bidirectional interfaces that allow data to flow between the user and the server.

"A lot of vibe coders are under the impression that they’ve created a one-way radio—they can talk, but no one can listen," Rockwell says. "The reality is that these gateways are often two-way. If you don’t have a strong virtual sandbox environment, you can’t see what a malicious actor is capable of doing once they gain access to that gateway."

Rockwell emphasizes that experienced programmers spend a significant portion of their time "trying to hack their own systems" to find edge cases—unusual user behaviors that could crash the app or expose data. Vibe coders, focused on the "output" and the "vibe" of the user interface, rarely perform this level of stress testing. This lack of rigor is particularly dangerous in industries handling confidential information, where a breach can lead to legal action, brand destruction, and catastrophic financial loss.
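The kind of self-directed "hacking" described above can start very small. The sketch below assumes a hypothetical booking form with a party-size field and feeds it a list of deliberately awkward inputs. The function names, the 1 to 20 limit, and the input list are all invented for illustration.

```python
def parse_party_size(raw):
    """Return a party size between 1 and 20, or raise ValueError."""
    if not isinstance(raw, str):
        raise ValueError("expected text input")
    text = raw.strip()
    # Accept plain ASCII digits only; reject signs, decimals, other scripts.
    if not text.isascii() or not text.isdigit():
        raise ValueError("not a whole number")
    if len(text) > 3:
        raise ValueError("number too long")
    value = int(text)
    if not 1 <= value <= 20:
        raise ValueError("out of range")
    return value

# Inputs a friendly tester rarely tries but a real user or attacker might.
HOSTILE_INPUTS = [
    "", " ", "-1", "0", "21", "1e3", "4.5",
    "\u0663",                   # Arabic-Indic digit three
    "9" * 5000,                 # absurdly long number
    "3; DROP TABLE bookings",   # injection-style text
    None, 4.5,                  # wrong types entirely
]

def outcome(raw):
    # The parser should reject bad input cleanly, never crash unexpectedly.
    try:
        parse_party_size(raw)
        return "accepted"
    except ValueError:
        return "rejected"

outcomes = [outcome(raw) for raw in HOSTILE_INPUTS]
```

A check like this only covers one field, but it captures the habit Rockwell describes: trying the inputs the happy path never sees before someone else does.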

Industry Recommendations for Mitigating Risks

As vibe coding becomes an inescapable reality of the digital economy, experts recommend several critical guardrails for businesses to prevent systemic failure:

1. Categorization of the "Blast Radius"

Businesses should categorize their codebase based on the potential damage a breach could cause. A bug on a marketing homepage has a small "blast radius," whereas a vulnerability in a login flow or payment gateway is mission-critical. High-risk areas should never be deployed based solely on AI output; they require mandatory human review by a security specialist.
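One way to put this categorization into practice is a simple rule that flags changes touching high-risk areas for human review. The sketch below is a minimal illustration; the directory patterns and function names are hypothetical and would need to match how a given project is actually laid out.

```python
from enum import Enum
from fnmatch import fnmatchcase

class BlastRadius(Enum):
    LOW = "low"
    HIGH = "high"

# Hypothetical path patterns for mission-critical code.
HIGH_RISK_PATTERNS = ["auth/*", "login/*", "payments/*"]

def classify(path: str) -> BlastRadius:
    """Label a changed file by the damage a bug there could cause."""
    if any(fnmatchcase(path, pattern) for pattern in HIGH_RISK_PATTERNS):
        return BlastRadius.HIGH
    return BlastRadius.LOW

def requires_human_review(changed_paths) -> bool:
    """A change needs a specialist's review if any file is high risk."""
    return any(classify(p) is BlastRadius.HIGH for p in changed_paths)

marketing_only = requires_human_review(["marketing/index.html"])
touches_payments = requires_human_review(
    ["marketing/index.html", "payments/checkout.py"]
)
```

A rule like this could run before deployment so that AI-generated changes to a homepage ship quickly while anything touching logins or payments waits for a person.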

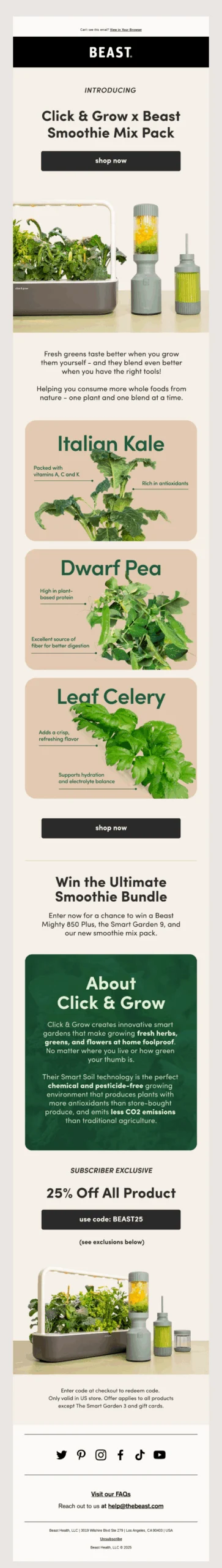

2. Utilization of Third-Party Infrastructure

Rather than asking an AI to "write a database" or "create an authentication system," non-experts should utilize established third-party tools like Stripe for payments or Auth0 for logins. These companies invest heavily in security and provide robust APIs that can be integrated into a vibe-coded frontend without exposing the backend to unnecessary risk.

3. Implementation of Automated Guardrails

As the volume of code exceeds the human capacity for review, businesses must implement automated security layers. Tools that provide real-time bot detection, rate limiting, and threat feed integration can serve as a "safety net" for AI-generated applications, catching emerging threats before they can be exploited.
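Rate limiting, one of the guardrails named above, is often implemented with a token bucket. The sketch below is a minimal, single-process illustration with hypothetical names and limits; production tools typically track a bucket per client and share state across servers.

```python
import time

class TokenBucket:
    """Allow short bursts up to `capacity`, refilled at a steady rate."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock              # injectable so tests can control time
        self.tokens = float(capacity)   # start with a full bucket
        self.updated = clock()

    def allow(self) -> bool:
        """Return True if the request may proceed, False if it is throttled."""
        now = self.clock()
        elapsed = now - self.updated
        # Add tokens earned since the last call, never exceeding capacity.
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

# Demonstration with a fake clock: 3-request burst, 1 token per second.
fake_now = [0.0]
bucket = TokenBucket(capacity=3, refill_per_second=1.0,
                     clock=lambda: fake_now[0])
burst = [bucket.allow() for _ in range(5)]
fake_now[0] = 2.0                      # two seconds pass
after_wait = [bucket.allow() for _ in range(3)]
```

In the demonstration, the first three requests pass and the next two are throttled; after two seconds, two more pass before the bucket is empty again.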

4. Continuous Employee Education

Security is not a one-time setup but a cultural requirement. Experts argue that businesses must provide ongoing training for any employee utilizing AI coding licenses. This includes training on data privacy, the importance of encrypted network connections, and the dangers of "shadow IT," where employees build and deploy tools outside of the organization’s official oversight.

The Future of the Software Engineering Role

The consensus among technology leaders is that while vibe coding will not replace professional developers, it will radically redefine their roles. The industry is seeing the emergence of the "infrastructure engineer" and the "AI auditor"—professionals who focus on setting up safe testing environments and validating the logic of AI-generated systems.

The "ship at any cost" mentality that currently dominates the vibe coding movement is likely to face increasing regulatory and legal pressure as the 2026 security crises continue to unfold. For small businesses, the message is clear: the ability to build an app in ten minutes is a powerful tool, but without a foundational respect for data security and infrastructure, that same tool can become a company-ending liability. The transition from "vibe coding" to "agentic engineering"—the disciplined, validated use of AI in software development—will be the defining challenge for the tech industry in the coming years.