Instagram, a subsidiary of Meta Platforms, is rolling out enhancements to its parental supervision tools that give caregivers more insight into how their children engage with content on the platform. The update, integrated within Meta’s revamped Family Center dashboard, aims to offer greater transparency about the topics that capture teens’ attention and how those interests change over time. It comes as social media companies face escalating global pressure to safeguard younger users and mitigate potential harms associated with their platforms.

Deep Dive into Enhanced Parental Oversight Features

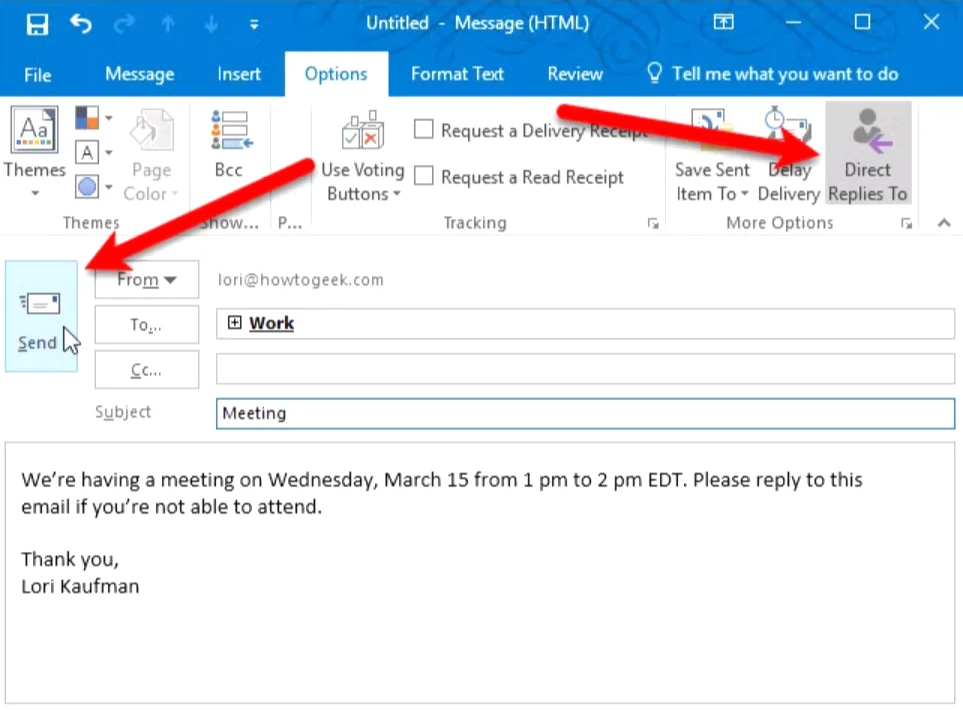

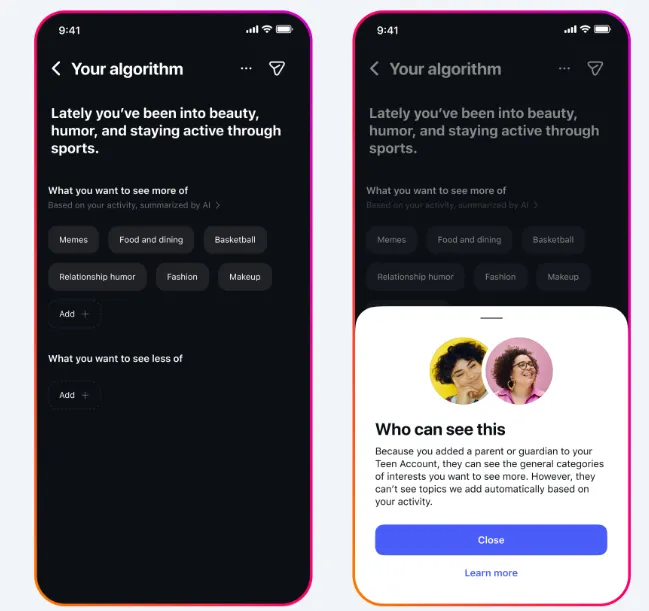

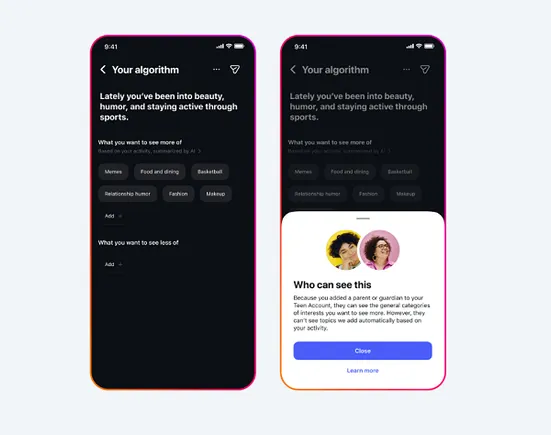

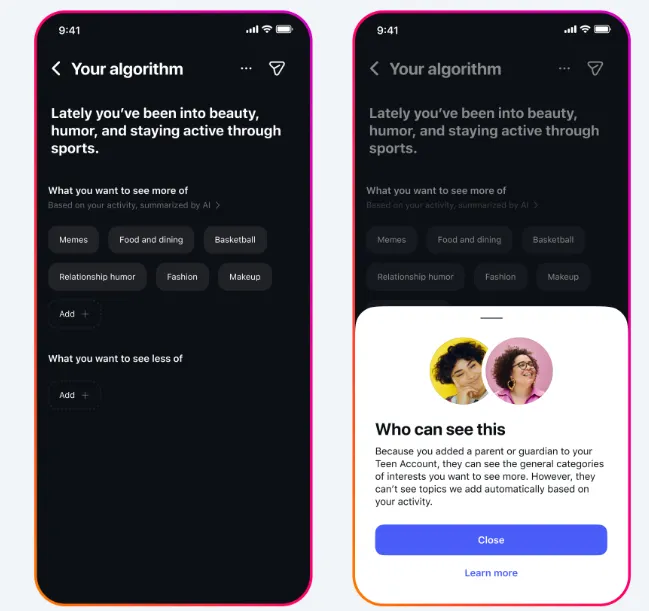

At the core of Instagram’s latest update is the expansion of its "Your Algorithm" control option, which until now has let users manage the topics the system believes they are interested in. For supervised teen accounts, parents will now gain access to this listing, which offers a high-level view of the general content categories their child is interacting with. Parents will be able to see broad topics such as "basketball," "photography," or "musicals," but not individual posts or direct messages, which preserves a degree of privacy for the teen while still giving parents useful context.

Meta has clarified the intent behind this feature, stating, "Starting today, parents and guardians can view the general topics their teens engage with using our supervision tools. By expanding these insights to cover all available categories within Your Algorithm, we’re providing families with a clearer understanding of the content their teens see on Instagram." This approach is designed to foster open conversations between parents and teens about their online experiences, rather than enabling intrusive monitoring of every interaction. Parents will also have the ability to tap on an interest category for more details, helping them better comprehend the context of their teen’s digital explorations.

Beyond showing current interests, Meta plans to introduce proactive alerts for parents. Soon, caregivers will receive a notification when their teen adds a new interest to their algorithm, signaling a shift or expansion in their content consumption patterns. These alerts are intended to help parents stay informed and have timely discussions with their children about emerging interests and related content. For example, if a teen develops an interest in a new hobby or cultural phenomenon, parents will be alerted, allowing them to understand and guide their child’s online journey more effectively.

The Unified Meta Family Center: A Centralized Hub for Parental Control

Complementing these new topic insights, Meta is also rolling out a significantly updated Family Center user interface. This redesign aims to simplify parental management of their children’s online activity across Meta’s diverse ecosystem of applications. The new Family Center will serve as a single, centralized hub, providing oversight for all supervised accounts on Instagram, Meta Horizon, Facebook, and Messenger. This consolidation is a direct response to feedback from parents who found managing settings across multiple apps cumbersome and time-consuming.

Meta emphasized the convenience this unified platform offers, stating it will allow "parents to find and manage their teen’s safety settings in one place without switching between apps." This streamlining is expected to enhance the user experience for parents, making it easier to adjust privacy settings, manage screen time limits, and access insights across various platforms their children might be using. The move reflects a broader industry trend towards integrated parental control dashboards, recognizing that young users often navigate multiple digital environments.

A Broader Context: Global Regulatory Pressure and Teen Online Safety

These new features are not merely incremental updates but represent Meta’s concerted effort to address mounting global concerns and regulatory pressure regarding the safety and well-being of young people on social media platforms. Over the past few years, a growing chorus of child safety advocates, mental health experts, and lawmakers worldwide has highlighted the potential negative impacts of social media on adolescent development, including issues related to mental health, body image, cyberbullying, and exposure to inappropriate content.

Chronology of Regulatory Actions and Industry Responses:

The timeline of legislative and regulatory actions underscores the urgency driving Meta’s recent initiatives:

- 2021-2022: Initial widespread public debate intensifies, fueled by whistleblower testimonies and academic research linking heavy social media use to adverse mental health outcomes in teens. Meta (then Facebook) faces scrutiny over internal research on Instagram’s impact on teen girls.

- Early 2023: Several governments begin actively exploring and drafting legislation to restrict teen access to social media. Meta introduces some initial parental control features, including time limits and expanded supervision tools, but these are widely seen as insufficient by critics.

- Late 2023 – Early 2024:

  - Australia: Implements new laws banning children under 16 from using social media apps, a landmark move that sends ripples across the globe. Despite the ban, reports indicate challenges in enforcement, with many teens still finding ways to access platforms.

  - Turkey and Spain: Both nations approve their own versions of teen social media bans, slated to go into effect shortly. These measures often include stricter age verification requirements and parental consent mandates.

  - European Union: The EU Commission launches an investigation into Meta’s age restriction systems under the Digital Services Act (DSA). Preliminary findings announced recently determined that Meta’s current age-checking and detection systems failed to meet the obligations outlined in the DSA, which requires platforms to implement robust measures to protect minors. This investigation carries significant weight, potentially leading to hefty fines and mandatory changes for Meta across all EU member states.

  - United States: The regulatory landscape remains fragmented but active. States like New Mexico have proposed new rules that would impose tougher penalties on social media companies if they fail to keep children off their apps. In a notable reaction, Meta announced last week that it is considering withdrawing its apps from New Mexico if these proposed rules are enacted, highlighting the escalating tensions between tech giants and state legislatures. Other states are considering similar legislation, indicating a nationwide trend.

These legislative actions respond to widely cited statistics and expert warnings. Research from organizations like the Pew Research Center consistently shows that a vast majority of teenagers (over 80%) use social media, with a significant percentage reporting near-constant use. Studies from institutions such as the American Psychological Association have identified correlations between excessive social media use and increased rates of anxiety, depression, sleep disturbances, and body image dissatisfaction among adolescents. A 2023 advisory from the U.S. Surgeon General, Dr. Vivek Murthy, warned that social media can pose a profound risk of harm to the mental health and well-being of children and adolescents, further amplifying calls for intervention.

Statements and Reactions from Stakeholders

Meta’s official position consistently emphasizes its commitment to teen safety and well-being. The company views these new tools as part of a continuous effort to provide families with the resources they need to navigate the digital world safely. It frames the updates as a way to "empower parents" and "foster meaningful conversations," positioning itself as a responsible corporate citizen.

However, reactions from various stakeholders are likely to be nuanced:

- Child Safety Advocates and NGOs: Organizations dedicated to child protection will likely welcome these new tools as a positive step, acknowledging Meta’s responsiveness to public and regulatory demands. However, many will likely argue that these measures do not go far enough. They might call for more stringent age verification processes, default privacy settings for minors, and greater algorithmic transparency to understand how content is amplified or suppressed for young users. Concerns about the ease with which teens can bypass age restrictions or parental controls are also likely to persist.

- Parents: The reception among parents could be mixed. Many will undoubtedly appreciate the increased visibility and centralized management offered by the updated Family Center, finding assurance in the ability to understand their child’s general interests. For parents struggling to keep up with their children’s online lives, these tools could be invaluable. However, some parents might still find the insights too general, wishing for more granular control or reporting. Others might express concerns about the potential for these tools to create friction or distrust within families if not handled sensitively, highlighting the delicate balance between oversight and respecting a teen’s autonomy.

- Teens: For teenagers, these new parental controls could be a source of frustration or concern. While the tools are designed to provide only general insights, some teens may perceive any form of parental monitoring as an invasion of privacy or a lack of trust. This could potentially lead to efforts to circumvent the controls, such as creating secondary "finsta" accounts or shifting to less-monitored platforms, thereby undermining the intended safety benefits. The balance between safeguarding youth and respecting their evolving need for independence and privacy is a complex one.

- Regulators: Legislative bodies and regulatory agencies are likely to view these updates as a sign that Meta is acknowledging its responsibilities and attempting to self-regulate. However, it is improbable that these measures alone will be enough to fully satisfy the demands of legislators, particularly in regions like the EU, where the Digital Services Act imposes strict requirements, or in the U.S., where states are pushing for punitive measures. Regulators may see these as a good start but will likely continue to pursue broader legislative solutions, including industry-wide standards for age verification, data privacy, and algorithmic accountability.

Broader Impact and Implications

The introduction of these enhanced parental supervision tools and the unified Family Center carries several significant implications for Meta, the broader social media landscape, and the ongoing debate about digital well-being:

- Navigating Regulatory Headwinds: These updates are a clear strategic move by Meta to proactively address regulatory scrutiny. By demonstrating a commitment to teen safety and parental empowerment, Meta hopes to mitigate the risk of more draconian legislative interventions, such as outright bans or severe operational restrictions. The success of this approach will depend on whether regulators perceive these efforts as genuine and effective, or merely superficial gestures.

- The Evolving Privacy vs. Safety Debate: The new tools inherently wade into the complex ethical territory of balancing a teenager’s right to privacy with a parent’s responsibility for their child’s safety. While Meta has opted for general topic insights rather than specific content monitoring, the very act of parental oversight, even at a high level, redefines the boundaries of digital autonomy for minors. This will likely fuel further discussions about appropriate levels of surveillance and the role of technology companies in mediating family relationships.

- Algorithmic Transparency: While the "Your Algorithm" insights offer a glimpse into the mechanics of content curation, they represent a limited form of algorithmic transparency. Critics may argue that true transparency would involve understanding why certain topics are suggested or amplified, and how content is moderated or prioritized. This update might be seen as a stepping stone towards greater algorithmic accountability, but it is unlikely to fully satisfy demands for comprehensive insight into how these powerful systems shape young minds.

- Industry Precedent: Meta’s moves often set precedents for the wider social media industry. If these tools prove effective in addressing some concerns, other platforms might follow suit with similar parental oversight features. This could lead to a new industry standard for parental controls, driven by both competitive pressures and a shared need to appease regulators.

- User Experience and Engagement: The long-term impact on teen engagement with Instagram remains to be seen. While some teens may adapt, others might seek out platforms perceived as offering greater anonymity or freedom from parental scrutiny. This could potentially fragment the youth social media landscape further, creating new challenges for parents and platforms alike.

- Educational Opportunities: These tools, if utilized effectively, could serve as powerful catalysts for digital literacy and open communication within families. Instead of being solely a monitoring mechanism, the topic insights can provide parents with concrete starting points for conversations about online content, critical thinking, and responsible digital citizenship.

In conclusion, Instagram’s latest enhancements to its parental supervision tools and the unification of its Family Center represent a significant, albeit reactive, step by Meta in a rapidly evolving regulatory and public opinion landscape. While these measures offer increased transparency and control for parents, their ultimate efficacy in safeguarding teen well-being and satisfying global legislative demands will be closely watched. The ongoing dialogue between tech companies, governments, child safety advocates, and families will undoubtedly continue to shape the future of social media for the next generation.