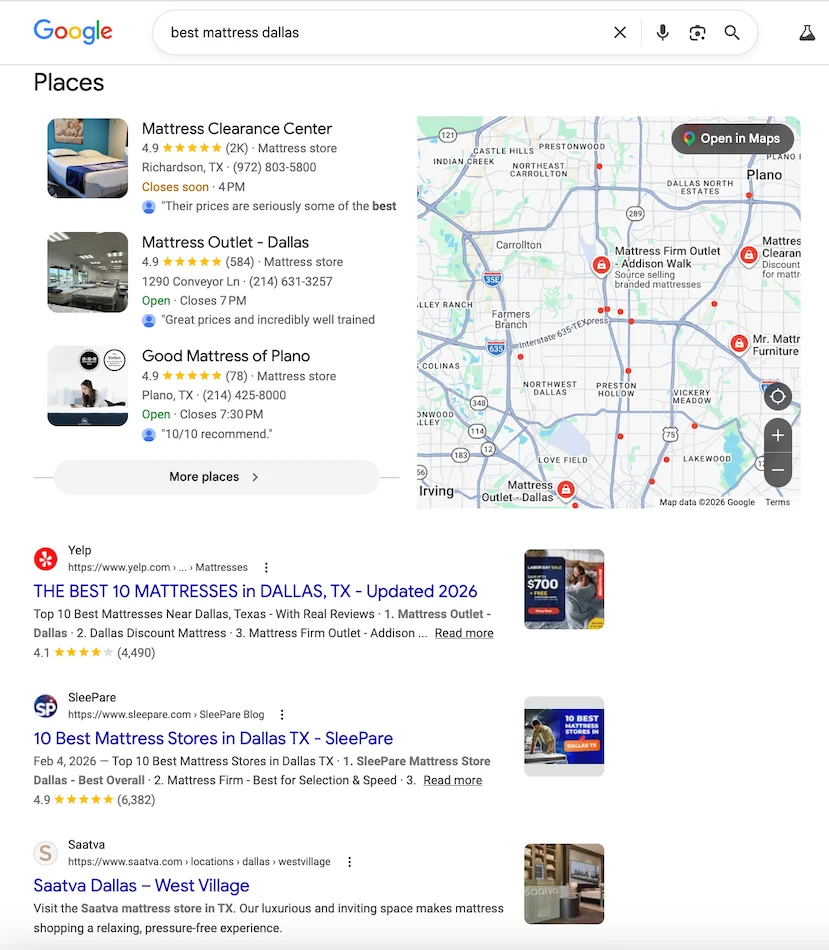

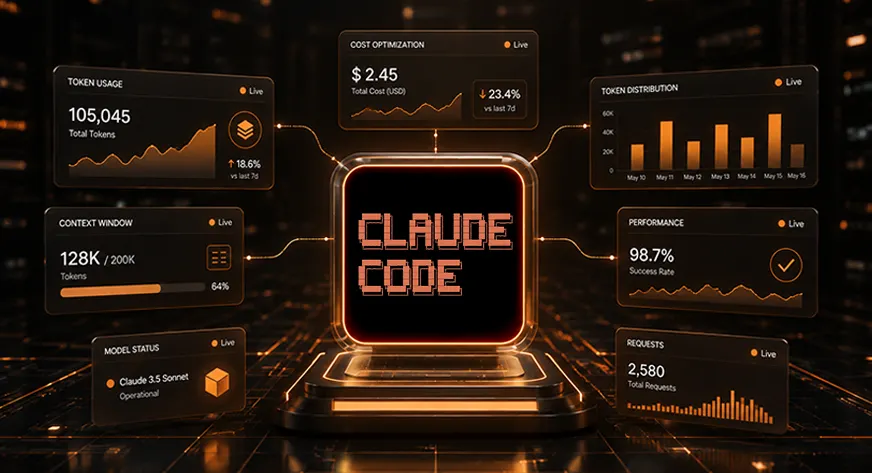

The rapid integration of agentic AI tools into software development lifecycles has introduced a significant new overhead for engineering departments: the escalating cost of large language model (LLM) tokens. As developers increasingly rely on Claude Code, Anthropic’s terminal-based coding assistant, to work in complex repositories, many are discovering that unchecked context growth leads to inflated API bills. A 2025 Stanford study underscored this trend, reporting that software engineers frequently waste thousands of tokens per session through redundant context loading and unoptimized file scanning. By adopting a deliberate framework for context management, engineering teams can cut costs substantially without sacrificing the quality of AI-generated code.

The Economics of Context Bloat in Agentic Workflows

The primary driver of cost in Claude Code is the context: everything the model processes on a single request, up to the limit of its "context window." Unlike standard chat interfaces, agentic tools like Claude Code continuously ingest file contents, terminal outputs, system instructions, and chat history. Because Anthropic bills per input token and each new prompt resends the accumulated conversation, every additional line of code or debugging log retained in the history is paid for again on every subsequent request.
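
A rough cost model makes the compounding concrete. Assume, for illustration, a fixed per-request overhead of S tokens (system prompt, tool definitions, CLAUDE.md) and that each turn appends roughly Δ tokens of new history. Request k then sends about S + kΔ input tokens, and the total input over an n-turn session is:

$$
T(n) = \sum_{k=1}^{n} \left(S + k\,\Delta\right) = nS + \Delta\,\frac{n(n+1)}{2}
$$

With hypothetical values S = 10,000, Δ = 2,000, and n = 30, this gives 300,000 + 930,000 = 1,230,000 input tokens. Doing the same work as two 15-turn sessions separated by /clear gives 2 × (150,000 + 240,000) = 780,000, about 37% fewer. The quadratic history term is what clearing and compaction attack. This simple model ignores prompt caching, which discounts repeated prefixes, so it overstates absolute cost while still showing the shape of the growth.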

The 2025 Stanford study specifically highlighted "context leakage" as a primary culprit for budgetary overruns. This occurs when a developer transitions from one task—such as debugging an authentication module—to another, like UI styling, without clearing the previous session’s data. The model continues to "read" the authentication logs during the UI task, leading to thousands of wasted input tokens. To mitigate this, developers must transition from passive AI usage to active "context engineering."

Chronology of AI Agent Adoption and the Token Crisis

The evolution of AI coding assistants has moved through three distinct phases. In 2022, the industry saw the rise of "Snippet Assistants" like GitHub Copilot, which operated on small, localized code blocks. Through 2023 and 2024, "Chat-Based Assistants" allowed for broader discussions but required manual copying and pasting. The current era, beginning in late 2024 and early 2025, is defined by "Agentic CLI Tools" like Claude Code.

While Claude Code offers unprecedented autonomy—running tests, reading files, and executing shell commands—it also introduced the "Agentic Token Spike." In the early months of its release, enterprise users reported monthly API costs exceeding projected budgets by 300% to 500%. This financial pressure necessitated the development of the following 23 strategies, designed to maintain the tool’s effectiveness while enforcing strict fiscal discipline.

Fundamental Session Management Tactics

The first line of defense against rising costs is the disciplined management of active chat sessions.

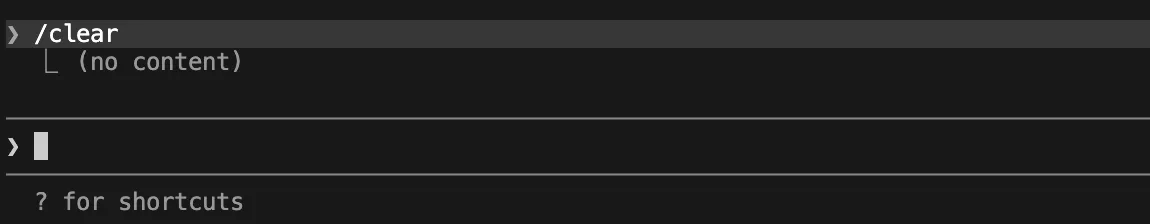

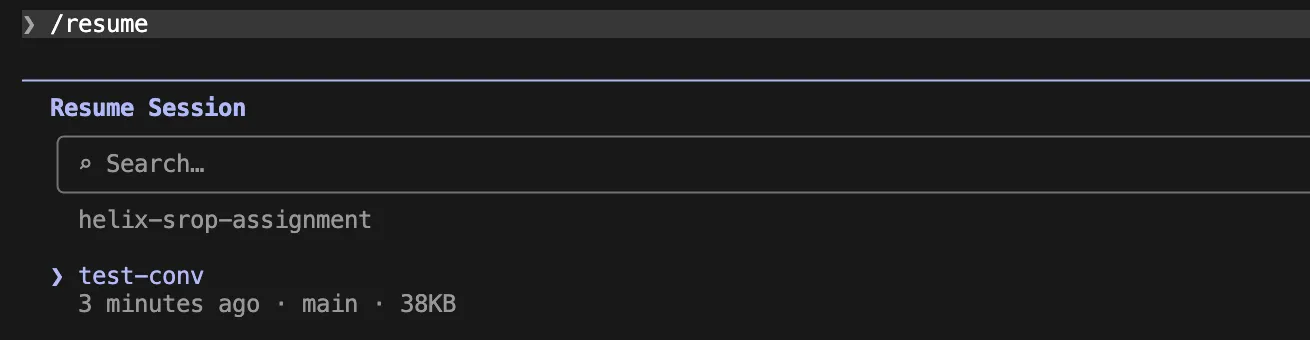

1. Systematic Chat Clearing Between Tasks

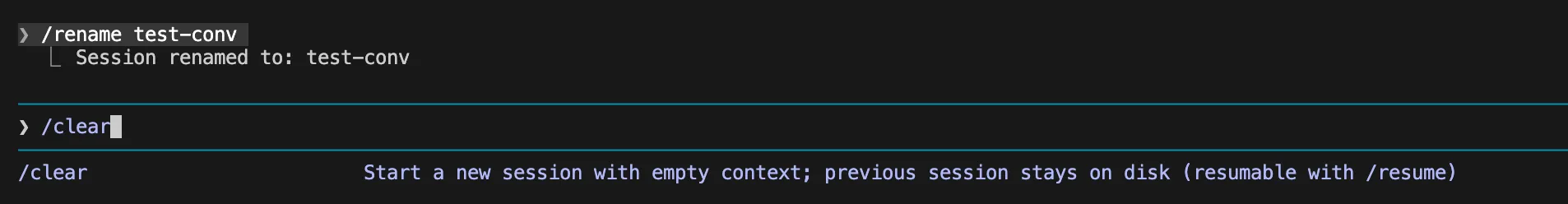

The simplest yet most effective strategy is the use of the /clear command. By starting a fresh session when switching between unrelated tasks, developers ensure that the model is not processing irrelevant history. For continuity, developers are encouraged to use /rename to save a session before clearing it, allowing for a structured archive of work without the ongoing token cost.

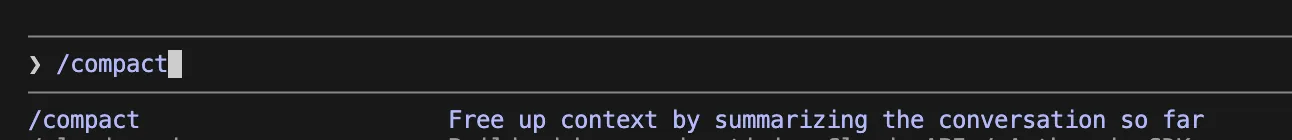

2. Strategic Context Compaction

For long-running tasks where session history is necessary, the /compact command serves as a critical optimization tool. It summarizes the previous conversation, retaining essential decisions and technical outcomes while discarding verbose terminal outputs and repetitive discussions. It also accepts optional instructions about what the summary should keep, for example /compact focus on the schema changes and open bugs.

3. Adjusting the Auto-Compact Threshold

By default, Claude Code triggers auto-compaction when the context window reaches approximately 95% of capacity. For most development workflows this is too late: by then, the last several requests have each resent a nearly full context. A common recommendation is to lower the threshold to 70% using the environment variable export CLAUDE_AUTOCOMPACT_PCT_OVERRIDE=70. For particularly noisy environments involving extensive test logs, a 50% threshold is more appropriate.
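
A minimal sketch, assuming a bash-compatible shell, for switching the threshold per session. The variable name comes from the strategy above; the function name set_compact_threshold and the "noisy" label are hypothetical, and how Claude Code interprets the value may differ between versions.

```shell
# Hypothetical helper: choose an auto-compact threshold for this shell session.
# 70 is the general recommendation above; 50 is for log-heavy ("noisy") work.
set_compact_threshold() {
  case "${1:-default}" in
    noisy) export CLAUDE_AUTOCOMPACT_PCT_OVERRIDE=50 ;;
    *)     export CLAUDE_AUTOCOMPACT_PCT_OVERRIDE=70 ;;
  esac
}
# Example:
#   set_compact_threshold noisy && claude
```

Because the variable is exported, it only affects Claude Code sessions started from that shell, which makes it easy to use a tighter threshold for a debugging session without changing the default everywhere.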

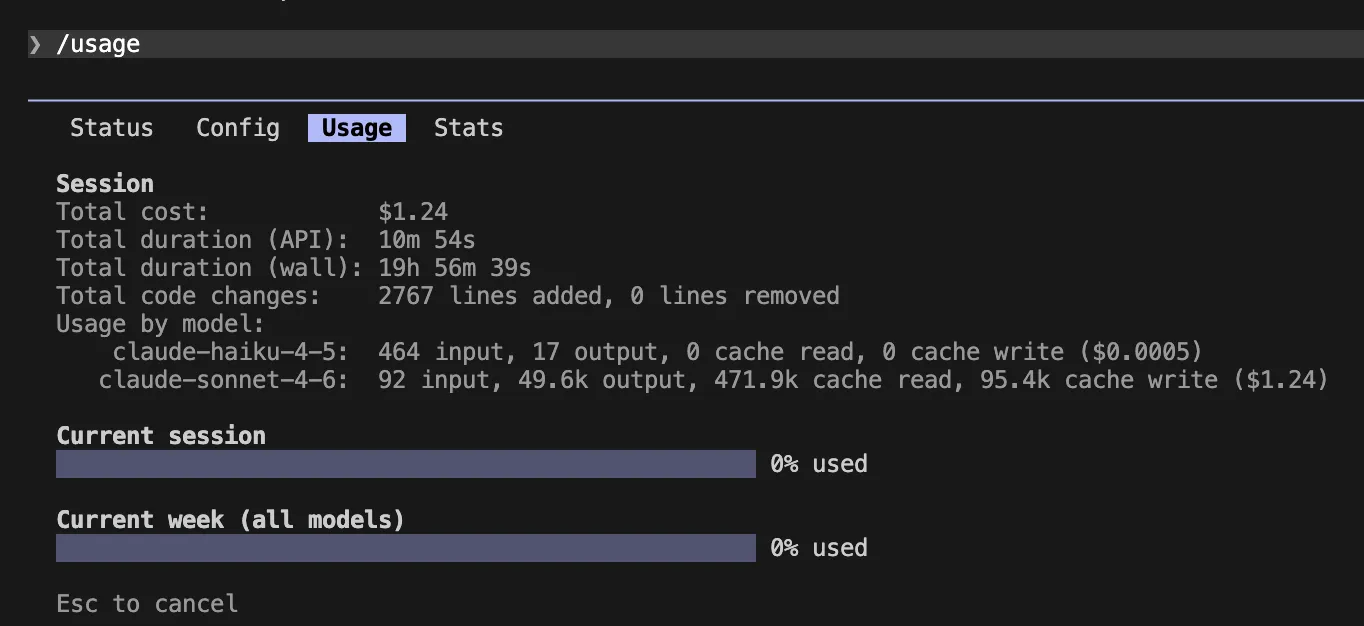

4. Real-Time Usage Monitoring

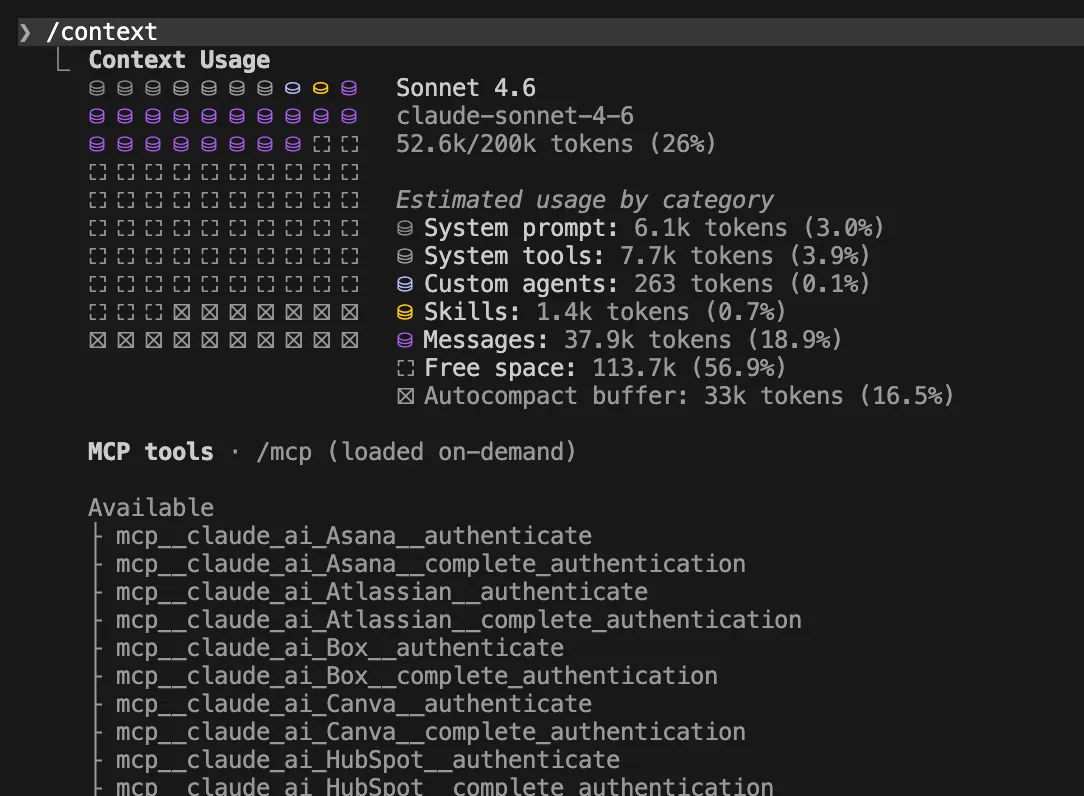

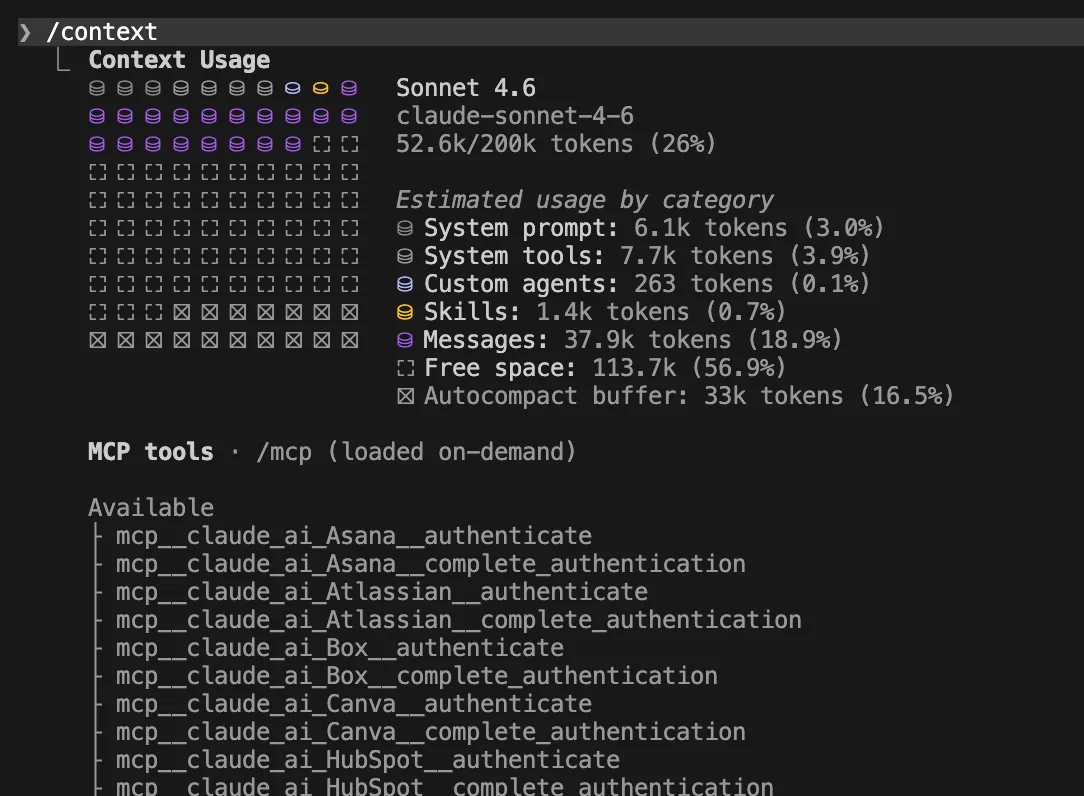

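Visibility is the precursor to control. Developers should check the /cost and /context commands frequently. The former reports token usage and spend for the current session (on subscription plans, /usage shows plan limits instead), while the latter shows how the context is divided among the system prompt, tools, memory files such as CLAUDE.md, and messages, making it clear what is consuming the most space.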

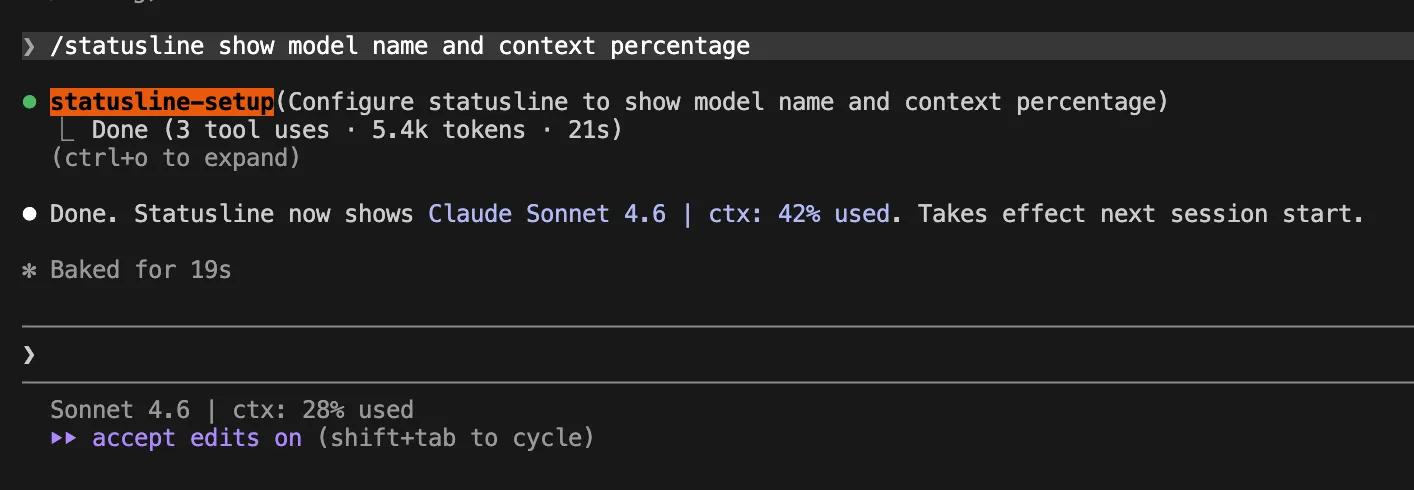

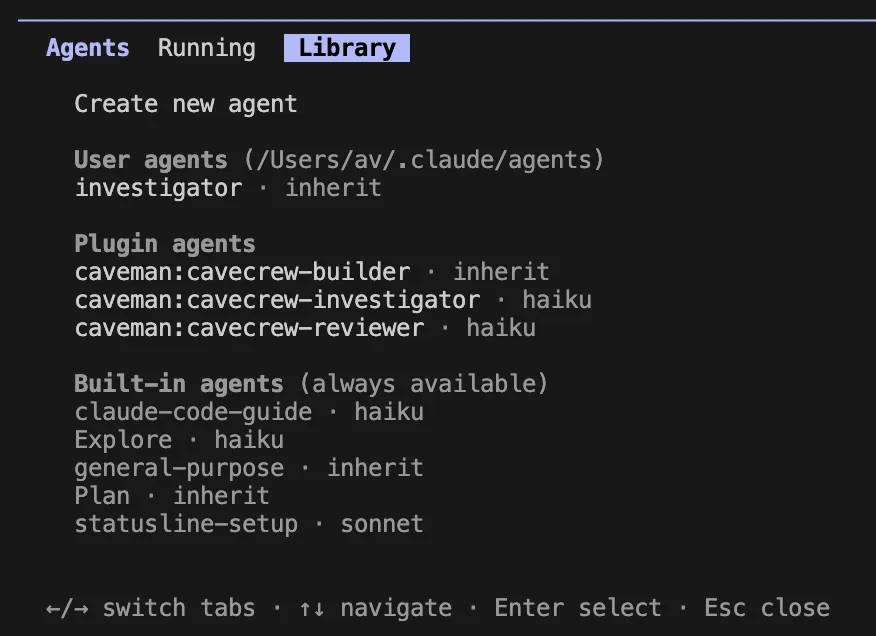

5. Implementation of a Live Status Line

To prevent "token shock," developers can integrate a live status line into their terminal. By configuring ~/.claude/settings.json or using the /statusline command, users can see a real-time percentage of context usage and the model currently in use, fostering a constant awareness of resource consumption.
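
The status line is driven by a command that Claude Code runs, passing session data as JSON on stdin. The sketch below assumes that payload contains model.display_name and cost.total_cost_usd fields and extracts them with sed so it does not depend on jq. It shows the model and session cost rather than a context percentage, since it does not assume the field names for context usage. The function name statusline is hypothetical.

```shell
# Sketch of a status-line command. Claude Code pipes session JSON on stdin;
# this assumes it includes "display_name" (under model) and "total_cost_usd"
# (under cost). sed is used instead of jq to avoid an extra dependency.
statusline() {
  local input model cost
  input=$(cat)
  # Pull the quoted value that follows "display_name".
  model=$(printf '%s' "$input" |
    sed -n 's/.*"display_name"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p')
  # Pull the numeric value that follows "total_cost_usd".
  cost=$(printf '%s' "$input" |
    sed -n 's/.*"total_cost_usd"[[:space:]]*:[[:space:]]*\([0-9.]*\).*/\1/p')
  # Whatever this prints becomes the status line; fall back if a field is missing.
  printf '[%s] $%s\n' "${model:-unknown model}" "${cost:-0}"
}
```

To wire it up, one option is to save the function in ~/.claude/statusline.sh with a final line that calls statusline, make the file executable, and point the statusLine setting at it: "statusLine": {"type": "command", "command": "~/.claude/statusline.sh"}.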

Instruction and File Architecture Optimization

How a project is structured and how instructions are delivered significantly impact the baseline token cost of every interaction.

6. Minimizing Global Instructions (CLAUDE.md)

The CLAUDE.md file acts as a permanent system prompt for the project. Anthropic recommends keeping this file under 200 lines. Excessive documentation here is expensive, as it is processed with every single message. Project essentials—such as package managers and primary directory structures—should be the only items stored globally.
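
A quick guard for the 200-line guideline, written as a hypothetical check_claude_md shell function that could run in a pre-commit hook or CI job. It only counts lines; it says nothing about whether the content itself is well chosen.

```shell
# Hypothetical guard: fail when a CLAUDE.md file exceeds a line budget.
# Exit status: 0 = within budget, 1 = over budget, 2 = file missing.
check_claude_md() {
  local file=${1:-CLAUDE.md} limit=${2:-200} lines
  if [ ! -f "$file" ]; then
    echo "missing: $file" >&2
    return 2
  fi
  # tr strips the padding some wc implementations add around the count.
  lines=$(wc -l < "$file" | tr -d ' ')
  if [ "$lines" -gt "$limit" ]; then
    echo "WARN: $file has $lines lines (budget: $limit)"
    return 1
  fi
  echo "OK: $file has $lines lines (budget: $limit)"
}
# Example (in a hook):
#   check_claude_md CLAUDE.md 200 || exit 1
```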

7. Utilizing Path-Scoped Rules

Rather than cluttering the global CLAUDE.md file, developers should use path-scoped rules. By placing specific markdown files in .claude/rules/, instructions only load when Claude is editing files within that specific directory. This "lazy loading" of instructions ensures that API rules aren’t being processed while the developer is working on front-end CSS, and vice versa.

8. Isolating Specialized Workflows via Skills

The introduction of "Claude Skills" allows for the creation of on-demand workflows. By defining a skill in .claude/skills/, such as a GitHub issue fixer, the model only invokes that specific set of instructions when the skill is called via /fix-issue. This keeps the primary prompt clean and focused.
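
A sketch of scaffolding such a skill, assuming the SKILL.md layout with name and description frontmatter under .claude/skills/<skill-name>/. The skill name fix-issue matches the example above; the steps in the body and the scaffold_skill helper are hypothetical. How the skill is triggered (by its description or by an explicit slash command) depends on the Claude Code version.

```shell
# Sketch: scaffold the fix-issue skill described above. The step list in
# the body is a hypothetical example; edit it to match your workflow.
scaffold_skill() {
  local root=${1:-.}
  local dir="$root/.claude/skills/fix-issue"
  mkdir -p "$dir"
  # Quoted 'EOF' keeps backticks and <placeholders> literal.
  cat > "$dir/SKILL.md" <<'EOF'
---
name: fix-issue
description: Fix a GitHub issue given its number. Use when asked to fix or investigate a specific issue.
---
1. Run `gh issue view <number>` to read the issue.
2. Open only the files the issue points to.
3. Implement the fix, then run the single test that covers it.
EOF
}
# Example (from the repository root):
#   scaffold_skill .
```

Only the short description sits in the model's view until the skill is used; the step-by-step body is loaded on demand, which is the point of moving it out of CLAUDE.md.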

9. Prioritizing CLI Tools Over Server Protocols

While Model Context Protocol (MCP) servers offer great flexibility, they often introduce more token overhead than standard CLI tools, because each connected server adds its tool definitions to the context. Using basic shell commands (e.g., gh for GitHub) is generally more token-efficient than keeping an MCP server connected for simple tasks.

Managing Tool and Terminal Output

Terminal outputs are often the largest contributors to context bloat, particularly when running test suites or build commands.

10. Capping MCP and Server Outputs

Large outputs from external tools can quickly overwhelm a context window. Setting export MAX_MCP_OUTPUT_TOKENS=8000 provides a safety net, preventing any single tool from flooding the session with data.

11. Restricting Bash Output Length

Similarly, standard terminal outputs should be capped. A limit of 20,000 characters (via export BASH_MAX_OUTPUT_LENGTH=20000) is typically sufficient for debugging while preventing 100,000-line log files from being ingested by the LLM.
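
Exporting these variables in one terminal only lasts for that shell. A minimal sketch for making the caps from strategies 10 and 11 persistent, assuming a bash-style profile file; persist_env is a hypothetical helper that replaces any earlier export of the same variable rather than stacking duplicates.

```shell
# Hypothetical helper: idempotently set `export NAME=VALUE` in a shell profile.
# Any previous export of the same NAME is removed before the new line is added.
persist_env() {
  local name=$1 value=$2 profile=${3:-$HOME/.bashrc}
  local tmp
  touch "$profile" || return 1
  tmp=$(mktemp) || return 1
  # Keep every line except an earlier export of this variable.
  grep -v "^export ${name}=" "$profile" > "$tmp" || true
  printf 'export %s=%s\n' "$name" "$value" >> "$tmp"
  # Note: mv replaces the file, so a symlinked profile becomes a regular file.
  mv "$tmp" "$profile"
}
# Example (writes to ~/.bashrc unless a third argument is given):
#   persist_env MAX_MCP_OUTPUT_TOKENS 8000
#   persist_env BASH_MAX_OUTPUT_LENGTH 20000
```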

12. Pre-Filtering Logs

Developers should never feed raw, unfiltered logs into Claude. Utilizing standard Unix utilities like grep, head, and tail to isolate error messages before piping them into Claude can reduce token usage by over 90% for debugging tasks.
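
A minimal pre-filter, assuming grep and tail are available: keep only lines that look like errors, with their line numbers, and cap how many are kept. The filter_log name, the keyword list, and the 40-line default are illustrative choices to adapt to your own log format.

```shell
# Hypothetical pre-filter: keep only error-like lines (with line numbers)
# and cap the result at the last N matches (default 40).
filter_log() {
  local log=$1 max=${2:-40}
  grep -n -i -E 'error|fail|exception|traceback' "$log" | tail -n "$max"
}
# Example:
#   filter_log build.log | claude -p "Explain the root cause of these failures"
```

Keeping the line numbers lets Claude ask for a narrow slice of the original log (for example with sed -n '990,1010p') if it needs more context, instead of reading the whole file.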

Advanced Model and Agent Strategies

Choosing the right tool for the job is a hallmark of an efficient AI-augmented workflow.

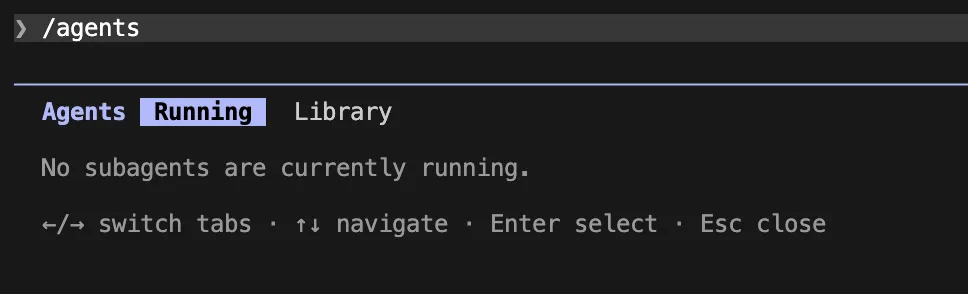

13. Deploying Research Subagents

For tasks requiring the analysis of massive amounts of documentation or code, subagents are indispensable. By delegating a "verbose" research task to a subagent, the main chat context remains clean. The subagent performs the heavy lifting in an isolated, temporary context and returns only a concise summary to the primary session.
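
Subagents can be defined as markdown files with YAML frontmatter under .claude/agents/. The sketch below assumes the name, description, tools, and model fields; the researcher agent, its prompt, the read-only tool list, and the haiku model choice are illustrative, and scaffold_research_agent is a hypothetical helper.

```shell
# Sketch: scaffold a read-only research subagent. The agent name, prompt,
# tool list, and model choice are illustrative placeholders.
scaffold_research_agent() {
  local root=${1:-.}
  mkdir -p "$root/.claude/agents"
  cat > "$root/.claude/agents/researcher.md" <<'EOF'
---
name: researcher
description: Explores large docs or unfamiliar code and returns a concise summary with file paths. Use for open-ended research questions.
tools: Read, Grep, Glob
model: haiku
---
Answer the research question you are given.
Read only what you need; do not edit files.
Reply with at most 10 bullet points, citing file paths.
EOF
}
```

Restricting the agent to reading and searching keeps it on research, and the bullet-point limit in its prompt is what keeps the summary returned to the main session small.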

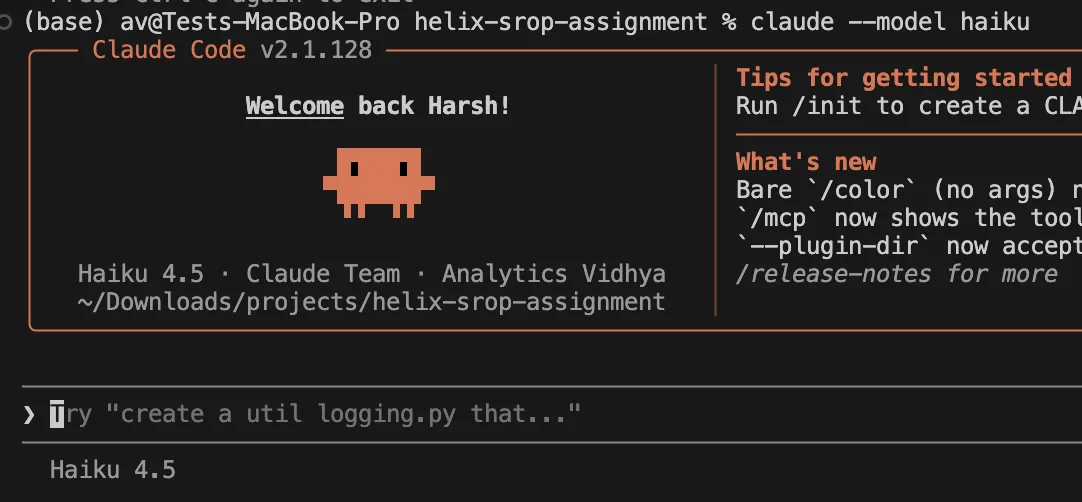

14. Economic Model Selection

Not every task requires the reasoning power of Claude Opus. Claude Sonnet is the standard choice for most coding tasks, offering a better balance of speed and cost. For simple refactoring or documentation updates, the Haiku model (claude --model haiku) offers a significant price reduction.
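
One way to make model choice a habit is a small lookup from task type to the alias passed to --model. The pick_model helper and its categories are hypothetical; the mapping simply encodes the guidance above (Haiku for light edits, Sonnet by default, Opus reserved for hard design work).

```shell
# Hypothetical helper: map a task category to a Claude Code model alias.
# The categories are illustrative; adjust them to your team's conventions.
pick_model() {
  case "$1" in
    docs|rename|format)  echo haiku ;;
    architecture|design) echo opus ;;
    *)                   echo sonnet ;;
  esac
}
# Example:
#   claude --model "$(pick_model docs)"
```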

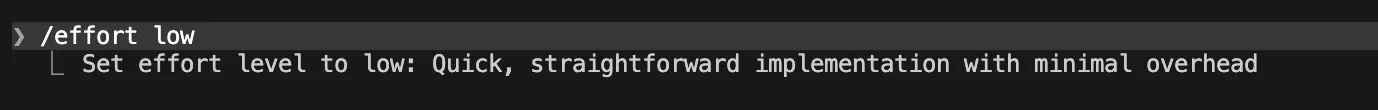

15. Modulating Effort Levels

Claude Code allows users to set an "effort level." For routine tasks, /effort low forces the model to work faster and more concisely, while /effort medium is the recommended default. Reserving high-effort modes for complex architectural problems prevents unnecessary token expenditure on trivial edits.

16. Disabling Extended Thinking

Anthropic’s "Extended Thinking" feature is powerful but expensive, because thinking tokens are billed as output tokens. For straightforward edits, disabling it via export CLAUDE_CODE_DISABLE_THINKING=1 can lead to immediate savings.

17. Leveraging Code Intelligence Plugins

For typed languages like TypeScript or Go, using code intelligence plugins allows Claude to navigate symbols more accurately. This precision prevents the model from having to "guess" and read multiple files to find a definition, thereby saving tokens.

Environmental Control and Workflow Discipline

Restricting what the AI can see is as important as telling it what to do.

18. Denying Access to Noisy Directories

Project "noise", such as .env files, node_modules, build artifacts, and coverage reports, should be explicitly blocked. By adding these paths to the permissions deny list in ~/.claude/settings.json (or a project's .claude/settings.json), developers prevent Claude from accidentally scanning thousands of lines of irrelevant data.
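
A sketch of the deny list, written as a project-level .claude/settings.json. It assumes the permissions.deny format with Read(...) path rules; the specific directories (dist, build, coverage) are placeholders for whatever your build actually produces, and write_deny_settings is a hypothetical helper that refuses to overwrite an existing file.

```shell
# Hypothetical helper: write a project-level settings file that denies reads
# of noisy or sensitive paths. Refuses to clobber an existing settings file.
write_deny_settings() {
  local target=${1:-.claude/settings.json}
  if [ -e "$target" ]; then
    echo "refusing to overwrite $target; merge the deny list by hand" >&2
    return 1
  fi
  mkdir -p "$(dirname "$target")"
  cat > "$target" <<'EOF'
{
  "permissions": {
    "deny": [
      "Read(./.env)",
      "Read(./.env.*)",
      "Read(./node_modules/**)",
      "Read(./dist/**)",
      "Read(./build/**)",
      "Read(./coverage/**)"
    ]
  }
}
EOF
}
```

A project-level file can be committed so the whole team shares the same exclusions, while a user-level ~/.claude/settings.json applies the rules across every repository on one machine.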

19. Prohibiting Broad Repository Scans

Vague prompts like "Find where the error is" often trigger a full repository scan. Efficient developers provide exact file paths: "Check the validation logic in src/auth/validator.ts." This targeted approach is the single most effective way to prevent accidental context explosion.

20. Providing Verification Targets

By telling Claude exactly how to verify a fix (e.g., "Run pnpm test auth.test.ts"), the developer prevents the model from attempting multiple different verification strategies, each of which consumes tokens.

21. Proactive Course Correction

If a developer notices Claude beginning to read irrelevant files or going down a "rabbit hole," they should interrupt it immediately (pressing Esc stops the current action). Rewinding the session or clarifying the prompt mid-stream prevents the accumulation of useless context.

22. Utilizing Simple System Prompts

For advanced users of the Opus model, enabling export CLAUDE_CODE_SIMPLE_SYSTEM_PROMPT=1 removes verbose tool descriptions from the system prompt. This is a "power user" setting that assumes the model already understands the environment, reducing the per-message overhead.

23. Removing Redundant Git Instructions

If a team uses a custom CI/CD pipeline or a specific Git workflow, the built-in Git instructions in Claude Code may be redundant. Disabling them via export CLAUDE_CODE_DISABLE_GIT_INSTRUCTIONS=1 further shrinks the baseline prompt.

Analysis of Implications for the Future of Software Engineering

The necessity of these 23 strategies highlights a shift in the role of the modern software engineer. As AI tools become more autonomous, the engineer’s primary responsibility is shifting from "writing code" to "managing context and cost." The 2025 Stanford study suggests that teams that do not adopt these "token-frugal" habits will find AI development unsustainable at scale.

Furthermore, the industry is seeing a move toward "Context-Aware Infrastructure." Future versions of IDEs and CLI tools will likely automate many of these strategies, but for the current generation of tools like Claude Code, manual discipline remains the most reliable way to ensure project viability. Engineering managers are now beginning to include "API Efficiency" as a key performance indicator (KPI) for their teams, recognizing that an efficient developer is one who can solve complex problems with the smallest possible token footprint.

In conclusion, the era of "limitless context" has met the reality of "limited budgets." By treating tokens as a finite resource—much like memory or CPU cycles—developers can harness the full power of Claude Code while maintaining a sustainable and cost-effective development environment. Applying these strategies ensures that the benefits of AI-driven development are not erased by the hidden costs of context bloat.