The landscape of digital marketing is undergoing a fundamental shift as organizations move away from traditional, one-off campaign launches toward a model of continuous refinement known as iterative testing. This methodology, which has long been a staple of software development and product engineering, is now being adopted by high-growth marketing teams to mitigate risk, maximize return on investment, and adapt to rapidly changing consumer behaviors. Unlike traditional A/B testing, which often concludes after a single winning variant is identified, iterative testing functions as a perpetual cycle of experimentation where each result informs the next hypothesis. Industry data suggests that this approach is no longer optional for brands seeking to maintain a competitive edge in a saturated digital marketplace where user attention is both scarce and expensive.

The Shift from Static Experiments to Continuous Optimization

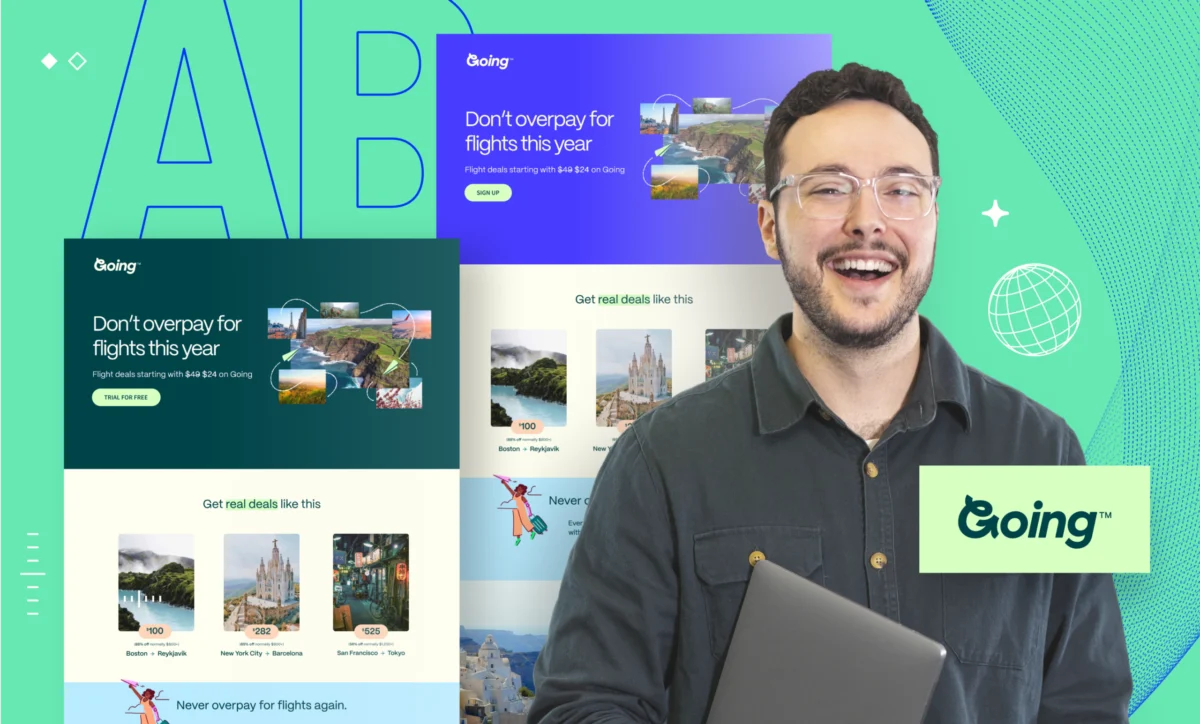

For years, the standard approach to marketing experimentation was characterized by the "big bang" launch. Marketers would design a campaign, run a single A/B test on a landing page or email subject line, declare a winner, and then move on to the next project. While this provided a snapshot of performance, it failed to account for the evolving nature of user intent or the compounding benefits of incremental improvements.

Iterative testing addresses these shortcomings by treating marketing assets as living documents. By repeatedly testing, measuring, and refining assets based on empirical data, teams can identify "slow leaks" in their conversion funnels: small inefficiencies that, over time, drain significant portions of a marketing budget. The transition to this model represents a move toward evidence-based decision-making, where the "highest-paid person’s opinion" (HiPPO) is replaced by the voice of the customer as expressed through behavioral data.

A Chronological Framework for Implementation

The adoption of an iterative testing culture typically follows a structured lifecycle. This progression ensures that testing is not a chaotic series of guesses but a disciplined scientific process.

Phase One: The Focused Hypothesis

The process begins with the identification of a single, measurable variable. One of the most common failures in early-stage testing is the attempt to change multiple elements simultaneously (such as a headline, a hero image, and a call-to-action, or CTA), which makes it impossible to determine which change drove the result. Professional practitioners now focus on narrowly scoped hypotheses, such as testing whether a benefit-driven headline outperforms a feature-driven one, or whether reducing the number of form fields increases lead quality.

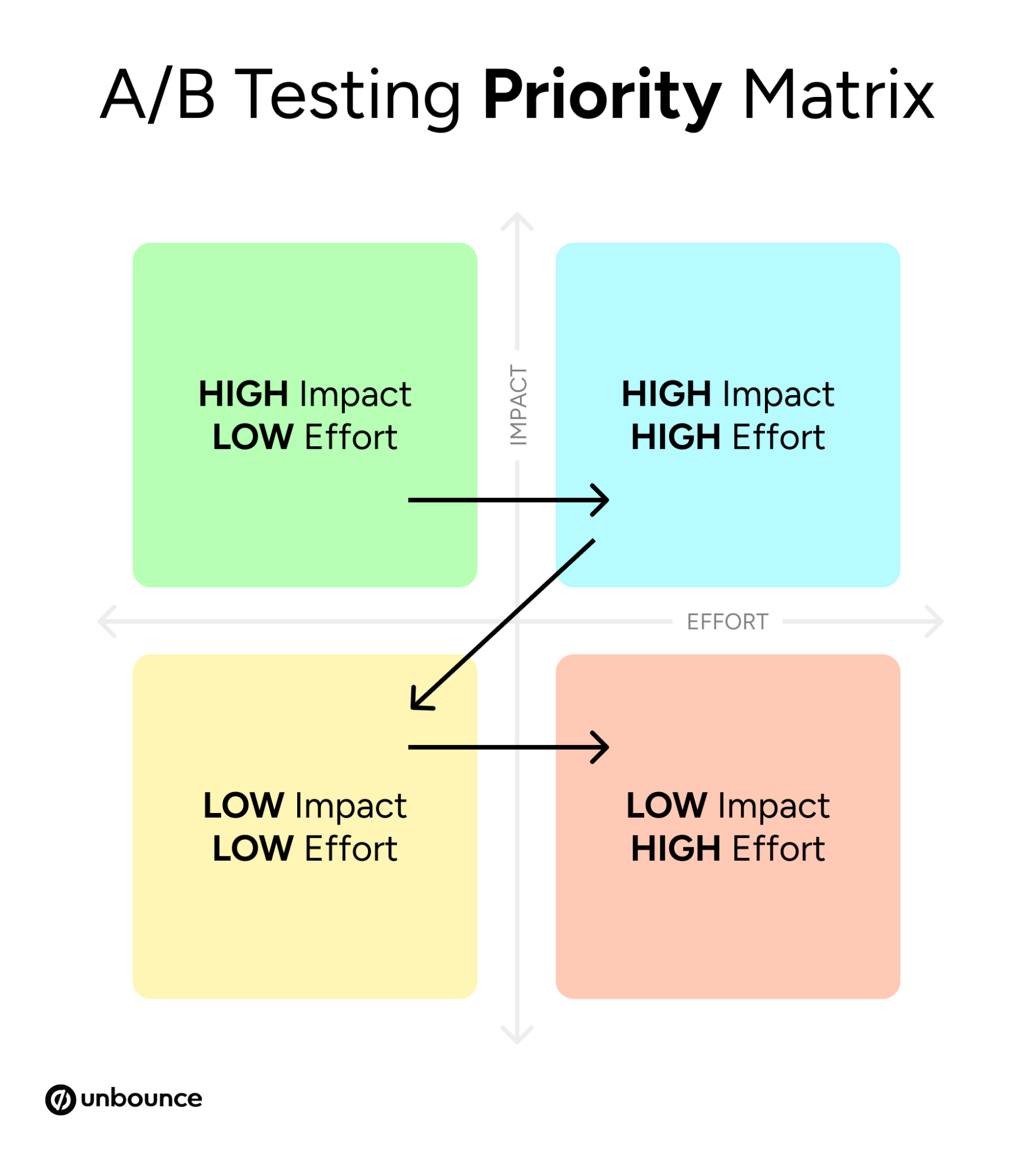

Phase Two: Strategic Prioritization

Not all tests are of equal value. Advanced marketing teams utilize prioritization matrices to rank experiments based on two primary factors: potential impact and ease of implementation. By focusing on "low-hanging fruit"—changes that are easy to make but likely to yield significant results—teams can build internal momentum and secure the budget for more complex, long-term experiments.
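A prioritization matrix of this kind can be sketched in a few lines. The example below is only an illustration: the 1-to-5 scales, the choice to multiply the two factors, and the backlog entries are all assumptions made for the sake of the sketch, not a standard scoring method.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    name: str
    impact: int  # assumed scale: 1 (low potential impact) to 5 (high)
    ease: int    # assumed scale: 1 (hard to implement) to 5 (easy)

    @property
    def score(self) -> int:
        # Multiplying favors ideas that are strong on both factors.
        return self.impact * self.ease


def prioritize(backlog: list[Experiment]) -> list[Experiment]:
    """Return experiments ordered from highest to lowest score."""
    return sorted(backlog, key=lambda e: e.score, reverse=True)


# Hypothetical backlog, for illustration only.
backlog = [
    Experiment("Rebuild checkout flow", impact=5, ease=1),
    Experiment("Benefit-driven headline", impact=4, ease=5),
    Experiment("Reduce form fields", impact=4, ease=4),
]

ranked = prioritize(backlog)
for exp in ranked:
    print(f"{exp.score:>2}  {exp.name}")
```

Here the headline test ranks first because it is both promising and cheap, while the checkout rebuild, despite its high potential impact, falls to the bottom until the team has the budget for it.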

Phase Three: The Minimum Viable Variation

In this stage, the goal is to build a testable variant with the least amount of friction. This often involves duplicating an existing control page and making a single, isolated change. The use of low-code or no-code testing platforms has accelerated this phase, allowing marketing teams to bypass traditional development bottlenecks and launch experiments in hours rather than weeks.
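One way to keep a variant honest is to check, before launch, that it differs from the control in exactly one element. The sketch below assumes pages are described as simple dictionaries of fields; the field names and copy are hypothetical, and real testing platforms represent pages differently.

```python
def changed_fields(control: dict, variant: dict) -> list[str]:
    """List the keys whose values differ between control and variant."""
    keys = set(control) | set(variant)
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def is_isolated_change(control: dict, variant: dict) -> bool:
    """True when the variant differs from the control in exactly one field."""
    return len(changed_fields(control, variant)) == 1


# Hypothetical page definitions, for illustration only.
control = {
    "headline": "Feature-rich analytics platform",
    "hero_image": "dashboard.png",
    "cta": "Start free trial",
}

# Duplicate the control and change a single element.
variant = dict(control, headline="See what drives your revenue")

# A variant that changes two elements at once would muddy the result.
muddled = dict(control, headline="See what drives your revenue", cta="Get started")

print(changed_fields(control, variant))
print(is_isolated_change(control, muddled))
```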

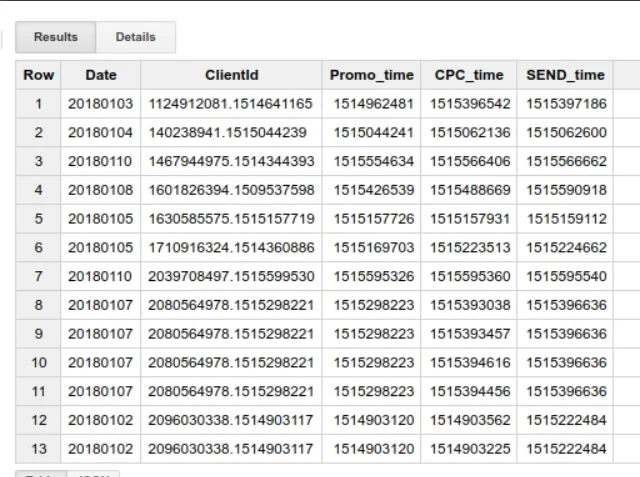

Phase Four: Data Accumulation and Statistical Significance

Launching the test is only the beginning. Collecting meaningful data requires the patience to reach statistical significance, the point at which an observed difference between variants is unlikely to be the result of random chance and is therefore reliable enough to base decisions on. Analysts suggest that most tests require a minimum of 100 conversions per variant and at least one to two full business cycles (a cycle is usually one week) to account for fluctuations in traffic sources and timing.
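As a rough illustration of what checking significance involves, the sketch below applies a two-sided, two-proportion z-test, one common choice for comparing conversion rates. The visitor and conversion counts are invented, and the 0.05 threshold is a conventional assumption rather than a rule from any particular tool.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def has_enough_conversions(conv_a: int, conv_b: int, minimum: int = 100) -> bool:
    """Apply the 100-conversions-per-variant rule of thumb from the text."""
    return min(conv_a, conv_b) >= minimum


# Hypothetical results: control converts 100 of 2,000 visitors, variant 130 of 2,000.
z, p_value = two_proportion_z_test(100, 2000, 130, 2000)
ready = has_enough_conversions(100, 130)
significant = ready and p_value < 0.05  # 0.05 is an assumed threshold

print(f"z = {z:.2f}, p = {p_value:.3f}, significant = {significant}")
```

The conversion-count check runs first on purpose: a small p-value from a test stopped too early is exactly the kind of false winner the waiting period is meant to prevent.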

Phase Five: Deep Analysis and Insight Extraction

Once a test concludes, the focus shifts from "what happened" to "why it happened." If a simpler headline increased conversions, the insight may be that the target audience values clarity over cleverness. This qualitative interpretation of quantitative data is what allows a single test result to be scaled across an entire marketing department, influencing everything from ad copy to sales scripts.

Phase Six: The Iterative Loop

The final step is the most critical: using the insights from the previous test to form the next hypothesis. This creates a compounding effect in which each small win builds upon the last, so that modest individual lifts add up to substantial gains in conversion rates over time.
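The arithmetic behind compounding is simple to work through. The figures below are hypothetical: a 4% baseline conversion rate and six consecutive winning tests, each assumed to deliver a 5% relative lift that persists.

```python
def compounded_rate(baseline: float, lifts: list[float]) -> float:
    """Apply a sequence of relative lifts to a baseline conversion rate."""
    rate = baseline
    for lift in lifts:
        rate *= 1 + lift
    return rate


baseline = 0.04      # hypothetical starting conversion rate (4%)
lifts = [0.05] * 6   # six winning tests, each a 5% relative lift (assumed)

final_rate = compounded_rate(baseline, lifts)
total_lift = final_rate / baseline - 1

print(f"final rate: {final_rate:.4f}, total lift: {total_lift:.1%}")
```

Under these assumptions the total lift comes to roughly 34%, more than the 30% obtained by simply adding the six lifts together, because each improvement applies to an already improved rate.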

Supporting Data: The Science of Conversion

Recent industry reports, including the 2024 Conversion Benchmark Report, provide compelling evidence for the efficacy of iterative testing. One of the most significant findings in recent years is the impact of content complexity on user behavior. Data shows that landing pages written at a 5th-to-7th-grade reading level convert at a rate of 11.1%, which is more than double the conversion rate of pages written with more professional or academic language.

Furthermore, the complexity of the language used has a documented negative relationship with conversion rates. Specifically, landing pages with higher word complexity show a correlation of -24.3% with conversions. This data point alone has prompted thousands of companies to begin iterative tests focused on simplifying their value propositions.

Device-specific data also highlights the need for continuous testing. Currently, approximately 83% of landing page visits occur on mobile devices. However, desktop users still convert at an average rate that is 8% higher than that of mobile users. This discrepancy suggests that while "mobile-first" is a common industry mantra, many mobile experiences remain suboptimal. Iterative testing allows brands to close this gap by testing mobile-specific layouts and simplified navigation structures that cater to the on-the-go user.

Stakeholder Reactions and Organizational Impact

The move toward iterative testing is receiving broad support across various corporate departments, though each views the impact through a different lens.

Chief Marketing Officers (CMOs) are increasingly championing iterative models because they provide a more predictable ROI. In an era of tightening budgets, the ability to prove that every dollar spent is backed by evidence is a significant advantage during board-level discussions. CMOs view iterative testing as a risk-mitigation strategy that prevents the "all-in" bets that can lead to catastrophic campaign failures.

Sales departments also benefit from this approach. By iteratively testing lead capture forms and the messaging that precedes them, marketing teams can deliver higher-quality leads. When a marketing team identifies that a specific type of messaging resonates better with a high-value audience segment, the sales team can incorporate those exact phrases into their outreach, creating a seamless experience for the prospect.

Product and UX designers, meanwhile, see iterative testing as a way to validate user interface choices. Instead of debating the color of a button or the placement of a menu in a vacuum, designers can rely on live user data to settle disputes, leading to faster design cycles and a more user-centric product.

Broader Implications for the Future of Digital Marketing

The rise of artificial intelligence and machine learning is set to accelerate the iterative testing movement. New tools are emerging that can automatically reroute traffic to high-performing variants in real time, a process known as "Smart Traffic" or automated optimization. Some of these systems can begin optimizing after as few as 50 visits, drastically shortening the feedback loop and allowing even low-traffic campaigns to benefit from the iterative model.
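To give a feel for how automated traffic allocation can work in principle, the sketch below uses Thompson sampling, a well-known multi-armed bandit technique. It is not a description of how any named product works; the class name, the simulated conversion rates, and the visit count are all assumptions for illustration.

```python
import random


class ThompsonSampler:
    """Route each visit to a variant using Beta-Bernoulli Thompson sampling."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        # [alpha, beta] per variant, starting from a uniform Beta(1, 1) prior.
        self.stats = {v: [1, 1] for v in variants}

    def choose(self) -> str:
        # Draw a plausible conversion rate for each variant and pick the best draw.
        draws = {v: self.rng.betavariate(a, b) for v, (a, b) in self.stats.items()}
        return max(draws, key=draws.get)

    def record(self, variant: str, converted: bool) -> None:
        # A conversion increments alpha; a non-conversion increments beta.
        self.stats[variant][0 if converted else 1] += 1


# Simulated "true" conversion rates, unknown to the sampler (hypothetical).
true_rates = {"control": 0.05, "variant": 0.15}

sampler = ThompsonSampler(true_rates, seed=42)
visitors = random.Random(7)
traffic = {name: 0 for name in true_rates}

for _ in range(5000):
    shown = sampler.choose()
    traffic[shown] += 1
    sampler.record(shown, visitors.random() < true_rates[shown])

print(traffic)
```

Early on, both variants receive visits because the sampler is uncertain; as evidence accumulates, most traffic drifts toward the stronger variant without anyone manually declaring a winner.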

However, the human element remains irreplaceable. While AI can identify which variant is performing better, it cannot yet explain the psychological nuances of why a particular message resonates with a human audience. Therefore, the future of the industry likely lies in a hybrid model: AI handling the rapid execution and traffic distribution of tests, while human strategists focus on the creative hypothesis and the long-term application of insights.

The ultimate implication of this shift is the democratization of growth. In the past, only companies with massive budgets and dedicated data science teams could afford sophisticated testing programs. Today, the availability of accessible testing tools and a wealth of benchmark data means that even small startups can implement a rigorous iterative testing process.

As digital channels become increasingly crowded and the cost of customer acquisition continues to rise, the companies that thrive will be those that view their marketing not as a series of static events, but as a continuous laboratory. Iterative testing provides the framework for this evolution, turning every visitor interaction into a data point and every campaign into a learning opportunity. The era of marketing by intuition is coming to an end; the era of the evidence-based marketer has arrived.