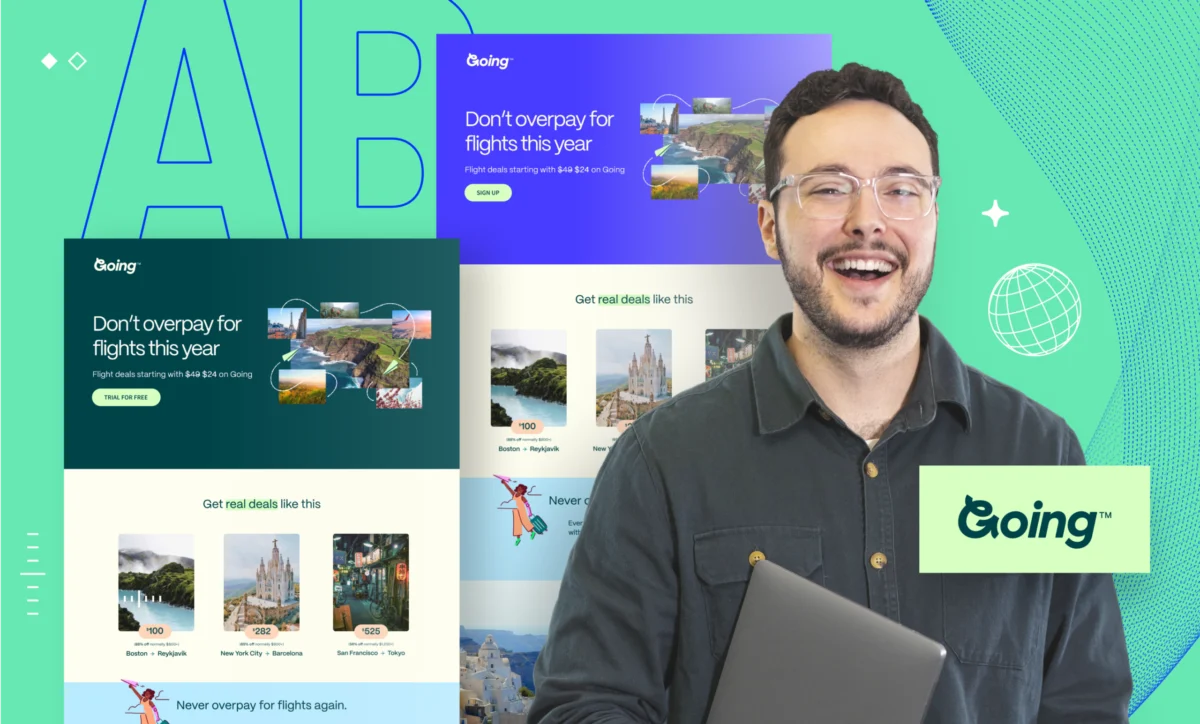

Digital marketing methodologies are undergoing a fundamental transformation as organizations move away from traditional, high-stakes campaign launches in favor of iterative testing, a process of continuous, evidence-based refinement. In an era where consumer behavior shifts rapidly across devices and platforms, the "one-and-done" approach to A/B testing is increasingly viewed as an outdated relic of the early web. Industry experts now advocate for a cyclical testing model that prioritizes small, incremental improvements which compound over time to deliver significantly higher conversion rates and more predictable returns on investment.

The Paradigm Shift in Conversion Rate Optimization

Historically, marketing teams approached A/B testing as a singular event—a "championship game" where a new landing page or ad creative was pitted against an old one. While this provided a snapshot of performance, it often failed to capture the "why" behind user actions or account for the shifting variables of the digital marketplace. Iterative testing, a methodology long utilized in software development and product engineering, has bridged this gap. It involves a repetitive cycle of testing, measuring, and refining assets based on the insights gleaned from previous experiments.

This shift is driven by the realization that massive marketing failures are rarely the result of a single catastrophic event. Instead, they are often the result of "slow leaks": minor inefficiencies in messaging, user interface, or technical performance that drain budgets over time. By adopting an iterative framework, marketers can identify and patch these leaks in real time, transforming the testing phase from a post-mortem analysis into a proactive engine for growth.

A Chronology of Methodological Development

The transition to iterative marketing can be traced through several distinct stages of digital evolution. In the early 2010s, static A/B testing became accessible to the average marketer, allowing for basic comparisons of headlines and button colors. However, the feedback loops remained long, often requiring weeks or months of data collection to reach statistical significance.

By the late 2010s, the rise of machine learning and automated optimization tools began to accelerate these cycles. The introduction of technologies such as "Smart Traffic" signaled a move toward dynamic optimization, where algorithms could begin shifting traffic to winning variants in as few as 50 visits. As of 2024, the industry has reached a point of maturity where the most successful firms no longer view testing as a project, but as a permanent cultural fixture. Data from the 2024 Conversion Benchmark Report indicates that this continuous refinement is no longer optional; it is the primary differentiator between brands that scale and those that stagnate.

The Iterative Framework: A Step-by-Step Analysis

To implement an effective iterative testing program, organizations are adopting a structured six-step process designed to maximize learning and minimize risk.

1. Hypothesis Formulation

The process begins with the development of a focused hypothesis. Unlike traditional tests that might change five variables at once, iterative testing demands a laser focus on a single element. For example, a marketer might hypothesize that "Changing the call-to-action from ‘Sign Up Now’ to ‘Start My Free Trial’ will increase conversions by 10% because it emphasizes the immediate benefit to the user." This specificity ensures that the results are actionable and clearly linked to a specific user behavior.

2. Strategic Prioritization

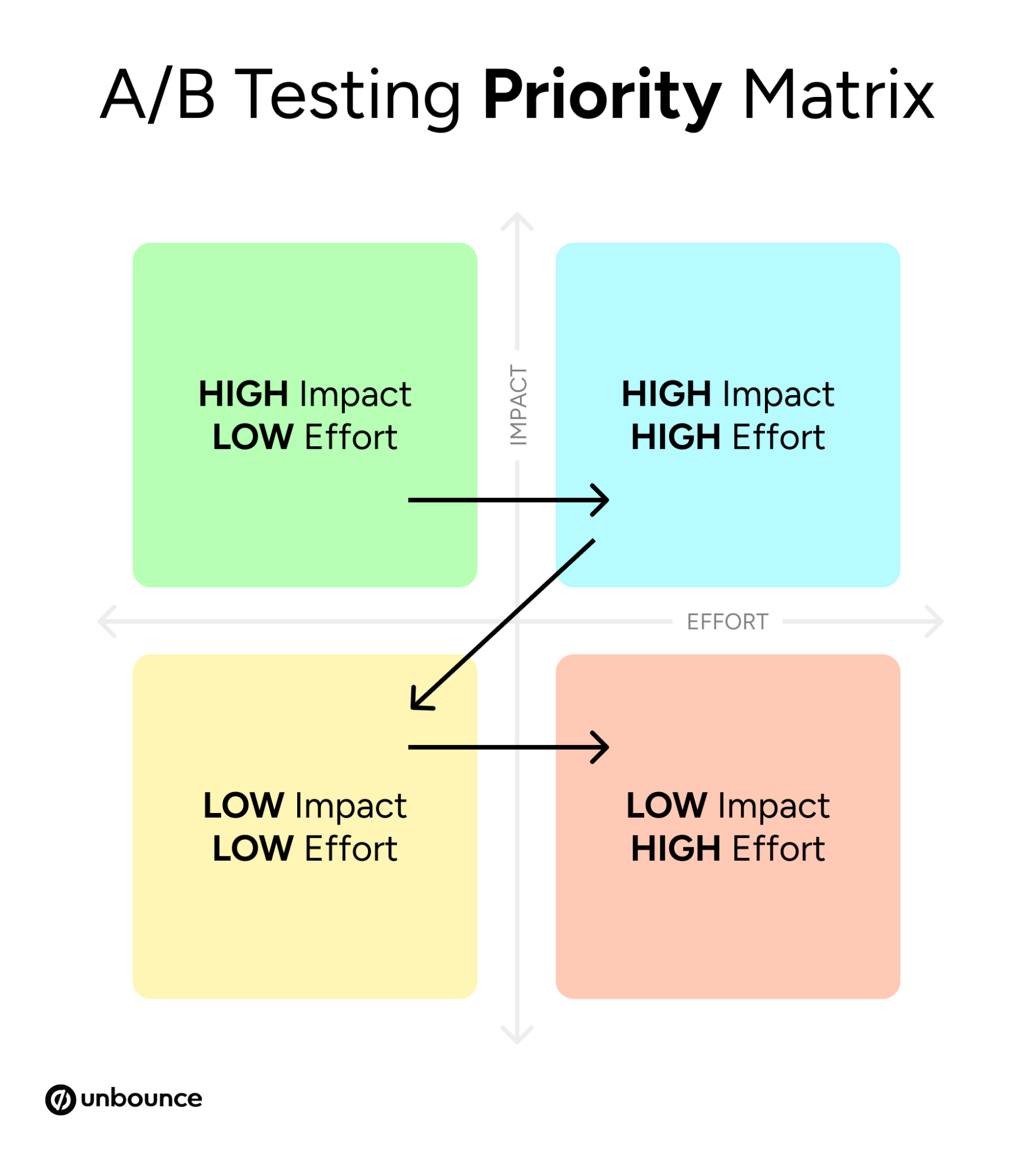

Recognizing that resources are finite, teams utilize an "Impact vs. Effort" matrix to prioritize experiments. High-impact, low-effort changes, often referred to as "quick wins," are run first to build momentum. This strategic layering ensures that the testing program remains agile and produces results that justify continued investment from stakeholders.
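One way to make the "Impact vs. Effort" matrix concrete is to score each experiment idea and sort the backlog. The sketch below is illustrative only: the 1-to-5 scales, the threshold of 3, the quadrant labels other than "quick win," and the sample backlog are all assumptions, not part of any standard or of the report cited in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    name: str
    impact: int  # estimated impact, 1 (low) to 5 (high); assumed scale
    effort: int  # estimated effort, 1 (low) to 5 (high); assumed scale


def quadrant(exp: Experiment, threshold: int = 3) -> str:
    """Place an experiment in one of the four impact/effort quadrants."""
    high_impact = exp.impact >= threshold
    low_effort = exp.effort < threshold
    if high_impact and low_effort:
        return "quick win"
    if high_impact:
        return "major project"
    if low_effort:
        return "fill-in"
    return "deprioritize"


def prioritize(backlog: list[Experiment]) -> list[Experiment]:
    """Order experiments by impact-to-effort ratio, highest first."""
    return sorted(backlog, key=lambda e: e.impact / e.effort, reverse=True)


# Hypothetical backlog with made-up scores.
backlog = [
    Experiment("Rewrite CTA copy", impact=4, effort=1),
    Experiment("Rebuild checkout flow", impact=5, effort=5),
    Experiment("Change footer link color", impact=1, effort=1),
    Experiment("Add video testimonials", impact=2, effort=4),
]

for exp in prioritize(backlog):
    print(f"{exp.name}: {quadrant(exp)}")
```

Sorting by a simple ratio is only one possible rule. Teams that weight confidence or reach alongside impact would swap in a different key function without changing the rest of the sketch.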

3. Minimal Testable Variations

Borrowing from the "Minimum Viable Product" (MVP) concept in tech, iterative design focuses on building variants that are different enough to test a hypothesis but simple enough to launch quickly. This avoids the "perfectionism trap" where teams spend weeks designing a variant only to find it underperforms in the real world.

4. Data Collection and Statistical Validation

A critical component of the process is ensuring statistical significance. Industry analysis suggests that many teams fail because they "peek" at results too early and make decisions based on random fluctuations. Modern iterative testing requires rigorous adherence to predetermined sample sizes and confidence levels (typically 95% or higher) to ensure that a "win" is a genuine reflection of user preference.
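The two checks described here, fixing a sample size before launch and testing significance only once it is reached, can be sketched with the Python standard library. This is a minimal illustration using the standard normal-approximation formulas for comparing two proportions. The 5% baseline rate, the 80% power setting, and the visitor counts are assumptions chosen for the example, not figures from the report.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in each variant to detect the given relative lift.

    Uses the normal-approximation formula for a two-sided test of two
    proportions. alpha=0.05 corresponds to a 95% confidence level.
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def two_proportion_p_value(conv_a: int, n_a: int,
                           conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical plan: 5% baseline conversion, hoping for a 10% relative lift.
needed = sample_size_per_variant(baseline=0.05, relative_lift=0.10)

# Hypothetical interim data: 10,000 visitors per variant.
p_value = two_proportion_p_value(conv_a=500, n_a=10_000,
                                 conv_b=560, n_b=10_000)

print(f"Visitors needed per variant: {needed}")
print(f"p-value: {p_value:.3f}, significant at 95%: {p_value < 0.05}")
```

With these made-up numbers, the plan calls for roughly 31,000 visitors per variant, and the interim data at 10,000 visitors does not clear the 95% bar even though the variant looks 12% better. That is the situation in which "peeking" tempts a team to declare a winner too early.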

5. Insight Extraction

The analysis phase goes beyond identifying a winner. It seeks to understand the underlying psychology. If a simplified headline outperforms a complex one, the insight is not just about that specific page; it is about the audience’s preference for clarity over cleverness—a finding that can then be applied to email subject lines, social media copy, and even product descriptions.

6. Scaling and Evolution

Successful learnings are not kept in isolation. Once a variant is proven, it is scaled across other channels. A successful landing page headline might become the core message of a global ad campaign, creating a feedback loop where every small test informs the broader brand strategy.

Supporting Data and Industry Benchmarks

The necessity of this iterative approach is underscored by recent data highlighting the complexity of modern user behavior. According to the 2024 Conversion Benchmark Report, which analyzed thousands of landing pages, the relationship between content complexity and conversion is stark. Pages written at a 5th-to-7th-grade reading level convert at an average rate of 11.1%, which is more than double the conversion rate of pages written in professional or academic language.
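A reading grade level can be estimated programmatically before a variant goes live. The sketch below uses the Flesch-Kincaid grade formula, which is an assumption on our part: the article does not state which readability measure the report used. The syllable counter is a crude vowel-group heuristic and the two sample headlines are invented, so treat the scores as relative rather than exact.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count groups of consecutive vowels."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text: str) -> float:
    """Approximate U.S. school grade level using the Flesch-Kincaid formula."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * len(words) / len(sentences)
            + 11.8 * syllables / len(words)
            - 15.59)


# Hypothetical copy variants for comparison.
plain = "Start your free trial today. It takes two minutes."
dense = ("Leverage our comprehensive platform to operationalize "
         "multidimensional optimization initiatives.")

print(f"plain: grade {flesch_kincaid_grade(plain):.1f}")
print(f"dense: grade {flesch_kincaid_grade(dense):.1f}")
```

A score like this works best as a pre-launch screen for copy variants. Whether simpler copy actually converts better for a given audience is still a question for the test itself.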

Furthermore, the report revealed a significant disconnect between device usage and conversion efficiency. While 83% of landing page visits now occur on mobile devices, desktop sessions still convert 8% better on average. This discrepancy provides a prime target for iterative testing: marketers must continuously experiment with mobile-specific layouts, faster load times, and simplified forms to close the "mobile gap."

Additional findings indicate that word complexity has a negative correlation (-24.3%) with conversion rates. This data suggests that many brands are over-complicating their value propositions, a mistake that iterative testing is uniquely suited to correct through the gradual simplification of messaging.

Expert Insights and Organizational Reactions

Josh Gallant, a prominent organic growth strategist and founder of Backstage SEO, emphasizes that the primary benefit of iterative testing is the reduction of wasted spend. "Most marketing failures aren’t massive flops," Gallant notes. "They’re slow leaks that drain your budget day after day." His analysis suggests that by moving from quarterly feedback cycles to daily or weekly iterations, companies can identify what resonates with users before they have exhausted their quarterly budgets.

Industry analysts also point to the psychological benefits of this model within an organization. When marketing teams are encouraged to view "failed" tests as valuable data points rather than personal or professional setbacks, it fosters a culture of experimentation. This organizational shift is often cited by Chief Marketing Officers (CMOs) as a key factor in retaining top talent and maintaining a competitive edge in crowded markets.

Broader Impact and Future Implications

The implications of iterative testing extend far beyond simple conversion rate optimization (CRO). As artificial intelligence (AI) becomes more integrated into marketing technology, the ability to run thousands of iterations simultaneously is becoming a reality. AI-driven tools can now analyze user segments in real time, serving different variations of a page to different users based on their past behavior or demographic data.

However, this technological advancement does not eliminate the need for human oversight. The "human in the loop" remains essential for setting the strategic direction and interpreting the broader emotional resonance of a brand’s message. The future of marketing appears to be a hybrid model where AI handles the rapid execution of iterations, while human marketers focus on high-level hypothesis generation and cross-functional strategy.

Ultimately, iterative testing represents a move toward a more scientific, disciplined form of marketing. By treating every campaign as a learning opportunity and every data point as a stepping stone, organizations can build a more resilient and responsive marketing engine. In a landscape defined by volatility, the ability to learn and adapt faster than the competition is the only sustainable advantage. The move toward iterative models is not merely a trend in digital advertising; it is a fundamental maturation of the industry, signaling a future where data-driven precision replaces creative guesswork.