In digital marketing, the shift from intuition-based design to data-driven experimentation has become central to commercial success. Experiments underpin high-performing campaigns by providing the empirical evidence needed to distinguish effective strategies from those that need refinement. A/B testing has long been the standard for comparing isolated page elements, but increasingly complex user interfaces call for more sophisticated approaches. For organizations that want to understand how multiple design variables interact, multivariate testing (MVT) has emerged as a leading method for improving conversion rates and optimizing the user journey.

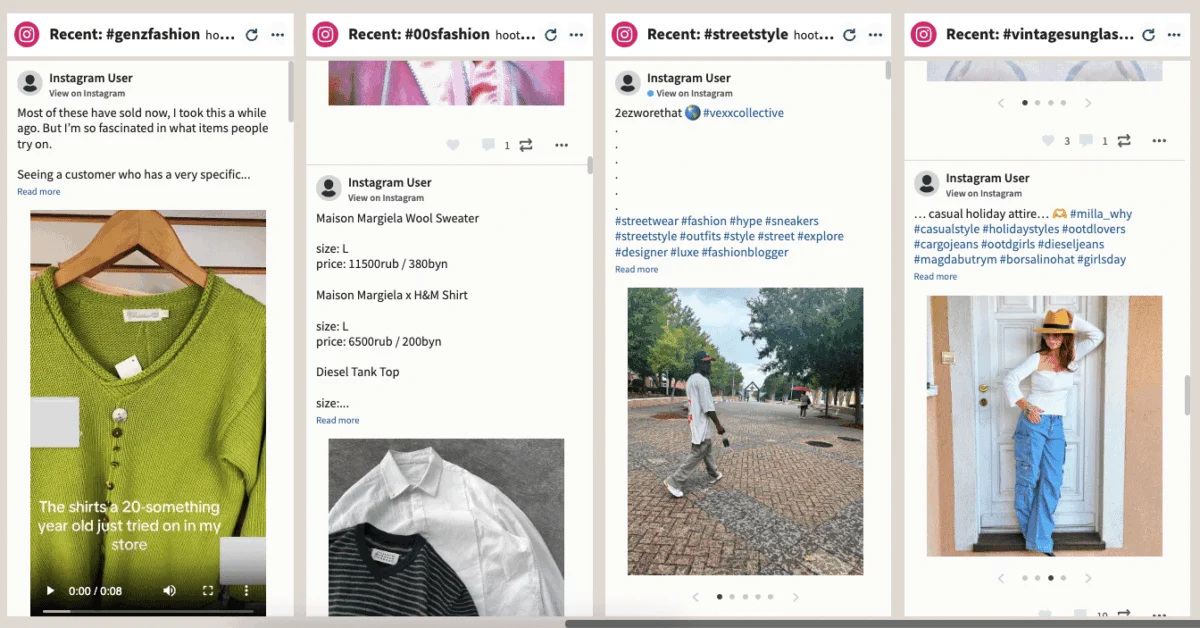

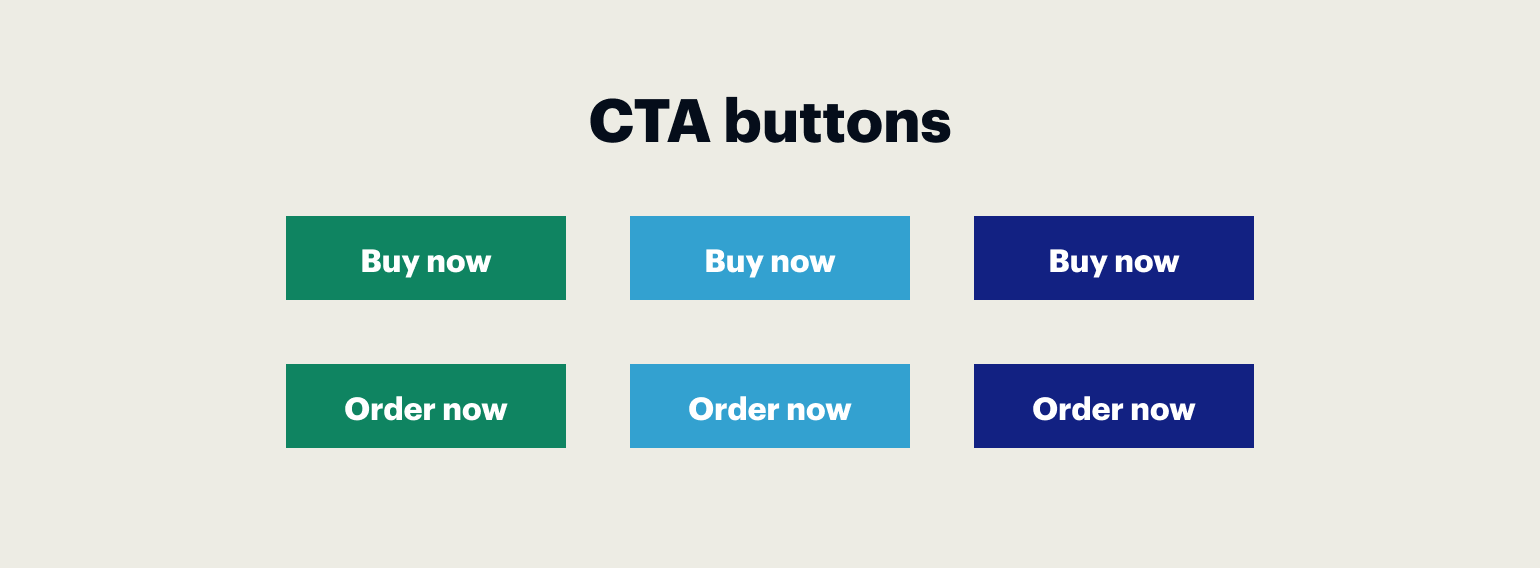

Multivariate testing extends the logic of split testing. A standard split test typically isolates a single variable, such as a headline or a call-to-action (CTA) button, whereas MVT lets marketers analyze how different components of a web page interact with one another. By modifying and testing several variables at once, such as images, web forms, button colors, and link placements, businesses can identify the combination of elements that resonates most with their target audience. This approach goes beyond simple "this or that" comparisons and offers a holistic view of page performance and of the drivers of user behavior.

The Technical Foundations of Multivariate Testing

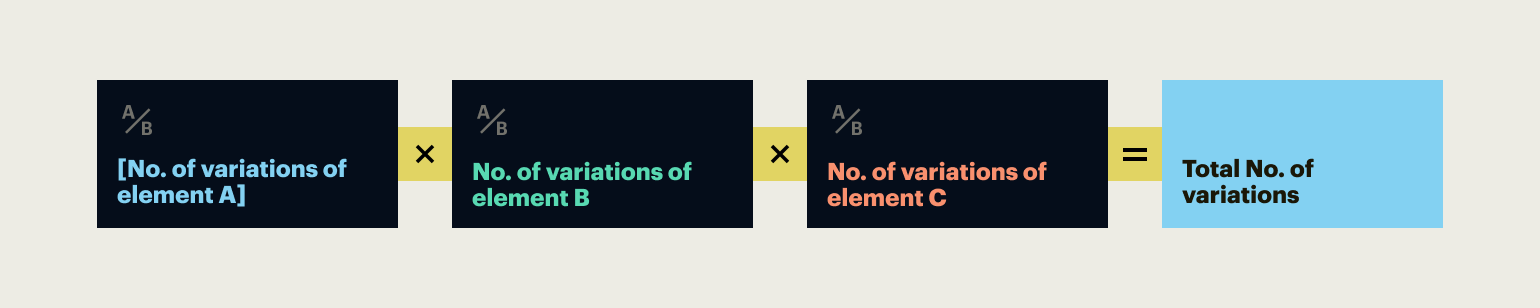

To understand the utility of multivariate testing, one must first grasp its mathematical and operational framework. The primary objective of an MVT experiment is to determine the "winning variation"—the specific combination of headlines, visuals, and interactive elements that produces the highest engagement or conversion rate. This process is instrumental in Conversion Rate Optimization (CRO), as it validates multiple hypotheses concurrently rather than sequentially.

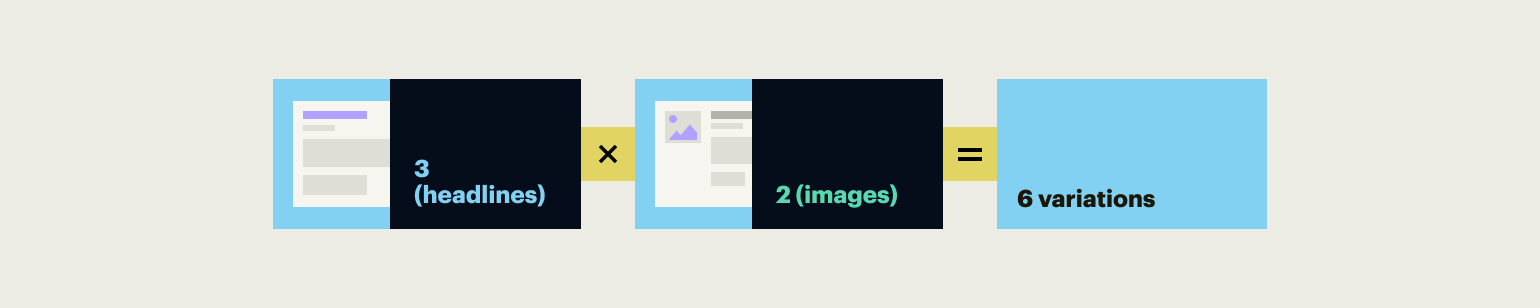

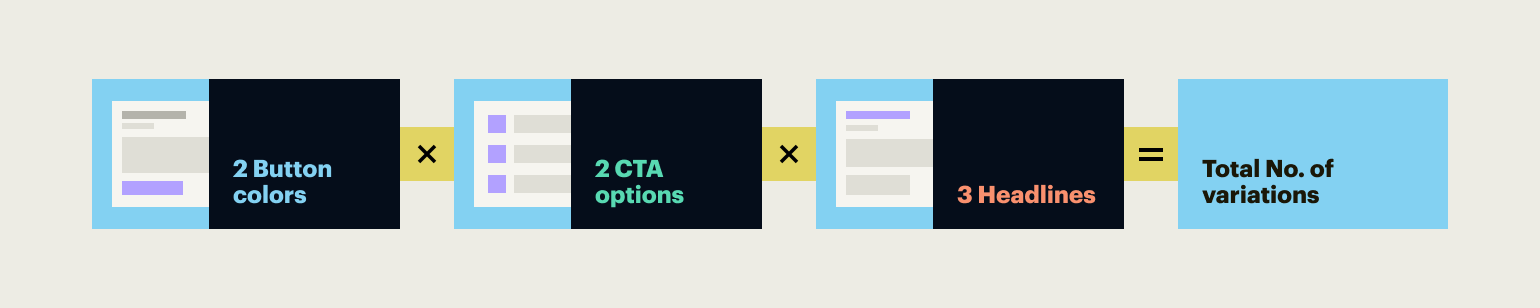

The number of variations in an MVT experiment follows a multiplicative formula: the number of variations of the first element multiplied by the number of variations of the second element, and so on. For instance, if a marketing team wishes to test three different headlines and two distinct hero images, the result is 3 × 2 = 6 unique page combinations. If the experiment expands to include two CTA button colors and two wording options, the total grows to 3 × 2 × 2 × 2 = 24. This multiplicative growth highlights both the power and the primary challenge of MVT: every added element multiplies the number of combinations, and with it the traffic needed to achieve statistical significance.
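The multiplicative rule can be checked with a short Python sketch. The element names and variation labels below are hypothetical placeholders chosen to mirror the example of three headlines, two hero images, two button colors, and two wording options.

```python
from itertools import product
from math import prod

# Hypothetical page elements and their variations (illustrative labels only).
elements = {
    "headline": ["H1", "H2", "H3"],
    "hero_image": ["img_a", "img_b"],
    "cta_color": ["green", "orange"],
    "cta_wording": ["Buy now", "Get started"],
}


def count_combinations(elements):
    """Multiply the number of variations of each element."""
    return prod(len(options) for options in elements.values())


def enumerate_combinations(elements):
    """List every page combination as a dict of element -> chosen variation."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


total = count_combinations(elements)
combinations = enumerate_combinations(elements)
print(total, len(combinations))
```

Enumerating the combinations explicitly, rather than only counting them, is useful when each one has to be built and named as a separate page variation.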

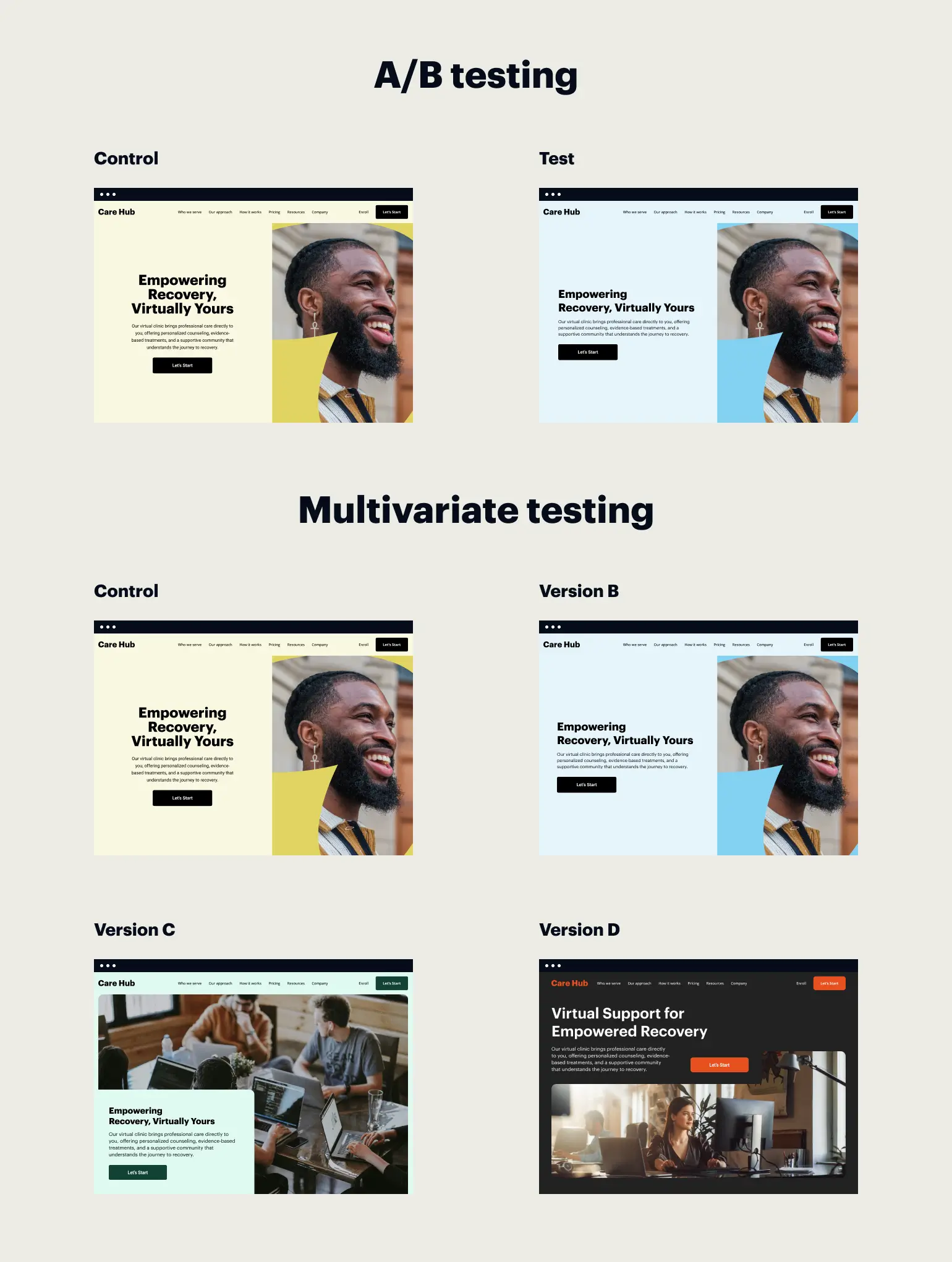

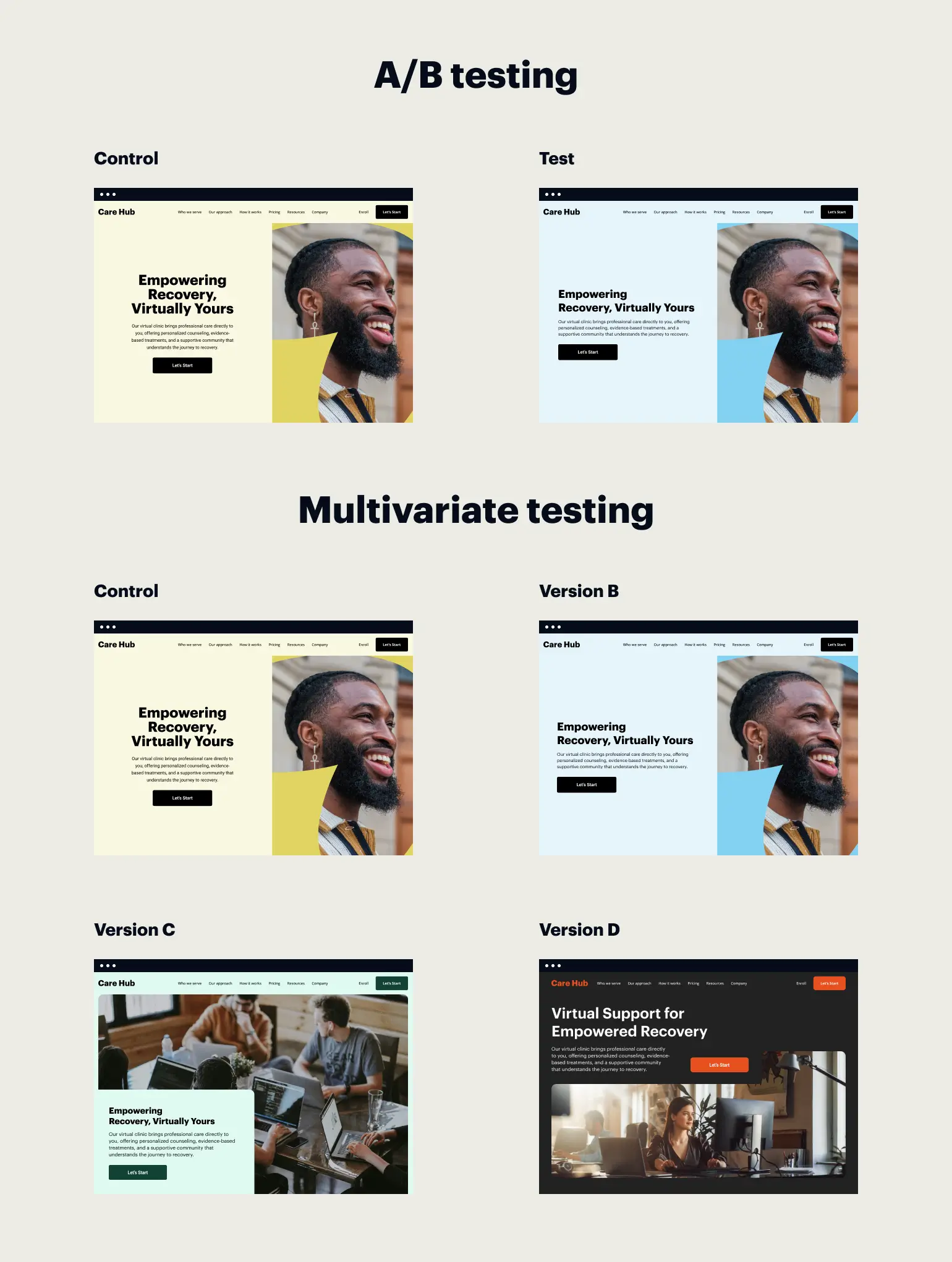

Distinguishing MVT from Traditional A/B Testing

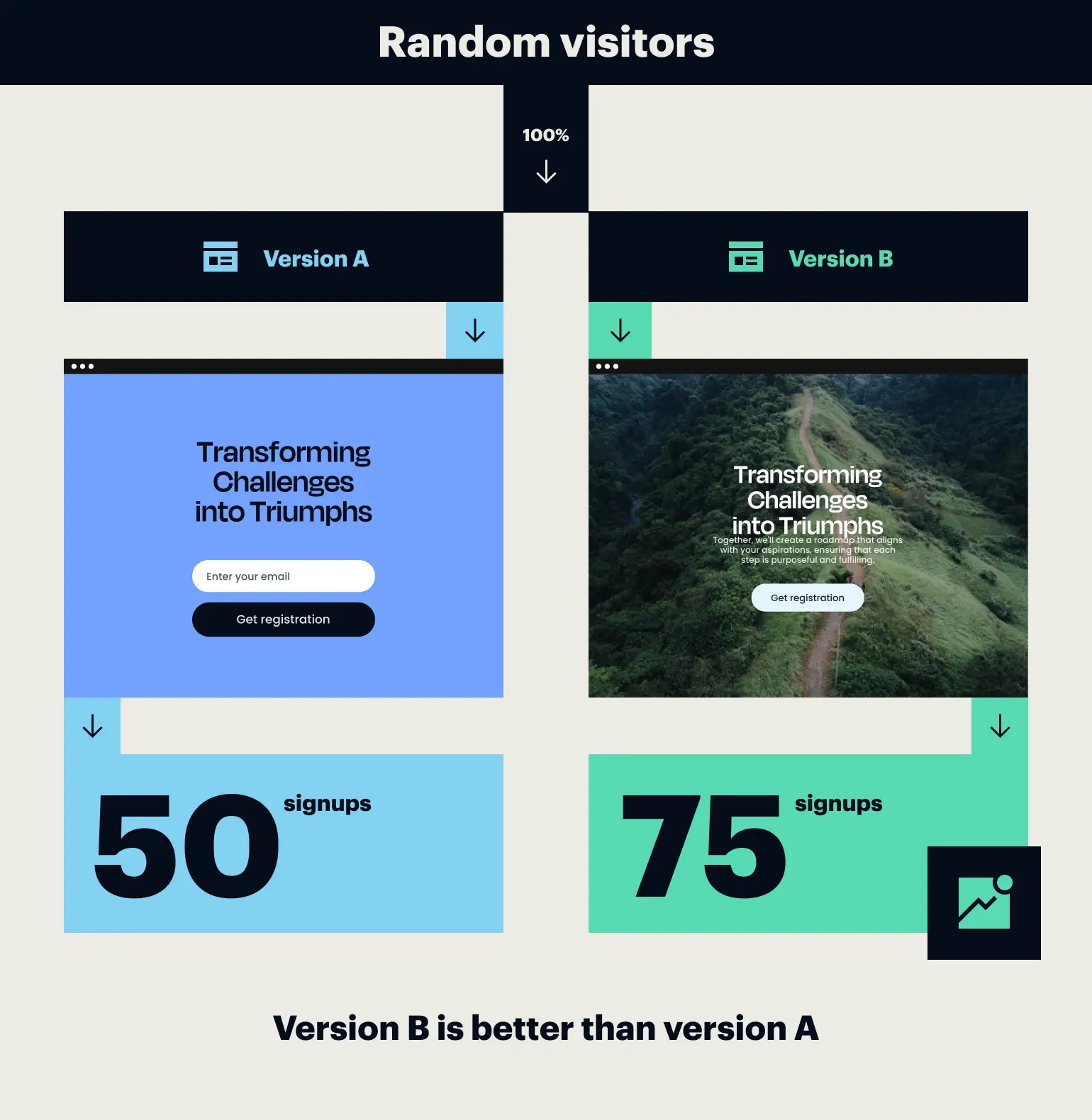

While A/B testing and multivariate testing share the goal of improving user experience and performance, they rest on different methodologies. A/B testing, or split testing, divides an audience into two groups and shows each group a different version of a page or of a single element. This method is highly effective for radical changes, such as testing two completely different page layouts, and for websites with lower traffic volumes that cannot support the complexity of MVT.

In contrast, multivariate testing is an advanced level of experimentation designed for fine-tuning and optimization. It empowers marketers to assess the ripple effects of small changes. For example, a travel agency seeking to optimize its booking process might use an A/B test to decide between a short-form and a long-form layout. However, once a layout is chosen, the agency might employ MVT to test different combinations of form field labels, button placements, and trust signals (such as security badges) to find the "sweet spot" for conversions.

The trade-off between the two methods involves complexity and speed. A/B tests are generally faster to implement and require smaller sample sizes, making them ideal for rapid iterations. MVT, while more resource-intensive and slower to conclude, provides a more comprehensive insight into user behavior by revealing "interaction effects"—instances where a specific headline might perform poorly on its own but exceptionally well when paired with a specific background image.
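An interaction effect can be made concrete with a two-by-two example. The conversion rates below are invented purely for illustration, and the names `simple_effect` and `interaction_effect` are hypothetical helpers rather than part of any testing platform.

```python
# Invented conversion rates for two headlines paired with two backgrounds.
rates = {
    ("A", "plain"): 0.040,
    ("A", "photo"): 0.042,
    ("B", "plain"): 0.030,
    ("B", "photo"): 0.060,
}


def simple_effect(rates, headline):
    """Change in conversion rate when this headline switches from plain to photo."""
    return rates[(headline, "photo")] - rates[(headline, "plain")]


def interaction_effect(rates):
    """How much more the photo helps headline B than it helps headline A."""
    return simple_effect(rates, "B") - simple_effect(rates, "A")


best_combination = max(rates, key=rates.get)
print(best_combination, round(interaction_effect(rates), 3))
```

In this made-up data, headline B loses to headline A on the plain background but wins clearly once the photo is added. Two separate single-variable tests run on the plain background would be unlikely to reveal that.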

Methodologies of Multivariate Experimentation

Marketers typically employ three primary statistical approaches when conducting multivariate tests: Full Factorial, Fractional Factorial, and the Taguchi Method.

- Full Factorial Testing: This is widely regarded as the gold standard for accuracy. In a full factorial test, every possible combination of variables is tested against a portion of the audience. This ensures that the interaction between every element is accounted for, providing the most reliable data. However, because it tests every combination, it requires the highest volume of traffic.

- Fractional Factorial Testing: This method tests only a carefully chosen fraction of the possible combinations and uses statistical models to predict how the untested combinations would likely perform. The trade-off is that some interaction effects can no longer be separated from other effects, so the design assumes those interactions are negligible. This is a pragmatic choice for websites that want the insights of MVT but lack the traffic required for a full factorial test.

- The Taguchi Method: Originally derived from manufacturing and industrial quality control, this method is the least common in digital marketing. It uses orthogonal arrays to identify the most impactful variables from a small number of test combinations. While efficient in theory, its complexity often outweighs its benefits in a fast-paced digital environment.
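The difference between a full and a fractional factorial design can be sketched for three elements with two variations each. The factor names are hypothetical, each variation is coded as -1 or +1, and the half fraction keeps only the runs where the product of the three codes is +1. This is one standard construction, not necessarily the one a given testing tool uses.

```python
from itertools import product

# Hypothetical two-level factors; -1 and +1 stand for the two variations of each.
factors = ("headline", "image", "cta")

# Full factorial: all 2**3 = 8 combinations.
full_design = list(product((-1, 1), repeat=len(factors)))

# Half fraction: keep the runs where headline * image * cta == +1 (4 combinations).
half_design = [run for run in full_design if run[0] * run[1] * run[2] == 1]

for run in half_design:
    print(dict(zip(factors, run)))

# Within the half fraction the cta column always equals headline * image,
# so the cta main effect cannot be separated from the headline-image interaction.
aliased = all(cta == headline * image for headline, image, cta in half_design)
```

The half design still shows each variation of each element equally often, which is why main effects remain estimable from half the traffic. The cost is the aliasing shown in the last line.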

Case Studies in Optimization: Real-World Applications

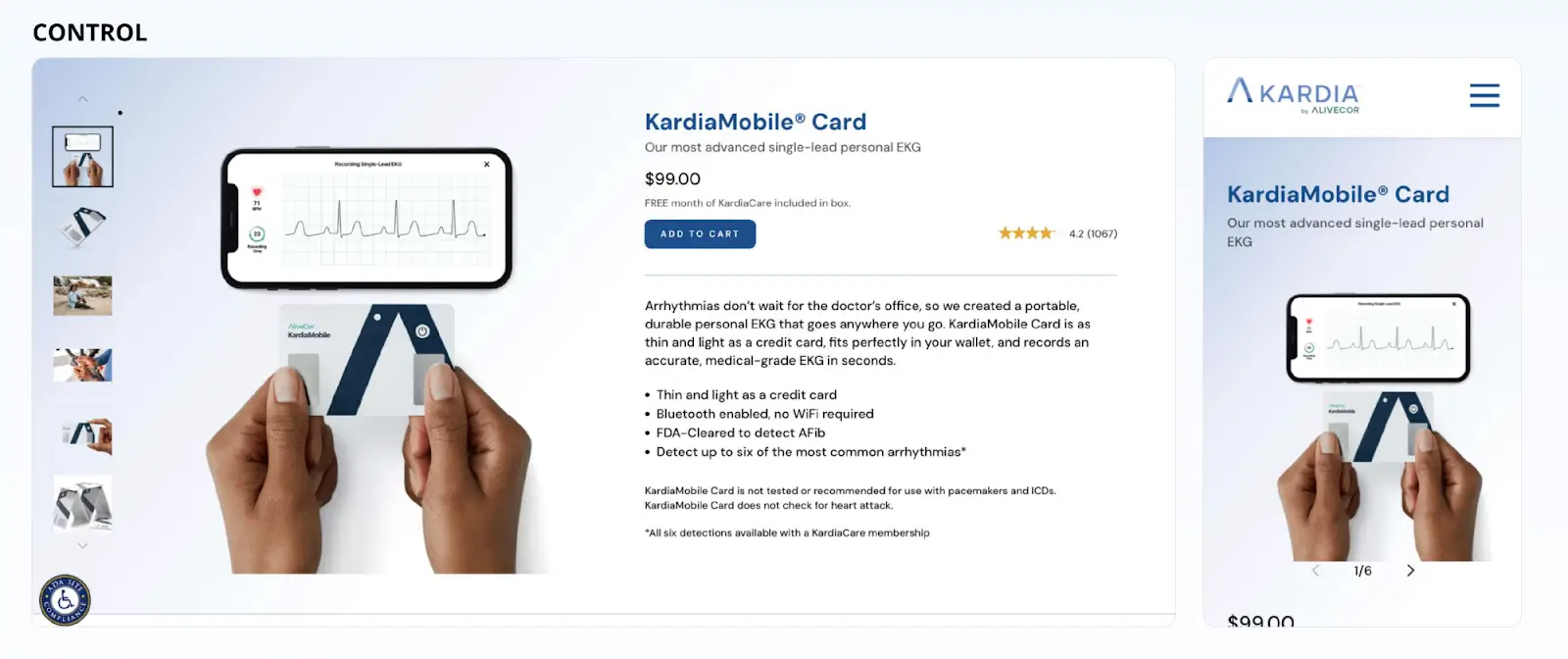

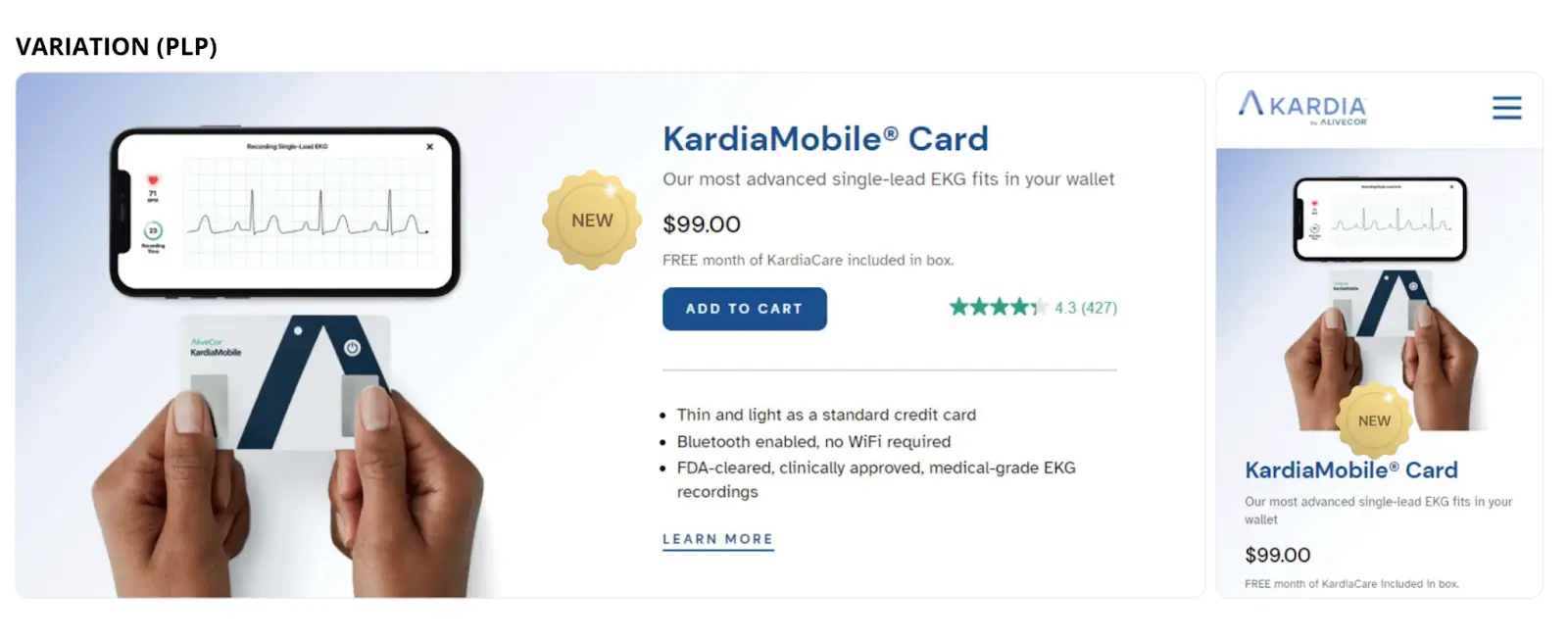

The efficacy of these testing methodologies is best illustrated through documented industry case studies. One notable example involves AliveCor, a medical technology company. During the launch of its KardiaMobile Card, the company faced the challenge of promoting a new product without cannibalizing the sales of its existing portfolio.

Based on the hypothesis that users interact more with highlighted elements, AliveCor conducted an experiment testing the placement of a "New" badge on product detail and listing pages across both desktop and mobile platforms. The experiment utilized different badge sizes and positions. The results were transformative: the winning variation, which featured a prominent "New" badge, resulted in a 25.17% increase in conversion rates and a 29.58% increase in revenue per user. By validating the visual hierarchy through testing, AliveCor successfully integrated a new product line while maximizing overall profitability.

Similarly, Groove, a customer support management platform, utilized testing to overhaul its landing page strategy. The company aimed to move away from feature-heavy descriptions toward a "copy-first" narrative that emphasized user benefits. By testing various combinations of headlines and storytelling sequences, Groove increased its conversion rate from 2.3% to 4.3%. This gain of 2.0 percentage points, a relative improvement of nearly 87%, underscores the impact of aligning messaging and layout through rigorous experimentation.
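The relative improvement quoted for Groove follows from the two conversion rates alone. A minimal sketch of the arithmetic, using a hypothetical helper named `relative_lift`:

```python
def relative_lift(before, after):
    """Relative change from `before` to `after`, as a fraction of `before`."""
    return (after - before) / before


# Conversion rates reported in the Groove example, in percent.
lift = relative_lift(2.3, 4.3)
absolute_gain = round(4.3 - 2.3, 1)  # percentage points

print(f"{lift:.1%} relative lift, {absolute_gain} percentage points")
```

Reporting both figures avoids ambiguity, since "an 87% improvement" and "a 2-point improvement" describe the same result.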

Navigating the Challenges of Multivariate Testing

Despite its analytical depth, multivariate testing is not a universal solution. The "traffic hurdle" remains its most significant limitation. Because the audience must be split among numerous variations, an MVT experiment with 16 variations needs roughly eight times as many visitors as an A/B test with two variations to collect the same amount of data per variation and reach a comparable level of statistical confidence. For small businesses or niche websites with limited daily sessions, an MVT could take months to yield actionable results, during which time external factors (such as seasonality or market shifts) might skew the data.
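The traffic hurdle can be estimated before a test starts. The sketch below uses the textbook normal-approximation sample size for comparing two conversion rates. The baseline rate, target rate, significance level, and power are assumptions chosen for illustration, and the estimate ignores any correction for comparing many variations at once, which would raise the requirement further.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variation(baseline, target, alpha=0.05, power=0.80):
    """Approximate visitors needed per variation to detect baseline -> target."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)
    variance = baseline * (1 - baseline) + target * (1 - target)
    return ceil((z_alpha + z_power) ** 2 * variance / (target - baseline) ** 2)


def total_visitors(variations, baseline, target, **kwargs):
    """Total traffic when every variation needs the same sample size."""
    return variations * visitors_per_variation(baseline, target, **kwargs)


# Assumed scenario: 5% baseline conversion rate, hoping to detect a move to 6%.
ab_total = total_visitors(2, 0.05, 0.06)
mvt_total = total_visitors(16, 0.05, 0.06)
print(ab_total, mvt_total, mvt_total / ab_total)
```

Dividing the total by a site's daily sessions gives a rough test duration, which is a quick way to judge whether MVT is practical for a given page.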

Furthermore, there is the risk of "interference," where variables are too closely linked, making it difficult to isolate which change actually drove the behavior. For these reasons, industry experts often recommend a hybrid approach: using A/B testing for major structural changes and MVT for the final stages of page refinement.

The Role of Specialized Optimization Platforms

As the technical demands of experimentation grow, the role of specialized software like Instapage has become increasingly vital. These platforms simplify the process of setting up, naming, and deploying experiments. By providing tools that allow marketers to easily create variations, set traffic splits, and define hypotheses, platforms like Instapage lower the barrier to entry for sophisticated testing.

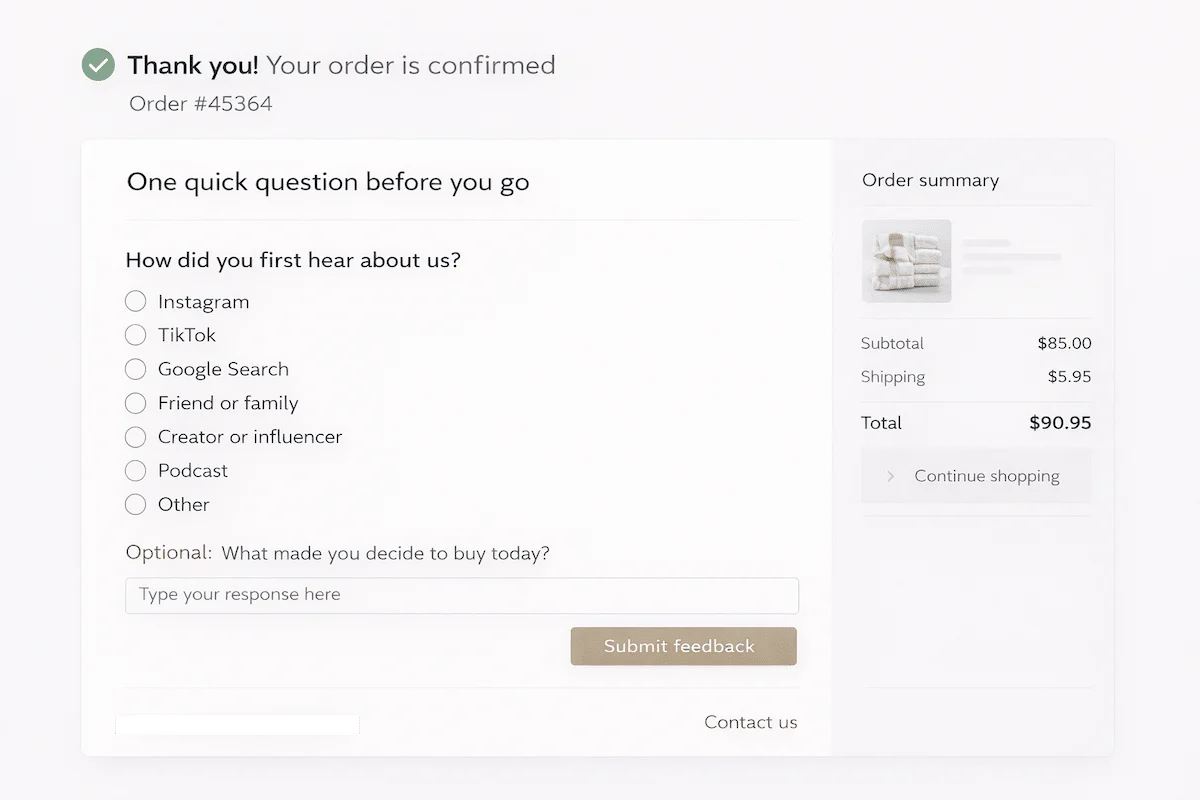

The standard workflow for such experiments typically involves:

- Hypothesis Formation: Defining exactly what is being tested and why (e.g., "Changing the CTA to green will increase clicks by 5%").

- Variation Creation: Using a visual builder to modify page elements.

- Traffic Allocation: Determining what percentage of visitors will see each variation.

- Data Analysis: Monitoring real-time performance metrics and declaring a winner only once the results reach statistical significance.
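The traffic allocation and data analysis steps above can be sketched in plain Python. This is not Instapage's implementation or API. The function names, the hash-based bucketing, and the pooled two-proportion z-test are assumptions chosen as one common way to do each step.

```python
import hashlib
from math import sqrt
from statistics import NormalDist


def assign_variation(user_id, experiment, weights):
    """Deterministically map a visitor to a variation according to traffic weights."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    point = int(digest[:8], 16) / 0x100000000  # roughly uniform in [0, 1)
    total = sum(weights.values())
    cumulative = 0.0
    for name, weight in weights.items():
        cumulative += weight / total
        if point < cumulative:
            return name
    return name  # guards against floating-point rounding in the last bucket


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical experiment: 50/50 split between a control and a green CTA.
weights = {"control": 50, "green_cta": 50}
variation = assign_variation("visitor-123", "cta-color-test", weights)

# Invented results: 500 of 10,000 visitors convert on control, 600 of 10,000 on the variant.
p_value = two_proportion_p_value(500, 10_000, 600, 10_000)
print(variation, round(p_value, 4))
```

Hashing the visitor ID together with the experiment name keeps each visitor on the same variation across sessions without storing any assignment state.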

Broader Implications and the Future of Digital Testing

The shift toward multivariate testing signals a broader trend in the digital economy: the professionalization of user experience. As the cost of customer acquisition continues to rise, businesses can no longer afford to leave conversion rates to chance. The ability to systematically dissect and optimize every pixel of a landing page provides a competitive advantage that is difficult to replicate.

Looking ahead, the integration of Artificial Intelligence (AI) and Machine Learning (ML) is expected to further evolve multivariate testing. "Multi-armed bandit" testing models use adaptive algorithms to automatically shift traffic toward better-performing variations in real time, minimizing the "loss" associated with showing users underperforming page versions during a test.
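The traffic-shifting behavior of a multi-armed bandit can be simulated with Thompson sampling, one common bandit strategy. It is used here as an illustration, not as a description of any specific product. The true conversion rates are invented, and a real system would observe conversions rather than simulate them.

```python
import random


def thompson_sampling(true_rates, rounds, seed=0):
    """Simulate Thompson sampling and return how often each variation was shown."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    pulls = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw a plausible conversion rate for each variation from its Beta posterior.
        samples = [rng.betavariate(s + 1, f + 1) for s, f in zip(successes, failures)]
        arm = samples.index(max(samples))  # show the variation with the best draw
        pulls[arm] += 1
        # Simulated visitor: converts with the variation's (hidden) true rate.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return pulls


# Invented true conversion rates for three page variations.
pulls = thompson_sampling([0.03, 0.05, 0.12], rounds=5000)
print(pulls)
```

Early on, the variations are shown at similar rates because little is known about any of them. As evidence accumulates, most traffic flows to the strongest variation, which is the reduction in "loss" described above.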

In conclusion, while A/B testing remains a foundational tool for digital marketers, multivariate testing offers the precision required for high-level optimization. By understanding the interactions between various page elements, organizations can move beyond surface-level changes and develop a deep, data-backed understanding of their customers’ preferences. For any brand operating in a competitive digital space, the adoption of these rigorous testing methodologies is no longer optional—it is the engine of sustainable growth.