The global artificial intelligence landscape has reached a new milestone with the release of GLM-5.1, the latest flagship large language model from Z.ai. Positioned as a direct competitor to the industry’s most advanced systems, GLM-5.1 integrates a sophisticated Mixture-of-Experts (MoE) architecture with a 100-billion-parameter scale to deliver what the company describes as a "generational leap" in reasoning, coding, and autonomous agent capabilities. By optimizing for both operational efficiency and deep logical processing, Z.ai aims to bridge the gap between high-performance proprietary models and the accessibility of open-source frameworks.

The launch comes at a critical juncture in the AI development cycle, as the focus shifts from sheer parameter count to the refinement of "agentic" workflows: systems capable of executing complex, multi-step tasks with minimal human intervention. GLM-5.1 addresses this demand with a hybrid attention mechanism and an optimized decoding pipeline, allowing it to handle long-context documents and intricate programming assignments with a precision that, according to Z.ai’s benchmarks, rivals and in some cases exceeds that of OpenAI’s GPT-5.4 and Anthropic’s Claude Opus 4.6.

The Evolution of the GLM Series: A Chronological Context

The development of the GLM (General Language Model) series has been a cornerstone of Z.ai’s strategy to establish a dominant presence in the international AI market. The journey began with early iterations focused on bilingual capabilities and efficient fine-tuning. However, it was the transition to the GLM-4 architecture that first signaled the company’s intent to compete on a global scale.

In early 2024, GLM-4 introduced significant improvements in context-window handling and tool use. GLM-5 followed, with a focus on reasoning speed. GLM-5.1 is the product of a fast refinement cycle that incorporated feedback from enterprise developers and researchers. More than any of its predecessors, it is built specifically to support "agent-based" systems, reflecting a broader industry trend toward AI that can use software tools, browse the web, and execute code to solve real-world problems.

Architectural Innovations: The Mixture-of-Experts Advantage

At the heart of GLM-5.1 is its Mixture-of-Experts (MoE) framework. In a dense model, every parameter is used for every token; in an MoE model, a learned router sends each token to a small subset of specialized "expert" sub-networks, so only a fraction of the 100 billion parameters is active at any step. This lets the model retain the capacity of a very large system while running with the speed and cost-efficiency of a much smaller one.
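
The routing idea can be illustrated with a toy, pure-Python sketch of top-k gating, a common MoE design assumed here for illustration. The article does not give Z.ai's actual router, expert count, or value of k; `moe_forward` and the scaling "experts" below are hypothetical stand-ins for real feed-forward sub-networks.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    token: Vector,
    router_weights: Sequence[Vector],
    experts: Sequence[Expert],
    k: int = 2,
) -> Tuple[Vector, List[int]]:
    """Send one token to its top-k experts; return the gated mix and the chosen expert ids."""
    # Router logits: one score per expert (dot product with that expert's router vector).
    scores = [sum(t * w for t, w in zip(token, wv)) for wv in router_weights]
    # Keep the k best-scoring experts; the remaining experts never run for this token.
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Gate weights are a softmax over the selected experts' scores only.
    gates = softmax([scores[i] for i in top])
    out = [0.0] * len(token)
    for gate, i in zip(gates, top):
        y = experts[i](token)
        out = [o + gate * v for o, v in zip(out, y)]
    return out, top


# Toy usage: expert i scales its input by (i + 1) and records that it ran.
calls: List[int] = []


def make_expert(i: int) -> Expert:
    def expert(v: Vector) -> Vector:
        calls.append(i)
        return [(i + 1) * x for x in v]
    return expert


experts = [make_expert(i) for i in range(4)]
router = [[0.1, 0.0], [2.0, 0.0], [0.5, 0.0], [3.0, 0.0]]
out, top = moe_forward([1.0, 0.0], router, experts, k=2)
print(top, calls)  # [3, 1] [3, 1]
```

Only the two selected experts execute, which is where the efficiency comes from: compute per token grows with k rather than with the total number of experts.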

Key components of the GLM-5.1 architecture include:

- Hybrid Attention Mechanism: This system balances global and local attention, allowing the model to maintain focus on specific details within a document while understanding the broader context. This is particularly effective for long-form document analysis and complex codebases.

- Multi-Token Prediction: By predicting multiple future tokens simultaneously rather than one at a time, GLM-5.1 significantly increases its inference speed, reducing latency for end-users.

- Enhanced Decoding Pipeline: The optimized pipeline lets the model handle context windows that extend far beyond those of previous versions, making it suitable for analyzing lengthy legal corpora or complete technical manuals in a single prompt.
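
The article does not specify how GLM-5.1 combines global and local attention. One widely used pattern, in the spirit of Longformer, pairs a causal sliding window with a few "global" positions that every token can see. The minimal sketch below builds such a mask; `hybrid_causal_mask`, the window size, and the choice of global positions are illustrative assumptions, not GLM-5.1's actual design.

```python
from typing import List, Set


def hybrid_causal_mask(n: int, window: int, global_positions: Set[int]) -> List[List[bool]]:
    """mask[i][j] is True when query position i may attend to key position j."""
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):  # causal: only keys at or before the query
            local = i - j < window  # sliding-window (local) attention
            global_link = j in global_positions or i in global_positions  # global tokens
            mask[i][j] = local or global_link
    return mask


mask = hybrid_causal_mask(6, window=2, global_positions={0})
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# x.....
# xx....
# xxx...
# x.xx..
# x..xx.
# x...xx
```

Apart from the rows of the global tokens themselves, each row allows at most `window + len(global_positions)` keys, so attention cost grows roughly linearly with sequence length instead of quadratically, while position 0 keeps a document-wide summary reachable from every token.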

These architectural choices enable GLM-5.1 to provide a high level of performance without the prohibitive computational costs typically associated with models of this scale.
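
How GLM-5.1 turns multi-token prediction into lower latency is not described in the article. A common approach, assumed in this sketch, treats the extra predicted tokens as a draft that the main model verifies, keeping the longest prefix that matches its own greedy choices (a form of speculative decoding). `verify_draft` and the toy `counting_model` are hypothetical names.

```python
from typing import Callable, List, Sequence

Token = int
NextToken = Callable[[Sequence[Token]], Token]


def verify_draft(prefix: Sequence[Token], draft: Sequence[Token], target_next: NextToken) -> List[Token]:
    """Greedy draft verification: return the tokens accepted in one decoding step."""
    accepted: List[Token] = []
    context = list(prefix)
    for tok in draft:
        expected = target_next(context)  # the main model's own greedy choice here
        if tok != expected:
            accepted.append(expected)  # first mismatch: take the main model's token and stop
            return accepted
        accepted.append(tok)
        context.append(tok)
    accepted.append(target_next(context))  # every draft token matched: one bonus token
    return accepted


# Toy "main model" that always predicts the previous token + 1 (mod 10).
def counting_model(ctx: Sequence[Token]) -> Token:
    return (ctx[-1] + 1) % 10


print(verify_draft([1, 2], [3, 4, 9], counting_model))  # [3, 4, 5]
```

Real implementations score all draft positions in a single parallel forward pass, which is where the speed-up comes from; the loop here is sequential only for readability. Each step yields at least one token, and with greedy verification the output is identical to ordinary one-token-at-a-time greedy decoding.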

Benchmark Analysis: Outperforming the Industry Leaders

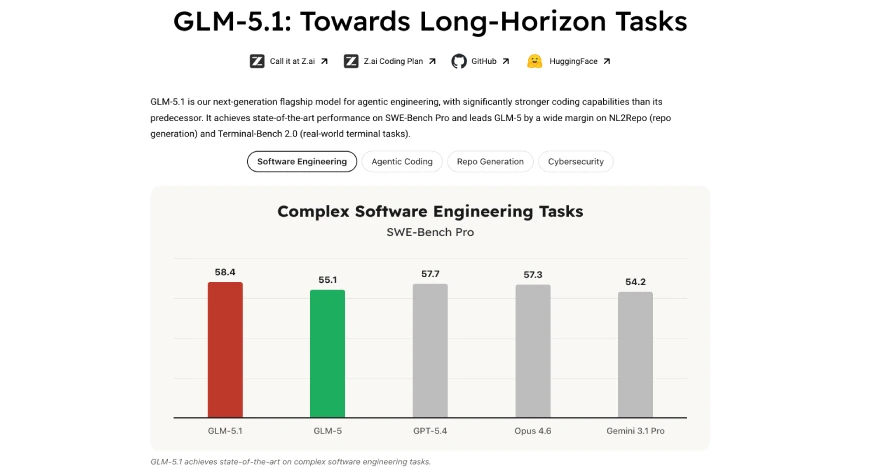

Z.ai has released comprehensive benchmark data to validate the performance claims of GLM-5.1. The results indicate that the model is particularly potent in technical and academic domains.

Coding Proficiency

In the SWE-Bench Pro assessment, which evaluates a model’s ability to resolve real-world software engineering issues, GLM-5.1 achieved a score of 58.4, placing it ahead of GPT-5.4 (57.7) and Claude Opus 4.6 (57.3). Across a broader spectrum of coding tests, including Terminal-Bench 2.0 and CyberGym, GLM-5.1 consistently scored above 55, securing a top-three global ranking. It also marks a significant improvement over the standard GLM-5, which scored 48.3: measured against the 58.4 SWE-Bench Pro result, that is a gain of 10.1 points, or roughly 21% in relative terms.

Academic and Mathematical Reasoning

The model’s reasoning capabilities were tested using the AIME (American Invitational Mathematics Examination) and GPQA (Graduate-Level Google-Proof Q&A) benchmarks. GLM-5.1 recorded a 95.3% success rate on AIME and 86.2% on GPQA. Although it trails GPT-5.4’s peak scores in certain sub-categories, the results indicate that GLM-5.1 has the reasoning strength needed for demanding work such as advanced scientific research and complex financial modeling.

Agentic Workflow Durability

Perhaps the most impressive data point involves the model’s performance on the VectorDBBench optimization task, where GLM-5.1 successfully executed 655 iterations involving more than 6,000 tool function calls. In stress tests that went past 1,000 tool calls, the model stayed on track without crashing or showing significant degradation in its logic. This durability is essential for autonomous agents tasked with long-term projects, such as continuous software debugging or automated market research.

Practical Application: A Deep Dive into Logic and Code

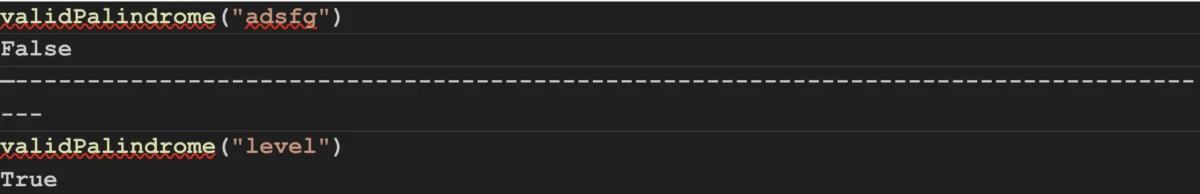

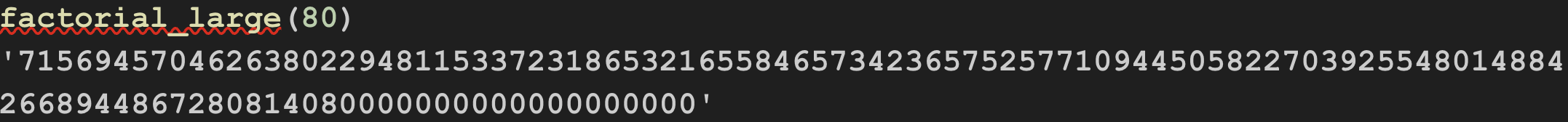

To demonstrate the practical utility of GLM-5.1, Z.ai highlighted its performance in two distinct programming tasks. These examples serve as a litmus test for the model’s ability to translate natural language instructions into optimized, executable code.

In the first task, the model was asked to write Python code to compute the factorial of a large number. GLM-5.1 produced an iterative solution that stores the result as an array of individual digits, a technique that sidesteps the overflow limits of fixed-width integer types in languages such as C or Java (Python’s built-in integers are already arbitrary-precision). Technical reviewers noted that while the code was highly efficient and used a two-pointer technique to minimize resource overhead, it prioritized algorithmic speed over production-level documentation. This suggests the model is currently tuned for fast problem solving and rapid prototyping.
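
The digit-array technique can be sketched as follows. This is an illustrative reconstruction, not GLM-5.1's actual output: `factorial_digits` is a hypothetical name, and the digits are stored least-significant first so that carries propagate toward the end of the list.

```python
import math
from typing import List


def factorial_digits(n: int) -> str:
    """Compute n! as a decimal string using a little-endian array of digits."""
    if n < 0:
        raise ValueError("n must be non-negative")
    digits: List[int] = [1]  # digits[0] is the least significant digit
    for multiplier in range(2, n + 1):
        carry = 0
        # Schoolbook multiplication of the whole digit array by `multiplier`.
        for idx in range(len(digits)):
            product = digits[idx] * multiplier + carry
            digits[idx] = product % 10
            carry = product // 10
        # Any leftover carry becomes new, more significant digits.
        while carry:
            digits.append(carry % 10)
            carry //= 10
    # Reverse to most-significant-first for display.
    return "".join(str(d) for d in reversed(digits))


print(factorial_digits(25) == str(math.factorial(25)))  # True
```

In Python itself, `math.factorial` (or plain `int` arithmetic) already handles arbitrarily large results, so this pattern matters mainly when porting to languages with fixed-width integers; `math.factorial` is a convenient cross-check.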

The second task was a string-manipulation problem: determining whether a string can become a palindrome after deleting at most one character. GLM-5.1 used a nested helper function, the standard O(n) approach to this problem: scan inward from both ends and, at the first mismatch, use the helper to test whether skipping either of the two mismatched characters leaves a palindrome. The model’s ability to recognize and implement this efficient path, rather than a brute-force method that tries every possible deletion in O(n²) time, underscores its grasp of core computer science principles.
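
A sketch of the standard approach, written independently for illustration (it is not the model's verbatim output; `valid_palindrome` and `is_palindrome` are hypothetical names):

```python
def valid_palindrome(s: str) -> bool:
    """Return True if s can become a palindrome by deleting at most one character."""

    def is_palindrome(lo: int, hi: int) -> bool:
        # Nested helper: two-pointer check of s[lo..hi], inclusive.
        while lo < hi:
            if s[lo] != s[hi]:
                return False
            lo += 1
            hi -= 1
        return True

    lo, hi = 0, len(s) - 1
    while lo < hi:
        if s[lo] != s[hi]:
            # One deletion allowed: skip either the left or the right character.
            return is_palindrome(lo + 1, hi) or is_palindrome(lo, hi - 1)
        lo += 1
        hi -= 1
    return True


print(valid_palindrome("abca"))  # True
print(valid_palindrome("abc"))   # False
```

The outer scan and the single helper call each touch every character at most once, giving O(n) time and O(1) extra space; a brute-force version that rebuilds the string for each possible deletion needs O(n²) time.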

Accessibility and the Open-Source Strategy

In a move that distinguishes Z.ai from many of its Western counterparts, the company has released the complete model weights for GLM-5.1 under the MIT license. This open-source approach allows developers to host the model on their own infrastructure, fine-tune it for specific industrial applications, and integrate it into private ecosystems without the privacy concerns associated with cloud-only APIs.

For those requiring managed services, Z.ai provides a commercial API with competitive pricing. The model is also accessible via the Hugging Face platform, ensuring that the global research community can experiment with its capabilities. By offering both open weights and a low-cost API, Z.ai is positioning GLM-5.1 as the "workhorse" model for the next generation of AI startups.

Broader Impact and Market Implications

The introduction of GLM-5.1 has significant implications for the global AI market. First, it challenges the perceived monopoly of Silicon Valley firms over "frontier-class" models. The ability of Z.ai to produce a model that outpaces GPT-5.4 and Claude Opus 4.6 on specific coding benchmarks, and comes close to them in mathematical and scientific reasoning, suggests that the technological gap between leading AI labs is closing.

Second, the focus on "agentic" features signals a shift in the AI value proposition. The industry is moving away from "chatbots" that simply talk and toward "agents" that do. GLM-5.1’s ability to handle thousands of tool calls makes it a prime candidate for automating complex back-office operations, software development lifecycles, and scientific data analysis.

Finally, the release emphasizes the growing importance of the Mixture-of-Experts architecture. As the environmental and financial costs of training massive dense models become a concern, the efficiency of MoE systems like GLM-5.1 offers a sustainable path forward for the industry.

Conclusion: A Foundation for the Future of Autonomous AI

GLM-5.1 stands as a testament to the rapid evolution of large language models, successfully combining massive scale with operational agility. By excelling in long-term planning, code generation, and multi-turn logical reasoning, it provides the necessary infrastructure for the next wave of AI development: autonomous agent-based systems.

As developers begin to integrate GLM-5.1 into their workflows, the model’s impact will likely be felt most in the fields of software engineering and research automation. Its routing systems, hybrid attention mechanisms, and commitment to open-source accessibility set a new standard for what a flagship AI model can—and should—be. In the burgeoning era of agentic AI, GLM-5.1 is not just a tool for conversation, but a robust engine for execution and discovery.