In the competitive landscape of digital optimization, marketing technology vendors frequently use "lightweight" script sizes as a primary selling point. Claims of snippets as small as 2.8 KB or 13 KB are often used to persuade performance-conscious developers and SEO specialists that a tool will have a negligible impact on page load speed. However, a comprehensive investigation into the production environments of leading A/B testing platforms reveals a significant discrepancy between these advertised "stub" sizes and the actual payload required to execute experiments. The data suggests that the initial script installed on a website is often merely a loader, and that the true execution footprint, meaning the code that actually modifies the user experience, arrives later and can exceed 280 KB.

The investigation into these performance metrics was prompted by a growing concern within the conversion rate optimization (CRO) industry regarding the "flicker effect" and the degradation of Core Web Vitals. As search engines like Google increasingly prioritize user experience metrics such as Largest Contentful Paint (LCP) and Cumulative Layout Shift (CLS), the overhead of third-party scripts has come under intense scrutiny. To address this, researchers conducted a multi-stage analysis of several prominent tools, including Convert, VWO, ABlyft, Mida.so, Webtrends Optimize, Visually.io, Fibr.ai, and Amplitude Experiment.

The Methodology of Script Measurement

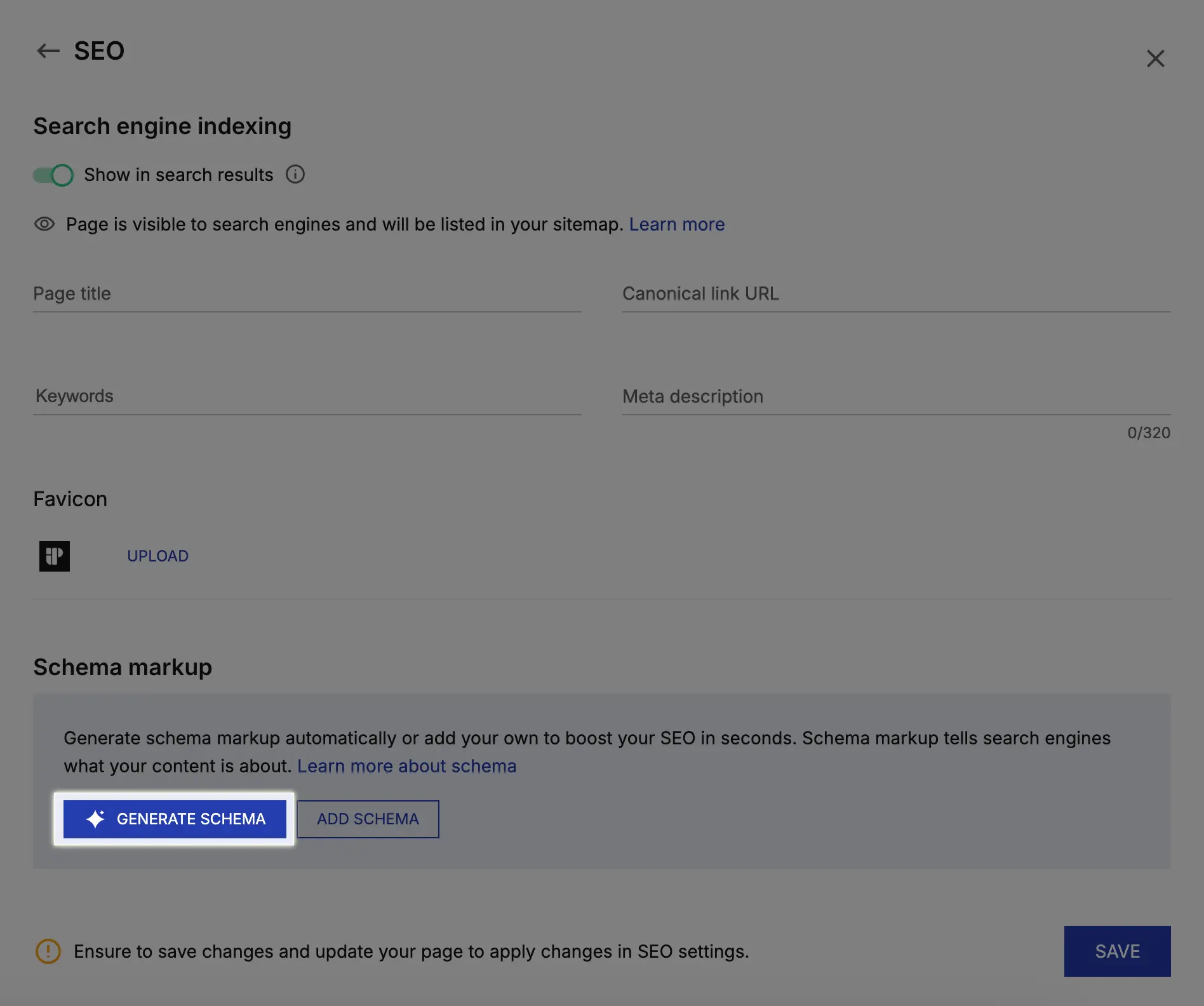

To move beyond marketing claims and capture the reality of script execution, the study employed a rigorous six-step methodology. First, investigators performed direct measurements from production environments, capturing tracking scripts from live customer sites using "curl" commands to record both gzipped transfer sizes and uncompressed payloads. This provided a baseline of what is actually delivered to the end-user’s browser.
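The study's exact curl invocations are not published. As a rough local analogue, assuming a script body has already been saved from a live site, Python's standard `gzip` module can report both the uncompressed and gzipped sizes. The compression level here is an assumption: a CDN may use a different gzip level or Brotli, so the second number approximates, rather than reproduces, the transfer size seen on the wire.

```python
import gzip


def payload_sizes(body: bytes, level: int = 9) -> tuple[int, int]:
    """Return (uncompressed_bytes, gzipped_bytes) for a script body.

    The gzip level is an assumption: production servers may compress
    with a different level or with Brotli, so the second value only
    approximates the real transfer size.
    """
    return len(body), len(gzip.compress(body, compresslevel=level))
```

For a script saved as, say, `vendor-sdk.js` (a hypothetical filename), `payload_sizes(open("vendor-sdk.js", "rb").read())` gives the two figures the methodology compares.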

The second phase involved a code-level analysis of script execution. Researchers looked for "progressive injection" patterns, where a small initial script triggers the download of additional libraries or variation logic at runtime. This was followed by a network waterfall analysis using Browser DevTools to capture secondary scripts, configuration files, and dynamically injected resources. By tracing the full execution path, the study was able to define the "total payload" rather than just the initial entry point.
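Defining the "total payload" amounts to attributing every dynamically injected resource back to the snippet that started the chain. The sketch below assumes a simplified, hypothetical record per waterfall row (URL, the URL of the script that initiated it, and transfer bytes), loosely modeled on what DevTools displays; it is not the study's tooling.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Resource:
    """One row of a network waterfall (hypothetical, simplified shape)."""
    url: str
    initiator: Optional[str]  # URL of the script that requested it, or None
    transfer_bytes: int


def total_payload(resources: list[Resource], entry_url: str) -> int:
    """Sum transfer bytes for the entry script and everything it injected.

    Walks initiator links breadth-first, so a loader that fetches an SDK,
    which in turn fetches config and variation code, is counted in full.
    """
    children: dict[str, list[Resource]] = defaultdict(list)
    by_url: dict[str, Resource] = {}
    for r in resources:
        by_url[r.url] = r
        if r.initiator is not None:
            children[r.initiator].append(r)

    total = 0
    seen: set[str] = set()
    queue = deque([entry_url])
    while queue:
        url = queue.popleft()
        if url in seen or url not in by_url:
            continue
        seen.add(url)
        total += by_url[url].transfer_bytes
        queue.extend(child.url for child in children[url])
    return total
```

Counting only the entry row would report the loader alone, which is exactly the gap between "stub" size and total payload that the investigation set out to measure.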

Finally, the findings were categorized by architectural type and validated against vendor documentation and third-party benchmarks, such as the Mida.so benchmark. This allowed for a direct comparison between what vendors report in their marketing materials and what is observed in a live, multi-experiment environment.

Chronology of the A/B Testing Performance Debate

The tension between A/B testing functionality and site performance is not a new phenomenon, but it has evolved through distinct phases over the last decade.

In the early 2010s, most A/B testing tools relied on heavy, synchronous JavaScript libraries. While these were effective at preventing the "flicker" (where a user briefly sees the original page before the variation loads), they significantly slowed down the initial rendering of the page. By 2015, the industry began shifting toward asynchronous loading to improve perceived performance, though this introduced the risk of layout shifts and flashing content.

The 2020 announcement of Google’s Core Web Vitals served as a major turning point. Performance was no longer just a UX concern; it was becoming a search ranking signal. In response, many vendors began marketing "micro-snippets" or "stubs." This period saw the rise of claims in the 2 KB to 20 KB range, which focused exclusively on the initial loader. The current era, as highlighted by this latest investigation, is defined by a push for transparency regarding the "total execution footprint," as technical teams realize that deferred payloads still consume bandwidth and CPU cycles.

Analysis of Findings: Advertised vs. Measured Data

The investigation revealed a stark contrast in how different platforms handle their script delivery. The data suggests that the smaller the advertised snippet, the more likely the platform is to defer its actual payload to subsequent requests.

For example, VWO advertises a 2.8 KB "stub." However, direct measurements of the base SDK in production environments showed a minimum of 14.7 KB gzipped, roughly 5.25 times the advertised size. When accounting for the total payload required to run experiments, the size can balloon to approximately 254 KB. This discrepancy exists because the initial stub excludes the dynamically loaded library and the campaign-specific code needed to modify the page.

Similarly, ABlyft claims a 13 KB footprint, but the investigation found a ~32 KB gzipped SDK that decompresses to 168.5 KB. In live environments, the total footprint was observed to exceed 280 KB. Mida.so, which advertises a 17.2 KB script, was found to have a loader of approximately 19.5 KB and a base SDK of 30 to 40 KB; it uses a progressive injection model that hides the full cost of runtime configuration.
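The advertised-versus-measured gap reduces to a simple ratio. The sketch below uses only the figures reported above and deliberately compares the advertised size with the measured base SDK or loader, not with the total payload, because the findings do not state whether the 254 KB and 280 KB totals are compressed.

```python
# Figures in KB, taken from the findings above. "Measured" is the gzipped
# base SDK or loader as reported, not the total execution payload.
ADVERTISED_VS_MEASURED = {
    "VWO": (2.8, 14.7),       # advertised stub vs measured base SDK
    "ABlyft": (13.0, 32.0),   # advertised footprint vs ~32 KB SDK
    "Mida.so": (17.2, 19.5),  # advertised size vs ~19.5 KB loader
}


def size_ratio(advertised_kb: float, measured_kb: float) -> float:
    """How many times the advertised size the measured script is."""
    if advertised_kb <= 0:
        raise ValueError("advertised size must be positive")
    return measured_kb / advertised_kb


for vendor, (adv, meas) in ADVERTISED_VS_MEASURED.items():
    print(f"{vendor}: {size_ratio(adv, meas):.2f}x the advertised size")
```

For VWO the ratio is 5.25; for ABlyft it is about 2.46 and for Mida.so about 1.13, before any deferred configuration or variation code is counted.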

In contrast, Convert takes a different architectural approach. Rather than using a lightweight stub, it delivers a single upfront bundle. Its baseline is higher at 93 KB gzipped, but the investigation found that it remains predictable: with three to five active experiments, the total payload increases only to roughly 95-110 KB. Because it does not rely on hidden runtime fetches, the performance impact is transparent and changes little as experiments are added.
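To put "predictable" in numbers, the growth over the 93 KB baseline can be expressed as a percentage. This sketch assumes the 95-110 KB range is measured on the same gzipped basis as the baseline, which the findings imply but do not state outright.

```python
BASELINE_KB = 93.0                   # Convert bundle, gzipped, as measured
WITH_EXPERIMENTS_KB = (95.0, 110.0)  # range observed with 3 to 5 experiments


def growth_pct(baseline_kb: float, total_kb: float) -> float:
    """Percentage increase of the total payload over the baseline bundle."""
    return (total_kb - baseline_kb) / baseline_kb * 100


low, high = (growth_pct(BASELINE_KB, t) for t in WITH_EXPERIMENTS_KB)
print(f"Payload grows {low:.1f}% to {high:.1f}% over the baseline")
```

The result, roughly 2% to 18%, is the bounded growth that makes a single-bundle footprint easier to budget than a chain of deferred requests.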

Architectural Trade-offs: Sync, Async, and Feature Flagging

The study identified three primary architectural models used by optimization platforms, each with distinct trade-offs for site performance and data reliability.

- Embedded Bundles: Used by platforms like Convert, this model includes all experiment logic, targeting, and variation code in a single initial payload. The primary benefit is predictability; there are no follow-up requests, which reduces the reliance on network timing and minimizes the risk of flicker. The trade-off is a larger initial download size compared to "stubs."

- Stub + API Configuration: Employed by VWO, Mida, and ABlyft, this model uses a tiny loader to fetch configurations and variations over time. While this results in a very low initial script size, it often leads to delayed execution. This delay can trigger the flicker effect, forcing developers to use "anti-flicker" snippets that temporarily hide page content, which can negatively impact LCP scores.

- Feature Flagging: Platforms like Amplitude Experiment operate differently, returning only variant decisions rather than DOM-modifying code. While this is the most lightweight approach, it is generally not comparable to traditional DOM-based A/B testing tools, as it requires more manual implementation by development teams.
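The "delayed execution" of the stub model follows from request dependencies: each fetch in the chain can only start once the previous one has returned, so their latencies add up. The sketch below models only that dependency; the durations are hypothetical and not measured values, and on a slow connection a single large bundle can still take longer than several small requests.

```python
from typing import Sequence


def time_to_variation_ms(chain_ms: Sequence[float]) -> float:
    """Earliest time variation code can run when each fetch must wait for
    the previous one (loader -> SDK -> config -> variation code).

    Assumes strictly sequential requests and ignores parse/execute time,
    so it is a lower bound, not a prediction.
    """
    if any(t < 0 for t in chain_ms):
        raise ValueError("durations must be non-negative")
    return float(sum(chain_ms))


# Hypothetical durations, for illustration only (not measured values).
embedded_bundle = [180.0]                 # one larger download, no follow-ups
stub_plus_api = [40.0, 90.0, 70.0, 60.0]  # loader, SDK, config, variations

print(time_to_variation_ms(embedded_bundle))  # 180.0
print(time_to_variation_ms(stub_plus_api))    # 260.0
```

A feature-flagging SDK would typically add only a decision request to this chain, since the variant rendering lives in the application's own code.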

Implications for Modern Web Development

The findings of this investigation suggest that "smallest script size" is an insufficient, and perhaps even misleading, metric for evaluating A/B testing tools. A small initial script that triggers a massive, deferred payload can be more detrimental to user experience than a slightly larger, transparent bundle.

From a technical perspective, the timing of execution is as critical as the size of the payload. If experiment logic is available early (as in the embedded bundle model), changes can be applied before the original content is first painted. If the logic arrives late via an API call, the browser may have already rendered the original content, leading to a jarring visual transition or necessitating "flicker prevention" scripts that hide the page and delay rendering. These delays are captured by performance monitoring tools and can worsen a site’s LCP and overall performance scores.
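Anti-flicker snippets generally hide the page until experiments report that they are ready, with a safety timeout so the page is not hidden indefinitely. The sketch below models that trade-off under those assumptions; the function name and numbers are hypothetical and do not describe any specific vendor's snippet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AntiFlickerOutcome:
    reveal_ms: float  # when the hidden page becomes visible again
    flicker: bool     # True if the timeout fired before variations applied


def anti_flicker(ready_ms: float, timeout_ms: float) -> AntiFlickerOutcome:
    """Model a hide-until-ready snippet with a safety timeout.

    The page is revealed when experiment code signals it is ready or when
    the timeout expires, whichever comes first. If the timeout wins, the
    original content is shown and later swapped: the flicker the snippet
    was meant to prevent.
    """
    reveal = min(ready_ms, timeout_ms)
    return AntiFlickerOutcome(reveal_ms=reveal, flicker=ready_ms > timeout_ms)


# Hypothetical numbers: experiments ready at 900 ms, 4000 ms safety timeout.
print(anti_flicker(ready_ms=900, timeout_ms=4000))
```

Either way, hidden content is not painted, so the largest element cannot be painted before `reveal_ms`; a late-arriving experiment payload therefore shows up directly as a later LCP.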

Furthermore, the "opaque" nature of deferred payloads makes it difficult for performance engineers to budget their site’s resources. When a tool’s impact is spread across multiple runtime requests and API calls, calculating the true cost to the user’s data plan and CPU becomes a complex task.
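One practical way to make deferred payloads visible is a per-vendor budget that sums every request attributed to a tool rather than just its first script. The sketch below is a minimal illustration; the `Request` record, vendor names, and budget values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    vendor: str          # hypothetical attribution of a request to a tool
    transfer_bytes: int


def over_budget(requests: list[Request], budgets: dict[str, int]) -> dict[str, int]:
    """Return vendors whose combined transfer bytes exceed their budget,
    mapped to the number of bytes by which they exceed it.

    Every request is summed per vendor, so deferred loads count as much
    as the initial snippet. Vendors without a budget entry are ignored.
    """
    totals: dict[str, int] = {}
    for req in requests:
        totals[req.vendor] = totals.get(req.vendor, 0) + req.transfer_bytes
    return {
        vendor: total - budgets[vendor]
        for vendor, total in totals.items()
        if vendor in budgets and total > budgets[vendor]
    }
```

A tool whose 3 KB snippet passes a glance review can still fail this check once its SDK and variation requests are added to the same total.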

Conclusion and Industry Outlook

The investigation concludes that the optimization industry is reaching a point of reckoning regarding performance transparency. As organizations become more sophisticated in their monitoring of Core Web Vitals, the marketing of "micro-snippets" is likely to face increased skepticism.

For decision-makers, the recommendation is to move beyond the kilobyte count of the initial snippet and instead ask three critical questions: What is the total amount of code required to run a set number of experiments? When does that code arrive in the browser? And how does the tool mitigate the flicker effect without sacrificing LCP or CLS?

The shift toward a more holistic view of script impact marks a maturing of the CRO field. By prioritizing predictable, transparent delivery over "stub" marketing, optimization teams can ensure that their efforts to improve conversion rates do not inadvertently undermine the very performance and SEO foundations upon which their websites are built. The data clearly shows that in the world of A/B testing, what you see in the initial snippet is rarely the whole story.