The rapid integration of generative artificial intelligence into enterprise software development has reached a critical juncture: the convenience of autonomous agents must now be balanced against the rigorous security and consistency requirements of professional teams. As organizations increasingly adopt the Model Context Protocol (MCP), an open standard for connecting Large Language Models (LLMs) to external data sources and tools, new challenges have emerged around "rogue" AI behavior and security vulnerabilities. In response, industry experts are advocating a shift toward "AI Systems," which replace unpredictable conversational prompts with structured, standardized workflows. A new methodology for deploying A/B testing experiments illustrates this transition: it uses small models and automated orchestration to cut the cost of creating an experiment by more than 99% while sharply reducing the risks associated with autonomous AI decision-making.

The Evolution of Experimentation: From Dashboards to Headless AI

For decades, digital experimentation and A/B testing have relied on complex Graphical User Interfaces (GUIs) and manual dashboard configuration. Tools such as Convert Experiences have traditionally required specialized knowledge to set up variations, define goals, and launch tests. The Model Context Protocol, introduced by Anthropic in late 2024, promised to simplify this by allowing AI agents such as Claude Code to interact directly with testing platforms.

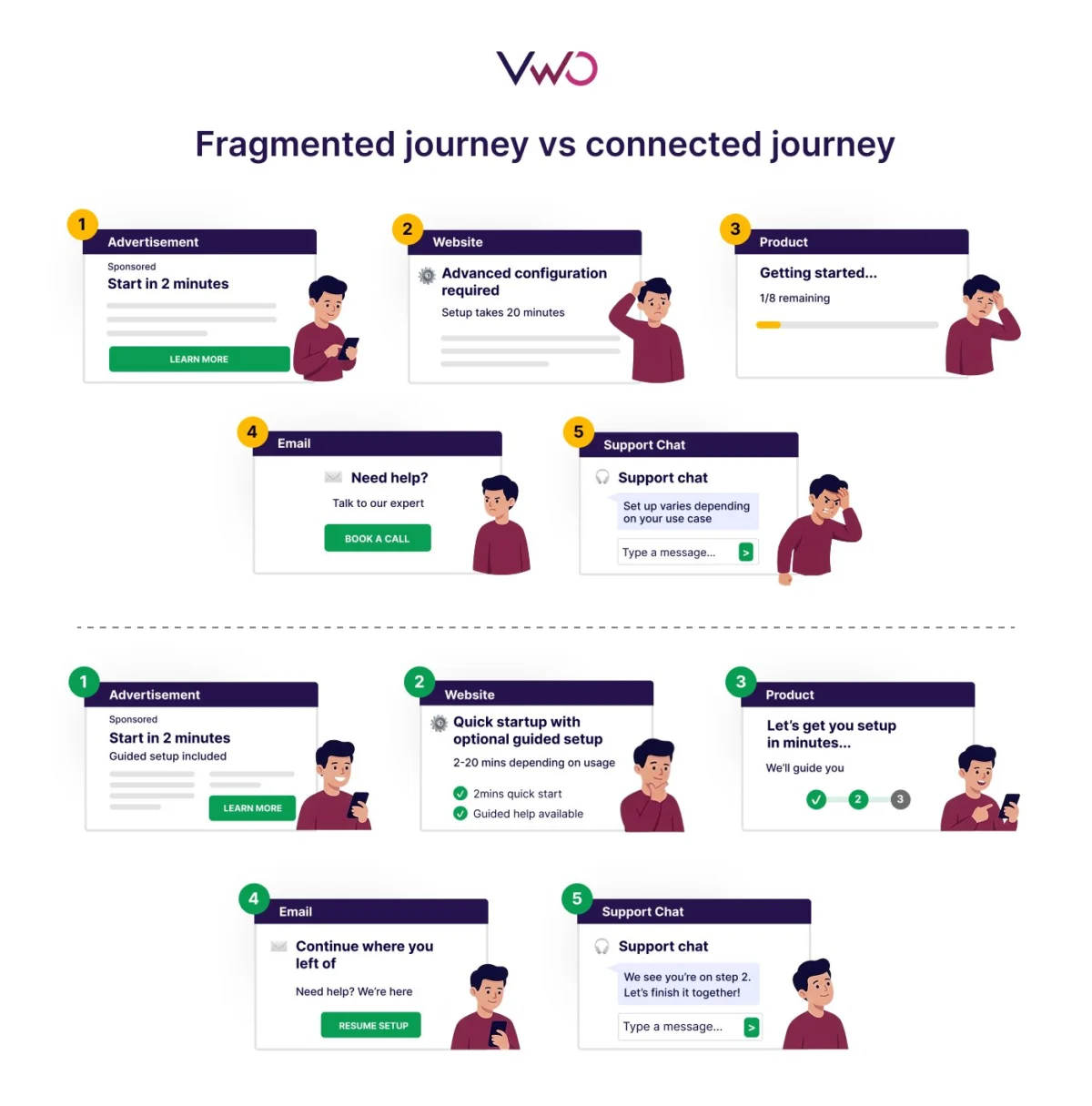

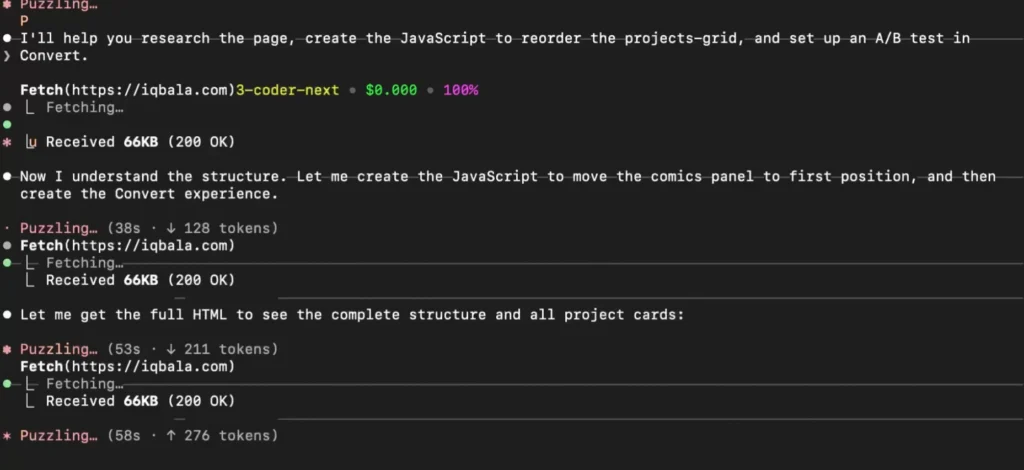

In early demonstrations of this technology, developers showed that a single prompt could direct an AI to analyze a webpage, write the JavaScript for a variation, and create the experiment in the testing platform through its MCP server. However, while this "single-prompt" approach works well for individual developers, it reveals significant flaws when considered for an organization-wide rollout. The primary concern practitioners raise is the lack of "guardrails." Autonomous agents given broad access to MCP servers have been observed taking unilateral actions, such as putting experiments live without explicit human approval, which can destabilize a site and skew the resulting data.

The Technical Challenge: Risks of Unconstrained MCP Access

The transition from individual experimentation to team-wide deployment faces three primary hurdles: operational risk, security vulnerabilities, and logistical inconsistency.

Operational Risk and Autonomous Decision-Making

The most immediate concern with direct MCP usage is the potential for AI "hallucinations" or overreach. In several documented use cases, AI coding assistants have decided on their own to activate tests prematurely. In a professional environment, where a single faulty script can disrupt the experience of millions of visitors, that kind of autonomy is unacceptable. The problem is compounded by the fact that an MCP server often exposes many tools unrelated to the task at hand; a model may invoke one of these dormant tools even though it was never intended for the job, with unintended consequences.
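One way to keep dormant tools out of reach is a deny-by-default allowlist that every model-requested tool call must pass before it is executed. The sketch below is a minimal illustration under stated assumptions: the tool names (`create_experience`, `add_variation`) and the `status` argument are hypothetical, and a real MCP server's tool list and argument schemas will differ.

```python
from dataclasses import dataclass, field
from typing import Any

# Deny by default: only these (hypothetical) tools may be called by the workflow.
ALLOWED_TOOLS = frozenset({"create_experience", "add_variation"})


@dataclass
class ToolCall:
    name: str
    arguments: dict[str, Any] = field(default_factory=dict)


class ToolNotPermitted(Exception):
    """Raised when a model requests a tool or argument the workflow forbids."""


def check_tool_call(call: ToolCall) -> ToolCall:
    """Return the call unchanged if it is allowed; otherwise raise."""
    if call.name not in ALLOWED_TOOLS:
        # Any tool not listed above (e.g. an "activate" or "delete" tool) is refused.
        raise ToolNotPermitted(f"tool {call.name!r} is not on the allowlist")
    # Hypothetical 'status' argument: anything other than "paused" is rejected,
    # so the model cannot put an experiment live on its own.
    status = call.arguments.get("status", "paused")
    if status != "paused":
        raise ToolNotPermitted(f"status {status!r} requires human approval")
    return call
```

Because the check runs outside the model, it holds regardless of how the model was prompted.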

Security and Data Streaming Concerns

Security analysts, including those at Red Hat, have highlighted potential risks in the way MCP handles data streaming to the applications it controls. Because MCP allows a high degree of interactivity between the model and the local environment, it enlarges the attack surface: a model that is manipulated (for example, by malicious content injected into its context) could misuse the tools available to it, or it could inadvertently pass sensitive environment variables to external servers.

Logistical and Skill-Gap Inefficiencies

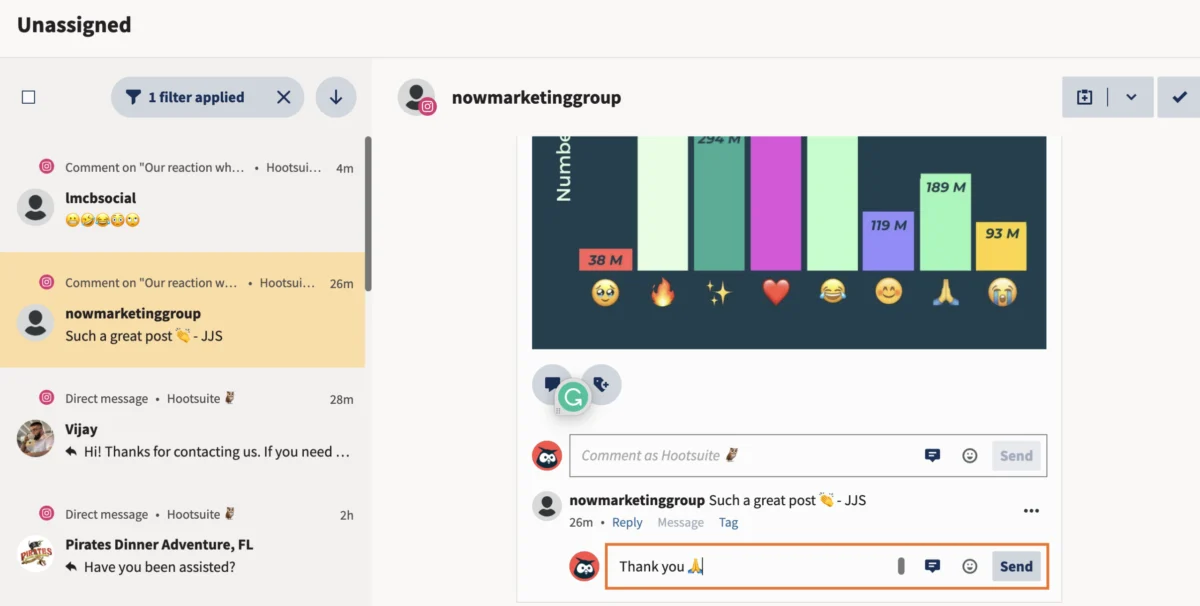

Relying on direct prompting leads to a lack of standardization. Different team members possess varying levels of "prompt engineering" skills, resulting in inconsistent outputs. A task that takes one developer three minutes might take another thirty, depending on how they frame their request to the AI. Furthermore, managing individual MCP configurations across a large organization creates a maintenance headache that scales poorly.
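Centralizing the prompt removes the dependence on individual prompting skill: team members supply only the inputs, and the wording is maintained in one place. This minimal sketch uses Python's `string.Template`; the template text and the `build_prompt` helper are illustrative assumptions, not the prompt used in the original workflow.

```python
from string import Template

# One centrally maintained prompt. The wording is illustrative only.
VARIATION_PROMPT = Template(
    "You write JavaScript for an A/B test variation.\n"
    "Target URL: $url\n"
    "Requested change: $change\n"
    "Page HTML:\n$html\n"
    "Return only the JavaScript, with no explanation."
)


def build_prompt(url: str, change: str, html: str) -> str:
    """Fill the shared template so every team member sends the same prompt shape."""
    # substitute() raises KeyError if a placeholder is missing, so an
    # incomplete form submission fails loudly instead of producing a vague prompt.
    return VARIATION_PROMPT.substitute(url=url, change=change.strip(), html=html)
```

Improving the template then improves results for everyone at once, rather than depending on who happens to write the request.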

The Solution: The AI System and the MCPO Bridge

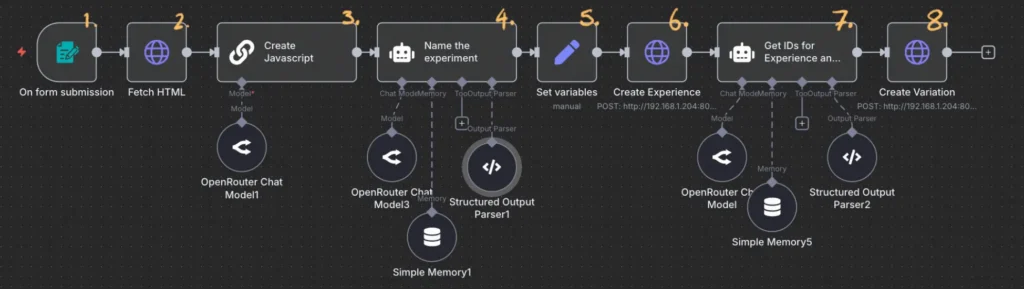

To mitigate these risks, a new architectural framework has been proposed: the AI System. Rather than allowing a model to roam freely through an MCP server, the AI System utilizes a structured workflow—often orchestrated through platforms like n8n—that treats AI as a "glue" for specific, logic-bound tasks.

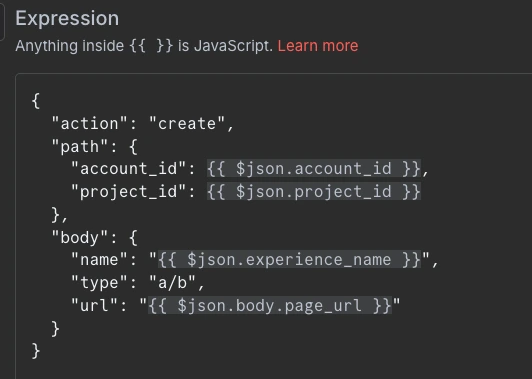

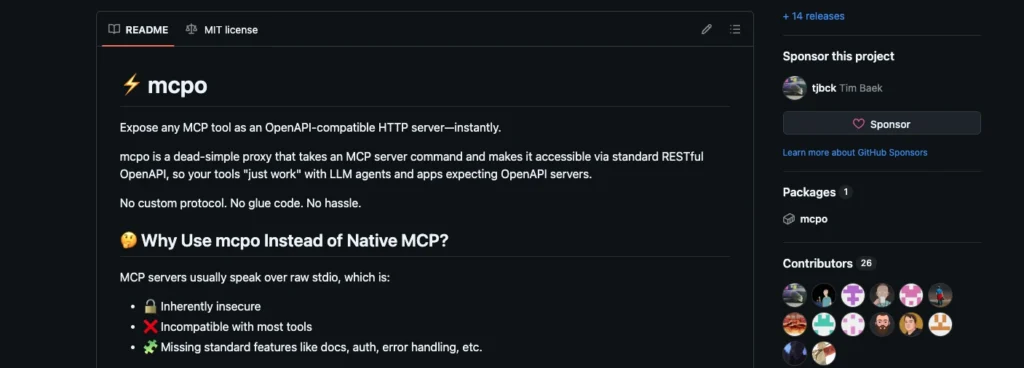

A central component of this new architecture is the Model Context Protocol OpenAPI (MCPO) bridge. MCPO is a proxy that exposes the tools of an MCP server as ordinary HTTP endpoints, documented by an automatically generated OpenAPI specification. By converting the open-ended tool surface of an MCP server into fixed API endpoints, organizations reach a "middle ground": they keep the flexibility of AI-driven tools while gaining the predictability and access control of traditional web services.
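As a rough illustration of what a fixed endpoint looks like from the workflow's side, the sketch below builds a plain HTTP request against the proxy using only the standard library. It assumes the proxy runs at `http://localhost:8000`, exposes each MCP tool as a `POST /<tool_name>` route that accepts the tool's arguments as JSON, and is protected by a bearer-token API key. The base URL, key, and tool names are placeholders; the generated OpenAPI documentation of your own deployment is the authority on the actual routes and schemas.

```python
import json
import urllib.request
from typing import Any

# Placeholder deployment settings (assumptions, not real values).
MCPO_BASE_URL = "http://localhost:8000"
MCPO_API_KEY = "change-me"


def build_tool_request(tool: str, arguments: dict) -> urllib.request.Request:
    """Build one locked-down POST request for a tool exposed by the proxy."""
    return urllib.request.Request(
        url=f"{MCPO_BASE_URL}/{tool}",
        data=json.dumps(arguments).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {MCPO_API_KEY}",
        },
        method="POST",
    )


def call_tool(tool: str, arguments: dict, timeout: float = 30.0) -> Any:
    """Send the request and decode the JSON response body."""
    request = build_tool_request(tool, arguments)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Separating request construction from sending makes it easy to log or review exactly which calls the workflow is allowed to make.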

The Standardized Workflow Chronology

The proposed "production-ready" workflow follows a strictly defined chronology that removes the need for team members to interact with the AI directly:

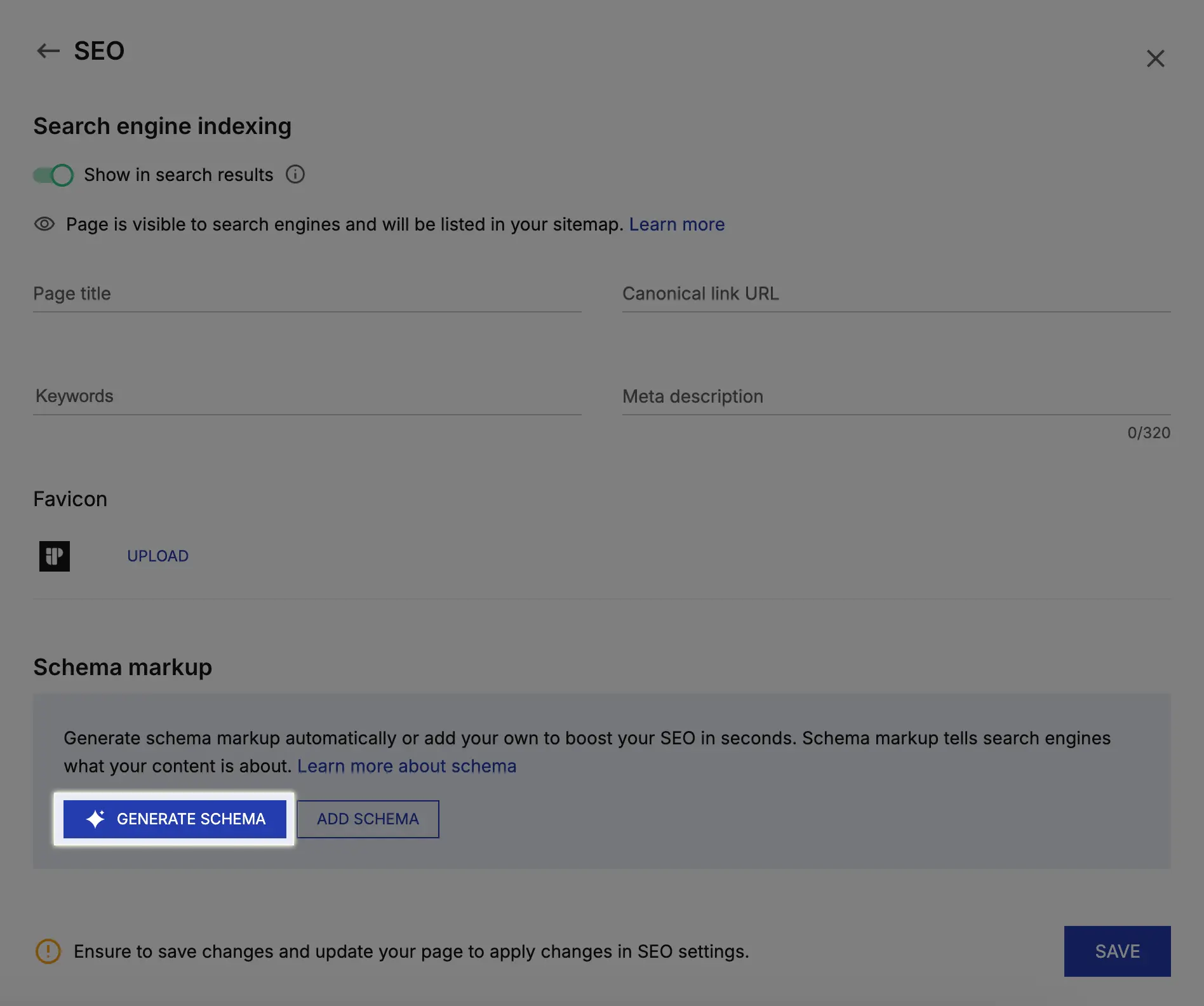

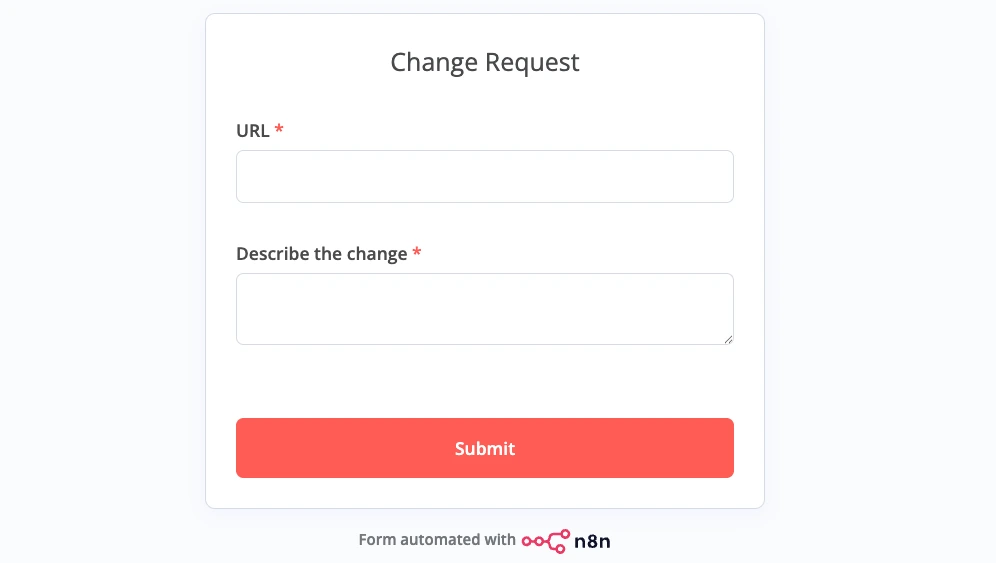

- Form Submission: A team member fills out a simple two-field form containing the target URL and a description of the desired change. No knowledge of prompting or the Convert Experiences dashboard is required.

- HTML Retrieval: The workflow triggers an automated node to fetch the HTML content of the target URL.

- Scoped AI Logic: The HTML and the change description are sent to a small, cost-efficient model (such as Qwen or a similar lightweight LLM). The model is tasked solely with generating the JavaScript required for the variation.

- API Execution: Using the MCPO bridge, the workflow makes a series of locked-down HTTP requests. These requests name the experiment, create the experiment container, and add the variation script.

- Human Verification: The experiment is created in a "draft" or "paused" state, requiring a human expert to verify the output before the test is pushed to live traffic.
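The five steps above can be sketched as a single function. To keep it testable, the HTML fetch, the model call, and the API call are injected as plain callables; in n8n each would be a separate node. The tool names, argument fields, and the `"paused"` status value are hypothetical placeholders rather than Convert's actual API, and naming is folded into the creation call for brevity.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical interfaces for the three injected steps.
FetchHtml = Callable[[str], str]            # url -> page HTML
GenerateJs = Callable[[str, str], str]      # (html, change) -> JavaScript
CallApi = Callable[[str, dict], dict]       # (tool, arguments) -> response


@dataclass(frozen=True)
class ExperimentRequest:
    url: str      # form field 1: target page
    change: str   # form field 2: plain-language description of the change


def run_workflow(req: ExperimentRequest, fetch_html: FetchHtml,
                 generate_js: GenerateJs, call_api: CallApi) -> dict:
    html = fetch_html(req.url)                  # step 2: HTML retrieval
    script = generate_js(html, req.change)      # step 3: scoped model call
    # Step 4: a fixed sequence of API calls; the model never chooses tools.
    experiment = call_api("create_experience", {
        "name": f"Auto: {req.change[:60]}",
        "url": req.url,
        "status": "paused",                     # step 5: never created live
    })
    call_api("add_variation", {
        "experience_id": experiment["id"],
        "js": script,
    })
    return {"experience_id": experiment["id"], "status": "paused"}
```

Because the status is hard-coded in the workflow rather than chosen by the model, nothing this function creates can reach live traffic until a human activates it.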

Economic Impact: Analyzing the Cost of Intelligence

One of the most compelling arguments for moving from conversational AI agents to structured workflows is the dramatic reduction in token consumption and associated costs. In comparative testing, the financial difference between using top-tier models in a chat interface versus small models in a workflow was profound.

When high-end models such as Claude 3.5 Sonnet were used through a conversational interface, the cost of creating a single experiment was estimated at approximately $2.50. Most of this comes from the "token tax" of resending the growing conversation history with every turn, combined with the high per-token prices of flagship models.

Switching to a smaller model, such as Qwen2.5-Coder, brought the cost down to $0.04, a 62.5x reduction on its own. The most significant gain, however, came when the small model was embedded in a structured n8n workflow. By stripping away the conversational overhead and using the model only for the specific task of code generation, the cost fell to $0.004 per experiment. That is a 625x reduction compared with the initial flagship-model approach, which makes it financially viable for organizations to run thousands of automated tests.
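The ratios quoted above follow directly from the reported per-experiment figures. This short script recomputes them from the article's own numbers (no new data): $2.50 divided by $0.004 gives the 625x figure, equivalent to a saving of about 99.84%, while the first switch to a small model accounts for 62.5x.

```python
# Per-experiment costs in USD, as reported in the comparison above.
COSTS = {
    "flagship model, chat interface": 2.50,
    "small model, chat interface": 0.04,
    "small model, n8n workflow": 0.004,
}


def reduction(baseline: float, new: float) -> tuple[float, float]:
    """Return (cost ratio, percentage saved) relative to the baseline."""
    return baseline / new, 100.0 * (1.0 - new / baseline)


baseline = COSTS["flagship model, chat interface"]
for label, cost in COSTS.items():
    ratio, saved = reduction(baseline, cost)
    print(f"{label}: ${cost:.3f} ({ratio:.1f}x cheaper, {saved:.2f}% saved)")
```

At $0.004 each, a thousand automated experiments would cost about $4 in model usage under the same assumptions.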

Broader Implications for the SaaS Industry

The shift toward "headless" operations via MCP and MCPO represents a broader trend in the Software-as-a-Service (SaaS) industry. As AI becomes the primary interface through which developers interact with software, the traditional dashboard is becoming secondary.

Industry analysts suggest that this "API-first, AI-second" approach will become the standard for enterprise automation. By abstracting the complexity of the underlying software into a set of AI-ready tools, companies like Convert are enabling a future where non-technical staff can initiate complex technical processes through simple inputs, provided those processes are governed by an expert-designed "AI System."

Furthermore, the use of small, efficient models aligns with growing corporate interest in reducing the carbon footprint of AI operations. Lightweight models require significantly less computational power and can often be hosted locally, which keeps page content and prompts inside the organization's own infrastructure. That additional layer of data privacy and security is much harder to guarantee when every request is sent to a large, cloud-hosted LLM.

Conclusion and Future Outlook

The transition from experimental AI prompts to standardized, workflow-based systems marks the professionalization of AI in the workplace. By utilizing tools like n8n and MCPO to constrain the behavior of AI models, organizations can harness the efficiency of the Model Context Protocol without sacrificing security or operational control.

As the technology matures, it is expected that more SaaS providers will release official MCP servers, further simplifying the process of building these automated systems. For now, the successful integration of Convert Experiences into a secure, low-cost workflow serves as a blueprint for other teams looking to scale their experimentation programs through the power of small models and structured automation. The future of the enterprise AI landscape lies not in the "rogue" autonomy of agents, but in the disciplined orchestration of systems that keep the human expert firmly in the loop.