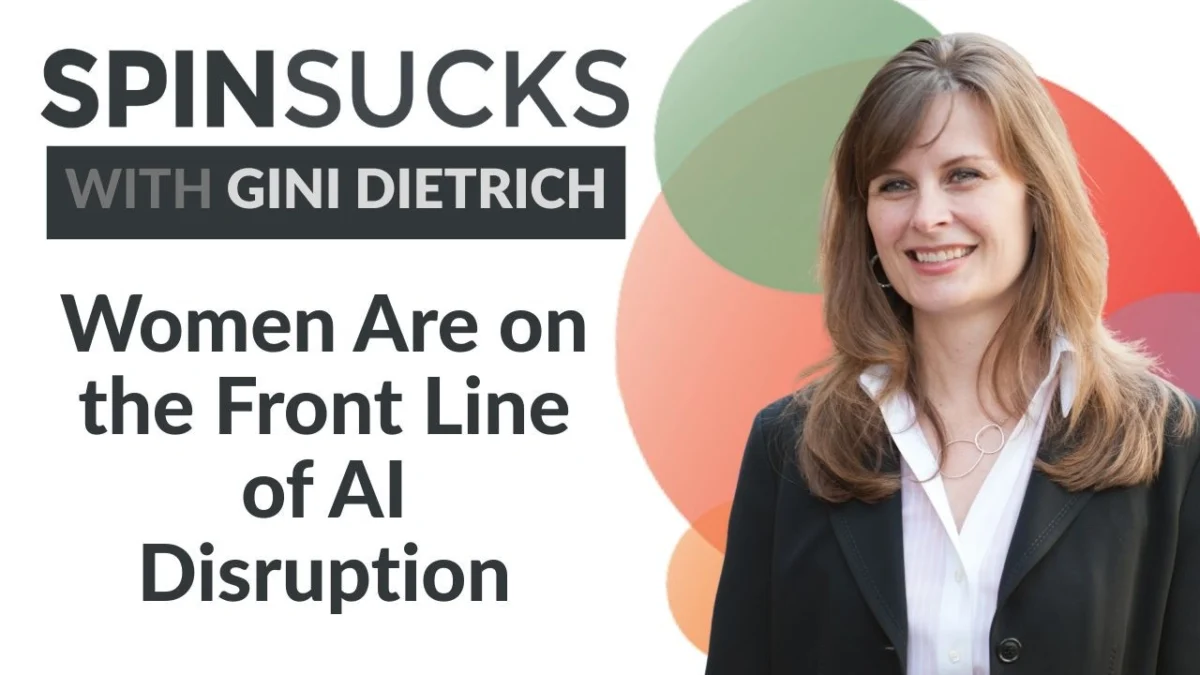

The rapid integration of generative artificial intelligence into the global economy has moved beyond a technological evolution, emerging instead as a structural shift with specific demographic consequences. Recent public statements from leaders in the technology sector, most notably Palantir CEO Alex Karp, have highlighted a projected redistribution of economic and political leverage. This shift is expected to disproportionately affect highly educated, humanities-trained women who occupy roles in communications, marketing, and administration. As AI systems become increasingly proficient at text-based tasks and strategic analysis, the traditional "white-collar" workforce—a sector where women are statistically overrepresented—faces a period of unprecedented disruption.

The Convergence of Technology and Demographic Power

In a series of high-profile appearances in early 2026, Alex Karp, the CEO of the $200 billion data analytics firm Palantir, articulated a vision of a future in which AI serves as a catalyst for a significant social and economic realignment. During a televised interview with CNBC, Karp suggested that the proliferation of generative AI would diminish the economic and political influence of "highly educated, often female" professionals. Conversely, he predicted an increase in the economic standing of "vocationally trained, working-class, often male" workers.

Karp’s assessment was not framed as a hypothetical risk but as a logical outcome of current product development and market trends. He specifically identified the "humanities-trained" workforce as the primary target for displacement. This includes professionals whose careers are built on critical thinking, persuasive writing, ethical reasoning, and institutional accountability—skills traditionally honed through liberal arts educations. By automating these "text-heavy" and "strategy-driven" functions, AI technology effectively targets the professional foundations of the modern female-dominated white-collar sector.

A Chronology of Public Pronouncements

The discourse regarding AI’s demographic impact has accelerated over the first quarter of 2026, marked by several key public interactions involving major tech stakeholders.

In January 2026, during the World Economic Forum in Davos, Karp held a public conversation with BlackRock CEO Larry Fink. During this session, Karp was blunt about the fate of humanities-focused roles, stating that AI would "destroy" these jobs. He advised those with elite degrees in subjects like philosophy to ensure they possessed "some other skill," asserting that the traditional market for such expertise was rapidly evaporating. He further characterized the failure to recognize these disruptions as a form of institutional blindness.

In March 2026, coinciding with International Women’s Month, Karp expanded on these themes during his CNBC interview. By naming a specific demographic (highly educated women, who often lean Democratic), he moved the conversation from general automation to a specific socio-political forecast. Such candor from a CEO whose company provides critical infrastructure to the United States national security apparatus suggests that the displacement of these roles is a core component of the current technological roadmap.

Structural Exposure: Why Women Are on the Front Line

The vulnerability of female professionals to AI automation is not a matter of individual skill but of structural labor distribution. Data from the International Labour Organization (ILO) gives these concerns a statistical basis. According to recent ILO findings, women in high-income countries are nearly three times more likely than men to work in roles with high exposure to generative AI automation.

In the United States, approximately 70% of the female workforce is employed in white-collar roles, compared to roughly 50% of the male workforce. Men are more heavily represented in manual trades, manufacturing, and construction—sectors where physical labor and complex spatial navigation make automation more difficult and expensive to implement. In contrast, women are concentrated in sectors that generative AI targets first:

- Communications and Public Relations: Crafting narratives, media monitoring, and campaign execution.

- Marketing and Content Creation: Copywriting, social media management, and email marketing.

- Administration and Operations: Strategic scheduling, internal communications, and project management.

- Customer Engagement: High-level support and relational management.

The ILO reports that in advanced economies, 9.6% of women’s employment falls into the category with the highest automation potential, compared with 3.5% of men’s. This discrepancy indicates that the "first wave" of AI-driven restructuring is landing directly on the professions that have historically provided women with a path to economic independence and corporate leadership.
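As a quick arithmetic check, the short Python sketch below compares the two ILO percentages quoted above. The function names are illustrative and do not come from the ILO; the only inputs are the 9.6% and 3.5% figures cited in the text.

```python
# Shares of employment in the highest automation-potential category,
# advanced economies, as quoted in the article (in percent).
WOMEN_SHARE = 9.6
MEN_SHARE = 3.5


def exposure_ratio(share_a: float, share_b: float) -> float:
    """Return how many times larger share_a is than share_b."""
    return share_a / share_b


def percentage_point_gap(share_a: float, share_b: float) -> float:
    """Return the absolute difference between two shares, in percentage points."""
    return share_a - share_b


ratio = exposure_ratio(WOMEN_SHARE, MEN_SHARE)
gap = percentage_point_gap(WOMEN_SHARE, MEN_SHARE)

print(f"Ratio: {ratio:.2f}x, gap: {gap:.1f} percentage points")
```

On these figures the ratio is roughly 2.7, which is consistent with the "nearly three times" description, while the absolute gap is about six percentage points.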

The Gender Gap in AI Upskilling

The threat of displacement is compounded by a documented disparity in how AI tools are being introduced within organizations. A report by McKinsey & Company, "Women in the Workplace," highlights a significant gap in managerial support for AI adoption. The study found that only 21% of entry-level women reported being encouraged by their managers to use AI tools, whereas 33% of men at the same level reported receiving such encouragement.

This "upskilling gap" creates a dangerous paradox: women are more likely to be in roles that AI can automate, yet they are less likely to be given the resources or institutional permission to lead the integration of these tools. This lack of support hinders the ability of female professionals to transition from "tasks that AI can do" to "managing the AI that does the tasks," a shift that is critical for maintaining professional relevance.

The Ethical Paradox: Devaluing the Conscience of AI

One of the most significant contradictions in the current AI landscape is the devaluation of the very skills required to govern the technology safely. Critical thinking, ethical reasoning, and the ability to assess long-term societal impact are central to the humanities. These are also the skills identified by researchers as essential for the development of "Responsible AI."

Research from the Markkula Center for Applied Ethics at Santa Clara University indicates that women disproportionately occupy AI ethics and governance roles globally. This trend aligns with broader social science research suggesting that disciplines emphasizing context, stakeholder impact, and relational consequences—such as the humanities—are where women have traditionally focused their professional development.

By framing humanities-trained professionals as "obsolete," technology leaders risk removing the ethical guardrails necessary for safe AI deployment. The ability to ask "should we?" rather than "can we?" is a function of the critical analysis taught in the humanities. If the power of these professionals is reduced, the oversight of AI systems may shift toward a purely efficiency-based model, potentially ignoring systemic biases and long-term social harms.

Fact-Based Analysis of Economic and Political Implications

The reduction of economic power for highly educated women carries broader implications for the global economy and political landscape. If the predictions of a "vocational shift" hold true, the following trends may emerge:

- Erosion of the Middle-Class Wage Base: Many humanities-based roles serve as the backbone of the professional middle class. Rapid automation without a corresponding creation of high-wage roles could lead to significant income volatility.

- Political Realignment: As economic power shifts between demographics, voting patterns and political priorities are likely to follow. A decline in the economic leverage of "humanities-trained" voters could alter the funding and focus of political campaigns.

- Educational Restructuring: If liberal arts degrees are perceived as "unmarketable," higher education institutions may face a crisis of relevance, leading to a decline in the study of ethics, history, and philosophy in favor of narrow technical training.

Strategic Defenses and the Shift to Measurable Value

In response to these challenges, industry experts suggest a shift in how communications and marketing professionals demonstrate their value. The move from "creative execution" to "strategic business function" is seen as a primary defense against automation.

One such framework is the PESO Model (Paid, Earned, Shared, Owned), which emphasizes integrated strategy and measurable business outcomes. By using data to show how earned media drives qualified leads or how owned content reduces customer acquisition costs, professionals can avoid being perceived as a "cost center." Strategic functions, meaning those that involve high-level judgment, risk assessment, and complex human negotiation, remain significantly more difficult to automate than the production of text or basic data analysis.

Conclusion: A Call for Strategic Adaptation

The statements made by Alex Karp and the supporting data from the ILO and McKinsey serve as a clear warning for the white-collar workforce. The disruption of humanities-trained roles is not an accidental byproduct of AI but a foreseeable outcome of its design and deployment.

For the professionals targeted by this shift, the path forward involves more than simple technical proficiency. It requires a vigorous defense of the strategic and ethical value that human judgment brings to the corporate table. While AI can simulate persuasion and generate content, it cannot assume the accountability, moral reasoning, or relational depth required for high-level leadership. The future of professional power for women in the AI era will likely depend on their ability to lead the conversation on governance, prove their impact through rigorous measurement, and reject the premise that their critical thinking is a redundant commodity.