The landscape of artificial intelligence is shifting from static conversational interfaces toward dynamic, agentic systems capable of interacting with external data and software tools. Central to this evolution is the Model Context Protocol (MCP), an open standard designed to provide a universal interface for AI models to connect with various data sources and services. With n8n, a leading low-code workflow automation platform, developers and businesses can now bridge the gap between local large language models (LLMs) and business applications like Google Sheets and vector databases. This development represents a significant milestone in the democratization of private, local AI agents that can perform real-world tasks without relying on expensive or privacy-compromising cloud infrastructure.

The Rise of the Model Context Protocol

The Model Context Protocol, originally introduced by Anthropic in late 2024, was conceived to solve a recurring problem in AI development: the fragmentation of tool-calling integrations. Previously, if a developer wanted an AI to access a specific database or API, they had to write custom "glue code" for every different model or chat interface. MCP standardizes this connection, allowing a single "server" to provide tools to any "client" that supports the protocol.
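To make the client and server roles concrete, the following is a minimal sketch of the two requests a client typically sends: one to discover tools and one to invoke a tool. MCP messages are JSON-RPC 2.0, and `tools/list` and `tools/call` are the protocol's method names; the tool name `get_feedback_rows` and its `limit` argument are hypothetical, and the transport and initialization handshake are omitted.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids must be unique within a session


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request of the kind an MCP client sends."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# Ask the server which tools it offers.
list_tools = make_request("tools/list")

# Invoke one tool by name. The tool name and argument are hypothetical.
call_tool = make_request(
    "tools/call",
    {"name": "get_feedback_rows", "arguments": {"limit": 5}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

Because every server answers the same two methods, a client needs no per-tool glue code: it lists what is available and passes the model's chosen name and arguments through.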

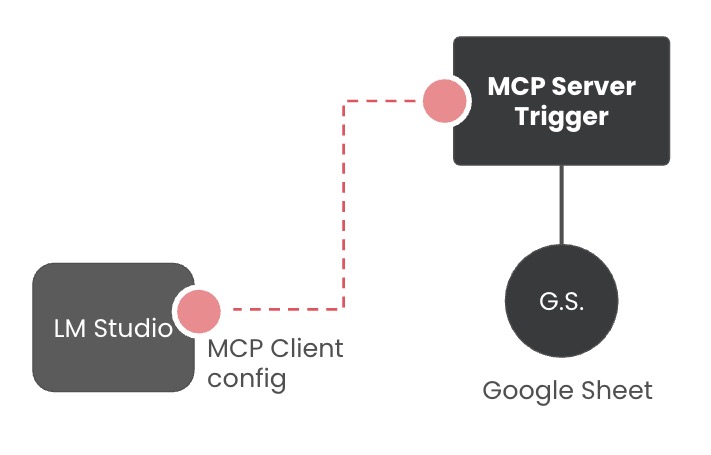

In the context of local AI development, this means that software like LM Studio, a popular interface for running open-source models, can now act as an MCP client. When paired with n8n acting as an MCP server, the AI gains the ability to "reach out" into the user’s digital environment. This pairing allows a small, locally hosted model to access real-time data, manage calendars, or query massive datasets, making it far more useful than its size alone would suggest.

Chronology of Development: From Calculators to Semantic Search

The integration of MCP into the n8n ecosystem has followed a rapid development trajectory. Initially, proof-of-concept workflows focused on simple utility tools, such as connecting AI models to basic calculators to ensure mathematical accuracy—a known weakness of LLMs. However, as the protocol matured, the focus shifted toward data retrieval and "Retrieval-Augmented Generation" (RAG).

The latest iteration of this technology involves connecting n8n’s MCP Server Trigger to enterprise-grade tools. As of February 2026, the workflow includes integrations with Google Sheets for structured data and Qdrant for unstructured semantic data. This progression highlights a clear trend: the transition of AI from a novelty chat tool to a functional component of the modern productivity stack.

Technical Implementation: Connecting LM Studio to n8n

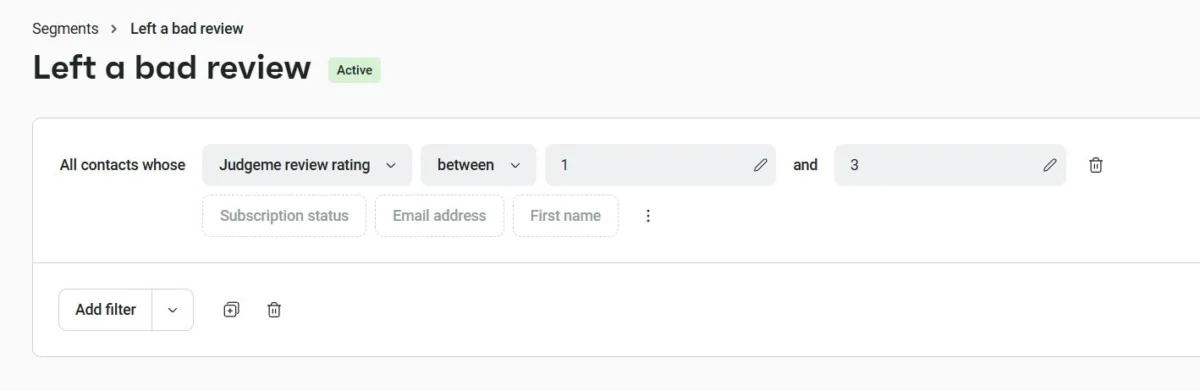

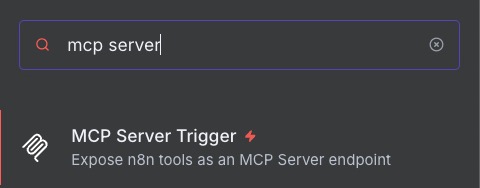

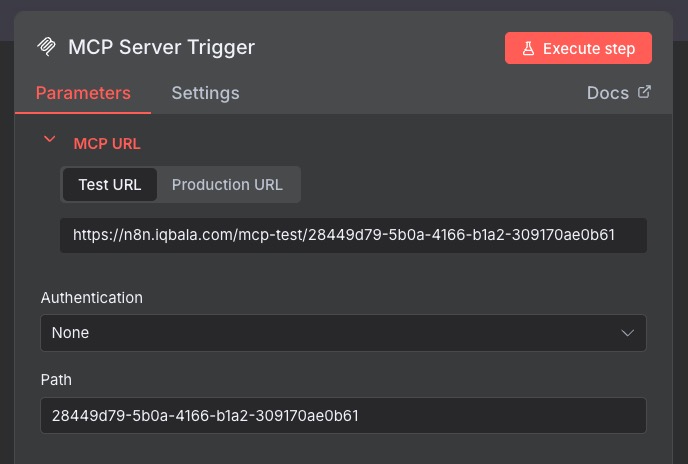

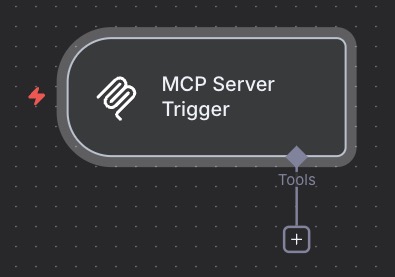

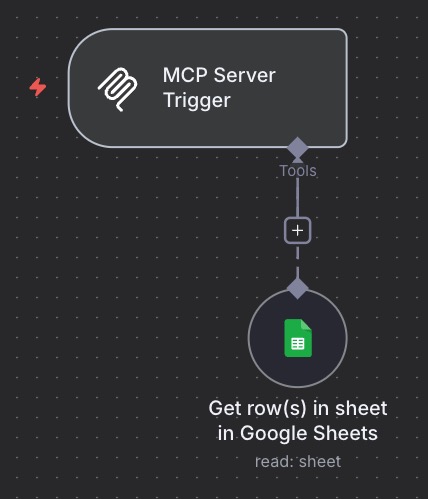

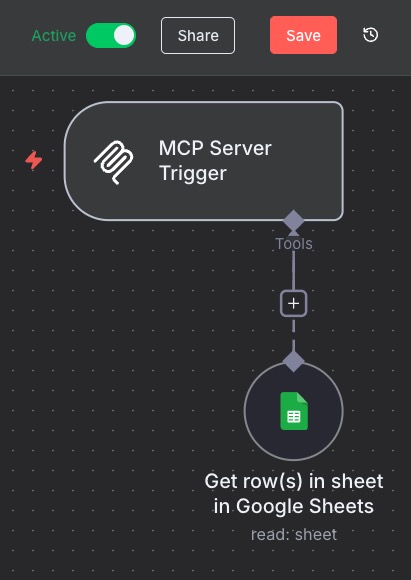

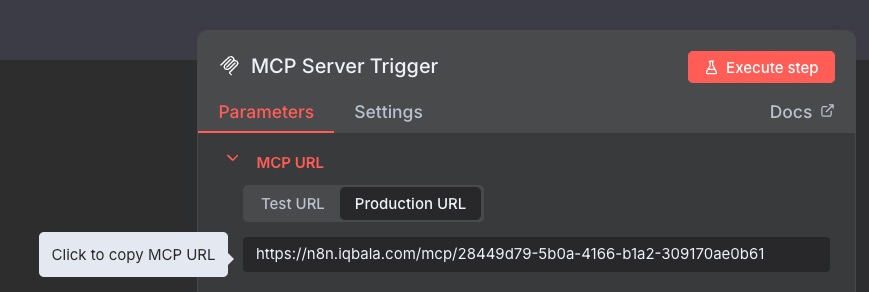

The process of building a functional AI agent via MCP involves a multi-layered architecture where n8n serves as the intermediary logic layer. The workflow begins with the "MCP Server Trigger" node within n8n. This node acts as the listener, waiting for instructions from the AI client.

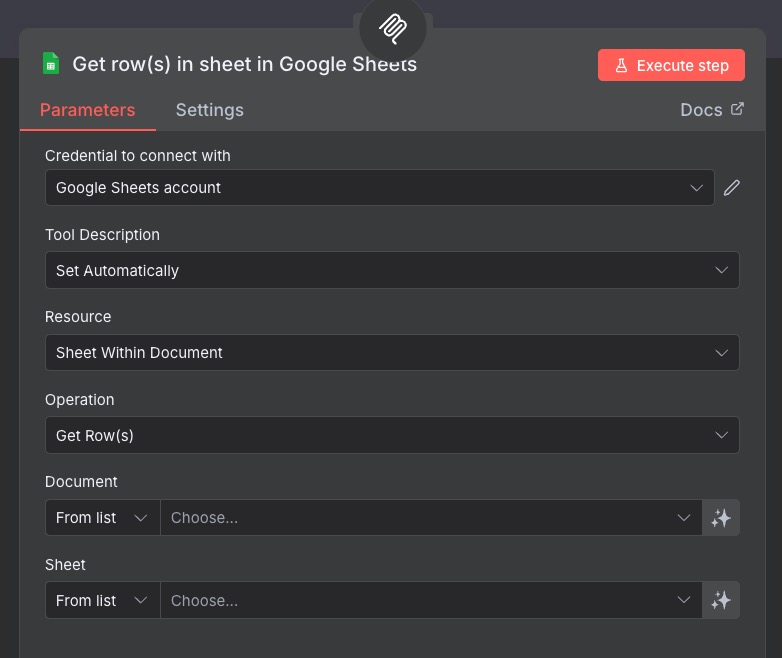

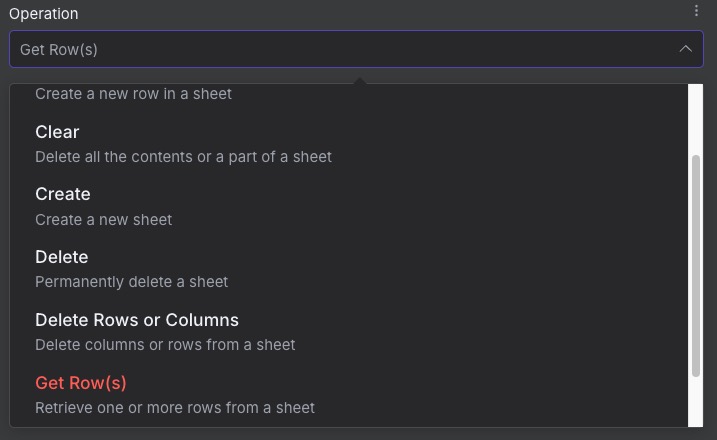

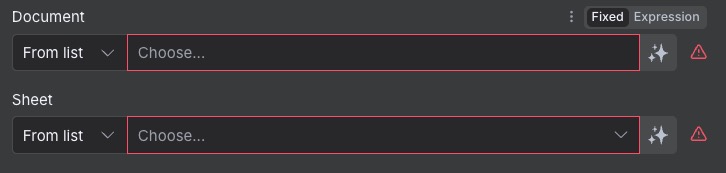

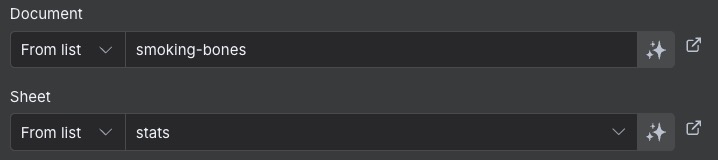

Structured Data Integration via Google Sheets

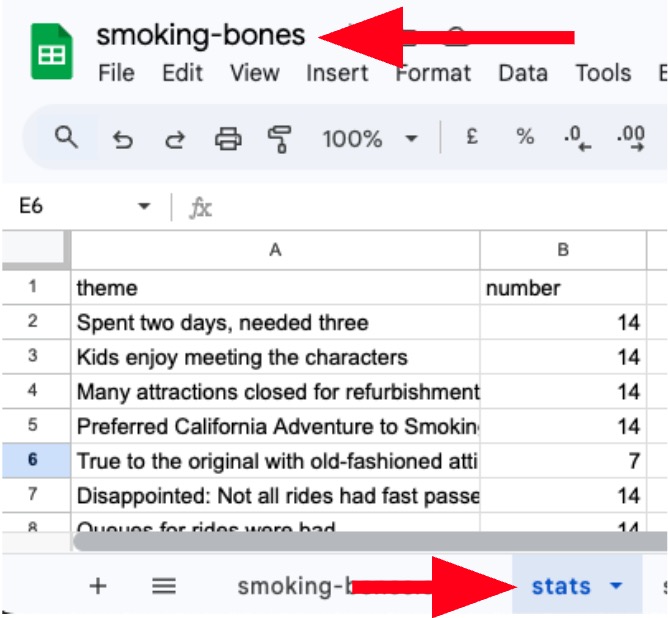

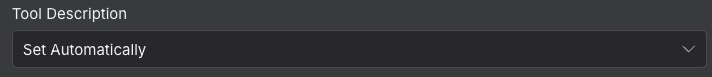

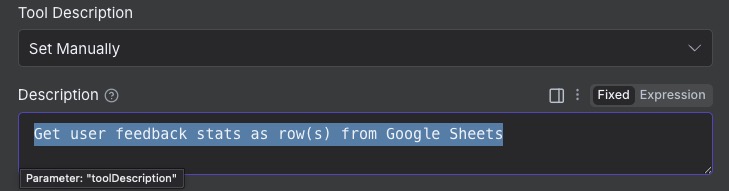

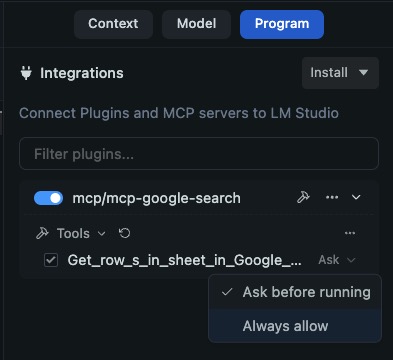

For structured data tasks, such as analyzing user feedback or financial records, the n8n workflow utilizes the Google Sheets node. In a typical configuration, the node is set to the "Get Row(s)" operation. A critical aspect of this setup is the "Tool Description" field. Because LLMs interpret tools through natural language, the description must be precise. For instance, labeling a tool "Get user feedback stats as rows from Google Sheets" provides the model with the necessary context to know when to call that specific function.

This structured approach limits the AI’s scope, reducing the likelihood of "hallucinations" or errors. By pointing the tool to a specific document and sheet, the developer ensures the AI operates within a controlled environment, which is a cornerstone of safe AI deployment in professional settings.
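As a rough illustration of why the description matters, the sketch below builds a tool definition in the shape an MCP server advertises to clients (`name`, `description`, `inputSchema`). n8n generates this from the node settings, so the helper `describe_tool`, the tool name, and the four-word minimum are assumptions made for the example rather than n8n behavior.

```python
def describe_tool(name, description, properties=None, required=None):
    """Return a tool definition in the shape MCP servers advertise."""
    # Arbitrary guard for this sketch: very short descriptions give the
    # model too little context to decide when the tool applies.
    if len(description.split()) < 4:
        raise ValueError("description too vague for a model to choose the tool")
    return {
        "name": name,
        "description": description,
        "inputSchema": {
            "type": "object",
            "properties": properties or {},
            "required": required or [],
        },
    }


# Hypothetical tool name; the description is the one quoted in the text.
tool = describe_tool(
    "get_feedback_rows",
    "Get user feedback stats as rows from Google Sheets",
)
print(tool["description"])
```

The model sees only this text and schema, never the workflow behind it, which is why a label such as "Get data" is likely to be called at the wrong time or not at all.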

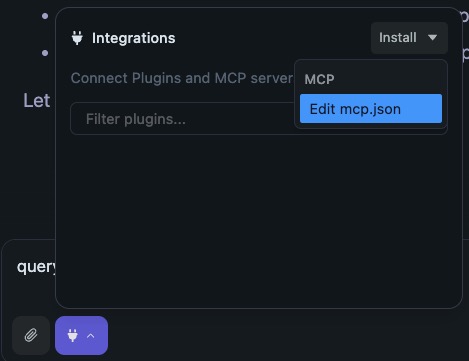

The Client-Side Configuration

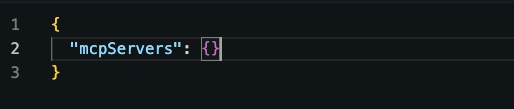

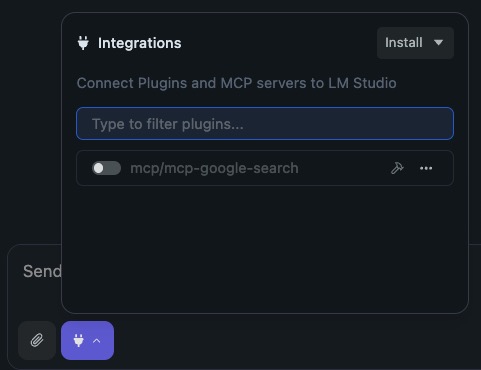

Once the n8n workflow is active, the connection is established on the client side, in this case LM Studio. This is achieved by editing the mcp.json configuration file. The file serves as a registry of all available servers; each entry requires a unique name and the production URL provided by the n8n trigger.

A standard configuration for a single server follows a strict JSON structure:

    {
      "mcpServers": {
        "n8n-google-sheets": {
          "url": "https://your-n8n-instance.com/mcp/unique-id"
        }
      }
    }

Upon saving this configuration, the LM Studio interface displays the available tools, often represented by a "plug" or "hammer" icon. This visual confirmation indicates that the local model now has a direct pipeline to the specified data source.
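Since mcp.json is a registry keyed by server name, entries can also be assembled programmatically. The sketch below is one possible helper, not part of LM Studio or n8n: `add_server` is a hypothetical function, and the second server name and URL are placeholders invented for the example.

```python
import json


def add_server(config, name, url):
    """Return a copy of an mcp.json-style config with one more server entry."""
    servers = dict(config.get("mcpServers", {}))
    if name in servers:
        raise ValueError(f"server name already in use: {name}")
    if not url.startswith(("http://", "https://")):
        raise ValueError(f"not an HTTP(S) URL: {url}")
    servers[name] = {"url": url}
    return {**config, "mcpServers": servers}


config = add_server(
    {}, "n8n-google-sheets", "https://your-n8n-instance.com/mcp/unique-id"
)
# Hypothetical second server; name and path are placeholders.
config = add_server(
    config, "n8n-qdrant", "https://your-n8n-instance.com/mcp/another-id"
)
print(json.dumps(config, indent=2))
```

Rejecting duplicate names up front mirrors the requirement that each server in the file has a unique name.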

Advanced Capabilities: Accessing Vector Stores

While structured data is useful for statistics, the true power of modern AI lies in semantic retrieval. This is where vector databases like Qdrant become essential. Unlike traditional databases that look for literal keyword matches, vector stores use mathematical embeddings to find "similar" concepts.

Architecture of a Vector Search Tool

In the extended n8n workflow, a second MCP server can be established specifically for semantic search. This involves connecting the Qdrant Vector Store tool to an MCP trigger. The architecture requires an embedding model (such as those provided by OpenAI, or open models from Hugging Face that can run locally) to be attached to the workflow. This model converts the user’s natural language query into a numerical vector that the database can compare against stored vectors.

When a user asks, "Find me feedback related to the checkout experience," the AI doesn’t just look for the word "checkout." It uses the MCP connection to trigger a similarity search in Qdrant, retrieving all entries that are conceptually related to the purchasing process. The results are then fed back into the chat session, allowing the AI to summarize findings or identify trends based on actual data rather than pre-trained knowledge.
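The idea behind the similarity search can be sketched in a few lines. The example below ranks entries by cosine similarity, one common distance metric for vector stores; the three-dimensional vectors and feedback texts are invented for illustration, whereas real embeddings have hundreds or thousands of dimensions and the search itself is performed by the database.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query_vec, entries, k=2):
    """Return the k texts whose vectors are most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in entries]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]


# Toy "embeddings", invented for illustration only.
feedback = [
    ("Payment page froze when I entered my card", [0.9, 0.1, 0.0]),
    ("Love the new dark mode", [0.0, 0.2, 0.9]),
    ("Could not apply a coupon at purchase", [0.8, 0.3, 0.1]),
]
query = [1.0, 0.2, 0.0]  # stands in for the embedded "checkout experience" query

print(top_k(query, feedback))
```

Neither of the two top-ranked entries contains the word "checkout"; they are returned because their vectors point in nearly the same direction as the query vector.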

Supporting Data: The Case for Local AI Agents

The move toward local MCP-based agents is driven by several critical factors:

- Privacy and Security: According to industry surveys, over 60% of enterprises cite data privacy as the primary barrier to AI adoption. Running the model locally in LM Studio means that prompts and retrieved records, whether from Google Sheets or internal databases, are not sent to a third-party LLM provider for inference.

- Latency and Cost: Cloud-based API calls for every tool interaction can become prohibitively expensive at scale. Local execution eliminates per-token costs for tool invocation and reduces latency for real-time applications.

- Model Performance: Research indicates that even "tiny" models, such as Qwen variants with 0.6B or 1.5B parameters, perform significantly better when augmented with specific tools. By offloading data retrieval to an MCP server, the model can focus its limited parameters on reasoning and synthesis rather than memorization.

Official Responses and Industry Outlook

The developer community has responded with overwhelming positivity toward the n8n MCP integration. Early adopters report that the ability to use natural language to trigger complex n8n workflows—which can involve hundreds of different app integrations—is a "game-changer" for personal and enterprise productivity.

"The Model Context Protocol is essentially the USB port for the AI era," noted one automation consultant. "By making it low-code through n8n, we are allowing non-developers to build highly sophisticated AI agents that were previously the domain of specialized software engineers."

However, security experts urge caution. While running the model locally in LM Studio keeps inference private, the n8n trigger URLs are network endpoints and must be properly authenticated. Most production environments use header-based authentication or API keys to ensure that only authorized clients can trigger these workflows. As the technology matures, robust authentication within the MCP framework is expected to become standard practice.
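Header-based authentication comes down to comparing a secret sent by the client with the one the server expects. The following sketch shows that check for a bearer token; it is an illustration of the general technique, not n8n's implementation, and the function name `is_authorized` and the token value are hypothetical.

```python
import hmac


def is_authorized(headers, expected_token):
    """Check an 'Authorization: Bearer <token>' header."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {key.lower(): value for key, value in headers.items()}
    scheme, _, token = normalized.get("authorization", "").partition(" ")
    if scheme.lower() != "bearer" or not token:
        return False
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(token.encode(), expected_token.encode())


print(is_authorized({"Authorization": "Bearer s3cret"}, "s3cret"))
print(is_authorized({"Authorization": "Bearer guess"}, "s3cret"))
```

A request that fails this check should be rejected before any workflow logic runs, so an unauthenticated caller cannot read spreadsheet rows or query the vector store.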

Broader Impact and Future Implications

The integration of MCP servers into platforms like n8n signals a broader shift in the "AI Operating System" landscape. We are moving away from a world where we "chat" with AI toward a world where we "collaborate" with AI agents that have hands and eyes in our digital workspace.

The implications for business intelligence are profound. A marketing manager can now sit at a local chat interface and say, "Cross-reference our latest user feedback in Qdrant with the sales stats in Google Sheets and give me a list of three improvements we should make to the checkout page." The AI will then execute the search, fetch the rows, perform the analysis, and provide a data-backed recommendation—all within seconds and entirely within a private environment.

As we look toward the future, the range of tools accessible via MCP is likely to expand. Beyond spreadsheets and vector stores, we can expect to see integrations for real-time web browsing, system-level file manipulation, and even IoT device control. The question is no longer what the AI "knows," but what the AI is "allowed to do."

In conclusion, the combination of n8n and the Model Context Protocol represents a powerful paradigm shift. It empowers users to build bespoke AI systems that are tailored to their specific data needs while maintaining the highest standards of privacy and efficiency. As local LLMs continue to improve in reasoning capabilities, the infrastructure provided by MCP will be the foundation upon which the next generation of digital assistants is built. The era of the truly capable, local AI agent has officially arrived.