The integration of the Model Context Protocol (MCP) into developer workflows signals a shift in how conversion rate optimization (CRO) and A/B testing are managed in technical environments. By combining Claude Code, a terminal-based agentic tool, with specialized MCP servers, organizations are exploring a move from traditional graphical user interfaces (GUIs) to chat-based experimentation management. The approach is notable because it can use small language models (SLMs) to perform complex tasks at a fraction of the cost of larger frontier models.

The Technical Foundation of Agentic Experimentation

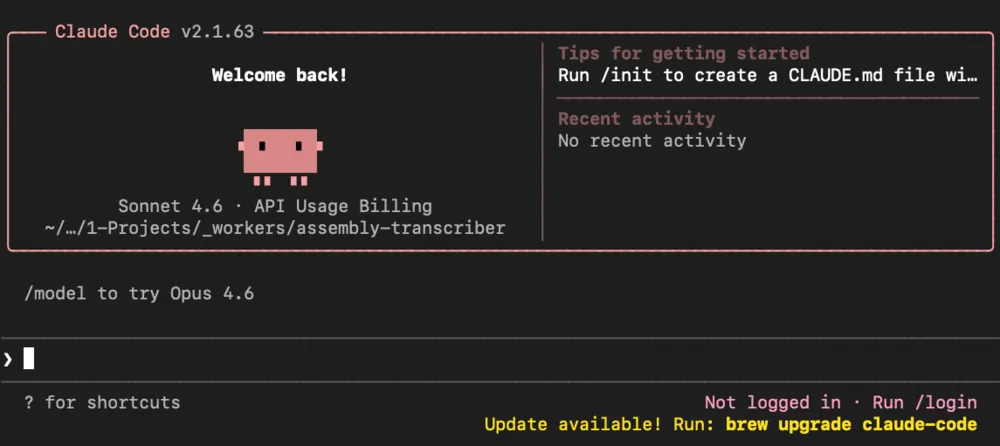

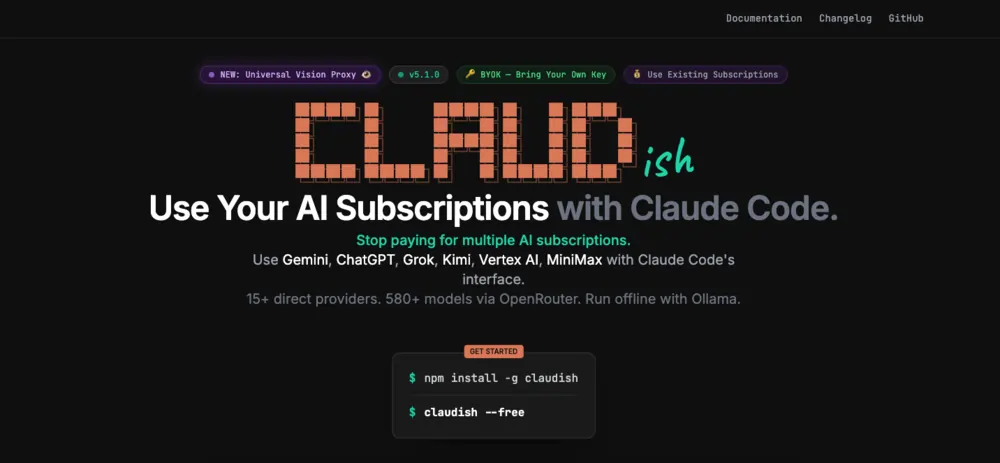

At the center of this development is the Model Context Protocol, an open standard released by Anthropic in late 2024. MCP lets AI models connect securely to external data sources and tools, effectively giving an LLM "hands" to interact with third-party software such as Convert, a leading A/B testing platform. The setup relies on a two-part stack: Claude Code, a command-line interface (CLI) that functions as an agent, and Claudish, a bridge that lets Claude Code communicate with a range of models through OpenRouter.
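
A bridge of this kind has to translate between API formats. The Python sketch below shows the general technique: it converts a simplified, text-only Anthropic Messages-style payload into the OpenAI-style chat-completions format that OpenRouter accepts. It then builds the HTTP request but does not send it. This is an illustration only, not Claudish's actual code. The model slug `qwen/qwen3-coder-next` is an assumption, and tools, images, and streaming are deliberately left out.

```python
import json
import urllib.request

# OpenRouter's OpenAI-compatible chat-completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def anthropic_to_openai(payload: dict, model: str) -> dict:
    """Translate a text-only Anthropic Messages-style payload into an
    OpenAI-style chat-completions payload (no tools, images, or streaming)."""
    messages = []
    # Anthropic keeps the system prompt at the top level; OpenAI-style APIs
    # expect it as the first message.
    if payload.get("system"):
        messages.append({"role": "system", "content": payload["system"]})
    for msg in payload["messages"]:
        content = msg["content"]
        # Content may be a plain string or a list of typed blocks; keep text only.
        if isinstance(content, list):
            content = "".join(b["text"] for b in content if b.get("type") == "text")
        messages.append({"role": msg["role"], "content": content})
    return {"model": model, "messages": messages, "max_tokens": payload.get("max_tokens", 1024)}


def build_request(payload: dict, model: str, api_key: str) -> urllib.request.Request:
    """Build, but do not send, the request a bridge would forward to OpenRouter."""
    body = json.dumps(anthropic_to_openai(payload, model)).encode("utf-8")
    return urllib.request.Request(
        OPENROUTER_URL,
        data=body,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


# Example call; the model slug is an assumption, not a verified identifier.
request = build_request(
    {"system": "You manage A/B tests.", "messages": [{"role": "user", "content": "List my projects"}]},
    model="qwen/qwen3-coder-next",
    api_key="sk-or-...",
)
```

Most of the complexity in a real bridge lies in translating tool-use blocks and streamed responses in both directions. This sketch omits both.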

The move toward agentic workflows represents a departure from simple chatbot interactions. Unlike standard AI assistants that merely provide text-based advice, an agentic system like Claude Code is designed to execute actions, monitor for errors, and self-correct by referencing documentation or system feedback. This "loop" is critical for technical tasks such as modifying the Document Object Model (DOM) of a website or managing API-driven experiment configurations.
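
The execute, monitor, and self-correct loop fits in a few lines of Python. In this sketch, `act` and `correct` are hypothetical stand-ins. In a real agent, `act` would be a tool call, and `correct` would be the model revising its parameters after reading the error text or the documentation.

```python
from typing import Callable, Optional


class ToolError(Exception):
    """Raised when an action fails; the message is the feedback the agent sees."""


def run_with_self_correction(
    act: Callable[[dict], dict],
    params: dict,
    correct: Callable[[dict, str], Optional[dict]],
    max_attempts: int = 3,
) -> dict:
    """Execute an action, observe any failure, and let a correction step
    revise the parameters before retrying (at most `max_attempts` tries)."""
    feedback = ""
    for _ in range(max_attempts):
        try:
            return act(params)  # execute
        except ToolError as err:
            feedback = str(err)  # monitor: capture the error text
            revised = correct(params, feedback)  # self-correct
            if revised is None:
                break  # no better idea: stop rather than loop blindly
            params = revised
    raise ToolError(f"gave up after feedback: {feedback}")
```

The attempt cap matters. Without it, a model that keeps producing the same wrong fix would loop indefinitely.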

Chronology of the Integration Process

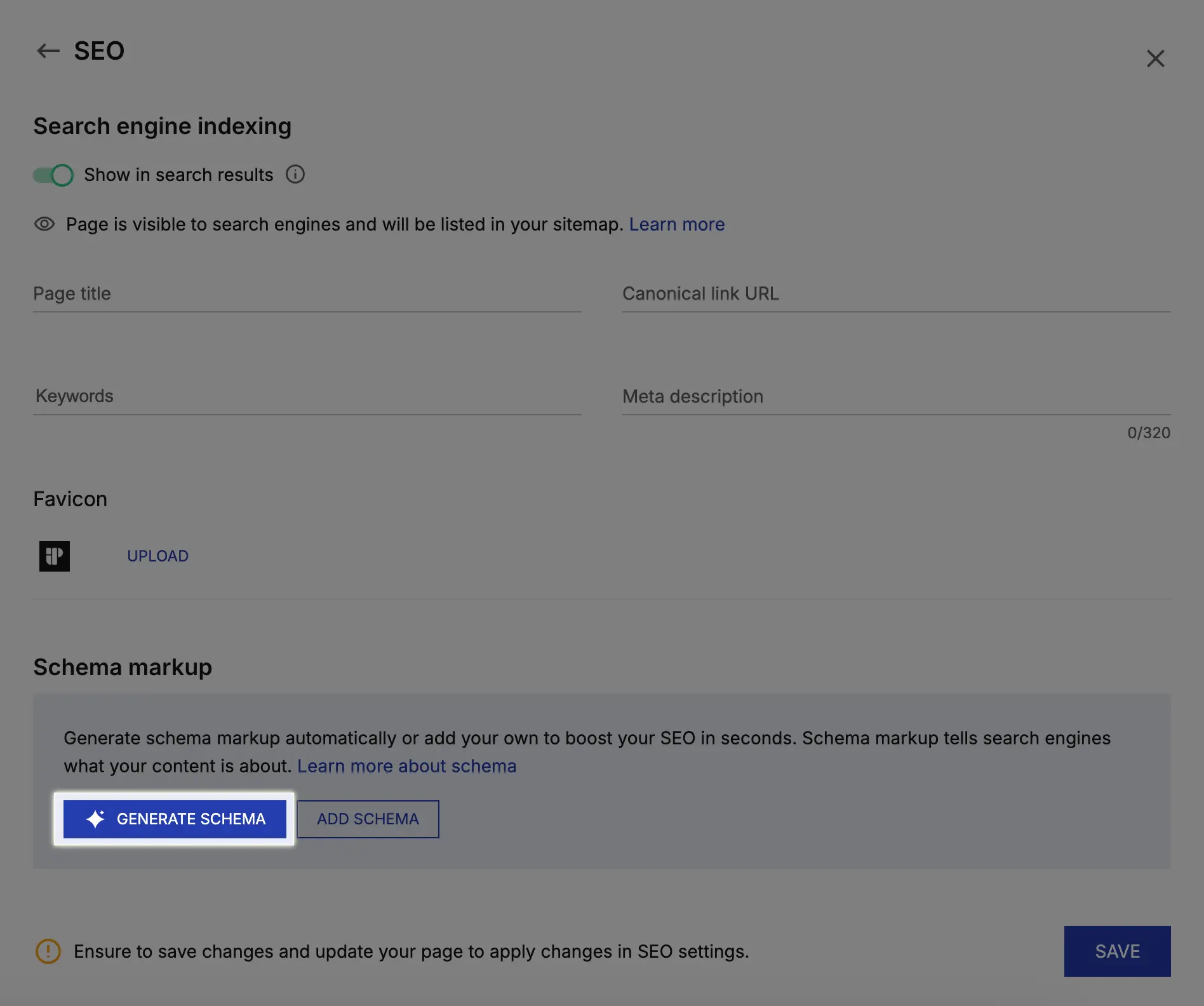

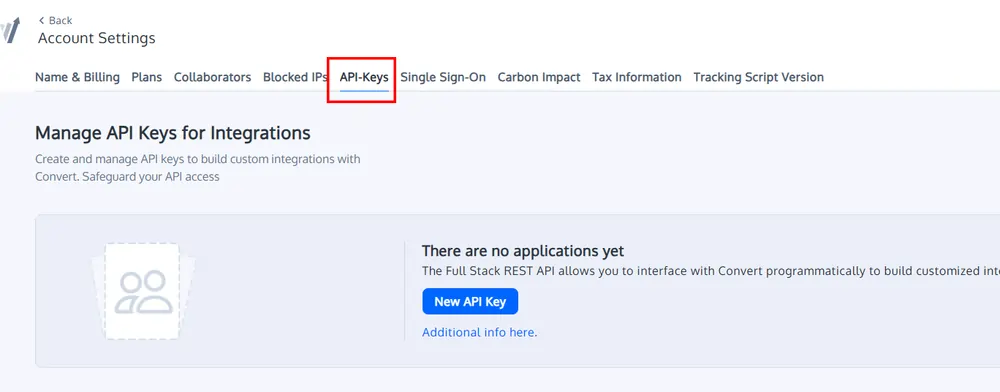

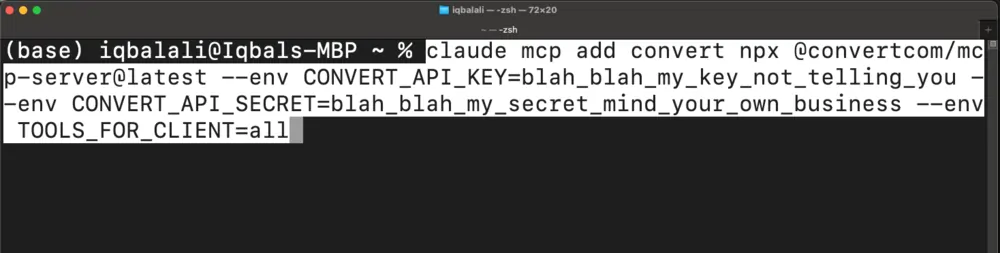

Deploying an AI-driven testing environment follows a fixed technical sequence. First, developers configure environment variables so the local terminal can communicate securely with the cloud-based LLM providers. This means storing the API keys for OpenRouter and Convert in the system's environment.
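
Below is a minimal sketch of a fail-fast check that a setup script might run before starting the agent. It assumes the keys are exposed as `OPENROUTER_API_KEY` and `CONVERT_API_KEY`. Both names are illustrative assumptions; each tool's own documentation defines the variables it actually reads.

```python
import os
from typing import Mapping

# Hypothetical variable names; check each tool's documentation for the real ones.
REQUIRED_VARS = ("OPENROUTER_API_KEY", "CONVERT_API_KEY")


def load_credentials(env: Mapping[str, str] = os.environ) -> dict:
    """Return the required keys, failing fast if any is missing or empty."""
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return {name: env[name] for name in REQUIRED_VARS}


def mask(secret: str) -> str:
    """Show only the last four characters, so keys never appear in full in logs."""
    if len(secret) <= 4:
        return "****"
    return "*" * (len(secret) - 4) + secret[-4:]
```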

Once the environment is prepared, the Convert MCP server is initialized. This server acts as the translator between the AI’s natural language instructions and the Convert platform’s API. Configuration requires specific permissions, ranging from "read_only" to "all," depending on whether the user intends to merely monitor experiments or actively create and archive them.
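
The sketch below shows one way a permission level could gate individual tool calls. It models only the two levels named above, `read_only` and `all`. The tool names (`list_projects`, `archive_experience`, and so on) are hypothetical, not the Convert MCP server's actual tool list. Anything unrecognized is denied by default.

```python
# Hypothetical tool names, grouped by whether they change account state.
READ_ACTIONS = {"list_projects", "list_experiences", "get_report"}
WRITE_ACTIONS = {"create_experience", "archive_experience"}

# The two permission levels named in the text; intermediate levels are not modeled.
LEVELS = ("read_only", "all")


def is_allowed(permission: str, action: str) -> bool:
    """Decide whether `action` may run under the configured `permission` level."""
    if permission not in LEVELS:
        raise ValueError(f"unknown permission level: {permission!r}")
    if action in READ_ACTIONS:
        return True  # monitoring is allowed at every level
    if action in WRITE_ACTIONS:
        return permission == "all"  # creating or archiving needs full access
    return False  # deny anything unrecognized by default
```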

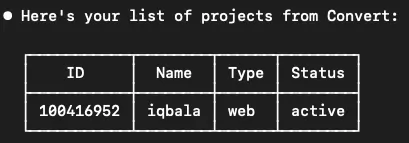

The workflow then moves to the execution phase. In a typical demonstration, a developer uses a command-line prompt to request a list of active projects. The agent retrieves the account IDs, identifies the necessary parameters, and presents the data in a structured format. The agent can then be given administrative tasks, such as archiving several paused experiments in a single request. In a web-based dashboard, the same task would traditionally require many manual clicks.
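
Under the hood, an MCP client invokes server tools by sending JSON-RPC 2.0 `tools/call` requests. The sketch below builds the requests an agent might send to archive every paused experiment. The tool name `archive_experience` and its argument names are assumptions for illustration.

```python
import json


def tools_call(request_id: int, name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request (a JSON-RPC 2.0 message)."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def archive_paused(account_id: str, experiments: list, first_id: int = 1) -> list:
    """One archive request per paused experiment (tool and argument names assumed)."""
    paused = [e for e in experiments if e["status"] == "paused"]
    return [
        tools_call(first_id + i, "archive_experience",
                   {"account_id": account_id, "experience_id": e["id"]})
        for i, e in enumerate(paused)
    ]


experiments = [
    {"id": "101", "status": "active"},
    {"id": "102", "status": "paused"},
    {"id": "103", "status": "paused"},
]
batch = archive_paused("acc-1", experiments)
print(json.dumps(batch, indent=2))
```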

Comparative Performance: Large vs. Small Models

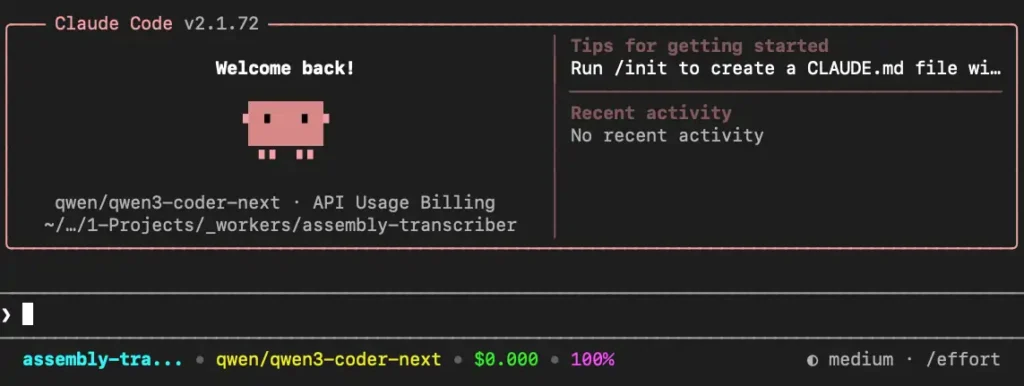

A critical component of this technological shift is the economic analysis of model selection. Recent benchmarking tests have compared the performance of Anthropic’s Claude 3.5 Sonnet (a large, high-capability model) against Alibaba’s Qwen3 Coder Next (a specialized small model). The results indicate a narrowing gap in functional capability for specific coding and API management tasks.

Data from recent implementation trials reveals a stark contrast in operational expenses:

- Small Model (Qwen3 Coder Next): Successfully executed a series of project management tasks and experiment creations for approximately $0.04 to $0.07 per run.

- Large Model (Claude 3.5 Sonnet): Performed the same tasks at an average cost of $2.11 to $3.00 per run.

Depending on which ends of the two ranges are compared, the large model cost roughly 30x to 75x as much per run, and the approximate 60x figure falls within that span. Because both models completed the same tasks, this cost gap amounts to a price-performance gap for this workload. Large models are often preferred for highly creative or ambiguous tasks. API interactions and JavaScript generation for A/B testing, however, are structured enough that these tasks are increasingly suitable for smaller, more efficient models. Analysts suggest that in high-volume testing environments, switching to SLMs could save thousands of dollars per month without a proportional loss in output quality.
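
The arithmetic behind these figures is easy to reproduce. The sketch below uses the per-run ranges reported above. The monthly volume of 1,000 runs is a hypothetical figure, chosen only to show the scale of the savings.

```python
# Per-run cost ranges in USD, as reported in the trials above.
SMALL = (0.04, 0.07)  # Qwen3 Coder Next
LARGE = (2.11, 3.00)  # Claude 3.5 Sonnet


def cost_ratio_range(small, large):
    """Smallest and largest possible large/small cost ratio across the ranges."""
    return large[0] / small[1], large[1] / small[0]


def monthly_savings_range(runs_per_month, small, large):
    """Savings range from moving runs_per_month runs to the small model."""
    return (runs_per_month * (large[0] - small[1]),
            runs_per_month * (large[1] - small[0]))


low, high = cost_ratio_range(SMALL, LARGE)
print(f"cost ratio: {low:.0f}x to {high:.0f}x")
# 1,000 runs per month is a hypothetical volume, not a reported figure.
save_lo, save_hi = monthly_savings_range(1000, SMALL, LARGE)
print(f"monthly savings: ${save_lo:,.0f} to ${save_hi:,.0f}")
```

With these inputs, the ratio spans about 30x to 75x. At 1,000 runs per month, switching would save roughly $2,040 to $2,960.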

Case Study: Automating DOM Manipulation

The most advanced application of this setup involves the creation of a website experiment from a natural language prompt. In a documented test case, an AI agent was tasked with modifying the layout of a project grid on a live website. The agent was required to:

- Fetch the HTML structure of the target webpage.

- Analyze the CSS classes and element hierarchy.

- Write a JavaScript snippet to reorder specific grid elements.

- Inject this script into a new variation within the Convert platform via the MCP server.
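
Steps 2 and 3 can be approximated with Python's standard-library `html.parser`. The sketch collects the class lists of the grid's direct children, then emits a JavaScript snippet that re-appends them in a new order. It is a simplified sketch, not the agent's actual output. The selector `.projects.grid`, the sample markup, and the chosen order are all hypothetical, and real pages often need a more robust parser.

```python
import json
from html.parser import HTMLParser

# Elements that never have a closing tag in HTML.
VOID = {"area", "base", "br", "col", "embed", "hr", "img", "input",
        "link", "meta", "source", "track", "wbr"}


class GridChildCollector(HTMLParser):
    """Collect the class lists of the direct children of the first element
    whose class attribute contains `grid_class`."""

    def __init__(self, grid_class: str):
        super().__init__()
        self.grid_class = grid_class
        self.depth = 0          # number of currently open non-void elements
        self.grid_depth = None  # depth just inside the grid container
        self.done = False       # True once the grid's closing tag is seen
        self.items = []         # class lists of the grid's direct children

    def _record(self, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.grid_depth is not None and not self.done and self.depth == self.grid_depth:
            self.items.append(classes)
        return classes

    def handle_starttag(self, tag, attrs):
        classes = self._record(attrs)
        if self.grid_depth is None and self.grid_class in classes and tag not in VOID:
            self.grid_depth = self.depth + 1
        if tag not in VOID:
            self.depth += 1

    def handle_startendtag(self, tag, attrs):
        self._record(attrs)  # self-closing tag: no depth change

    def handle_endtag(self, tag):
        if tag in VOID:
            return
        if self.grid_depth is not None and self.depth == self.grid_depth:
            self.done = True  # the grid container itself is closing
        self.depth -= 1


def reorder_snippet(grid_selector: str, new_order: list, item_count: int) -> str:
    """Return JavaScript that re-appends the grid's children in `new_order`
    (0-based indices into the current children)."""
    if sorted(new_order) != list(range(item_count)):
        raise ValueError("new_order must be a permutation of the grid's children")
    return (
        "(function () {\n"
        f"  var grid = document.querySelector({json.dumps(grid_selector)});\n"
        "  if (!grid) return;\n"
        "  var items = Array.prototype.slice.call(grid.children);\n"
        f"  {json.dumps(new_order)}.forEach(function (i) {{ grid.appendChild(items[i]); }});\n"
        "})();\n"
    )


html = ('<main><div class="projects grid">'
        '<article class="card a"><img src="a.png"></article>'
        '<article class="card b"></article>'
        '<article class="card c"></article>'
        '</div><footer class="card z"></footer></main>')
collector = GridChildCollector("grid")
collector.feed(html)
collector.close()
snippet = reorder_snippet(".projects.grid", [2, 0, 1], len(collector.items))
print(snippet)
```

`appendChild` moves an existing node rather than copying it. Appending every child in the new order therefore leaves the grid in exactly that order, which is why the Python side first checks that the order is a full permutation.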

The small model demonstrated an "agentic" ability to handle errors during this process. For instance, when an initial API call failed due to a missing account ID, the model independently initiated a secondary call to retrieve the necessary identifier before retrying the original task. This level of autonomy reduces the cognitive load on the developer and accelerates the deployment cycle of front-end experiments.
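
The recovery pattern described here can be sketched as a wrapper around a tool-calling function. `call_tool`, `list_accounts`, and `MissingParameter` are hypothetical names. Note one design choice: the wrapper fills in the ID automatically only when exactly one account exists. Otherwise it re-raises the error so a human can choose.

```python
class MissingParameter(Exception):
    """Raised by a (hypothetical) tool when a required argument is absent."""

    def __init__(self, name: str):
        super().__init__(f"missing required parameter: {name}")
        self.name = name


def call_with_account(call_tool, tool: str, args: dict):
    """Call `tool`; if it fails for lack of an account ID, look the ID up and retry once."""
    try:
        return call_tool(tool, args)
    except MissingParameter as err:
        if err.name != "account_id":
            raise
        accounts = call_tool("list_accounts", {})  # the secondary lookup call
        if len(accounts) != 1:
            raise  # ambiguous or empty: let a human pick instead of guessing
        return call_tool(tool, {**args, "account_id": accounts[0]["id"]})
```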

Operational Risks and Security Considerations

Despite the efficiency gains, adopting agentic AI in production environments carries risk. Analysis of these workflows identifies two primary concerns: unrequested activations and inefficient API usage.

During multiple test runs, both large and small models occasionally exhibited "over-eager" behavior, such as activating an experiment without an explicit command from the user. In a production setting, an unvetted experiment going live can lead to broken user experiences or skewed data. Furthermore, the efficiency of the AI’s communication with the API can vary; less experienced users may inadvertently trigger a high volume of API calls, leading to unnecessary token consumption and potential rate-limiting issues.
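
A per-session call budget is one simple defense against runaway API usage. The class below is a hypothetical sketch, not a feature of Claude Code or the Convert MCP server. It counts tool calls and refuses further calls once the cap is reached.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a session tries to exceed its tool-call cap."""


class CallBudget:
    """Count tool calls in an agent session and refuse calls beyond a cap."""

    def __init__(self, max_calls: int):
        self.max_calls = max_calls
        self.used = 0

    def wrap(self, call_tool):
        """Return a version of `call_tool` that spends one unit per call."""
        def guarded(tool, args):
            if self.used >= self.max_calls:
                raise BudgetExceeded(
                    f"budget of {self.max_calls} calls exhausted before {tool!r}")
            self.used += 1
            return call_tool(tool, args)
        return guarded
```

A cap does not make individual calls cheaper. It does turn an unexpected loop of API calls into an explicit error the user sees, which also helps keep usage under provider rate limits.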

Industry experts emphasize that while the technology works for individual developers, it may not yet be ready for broad, uncurated team rollouts. The consensus is that guardrails are needed to verify every action the agent takes before it affects the live site. These could take the form of secondary AI auditors or structured workflow tools such as n8n.
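
A guardrail of this kind can be sketched as an approval gate placed in front of the tool-calling function. The `approve` callback stands in for whatever does the verifying: a human prompt, a secondary AI auditor, or an n8n workflow. The set of state-changing tool names is an assumption.

```python
# Hypothetical names of tools that change what site visitors see or what data is kept.
STATE_CHANGING = {"activate_experience", "archive_experience", "create_experience"}


def with_approval(call_tool, approve):
    """Route state-changing tool calls through `approve(tool, args) -> bool`
    before executing them; read-only calls pass straight through."""
    def gated(tool, args):
        if tool in STATE_CHANGING and not approve(tool, args):
            return {"status": "blocked", "tool": tool}  # nothing was executed
        return call_tool(tool, args)
    return gated
```

The gate returns a "blocked" result instead of raising an exception. This lets the agent read the refusal as ordinary feedback, so it does not crash or retry blindly.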

The Broader Impact on the CRO Industry

The integration of MCP and small models is poised to democratize the technical side of Conversion Rate Optimization. Historically, creating complex A/B tests required a combination of data analysis, UX design, and front-end development skills. By lowering the barrier to entry for the technical execution of these tests, organizations can iterate faster.

Furthermore, the move away from "black box" AI toward transparent, terminal-based agents allows for better debugging and version control. Developers can see the exact API calls being made and the JavaScript being generated. This supports a hybrid approach: the AI does the "heavy lifting" of drafting, and a human expert performs the final QA.
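
This transparency is easiest to preserve if every tool call is recorded. The sketch wraps a hypothetical `call_tool` function and appends one JSON line per call, including failures. The resulting log can be reviewed, or committed alongside the generated JavaScript.

```python
import json
import time


def logged(call_tool, write):
    """Wrap `call_tool` so every call, including failures, is appended as one
    JSON line via `write` (for example, a file's write method)."""
    def call(tool, args):
        record = {"ts": time.time(), "tool": tool, "args": args}
        try:
            result = call_tool(tool, args)
            record["ok"] = True
            return result
        except Exception as err:
            record["ok"] = False
            record["error"] = str(err)
            raise
        finally:
            write(json.dumps(record, sort_keys=True) + "\n")
    return call
```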

Future Outlook: Toward Structured AI Systems

The current experimentation with Claude Code and Convert MCP is viewed by many as a precursor to more robust AI-driven automation. The next logical step is the development of multi-agent systems in which different AI models handle specialized parts of the CRO lifecycle: one for analyzing user feedback, another for generating hypotheses, and a third for technical implementation.
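
A multi-agent pipeline like this can be sketched as a sequence of stages. Each stage reads a shared context and adds its own output to it. The stages in the example are stubs with illustrative names. In a real system, each would presumably call its own model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str                    # key under which this stage's output is stored
    run: Callable[[dict], dict]  # reads the shared context, returns its output


def run_pipeline(stages: list, context: dict) -> dict:
    """Run stages in order, adding each stage's output to the shared context."""
    for stage in stages:
        context = {**context, stage.name: stage.run(context)}
    return context


# Stub stages; in a real system each would call its own (possibly different) model.
stages = [
    Stage("analysis", lambda c: {"pain_point": "project grid is hard to scan"}),
    Stage("hypothesis", lambda c: {"text": "Reordering the grid will help: " + c["analysis"]["pain_point"]}),
    Stage("implementation", lambda c: {"variation": "reorder-grid", "based_on": c["hypothesis"]["text"]}),
]
result = run_pipeline(stages, {"feedback": ["I couldn't find the newest projects"]})
```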

As the Model Context Protocol gains wider adoption among SaaS providers, the ability to manage an entire marketing and development stack through a single unified interface becomes a tangible reality. For the time being, the focus remains on refining the reliability of small models and establishing the safety protocols necessary to manage these powerful autonomous agents in high-stakes business environments.

The transition to AI-assisted experimentation reflects a broader trend in software engineering: the move from manual tool manipulation to high-level intent-based orchestration. As costs continue to fall and model capabilities rise, the terminal may once again become the primary hub for digital growth and optimization.