After a comprehensive study on the accuracy of Artificial Intelligence (AI) in Pay-Per-Click (PPC) advertising, the natural next question was how AI performs in Search Engine Optimization (SEO). SEO remains a critical driver of website traffic, so the precision of AI-generated advice matters. This investigation examines how well popular AI tools answer complex SEO-related queries, a topic of growing importance as businesses increasingly turn to AI for strategic guidance.

The current digital ecosystem heavily favors organic search traffic, with Google overwhelmingly leading as a referral source for websites. This reliance underscores the necessity for accurate SEO strategies, leaving little room for error. In light of this, a rigorous experiment was undertaken to assess the reliability of AI tools when tasked with SEO-related questions. Five distinct AI platforms were subjected to the same set of 50 SEO queries, ranging from fundamental concepts to advanced strategic and technical aspects. The objective was to meticulously analyze their responses and identify areas of accuracy, inaccuracy, and ambiguity.

The Experiment: Hypothesizing AI’s SEO Prowess

The widespread adoption of AI for immediate information retrieval has transformed how individuals and businesses seek answers. While AI excels at providing straightforward information, its application to nuanced and evolving fields like SEO presents a unique challenge. Many users tend to accept AI-generated outputs at face value, a practice that becomes concerning when dealing with critical business strategies. This study’s central hypothesis was that Large Language Model (LLM) AI tools, while offering convenience, do not consistently deliver 100% accurate SEO data, strategies, or insights.

To validate this hypothesis, five leading AI chat tools—ChatGPT, Google Gemini, Perplexity, Meta AI, and Microsoft Copilot—were each presented with the identical 50 SEO questions. These questions spanned a spectrum of difficulty, from basic SEO principles to intricate technical SEO and strategic considerations. The inherent dynamism of SEO, where best practices and ranking factors are subject to constant change and interpretation, posed a significant challenge in developing a definitive scoring system. Unlike some other marketing channels, SEO often operates with a degree of opacity, with search engines like Google rarely confirming the exact weight of specific ranking factors.

To address this complexity, a scoring methodology was devised. Each correct answer received a full point, while answers deemed "iffy" or requiring further nuance were awarded half a point. This approach allowed for a quantifiable assessment of each tool’s accuracy and identified areas where their responses fell short of complete accuracy or helpfulness. The overarching goal was to ascertain the degree to which businesses could confidently rely on AI for their SEO efforts and to understand the potential pitfalls of using AI-generated information without critical evaluation.
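The scoring scheme described above can be sketched in a few lines of Python. This is only an illustration: the zero score for incorrect answers is an assumption (the article states the full and half points only), and the grade sheet is hypothetical rather than study data.

```python
# Point values per grade. "correct" and "iffy" follow the article's scheme;
# the zero for "incorrect" is an assumption, since the article does not state it.
POINTS = {"correct": 1.0, "iffy": 0.5, "incorrect": 0.0}


def accuracy(grades):
    """Return the share of available points earned, as a value from 0 to 1."""
    if not grades:
        raise ValueError("grades must not be empty")
    earned = sum(POINTS[g] for g in grades)
    return earned / len(grades)


# Hypothetical grade sheet for one tool across 50 questions (not study data).
example_grades = ["correct"] * 40 + ["iffy"] * 6 + ["incorrect"] * 4

score = accuracy(example_grades)
print(f"accuracy: {score:.0%}, inaccuracy: {1 - score:.0%}")
```

Under this scheme an "iffy" answer counts half toward accuracy and half toward inaccuracy, which is why a tool's inaccuracy rate need not equal its share of outright wrong answers.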

Key Findings: A 13% Inaccuracy Rate Across AI Responses

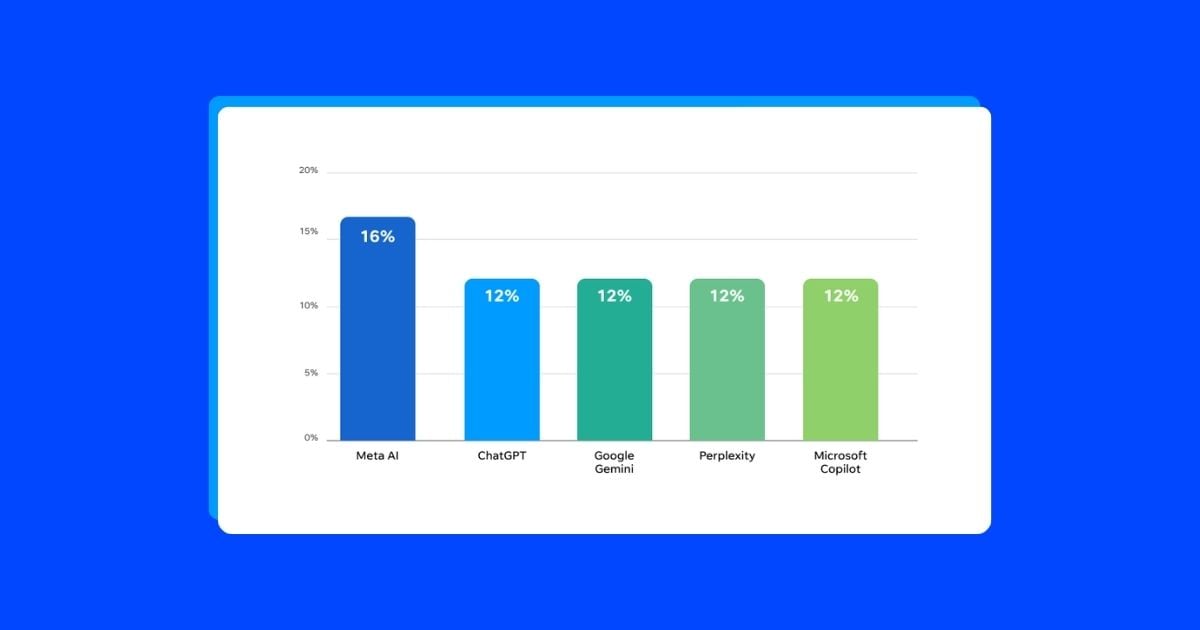

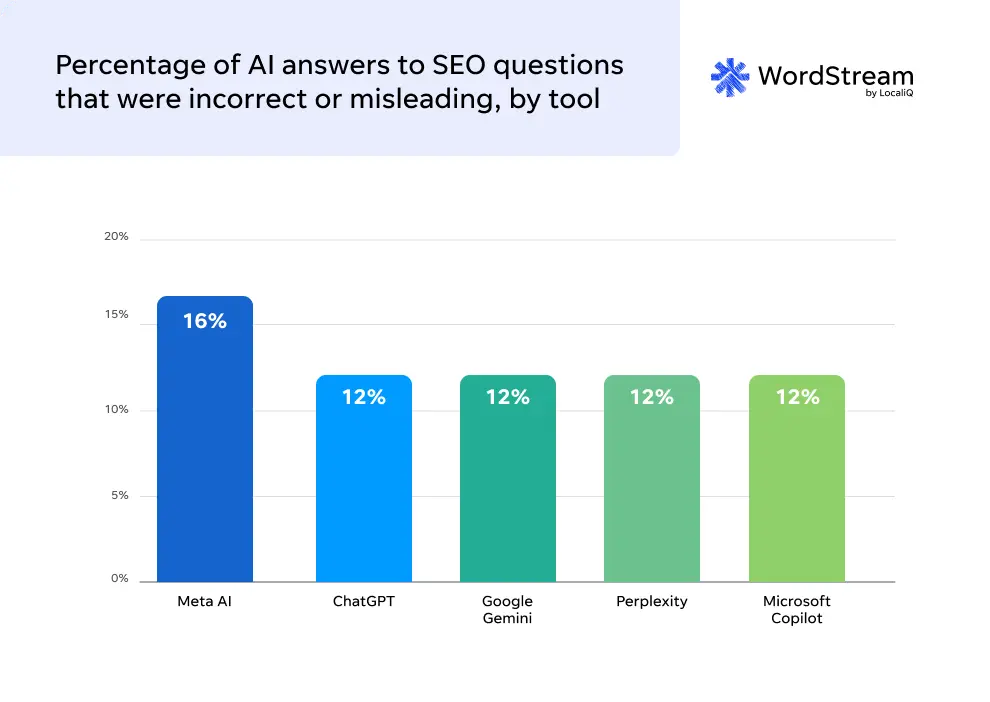

The comprehensive analysis revealed that approximately 13% of the 250 total AI-generated answers to SEO questions were either incorrect or misleading. The misleading, or "iffy," answers often lacked the depth, nuance, or contextual expansion required to effectively guide SEO strategy. Across the board, the AI tools achieved an average accuracy rate of roughly 87%, a score that, while respectable, still leaves a significant margin for error.

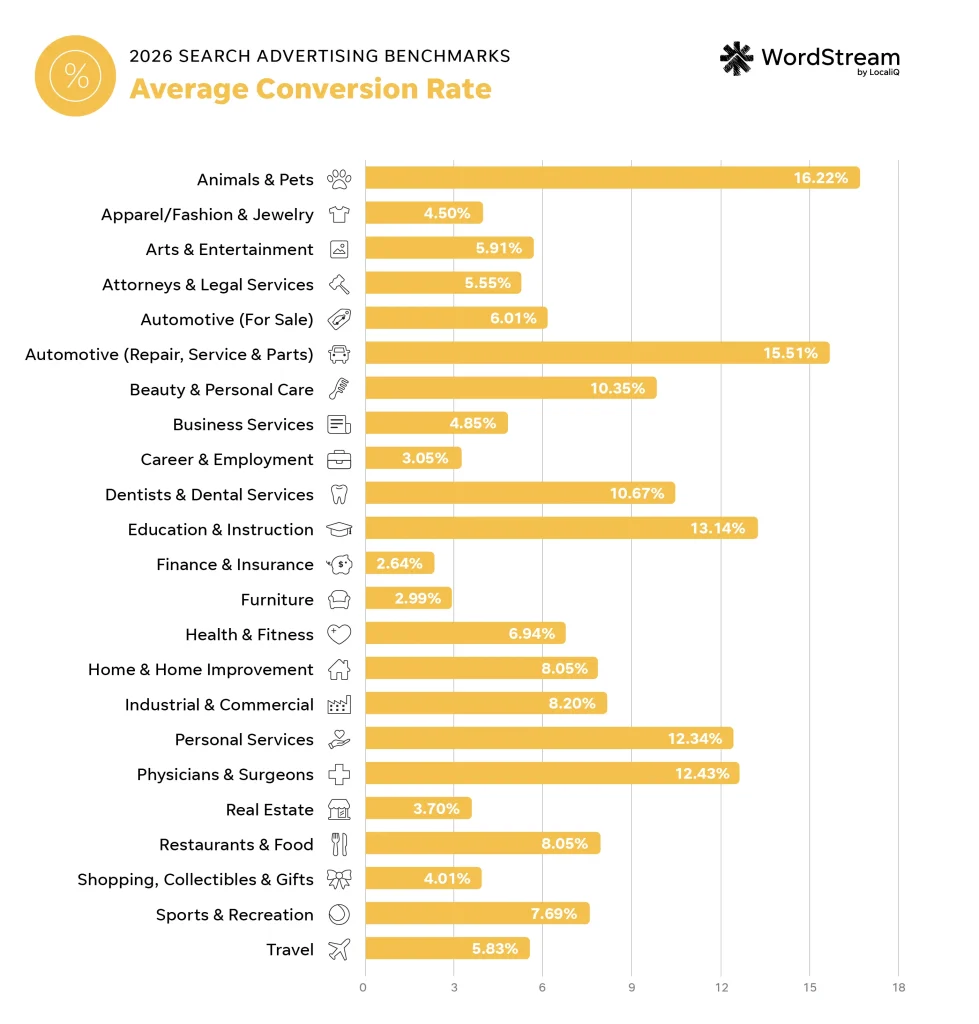

Accuracy Breakdown by AI Tool:

- Meta AI: This platform exhibited the lowest accuracy rate among the tested tools, with approximately 16% of its responses being incorrect or misleading. This places it at the bottom of the performance spectrum for SEO accuracy.

- ChatGPT: Demonstrated a strong performance, with a lower inaccuracy rate than Meta AI.

- Google Gemini: Showed a commendable level of accuracy, though it sometimes offered overly technical advice.

- Perplexity: Performed consistently well, providing generally accurate and useful information.

- Microsoft Copilot: While generally accurate, Copilot’s responses were sometimes overly simplistic, lacking the depth needed for complex SEO tasks.

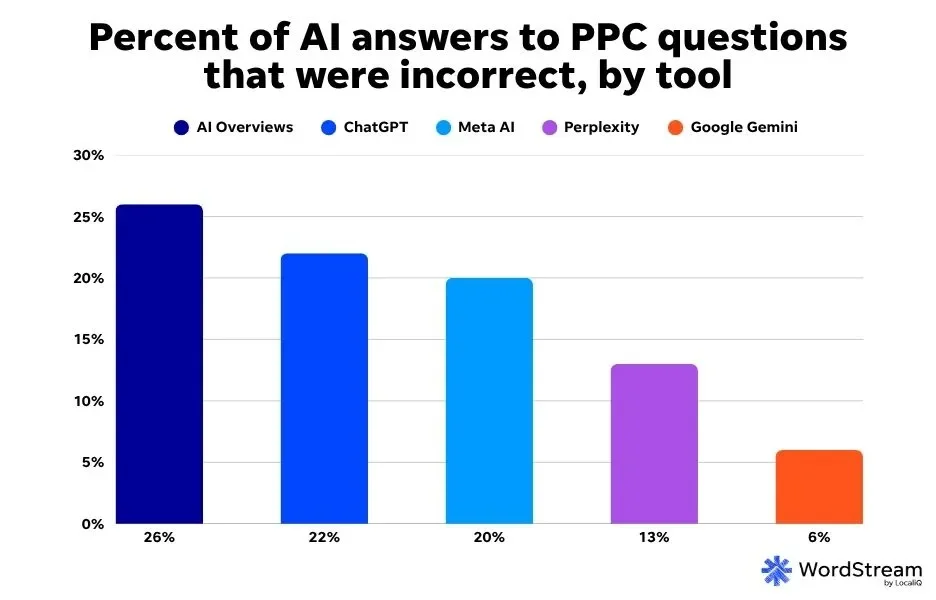

While these results suggest AI is a developing resource for SEO, the 13% inaccuracy rate highlights the critical need for human oversight and verification. A comparison with the previous PPC AI accuracy study indicates that AI tools generally answered SEO-related queries more accurately (13% inaccuracy) than PPC queries (20% inaccuracy). One possible explanation is that current AI models handle the more nuanced and evolving nature of SEO better than the more data-driven and direct nature of PPC.

Detailed Observations from the AI SEO Query Experiment

The extensive testing yielded several key observations about the capabilities and limitations of AI in the SEO domain:

1. Meta AI: The Least Accurate SEO Information Source

Meta AI emerged as the least accurate among the surveyed AI tools for SEO-related inquiries. With a 16% inaccuracy rate on the 50 questions, it consistently provided less reliable information compared to its counterparts. In contrast, the other four tools had a lower percentage of completely incorrect answers, with a smaller portion of their responses falling into the "iffy" or "misleading" categories. This performance disparity is significant, particularly for businesses that may rely on a single AI tool for their digital marketing intelligence.

2. Keyword Research: AI’s Significant Weakness

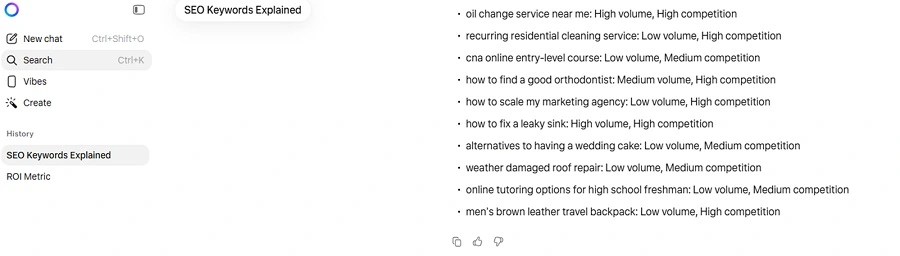

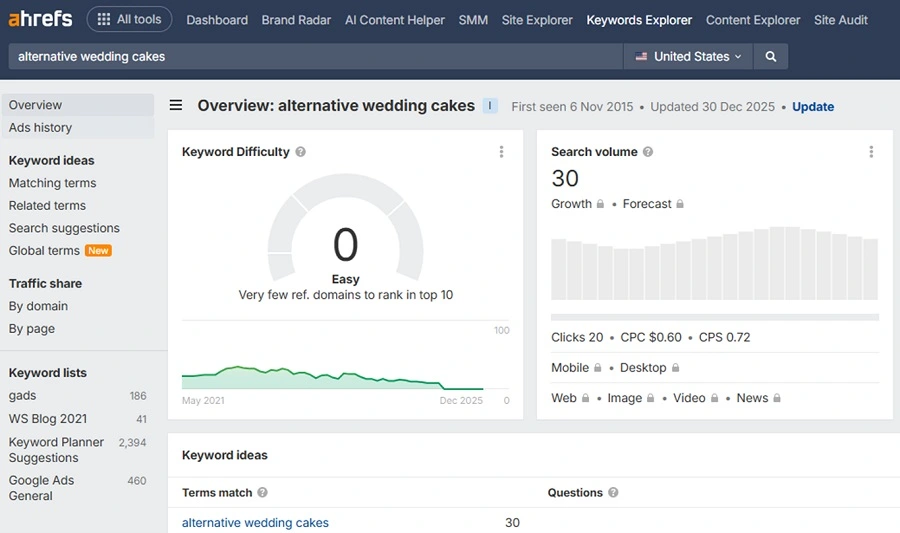

A consistent theme across the SEO community is the unsuitability of general AI tools for precise keyword research. This experiment corroborated this sentiment, with three of the five AI tools failing to provide clear and reliable keyword volume and competition metrics. While AI can be a valuable tool for brainstorming keyword ideas or expanding existing lists, it cannot replace specialized keyword research platforms. Tools like WordStream’s Free Keyword Tool, Google Ads Keyword Planner, Ahrefs, and Semrush offer the granular data and accuracy essential for effective keyword strategy.

The study highlighted stark discrepancies in keyword volume and competition estimates across AI tools for common search terms. For instance, the keyword "how to fix a leaky sink" received vastly different volume and competition estimates from ChatGPT, Gemini, and Perplexity, with their numbers diverging significantly from actual data. Similarly, "oil change service near me," a high-volume, high-competition keyword, was subject to a wide range of inaccurate estimates from the AI tools.

Meta AI and Microsoft Copilot were particularly unhelpful, providing only qualitative labels like "low," "medium," and "high" for volume and competition, rather than concrete numerical data. This lack of precision renders their keyword research insights largely unusable for strategic decision-making. The vast inconsistencies underscore that relying on AI for keyword research is not only unreliable but also potentially detrimental to SEO strategy development.

3. SEO Audits: Lack of Practical Guidance for SMBs

None of the AI tools provided practical, step-by-step guidance for conducting an SEO audit, particularly for small to medium-sized businesses (SMBs). While experienced SEO professionals may not require AI assistance for audits, the average marketer or small business owner seeking help often needs basic, actionable steps. Existing resources, such as WordStream’s SEO audit guide and LocaliQ’s SEO checklist, offer structured approaches. In contrast, the AI-generated audit lists from tools like Gemini and Meta AI, while not entirely incorrect, often lacked actionable first steps or presented information that was too general for a novice or intermediate user. This suggests that AI is not yet equipped to provide the tailored, practical insights needed for comprehensive SEO auditing.

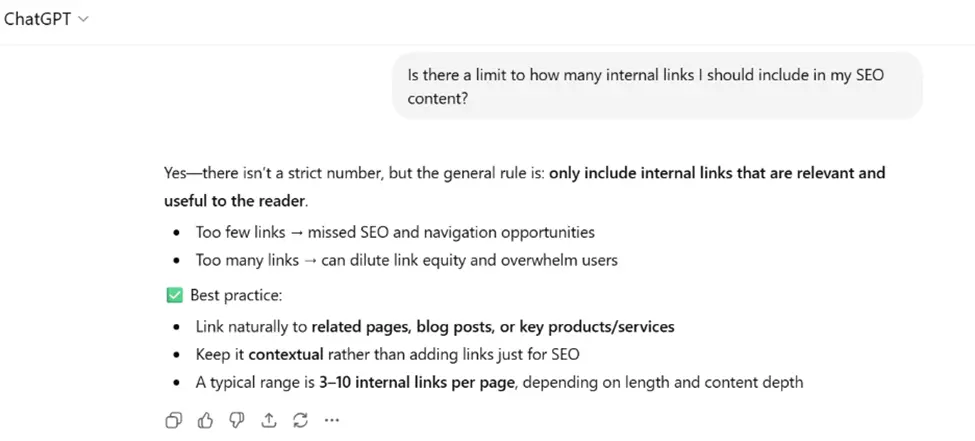

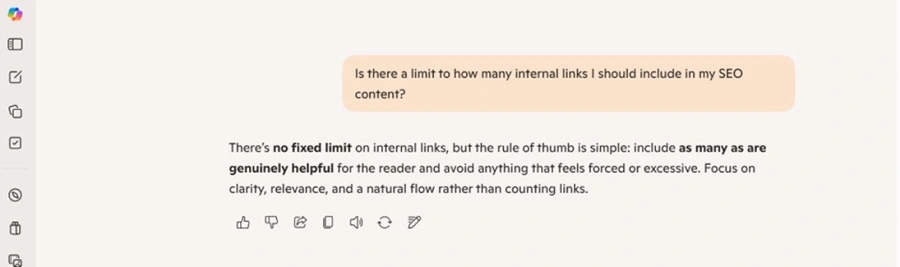

4. Internal Linking Best Practices: Conflicting Advice

The AI tools offered conflicting advice regarding internal linking best practices. While there’s no strict limit on the number of internal links per page, finding an optimal balance to avoid appearing spammy is crucial. ChatGPT suggested an ideal range of 3-10 internal links per page, a figure that many SEO experts would consider low. Other tools provided similar, yet varied, recommendations. Microsoft Copilot’s response was particularly vague. The divergence in advice highlights the subjectivity within SEO and the difficulty AI faces in providing universally applicable best practices for nuanced topics like internal linking.

5. Page Ranking Timelines: Inconsistent Estimates

Estimates for how long it takes a webpage to rank on search engines varied significantly among the AI tools. ChatGPT suggested a 1-6 month timeframe, whose lower bound is likely too optimistic. Gemini and Meta AI’s estimates of 6-12 months could be overly conservative for many scenarios. According to Heman Patel, Senior SEO Analyst at WordStream, a more realistic timeframe for most pages is 3-6 months. Copilot and Perplexity offered the most accurate answers in this instance. Realistic ranking timelines depend on numerous factors, including content type, SERP competition, and link acquisition rates, so the inconsistency across tools reinforces the need for expert human judgment.
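One rough way to compare the quoted ranges with the 3-6 month benchmark is to measure how much of each estimate falls inside it. The sketch below uses only the ranges reported above; the `coverage` metric is an illustrative assumption, not part of the study's scoring.

```python
# Ranking-timeline estimates in months, as reported in the article.
ESTIMATES = {
    "ChatGPT": (1, 6),
    "Gemini": (6, 12),
    "Meta AI": (6, 12),
}
# Benchmark attributed to the WordStream analyst quoted above.
BENCHMARK = (3, 6)


def overlap_months(a, b):
    """Length in months of the intersection of two ranges (0 if none)."""
    low = max(a[0], b[0])
    high = min(a[1], b[1])
    return max(0, high - low)


def coverage(estimate, benchmark=BENCHMARK):
    """Fraction of the estimate's span that falls inside the benchmark."""
    span = estimate[1] - estimate[0]
    return overlap_months(estimate, benchmark) / span if span else 0.0


for tool, estimate in ESTIMATES.items():
    print(f"{tool}: {coverage(estimate):.0%} of its range lies within the benchmark")
```

A crude measure like this only shows where the ranges disagree; it says nothing about which factors (content type, competition, link acquisition) drive the timeline for a given page.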

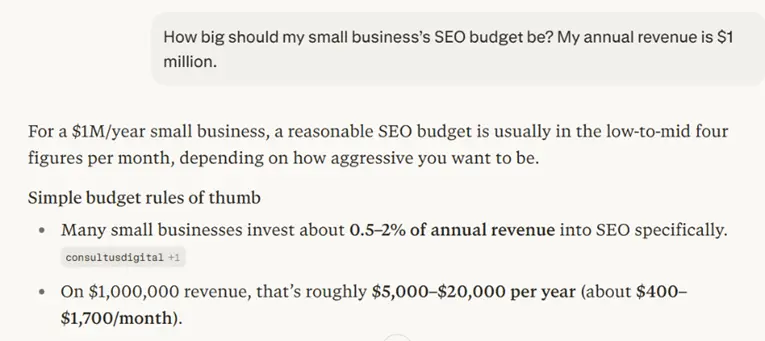

6. SEO Budget Suggestions: Inaccurate and Undifferentiated

Two of the five AI tools provided inaccurate SEO budget suggestions. When prompted with a scenario of a small business with $1 million in annual revenue, Meta AI and Microsoft Copilot offered suggestions that were either too high or failed to differentiate an SEO budget from an overall marketing budget. Industry benchmarks suggest that small businesses typically allocate 5-10% of their revenue to marketing, with a portion of that dedicated to SEO. For a business with $1 million in revenue, that means a total marketing budget of $50,000 to $100,000, of which roughly $20,000 per year could go to SEO, a figure in line with what ChatGPT, Gemini, and Perplexity provided. However, AI budget recommendations lack personalization: they should account for specific business goals, industry, and market conditions, and without that their practical utility is limited.
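The arithmetic behind these figures is simple enough to check by hand, and a short sketch makes it explicit. The function names are hypothetical; the 5-10% benchmark, the $1 million revenue, and the $20,000 SEO figure come from the paragraph above.

```python
def marketing_budget_range(revenue, low_pct=5, high_pct=10):
    """Return (low, high) marketing budget for a revenue and a percent range."""
    return revenue * low_pct / 100, revenue * high_pct / 100


def seo_share(seo_budget, marketing_budget):
    """Return the SEO budget as a fraction of the marketing budget."""
    return seo_budget / marketing_budget


revenue = 1_000_000
seo_budget = 20_000

low, high = marketing_budget_range(revenue)
print(f"marketing budget: ${low:,.0f} to ${high:,.0f}")
print(
    f"SEO share of marketing: {seo_share(seo_budget, high):.0%} "
    f"to {seo_share(seo_budget, low):.0%}"
)
```

Seen this way, a $20,000 SEO budget is a fraction of the marketing budget rather than the whole of it, which is the distinction Meta AI and Copilot reportedly missed.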

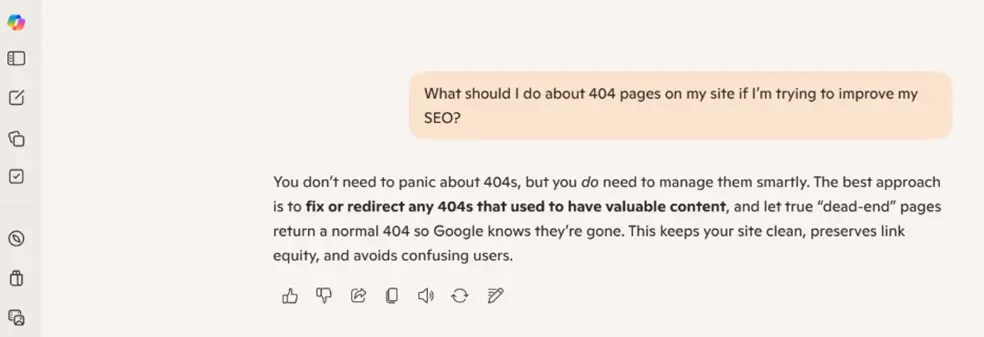

7. Microsoft Copilot: Overly Simplistic Responses

A recurring observation with Microsoft Copilot was the tendency towards overly simplistic answers. While simplicity can be beneficial for basic queries, SEO often involves complex nuances that require detailed explanations. For instance, when asked for ways to optimize a sitemap, Copilot provided a very basic list, lacking the actionable and technical depth offered by other tools like Gemini and Perplexity. Similarly, when discussing 404 pages, Copilot omitted the crucial recommendation of creating custom 404 pages, a suggestion provided by all other tools and essential for user experience. This indicates that Copilot may be best suited for introductory SEO concepts rather than in-depth strategy development.

8. Google Gemini: Excessively Technical Advice

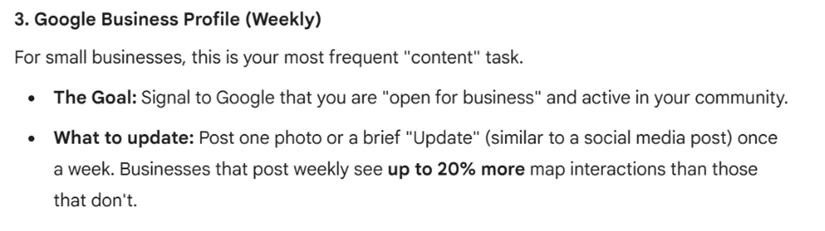

Conversely, Google Gemini frequently provided overly technical responses that might be overwhelming for the average SEO professional or SMB marketer. Its recommendations for top SEO metrics, for example, included terms like "INP" (Interaction to Next Paint), which, while relevant, might not be a primary KPI for many businesses compared to metrics like sessions or key events in Google Analytics 4. Furthermore, Gemini’s suggestion to update Google Business Profile weekly for organic ranking improvement, while a valid SEO practice, might be an unattainable task for SMBs with limited marketing bandwidth. This suggests Gemini’s insights are more geared towards seasoned SEO experts or agencies.

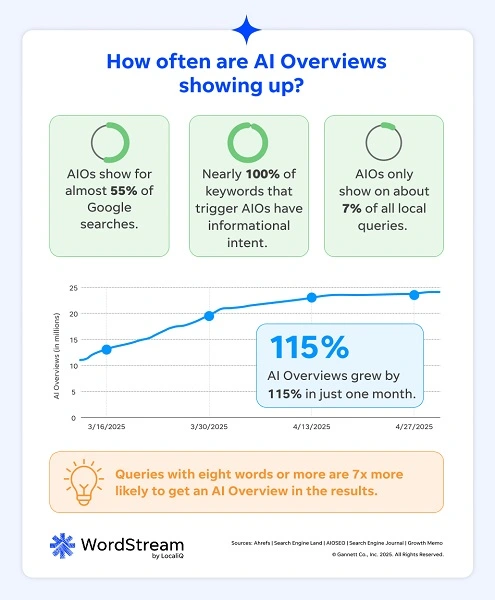

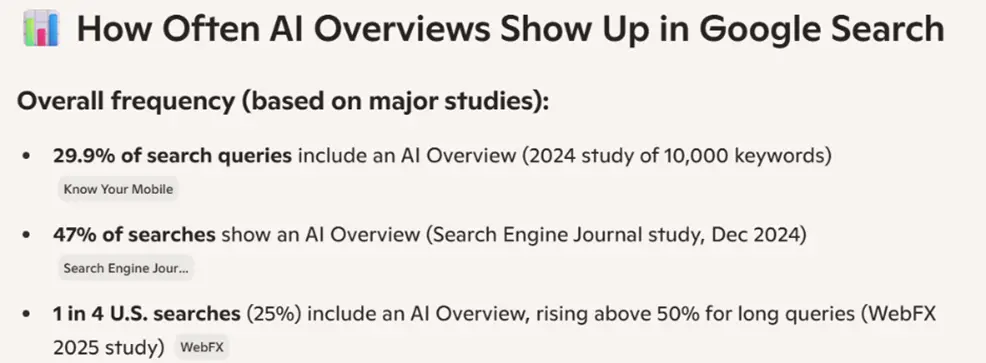

9. AI Overviews: Underestimation of Prevalence

All surveyed AI tools underestimated the current prevalence of Google AI Overviews on Search Engine Results Pages (SERPs). AI Overviews are now appearing in approximately 55% of Google searches, a figure that is rapidly increasing. The AI tools provided significantly lower estimates, indicating a lag in their data or understanding of this rapidly evolving search feature. This underestimation could lead to flawed strategic decisions regarding content optimization for AI-driven search results.

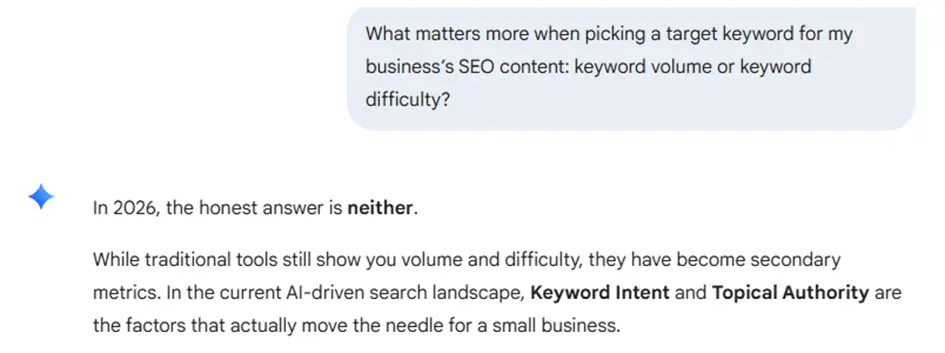

10. Keyword Prioritization: Gemini’s Misleading Advice

Google Gemini provided potentially misleading advice regarding keyword prioritization. When asked about the relative importance of keyword volume versus keyword difficulty, Gemini stated that in 2026, neither would be as important as "keyword intent and topical authority." While intent and topical authority are indeed critical, framing volume and difficulty as secondary implies they can be disregarded, which is problematic. This advice could lead businesses to overlook essential keyword research metrics, negatively impacting their SEO efforts.

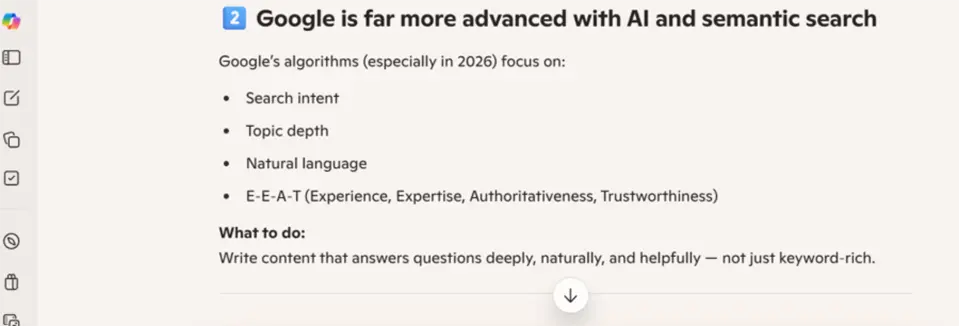

11. Microsoft Copilot: Self-Deprecating Remark

In an interesting turn, Microsoft Copilot made a self-deprecating remark about its parent company’s search engine, Bing. When asked about different SEO strategies for Google and Bing, Copilot acknowledged that "Google is far more advanced with AI and semantic search" than Bing. While this might be factually accurate, it’s an unusual admission from an AI tool designed to promote its ecosystem. This observation highlights the potential for AI to offer candid, albeit sometimes inconvenient, assessments.

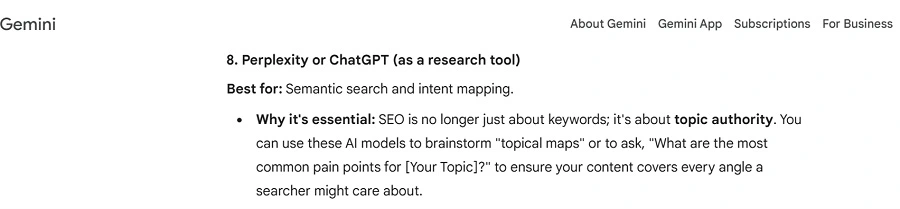

12. Google Gemini: Recommending Competitor AI Tools

Notably, Google Gemini was the only tool that recommended using competing AI platforms for SEO assistance. When asked to recommend SEO tools, Gemini named Perplexity and ChatGPT in addition to AI tools in general. This is somewhat surprising, since a more brand-centric AI might be expected to promote its own services. It suggests that some AI tools are designed to provide broader, more objective recommendations, even if it means pointing users to rivals.

13. SEO vs. PPC Accuracy: A Comparative Edge for SEO

Comparing this study’s findings with a prior investigation into AI accuracy for PPC revealed that AI tools generally performed better for SEO. While 13% of AI answers on SEO were inaccurate, the rate rose to approximately 20% for PPC-related questions. This suggests that current AI models may possess a more nuanced understanding of the dynamic SEO landscape than the more direct, data-intensive realm of PPC. However, the inherent subjectivity and complexity of SEO mean that even these "more accurate" answers require critical evaluation and expert verification.

Navigating AI in SEO: Best Practices for Users

Given the findings, a strategic approach to integrating AI into SEO workflows is essential. The following recommendations can help users maximize the benefits while mitigating the risks:

1. Master Your Prompts: The Foundation of Quality Output

The quality of AI-generated content is directly proportional to the quality of the prompts used. When querying AI for SEO-related information, it is crucial to provide detailed context, specify the target audience (e.g., SMB, enterprise), outline specific business goals, and clearly articulate the desired outcome. A comprehensive prompt checklist should include details about the business, industry, target keywords, current challenges, and the specific type of information sought (e.g., strategic advice, technical explanation, data analysis).
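The checklist above can be turned into a small reusable template. The sketch below is one possible shape, not a prescribed format: the `SeoPromptContext` class, its field names, and the example business are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SeoPromptContext:
    """Checklist items from the article, held as structured fields."""
    business: str
    industry: str
    audience: str  # e.g. "SMB" or "enterprise"
    goals: list[str]
    target_keywords: list[str] = field(default_factory=list)
    challenges: list[str] = field(default_factory=list)
    output_type: str = "strategic advice"


def build_prompt(ctx: SeoPromptContext, question: str) -> str:
    """Assemble a context-rich prompt, skipping optional fields left empty."""
    lines = [
        f"Business: {ctx.business}",
        f"Industry: {ctx.industry}",
        f"Audience: {ctx.audience}",
        "Goals: " + "; ".join(ctx.goals),
    ]
    if ctx.target_keywords:
        lines.append("Target keywords: " + ", ".join(ctx.target_keywords))
    if ctx.challenges:
        lines.append("Current challenges: " + "; ".join(ctx.challenges))
    lines.append(f"Type of answer wanted: {ctx.output_type}")
    lines.append(f"Question: {question}")
    return "\n".join(lines)


# Hypothetical example business, for illustration only.
ctx = SeoPromptContext(
    business="Family-owned plumbing company",
    industry="Home services",
    audience="SMB",
    goals=["Increase organic leads from local search"],
    target_keywords=["how to fix a leaky sink"],
)
prompt = build_prompt(ctx, "How should we prioritize our on-page SEO work?")
print(prompt)
```

Keeping the context in a structure like this makes it easy to send the same detailed prompt to several AI tools and compare their answers.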

2. Cross-Reference and Verify AI Responses

Given the observed inconsistencies and inaccuracies, it is imperative to cross-check all AI-generated SEO information with reliable sources. This includes consulting established SEO publications (e.g., Search Engine Journal, Moz), reputable SEO forums, and industry-specific data from specialized tools. Never rely solely on AI for critical strategic decisions. Treat AI outputs as a starting point for further research and validation.

3. AI as a Complement, Not a Replacement, for Expertise

AI should be viewed as a supplementary tool within a broader SEO strategy, not as a substitute for human expertise. While AI can assist with brainstorming, idea generation, and answering basic queries, it falls short in providing nuanced strategic insights or handling complex, context-dependent tasks. Businesses should leverage AI for initial exploration and then rely on experienced SEO professionals or specialized tools for in-depth analysis, strategy development, and execution. The choice of AI tool should also align with the user’s current SEO proficiency—simpler tools for beginners and more advanced options for experts.

The Verdict: Should You Use AI for SEO?

The reliability of AI for SEO is a nuanced question. While the AI tools answered SEO questions more accurately than they answered PPC questions in the earlier study, they are not infallible. Approximately 13% of the analyzed responses contained errors or misleading information. Therefore, users should approach AI-generated SEO advice with a healthy dose of skepticism.

AI can be a valuable resource for those new to SEO, seeking initial ideas, or looking to improve existing strategies. However, its limitations in providing nuanced strategic insights mean that it should be used as one component of a comprehensive SEO approach. Integrating AI with other proven methods, such as keyword research tools, competitive analysis, and consultation with marketing partners, is crucial for building a robust and effective SEO strategy. The journey to mastering SEO involves a blend of cutting-edge technology and seasoned human expertise, with AI serving as a powerful, albeit imperfect, assistant.