The rapid evolution of artificial intelligence has led to a paradoxical situation where the tools used to create intelligence have become increasingly inscrutable to the human mind. As Large Language Models (LLMs) transition from simple transformer blocks to hyper-complex Mixture-of-Experts (MoE) systems and multi-modal pipelines, the task of understanding a model’s internal structure has become a significant hurdle for developers and researchers alike. While platforms like Hugging Face have democratized access to these models, the sheer volume of configuration files, parameter weights, and layer definitions often leaves developers mentally reconstructing architectures, a process that is notoriously error-prone and time-consuming. In response to this challenge, a new utility known as HFViewer (Hugging Face Viewer) has emerged, offering a streamlined, visual approach to model inspection that requires nothing more than a simple URL modification.

The Challenge of Architectural Complexity in Modern AI

In the early days of deep learning, visualizing a neural network was relatively straightforward. A standard Convolutional Neural Network (CNN) or a basic Recurrent Neural Network (RNN) consisted of a manageable number of layers that could be mapped on a single whiteboard. However, the advent of the Transformer architecture in 2017, followed by the scaling laws that led to models with hundreds of billions of parameters, changed the landscape entirely.

Modern models, such as DeepSeek-V4-Pro or Meta’s Llama-3 series, are not merely deeper; they are architecturally more sophisticated. They utilize complex routing logic, vision encoders, projection layers, and specialized attention mechanisms. For a developer downloading a model from a Hugging Face repository, the "config.json" file is often the only window into how these components interact. Reading raw JSON and mentally mapping its fields onto the flow of tensors imposes a significant cognitive load. This "black box" nature of AI architectures hinders debugging, slows down the onboarding of new researchers, and complicates the process of model optimization.

HFViewer: A Seamless Visual Interface

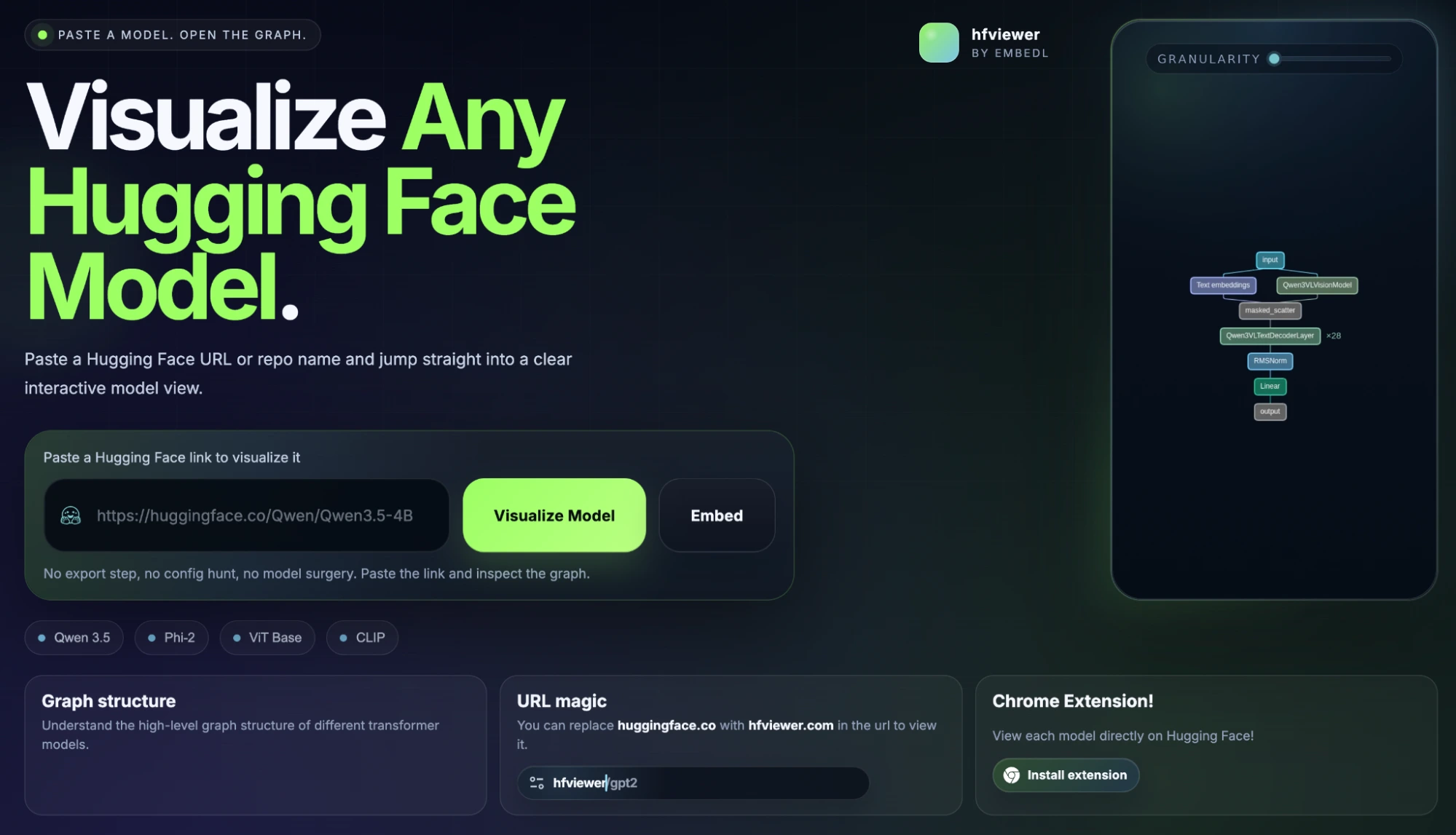

HFViewer addresses this friction by providing an automated, interactive visualization layer on top of Hugging Face’s existing infrastructure. The tool operates on a principle of radical simplicity, utilizing a "URL trick" that allows users to pivot from a standard repository view to a graphical architecture map in seconds.

By replacing the domain huggingface.co with hfviewer.com in any model’s URL, the user is redirected to a platform that parses the model’s configuration files and renders them as an interactive graph. For example, the URL for the DeepSeek-V4-Pro model, originally https://huggingface.co/deepseek-ai/DeepSeek-V4-Pro, becomes https://hfviewer.com/deepseek-ai/DeepSeek-V4-Pro. This instant conversion eliminates the need for manual setup, local environment configuration, or the installation of heavy visualization libraries like TensorBoard for initial inspections.
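The domain swap described above is easy to script. The following is a minimal sketch, assuming the only change needed is the host name; the function name `to_viewer_url` is illustrative and not part of any HFViewer tooling.

```python
from urllib.parse import urlsplit, urlunsplit

HF_HOST = "huggingface.co"
VIEWER_HOST = "hfviewer.com"  # domain named in the article


def to_viewer_url(url: str) -> str:
    """Swap the Hugging Face host for the viewer host, leaving the path untouched."""
    parts = urlsplit(url)
    if parts.netloc.lower() != HF_HOST:
        raise ValueError(f"not a {HF_HOST} URL: {url!r}")
    return urlunsplit(parts._replace(netloc=VIEWER_HOST))


print(to_viewer_url("https://huggingface.co/deepseek-ai/DeepSeek-V4-Pro"))
```

Parsing the URL rather than doing a plain string replacement avoids rewriting a path or query string that happens to contain the text "huggingface.co".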

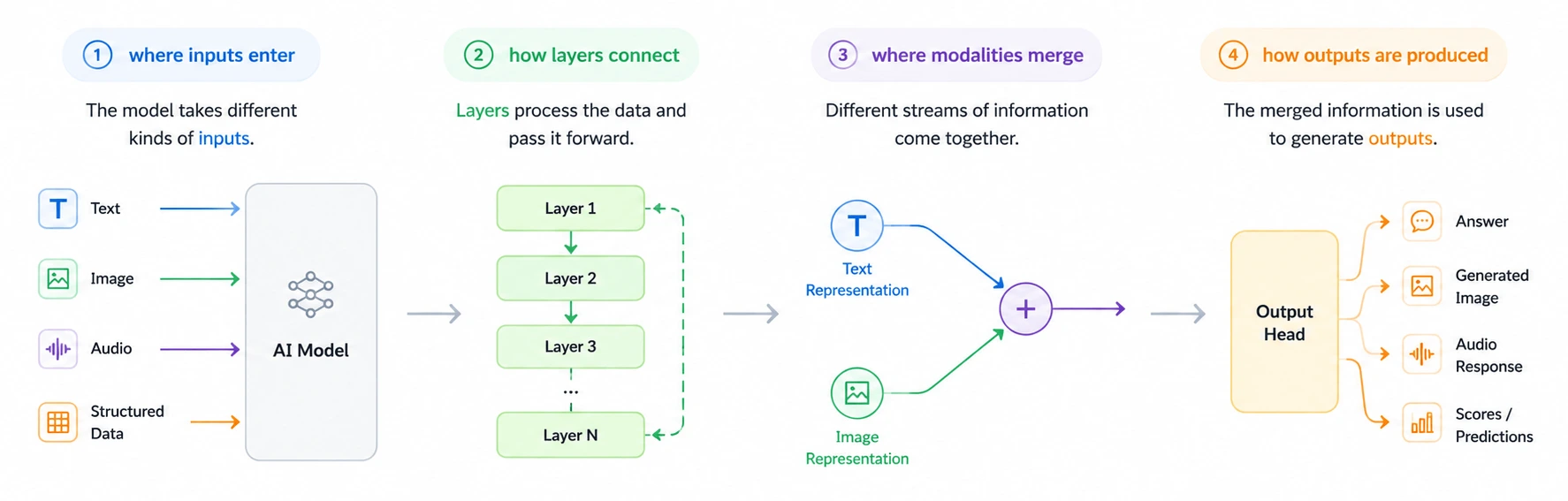

The resulting interface allows users to zoom into specific layers, inspect the dimensionality of attention heads, and trace the path of data through various sub-modules. This is particularly valuable for models like DeepSeek-V4, which employ Mixture-of-Experts (MoE) architectures where only a fraction of parameters are active for any given input. Visualizing the routing logic and the distribution of "experts" provides a level of clarity that raw text files cannot achieve.
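To make the idea of "only a fraction of parameters are active" concrete, here is a small sketch of generic top-k expert routing. It is an assumption-laden illustration, not the routing used by DeepSeek-V4 or any specific model: the router scores are made up, and real implementations operate on tensors and usually add load-balancing terms.

```python
import math


def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    # Pick the k experts with the largest router scores.
    chosen = sorted(
        range(len(router_logits)),
        key=lambda i: router_logits[i],
        reverse=True,
    )[:k]
    # Softmax over only the chosen experts so their weights sum to 1.
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


# Made-up router scores for one token across 8 hypothetical experts.
logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.9]
routes = top_k_route(logits, k=2)
print(routes)
print(f"active experts: {len(routes)} of {len(logits)}")
```

With k=2 and 8 experts, only a quarter of the expert blocks process this token, which is the sparsity a graph view of the router is meant to surface.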

Technical Integration and Developer Workflow

Recognizing that modern AI development occurs across various environments, HFViewer has been designed to integrate into existing workflows via terminal commands and browser extensions. Offering more than one entry point ensures that whether a developer is browsing for new models or working within a secure server environment, the visualization tool remains accessible.

For developers operating within a command-line interface (CLI), HFViewer can be invoked using standard system commands. This allows for the rapid opening of model architectures directly from a terminal session:

- macOS: open https://hfviewer.com/[model-path]

- Linux: xdg-open https://hfviewer.com/[model-path]

- Windows (PowerShell): start https://hfviewer.com/[model-path]
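The three platform commands above can be wrapped in one helper for use in scripts. This is a sketch under stated assumptions: `open_command` is a hypothetical name, and on Windows the command is routed through `cmd` because `start` is a shell built-in rather than a standalone executable.

```python
import platform
from typing import List, Optional


def open_command(url: str, system: Optional[str] = None) -> List[str]:
    """Return the argv that opens `url` in the default browser on the given OS."""
    system = system or platform.system()  # "Darwin", "Linux", or "Windows"
    if system == "Darwin":
        return ["open", url]
    if system == "Linux":
        return ["xdg-open", url]
    if system == "Windows":
        # `start` is a shell built-in, so it is run through cmd; the empty
        # string fills the window-title argument that `start` expects first.
        return ["cmd", "/c", "start", "", url]
    raise ValueError(f"unsupported platform: {system}")


url = "https://hfviewer.com/deepseek-ai/DeepSeek-V4-Pro"
for name in ("Darwin", "Linux", "Windows"):
    print(name, open_command(url, name))
```

The returned list can be passed to `subprocess.run`. Where no shell command is needed, Python's standard `webbrowser.open(url)` does the same job portably.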

Furthermore, the "Hugging Face Viewer" browser extension streamlines the process for those who spend significant time on the Hugging Face Hub. The extension adds a dedicated button to the repository interface, making architecture visualization a native part of the browsing experience. This integration is vital for competitive analysis and architectural auditing, where a researcher might need to compare the structural differences between several competing models in quick succession.

A Timeline of Model Visualization Tools

The emergence of HFViewer represents the latest step in a long chronology of tools designed to make neural networks more transparent.

- The Manual Era (Pre-2015): Researchers often relied on manual diagrams or basic scripts using Matplotlib to visualize small-scale networks.

- The TensorBoard Era (2015-2018): With the rise of TensorFlow, TensorBoard became the industry standard, offering a way to visualize computational graphs during the training process. However, it required local setup and was often tied to specific training logs.

- The Netron Era (2017-Present): Netron emerged as a popular desktop application for visualizing saved model files (like .onnx or .pb). While powerful, it requires the user to have the model weights downloaded locally, which is increasingly difficult as model sizes grow into the hundreds of gigabytes.

- The Web-Native Era (2024-Present): Tools like HFViewer represent a shift toward "zero-download" visualization. By leveraging the metadata already hosted on the cloud, these tools provide architectural insights without requiring the user to download a single parameter weight.
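The "zero-download" idea in the last item can be illustrated by fetching only a repository's config.json rather than its weights. The sketch below uses the Hugging Face Hub's `resolve` URL pattern; it is not a description of how HFViewer itself works, and the helper names are illustrative.

```python
import json
from urllib.request import urlopen


def config_url(repo_id: str, revision: str = "main") -> str:
    """Build the direct URL of a repository's config.json on the Hub."""
    return f"https://huggingface.co/{repo_id}/resolve/{revision}/config.json"


def fetch_config(repo_id: str, revision: str = "main") -> dict:
    """Download and parse config.json only; no weight files are touched.

    Needs network access. Gated or private repositories will fail here
    because this sketch sends no authentication token.
    """
    with urlopen(config_url(repo_id, revision), timeout=30) as response:
        return json.load(response)


print(config_url("deepseek-ai/DeepSeek-V4-Pro"))
```

A configuration file is typically a few kilobytes, so this kind of request stays cheap even when the weights behind it run to hundreds of gigabytes.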

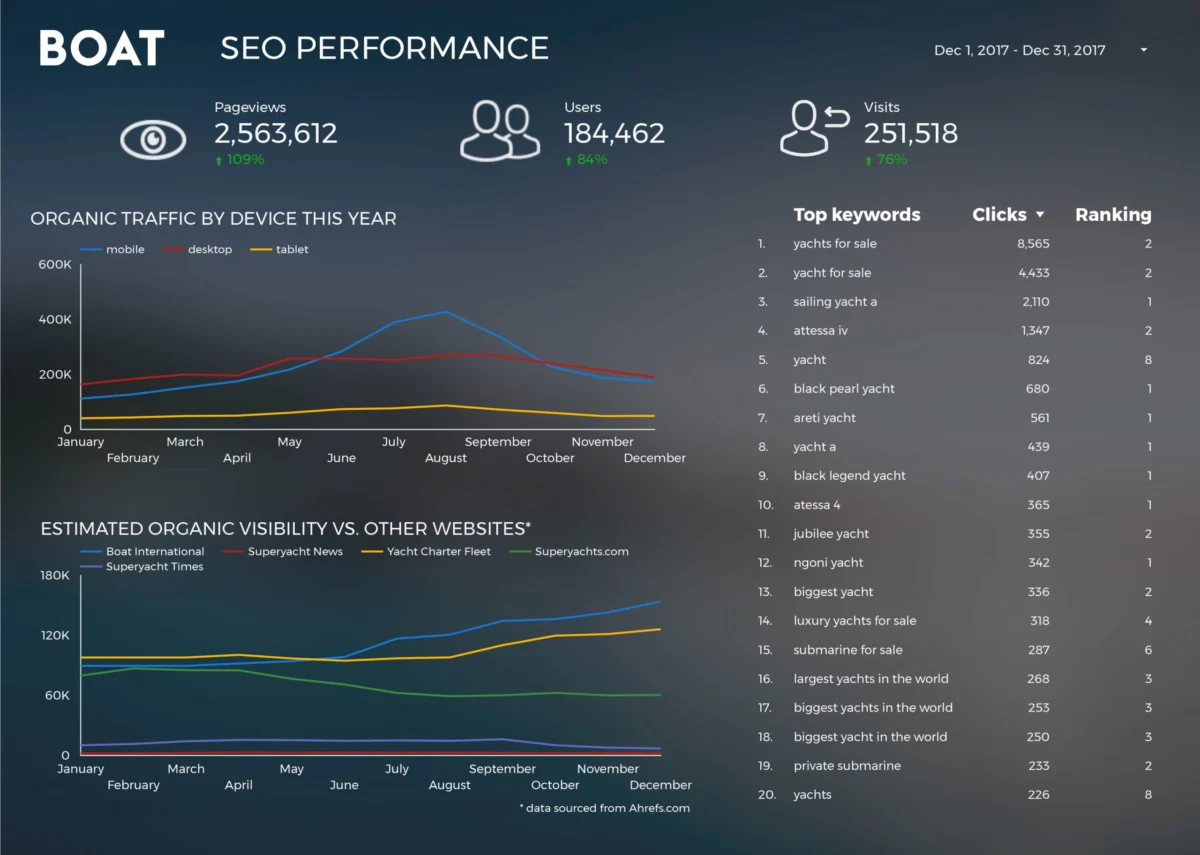

Supporting Data: The Scale of the Problem

The need for such tools is underscored by the explosive growth of the Hugging Face ecosystem. As of 2024, the platform hosts over 1 million models, ranging from niche fine-tunes to foundational giants. The complexity of these models is also increasing. In 2020, a "large" model might have had 24 to 48 layers. Today, state-of-the-art models frequently exceed 80 layers, with some experimental architectures exploring hundreds of modular components.

A survey of AI developers indicates that "understanding model architecture" is cited as one of the top three challenges when implementing open-source models. Without visualization, verifying the compatibility of a model with specific hardware (such as checking whether the attention mechanism supports FlashAttention or determining the KV cache requirements) can take hours of manual documentation review. HFViewer reduces this verification time to minutes by making the structural requirements visually obvious.
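As an example of the kind of check mentioned above, the KV cache size can be estimated directly from a handful of configuration fields. This sketch assumes standard multi-head or grouped-query attention and the field names used by Llama-style configs; the example values are illustrative, and architectures that compress or share the cache differently would need a different formula.

```python
def kv_cache_bytes(config, seq_len, batch_size=1, bytes_per_value=2):
    """Estimate KV cache size in bytes (bytes_per_value=2 means fp16/bf16)."""
    layers = config["num_hidden_layers"]
    heads = config["num_attention_heads"]
    # Grouped-query attention stores fewer key/value heads than query heads.
    kv_heads = config.get("num_key_value_heads", heads)
    head_dim = config.get("head_dim", config["hidden_size"] // heads)
    # The leading factor of 2 covers one key tensor and one value tensor per layer.
    return 2 * layers * kv_heads * head_dim * seq_len * batch_size * bytes_per_value


# Illustrative Llama-style values, not read from any real repository.
example = {
    "hidden_size": 4096,
    "num_hidden_layers": 32,
    "num_attention_heads": 32,
    "num_key_value_heads": 8,
}
size = kv_cache_bytes(example, seq_len=8192)
print(f"{size / 2**30:.2f} GiB")  # prints 1.00 GiB
```

For these values the estimate works out to exactly 2^30 bytes per sequence, which shows how quickly long contexts and large batches come to dominate memory.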

Industry Implications and Expert Perspectives

The democratization of AI architecture visualization has broader implications for the industry, particularly in the realms of education, safety, and optimization.

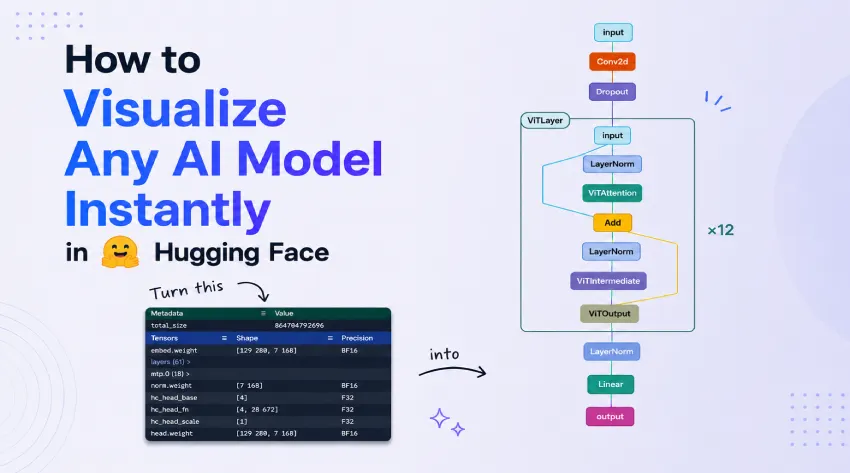

Educational Value: For students and junior engineers, the jump from theoretical transformer papers to production-grade code is steep. Visual tools bridge this gap, allowing learners to see how "Multi-Head Attention" or "Layer Normalization" is actually implemented in a model like Vision Transformer (ViT) or GPT-NeoX.

AI Safety and Interpretability: Transparency is a cornerstone of AI safety. Being able to visualize the "routing" in MoE models or the "cross-attention" in multi-modal models allows researchers to better understand how information flows through a system. While visualization is not a substitute for rigorous mathematical interpretability, it serves as a necessary starting point for identifying potential structural anomalies.

Optimization and Efficiency: As developers move toward "small language models" (SLMs) and edge deployment, understanding the exact layout of a model is crucial for quantization and pruning. A visual map allows engineers to identify "heavy" layers that may be candidates for optimization, ensuring that models can run efficiently on constrained hardware like mobile phones or embedded devices.
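The notion of "heavy" layers can be approximated with simple arithmetic on the configuration. The sketch below assumes a Llama-style decoder block (bias-free projections, grouped-query attention, and a gated MLP with three weight matrices); other architectures will differ, and the values are illustrative.

```python
def layer_parameter_counts(config):
    """Approximate weight counts for one decoder block, ignoring norms and biases."""
    h = config["hidden_size"]
    heads = config["num_attention_heads"]
    kv_heads = config.get("num_key_value_heads", heads)
    kv_dim = kv_heads * (h // heads)
    # Query and output projections are h x h; key and value are h x kv_dim.
    attention = 2 * h * h + 2 * h * kv_dim
    # Gated MLP: gate, up, and down projections.
    mlp = 3 * h * config["intermediate_size"]
    return {"attention": attention, "mlp": mlp}


# Illustrative Llama-style values, not read from any real repository.
example = {
    "hidden_size": 4096,
    "intermediate_size": 14336,
    "num_attention_heads": 32,
    "num_key_value_heads": 8,
}
counts = layer_parameter_counts(example)
total = sum(counts.values())
for name, n in counts.items():
    print(f"{name:9s} {n:>12,d}  ({n / total:.0%} of block)")
```

Under these assumptions the MLP holds several times more weights than attention in each block, which is why feed-forward layers are a common first target for quantization and pruning.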

The Future of Architectural Transparency

As AI models continue to move toward agentic workflows and increasingly modular designs, the role of visualization will only grow. We are likely to see a shift from static graph rendering to dynamic, real-time visualization that shows how specific inputs activate different parts of a model’s architecture.

HFViewer is a precursor to this future, proving that the complexity of modern AI does not have to be a barrier to entry. By making architectural exploration as simple as changing a URL, the tool empowers a broader range of developers to engage with state-of-the-art AI. It acknowledges a fundamental truth in technical communication: when dealing with billions of data points and thousands of logical connections, a single, well-rendered image is often worth more than a million lines of configuration code.

In conclusion, the transition of AI from a niche academic pursuit to a foundational layer of global technology requires a corresponding transition in how we inspect and understand our tools. HFViewer represents a significant step toward that transparency, offering a lightweight, accessible, and powerful solution to the growing problem of architectural opacity. As the AI community continues to push the boundaries of what is possible, tools that simplify the "how" of AI will be just as important as the models that define the "what."