For years, video sat in a search paradox: a visual medium largely invisible to the text-centric algorithms that governed discoverability. Titles, descriptions, and basic tags offered a superficial glimpse, but the rich, nuanced information inside an eight-minute, carefully scripted narrative remained a "black box," inaccessible to the core mechanics of search. As a result, despite video’s growing dominance in content consumption, its potential for organic reach and information retrieval was severely limited. That is now changing, driven by advances in artificial intelligence that are dissolving the barriers between visual and textual content. AI-powered video indexing, which combines large language models (LLMs), computer vision, and automatic speech recognition (ASR), can now analyze video with a depth previously reserved for written articles. Search engines and recommendation systems can parse spoken dialogue, on-screen captions, and the text displayed on slides, reshaping how video is discovered, consumed, and valued.

This profound shift marks the advent of what many industry experts are calling "Video SEO 2.0," elevating video to a fully discoverable, indexable format capable of ranking and surfacing precise answers with the efficacy of a meticulously optimized blog post. For content creators, marketers, and brands, this evolution is not merely an incremental update but a strategic imperative, demanding a radical rethinking of content production and distribution. The new paradigm necessitates a comprehensive "video retrievability" strategy, ensuring that every valuable clip, every insightful explanation, and every problem-solving demonstration is readily discoverable precisely when users are actively searching for solutions relevant to a product or service.

The Evolution of Search: From Text Dominance to Multimodal Understanding

Historically, search engines were designed to index and retrieve information primarily from text. Their algorithms excelled at analyzing keywords, sentence structure, and contextual relevance within written documents. Video content, despite its undeniable engagement power, presented a significant challenge. Early attempts at video SEO were rudimentary, relying heavily on metadata provided by creators – titles, descriptions, and tags. While these elements offered some contextual clues, they were often insufficient to capture the depth and breadth of information contained within a video. A user searching for a specific technical explanation at the 3:42 mark of a tutorial video would rarely find it through traditional keyword matching unless the creator explicitly highlighted that timestamp and topic in the description.

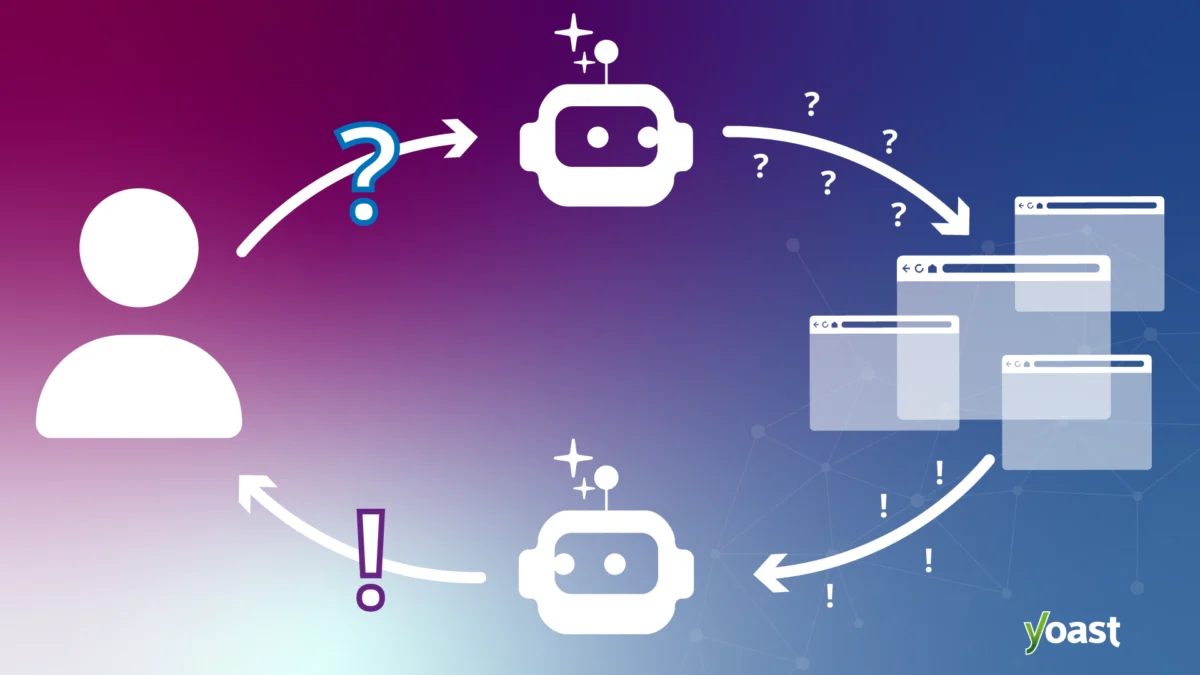

This limitation persisted even as video consumption exploded. Cisco’s Visual Networking Index forecast that video would account for 82% of all consumer internet traffic by 2021. YouTube is often described as the second-largest search engine globally, albeit one where discoverability largely relied on creator-supplied metadata and viewership signals. The rise of short-form video on platforms like TikTok further amplified the demand for efficient content indexing, demonstrating users’ desire for instant, highly relevant visual information.

The turning point has been the rapid maturation of AI technologies. Large Language Models (LLMs), such as those powering ChatGPT and Google’s AI Overviews, have become far better at understanding context, nuance, and intent in natural language. Concurrently, advances in computer vision allow AI to "see" and interpret visual elements within videos, identifying objects, on-screen text, and even actions. Automatic Speech Recognition (ASR) now approaches human-level accuracy on clear audio and has improved markedly in noisy or otherwise challenging conditions. When these three technologies converge, they can deconstruct a video into its constituent parts (audio, visual, and textual) and derive semantic meaning from the combination.

Key AI Technologies Driving the Change: Unpacking the "Black Box"

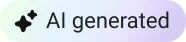

The mechanics of search are indeed evolving at an accelerated pace, propelled by these sophisticated AI systems. Google’s AI Overviews, Perplexity, and OpenAI’s ChatGPT are no longer confined to merely scanning titles or descriptions; they are actively parsing the actual content inside videos. This is possible through a multi-layered approach:

- Automatic Speech Recognition (ASR): This technology converts all spoken dialogue within a video into a searchable, time-stamped transcript. Beyond mere transcription, modern ASR systems are integrated with natural language processing (NLP) to understand context, identify different speakers, and even detect sentiment. This provides a rich, textual corpus from the video’s audio track, making every word spoken discoverable.

- Computer Vision (CV): Computer vision algorithms enable AI to "see" and interpret visual information. This includes:

  - Object Recognition: Identifying specific objects, people, or scenes.

  - Text Recognition (OCR): Extracting and indexing any text that appears on screen, whether it’s a lower third, a slide presentation, a product label, or a caption.

  - Activity Recognition: Understanding actions and events occurring within the video, providing contextual cues that reinforce spoken or textual information.

- Large Language Models (LLMs): Once ASR and CV have generated a wealth of textual and contextual data from the video, LLMs come into play. They analyze this information to:

  - Understand Semantic Meaning: Go beyond keywords to grasp the overall topics, arguments, and insights presented.

  - Identify Key Moments: Pinpoint specific segments or timestamps where particular concepts are introduced, questions are answered, or examples are provided.

  - Summarize and Synthesize: Condense complex video content into concise, digestible summaries, making it easier for search engines to match user queries with highly relevant snippets.

  - Infer User Intent: By analyzing the confluence of spoken words, on-screen text, and visual cues, LLMs can better determine what problem a video is trying to solve or what question it aims to answer.

This combined application of AI technologies is a major leap from the old world of video SEO, where discoverability depended largely on compelling thumbnails, relevant tags, and a few surface-level signals. Now every meaningful moment, from an initial overview of a complex framework to a specific example at the 3:42 mark or a crucial term typed on screen, can be read, indexed, and made retrievable. This deep-level understanding is the foundation of retrievability: a search engine’s ability not just to find a video, but to understand its granular content and surface specific insights from within it.
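To make the idea of moment-level retrievability concrete, here is a minimal Python sketch. It assumes a video has already been broken into time-stamped segments by ASR and OCR. The `Segment` class, the `"speech"` and `"screen"` labels, and the sample text are all hypothetical, and the matching is plain word lookup rather than the semantic matching a real search system would use.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start_seconds: int  # where the segment begins in the video
    source: str         # hypothetical label: "speech" (ASR) or "screen" (OCR)
    text: str


def format_timestamp(seconds: int) -> str:
    """Render seconds as M:SS, e.g. 222 -> '3:42'."""
    minutes, secs = divmod(seconds, 60)
    return f"{minutes}:{secs:02d}"


def find_moments(segments, query):
    """Return (timestamp, source, text) for segments containing every query word."""
    words = query.lower().split()
    hits = []
    for seg in segments:
        haystack = seg.text.lower()
        if all(word in haystack for word in words):
            hits.append((format_timestamp(seg.start_seconds), seg.source, seg.text))
    return hits


# Illustrative data only: one spoken overview, then a spoken example and a
# matching on-screen caption at the same moment.
segments = [
    Segment(0, "speech", "Here is an overview of the onboarding framework."),
    Segment(222, "speech", "For example, a churn rate of five percent compounds quickly."),
    Segment(222, "screen", "Churn rate: 5%"),
]

moments = find_moments(segments, "churn rate")
for timestamp, source, text in moments:
    print(timestamp, source, text)
```

In this toy data the spoken example and the on-screen caption both match the query at the same timestamp, which is the kind of reinforcement between audio and visual signals described above.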

Beyond Traditional SEO: How Generative Search Engines Use Video

While retrievability is a crucial starting point, its implications extend far beyond traditional search engine optimization. Generative search engines, which aim to provide comprehensive, synthesized answers rather than just a list of links, leverage video content in an even more integrated manner. In these multimodal environments, video is no longer treated as an isolated format but as one valuable data source among many – text, audio, and images – that an LLM uses to construct the most authoritative and complete response.

This integration is why video citations are increasingly appearing directly within AI-driven answers. A relevant YouTube clip might be embedded within a Google AI Overview as supporting material, providing visual context or a direct explanation. TikTok’s "Search Highlights" feature exemplifies this, pairing trending queries with short, highly relevant video clips that offer immediate visual answers. Platforms like ChatGPT and Perplexity are becoming adept at extracting structured insights from videos that are properly indexed and easily parseable, incorporating these findings into their generative responses.

For brands, this evolving landscape means that visibility and authority are increasingly dependent on multi-format coverage. If a brand’s expertise exists solely in blog posts, it now faces a significant gap in its digital footprint. Conversely, if a brand’s videos are not meticulously optimized for retrievability, they risk being overlooked by the generative AI systems that are rapidly shaping consumer decisions and information discovery. The ability to present a consistent, authoritative message across text, audio, and video formats is becoming a critical competitive differentiator.

Strategic Optimization for the AI-Powered Video Landscape

Given that video is now discoverable at an unprecedented, dialogue-level depth, a brand’s optimization strategy must extend far beyond conventional metadata. It requires a holistic approach that embeds discoverability into every stage of video production. Here’s how to ensure videos function as high-performing, retrievable content:

- Crafting Intelligent Scripts: Narrative and Index in One

The video script is no longer just a blueprint for production; it’s a primary indexing document. Content teams must approach scriptwriting with the same SEO rigor applied to a blog post. This involves clear, concise phrasing, the natural integration of long-tail questions, and the strategic front-loading of key terms in a manner that feels authentic and conversational.

The "conversational" element is paramount because LLM-powered search engines match content against natural-language queries. Instead of a formal introduction like, "Today we will discuss customer acquisition strategies," opt for a more query-mirroring phrase such as, "How do you acquire customers without spending a fortune on ads?" This phrasing more accurately reflects how users articulate their needs in search, giving AI systems a clearer, more direct signal about the specific problem the video aims to solve. When explaining a core concept, state it plainly and early in the video. Ambiguity, while sometimes effective for storytelling, actively hinders retrievability. Clarity and directness are key for AI comprehension.

- Mastering Metadata Hygiene: Precision Over Volume

While AI can delve deep into content, high-quality metadata remains crucial. The title, description, and tags should precisely reflect the problem your video solves and the value it delivers, rather than merely listing broad topics. The practice of "keyword dumping" is detrimental; instead, prioritize clarity, user intent, and natural language.

For instance, a title like "Content Marketing Tips | SEO | Video Strategy | 2025" is less effective than "How to Make Your Marketing Videos Discoverable in AI Search." The latter is specific, clearly articulates the content’s value proposition, and directly addresses a user’s likely query. This disciplined approach to metadata applies across all platforms, from YouTube and LinkedIn to Instagram and TikTok, ensuring consistency in how content is presented to algorithms.

- Leveraging Accurate Transcripts: The Unsung Hero of Retrievability

Uploading full, accurate transcripts or SRT (SubRip Subtitle) files is no longer optional; it should be treated as a core discoverability signal. Well-formatted transcripts significantly aid AI systems in disambiguating topics, identifying key takeaways, and precisely matching your content to nuanced or niche queries. Transcripts are particularly powerful for capturing long-tail queries that might not fit neatly into a title or description.

Consider a user searching for "how to handle objections in sales calls with technical buyers." A video titled "Advanced Sales Techniques" might be overlooked, but if that exact phrase appears at the 12-minute mark in its accurate transcript, AI systems can pinpoint and surface that specific segment. Maintaining clean transcripts is essential: remove excessive filler words if they obscure meaning, but avoid over-editing, as LLMs are trained on natural, conversational language.

- Harnessing On-Screen Text: A Visual Layer of Indexability

Every piece of text placed on screen – callouts, lower thirds, slide presentations, product labels, or captions – is now crawlable and indexable by computer vision. This presents a significant opportunity to reinforce spoken points and provide additional textual signals to AI. If a video introduces a specific framework, ensure its name appears visually on screen. If a crucial statistic is cited, display it prominently in readable text.

While this is a powerful tool, avoid "text spam" – cluttering the video with keywords solely for crawlability. Instead, use on-screen text strategically to highlight key terms, reinforce takeaways, and visually present concepts that are also discussed verbally, creating a robust, multi-layered indexing opportunity.
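The transcript point above can be illustrated with a short Python sketch that parses an SRT file and reports when a long-tail phrase is spoken. The sample cues, the `parse_srt` and `find_phrase` helpers, and the exact-substring matching are assumptions made for illustration; they are not how any particular search engine works. The parser also assumes well-formed cues separated by blank lines with Unix line endings.

```python
import re

# Hypothetical excerpt of an SRT file for a video titled "Advanced Sales Techniques".
SRT_SAMPLE = """\
1
00:12:00,000 --> 00:12:05,500
Let's talk about how to handle objections
in sales calls with technical buyers.

2
00:12:05,500 --> 00:12:09,000
Start by restating the concern in their terms.
"""

# Matches the start time of a cue line such as "00:12:00,000 --> 00:12:05,500".
CUE_TIME = re.compile(r"(\d{2}):(\d{2}):(\d{2}),(\d{3}) --> ")


def parse_srt(srt_text):
    """Return a list of (start_seconds, text) tuples, one per cue."""
    cues = []
    for block in srt_text.strip().split("\n\n"):
        lines = block.splitlines()
        if len(lines) < 3:
            continue  # skip malformed blocks
        match = CUE_TIME.match(lines[1])
        if not match:
            continue
        hours, minutes, seconds, millis = (int(g) for g in match.groups())
        start = hours * 3600 + minutes * 60 + seconds + millis / 1000
        # A cue's text may wrap over several lines; join them into one string.
        cues.append((start, " ".join(lines[2:])))
    return cues


def find_phrase(cues, phrase):
    """Return the start time (in seconds) of the first cue containing the phrase."""
    needle = phrase.lower()
    for start, text in cues:
        if needle in text.lower():
            return start
    return None


cues = parse_srt(SRT_SAMPLE)
start = find_phrase(cues, "how to handle objections in sales calls with technical buyers")
print(start)
```

A phrase that straddles two cues would be missed by this simple lookup, which is one practical reason to keep cue boundaries aligned with complete thoughts where possible.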

Implications for Brands and Content Creators: Navigating the New Frontier

The shift towards video retrievability carries profound implications for brands and content creators. Those who embrace this paradigm early will gain a significant competitive advantage. Brands that invest in high-quality video production, coupled with a rigorous retrievability strategy, will find their content surfacing more frequently and prominently in the generative answers that shape consumer decisions.

This new reality also demands a re-evaluation of resource allocation. Investment in video production must now extend to include comprehensive post-production optimization for AI indexing, including meticulous transcription and strategic on-screen text design. Content teams will need to develop new workflows that integrate SEO best practices directly into the video creation process, from scriptwriting to final upload. Measuring success will also evolve, moving beyond traditional video views and engagement metrics to include factors like video snippet appearances in AI Overviews, precise timestamp referrals, and the ability of videos to directly answer complex user queries.

The Future Landscape: Challenges and Opportunities

The journey into AI-powered search is an ongoing one. As AI search tools become even more sophisticated, the ways they index, interpret, and cite video will continue to evolve. This necessitates continuous adaptation from content creators. Staying abreast of algorithm updates and emerging best practices will be crucial for maintaining visibility.

Beyond the immediate tactical considerations, this evolution opens doors for enhanced global reach and accessibility. AI’s ability to process and translate video content can break down language barriers, making expert knowledge available to a wider audience. However, it also introduces challenges, such as ensuring content authenticity in an era of deepfakes and mitigating potential biases in AI indexing. The core principle, however, remains steadfast: making content easy for AI to find, understand, and reference.

Search engines are learning to see, hear, and cite everything. The black box is definitively open. The power to create truly discoverable, high-performing video content is now within reach, and how brands wield this power will define their success in the evolving digital landscape.

Frequently Asked Questions (FAQs)

How long should my video be for optimal discoverability in AI Search?

There is no universal "best length," as optimal duration depends on user intent and platform. For quick, intent-matching queries on platforms like TikTok and YouTube Shorts, shorter, highly focused videos (roughly 60 to 90 seconds or less) perform well. For generative answers that require deeper insights, longer explainer videos (5 to 15 minutes or more) provide a richer source of material for AI systems to pull from. The key is clarity, structure, and ensuring every second of the video contributes value to the user, making it easier for AI to extract relevant information regardless of length.

Do I need special tools to make my videos indexable by AI Search?

Not necessarily. The foundational elements for AI-driven indexability are primarily within your control during production and upload. These include clean, well-structured scripting, accurate and comprehensive transcripts (SRT files), readable on-screen text, and precise, intent-focused metadata (titles, descriptions, tags). While advanced AI tools for automated transcription or content analysis exist, the core signals that AI search engines look for can be meticulously prepared with standard production and post-production software. The AI search engines themselves handle the complex indexing automatically once these robust signals are present.
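As one illustration of how little tooling this requires, the following Python sketch writes an SRT file body from a list of timed segments using only built-in language features. The `srt_timestamp` and `to_srt` helpers and the sample segments are hypothetical; most editing and captioning software can export SRT directly, so this is only meant to show what the format contains.

```python
def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, millis = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"


def to_srt(segments):
    """Build SRT text from (start_seconds, end_seconds, text) tuples."""
    blocks = []
    for index, (start, end, text) in enumerate(segments, start=1):
        # Each cue is a sequence number, a time range, then the caption text.
        blocks.append(f"{index}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}")
    return "\n\n".join(blocks) + "\n"


# Illustrative segments only.
segments = [
    (0.0, 4.0, "How do you acquire customers without spending a fortune on ads?"),
    (222.5, 226.0, "Here is a specific example."),
]

srt_text = to_srt(segments)
print(srt_text)
```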

How quickly will I see results from video retrievability efforts?

Indexing timelines can vary significantly by platform and the specific algorithms involved, but many brands report seeing initial improvements in visibility and search rankings within weeks of implementing a focused retrievability strategy. However, the most substantial and sustained gains come from consistent effort. This includes adopting unified naming conventions across all content, strategically publishing content across multiple formats (text, video, audio), and continually reinforcing your brand’s expertise with supporting written content that links to and complements your videos. Retrievability is an ongoing practice, not a one-time fix.