Digital commerce has been transformed over the last decade, moving from a discipline guided by creative intuition to one governed by rigorous, data-driven experimentation. Central to this evolution has been the A/B test, a methodology that compares two versions of a single variable to determine which performs better on a specific metric. While this tool has revolutionized how product and marketing teams interact with their audiences, industry experts and data scientists are increasingly raising alarms about over-reliance on these simple split tests. The current consensus among high-maturity organizations is that A/B testing remains a vital component of the Conversion Rate Optimization (CRO) toolkit but is frequently misapplied to complex business problems that require more sophisticated analytical frameworks.

The Dominance of the A/B Testing Paradigm

The ascent of A/B testing as the default decision-making tool for digital teams was driven by its perceived simplicity and the clarity of its outputs. In an era where "gut feeling" is often viewed with skepticism, the ability to produce a statistically significant "winner" provides a sense of certainty that is highly valued in corporate environments. This culture of experimentation was largely catalyzed by the success stories of major technology firms. Microsoft’s Bing team, for instance, famously documented an instance where a minor change to ad headline display logic resulted in a $100 million increase in annual revenue. Similarly, Google’s legendary "41 shades of blue" test solidified the idea that even the smallest UI adjustments could yield massive financial returns.

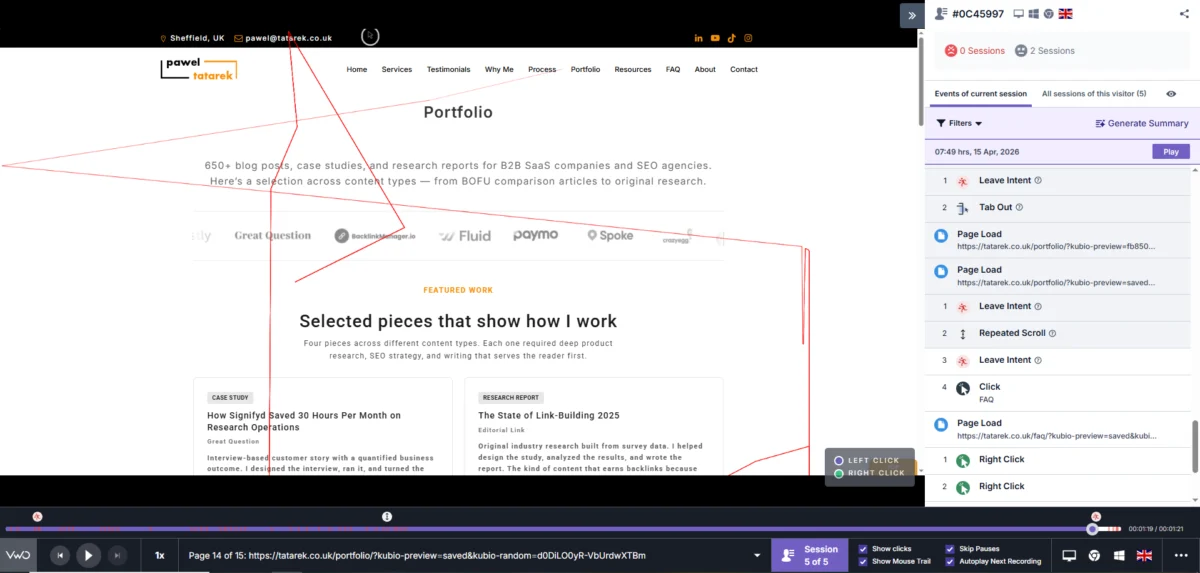

Today, this mindset has permeated the entire industry. According to recent data, approximately 77% of all digital experiments are simple A/B tests involving only two variants. The proliferation of user-friendly experimentation platforms, such as FigPii and Optimizely, has lowered the barrier to entry, allowing marketing and product managers to launch tests without the direct intervention of data scientists or specialized researchers. However, this democratization has led to a "hammer and nail" problem: when A/B testing is the only tool a team is comfortable using, every business challenge begins to look like a simple split-test opportunity.

The Statistical Trap: Why Most Tests Fail to Deliver

One of the most significant challenges facing the modern CRO industry is the lack of statistical power in most experiments. For an A/B test to provide a reliable result, it requires a sufficient volume of traffic and conversions to distinguish a true performance difference from random noise.

For many e-commerce brands, reaching this threshold is an uphill battle. To detect a modest 1% to 2% relative lift in conversion rate with a high degree of confidence, a site may require hundreds of thousands of visitors per variant, and more than a million when the baseline conversion rate is only a few percent. Even mid-market brands generating millions of sessions per month often find that a single test must run for six to twelve weeks to reach significance. This slow pace of experimentation creates a bottleneck, preventing teams from iterating quickly.
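To make the traffic requirement concrete, here is a minimal sketch of the standard normal-approximation sample size formula for comparing two conversion rates. The function name `visitors_per_variant`, the 3% baseline, and the conventional defaults of 5% significance and 80% power are assumptions chosen for illustration; they do not come from any particular testing platform.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided,
    two-proportion z-test (normal approximation).

    baseline_rate: control conversion rate, e.g. 0.03 for 3%
    relative_lift: smallest relative improvement worth detecting, e.g. 0.02 for 2%
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


if __name__ == "__main__":
    for lift in (0.02, 0.05, 0.10):
        n = visitors_per_variant(baseline_rate=0.03, relative_lift=lift)
        print(f"{lift:.0%} relative lift: about {n:,} visitors per variant")
```

Under these assumptions, a 2% relative lift on a 3% baseline needs well over a million visitors per variant, while a 10% relative lift needs only tens of thousands. Because the required sample grows with the inverse square of the effect size, small lifts are disproportionately expensive to detect.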

When teams ignore these statistical requirements, they often fall into one of three failure modes:

- The False Positive: Implementing a change based on a "winning" result that was actually just a statistical fluke.

- The Inconclusive Loop: Running tests for months only to find no clear winner, leading to "testing fatigue" and a loss of organizational buy-in.

- The Under-Powered Test: Attempting to detect small changes on low-traffic pages, which results in data that is functionally useless for decision-making.
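One common route to the false positive failure mode is stopping a test the first time a dashboard shows significance. The source does not name this practice, so treat the following as an illustrative assumption: a small simulation of A/A tests, where both arms share the same true conversion rate and every declared "winner" is therefore a fluke. The function names, the 10% conversion rate, and the ten interim looks are all hypothetical.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rate(peek, sims=400, looks=10, batch=200, rate=0.10, seed=7):
    """Share of simulated A/A tests that end up declared a 'winner'.

    peek=True  -> a win is declared if ANY interim look has p < 0.05
    peek=False -> only the final, pre-planned look is evaluated
    """
    rng = random.Random(seed)
    false_wins = 0
    for _ in range(sims):
        conv_a = conv_b = n = 0
        significant_at_any_look = False
        for _ in range(looks):
            # Both arms draw from the same true rate: there is no real effect.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            if p_value(conv_a, n, conv_b, n) < 0.05:
                significant_at_any_look = True
        significant_at_end = p_value(conv_a, n, conv_b, n) < 0.05
        false_wins += significant_at_any_look if peek else significant_at_end
    return false_wins / sims


if __name__ == "__main__":
    print("fixed horizon:", false_positive_rate(peek=False))
    print("with peeking: ", false_positive_rate(peek=True))
```

Because both calls use the same seed, they evaluate identical simulated data, and the only difference is the stopping rule. The fixed-horizon rate should land near the nominal 5%, while the peeking rate can never be lower and is typically several times higher.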

Beyond the "What": The Problem of Survivorship Bias

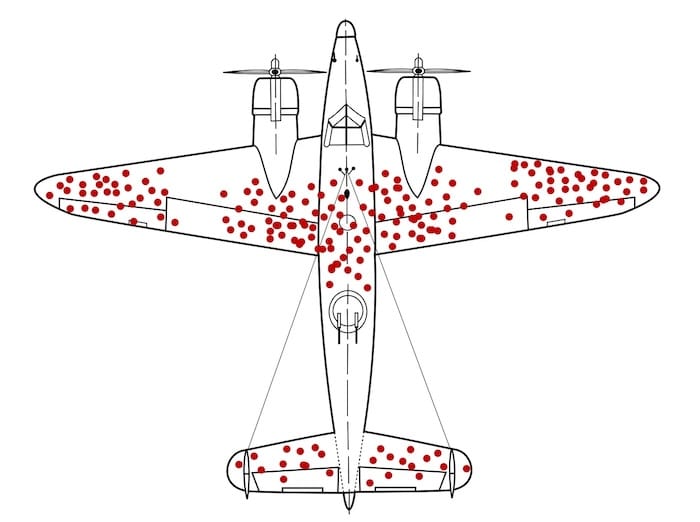

A fundamental limitation of A/B testing is that it focuses exclusively on the "what" while ignoring the "why." A test result can confirm that Version B outperformed Version A, but it cannot explain the psychological or functional reasons behind that performance. This creates a significant blind spot, and it is made worse by a related problem known as survivorship bias.

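The concept, famously illustrated during World War II by statistician Abraham Wald, describes the error of analyzing only the data points that "survived" a process. Wald observed that the bullet holes on returning bombers marked the places where a plane could be hit and still make it home, so the armor belonged on the areas that showed no damage. In the context of a digital funnel, A/B tests primarily measure the behavior of users who are already interacting with the site. They offer little insight into the users who dropped off entirely or the frustrations that led to their exit.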

Without qualitative data—such as session recordings, user interviews, and support ticket analysis—teams risk "optimizing the bullet holes." They may reinforce elements that are working for a small segment of users while failing to address the fundamental vulnerabilities that are driving the majority of potential customers away.

The Strategic Pitfalls of Short-Term Metric Focus

High-maturity teams are increasingly recognizing that a "win" in a short-term A/B test does not always translate to a win for the business. Most standard experiments focus on immediate actions: clicks, add-to-cart rates, or single-session conversions. However, the long-term health of an e-commerce business depends on metrics such as Customer Lifetime Value (CLV), return rates, and profit margins.

There is often a direct conflict between short-term conversion lifts and long-term brand equity. For example, a "dark pattern" or a misleadingly aggressive countdown timer might increase immediate sales, but it can also lead to higher return rates and a permanent loss of customer trust.

The "Jam Experiment," a classic study in consumer psychology, highlights the danger of optimizing for the wrong metrics. Researchers found that while a display with 24 varieties of jam attracted more attention and engagement than a display with only six, the smaller selection resulted in a conversion rate ten times higher: roughly 30% of shoppers who stopped at the six-jam display made a purchase, compared with about 3% at the 24-jam display. In a digital context, a variant that increases clicks (engagement) may actually be creating "choice overload" or confusion that ultimately reduces the final purchase rate.

A Broader Experimentation Toolkit for Mature Teams

To overcome the limitations of simple split testing, leading organizations are adopting a more diverse array of experimental designs. These methods allow teams to answer broader business questions that a standard A/B test cannot touch.

- Sequential Testing: This involves monitoring data as it accumulates against pre-specified stopping boundaries that keep the false positive rate under control, allowing for earlier decision-making if a variant is performing exceptionally well or poorly.

- Holdout Groups: By keeping a small percentage of the audience away from all new features or changes for an extended period, companies can measure the long-term, aggregate impact of their optimization efforts.

- Quasi-Experiments: Used when random assignment is impossible (e.g., testing a new pricing model in a specific geographic market), these designs use statistical controls to infer causality.

- Switchback Testing: Common in marketplace and logistics businesses, this method alternates between treatments over specific time intervals to account for network effects and user interactions.
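As one illustration of the "statistical controls" a quasi-experiment can use, the sketch below applies a difference-in-differences estimate to the geographic pricing example. This is only one of several possible techniques and is not prescribed by the list above; the function name and all figures are hypothetical. The estimate is only as good as the assumption that the two markets would have followed parallel trends without the change.

```python
from statistics import mean


def difference_in_differences(treated_before, treated_after,
                              control_before, control_after):
    """Estimate a treatment effect as the change in the treated market
    minus the change in a comparable control market over the same period.

    Relies on the parallel-trends assumption: without the treatment,
    both markets would have moved by the same amount.
    """
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


if __name__ == "__main__":
    # Hypothetical weekly revenue per visitor, in dollars.
    effect = difference_in_differences(
        treated_before=[2.10, 2.20, 2.15],  # market that gets the new pricing
        treated_after=[2.50, 2.60, 2.55],
        control_before=[1.90, 2.00, 1.95],  # similar market, pricing unchanged
        control_after=[2.00, 2.10, 2.05],
    )
    print(f"Estimated effect of the pricing change: ${effect:.2f} per visitor")
```

Subtracting the control market's change removes seasonality and other shifts that hit both markets at once, which a simple before-and-after comparison would wrongly credit to the new pricing.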

The Role of Evidence-Led Hypotheses

The quality of an experiment is fundamentally tied to the quality of the hypothesis behind it. Mature CRO programs move away from "random acts of testing" and instead ground every experiment in a four-part hypothesis framework:

- Evidence: "Because our session recordings show users hesitating at the shipping selection…"

- Problem: "…we believe uncertainty about arrival dates is causing checkout abandonment."

- Solution: "…so we will implement a dynamic delivery-date estimator on the product page."

- Outcome: "…and we expect to see a 3% increase in checkout completion among mobile users."
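Teams that keep an experiment backlog could enforce the four parts above with a small record type. The following is a minimal sketch under that assumption; the class name `Hypothesis` and its fields are hypothetical and are not taken from any experimentation platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A four-part experiment hypothesis: evidence, problem, solution, outcome."""
    evidence: str
    problem: str
    solution: str
    outcome: str

    def __post_init__(self):
        # Reject hypotheses that skip any of the four parts.
        missing = [name for name, value in vars(self).items() if not value.strip()]
        if missing:
            raise ValueError(f"Hypothesis is missing: {', '.join(missing)}")

    def statement(self):
        """Render the four parts as a single readable sentence."""
        return (f"Because {self.evidence}, we believe {self.problem}, "
                f"so we will {self.solution}, and we expect {self.outcome}.")


if __name__ == "__main__":
    h = Hypothesis(
        evidence="our session recordings show users hesitating at the shipping selection",
        problem="uncertainty about arrival dates is causing checkout abandonment",
        solution="implement a dynamic delivery-date estimator on the product page",
        outcome="to see a 3% increase in checkout completion among mobile users",
    )
    print(h.statement())
```

Rejecting records with an empty field is a deliberately blunt check: it cannot judge the quality of the evidence, but it does stop a test from entering the queue with no stated evidence at all.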

By requiring this level of rigor, teams ensure they are testing "big levers"—elements that affect the core value proposition and buying decisions—rather than minor cosmetic tweaks like button colors or font styles.

Implications for the Future of CRO

As the digital marketplace becomes increasingly competitive and customer acquisition costs continue to rise, the ability to extract meaningful insights from data will be a primary competitive advantage. The move away from a "test everything" mentality toward a "test what matters" strategy marks the maturation of the CRO industry.

Industry analysts suggest that the next phase of experimentation will involve a tighter integration of artificial intelligence and machine learning to handle multivariate complexities that human teams cannot manage alone. However, the human element—specifically the ability to conduct deep qualitative research and translate it into strategic business moves—remains irreplaceable.

For organizations looking to evolve, the path forward involves auditing their current processes to identify where A/B testing has become a crutch. By diversifying their toolkit, focusing on long-term business outcomes, and grounding every test in deep user research, companies can move beyond incremental "wins" and achieve the kind of compounding growth that defines market leaders. The era of the simple A/B test is not over, but its reign as the sole arbiter of digital strategy is undoubtedly coming to an end.