In the competitive landscape of conversion rate optimization (CRO), digital marketers and front-end engineers are frequently presented with a compelling marketing hook: the "lightweight snippet." Vendors often tout script sizes as small as 2.8 KB or 13 KB, suggesting that these tools will have a negligible impact on a website’s loading speed. However, a rigorous investigation into the production environments of leading A/B testing platforms reveals a significant discrepancy between advertised snippet sizes and the actual payload required to execute experiments. The reality is that the initial snippet is often merely a "loader" or "stub," while the true weight of the experimentation engine—the assets that actually modify the user experience—is deferred to subsequent requests that can exceed 200 KB.

This technical discrepancy has profound implications for Core Web Vitals, user experience, and the integrity of test data. To understand the gravity of this issue, one must look beyond the initial script and analyze the full execution footprint of these tools in real-world scenarios.

The Illusion of the Lightweight Snippet

The marketing of A/B testing tools has long focused on the "snippet size" as a proxy for performance. This metric is used to reassure developers that the tool will not contribute to "bloat" or slow down the Largest Contentful Paint (LCP). However, the "snippet" usually refers only to the few lines of JavaScript installed in the <head> of a document.

In production, most modern A/B testing architectures utilize a multi-stage loading process. The initial script initializes the connection, but it does not contain the logic for audience targeting, variation code, or the experimentation engine itself. These components are fetched later, often via secondary API calls or dynamically injected scripts. Consequently, a tool that claims a 2.8 KB footprint may actually force the browser to download and parse over 250 KB of data before a single experiment can be rendered. This "progressive injection" model hides the performance cost from initial scans but manifests clearly in the network waterfall and browser main-thread activity.

Unveiling the Investigation: A Multi-Step Methodology

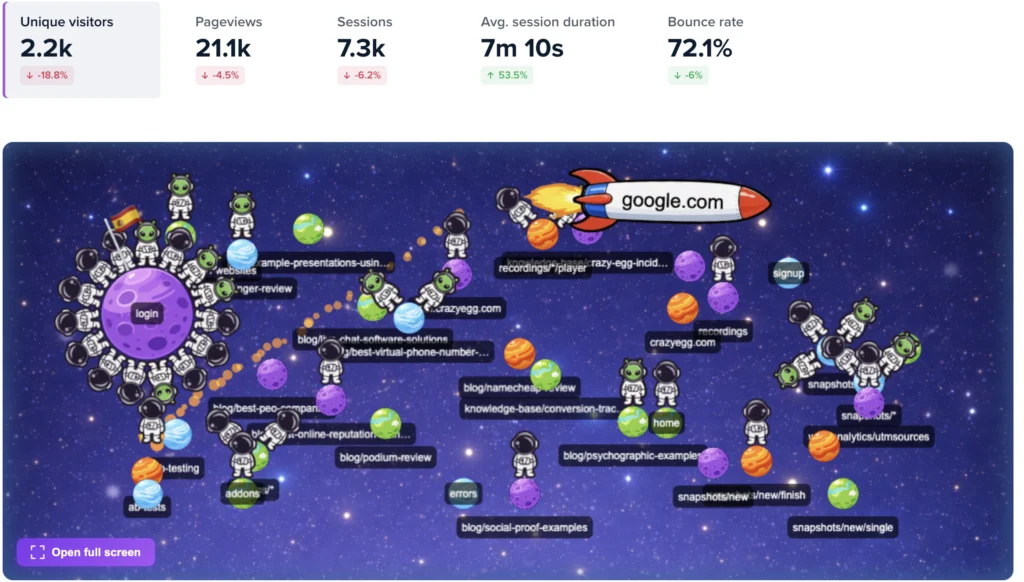

To determine the true execution cost of A/B testing scripts, a comprehensive study was conducted across several leading platforms, including Convert, VWO, ABlyft, Mida.so, Webtrends Optimize, Visually.io, and Amplitude Experiment. The investigation moved beyond vendor documentation to measure real-world impact using a six-step methodology:

- Production Measurement: Researchers collected tracking scripts from live customer websites rather than using "clean" demo environments. Using command-line tools like `curl`, they captured both the gzipped transfer size and the uncompressed payload size.

- Code-Level Path Analysis: The team analyzed how scripts execute post-load, looking for patterns of "progressive injection" where additional logic is introduced at runtime.

- Network Waterfall Capture: Using Browser DevTools, researchers measured the total network activity triggered by each tool, including secondary scripts, configuration files, and API calls.

- Architectural Categorization: Platforms were grouped by their delivery model: Embedded Bundles, Stub + API configurations, or Feature Flagging models.

- Benchmark Validation: Direct measurements were compared against official vendor claims and third-party benchmarks to identify gaps in transparency.

- Individual Assessment: Each platform was evaluated on its specific trade-offs regarding script delivery, flicker prevention, and execution timing.
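The production-measurement step can be approximated offline. The following is a minimal sketch, not the researchers' actual tooling: it assumes a script body has already been downloaded into memory and recompresses it locally with Python's `gzip` module. The function name `measure_sizes` and the sample payload are hypothetical, and a real server may use a different compression level or algorithm, so the gzipped figure here is only an estimate of the transfer size.

```python
import gzip


def measure_sizes(payload: bytes) -> dict:
    """Return the uncompressed and locally gzipped sizes of a script body, in bytes."""
    compressed = gzip.compress(payload, compresslevel=6)
    return {
        "uncompressed_bytes": len(payload),
        "gzipped_bytes": len(compressed),
    }


# Hypothetical stand-in for a tracking script fetched from a live site.
sample_script = b"window._exp = window._exp || [];\n" * 500

sizes = measure_sizes(sample_script)
print(sizes)
```

Reporting both numbers matters because the gzipped size governs transfer time, while the uncompressed size is what the browser has to parse and execute.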

Comparative Data: Advertised Claims vs. Production Reality

The findings of the investigation highlight a consistent gap between marketing and reality. While some vendors are transparent about their baseline requirements, others utilize the "stub" model to report significantly lower figures.

Base SDK Measurements

The investigation found that the size of the base SDK (the core engine of the testing tool) rarely matches the advertised snippet size. For example, VWO advertises a 2.8 KB "stub," but the measured base SDK required for operation is at least 14.7 KB gzipped, and significantly larger when uncompressed. ABlyft, which claims a 13 KB size, was found to have a gzipped SDK of approximately 32 KB, expanding to over 168 KB once uncompressed in the browser.

In contrast, Convert reported a 93 KB baseline, and direct measurement showed a gzipped baseline of 48.7 KB, below the reported figure. The key difference noted was that Convert delivers the full payload upfront, meaning there are no hidden runtime fetches that surprise the browser later in the loading sequence.

Total Payload for Active Experiments

The discrepancy becomes even more dramatic when measuring the total payload required to run a suite of experiments. In a test scenario involving six active experiments, the total observed payloads were as follows:

- Convert: 193 KB (Total payload delivered in a single upfront request).

- VWO: Up to 254 KB (Distributed across multiple runtime requests).

- ABlyft: Up to 280 KB+ (A combination of inline and injected scripts).

- Mida.so: Difficult to fully measure due to API-driven configuration loading that defers costs.

These figures demonstrate that as the complexity of a testing program grows, the "lightweight" advantage of stub-based tools often evaporates, resulting in a total footprint that equals or exceeds that of "bundled" tools.
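To make the size of the gap concrete, the sketch below divides each observed total by the vendor's headline figure, using only the numbers quoted above. The name `inflation_factor` is hypothetical, and the comparison is rough: the VWO and ABlyft totals are quoted as "up to" values, and Convert's 93 KB is a baseline figure rather than a stub size, so the three ratios are not strictly like for like.

```python
# Figures quoted in the article, in KB. "advertised_kb" is the vendor's headline
# number; "observed_total_kb" is the payload seen with six active experiments.
figures = {
    "Convert": {"advertised_kb": 93.0, "observed_total_kb": 193.0},
    "VWO": {"advertised_kb": 2.8, "observed_total_kb": 254.0},
    "ABlyft": {"advertised_kb": 13.0, "observed_total_kb": 280.0},
}


def inflation_factor(advertised_kb: float, observed_total_kb: float) -> float:
    """How many times larger the observed payload is than the advertised figure."""
    return observed_total_kb / advertised_kb


for vendor, f in figures.items():
    print(f"{vendor}: {inflation_factor(**f):.1f}x the advertised size")
```

On these figures the stub-based tools show the largest multiples (about 90x for VWO and about 21x for ABlyft), while the bundled tool comes out at roughly 2x.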

Architectural Divergence: Bundles vs. Stubs

The investigation identified three primary architectures used by A/B testing vendors, each with distinct performance trade-offs.

1. The Embedded Bundle (e.g., Convert)

This model includes the experimentation engine, targeting logic, and all active variation code in a single file.

- Pros: Predictable loading, no secondary network requests, and higher reliability in experiment application.

- Cons: A larger initial file size which may be flagged by simple performance auditing tools.

2. The Stub + API Config (e.g., VWO, Mida.so, ABlyft)

This model uses a tiny "loader" script that subsequently calls an API to fetch the necessary configurations and variation code.

- Pros: A very small initial footprint that looks excellent in marketing materials.

- Cons: "Payload hiding," where the real performance cost is deferred. This can lead to increased latency between the page load and the experiment application.

3. Feature Flagging (e.g., Amplitude Experiment)

This model is primarily designed for server-side or app-based testing, returning only variant decisions rather than DOM-manipulation code.

- Pros: Extremely lightweight.

- Cons: Not directly comparable to traditional web-based A/B testing tools, as it requires developers to hard-code variations into the site’s codebase.
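The latency trade-off between the first two architectures can be illustrated with a deliberately simple model. This is a sketch under stated assumptions, not a measurement: each request is assumed to cost one round trip plus its transfer time, requests in a chain run strictly one after another, and parsing, caching, and connection setup are ignored. The payload totals come from the article, but the split of the stub model into exactly two requests and the network numbers (100 ms round trip, 200 KB/s) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Request:
    name: str
    size_kb: float


def time_to_apply_ms(chain, rtt_ms, bandwidth_kb_per_s):
    """Time until the last request in a strictly sequential chain has arrived."""
    total = 0.0
    for request in chain:
        transfer_ms = request.size_kb / bandwidth_kb_per_s * 1000
        total += rtt_ms + transfer_ms
    return total


# Totals from the article; the two-request split for the stub is an assumption.
bundle_chain = [Request("bundle.js", 193.0)]
stub_chain = [Request("stub.js", 2.8), Request("engine-and-config", 251.2)]

# Hypothetical network conditions.
bundle_ms = time_to_apply_ms(bundle_chain, rtt_ms=100, bandwidth_kb_per_s=200)
stub_ms = time_to_apply_ms(stub_chain, rtt_ms=100, bandwidth_kb_per_s=200)
print(f"bundle: {bundle_ms:.0f} ms, stub chain: {stub_ms:.0f} ms")
```

Under these made-up conditions the stub chain finishes later (roughly 1470 ms versus 1065 ms). Part of that gap comes from the larger total payload and part from the extra round trip; with equal totals, the difference in this model would shrink to one round trip per additional request.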

The "Flicker" Problem and Performance Trade-offs

One of the most critical challenges in A/B testing is "flicker," sometimes called the Flash of Original Content (FOOC) by analogy with the Flash of Unstyled Content (FOUC). This occurs when a user sees the original version of a page for a split second before the testing script applies the variation.

To combat this, many "lightweight" tools require an additional "anti-flicker snippet." This is a small piece of code that hides the entire page (or specific elements) until the testing script has finished loading. While this prevents the jarring visual effect of flicker, it delays rendering and therefore directly harms the Largest Contentful Paint (LCP) and the overall user experience. The page appears to be "stuck" or blank, which can lead to higher bounce rates.

The investigation suggests that tools using a "bundled" upfront approach (like Convert) reduce the reliance on anti-flicker hacks because the experiment logic is available as soon as the script executes, rather than waiting for a secondary API call to return.
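The cost of an anti-flicker snippet can be reasoned about with a small model. The sketch below assumes the snippet hides the page until the testing script is ready or a safety timeout fires, whichever comes first; the timeout is a common design choice but is an assumption here, not something the investigation specifies. The function name and the millisecond values are hypothetical.

```python
def anti_flicker_outcome(script_ready_ms: float, timeout_ms: float):
    """Model a hide-until-ready snippet that gives up after a safety timeout.

    Returns (hidden_ms, flicker): how long the page stays hidden, and whether
    the original content becomes visible before the variation is applied.
    """
    hidden_ms = min(script_ready_ms, timeout_ms)
    flicker = script_ready_ms > timeout_ms
    return hidden_ms, flicker


# Fast script: the page is hidden briefly and no flicker occurs.
print(anti_flicker_outcome(script_ready_ms=300, timeout_ms=2000))   # (300, False)

# Slow script: the page is hidden for the full timeout and still flickers.
print(anti_flicker_outcome(script_ready_ms=2500, timeout_ms=2000))  # (2000, True)
```

The second case is the worst of both worlds: the user waits out the whole timeout on a blank page and then sees the original content anyway. The later the experiment logic arrives, the more likely that outcome becomes.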

Implications for Modern SEO and User Experience

As Google’s Core Web Vitals have become a ranking factor, the impact of A/B testing scripts on site performance is no longer just a technical concern—it is a business one.

- Cumulative Layout Shift (CLS): If a script loads late and shifts elements on the page, it can ruin a site’s CLS score.

- Total Blocking Time (TBT): Large JavaScript files that require significant CPU time to parse can block the main thread, making the site feel unresponsive.

- Conversion Integrity: If a script is too slow to load, some users may interact with the "original" version of the page before the test is applied, leading to "data pollution" where the results of the A/B test are no longer accurate.

Beyond Kilobytes: New Metrics for Evaluating Testing Tools

The investigation concludes that "smallest script size" is a weak and often misleading metric for evaluating A/B testing software. Instead, organizations should evaluate vendors based on:

- Total Execution Footprint: The sum of all requests required to run a specific number of experiments.

- Network Waterfall Impact: How many secondary requests are triggered and how they compete with other critical site assets.

- Flicker Mitigation Strategy: Whether the tool relies on hiding page content (anti-flicker snippets) or applies changes synchronously.

- Predictability: Whether the performance impact is consistent or varies based on API latency and network conditions.
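The first two criteria can be computed from a captured network waterfall. The following is a minimal sketch assuming the waterfall has already been exported into a list of entries with a URL and a transfer size; the `Entry` type, the `summarize` function, and the host names are hypothetical and do not correspond to any vendor's real infrastructure.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Entry:
    url: str
    transfer_bytes: int


def summarize(entries, vendor_hosts):
    """Total footprint and request count for requests served from vendor hosts."""
    vendor = [e for e in entries if urlparse(e.url).hostname in vendor_hosts]
    return {
        "request_count": len(vendor),
        # Everything after the first vendor request counts as a secondary fetch.
        "secondary_requests": max(len(vendor) - 1, 0),
        "total_kb": sum(e.transfer_bytes for e in vendor) / 1024,
    }


# Hypothetical waterfall: three vendor requests plus one first-party script.
waterfall = [
    Entry("https://cdn.example-ab.test/stub.js", 2048),
    Entry("https://api.example-ab.test/config.json", 40960),
    Entry("https://cdn.example-ab.test/engine.js", 122880),
    Entry("https://www.example.com/app.js", 51200),
]

summary = summarize(waterfall, {"cdn.example-ab.test", "api.example-ab.test"})
print(summary)
```

Running the same summary for each candidate tool, with the same number of active experiments, gives a comparison based on what the browser actually downloads rather than on the advertised snippet size.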

Conclusion: Prioritizing Predictability over Marketing Metrics

The evolution of web performance standards requires a more sophisticated approach to selecting marketing technology. The investigation into A/B testing script sizes reveals that the industry’s obsession with "lightweight snippets" often masks a more complex and potentially more damaging reality of deferred payloads and runtime injections.

For brands that prioritize user experience and SEO, the choice of a testing tool must be based on the total impact on the browser’s lifecycle. While a 193 KB upfront bundle may seem larger on paper than a 2.8 KB stub, the predictability of a single request and the reduction in secondary latency often provide a more stable and high-performing environment for experimentation. As digital landscapes become more sensitive to load times and layout stability, transparency in script delivery will become the new standard for the CRO industry.