The implementation of data-driven experimentation within large-scale organizations often begins with a paradox of ambition. In a typical corporate scenario, a dedicated product team—after months of internal advocacy and securing executive buy-in—finally receives the green light to launch their first A/B test. The mandate from leadership is almost always the same: make it significant. The prevailing logic suggests that to prove the value of a Conversion Rate Optimization (CRO) program, the initial experiment must be transformative, tackling a core business process or a complete architectural overhaul. However, as industry experts have observed, this "swing for the fences" mentality is frequently the primary catalyst for the premature death of experimentation initiatives.

Lucia van den Brink, founder of The Initial and a prominent voice in the experimentation space, has identified this pattern as the "Big Test Trap." Speaking on the VWO Podcast, van den Brink detailed how the pursuit of massive, high-stakes experiments often leads to a cycle of developmental delays and lost momentum. In one documented instance, a team’s "first test" remained in design reviews for three months, lingered in development for six months, and was eventually abandoned after a year without ever reaching the production environment. This phenomenon highlights a critical disconnect between the theoretical desire for innovation and the practical realities of shipping code and generating actionable insights.

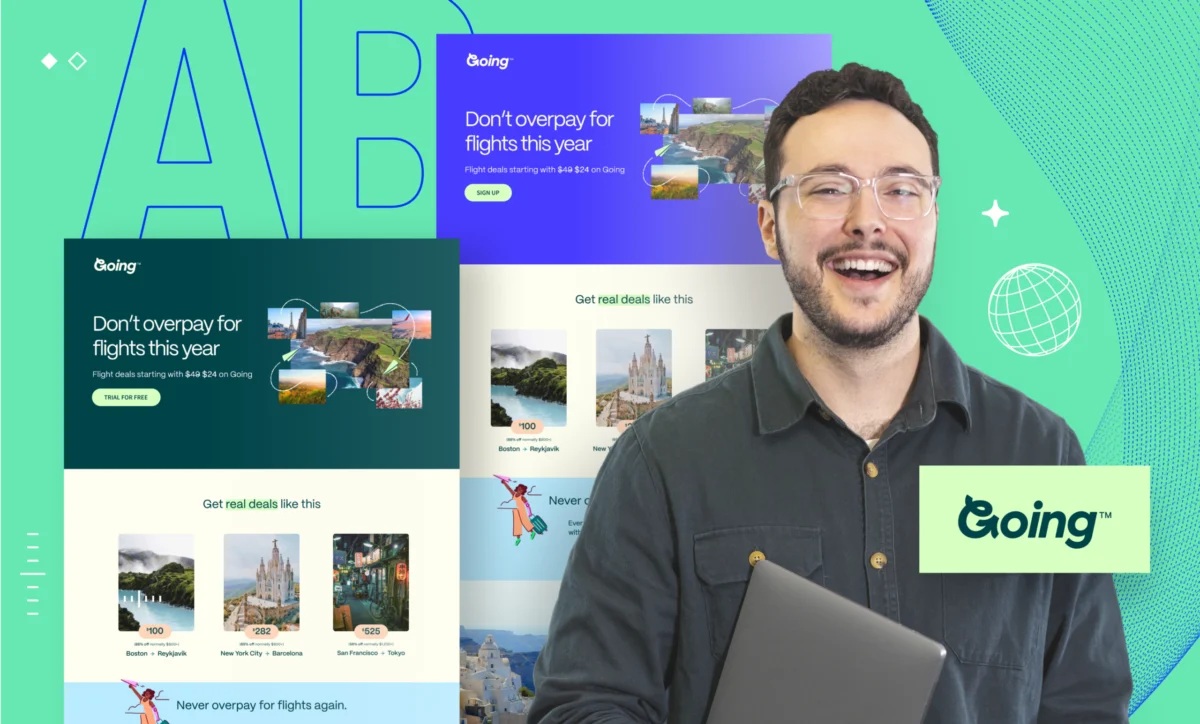

The Anatomy of the Big Test Trap

The inclination to start big is rooted in traditional corporate psychology. High-visibility projects naturally attract leadership attention and resource allocation. There is an implicit, though often flawed, belief that the scale of a change is directly proportional to its potential impact. In reality, large-scale tests—such as a full website redesign or the implementation of a complex fraud detection system—require extensive cross-departmental coordination, involve high technical risk, and make it nearly impossible to isolate specific variables that drive user behavior.

From a chronological perspective, the Big Test Trap follows a predictable and destructive path. Phase one involves high-level excitement and the selection of a "transformative" idea. Phase two sees the project enter a protracted design and approval stage, where various stakeholders attempt to mitigate the risks of such a large change, leading to "scope creep." Phase three is characterized by development bottlenecks, as the experiment competes with the core product roadmap for engineering resources. By phase four, typically 12 months after the initial approval, the original champions of the program have often moved on or lost their political capital, and the experimentation culture is declared a failure before it has even begun.

A Strategic Pivot: The 4-Step Framework for Sustainability

To counter this systemic failure, van den Brink proposes a four-step framework designed to build experimentation programs that are resilient, scalable, and focused on long-term habit formation rather than one-off victories.

Step 1: Deconstructing the "High Impact" Illusion

The first step in building a sustainable program is recognizing why organizations default to large tests. Leadership buy-in is often contingent on the promise of "moving the needle" in a visible way. However, the complexity of large tests often obscures the data. For example, if a company redesigns its entire checkout flow and sees a 5% increase in conversions, it remains unclear whether the success was due to the new button placement, the simplified form fields, or the updated color palette, because all of the changes shipped together in a single variant. By recognizing that big projects are often "validation" exercises disguised as "exploration," teams can begin to shift their focus toward more granular, informative testing.

Step 2: The Power of Incrementalism and "Small Wins"

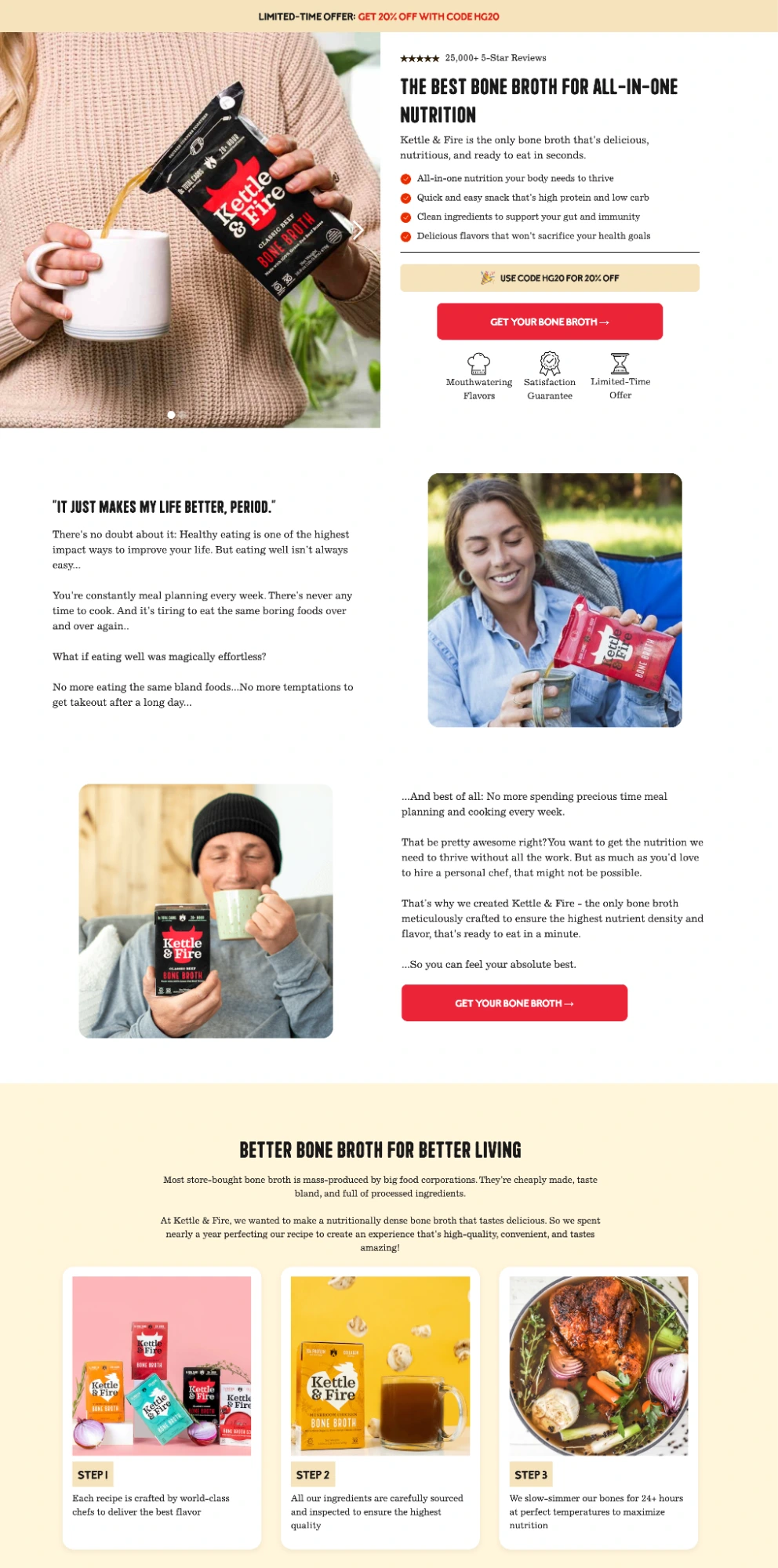

The second step of the framework emphasizes the disproportionate value of small tests. Modest changes, such as headline adjustments, call-to-action (CTA) optimizations, or the reordering of navigation elements, offer several strategic advantages. They can be designed and deployed in days rather than months, allowing for a higher velocity of learning. Furthermore, small tests are inherently lower risk; if a minor change negatively impacts performance, it can be reverted quickly without disrupting the entire user journey.

Industry data supports this incremental approach. Statistics from major experimentation platforms suggest that only about 10% to 25% of A/B tests result in a statistically significant "win." A team that runs only one "big" test per year therefore faces roughly a 75% to 90% chance of ending the year without a single win. Conversely, a team running 50 small tests per year at the same win rates would expect roughly 5 to 12 wins, and the chance of finding none falls below 1%, assuming the tests are independent. When compounded, those wins can outperform a single large-scale redesign.
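The arithmetic behind this argument can be sketched in a few lines of Python. This is a minimal illustration, not a model from the podcast: it assumes every test is independent and shares the same win rate, and the function names and the 2% per-win lift used in the demo are hypothetical values chosen only to show the mechanics.

```python
def prob_at_least_one_win(win_rate: float, num_tests: int) -> float:
    """Probability that at least one of `num_tests` independent tests wins."""
    return 1.0 - (1.0 - win_rate) ** num_tests


def expected_wins(win_rate: float, num_tests: int) -> float:
    """Expected number of winning tests under the same assumptions."""
    return win_rate * num_tests


def compounded_lift(lifts: list[float]) -> float:
    """Overall relative lift when individual lifts multiply together."""
    total = 1.0
    for lift in lifts:
        total *= 1.0 + lift
    return total - 1.0


if __name__ == "__main__":
    # Win rates taken from the 10% to 25% range quoted in the article.
    for win_rate in (0.10, 0.25):
        for num_tests in (1, 50):
            p = prob_at_least_one_win(win_rate, num_tests)
            e = expected_wins(win_rate, num_tests)
            print(f"win rate {win_rate:.0%}, {num_tests:>2} tests: "
                  f"P(at least one win) = {p:.3f}, expected wins = {e:.1f}")

    # Hypothetical: five wins that each lift conversions by 2%.
    print(f"compounded lift: {compounded_lift([0.02] * 5):.3%}")
```

The independence assumption is the weak point of the sketch: tests that touch the same page or audience can interact, so real programs should treat these figures as rough guides and not as guarantees.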

Step 3: Strategic Portfolio Balancing

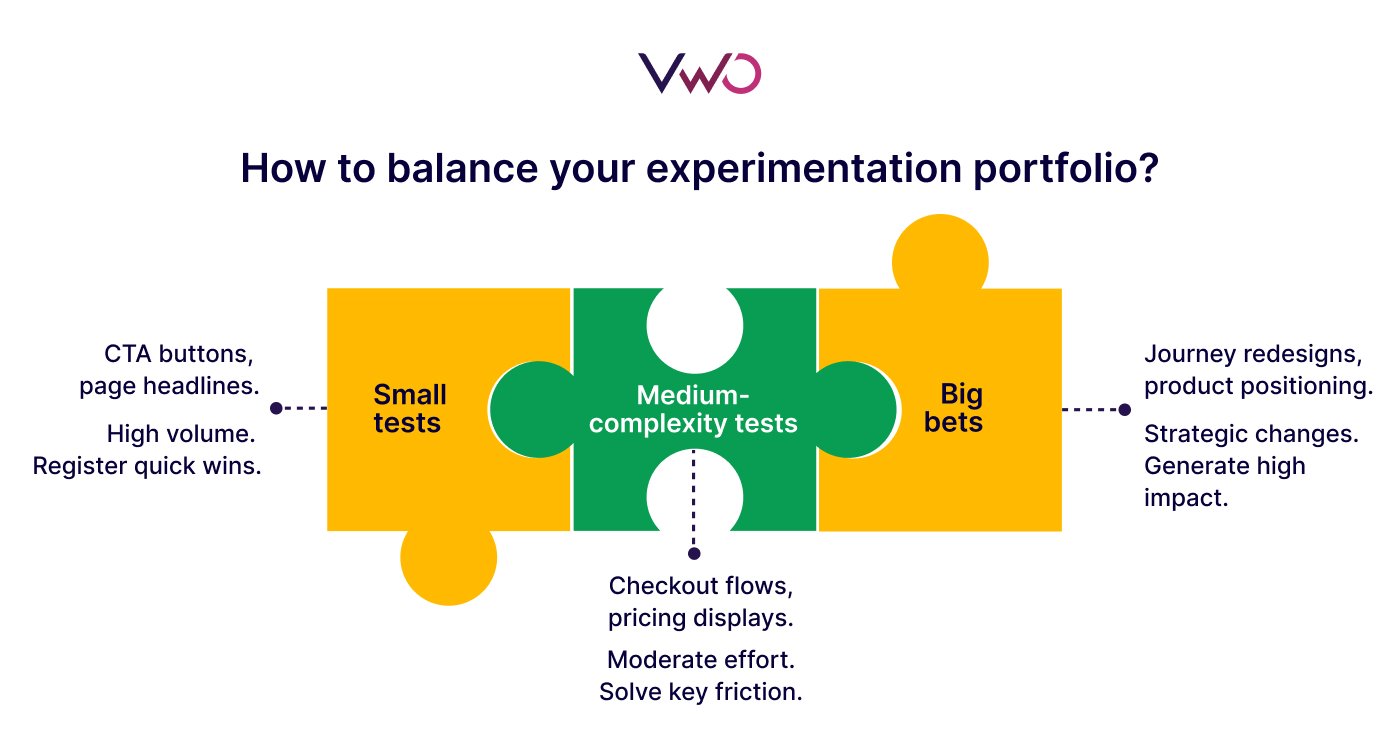

Once a team has established the ability to ship tests quickly, the challenge shifts to portfolio management. Van den Brink suggests that an experimentation program should be viewed similarly to a financial investment portfolio. A healthy mix includes:

- Low-effort/Low-risk (60-70%): Small tweaks and copy changes that keep the program moving and provide steady, incremental data.

- Medium-effort/Medium-risk (20-25%): Testing new features or significant UI adjustments on specific pages.

- High-effort/High-risk (5-10%): The "big swings" that tackle foundational business assumptions or major architectural changes.

This balance ensures that even if a high-risk experiment fails or is delayed, the program continues to deliver value and insights through the steady stream of smaller tests.
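One way to keep a backlog honest against this mix is a small check like the one below. It is a sketch under stated assumptions: the tier names, the `TARGET_SHARES` table, and the `portfolio_report` function are hypothetical, and only the percentage ranges come from the list above.

```python
# Target share ranges per tier, taken from the portfolio mix in the article.
# The tier keys themselves are hypothetical labels.
TARGET_SHARES = {
    "low": (0.60, 0.70),     # low-effort / low-risk
    "medium": (0.20, 0.25),  # medium-effort / medium-risk
    "high": (0.05, 0.10),    # high-effort / high-risk
}

EPSILON = 1e-9  # tolerance for floating-point comparisons at the range edges


def portfolio_report(counts: dict[str, int]) -> dict[str, tuple[float, bool]]:
    """Return, per tier, the actual share of tests and whether it is in range."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("the portfolio contains no tests")
    report = {}
    for tier, (low, high) in TARGET_SHARES.items():
        share = counts.get(tier, 0) / total
        in_range = (low - EPSILON) <= share <= (high + EPSILON)
        report[tier] = (share, in_range)
    return report


if __name__ == "__main__":
    # Hypothetical backlog of 20 planned tests.
    planned = {"low": 13, "medium": 5, "high": 2}
    for tier, (share, in_range) in portfolio_report(planned).items():
        status = "ok" if in_range else "out of range"
        print(f"{tier:<6} {share:.0%} ({status})")
```

Because the quoted ranges do not have to sum to exactly 100%, the check treats each tier independently instead of forcing a single fixed split.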

Step 4: Using Big Tests for Validation, Not Exploration

The final step in the framework involves a fundamental shift in how large experiments are utilized. Rather than using a massive test to "see what happens," successful organizations use them to validate insights that have already been suggested by smaller, precursor tests. For instance, if a series of small tests indicates that users respond more favorably to "benefit-focused" messaging than to "feature-focused" messaging, the team can then confidently invest in a large-scale site redesign that centers on those proven benefits. This reduces the risk of the "big test" and ensures that development resources are spent on initiatives with a high probability of success.

Case Study: Infrastructure over Ideation in B2B SaaS

Consider a hypothetical B2B SaaS company attempting to establish an experimentation culture. Instead of starting with a complete overhaul of their pricing page, the team spends the first three months building the necessary infrastructure: integrating tracking tools, establishing a clear hypothesis-writing process, and training a small group of designers and developers on the testing platform.

In the subsequent six months, they run 20 small experiments. They test different trial durations, varying lead-capture forms, and diverse social proof placements. By the end of this period, they haven’t just increased their conversion rate; they have built a "muscle" for experimentation. New hires now instinctively ask, "How can we test this?" when proposing a change. The team has moved from a state of "guessing" to a state of "informed iteration."

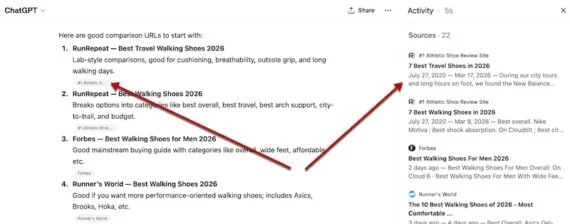

The Role of Technology and AI in Modern CRO

The transition to a high-velocity testing model is being accelerated by advancements in artificial intelligence (AI). Tools like VWO Copilot are now enabling teams to set up full-fledged testing campaigns using natural language prompts. AI can assist in generating variations, setting up tracking metrics, and identifying relevant target audiences, significantly lowering the barrier to entry for non-technical team members. This automation is a critical component in overcoming the "development bottleneck" that often kills the momentum of experimentation programs.

Broader Implications for the Digital Economy

The shift toward sustainable experimentation is more than a tactical adjustment; it is a strategic necessity in an increasingly volatile digital economy. As customer acquisition costs (CAC) continue to rise across almost all sectors, the ability to maximize the value of existing traffic through CRO has become a primary competitive advantage.

Organizations that master the art of the "small test" are better equipped to respond to market shifts. They are not wedded to a single, monolithic vision of their product but are instead constantly evolving based on real-time user data. This agility is the hallmark of digital maturity.

In conclusion, the failure of most experimentation programs is not a failure of imagination, but a failure of execution. By avoiding the "Big Test Trap" and adopting Lucia van den Brink’s framework of incremental growth and balanced portfolios, organizations can move beyond the cycle of stalled projects and lost momentum. The path to transformative results is paved with small, consistent, and data-driven steps. The goal is not just to run a test, but to build a system where learning is continuous, risk is managed, and growth is an inevitable byproduct of the process. For those ready to move beyond the "big swing" mentality, the tools and frameworks now exist to turn experimentation from a high-stakes gamble into a sustainable engine for business success.