The landscape of modern journalism and public relations is currently grappling with a paradoxical crisis: the very tools designed to protect the integrity of human-authored content are increasingly flagging professional, high-quality writing as the work of artificial intelligence. This phenomenon, highlighted by the recent experience of Jennifer Farr, a senior account director at the Canadian public relations firm Earnscliffe, underscores a growing tension in the media industry. As editors and publishers implement stringent AI detection protocols to maintain authenticity, they are inadvertently creating an environment where "clean," "structured," and "polished" prose is viewed with suspicion, potentially forcing human writers to alter their natural styles to avoid being silenced by an algorithm.

The incident involving Farr began as a standard professional engagement. After she pitched an op-ed to a top-tier Canadian publication, the topic was approved and she submitted a draft. Under normal circumstances, the next phase would involve editorial refinements and fact-checking. Instead, the editorial team informed Farr that the piece had been flagged by an AI detection tool and, as a result, could no longer be considered for publication. The rejection came even though the article was entirely human-written, produced in a collaborative, real-time virtual session between Farr and her client. This case is not an isolated event but a bellwether for a broader systemic issue affecting the communications industry globally.

The Mechanics of a False Positive

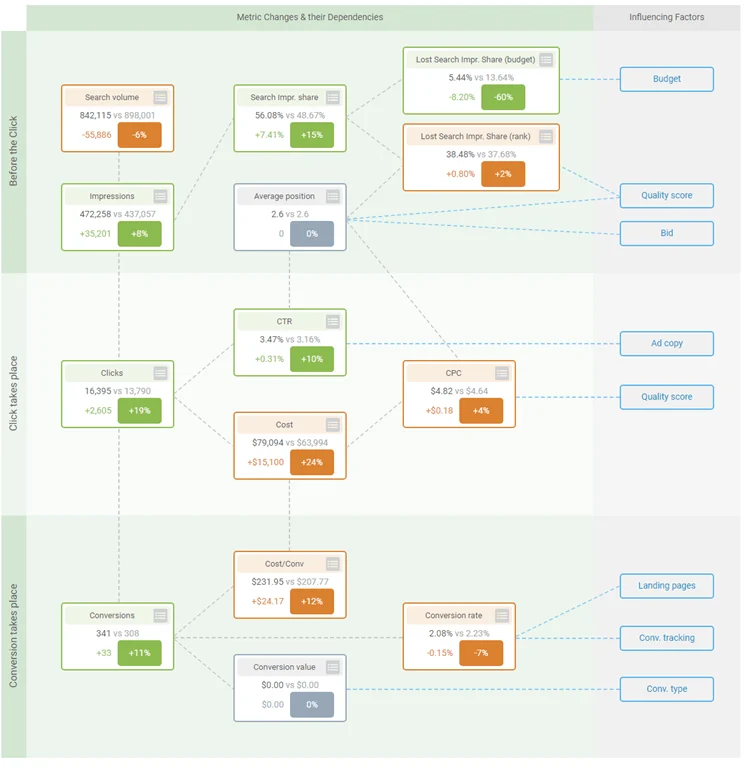

To understand why professional writing is being misidentified as AI-generated, it is necessary to examine the technical foundations of both Large Language Models (LLMs) and the detectors designed to catch them. AI detection software typically relies on two primary metrics: perplexity and burstiness. Perplexity measures how predictable a text is to a language model; if each word is close to what the model would expect to come next, the text has low perplexity. Burstiness refers to the variation in sentence structure and length throughout a document. Human writing traditionally exhibits high burstiness, alternating between long, complex sentences and short, punchy ones, while early AI models tended to produce more uniform, rhythmic prose.
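To make the two metrics concrete, here is a minimal Python sketch. It is a toy illustration only, not how any commercial detector works: real detectors score text with the token probabilities of a large language model, whereas this sketch assumes a simple word-frequency (unigram) model with add-one smoothing, and it assumes burstiness can be approximated by the coefficient of variation of sentence length. The function names `burstiness` and `unigram_perplexity` are hypothetical, chosen for this example.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text):
    """Split on ., ! and ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Assumed proxy: standard deviation of sentence length divided by the mean.

    0.0 means every sentence has the same length; larger values mean more variation.
    """
    lengths = sentence_lengths(text)
    if not lengths:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def tokenize(text):
    """Lowercase the text and extract simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text, reference):
    """Perplexity of `text` under add-one-smoothed word frequencies from `reference`.

    Lower values mean the text is more predictable given the reference sample.
    """
    ref_counts = Counter(tokenize(reference))
    words = tokenize(text)
    if not words:
        raise ValueError("text contains no words")
    vocab_size = len(set(ref_counts) | set(words))
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[w] + 1) / (total + vocab_size)) for w in words
    )
    return math.exp(-log_prob / len(words))


if __name__ == "__main__":
    uniform = "The cat sat down. The dog ran off. The bird flew away."
    varied = "Stop. The editors reviewed every single line of the long draft before lunch."
    print("burstiness (uniform):", burstiness(uniform))
    print("burstiness (varied): ", burstiness(varied))

    reference = "the cat sat on the mat the dog sat on the rug"
    print("perplexity (familiar words):  ", unigram_perplexity("the cat sat on the mat", reference))
    print("perplexity (unfamiliar words):", unigram_perplexity("quantum zebras juggle violet theorems", reference))
```

Under these assumptions, text made of evenly sized sentences and familiar words scores low on both measures, which is the statistical profile a detector tends to associate with machine output.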

The irony facing professionals like Farr is that high-level corporate and journalistic writing often prioritizes clarity, logical structure, and the removal of linguistic "noise"—traits that result in low perplexity. In a professional setting, especially within an agency, a draft undergoes multiple rounds of rigorous editing. Teams brainstorm, refine, tighten, and polish the text until it is as efficient as possible. By stripping away idiosyncrasies and focusing on a clean, structured delivery, human writers are inadvertently mimicking the "optimal" output that AI models are trained to produce. Consequently, the more professional and "perfect" a piece of writing is, the more likely it is to trigger a false positive in detection software.

A Chronology of the AI Detection Arms Race

The rise of generative AI has forced a rapid, often disorganized response from the publishing world. The timeline of this transition reveals a move from curiosity to defensive suspicion:

- Late 2022: The public release of ChatGPT triggers a surge in AI-generated content across the internet, leading to concerns about "slop" (low-quality, AI-generated filler) polluting search engines and news feeds.

- Early 2023: A wave of AI detection tools, such as GPTZero and Copyleaks, emerges, promising to distinguish between human and machine-generated text. Educational institutions and media outlets begin adopting these tools almost immediately.

- Mid-2023: OpenAI, the creator of ChatGPT, retires its own "AI Classifier," released only months earlier in January 2023, citing its "low rate of accuracy." This serves as a significant warning that even the creators of the technology cannot reliably detect its output.

- Late 2023 to Present: Reports of "false positives" begin to proliferate. Students are accused of cheating on essays they wrote by hand, and professionals like Jennifer Farr find their work rejected by major publications based on algorithmic suspicion.

This chronology suggests that the industry rushed to adopt detection tools before their reliability was proven, creating a "guilty until proven innocent" atmosphere for writers who adhere to high standards of formal composition.

Supporting Data on Detection Inaccuracy

The reliance on AI detectors is increasingly criticized by the scientific community. A prominent study conducted by researchers at Stanford University in 2023 found a significant bias in AI detectors against non-native English speakers. The study revealed that when non-native speakers wrote essays in English, the detectors flagged them as AI-generated more than half the time. This is because non-native speakers often use simpler, more common word choices and more formulaic sentence structures—again, traits that result in low perplexity scores.

Furthermore, testing by various tech journals has shown that false-positive rates for popular detectors can range anywhere from 1% to as high as 10% depending on the complexity of the text. In a newsroom that processes hundreds of submissions a month, even a 2% error rate means that several legitimate, high-quality human voices are being silenced or unfairly scrutinized every month. The "human-in-the-loop" philosophy, where an editor uses the detector only as a guide, is often abandoned in favor of hard-line policies that reject any flagged content to avoid the risk of brand damage.
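The arithmetic behind that claim can be sketched in a few lines of Python. The figure of 300 submissions a month is an assumption chosen to stand in for "hundreds," and the sketch further assumes that every submission is human-written and that each one is flagged independently at the same false-positive rate; the function names are hypothetical.

```python
def expected_false_flags(human_submissions, false_positive_rate):
    """Expected number of human-written pieces wrongly flagged."""
    return human_submissions * false_positive_rate


def prob_at_least_one_false_flag(human_submissions, false_positive_rate):
    """Chance that at least one human-written piece is wrongly flagged,
    assuming each submission is flagged independently."""
    return 1 - (1 - false_positive_rate) ** human_submissions


if __name__ == "__main__":
    submissions = 300  # assumed monthly volume, standing in for "hundreds"
    for rate in (0.01, 0.02, 0.10):
        expected = expected_false_flags(submissions, rate)
        at_least_one = prob_at_least_one_false_flag(submissions, rate)
        print(f"rate={rate:.0%}  expected wrongly flagged={expected:.1f}  "
              f"P(at least one)={at_least_one:.4f}")
```

Under these assumptions, a 2% rate works out to about six wrongly flagged pieces a month, and across the 1% to 10% range the expected count runs from about three to about thirty.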

The Impact on Professional Communications and PR

For the public relations and communications sector, the implications are profound. The industry’s core function is to distill complex ideas into clear, persuasive narratives. When editors begin to view certain punctuation marks—like the em dash—or highly structured arguments as "tells" for AI, it forces writers into a defensive posture.

Farr noted that the issue wasn’t the content of her op-ed, but the perceived "humanity" of its construction. This has led to an emerging trend where writers are beginning to "de-optimize" their work. Some professionals report intentionally leaving in slight imperfections, using less common synonyms, or avoiding certain transitional phrases just to lower the chance of being flagged. This "reverse-engineering" of writing to satisfy an algorithm represents a regression in the quality of public discourse; instead of striving for the best possible writing, professionals are striving for the most "detectably human" writing, which is not always the same thing.

Official Responses and Editorial Pressures

Editorial boards are in a difficult position. The pressure to ensure authenticity is not merely aesthetic; it is an existential necessity. If a publication is found to be unwittingly publishing AI-generated content, it loses credibility with its audience and may suffer in search engine rankings.

In response to the growing number of false positives, some major outlets have begun to update their submission guidelines. While some remain rigid, others are moving toward a "process-based" verification system. Instead of relying solely on a post-submission scan, editors may ask for:

- Version History: Access to Google Docs or Microsoft Word track changes to see the evolution of the draft.

- Source Verification: More rigorous checking of the specific anecdotes and firsthand insights that an AI would be unlikely to produce.

- Direct Communication: A preference for established relationships where the writer’s voice is already known to the editorial team.

However, for freelancers and agency professionals who do not have a decades-long history with a specific editor, the "grey area" Farr describes remains a significant barrier to entry.

Broader Implications for the Future of Writing

The crisis of AI detection raises a fundamental question: how do we define authenticity in a digital age? If a human writes a piece using a collaborative, messy, and creative process, but the final output matches the statistical patterns of an AI, does the "humanity" of the piece vanish?

The current reliance on AI to catch AI is a recursive loop that offers no easy exit. As generative AI becomes more sophisticated, it will get better at mimicking human "burstiness" and "imperfection," likely leading to even higher false-positive rates for actual humans who continue to write clearly. We are approaching a point where the only way to prove a text is human-written may be to provide video evidence of the writing process, a standard that is unsustainable for the fast-paced world of news and PR.

The industry is currently in a transition period where the tools of the trade are evolving faster than the ethics and standards governing them. For writers like Farr, the path forward involves "sitting in the grey area"—continuing to advocate for the value of human collaboration and professional polish while navigating a landscape that is increasingly suspicious of both. The challenge for the media at large will be to develop more nuanced methods of verification that do not penalize excellence in favor of algorithmic certainty. Without a shift in how authenticity is measured, the art of professional writing may find itself increasingly marginalized by the very tools meant to protect it.