Six months ago, a leading technology firm published an extensive guide on data security best practices. Today, those policies have undergone significant revisions, yet the original online article remains unchanged. This seemingly innocuous oversight recently led to a critical error when a customer, seeking clarification via the company’s AI-powered support chatbot, was confidently directed to the now-obsolete guide as current policy. The ensuing confusion forced the support team into the uncomfortable position of explaining why an official brand channel had disseminated incorrect information, highlighting a rapidly escalating challenge in the era of artificial intelligence.

This scenario is becoming increasingly common as AI permeates customer service, e-commerce, and search. Large Language Models (LLMs), the backbone of these AI applications, draw on published brand materials to answer user queries and shape buying decisions, often without distinguishing current material from outdated material. Consequently, outdated, incomplete, or inaccurate content now carries severe and far-reaching consequences. According to The Conference Board's October 2025 analysis, 72% of S&P 500 companies now identify AI as a material business risk, up from just 12% in 2023. This sixfold increase underscores a fundamental shift in how businesses must perceive and manage their digital content. Content teams, traditionally focused on engagement and reach, now carry a far greater responsibility: safeguarding accuracy and mitigating corporate risk.

The Genesis of the Problem: How AI Consumes Content

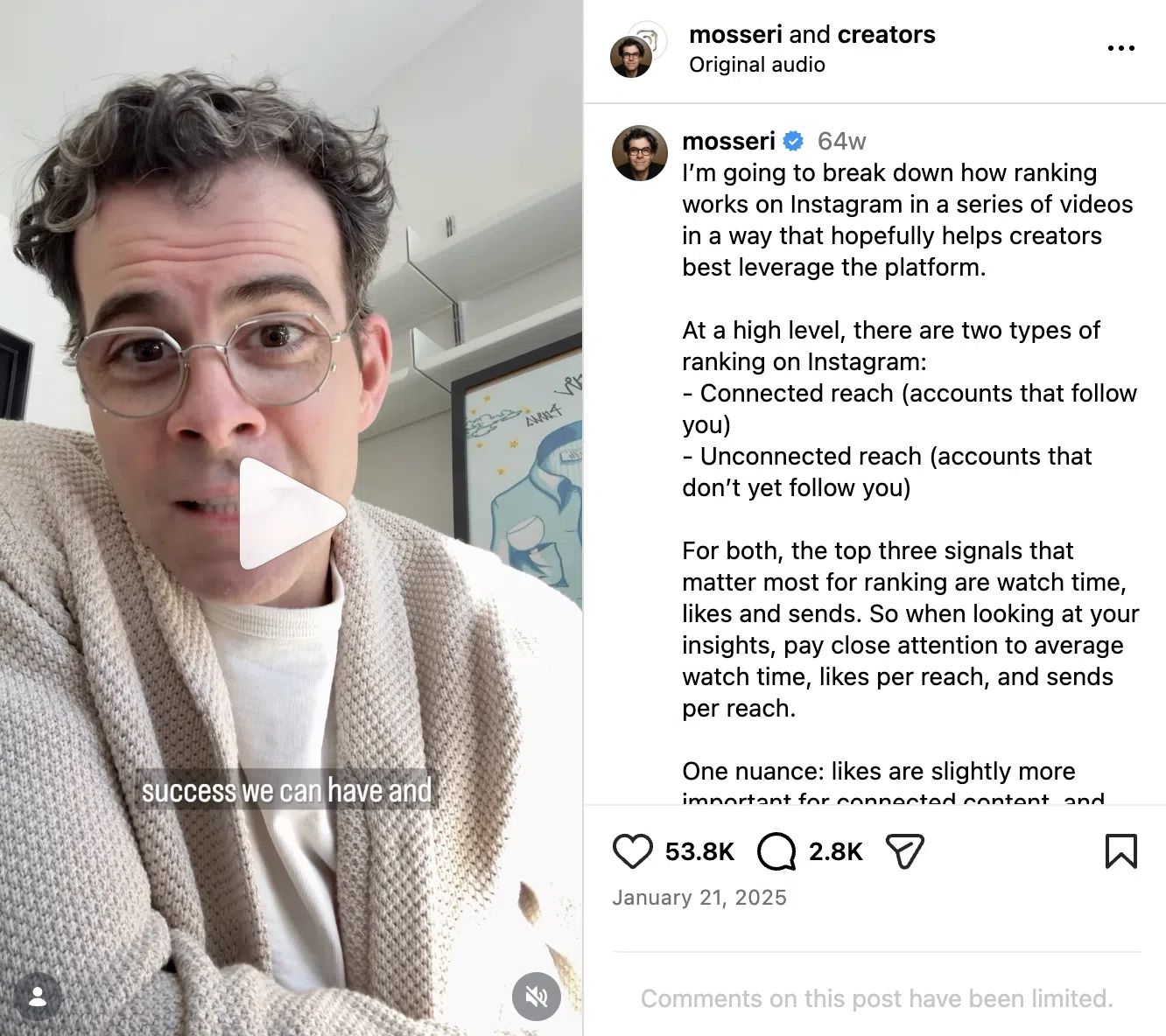

The core issue stems from how current AI systems work. Generative AI tools such as ChatGPT, Perplexity, and Google's AI Overviews process and synthesize vast quantities of indexed online content. They are not inherently designed to distinguish between a company's latest product update and a blog post from 2019; they tend to treat all accessible, published brand content as equally valid source material. This lack of temporal or contextual discernment creates a compounding problem. When these systems pull information from a company's content library, crucial elements such as publication dates, disclaimers, and contextual caveats are often stripped away, producing definitive statements that may be false.

This blind spot gives rise to the misinformation scenarios now plaguing businesses. A customer seeking warranty information might receive details from a policy discontinued two years ago. An e-commerce bot could recommend a product feature that no longer exists or quote an incorrect price. In heavily regulated industries such as financial services and healthcare, the exposure is more serious still. Financial services firms could face scrutiny from regulators such as the SEC for disseminating misleading investment information or product terms. Healthcare organizations, already operating under rules such as HIPAA, might find themselves correcting patient-facing guidance after an AI system has already given out inaccurate medical or treatment information, inviting legal liability and eroding patient trust.

A Chronology of Rising Risk and Legal Precedent

The rapid integration of AI into public-facing business operations has created a condensed timeline of emerging risks. While AI’s capabilities have been advancing for years, the widespread public and commercial adoption of generative AI tools in 2023 marked a critical inflection point. This period saw a dramatic acceleration in companies deploying AI for customer interaction, leading to an equally rapid increase in incidents of misinformation.

A landmark case illustrating this problem involved Air Canada. In Moffatt v. Air Canada, a 2024 decision of British Columbia's Civil Resolution Tribunal, the airline was found liable after its website chatbot gave a customer incorrect information about bereavement fares. The chatbot said the discount could be claimed retroactively after the ticket had been purchased, which contradicted the bereavement policy published on another page of Air Canada's own website. When the airline refused the discount, the customer filed a claim and won. Air Canada argued that the chatbot was responsible for its own statements; the tribunal rejected that argument, ruling that the airline is responsible for all information on its website, whether it comes from a static page or a chatbot. What began as a single inaccurate chatbot answer escalated into a legal and public accountability issue, setting an important precedent for corporate responsibility in the AI age. The case shows that tribunals are prepared to hold companies accountable for the information their AI systems provide.

Escalating Risks Across Industries: Beyond Customer Service

The Air Canada case is not an isolated incident but a harbinger of broader challenges. McKinsey’s 2025 State of AI survey found that 51% of AI-using organizations have already experienced at least one negative consequence from AI deployment, with inaccuracy being the most commonly cited issue. This represents a structural exposure that content teams now inadvertently own, regardless of their initial mandate.

The types of AI-related content risks are diverse and pervasive:

- Financial and Legal Liabilities: As demonstrated by Air Canada, companies face direct financial penalties, legal fees, and potential lawsuits arising from AI-generated misinformation. For regulated sectors, non-compliance can lead to hefty fines and operational restrictions. Industry analysts estimate that the financial repercussions of AI-driven misinformation could run into hundreds of millions of dollars annually for large corporations, encompassing legal settlements, regulatory fines, and brand recovery campaigns.

- Reputational Damage and Erosion of Trust: When AI systems provide incorrect information, brand credibility suffers directly. Consumers expect accurate and consistent information from official company channels, whether human or AI. A recent survey by an unnamed market research firm reportedly found that 68% of consumers would lose trust in a brand if its AI chatbot provided incorrect information, with 35% saying they would stop engaging with the brand entirely. Trust lost this way can be difficult and expensive to rebuild.

- Operational Inefficiencies and Increased Costs: Dealing with the fallout of AI-driven misinformation (correcting errors, addressing customer complaints, and conducting internal investigations) diverts valuable resources and raises operational costs. Fixing content after AI channels have spread it widely costs far more than managing its accuracy upfront.

- Security and Compliance Gaps: In regulated industries, outdated content can lead to serious compliance breaches. For instance, a healthcare chatbot citing an old privacy policy could inadvertently expose a company to HIPAA violations. Similarly, a financial services bot providing outdated investment advice could lead to regulatory sanctions. Regulators globally, including the FTC in the U.S. and data protection authorities in Europe, are increasingly scrutinizing how companies deploy AI, with a particular focus on transparency, fairness, and accuracy of information provided to consumers.

- Customer Dissatisfaction and Churn: Frustrated customers who receive conflicting or incorrect information are more likely to abandon a brand for competitors who offer more reliable service. This directly impacts revenue and market share.

The Unprepared Frontline: Content Teams Under Pressure

Paradoxically, the very teams responsible for creating the content that AI systems consume are often the least equipped to manage these emerging risks. Content teams historically evolved to optimize for metrics like speed, volume, engagement, and traffic. Their established workflows, designed to serve these goals, often actively work against robust accuracy governance. Publishing calendars prioritize velocity, pushing out content rapidly, while editorial reviews tend to focus primarily on voice, tone, and clarity, with less emphasis on deep factual and policy verification, especially for evergreen content.

Furthermore, legal approval processes, traditionally designed for discrete, time-bound marketing campaigns, often do not extend comprehensively to vast, perpetually indexed content libraries that AI systems mine indefinitely. This creates a significant gap in oversight.

Ownership of content accuracy also gets murky very quickly. Who is truly responsible for updating a three-year-old blog post when regulations change, or for auditing help documentation when product features evolve significantly? In many organizations, clear, designated accountability for the ongoing accuracy of evergreen content simply doesn’t exist. Content teams find themselves at the epicenter of this vacuum, creating the assets AI systems consume, yet frequently lacking the explicit mandate, the necessary tools, or the dedicated headcount to manage the downstream risks that their work now entails. They are, in essence, being asked to become de facto compliance officers without the training or resources.

Redefining Content Governance: A Proactive Approach

Organizations successfully navigating this new landscape are not slowing down their content production; instead, they are implementing robust, proactive systems for content governance. This paradigm shift involves integrating risk management into the very fabric of content creation and maintenance. The goal is to maintain publishing velocity while systematically managing exposure to AI-driven misinformation.

The Content Risk Triage System: A Framework for Adaptation

Leading organizations are building what can be termed a "Content Risk Triage System": a framework of four interlocking practices designed to sustain content velocity while carefully managing risk exposure.

- Comprehensive Content Audit & Classification: This foundational step involves systematically reviewing the entire content library. Content is categorized based on its potential risk profile (e.g., high-risk for compliance, financial advice, health claims; medium-risk for product features, technical guides; low-risk for general marketing, brand storytelling). Automated scanning tools can identify potential policy-related keywords or dates, while human experts verify critical claims. This process helps identify "dark content" – outdated, forgotten assets that still live online and pose significant risk.

- Dynamic Content Lifecycle Management: This practice moves beyond one-time publishing to a continuous cycle of review, update, and archival. High-risk content is assigned strict review cadences (e.g., quarterly or semi-annually), with clear ownership for each piece. An automated content inventory tracks publication dates, last review dates, and designated owners. Content that reaches a set age or becomes obsolete is automatically flagged for update, revision, or archival, so that superseded material is either removed from public view or clearly marked as outdated, reducing the chance that LLMs surface it as current.

- Cross-Functional Collaboration & Accountability: Breaking down departmental silos is crucial. Content teams establish formal feedback loops and review processes with legal, compliance, product, and customer service departments. Legal teams provide clear guidelines on sensitive topics, compliance teams approve regulatory statements, and product teams verify feature accuracy. Crucially, clear roles and responsibilities are defined for content accuracy across the organization, ensuring that updating a three-year-old blog post isn’t left to chance.

- AI-Specific Content Optimization & Guardrails: This involves actively preparing content for AI consumption, including structuring it with clear metadata, semantic tagging, and explicit version control. Companies can also use crawler directives, such as robots.txt rules for AI crawlers like OpenAI's GPTBot, to keep specific high-risk legacy content away from AI systems. These directives only deter crawlers that honor them and do not remove content a model has already ingested, so updating or unpublishing outdated material remains the more reliable fix. Finally, regularly querying AI assistants about the brand and reviewing Google AI Overviews reveals how AI systems cite brand content and helps identify and prioritize problem areas.
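The classification and lifecycle practices above can be sketched as a small inventory check. This is a minimal Python illustration, not a feature of any particular CMS: the `ContentItem` fields, the tier names, and the `review_queue` function are hypothetical. The 91- and 182-day cadences approximate the quarterly and semi-annual reviews mentioned above; the annual cadence for low-risk content is an assumption.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple

# Hypothetical cadences: roughly quarterly (high) and semi-annual (medium),
# as suggested in the text; annual review for low-risk content is an assumption.
REVIEW_CADENCE = {
    "high": timedelta(days=91),
    "medium": timedelta(days=182),
    "low": timedelta(days=365),
}
TIER_PRIORITY = {"high": 0, "medium": 1, "low": 2}


@dataclass
class ContentItem:
    """One row of a hypothetical content inventory."""
    url: str
    risk_tier: str          # "high", "medium", or "low"
    owner: Optional[str]    # person accountable for accuracy; None if unassigned
    published: date
    last_reviewed: date


def review_queue(items: List[ContentItem], today: date) -> List[Tuple[ContentItem, str]]:
    """Return items needing attention, highest risk first, then most overdue first."""
    flagged = []
    for item in items:
        due = item.last_reviewed + REVIEW_CADENCE[item.risk_tier]
        overdue_days = (today - due).days
        if item.owner is None:
            # Unowned content is flagged regardless of its review date.
            flagged.append((item, "no owner assigned", overdue_days))
        elif overdue_days > 0:
            flagged.append((item, f"review overdue by {overdue_days} days", overdue_days))
    # Sort by risk tier, then by how far past due each item is.
    flagged.sort(key=lambda entry: (TIER_PRIORITY[entry[0].risk_tier], -entry[2]))
    return [(item, reason) for item, reason, _ in flagged]


# Example inventory (hypothetical URLs and owners).
inventory = [
    ContentItem("/blog/data-security-guide", "high", "a.lee", date(2024, 1, 10), date(2024, 1, 10)),
    ContentItem("/help/export-csv", "medium", None, date(2024, 6, 1), date(2024, 11, 1)),
    ContentItem("/blog/team-offsite", "low", "b.kim", date(2024, 9, 1), date(2024, 9, 1)),
]
for item, reason in review_queue(inventory, today=date(2025, 3, 1)):
    print(item.url, "->", reason)
```

Sorting by tier before lateness keeps a slightly overdue policy page ahead of a badly overdue lifestyle post, which matches the triage idea of spending review effort where the risk is highest.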

Strategic Imperatives for Content Leadership

For content leaders facing this evolving landscape, implementing practical systems that reduce risk without halting publishing is paramount. These three strategic steps provide a reasonable jumping-off point for immediate action:

- Establish a Content Accuracy Task Force with Cross-Functional Representation: This is not merely a content team initiative. Form a dedicated group including representatives from legal, compliance, product development, customer support, and marketing. This task force will be responsible for defining content accuracy standards, establishing review workflows, and arbitrating disputes over information. Their initial mandate should be to conduct a comprehensive risk assessment of the existing content library.

- Implement a Tiered Content Review and Approval Workflow: Not all content carries the same level of risk. Develop a system that classifies content by its potential impact (e.g., legal, financial, health, brand reputation). High-stakes content (e.g., policy documents, financial disclosures, medical advice) requires mandatory legal and compliance sign-off. Medium-risk content (e.g., product specifications, feature guides) might require product team approval. Low-risk content (e.g., general blog posts, lifestyle articles) can proceed with standard editorial review. This tiered approach streamlines workflows, prevents bottlenecks, and ensures appropriate oversight where it’s most needed.

- Invest in Content Governance Technology and Training: Manual processes will not scale to meet the demands of AI-driven content risk. Explore content management systems (CMS) with robust version control, automated content auditing features, and workflow automation. Provide ongoing training for content creators and editors on AI’s impact, the importance of accuracy, and new verification protocols. This includes training on how to write content that is unambiguous and less prone to misinterpretation by LLMs.
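The tiered review and approval workflow described above reduces to a lookup from risk tier to required sign-offs. The sketch below is one possible encoding, assuming hypothetical topic tags (`policy`, `feature-guide`, and so on) and approver roles; in practice the topic lists and tier rules would be agreed with legal and compliance rather than hard-coded.

```python
from enum import Enum
from typing import Iterable, Set


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


# Hypothetical sign-off rules mirroring the high/medium/low tiers in the text.
REQUIRED_APPROVALS = {
    RiskTier.HIGH: {"editorial", "legal", "compliance"},
    RiskTier.MEDIUM: {"editorial", "product"},
    RiskTier.LOW: {"editorial"},
}

# Hypothetical topic tags used to assign a tier.
HIGH_RISK_TOPICS = {"policy", "financial", "medical", "legal"}
MEDIUM_RISK_TOPICS = {"product-spec", "feature-guide"}


def classify(topics: Iterable[str]) -> RiskTier:
    """Assign the highest tier triggered by any of the content's topic tags."""
    tags = set(topics)
    if tags & HIGH_RISK_TOPICS:
        return RiskTier.HIGH
    if tags & MEDIUM_RISK_TOPICS:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def missing_approvals(topics: Iterable[str], received: Iterable[str]) -> Set[str]:
    """Return the sign-offs still required before the content can publish."""
    return REQUIRED_APPROVALS[classify(topics)] - set(received)


def can_publish(topics: Iterable[str], received: Iterable[str]) -> bool:
    return not missing_approvals(topics, received)


print(missing_approvals({"medical"}, ["editorial", "legal"]))  # {'compliance'}
```

Taking the highest tier any tag triggers errs on the side of more review: a product guide that also mentions a refund policy is routed to legal and compliance.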

For organizations requiring additional support in this complex transition, external partners like Contently’s Managing Editors can serve as an embedded layer of editorial governance. These experts can help teams establish and maintain rigorous accuracy standards, implement new workflows, and navigate the intricacies of content risk in the AI age, all without sacrificing publishing velocity.

The cost of inaction increasingly outweighs the investment required for proactive content governance. Companies that fail to adapt risk not only reputational damage and customer churn but also legal and financial penalties. By establishing robust content risk management systems today, organizations can turn a potential liability into a strategic advantage, ensuring that their brand's voice, whether human or AI-generated, remains a source of trusted and accurate information. This is not a one-quarter fix but a lasting shift in how content is governed, one that will pay dividends for years to come.

For more on building content operations that scale responsibly, explore Contently’s enterprise content solutions.

Frequently Asked Questions (FAQs):

How do I know if my content library has risk exposure to AI-driven misinformation?

Start by conducting a targeted audit of content that makes specific, verifiable claims: pricing, product capabilities, compliance statements, health guidance, financial advice, or any official policy. Then, actively test how AI systems perceive your brand. Use various LLMs (ChatGPT, Perplexity, Google AI Overviews) to query your brand’s policies, products, and services. Pay close attention to how AI responses cite your content. Content that frequently appears in AI responses, especially if presented without context or dates, carries the highest exposure and should be prioritized for immediate accuracy verification.
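The first step of that audit, finding content that makes specific, verifiable claims, can be partly automated. The sketch below is a deliberately simple regular-expression scan over plain text; the pattern set and category names are hypothetical starting points, the sentence splitting is naive, and anything it flags still needs human verification against current policy.

```python
import re
from typing import List, Tuple

# Hypothetical patterns for claims that usually need verification.
CLAIM_PATTERNS = {
    "price": re.compile(r"[$€£]\s?\d[\d,]*(?:\.\d{2})?"),
    "percentage": re.compile(r"\b\d+(?:\.\d+)?\s?%"),
    "year": re.compile(r"\b(?:19|20)\d{2}\b"),
    "policy_term": re.compile(
        r"\b(?:warrant(?:y|ies)|refunds?|guarantees?|polic(?:y|ies)|HIPAA)\b",
        re.IGNORECASE,
    ),
}


def flag_claims(text: str) -> List[Tuple[str, List[str]]]:
    """Return (sentence, claim types) pairs for sentences containing checkable claims."""
    # Naive sentence split: terminal punctuation followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        kinds = [name for name, pattern in CLAIM_PATTERNS.items() if pattern.search(sentence)]
        if kinds:
            flagged.append((sentence, kinds))
    return flagged


sample = (
    "Our Pro plan costs $19.99 per month. Refunds are available within 30 days. "
    "We love our customers!"
)
for sentence, kinds in flag_claims(sample):
    print(kinds, "->", sentence)
```

A scan like this only narrows the list; pairing its output with the AI-query testing described above shows which flagged pages are actually being surfaced to customers.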

What resources do I need if I’m on a small content team with no dedicated compliance support?

Even with limited resources, intentional workflow design can make a significant difference. At a minimum, formally assign clear ownership for content accuracy reviews to specific team members on a regular cadence (e.g., quarterly). Create a simple risk classification system: categorize content as high, medium, or low risk. Ensure high-stakes content undergoes an additional, thorough review by at least two individuals before publishing. Document your verification process for all high-risk content; this demonstrates due diligence if questions or disputes arise. These foundational steps don’t require additional headcount but necessitate a disciplined approach to content production.
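Documenting verification can be as simple as one structured record per reviewed item. The sketch below assumes a hypothetical `VerificationRecord` that enforces the two-reviewer rule for high-risk content described above; the field names are illustrative, and a shared spreadsheet with the same columns would serve the same purpose for a small team.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class VerificationRecord:
    """Hypothetical audit-trail entry showing how one piece of content was checked."""
    url: str
    risk_tier: str                                     # "high", "medium", or "low"
    reviewers: List[str] = field(default_factory=list)
    sources: List[str] = field(default_factory=list)   # documents used to confirm claims
    reviewed_on: Optional[date] = None

    def problems(self) -> List[str]:
        """List what is missing before this item counts as verified."""
        issues = []
        # High-risk content needs two different people to review it.
        min_reviewers = 2 if self.risk_tier == "high" else 1
        if len(set(self.reviewers)) < min_reviewers:
            issues.append(f"needs {min_reviewers} distinct reviewers")
        if self.risk_tier == "high" and not self.sources:
            issues.append("no verification sources documented")
        if self.reviewed_on is None:
            issues.append("review date missing")
        return issues

    def ready_to_publish(self) -> bool:
        return not self.problems()


record = VerificationRecord(
    url="/help/refund-policy",
    risk_tier="high",
    reviewers=["a.lee", "a.lee"],          # same person twice does not count as two
    sources=["Refund policy v4 (legal-approved)"],
    reviewed_on=date(2025, 3, 1),
)
print(record.problems())  # ['needs 2 distinct reviewers']
```

Recording the sources alongside the reviewers is what turns the review into evidence of due diligence: it shows not just that someone looked, but what they checked the claims against.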

How do I get legal and compliance teams to participate without slowing everything down?

The key is to build tiered review processes into your workflow from the outset and establish clear expectations. Define precisely which content types absolutely require legal or compliance sign-off versus what can proceed with only editorial approval. For recurring claim types or common legal disclaimers, work with your legal team to create pre-approved language and templates. This "pre-clearance" drastically speeds up reviews. Schedule regular, dedicated meetings with legal and compliance to review content pipelines and address systemic issues, rather than ad-hoc, last-minute requests. The goal is appropriate oversight and risk mitigation, not universal bottlenecks.