The landscape of digital marketing is undergoing a seismic shift, driven by the rapid integration of Artificial Intelligence (AI) into how consumers discover information. That shift brings a stark reality that challenges assumptions marketers have long held about tracking and measuring their brand’s presence. At its core is the inherent inaccuracy of current AI visibility data. The situation is uncomfortable for Chief Marketing Officers (CMOs) and Chief Financial Officers (CFOs), but it also presents an opportunity for a more intelligent, albeit probabilistic, approach to measurement.

The Illusion of Precision: Understanding the Data’s Origins

At the heart of the problem is the nature of AI-generated content and the methods used to track it. Platforms like Profound, seoClarity, Peec, and AirOps, along with others currently being piloted, all attempt to quantify a brand’s visibility within AI responses. Unlike traditional search analytics, however, the data they provide does not directly reflect user behavior. It relies instead on a chain of estimates and probabilistic models.

"Prompt volume numbers are probabilistic estimates," states an industry insider. "Mention rates fluctuate run to run. And the thing you most want to know, how many people actually saw an AI response that mentioned your brand this month, is, frankly, unknowable." Although this may sound like criticism of these platforms, it describes a "structural reality of the medium" rather than a fault in the technology itself.

To effectively navigate this new terrain, marketers must first understand the diverse methodologies employed in AI visibility measurement. Broadly, four approaches are prevalent:

- Panel and Survey-Based Estimation: This method uses data from consumer panels or surveys to infer prompt volume. Its strength is that it tries to mirror real human behavior. However, its accuracy is limited by sampling error, which grows in niche or B2B sectors where panels are small.

- Clickstream and Traffic Inference: By analyzing anonymized browsing behavior, this approach infers the volume of query activity across AI platforms. It is useful for high-level comparisons between platforms, such as the growth trajectory of ChatGPT versus Gemini, but its reliability diminishes when trying to pinpoint specific prompts or topics.

- Keyword-to-Prompt Modeling: This is the most common methodology. It uses existing keyword research data to estimate how likely a given prompt theme is to be asked in AI contexts. The logic is that the search intent behind queries like "best running shoes for flat feet" on Google likely translates into similar questions on AI platforms. The significant drawback is the assumed conversion factor from search volume to AI prompt volume. The model often fails to account for the demonstrably different way users phrase queries to Large Language Models (LLMs) compared with traditional search engines.

- Direct API Sampling: This transparent approach runs a predetermined set of prompts on a fixed schedule and records the results. It shows exactly what was asked and what came back, but it makes no claims about how often such queries occur in the real world.
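Direct API sampling is the easiest of the four approaches to illustrate in code. The sketch below is a minimal, hypothetical harness: `fake_model_response` is a seeded random stand-in for a real platform call (no actual API is used, and it ignores the prompt text), and `sample_prompts`, `mention_rate`, and the brand names are illustrative, not any vendor's interface. It runs a fixed prompt set a fixed number of times, logs every response, and reports the share of runs that mentioned a brand.

```python
import random
from dataclasses import dataclass

# Hypothetical brand pool and model stand-in: a real harness would call an
# AI platform's API here. The randomness mimics run-to-run variation.
BRAND_POOL = ["Acme", "Globex", "Initech", "Umbrella", "Hooli", "Vandelay"]


def fake_model_response(prompt: str, rng: random.Random) -> list[str]:
    """Return an ordered list of 2 to 4 recommended brands (simulated)."""
    return rng.sample(BRAND_POOL, rng.randint(2, 4))


@dataclass
class RunRecord:
    prompt: str
    run: int
    brands: list[str]


def sample_prompts(prompts: list[str], runs_per_prompt: int, seed: int = 0) -> list[RunRecord]:
    """Run a fixed prompt set a fixed number of times and log every response."""
    rng = random.Random(seed)
    return [
        RunRecord(prompt, run, fake_model_response(prompt, rng))
        for prompt in prompts
        for run in range(runs_per_prompt)
    ]


def mention_rate(records: list[RunRecord], brand: str) -> dict[str, float]:
    """Share of logged runs, per prompt, whose response mentioned `brand`."""
    totals: dict[str, int] = {}
    hits: dict[str, int] = {}
    for r in records:
        totals[r.prompt] = totals.get(r.prompt, 0) + 1
        if brand in r.brands:
            hits[r.prompt] = hits.get(r.prompt, 0) + 1
    return {p: hits.get(p, 0) / n for p, n in totals.items()}


if __name__ == "__main__":
    prompts = [
        "best accounting software for a growing startup",
        "best running shoes for flat feet",
    ]
    records = sample_prompts(prompts, runs_per_prompt=20, seed=42)
    print(mention_rate(records, "Acme"))
```

The output is a rate over sampled runs, which matches what this approach can honestly claim: it says nothing about how many real people asked these questions.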

As industry analysts emphasize, "none of these is wrong. All have genuine utility. But none is the equivalent of Google Search Console, where data is logged, deterministic, and directly tied to real user behavior." Internalizing this difference is the first step towards a more effective AI visibility program.

The Deeper Measurement Problem: Inconsistency is the Norm

Beyond the methodological nuances of data collection, a more profound challenge plagues AI visibility measurement: the inherent inconsistency of AI responses themselves. While many acknowledge that different tools yield varying numbers and that sentiment scoring can be unreliable, the deeper issue stems from the generative nature of the AI medium.

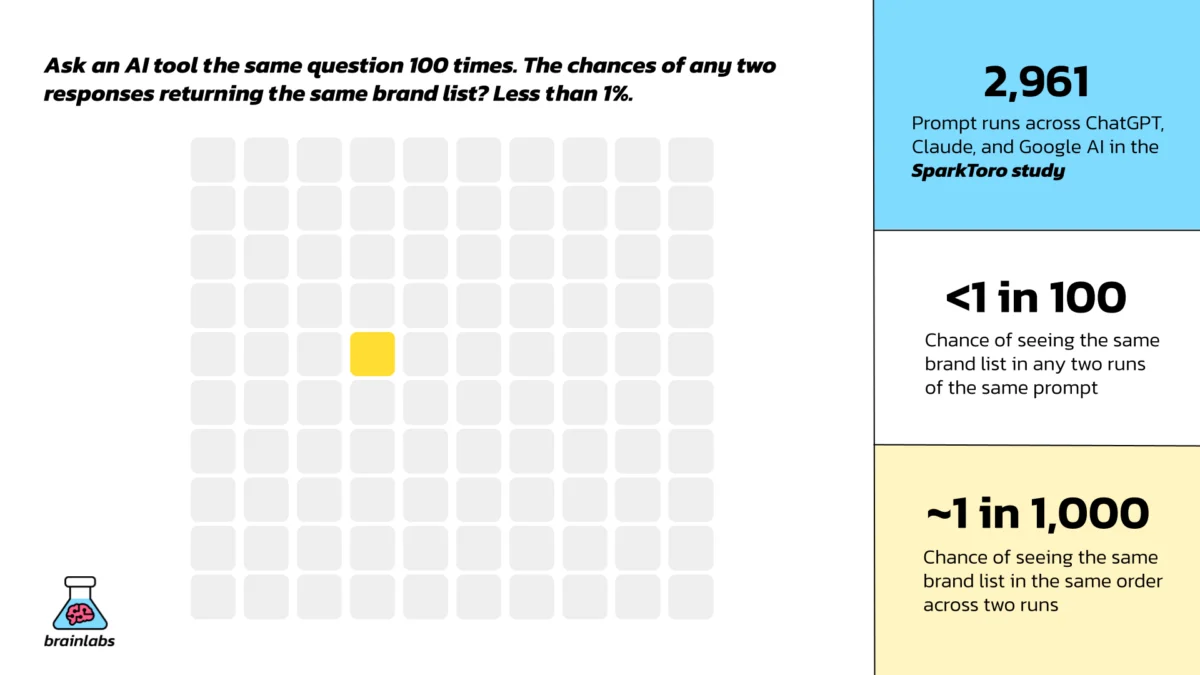

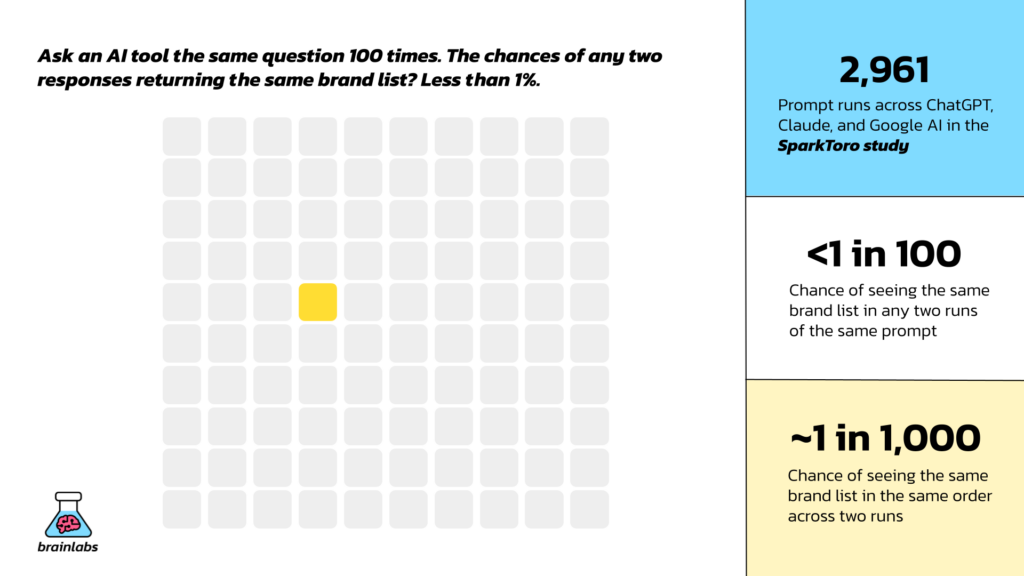

A landmark study by SparkToro’s Rand Fishkin, analyzing nearly 3,000 prompt runs across ChatGPT, Claude, and Google AI, revealed a startling lack of consistency. The research found that the probability of a given tool returning the same list of brand recommendations for an identical prompt was less than 1 in 100. When the order of the recommendations is also taken into account, the probability drops to closer to 1 in 1,000.
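One simple way to quantify this kind of run-to-run inconsistency on your own sampled data is to compare every pair of runs for the same prompt. The function below is an illustrative metric, not the methodology SparkToro used: it reports the share of run pairs that returned the same set of brands and the share that returned the same brands in the same order. The brand lists in the example are made up.

```python
from itertools import combinations


def consistency(runs: list[list[str]]) -> tuple[float, float]:
    """Pairwise agreement between repeated runs of one prompt.

    Returns (share of run pairs recommending the same set of brands,
             share of run pairs recommending the same brands in the same order).
    """
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs")
    same_set = sum(frozenset(a) == frozenset(b) for a, b in pairs)
    same_order = sum(a == b for a, b in pairs)
    return same_set / len(pairs), same_order / len(pairs)


if __name__ == "__main__":
    # Made-up brand lists from four runs of the same prompt.
    runs = [
        ["Acme", "Globex", "Initech"],
        ["Globex", "Acme", "Initech"],
        ["Acme", "Globex", "Initech"],
        ["Acme", "Hooli"],
    ]
    print(consistency(runs))  # (0.5, 0.16666666666666666)
```

Order-sensitive agreement can never exceed set agreement, which mirrors the pattern described above: matching lists are rare, and matching ordered lists are rarer still.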

This fundamental inconsistency renders the concept of a "ranking," a cornerstone of traditional Search Engine Optimization (SEO) reporting, obsolete in the AI search paradigm. Instead of reporting that a brand occupies "position three," marketers now work with metrics like being "mentioned in 47% of responses to a given prompt cluster." This is not a degraded form of ranking; it represents a "fundamentally different signal requiring a fundamentally different way of thinking."
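Because a mention rate such as 47% is a proportion estimated from a finite number of runs, it helps to attach an uncertainty range to it. The sketch below uses the Wilson score interval, a standard interval for binomial proportions; the article does not prescribe a method, and both the function name and the assumption that runs are independent draws with a single underlying mention probability are illustrative.

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a mention rate; z=1.96 gives roughly 95% coverage.

    Assumes each run is an independent draw with the same underlying
    probability of mentioning the brand (a simplification).
    """
    if runs <= 0 or not 0 <= mentions <= runs:
        raise ValueError("need runs > 0 and 0 <= mentions <= runs")
    p = mentions / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    margin = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return center - margin, center + margin


if __name__ == "__main__":
    low, high = wilson_interval(47, 100)
    print(f"47/100 mentions: {low:.3f} to {high:.3f}")
```

For 47 mentions in 100 runs this gives roughly 0.375 to 0.567, so a reported 47% is consistent with anything from about 38% to about 57%. More runs narrow the range, which is one reason trends across many runs say more than a single snapshot.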

The Zero-Click Conundrum: A Shift in Influence

The digital marketing world has long grappled with the concept of "zero-click search," where users obtain the information they need directly from the search results page or AI interface, without needing to click through to a website. This phenomenon is particularly pronounced in AI-driven interactions. When a user asks an AI for a recommendation, such as "the best accounting software for a growing startup," they often receive a direct, trusted answer and have far less reason to click through to multiple sources to verify it.

Despite this widely acknowledged reality, many marketing leaders continue to focus on the wrong metrics. Common refrains such as "Why is our LLM click volume so low?" or, more concerning still, "This is only like 1% of organic traffic, does this even really matter?" stem from a deeply ingrained attribution infrastructure.

For two decades, the industry has built a measurement stack, including Google Analytics 4 (GA4), Search Console, and UTM parameters, designed to quantify clicks and attribute outcomes to them. The whole system assumes that value is primarily derived from a click. When clicks stop being the primary conduit for influence, the framework needs a fundamental reorientation, a task far more complex than simply updating a dashboard.

A brand mention in an AI response works like a brand impression "on steroids": an impression delivered by a highly trusted and seemingly objective influencer. The user absorbs this commentary on the brand’s positioning, and it shapes their consideration set, yet none of it registers in traditional analytics. Instead, the influence often surfaces downstream as a branded search, a direct website visit, or a purchase decision. This is the "halo effect" of AI mentions, a growing and tangible force that current measurement systems are ill-equipped to capture accurately.

Intelligence Over Accounting: A New Framework for Measurement

Given the probabilistic nature of AI visibility data, the absolute numbers are less important than the insights they can generate. Marketers must shift their focus from precise accounting to deriving actionable intelligence. This means prioritizing trends, competitive benchmarks, directional signals, prompt-level patterns, and citation source breakdowns. These elements, when understood within the context of uncertainty, become genuinely meaningful.

Brainlabs, a prominent digital marketing agency, frames this approach as "intelligence over accounting." This philosophy deliberately moves away from the instinct to treat AI visibility metrics as definitive numbers to be reported and compared week-over-week as ends in themselves. Instead, the focus is on using these signals to inform strategy and drive action.

In practice, this intelligent approach involves several key strategies:

- Testing Multiple Data Sources and Seeking Convergence: When different AI visibility tools tell a similar directional story (for instance, that the brand is losing ground to a competitor in a specific query cluster), that signal is valuable even if the exact numbers diverge. Convergence across imperfect sources offers a more robust indication than the false precision of a single tool.

- Prioritizing Mentions Over Citations: While traditional SEO training emphasizes the importance of links (citations), AI visibility data suggests that brand mentions hold greater immediate influence. Evidence increasingly indicates that being mentioned in AI responses significantly impacts downstream brand behavior, including branded search volume, direct traffic, and ultimately, conversions. The mention, therefore, becomes the primary signal, with the link a valuable, but secondary, bonus.

- Reading AI Metrics Alongside Traditional SEO KPIs: AI visibility data does not replace organic traffic analysis but rather contextualizes it. For example, a rise in branded search volume alongside a decline in organic click volume could plausibly be explained by increased AI mentions. Similarly, a competitor whose domain authority is flat but whose share of AI citations is climbing reveals where authority is shifting in the AI landscape. These are the narrative insights that intelligent interpretation of AI visibility data can provide.
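The convergence test described in the first point can be made explicit. In the minimal sketch below, the tool names and values are hypothetical and illustrative only. Each tool's series is reduced to a direction (up, down, or flat within a tolerance), and a signal counts as converged only when at least `min_agree` tools move the same way and none moves the opposite way. Comparing first and last values rather than fitting a trend line is a deliberate simplification.

```python
def direction(series: list[float], tolerance: float = 0.0) -> int:
    """+1 if the series ends above where it started, -1 if below, 0 if within tolerance."""
    change = series[-1] - series[0]
    if change > tolerance:
        return 1
    if change < -tolerance:
        return -1
    return 0


def converges(
    tool_series: dict[str, list[float]], tolerance: float = 0.0, min_agree: int = 2
) -> tuple[int, dict[str, int]]:
    """Return (shared direction or 0, per-tool directions).

    A direction counts as converged only if at least `min_agree` tools move
    that way and no tool moves the opposite way; flat tools neither help nor block.
    """
    votes = {tool: direction(s, tolerance) for tool, s in tool_series.items()}
    ups = sum(v == 1 for v in votes.values())
    downs = sum(v == -1 for v in votes.values())
    if ups >= min_agree and downs == 0:
        return 1, votes
    if downs >= min_agree and ups == 0:
        return -1, votes
    return 0, votes


if __name__ == "__main__":
    # Hypothetical tools reporting the brand's share of mentions in one query
    # cluster. Their levels disagree, but two of three show the same decline.
    series = {
        "tool_a": [0.42, 0.39, 0.35],
        "tool_b": [0.61, 0.58, 0.55],
        "tool_c": [0.30, 0.31, 0.30],
    }
    print(converges(series, tolerance=0.02))
```

Here the tools disagree by more than 25 points on the level, yet the check reports a shared downward direction, which is the kind of directional signal the text argues is worth acting on.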

The Future of AI Visibility Reporting: Actionable Insights, Not Just Numbers

Constructing genuinely useful AI visibility reporting requires a departure from traditional reporting norms. The focus must be on honesty about data limitations while still extracting actionable insights.

- Lead with Direction, Not Decimals: Instead of reporting precise figures like "Our mention rate is 43.7%," which has no reliable baseline to give it absolute meaning, focus on directional trends. A statement like "Our mention rate on high-intent financial services prompts is up 12 points quarter-on-quarter" provides a meaningful and actionable signal. Trends and relative comparisons are far more valuable than point-in-time snapshots.

- Segment by Prompt Intent, Not Just Platform: Knowing that a brand is more visible on ChatGPT than Gemini is less impactful than understanding visibility across different prompt intents. Identifying visibility on high-commercial-intent prompts versus category-awareness prompts offers actionable strategic direction.

- Build the Halo Effect into Your Framework: Even if precise measurement of the halo effect remains elusive, it must be explicitly acknowledged in reporting. Correlating branded search volume trends with periods of improved AI visibility, tracking direct traffic, and monitoring branded search uplift following content investments aimed at improving AI citation rates are crucial steps.

- Report Alongside, Not Instead Of, Traditional Metrics: AI visibility data should be viewed as an additive component of the existing measurement stack. Organic traffic, Google Search Console (GSC) data, and conversion rates remain indispensable. AI visibility data offers a crucial lens on the influences shaping these metrics at a layer above direct clicks.
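Leading with direction and segmenting by intent can be combined in a single report line per segment: the quarter-on-quarter change in percentage points, plus a flag for whether that change exceeds sampling noise. The sketch below uses a two-proportion z-test for the flag. This is one standard choice, not something the article specifies, and the `Segment` fields, intent labels, and counts are hypothetical. The first segment is set up to show a 12-point rise like the one in the "Lead with Direction, Not Decimals" example.

```python
import math
from dataclasses import dataclass


@dataclass
class Segment:
    """Mention counts for one prompt-intent segment in two consecutive quarters."""
    intent: str
    prev_mentions: int
    prev_runs: int
    curr_mentions: int
    curr_runs: int


def change_in_points(seg: Segment) -> tuple[float, float]:
    """Return (quarter-on-quarter change in percentage points, two-proportion z statistic)."""
    p_prev = seg.prev_mentions / seg.prev_runs
    p_curr = seg.curr_mentions / seg.curr_runs
    pooled = (seg.prev_mentions + seg.curr_mentions) / (seg.prev_runs + seg.curr_runs)
    se = math.sqrt(pooled * (1 - pooled) * (1 / seg.prev_runs + 1 / seg.curr_runs))
    z = (p_curr - p_prev) / se if se > 0 else 0.0
    return 100 * (p_curr - p_prev), z


def report(segments: list[Segment], z_threshold: float = 1.96) -> list[str]:
    """One line per segment: direction and size of change, plus a noise flag."""
    lines = []
    for seg in segments:
        points, z = change_in_points(seg)
        verdict = "likely real" if abs(z) >= z_threshold else "within noise"
        lines.append(f"{seg.intent}: {points:+.0f} pts QoQ ({verdict})")
    return lines


if __name__ == "__main__":
    # Hypothetical counts of sampled runs that mentioned the brand.
    segments = [
        Segment("high-commercial intent", 62, 200, 86, 200),
        Segment("category awareness", 40, 100, 44, 100),
    ]
    for line in report(segments):
        print(line)
```

With these counts, the report reads "high-commercial intent: +12 pts QoQ (likely real)" and "category awareness: +4 pts QoQ (within noise)". A four-point move on 100 runs per quarter is easily explained by run-to-run variation, while twelve points on 200 runs is harder to dismiss.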

The Right Benchmark for an Evolving Landscape

The traditional SEO model offered marketers a relatively clear path from query to click to outcome. The disruption of AI search, with its inherent imprecision, can be uncomfortable, leading to a natural inclination to seek out the nearest, albeit shaky, proxy for certainty.

However, the brands poised to succeed in the evolving AI search environment will not be those who find the most convincing-looking numbers for their board slides. They will be the ones who embrace the imprecision, invest in directional intelligence, and develop content and distribution strategies robust enough to resonate across the diverse sources that LLMs draw upon.

The data will improve, measurement methodologies will mature, and attribution models will eventually evolve to account for zero-click influence. In the interim, the present reality demands a different approach, because "imprecise and actionable beats precise and paralyzing every time." The fundamental truth remains: your AI visibility data may be imperfect, but it is still a critical tool for navigating the future of information discovery.

Marketers seeking to understand how to effectively leverage AI visibility measurement for their specific industries, whether in retail, financial services, or B2B sectors, are encouraged to engage with experts who can provide tailored strategies and insights. The journey into AI-driven marketing is ongoing, and a proactive, intelligent approach to measurement will be key to unlocking its full potential.