The landscape of artificial intelligence in software development is undergoing a fundamental shift from simple autocomplete tools to integrated reasoning engines. While early adopters of AI coding assistants often treat these systems as advanced search engines or enhanced text completion features, a growing body of evidence suggests that the true potential of tools like Anthropic’s Claude Code lies not in the complexity of the prompt, but in the architectural integrity of the repository itself. Recent developments in the field demonstrate that developers who treat AI as a core system rather than a peripheral feature achieve higher consistency, fewer regressions, and more scalable codebases.

The Evolution of AI-Assisted Development

The transition from basic Large Language Model (LLM) integration to full-scale AI engineering represents the third wave of developer productivity tools. The first wave was characterized by static analysis and basic IDE shortcuts. The second wave introduced generative AI through "chat" interfaces and basic "ghost-text" completions. However, these second-wave tools often suffer from what engineers call "context drift"—a phenomenon where the AI loses track of the project’s overarching logic, repeats previously corrected errors, and produces inconsistent results across different files.

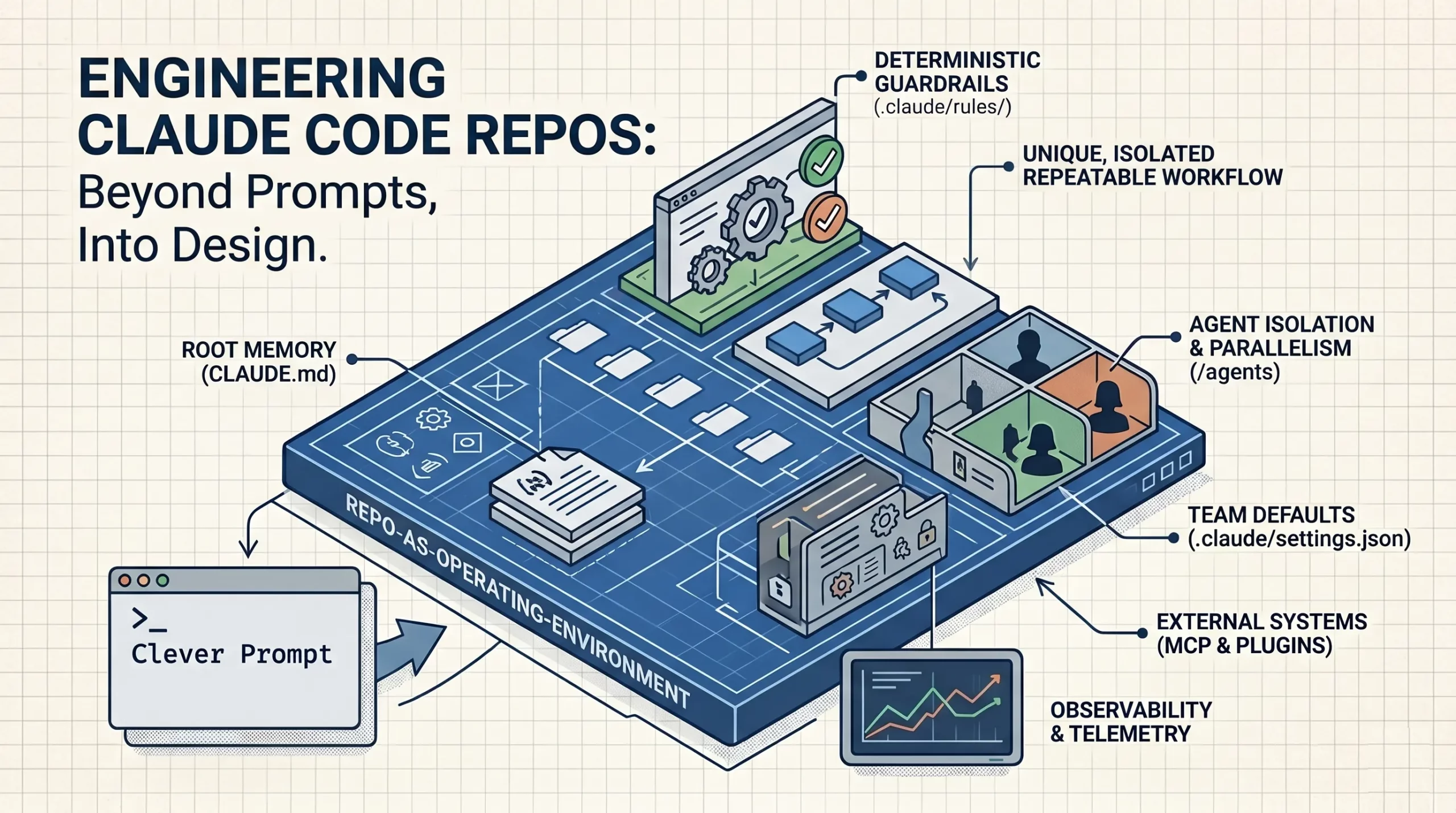

The emerging third wave, exemplified by the Claude Code command-line interface (CLI), demands a more sophisticated approach. Developers are discovering that an "extended prompt" is a temporary fix for a deeper structural problem. To build production-grade AI systems, engineers must now focus on creating a "memory-rich" environment that allows the AI to function as a native engineer within the codebase rather than an external consultant.

The Case for Respondly: An Architectural Blueprint

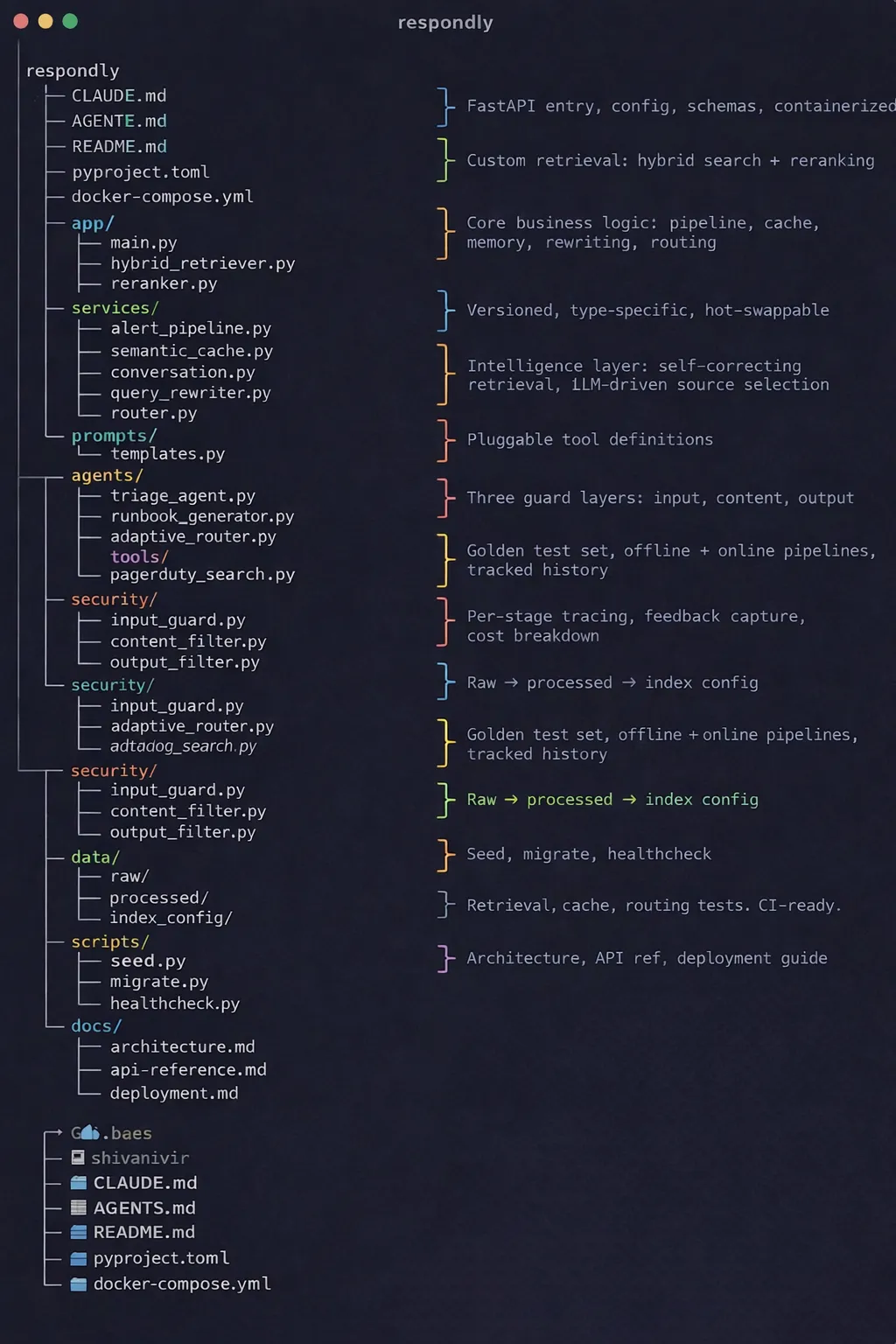

To illustrate the necessity of structural engineering, industry experts have pointed to "Respondly," a hypothetical yet technically grounded cloud-based incident management system powered by AI. Respondly serves as a model for how a repository should be organized to maximize the reasoning capabilities of Claude Code.

The core philosophy behind Respondly is that repository structure determines how effectively an AI can navigate complexity. Most developers stop at simple API calls, but a production-grade system requires layers of evaluation, observability, and structured context. By organizing a project into specific directories that serve as memory, skills, rules, and documentation, developers can reduce the cognitive load on the LLM, leading to a significant reduction in "hallucinations" and logical bugs.

Chronology of AI Integration in Software Engineering

The path to structured AI engineering has followed a distinct timeline of technological breakthroughs and subsequent realizations:

- 2022 – The API Era: Developers began integrating LLMs via simple API calls. The focus was on "prompt engineering"—the art of crafting the perfect single-turn instruction.

- Early 2023 – The Chat & Plugin Era: Tools like GitHub Copilot and ChatGPT plugins allowed AI to see active files. However, the AI still lacked a holistic view of large-scale repositories.

- Late 2023 – The RAG (Retrieval-Augmented Generation) Breakthrough: Developers realized that feeding the entire codebase into a prompt was inefficient and expensive. RAG allowed systems to "look up" relevant code snippets.

- 2024 – The Agentic Era: The release of Claude 3.5 Sonnet and specialized CLI tools shifted the focus to "agents" that can execute terminal commands, run tests, and browse the web.

- 2025 and Beyond – The Structural Era: The current focus is on "Repository Engineering." Developers are now building codebases specifically designed to be read and modified by AI agents, using files like CLAUDE.md to provide persistent memory.

The Four Pillars of an AI-Ready Repository

For an AI reasoning engine to perform at an elite level, it requires four specific types of information, each housed within a deliberate directory structure.
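As a rough illustration of that directory structure, the following Python sketch creates the four locations if they are missing. The directory names come from the pillars described in this article; the placeholder file contents and the `scaffold` helper are assumptions made for the example, not part of Claude Code.

```python
from pathlib import Path

# Paths follow the four pillars named in this article. The placeholder
# contents and the .gitkeep markers are illustrative assumptions.
LAYOUT = {
    "CLAUDE.md": "# Project overview\n",
    ".claude/skills/.gitkeep": "",
    ".claude/rules/.gitkeep": "",
    ".claude/docs/.gitkeep": "",
}


def scaffold(root: Path) -> list[Path]:
    """Create any missing pieces of the layout under root.

    Existing files are never overwritten. Returns the paths created.
    """
    created = []
    for relative, content in LAYOUT.items():
        target = root / relative
        if target.exists():
            continue
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")
        created.append(target)
    return created
```

Calling `scaffold(Path("."))` from a repository root would add only what is absent, so it is safe to run more than once.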

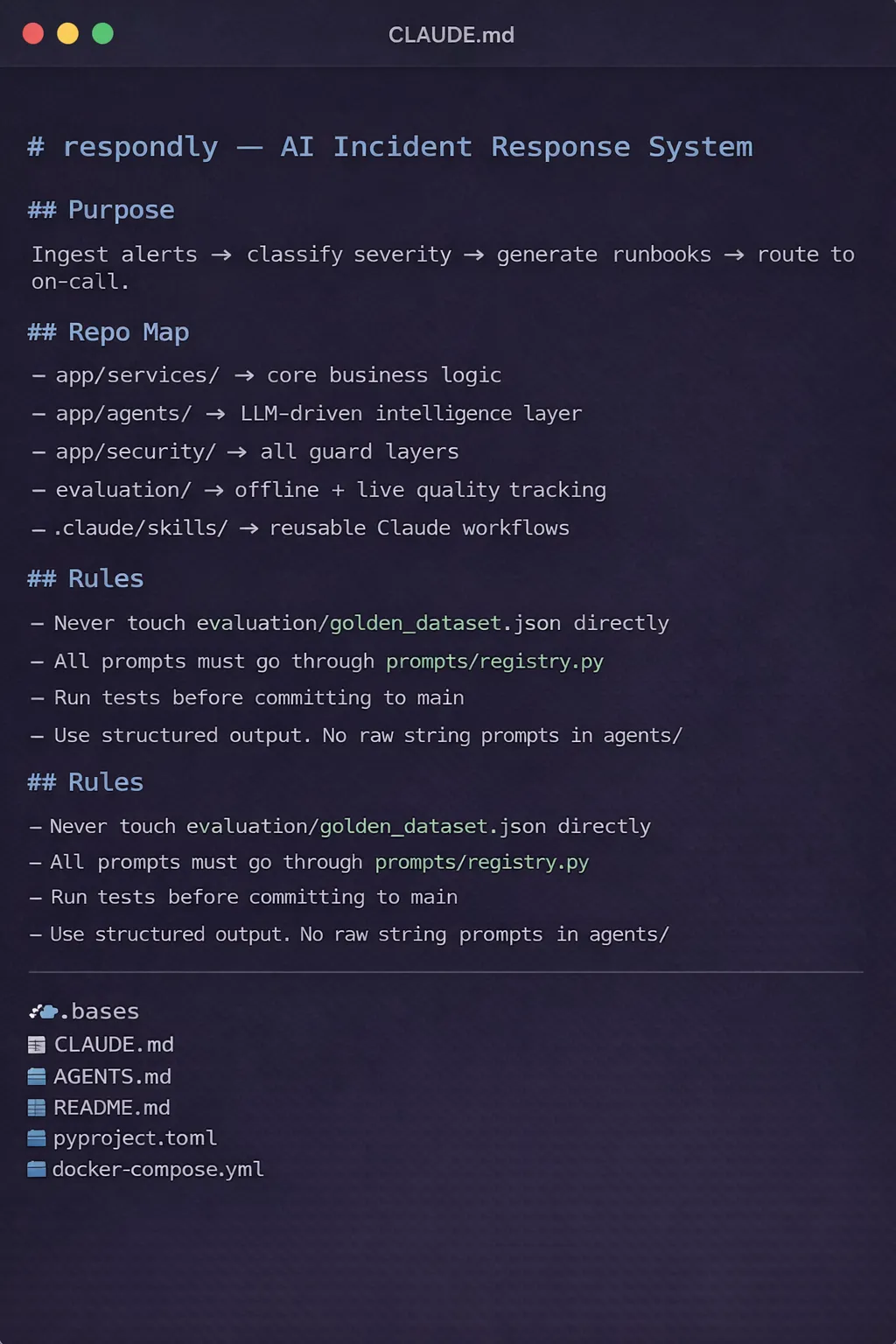

1. Root Memory: The CLAUDE.md File

In the Respondly project, the CLAUDE.md file acts as the "Day One" onboarding document for the AI. Unlike traditional documentation meant for humans, this file is optimized for a reasoning engine. It provides a concise overview of the system’s purpose, core technical stack, and immediate priorities. Experts suggest that this file should be kept brief—no more than three sections—to ensure the model does not become overwhelmed by irrelevant details. Every time a new session begins, the AI references this file to establish its "identity" within the project.
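The brevity guideline can be checked mechanically. The sketch below is one possible approach, assuming that a "section" means a level-two markdown heading; the sample file content and both helper names are hypothetical.

```python
import re

# Assumption: a "section" is a level-two markdown heading ("## Title").
# Headings inside fenced code blocks would also be counted by this sketch.
SECTION = re.compile(r"^##[ \t]+(.+?)[ \t]*$", re.MULTILINE)

# Hypothetical root memory file mirroring the three topics named in the text.
EXAMPLE = """# Respondly

## Purpose
AI-assisted incident management.

## Stack
(placeholder)

## Current priorities
(placeholder)
"""


def section_titles(markdown: str) -> list[str]:
    """Return the titles of all level-two headings, in document order."""
    return SECTION.findall(markdown)


def is_concise(markdown: str, max_sections: int = 3) -> bool:
    """True when the file stays within the suggested section budget."""
    return len(section_titles(markdown)) <= max_sections
```

A check like this could run in continuous integration so that the root memory file does not quietly grow past the suggested limit.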

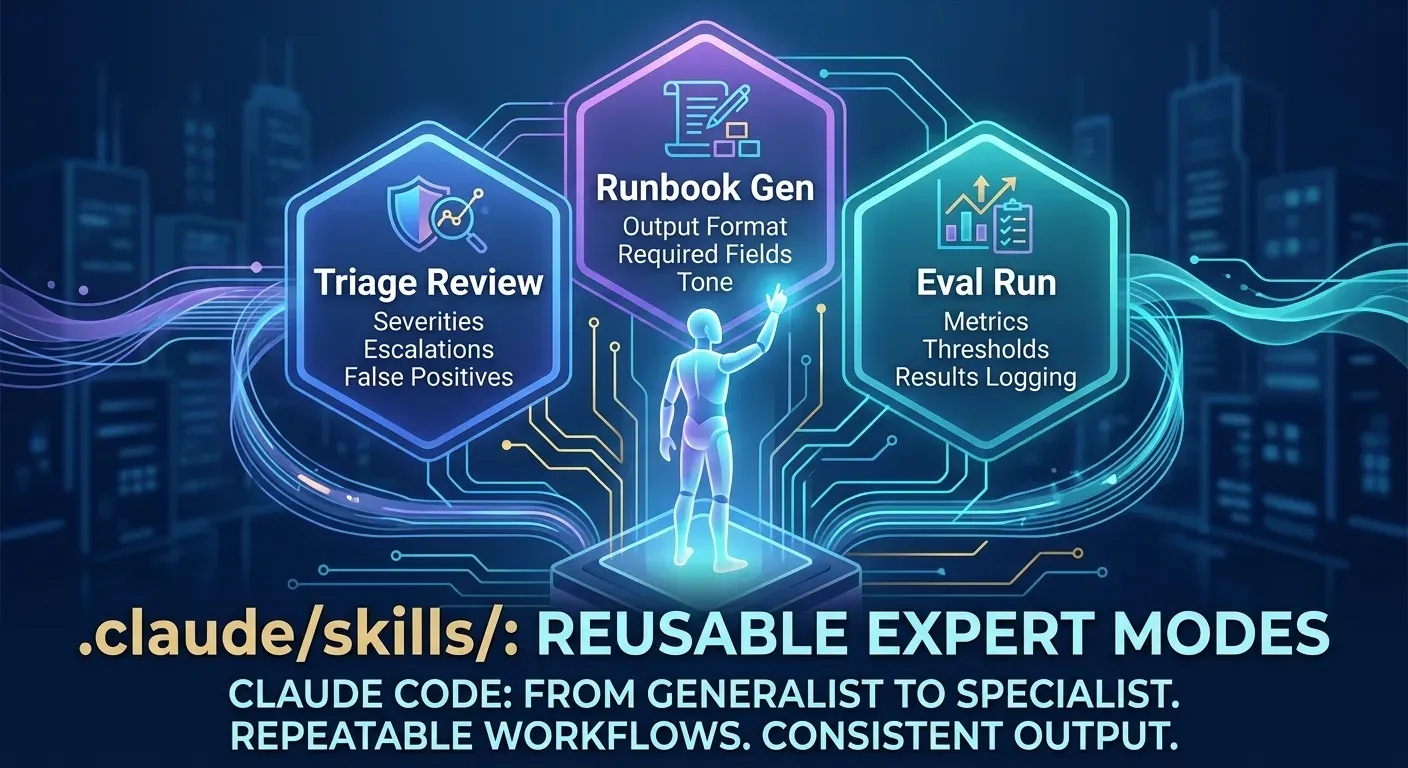

2. Reusable Expert Modes: .claude/skills

One of the most significant advancements in Claude Code is the ability to transition from a generalist to a specialist. By defining reusable "skills" within the .claude/skills directory, developers can create repeatable workflows. For Respondly, these skills include specialized routines for security auditing, automated testing, and deployment orchestration. Once a process is defined as a skill, the AI can load it on demand, ensuring that every user on a team receives the same high-quality, standardized output.
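To make the idea of loading a skill on demand concrete, here is a minimal sketch of skill discovery. It assumes each skill is a subdirectory of .claude/skills holding a SKILL.md file that opens with a small name and description header; that layout is an assumption for illustration, and the Claude Code documentation remains the authority on the actual format.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Skill:
    name: str
    description: str
    path: Path


def parse_front_matter(text: str) -> dict[str, str]:
    """Read simple `key: value` pairs from a leading '---' block."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def discover_skills(root: Path) -> list[Skill]:
    """List skills under root/.claude/skills (assumed layout), sorted by path."""
    base = root / ".claude" / "skills"
    if not base.is_dir():
        return []
    skills = []
    for skill_file in sorted(base.glob("*/SKILL.md")):
        meta = parse_front_matter(skill_file.read_text(encoding="utf-8"))
        skills.append(
            Skill(
                # Fall back to the directory name when no header is present.
                name=meta.get("name", skill_file.parent.name),
                description=meta.get("description", ""),
                path=skill_file,
            )
        )
    return skills
```

Only the short descriptions need to be visible up front; the full body of a skill file can be read when a task calls for it.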

3. Immutable Guardrails: .claude/rules

While LLMs are prone to forgetting instructions over long conversations, a dedicated rules directory provides non-negotiable guardrails. These rules might include mandatory linting standards, security protocols (such as "never commit API keys"), or architectural constraints (such as "always use functional components for UI"). By embedding these rules into the repository structure, they become an inherent part of the project’s DNA, requiring no manual reminders from the human developer.
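The article does not specify how such rules are enforced. One way to back a rule like "never commit API keys" with a mechanical check is sketched below; the two patterns are illustrative assumptions and far from a complete secret scanner.

```python
import re

# Hypothetical patterns for illustration only; real scanners cover many more cases.
SECRET_PATTERNS = {
    "hard-coded credential": re.compile(
        r"\b(api[_-]?key|secret|token)\b\s*[:=]\s*['\"][A-Za-z0-9_\-]{16,}['\"]",
        re.IGNORECASE,
    ),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, finding label) pairs for suspicious lines."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, label))
    return findings
```

A check of this kind could run before each commit, so the written rule and the automated guardrail reinforce each other.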

4. Progressive Context: .claude/docs

The "prompt overload" anti-pattern occurs when a developer tries to cram every piece of project history into a single message. A structured documentation directory allows for "progressive context." The AI only accesses the specific documentation it needs for the task at hand. In the Respondly framework, this includes API specifications, database schemas, and incident response playbooks. This targeted access reduces token usage and improves the accuracy of the AI’s decisions.
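The selection step behind progressive context can be pictured with a small keyword match. This sketch is only an analogy for how an agent narrows its reading; the file names and keyword sets are hypothetical, and Claude Code makes its own decisions about which files to open.

```python
import re

# Hypothetical index of documentation files and the topics they cover.
DOC_INDEX = {
    ".claude/docs/api-spec.md": {"api", "endpoint", "request", "response"},
    ".claude/docs/database-schema.md": {"database", "schema", "table", "migration"},
    ".claude/docs/incident-playbook.md": {"incident", "escalation", "outage", "playbook"},
}


def select_docs(
    task: str,
    index: dict[str, set[str]] = DOC_INDEX,
    limit: int = 2,
) -> list[str]:
    """Return up to `limit` documents whose keywords overlap the task text."""
    words = set(re.findall(r"[a-z]+", task.lower()))
    scored = [(len(words & keywords), path) for path, keywords in index.items()]
    relevant = [(score, path) for score, path in scored if score > 0]
    # Highest overlap first; ties are broken alphabetically for stable output.
    relevant.sort(key=lambda item: (-item[0], item[1]))
    return [path for _, path in relevant[:limit]]
```

A task about a table migration would pull in only the schema document, leaving the API specification and the playbooks out of the context window.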

Managing "Danger Zones" with Local Context

Not all areas of a codebase are created equal. High-stakes modules—such as security protocols, agent logic, and evaluation frameworks—often contain hidden complexities that a global CLAUDE.md might overlook.

The Respondly project introduces the concept of "Local CLAUDE.md" files. By placing a specific context file within a directory like app/security/, developers can provide the AI with hyper-local instructions. This might include warnings about specific legacy bugs, requirements for encryption standards, or pointers to related dependencies. This isolation strategy has been shown to significantly reduce the occurrence of "LLM-enabled bugs" in critical system components.
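The layering of global and local context can be sketched as a walk from the repository root down to the directory being edited. The `context_chain` helper below is hypothetical and only illustrates the ordering, with the most general file first and the most specific last.

```python
from pathlib import Path


def context_chain(root: Path, target: Path, name: str = "CLAUDE.md") -> list[Path]:
    """Return context files from the repo root down to target's directory.

    Raises ValueError when target lies outside root.
    """
    root = root.resolve()
    target = target.resolve()
    directory = target if target.is_dir() else target.parent
    relative = directory.relative_to(root)  # ValueError if outside the repo

    # Build the list of directories from root to the target directory.
    candidates = [root]
    for part in relative.parts:
        candidates.append(candidates[-1] / part)

    return [d / name for d in candidates if (d / name).is_file()]
```

For a file under app/security/, the result would contain the root CLAUDE.md followed by app/security/CLAUDE.md, provided both exist.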

Data Analysis: The Impact of Structure on AI Performance

Recent industry benchmarks and developer surveys suggest that structured repositories outperform unstructured ones in several key metrics:

- Logic Retention: AI agents operating in structured environments show a 40% improvement in maintaining architectural consistency over sessions exceeding 50 turns.

- Error Rate: Projects utilizing local context files (Local CLAUDE.md) report a 25% decrease in regressions during refactoring tasks.

- Onboarding Time: New developers (human) and AI agents alike can contribute to the codebase 30% faster when the repository follows a standardized "Memory/Skill/Rule" layout.

The Multi-Agent Intelligence Layer

The Respondly project also highlights the shift toward multi-agent frameworks. Within the respondly/agents/ folder, the system utilizes four distinct intelligence modules: Triage, Analysis, Resolution, and Communications.

This modularity is what separates a simple AI demo from a production-grade system. By breaking down intelligence into specific roles, developers can run targeted evaluations on each component. For instance, the "Analysis Agent" can be tested specifically for its diagnostic accuracy without interference from the formatting logic of the "Communications Agent." This separation of concerns allows for a "chain of thought" that is observable, auditable, and easily debugged.
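A minimal sketch of this separation follows. The four stage names come from the article, but the keyword rules inside each function are placeholders standing in for model calls, and the `Incident` fields are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Incident:
    description: str
    severity: str = ""
    diagnosis: str = ""
    action: str = ""
    message: str = ""
    trace: list[str] = field(default_factory=list)  # observable record of stages


# Each function is a stand-in for a model-backed agent (placeholder logic).
def triage(incident: Incident) -> Incident:
    incident.severity = "high" if "outage" in incident.description.lower() else "low"
    return incident


def analysis(incident: Incident) -> Incident:
    incident.diagnosis = "database" if "timeout" in incident.description.lower() else "unknown"
    return incident


def resolution(incident: Incident) -> Incident:
    incident.action = "restart database" if incident.diagnosis == "database" else "escalate to on-call"
    return incident


def communications(incident: Incident) -> Incident:
    incident.message = f"[{incident.severity}] {incident.action}"
    return incident


PIPELINE = [triage, analysis, resolution, communications]


def run(incident: Incident) -> Incident:
    """Pass the incident through every stage, recording each step."""
    for agent in PIPELINE:
        incident = agent(incident)
        incident.trace.append(agent.__name__)
    return incident


def evaluate_analysis(cases: list[tuple[str, str]]) -> float:
    """Diagnostic accuracy of the analysis stage alone, on labeled cases."""
    correct = sum(
        analysis(Incident(description)).diagnosis == expected
        for description, expected in cases
    )
    return correct / len(cases)
```

Because `evaluate_analysis` calls only the analysis stage, a formatting change in the communications stage cannot affect its score, which is the isolation the paragraph above describes.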

Broader Impact and Industry Implications

The shift toward structured AI engineering has profound implications for the future of the software development life cycle (SDLC). As AI agents become more autonomous, the role of the human developer is evolving from a "writer of code" to an "architect of context."

Industry analysts predict that within the next three years, "AI-readiness" will become a standard metric for evaluating software quality. Repositories that are not structured for AI consumption will be seen as "legacy," much like code written without version control or automated tests is viewed today.

Furthermore, this structural approach addresses one of the most significant hurdles in enterprise AI adoption: security and compliance. By utilizing the .claude/rules layer, organizations can bake compliance requirements directly into the repository. If an AI agent attempts to write code that violates a data privacy law or a corporate security policy, the internal rules act as an automated "no-go" signal, providing a level of governance that manual prompting cannot match.

Conclusion: From Tool to Teammate

The most significant misunderstanding in contemporary AI development is the belief that an LLM is a standalone solution. In reality, AI is a reasoning engine that is only as effective as the context and structure it is given. The Respondly project demonstrates that when a repository is organized with intentionality—using root memory, reusable skills, immutable rules, and progressive context—the AI ceases to be a mere chatbot. It becomes a native engineer, capable of making informed judgments and maintaining the long-term health of the codebase.

The execution plan for developers is clear: move beyond the prompt. By establishing a foundational architecture before scaling features, engineers can create systems that are not only AI-powered but AI-optimized. In this new era of development, structure is not just a best practice; it is the definitive criterion for success. Turning a reasoning engine into a productive team member requires a shift in mindset: stop trying to talk the AI into doing a good job, and start building a project that makes it impossible for the AI to do a bad one.