The debate over content pruning in Search Engine Optimization (SEO) has intensified, moving beyond a simplistic ‘delete low-performing content’ directive to a more sophisticated, context-dependent approach. Veteran SEO Barry Adams recently articulated this evolving perspective within the NewsSEO Slack community, emphasizing that content pruning is not an overarching "industry-wide best practice" but rather a specialized tool requiring precise application. This viewpoint reflects a broader shift in SEO methodology, where empirical testing and a deep understanding of a specific site take precedence over generalized recommendations.

Adams’ insights highlight a critical divergence from earlier, more aggressive content pruning strategies. "Many SEOs have been proclaiming the virtues of content pruning for many years, with some anecdotal evidence to back up its success," Adams observed, "but there have been plenty of instances where the evidence was very thin, and even where content pruning caused disaster. So it’s not an ‘industry-wide best practice.’ It’s just another tool in a very broad arsenal, and a tool that needs to be applied only when it is the right tool for the job. And that is very context-dependent." This caution reflects a growing recognition within the SEO community that indiscriminate content removal can inadvertently harm a website’s topical authority, search visibility, and overall digital footprint.

The Debate Unfolds: Pruning vs. Consolidation

Adding another layer to this strategic discussion, Ulrik Baltzer, SEO Manager at TV 2 Danmark, champions content consolidation as a potentially superior alternative to outright pruning. Baltzer cited examples, such as the widely discussed content strategy of CNET, suggesting that merging related articles could yield more beneficial outcomes. "Personally, I think [CNET] could stick to 1+2 in their content pruning process without deprecating [content]," Baltzer stated. "By consolidating articles without deprecating unnecessarily, they could retain topical authority and focus their editorial efforts on fewer and better articles going forward. It’s like consolidating ten different stories about the history of CPUs into one mother article or something along those lines. But it depends on your perspective, I guess." This perspective emphasizes the long-term value of comprehensive content hubs that establish a site as an authoritative source on specific subjects, rather than fragmenting expertise across numerous, potentially thin, articles.

The core tension between pruning and consolidation lies in their respective impacts on topical authority. Pruning aims to remove perceived dead weight, theoretically improving crawl efficiency and concentrating "link equity" on higher-performing pages. However, it risks inadvertently deleting content that, while individually low-performing, contributes to a broader topical web that Google’s algorithms might interpret as comprehensive expertise. Consolidation, conversely, seeks to merge these fragmented pieces into a robust, singular resource, aiming to boost the authority and depth of the consolidated page, thereby enhancing its chances of ranking for a wider array of related queries.

Historical Context: The Genesis of Content Quality and Pruning

The concept of content pruning gained significant traction in the SEO landscape following Google’s algorithm updates, particularly the Panda update first rolled out in 2011. Panda targeted websites with "thin, low-quality content" or "duplicate content," causing widespread declines in search rankings for sites that had prioritized quantity over quality. This event prompted many webmasters and SEOs to re-evaluate their content strategies and set off an initial wave of aggressive content audits and deletions. The prevailing wisdom at the time often suggested that removing pages deemed to be of low quality or receiving minimal traffic would improve the overall quality signal of a website, thereby boosting the rankings of its remaining, higher-quality content.

Over the subsequent years, Google continued to refine its understanding of content quality, introducing concepts like E-A-T (Expertise, Authoritativeness, Trustworthiness) and later E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) in its Search Quality Rater Guidelines as a framework for evaluating content. These evolving guidelines further solidified the idea that simply having a large volume of content was insufficient; the content needed to be valuable, relevant, and credible to users. This continuous emphasis on quality fueled the content pruning discussion, with SEOs seeking methods to align their sites with Google’s quality expectations.

The Nuance of Application: When is Pruning the Right Tool?

The evolving consensus, as articulated by Adams, is that content pruning is a highly contextual tool. Its efficacy depends on numerous factors, including the website’s size, industry, target audience, existing content architecture, and overarching business objectives. For instance, an e-commerce site with thousands of product pages might approach pruning differently than a niche blog or a large news publisher. A site struggling with significant crawl budget issues due to an excessive number of low-value pages might benefit more from pruning than a site with a well-maintained, albeit slightly underperforming, content library.

Factors to consider when determining if pruning is "the right tool" include:

- Website Size and Complexity: Larger sites with millions of pages might experience more pronounced benefits from pruning, particularly in terms of crawl budget optimization, compared to smaller sites.

- Content Type and Purpose: Evergreen content, which remains relevant over time, should be treated differently from timely news articles or event-specific content that naturally depreciates in value.

- Topical Authority: Websites striving to be definitive sources on specific topics must carefully assess whether a piece of content, even if individually low-performing, contributes to their overall topical depth.

- Resource Allocation: Maintaining and updating a vast library of content consumes significant editorial and technical resources. Pruning or consolidating can free up these resources for creating higher-impact content.

- User Experience (UX): A cluttered website with many low-quality or outdated pages can detract from user experience. A streamlined content library can improve navigation and user satisfaction.

The Imperative of Testing: Challenging Conventional Wisdom

Perhaps the most crucial advice for navigating content strategy, including pruning, came from a LinkedIn job posting that emphasized a scientific approach: "Don’t accept theories at face value, and enjoy testing to prove the effectiveness of tactics." This sentiment fits the current state of SEO, where algorithms are constantly evolving and what works for one site may not work for another. The admonition to "test, test, and test again" serves as a fundamental principle for any SEO endeavor.

Implementing content pruning or consolidation without prior testing or a robust understanding of potential outcomes can lead to detrimental consequences. SEO professionals are increasingly employing A/B testing, controlled experiments, and meticulous data analysis to validate their strategies before widespread implementation. This includes tracking key performance indicators (KPIs) such as organic traffic, keyword rankings, conversion rates, and bounce rates before and after content modifications. The data derived from these tests provides objective evidence of effectiveness, mitigating the risks associated with broad, unproven changes.
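A before-and-after comparison is more convincing when the pruned or consolidated pages are measured against a comparable group that was left untouched, so that seasonality and algorithm updates affect both groups alike. The sketch below is one minimal way to do that, assuming daily organic session counts have already been exported for both groups. The function names and all figures are hypothetical and invented for illustration; a real test would also need enough pages and days to separate signal from noise.

```python
from statistics import mean
from typing import Sequence


def relative_change(before: Sequence[float], after: Sequence[float]) -> float:
    """Relative change in the mean value from the 'before' window to the 'after' window."""
    base = mean(before)
    if base == 0:
        raise ValueError("before-window mean is zero; relative change is undefined")
    return (mean(after) - base) / base


def estimated_lift(
    test_before: Sequence[float],
    test_after: Sequence[float],
    control_before: Sequence[float],
    control_after: Sequence[float],
) -> float:
    """Change in the test group minus the change in the untouched control group."""
    return relative_change(test_before, test_after) - relative_change(
        control_before, control_after
    )


# Hypothetical daily organic sessions (illustrative numbers only).
test_before = [100, 110, 90, 100]       # modified section, before the change
test_after = [120, 130, 110, 120]       # modified section, after the change
control_before = [200, 210, 190, 200]   # comparable untouched section, before
control_after = [210, 220, 200, 210]    # comparable untouched section, after

lift = estimated_lift(test_before, test_after, control_before, control_after)
print(f"Estimated lift relative to control: {lift:.1%}")
```

Here the modified section grew 20% while the control grew 5%, so only the 15-point difference is plausibly attributable to the change.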

A Strategic Framework for Content Management: Beyond Simple Deletion

The original three-step process for content pruning offers a solid foundation, but in light of the evolving debate, it can be enriched to encompass a more comprehensive content management strategy.

1. Comprehensive Content Audit and Performance Analysis

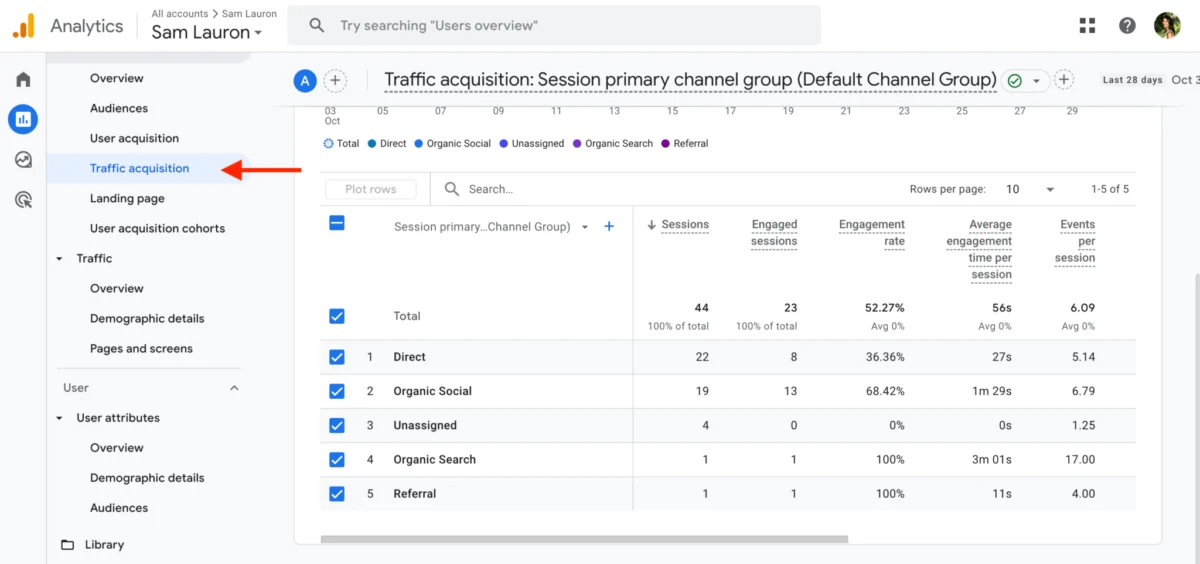

The initial step involves a rigorous audit of the entire content inventory. This goes beyond merely identifying struggling content to understanding the "why" behind its performance. Key metrics to analyze include:

- Organic Traffic: Pages with significant drops in organic sessions over time.

- Keyword Rankings: Content that has lost rankings for target keywords or ranks for irrelevant terms.

- Backlinks: Pages that have accumulated valuable backlinks, even if traffic is low, indicating potential for revival.

- Engagement Metrics: High bounce rates, low time on page, or lack of social shares.

- Crawl Data: Pages that are rarely crawled or have crawl errors.

- Content Freshness: Identifying content with outdated information, statistics, or references.

- Duplicate/Near-Duplicate Content: Instances where multiple pages address the same topic with minimal differentiation.

- Topical Contribution: Assessing whether a page contributes to the site’s overall topical authority, regardless of individual performance.

Tools such as Google Analytics, Google Search Console, Screaming Frog, Semrush, Ahrefs, and internal site search logs are indispensable for this phase. A critical component is also a manual review of content to assess its quality, relevance, and alignment with the brand’s voice and mission.
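Once the exports from these tools are merged into one record per URL, the audit criteria can be expressed as simple flags. The following is a rough sketch under stated assumptions: the `PageAudit` fields, the flag names, and the thresholds (a 30% traffic drop, two years without an update) are all hypothetical and should be tuned per site. The `supports_topic_cluster` field stands in for the manual review judgment, which no metric can replace.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PageAudit:
    """One row of a merged audit export (hypothetical schema)."""
    url: str
    sessions_prev: int            # organic sessions, earlier period
    sessions_curr: int            # organic sessions, recent period
    referring_domains: int        # distinct domains linking to the page
    days_since_update: int
    supports_topic_cluster: bool  # set by a human reviewer


def audit_flags(
    page: PageAudit, drop_threshold: float = 0.3, stale_days: int = 730
) -> List[str]:
    """Return descriptive flags for a page; thresholds are illustrative assumptions."""
    flags = []
    if page.sessions_prev > 0:
        drop = (page.sessions_prev - page.sessions_curr) / page.sessions_prev
        if drop >= drop_threshold:
            flags.append("traffic_drop")
    if page.sessions_curr == 0:
        flags.append("no_traffic")
    if page.referring_domains > 0:
        flags.append("has_backlinks")
    if page.days_since_update > stale_days:
        flags.append("stale")
    if page.supports_topic_cluster:
        flags.append("topical_contribution")
    return flags


# Hypothetical page, invented for illustration.
page = PageAudit(
    url="/cpu-history-part-3",
    sessions_prev=400,
    sessions_curr=200,
    referring_domains=5,
    days_since_update=900,
    supports_topic_cluster=True,
)
print(page.url, audit_flags(page))
```

The flags deliberately describe rather than decide: a page flagged both `traffic_drop` and `topical_contribution` is exactly the kind of case where blanket deletion is risky.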

2. Identifying Opportunities for Optimization and Quick Wins

Not all underperforming content requires deletion or consolidation. A significant portion can be revitalized through updates and optimization. Focus on content that shows "signs of life" – meaning audiences and search engines still deem it somewhat relevant, but it has experienced recent performance drops.

Criteria for Quick Wins:

- Recent Drops: Content whose performance decline is relatively recent (e.g., within the last 6-12 months) rather than a long-term decay.

- Existing Rankings: Content that still ranks on the second or third page of search results, indicating potential for improvement with targeted efforts.

- Backlink Profile: Pages with existing backlinks from reputable sources.

- Audience Relevance: Content that addresses evergreen topics or core audience interests, even if currently underperforming.

- Keyword Gaps: Content that can be updated to target new, relevant keywords or cover existing keywords more comprehensively.
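The criteria above can be turned into a first-pass filter over the audit data. This is a sketch only: the `Candidate` fields are hypothetical, the position range of 11 to 30 assumes roughly ten results per page (so pages two and three), and requiring every condition at once is a conservative design choice, not a rule from the source. Keyword-gap analysis is left out because it needs editorial judgment.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical per-page summary used to screen for quick wins."""
    url: str
    months_since_drop: int   # how long ago performance started to decline
    avg_position: float      # average ranking position for the target keyword
    referring_domains: int
    evergreen: bool          # covers an evergreen or core-audience topic


def is_quick_win(
    c: Candidate,
    max_months: int = 12,
    min_pos: float = 11.0,
    max_pos: float = 30.0,
) -> bool:
    """True when the decline is recent, the page still ranks on page two or
    three, and it has backlinks or evergreen relevance to build on."""
    recent = c.months_since_drop <= max_months
    within_reach = min_pos <= c.avg_position <= max_pos
    has_support = c.referring_domains > 0 or c.evergreen
    return recent and within_reach and has_support


# Hypothetical candidates, invented for illustration.
candidates = [
    Candidate("/guide-a", months_since_drop=6, avg_position=14.2,
              referring_domains=3, evergreen=True),
    Candidate("/guide-b", months_since_drop=30, avg_position=55.0,
              referring_domains=0, evergreen=False),
]
quick_wins = [c.url for c in candidates if is_quick_win(c)]
print(quick_wins)
```

Pages that fail the filter are not automatically candidates for removal; they simply move on to the strategic review in the next step.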

Actions for Quick Wins:

- Content Refresh: Update statistics, add new sections, improve readability, and embed new media (images, videos).

- Internal Linking: Strengthen internal links to and from the updated content.

- Meta Data Optimization: Refine titles, meta descriptions, and header tags.

- E-E-A-T Enhancement: Add author bios, source citations, and expert quotes to bolster credibility.

- Call-to-Action (CTA) Optimization: Improve CTAs to better align with user intent and business goals.

3. Strategic Action for Underperforming Assets

For content showing little to no performance, and which does not fit the criteria for a quick win, a decisive action plan is required. The options extend beyond simple deletion:

- Consolidate: Merge multiple thin or similar articles into one comprehensive "pillar" page. This often involves redirecting the old URLs to the new consolidated URL using 301 redirects, preserving any accumulated link equity. This is Baltzer’s preferred method for retaining topical authority.

- Update & Repurpose: If content is valuable but outdated, a significant overhaul might be necessary, effectively turning it into a new, fresh piece. This can also involve repurposing it into a different format (e.g., a blog post into an infographic or video script).

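- Noindex: If a page serves a specific internal purpose (e.g., an archive page) but offers no value to search engines or external users, it can be "noindexed" to keep it out of search results. A crawler must still fetch the page to see the noindex directive, so this does not by itself save crawl budget, although search engines may crawl long-noindexed pages less often over time.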

- Delete & Redirect (301): For truly irrelevant, low-quality, or duplicate content that serves no purpose, deletion is an option. Crucially, if the page has ever received traffic or backlinks, implement a 301 redirect to the most relevant remaining page on the site (e.g., a category page or a related article). Redirecting to the homepage is a last resort when no direct alternative exists, since search engines may treat a redirect to an unrelated page as a soft 404. A relevant redirect prevents broken links and passes on any residual authority.

- Delete (410 Gone): Only for pages that are completely obsolete, have no value, no backlinks, and no relevant redirect target. A 410 Gone status code explicitly tells search engines that the content has been permanently removed, which can lead them to drop the URL from the index somewhat faster than a 404, though crawlers may still recheck the URL occasionally. This should be used sparingly and with extreme caution.
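The options above can be read as an ordered decision path, from the least destructive action to the most. The sketch below encodes one possible ordering; the `Action` names, the boolean inputs, and the `MANUAL_REVIEW` fallback are hypothetical additions for illustration, and the inputs themselves would come from the audit and from human judgment rather than from any tool.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    CONSOLIDATE = "merge into pillar page, 301 old URLs"
    UPDATE = "update and repurpose"
    NOINDEX = "keep page, add noindex"
    REDIRECT_301 = "delete and 301 redirect"
    GONE_410 = "delete and return 410"
    MANUAL_REVIEW = "needs a human decision"  # hypothetical fallback


def choose_action(
    *,
    overlaps_with_other_pages: bool,
    valuable_but_outdated: bool,
    internal_use_only: bool,
    has_traffic_or_backlinks: bool,
    redirect_target: Optional[str],
) -> Action:
    """Pick the least destructive applicable option, checked in order."""
    if overlaps_with_other_pages:
        return Action.CONSOLIDATE
    if valuable_but_outdated:
        return Action.UPDATE
    if internal_use_only:
        return Action.NOINDEX
    if redirect_target is not None:
        return Action.REDIRECT_301
    if not has_traffic_or_backlinks:
        return Action.GONE_410
    # The page has link or traffic history but nowhere relevant to send it.
    return Action.MANUAL_REVIEW


# Hypothetical example: an obsolete page with no links and no related page.
decision = choose_action(
    overlaps_with_other_pages=False,
    valuable_but_outdated=False,
    internal_use_only=False,
    has_traffic_or_backlinks=False,
    redirect_target=None,
)
print(decision.name, "->", decision.value)
```

Placing the 410 branch last mirrors the advice to use it sparingly: a page only reaches it after every option that preserves value has been ruled out.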

Beyond Pruning: The Broader Content Strategy

Regardless of whether a site opts for pruning, consolidation, or aggressive optimization, the underlying principles of effective content strategy remain constant. The wrap-up points from the original article serve as enduring questions that should guide all content decisions:

- Is your content really relevant to your target audience? In the pursuit of growth and search visibility, sites can sometimes drift from their core mission, publishing content on tangential topics that dilute their brand identity. Maintaining a sharp focus on the specific needs, interests, and pain points of the target audience ensures that content consistently delivers value and reinforces the brand’s position as a relevant authority.

- Is your content helping you achieve a goal? Every piece of content published should be tied to a specific, measurable objective. This could be to rank for a particular keyword, attract high-quality backlinks, drive email sign-ups, generate leads, increase product sales, or enhance brand awareness. Content created "for content’s sake" not only wastes resources but also adds to the digital clutter, potentially harming overall site performance.

The Evolving Landscape of Content SEO

The discussions surrounding content pruning and consolidation are set against a backdrop of continuous evolution in search algorithms. Google’s increasing sophistication, particularly with advancements in AI and natural language processing, means that its ability to understand content quality, user intent, and topical depth is constantly improving. This necessitates a more strategic, less tactical, approach to content management. The future of content SEO will likely emphasize:

- Semantic Understanding: Creating content that comprehensively covers a topic from a semantic perspective, anticipating user questions and related queries.

- User Intent Alignment: Deeply understanding the various intents behind search queries and crafting content that directly addresses those intents.

- E-E-A-T: Continuously demonstrating experience, expertise, authoritativeness, and trustworthiness through robust content, author profiles, and reliable sourcing.

- Personalization: Delivering content experiences tailored to individual user preferences and historical interactions.

- Omnichannel Strategy: Integrating content across various platforms (web, social, email, video) to create a cohesive user journey.

In conclusion, content pruning can no longer be treated as a blanket "best practice." It has given way to a more nuanced, data-driven philosophy that views content management as an ongoing, strategic process. The insights from Barry Adams and Ulrik Baltzer, coupled with the fundamental principle of rigorous testing, underscore the need for SEO professionals to approach their content inventories with discernment, purpose, and a clear understanding of their unique digital ecosystem. Maintain your content strategically and test the results, and you improve your chances of enhanced search visibility, better user experience, and sustained digital growth.