Your data is only as powerful as it is trustworthy—especially in the age of AI. In an increasingly data-driven world, the integrity of information stands as the bedrock of sound decision-making, operational efficiency, and competitive advantage. Data quality management (DQM) is not merely a technical undertaking but a strategic imperative that underpins an organization’s ability to innovate, personalize customer experiences, and navigate complex regulatory landscapes. Without a steadfast commitment to high-quality data, businesses risk making flawed decisions, incurring significant financial losses, and ultimately ceding ground to more data-savvy competitors.

Defining Data Quality Management: Core Principles and Practices

Data quality management encompasses a comprehensive suite of practices, tools, and processes designed to ensure that an organization’s data assets are accurate, complete, consistent, timely, unique, and valid. This discipline covers the entire data lifecycle, from initial data profiling and cleansing to ongoing governance, validation, enrichment, and continuous monitoring. It goes beyond correcting data entry mistakes to provide a reliable foundation for all business operations.

At its core, DQM is guided by six critical dimensions:

- Accuracy: Ensuring data precisely reflects the real-world entities or events it represents. For instance, a customer’s address must be correct and deliverable.

- Completeness: Verifying that all necessary data points are present and accounted for. Missing fields, such as an email address in a contact record, can cripple outreach efforts.

- Consistency: Maintaining uniformity of data across different systems and over time. Discrepancies, like varying product codes for the same item in different databases, can lead to confusion and errors.

- Timeliness: Confirming that data is up-to-date and available when needed. Outdated customer contact information renders sales and marketing efforts ineffective.

- Uniqueness: Eliminating duplicate records to prevent redundant efforts and skewed analyses. Multiple entries for the same customer lead to wasted resources and a fragmented view of interactions.

- Validity: Ensuring data conforms to predefined formats, types, and business rules. For example, a phone number field should only contain numerical characters in a specific format.

Collectively, these qualities determine whether data can be trusted to drive critical business decisions or if it inadvertently introduces systemic problems that remain undetected until significant consequences arise.
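Two of these dimensions, completeness and validity, are mechanical enough to check in a few lines. The following is a minimal sketch, not a reference implementation: the required field names and the ten-digit phone format are assumptions chosen for illustration, and a real rule set would come from the organization's own standards.

```python
import re

# Hypothetical rule set: the field names and the phone format are assumptions.
REQUIRED_FIELDS = ("name", "email", "phone")
PHONE_PATTERN = re.compile(r"\d{10}")  # assumed house format: ten digits, no separators


def check_record(record: dict) -> list[str]:
    """Return a list of human-readable problems found in one contact record."""
    problems = []
    # Completeness: every required field must be present and non-blank.
    for field in REQUIRED_FIELDS:
        if not str(record.get(field) or "").strip():
            problems.append(f"missing {field}")
    # Validity: a phone value, when present, must match the agreed format.
    phone = str(record.get("phone") or "").strip()
    if phone and not PHONE_PATTERN.fullmatch(phone):
        problems.append("invalid phone format")
    return problems


print(check_record({"name": "Ada Lopez", "email": "", "phone": "555-0101"}))
```

Accuracy is harder to automate, because it requires comparing a value against the real world or a trusted reference, which is why the two checks above catch only part of the picture.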

The Silent Threat: How Poor Data Quality Undermines Business Operations

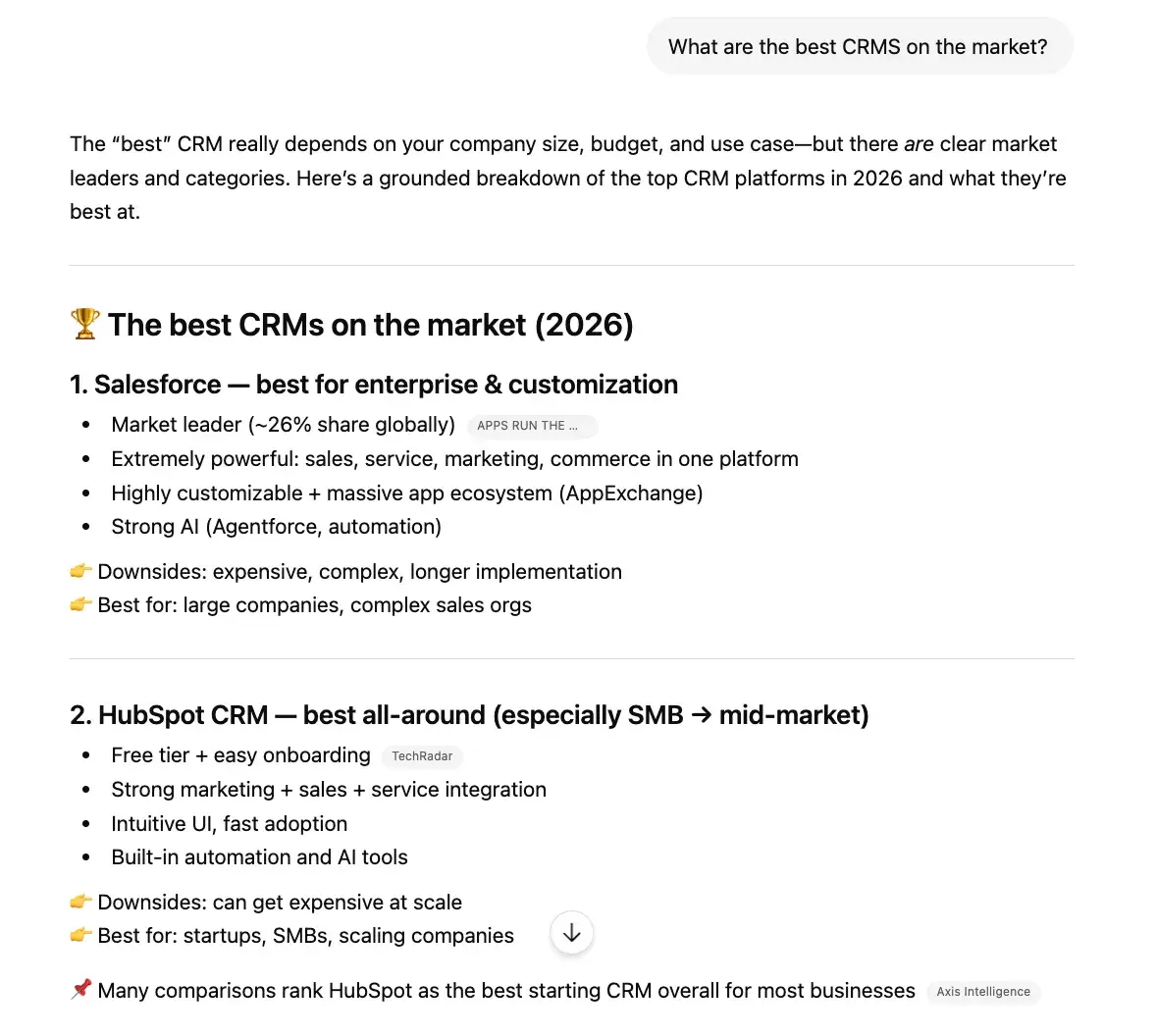

The ramifications of poor data quality are far-reaching and insidious, particularly within critical systems like Customer Relationship Management (CRM). A CRM system, intended to serve as the single source of truth for customer information, can quickly become a repository of misinformation if data quality is neglected. This contamination does not remain isolated; instead, it ripples outwards, creating a cascade of negative effects across the organization.

For sales teams, poor data quality translates into wasted time chasing outdated leads, contacting non-existent prospects, or misidentifying customer needs due to incomplete profiles. Marketing departments struggle with inaccurate customer segmentation, leading to irrelevant campaigns, diminished engagement, and inflated customer acquisition costs. Operations are slowed by the need to manually verify or correct information, diverting valuable resources from core activities. Crucially, corrupted reports and analytics skew forecasts and performance breakdowns, leading to misinformed strategies and suboptimal resource allocation.

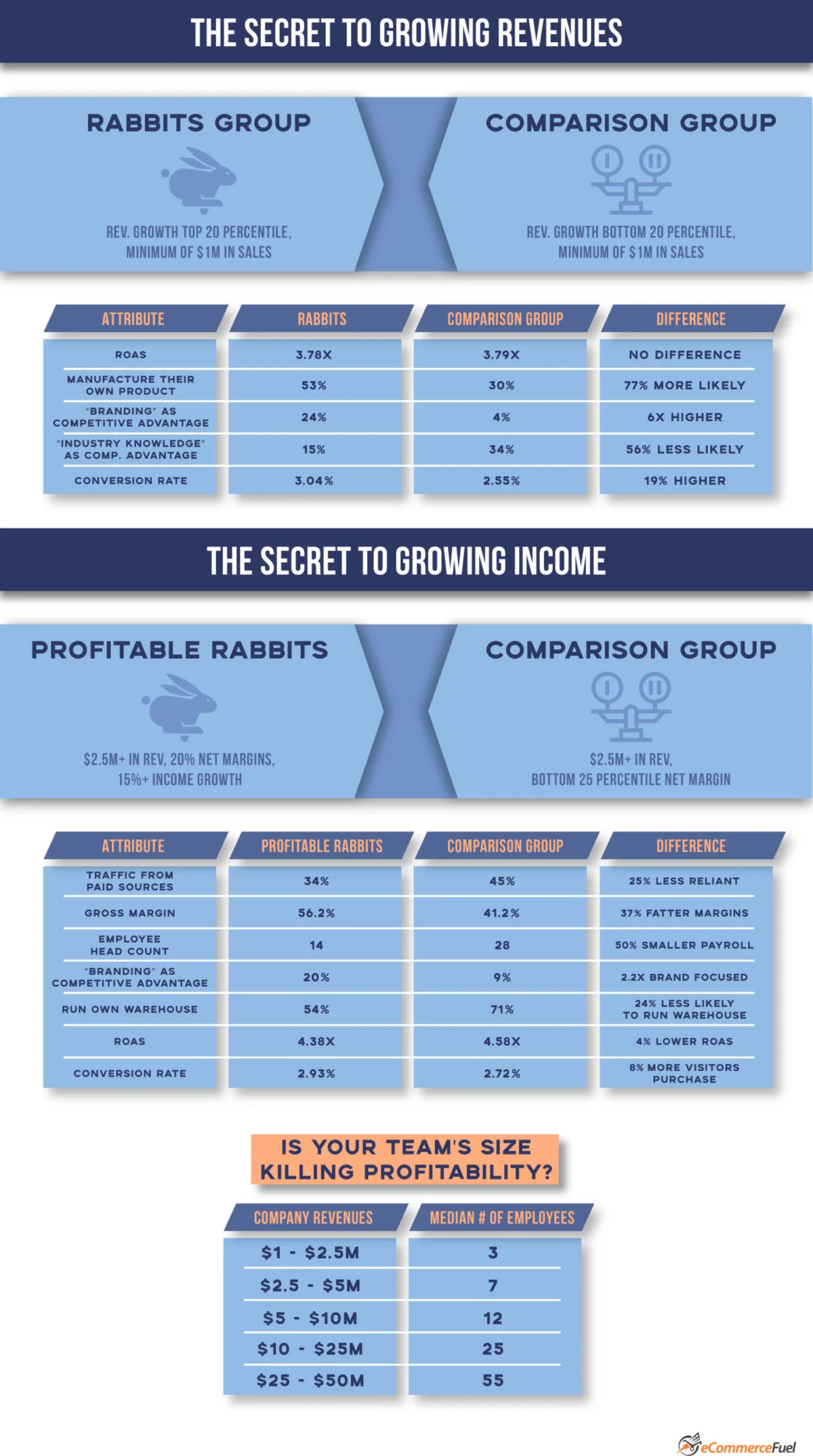

When operating at scale, these inefficiencies and inaccuracies are not mere inconveniences; they become significant competitive liabilities. The "State of CRM Data Management in 2025" report by Validity highlights this stark reality, revealing that 37 percent of teams report losing revenue directly due to poor data quality. This financial impact underscores the urgent need for robust DQM practices, transforming data cleanliness from a "nice-to-have" into a fundamental differentiator between a system that empowers a business and one that actively works against it.

The Genesis of Data Decay: Sources and Challenges in Detection

Data quality issues are often subtle in their genesis and notoriously difficult to detect until they have compounded into significant problems. A CRM, designed to consolidate customer information, is constantly fed by multiple data streams, each introducing potential points of failure. Sources such as bulk imports from legacy systems, integrations with third-party applications, manual data entry by diverse users, and automated data capture mechanisms all present unique variables and risks.

Errors can originate from various points:

- Manual Entry: Human error, typos, inconsistent formatting, or incomplete information.

- System Integrations: Mismatched data schemas, failed data mapping, or poor synchronization between disparate systems.

- Data Migration: Corruption during transfer from one system to another, leading to lost or altered data.

- Third-Party Data: Acquisition of external data that may be outdated, inaccurate, or inconsistent with internal standards.

- Lack of Standardization: Absence of clear rules for data input, leading to varied formats (e.g., "Street," "St.," "STRT" for street).

- Duplicate Records: The same entity entered multiple times under slightly different identifiers.
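The last two sources are closely linked: without standardization, the same entity looks different each time it is entered, and duplicates slip past exact-match checks. As a rough sketch of the idea, the snippet below normalizes the street variants mentioned above and then groups records that share a normalized key. The suffix mapping, field names, and matching key (email plus address) are illustrative assumptions, not a description of how any particular tool works.

```python
import re
from collections import defaultdict

# Illustrative mapping only; a real deployment would maintain a fuller list.
STREET_SUFFIXES = {"st": "street", "st.": "street", "strt": "street"}


def normalize_address(address: str) -> str:
    """Lower-case, collapse whitespace, and map suffix variants to one form."""
    tokens = re.sub(r"\s+", " ", address.strip().lower()).split(" ")
    return " ".join(STREET_SUFFIXES.get(token, token) for token in tokens)


def find_duplicates(records: list[dict]) -> list[list[int]]:
    """Group record ids that share the same normalized (email, address) key."""
    groups = defaultdict(list)
    for record in records:
        key = (record["email"].strip().lower(), normalize_address(record["address"]))
        groups[key].append(record["id"])
    return [ids for ids in groups.values() if len(ids) > 1]


records = [
    {"id": 1, "email": "ada@example.com", "address": "12 Main St."},
    {"id": 2, "email": "ADA@example.com ", "address": "12  Main STRT"},
    {"id": 3, "email": "bo@example.com", "address": "9 Oak Street"},
]
print(find_duplicates(records))  # [[1, 2]]
```

A token-level mapping like this is deliberately naive. It would, for example, turn the "St." in "St. Louis" into "street", so production rules usually need positional or context checks before rewriting a token.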

The insidious nature of data quality issues is that they often develop slowly. A single error here or there might not be immediately obvious. However, as these small problems accumulate, the integrity of the entire dataset begins to crack. This challenge is further exacerbated by the exponential growth in data volume. The sheer scale of information makes manual analysis and error detection impractical, if not impossible. CDO Trends notes that 57 percent of data practitioners identify maintaining data quality as their biggest challenge during data analysis, underscoring the magnitude of this problem.

Data quality problems can hide in plain sight due to:

- Data Silos: Information scattered across disconnected systems, making a unified view impossible and discrepancies hard to spot.

- Lack of Ownership: Unclear accountability for data domains, leading to neglect and inconsistent maintenance.

- Insufficient Tools: Reliance on manual processes or inadequate software that cannot handle the volume and complexity of modern data.

- Ignorance or Underestimation: A lack of awareness among stakeholders about the severity and impact of poor data quality.

Quantifying the Cost: Business Impact Beyond the CRM

The financial and operational repercussions of poor data quality extend far beyond the immediate frustrations within a CRM. Businesses face a multitude of direct and indirect costs that erode profitability and hinder growth.

Direct Costs:

- Wasted Marketing Spend: Invalid contact information leads to bounced emails, undeliverable mail, and ineffective advertising, essentially throwing marketing budget away.

- Operational Inefficiencies: Employees spend an inordinate amount of time cleansing, verifying, and correcting data, diverting resources from higher-value tasks. A study by IBM estimated that poor data quality costs the U.S. economy approximately $3.1 trillion annually.

- Compliance Fines: Inaccurate or incomplete data can lead to non-compliance with regulations such as GDPR, CCPA, or industry-specific standards, resulting in hefty fines and reputational damage.

- Customer Service Strain: Inconsistent or missing customer data leads to longer resolution times, frustrated agents, and a degraded customer experience.

Indirect Costs and Strategic Implications:

- Lost Revenue Opportunities: Inability to accurately identify upselling or cross-selling opportunities due to a fragmented customer view.

- Flawed Decision-Making: Strategic choices based on unreliable data can lead to misguided investments, market miscalculations, and competitive setbacks.

- Failed AI and Analytics Projects: The "garbage in, garbage out" principle is amplified by AI. Machine learning models trained on poor data produce unreliable predictions and recommendations, undermining the very purpose of AI initiatives. The cost of a failed AI project due to data issues can run into millions.

- Damaged Customer Relationships: Inaccurate personalization attempts, irrelevant communications, or repetitive outreach due to duplicate records can alienate customers, leading to churn.

- Reputational Damage: A perception of incompetence or unreliability stemming from data errors can severely damage a brand’s image.

The 37 percent revenue-loss figure from Validity’s "State of CRM Data Management in 2025" report, while significant, likely understates the true cost, as many indirect impacts are difficult to quantify but profoundly affect long-term business health. When businesses cannot trust their data, the competitive landscape shifts, often in favor of those who have prioritized data integrity.

Establishing a Foundation: Key Metrics for Data Quality Monitoring

To effectively oversee and improve data quality, organizations must move beyond anecdotal evidence and establish measurable metrics. These five key data quality metrics provide tangible insights into how practical, trustworthy, and actionable data is, guiding improvement efforts:

- Data Accuracy Rate: This metric quantifies the percentage of data entries that are correct and reflect the true state of affairs. For example, the percentage of customer addresses that are valid and deliverable. A low accuracy rate indicates significant issues with data input, validation, or maintenance.

- Data Completeness Rate: This measures the proportion of required data fields that are populated. For a customer record, it might be the percentage of records with a complete address, phone number, and email. Low completeness can hinder segmentation, communication, and analytics.

- Data Consistency Rate: This tracks the uniformity of data across different systems or within the same system over time. For instance, ensuring a customer’s name is spelled identically across CRM, billing, and marketing platforms. Inconsistencies lead to confusion and operational friction.

- Data Uniqueness Rate: This metric identifies the percentage of records that are distinct and do not have duplicates. A high uniqueness rate is crucial for preventing redundant outreach, ensuring accurate reporting, and maintaining a single, unified view of customers.

- Data Timeliness/Currency Rate: This measures how up-to-date the data is, often by tracking the age of critical information or the frequency of updates. For example, the percentage of customer records updated within the last 12 months. Outdated data renders insights irrelevant and actions ineffective.

By consistently monitoring these metrics, businesses can gain a clear, quantitative understanding of their data health, identify problematic areas, and measure the effectiveness of their DQM initiatives.
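To make these definitions concrete, here is a small sketch that computes all five rates over a toy dataset. Everything in it is an assumption made for illustration: the field names, the use of email as the duplicate key, the 365-day freshness window, the second "billing" system used for the consistency check, and the manually verified sample used as the reference for accuracy.

```python
from datetime import date

# Hypothetical sample data; field names are assumptions for illustration.
crm = [
    {"id": 1, "email": "ada@example.com", "phone": "5550101234", "updated": date(2025, 3, 1)},
    {"id": 2, "email": "ada@example.com", "phone": "", "updated": date(2023, 1, 15)},
    {"id": 3, "email": "bo@example.com", "phone": "5550109876", "updated": date(2025, 1, 10)},
    {"id": 4, "email": "", "phone": "5550105555", "updated": date(2024, 11, 5)},
]
billing_email = {1: "ada@example.com", 2: "ada@example.com", 3: "bo@example.org", 4: ""}
verified_email = {1: "ada@example.com", 3: "bo@example.com"}  # manually verified sample


def rate(hits: int, total: int) -> float:
    """Percentage rounded to one decimal place; 0.0 when there is nothing to measure."""
    return round(100 * hits / total, 1) if total else 0.0


def quality_metrics(records, other_system, verified, today, max_age_days=365):
    required = ("email", "phone")
    # Completeness: records with every required field populated.
    complete = sum(all(r[f] for f in required) for r in records)
    # Uniqueness: distinct non-empty emails relative to records that have an email.
    emails = [r["email"].lower() for r in records if r["email"]]
    # Consistency: the same value is held for the record in the other system.
    consistent = sum(r["email"] == other_system.get(r["id"]) for r in records)
    # Timeliness: records updated within the allowed window.
    timely = sum((today - r["updated"]).days <= max_age_days for r in records)
    # Accuracy: agreement with the verified reference sample only.
    by_id = {r["id"]: r for r in records}
    accurate = sum(by_id[i]["email"] == v for i, v in verified.items() if i in by_id)
    return {
        "accuracy": rate(accurate, len(verified)),
        "completeness": rate(complete, len(records)),
        "consistency": rate(consistent, len(records)),
        "uniqueness": rate(len(set(emails)), len(emails)),
        "timeliness": rate(timely, len(records)),
    }


print(quality_metrics(crm, billing_email, verified_email, today=date(2025, 6, 1)))
```

Note that accuracy is measured only against the verified sample, since there is rarely a trusted reference for every record. The other four rates can be computed across the full dataset.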

Crafting a Robust Strategy: Building a Data Quality Management Framework

Implementing an effective data quality management strategy requires a structured approach that integrates people, processes, and technology. A strong strategy begins with clearly defined standards and evolves into a continuous cycle of improvement.

- Conduct a Comprehensive Data Audit: The first step is to understand the current state of data quality. This involves profiling existing data across all critical systems to identify inaccuracies, inconsistencies, duplicates, and gaps. An audit helps surface the biggest issues and prioritize remediation efforts.

- Define Data Quality Rules and Standards: Establish clear, measurable definitions for each of the six data quality dimensions (accuracy, completeness, consistency, timeliness, uniqueness, validity). These standards should be documented and communicated organization-wide. For example, define acceptable formats for phone numbers, mandatory fields for customer records, and rules for resolving conflicting information.

- Appoint Data Stewards and Establish Governance: Data quality is not solely an IT responsibility. Appoint data stewards—individuals or teams responsible for specific data domains—who will own the quality of that data, enforce entry standards, and resolve issues. Establish a data governance framework that outlines roles, responsibilities, policies, and procedures for data creation, maintenance, and usage.

- Implement Data Quality Tools: Leverage specialized software solutions, like DemandTools from Validity, to automate ongoing data quality processes. These tools can perform deduplication, standardization, validation, and enrichment tasks at scale, preventing bad data from entering the system and cleaning existing issues. Automation is crucial for managing large volumes of data efficiently.

- Integrate Data Quality into Workflows: Embed data quality checks and validations directly into operational workflows. This means integrating real-time validation at the point of data entry, during system integrations, and before data is used for critical analyses or campaigns. Proactive prevention is more effective than reactive cleansing.

- Monitor and Report Regularly: Continuously monitor data quality metrics and generate regular reports to track progress, identify new issues, and demonstrate the ROI of DQM initiatives. This feedback loop is essential for continuous improvement and maintaining stakeholder buy-in.

- Train and Raise Awareness: Educate all data users, from entry-level staff to executive leadership, on the importance of data quality, the established standards, and their role in maintaining data integrity. A culture of data quality is vital for long-term success.
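The audit in the first step usually starts with simple profiling: for each field, how often is it filled, and how many different values does it hold? The sketch below shows one way this might look on a handful of records. The sample data and the choice of summary statistics are assumptions for illustration; dedicated profiling tools report far more.

```python
from collections import Counter


def profile(records: list[dict]) -> dict[str, dict]:
    """Summarize each field: how often it is filled and how varied its values are."""
    fields = sorted({name for record in records for name in record})
    report = {}
    for name in fields:
        # Treat missing keys and None as blank; keep other values as trimmed text.
        values = ["" if r.get(name) is None else str(r[name]).strip() for r in records]
        filled = [v for v in values if v]
        counts = Counter(filled)
        report[name] = {
            "fill_rate": round(len(filled) / len(records), 2),
            "distinct": len(counts),
            "top_values": counts.most_common(2),
        }
    return report


sample = [
    {"country": "US", "phone": "5550101234"},
    {"country": "USA", "phone": ""},
    {"country": "US", "phone": None},
    {"country": "United States"},
]
for field, stats in profile(sample).items():
    print(field, stats)
```

In this sample the country field is always filled but holds three spellings of the same country, which points to a standardization rule worth defining in the second step, while the low fill rate on phone points to a completeness gap.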

Data Quality in Action: Real-World Transformations

The business case for data quality management is compelling, and its impact is demonstrably transformative for organizations that embrace it. Real-world examples illustrate how dedicated DQM efforts, often powered by specialized tools, translate into tangible operational efficiencies and strategic advantages.

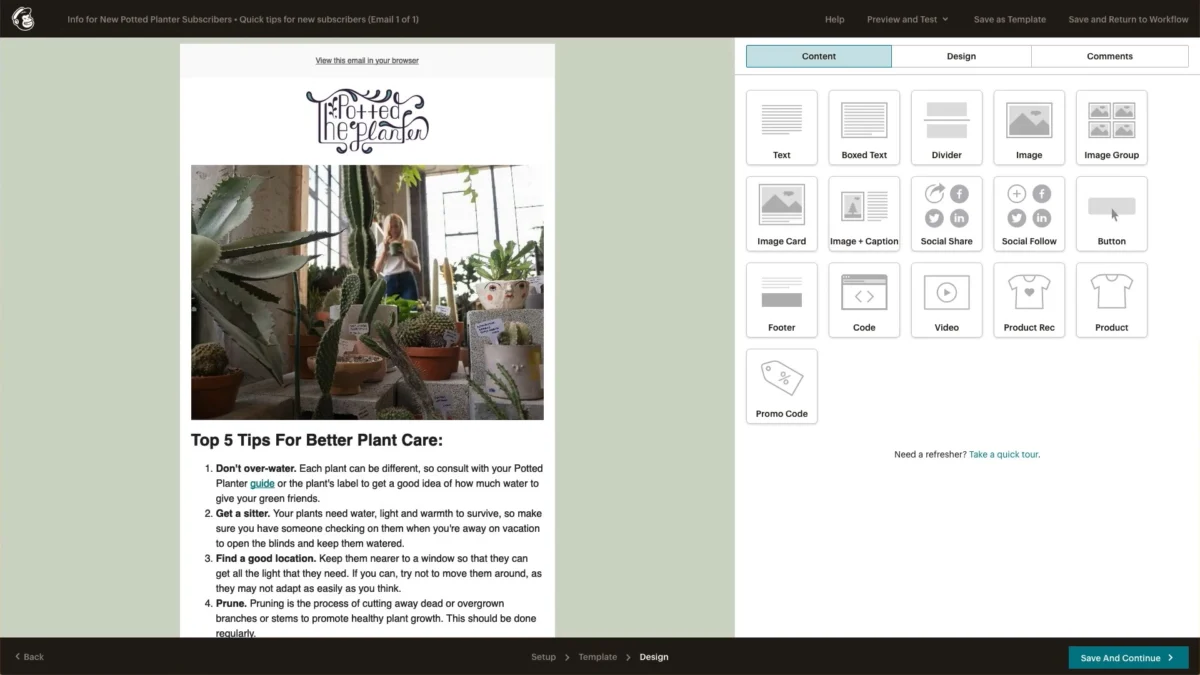

BARBRI: Automating Duplicate Resolution, Reclaiming Time

BARBRI, a leading legal education provider, faced a significant challenge with system integrations flooding their Salesforce instance with thousands of duplicate records monthly. This proliferation of duplicates forced sales representatives to waste valuable time working the same prospects multiple times, leading to inefficiencies and a fragmented customer view. By implementing DemandTools, BARBRI automated its entire data cleansing process. What once consumed days of manual effort was reduced to minutes, allowing the team to shift focus from reactive data maintenance to proactive sales enablement strategies, ultimately improving productivity and lead conversion.

Thornburg Investment Management: Recovering 120 Hours Weekly

Thornburg Investment Management contended with a constant influx of duplicate and unstandardized data, primarily from various third-party sources. This data pollution necessitated extensive manual data management, consuming significant resources. After integrating DemandTools into their operations, Thornburg recovered 120 hours of manual data management time per week. This gain was equivalent to adding a full-time developer to their team without the associated hiring costs, showcasing the value of automation in resource optimization and efficiency.

908 Devices: Elevating Data to a Competitive Advantage

For 908 Devices, a pioneer in high-precision chemical analysis, Salesforce data is the lifeblood that feeds everything from daily sales activities to executive-level decision-making dashboards in Tableau. Recognizing the criticality of accurate data for strategic insights and agility, they embedded DemandTools as the backbone of their data quality initiatives. The platform handled deduplication, standardization, and record management at scale, enabling the team to operate with speed and confidence without compromising data accuracy. This proactive approach transformed data quality from a challenge into a distinct competitive advantage, ensuring that every strategic move was grounded in reliable information.

These case studies underscore that effective data quality management is not merely about fixing problems but about unlocking potential, optimizing resources, and building a foundation for sustainable growth and innovation.

The AI Revolution: Elevating Data Quality to a Strategic Imperative

The advent and rapid proliferation of Artificial Intelligence (AI) and Machine Learning (ML) technologies have dramatically raised the stakes for data quality. The principle of "garbage in, garbage out" has always held true for data analysis, but AI amplifies its consequences because flawed inputs are acted on automatically and at scale. As organizations increasingly rely on AI to drive complex decisions, automate workflows, and generate predictive insights, the margin for error in the underlying data narrows sharply.

AI models learn from the data they are fed. If this data is inaccurate, incomplete, or biased, the AI will internalize these flaws, leading to skewed predictions, erroneous recommendations, and potentially discriminatory outcomes. A poorly trained AI model, fed with low-quality data, can exacerbate existing problems at a massive scale, leading to significant financial losses, reputational damage, and even ethical dilemmas. This shift is transforming data quality from a reactive, manual task into a real-time, proactive discipline.

Data quality is now widely regarded as one of the biggest bottlenecks to successful AI adoption. Businesses are realizing that investing in cutting-edge AI without simultaneously investing in the quality of the data that fuels it is a futile exercise. This dynamic is pushing data quality management to the forefront of strategic priorities, demanding sophisticated tools that can help organizations stay ahead of problems before they surface and ensure that their AI investments yield accurate, reliable, and ethical results.

Addressing Common Concerns: Data Quality Management FAQs

For organizations embarking on or refining their data quality journey, several common questions often arise:

What is data quality management?

Data quality management is an ongoing organizational discipline that ensures data is accurate, consistent, reliable, and complete. It integrates technology (such as DemandTools), well-defined processes, and trained personnel to maintain data integrity across an enterprise. The goal is to ensure that data is clean, standardized, and ready for use when launching campaigns, making critical business decisions, or training AI models.

Why is data quality management important?

Data forms the core of nearly every modern business decision. Poor data leads to tangible consequences: lost revenue, regulatory non-compliance, suboptimal decision-making, and compromised customer experiences. Organizations with mature data quality practices gain a significant competitive advantage, enabling them to operate faster, serve customers more effectively, allocate resources more efficiently, and leverage advanced technologies like AI with confidence.

How do you implement data quality management?

Implementation typically begins with a thorough data quality audit to pinpoint existing issues. Following this, organizations must define clear data quality rules and standards across all relevant data domains. Appointing data stewards to oversee these domains and enforce data entry standards is crucial. Finally, implementing an automated data quality tool, such as DemandTools, is essential for continuous cleaning, standardization, and prevention of bad data entry.

What are the main challenges in data quality management?

The primary challenges stem from inconsistent, inaccurate, incomplete, and duplicate data. These issues are typically caused by poor data hygiene practices, a lack of standardization, and inadequate data governance. Unaddressed, these problems lead to data silos, inefficient operational processes, and gaps in accountability that negatively impact the entire organization’s ability to trust and leverage its data.

How much does poor data quality cost businesses?

The cost of poor data quality is substantial and often underestimated. Validity’s "State of CRM Data Management in 2025" report indicates that 37 percent of CRM users reported direct revenue loss due to poor data quality. Beyond direct costs like wasted marketing spend on invalid contacts and potential compliance fines, there are harder-to-quantify costs such as missed revenue opportunities, flawed strategic decisions, failed AI projects, and customer churn resulting from irrelevant or incorrect communications. At the macro level, IBM has estimated the cost to the U.S. economy alone at approximately $3.1 trillion annually.

How is AI changing data quality management?

AI profoundly changes DQM by making data quality a more critical and proactive discipline. AI systems are only as effective as the data they are trained on; thus, "garbage in, garbage out" takes on amplified significance. As businesses increasingly rely on AI for insights and automation, the need for flawless underlying data becomes paramount. This shift compels organizations to move from reactive data cleaning to real-time, preventative measures, leveraging tools that can ensure data integrity at scale before it impacts AI model performance.

A Phased Approach: Implementing a Data Quality Management Program

While every organization’s data quality journey is unique, a typical implementation timeline for a robust DQM program can be broken down into distinct phases:

- Phase 1: Discovery and Assessment (Weeks 1-4)

  - Goal: Understand the current state of data quality and identify critical pain points.

  - Activities:

    - Define project scope and key stakeholders.

    - Inventory critical data sources and systems (e.g., CRM, ERP, marketing automation).

    - Conduct initial data profiling to identify common errors (duplicates, incompleteness, inconsistencies).

    - Interview data users to gather anecdotal evidence of data quality issues and their impact.

    - Establish baseline data quality metrics.

- Phase 2: Strategy and Planning (Weeks 5-8)

  - Goal: Develop a clear strategy, define standards, and select appropriate tools.

  - Activities:

    - Define specific data quality objectives aligned with business goals.

    - Establish data quality standards and rules (e.g., data formats, mandatory fields, validation criteria).

    - Design a data governance framework, including roles (data stewards), responsibilities, and policies.

    - Evaluate and select data quality management tools (e.g., DemandTools).

    - Develop a detailed implementation roadmap.

- Phase 3: Initial Implementation and Remediation (Months 3-6)

  - Goal: Deploy chosen tools, cleanse existing data, and establish initial automated processes.

  - Activities:

    - Install and configure data quality software.

    - Perform bulk data cleansing: deduplication, standardization, and enrichment of existing data.

    - Integrate data quality checks into key data entry points and system integrations.

    - Train data stewards and key users on new processes and tools.

    - Begin regular monitoring of initial data quality metrics.

- Phase 4: Continuous Improvement and Expansion (Months 7 onwards)

  - Goal: Embed DQM as an ongoing discipline, expand scope, and continuously refine processes.

  - Activities:

    - Regularly review data quality metrics and performance.

    - Conduct periodic data audits to identify new or emerging issues.

    - Refine data quality rules and governance policies based on feedback and evolving business needs.

    - Expand DQM scope to additional data sources or systems.

    - Regularly update and maintain data quality tools and integrations.

    - Foster a culture of data quality through ongoing training and communication.

This phased approach allows organizations to systematically address data quality challenges, build a sustainable framework, and gradually integrate DQM into their operational DNA.
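The baseline captured in Phase 1 earns its keep in Phase 4, when each review compares current metrics against both the starting point and the targets set during planning. A minimal sketch of such a review follows. The metric names, numbers, and the one-point tolerance are hypothetical and exist only to show the comparison logic.

```python
def review_metrics(baseline: dict, current: dict, targets: dict, tolerance: float = 1.0) -> list[str]:
    """Flag metrics that fell below baseline (beyond tolerance) or miss their target."""
    findings = []
    for name in sorted(targets):
        now = current[name]
        if now < baseline[name] - tolerance:
            # Worse than where the program started: the most urgent finding.
            findings.append(f"{name}: regressed from {baseline[name]} to {now}")
        elif now < targets[name]:
            # At or above baseline but still short of the agreed goal.
            findings.append(f"{name}: {now} is below target {targets[name]}")
    return findings


# Hypothetical figures for illustration only.
baseline = {"completeness": 72.0, "uniqueness": 88.0, "timeliness": 65.0}
current = {"completeness": 91.5, "uniqueness": 84.0, "timeliness": 78.0}
targets = {"completeness": 90.0, "uniqueness": 98.0, "timeliness": 85.0}
for finding in review_metrics(baseline, current, targets):
    print(finding)
```

Separating regressions from missed targets helps prioritize: a metric that has dropped below its baseline often signals a new source of bad data, such as a broken integration, while a metric that is improving but short of target may simply need more time.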

Choosing a Trusted Partner: Expertise in Data Quality Solutions

Successfully navigating the complexities of data quality management often requires specialized expertise and robust technological solutions. Thousands of organizations globally have entrusted their Salesforce data quality to DemandTools from Validity, and for good reason. With over two decades of experience, Validity has consistently helped businesses of all sizes clean, manage, and maintain high-quality data. This dedication translates into improved operations, more intelligent decision-making, and stronger customer relationships.

DemandTools offers a comprehensive suite of capabilities designed to empower organizations to effortlessly clean, organize, and maintain their data at scale. From advanced duplicate elimination to precise field standardization and proactive prevention of incorrect data entry, DemandTools provides the granular control necessary to ensure data integrity. Its flexible and powerful features enable businesses to streamline data management tasks, freeing up valuable resources and ensuring that their Salesforce database remains a reliable asset rather than a liability.

Conclusion: The Future is Clean Data

In an era defined by data proliferation and the transformative power of artificial intelligence, data cleanliness is no longer merely a best practice; it is an essential prerequisite for survival and success. Quality data forms the bedrock for efficient operations, accurate reporting, insightful analytics, and superior business outcomes. Organizations that prioritize and invest in robust data quality management are better positioned to innovate, optimize customer experiences, comply with regulations, and ultimately gain a decisive competitive edge.

With powerful solutions like DemandTools, businesses have everything they need to build and maintain a high-quality Salesforce database. By offering flexible and potent ways to standardize fields, eliminate duplicates, update records, and proactively prevent bad data from entering the system, DemandTools ensures that data remains an engine of growth, not a source of friction. The journey towards data mastery begins with cleanliness, and the future of business depends on it.