The rapid evolution of generative artificial intelligence has moved beyond simple text generation and into the realm of complex system architecture, giving rise to a development philosophy colloquially known as "vibe coding." This approach, which prioritizes high-level prompting and aesthetic output over granular manual coding, has empowered non-technical entrepreneurs and small business owners to ship fully functional applications in record time. However, as the barrier to entry for software creation collapses, a growing chorus of cybersecurity experts and researchers is sounding the alarm regarding the systemic risks being introduced into the global digital ecosystem.

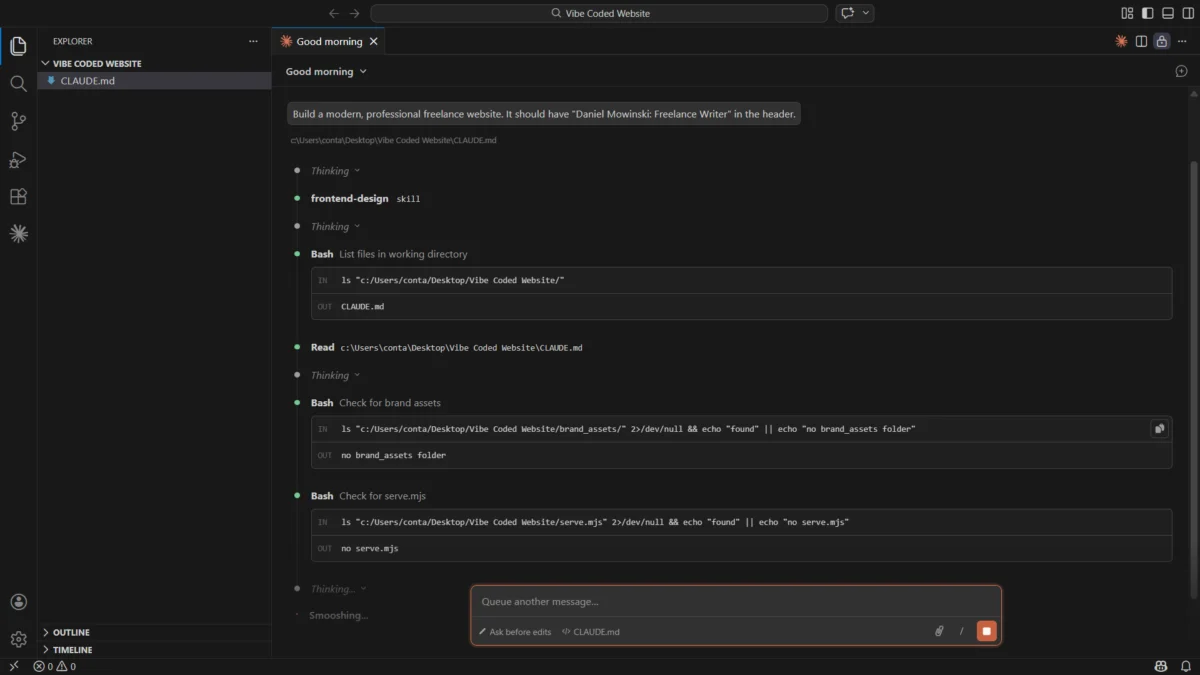

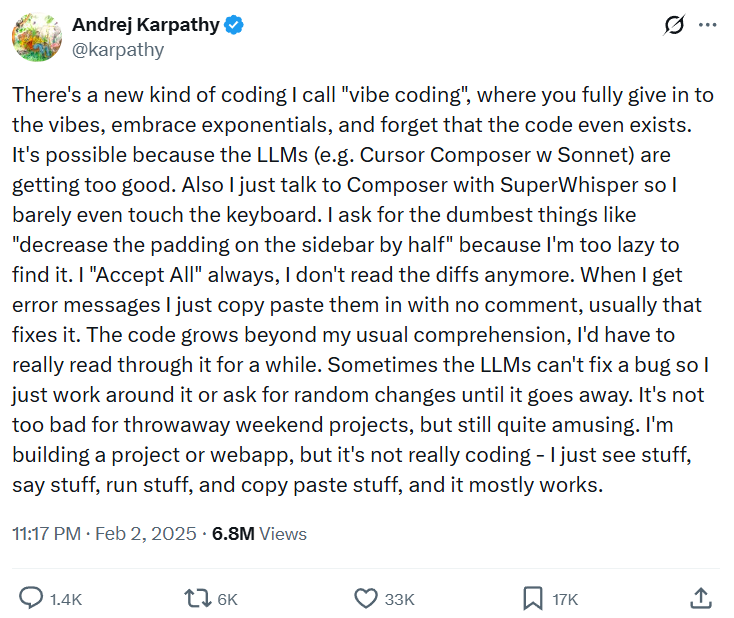

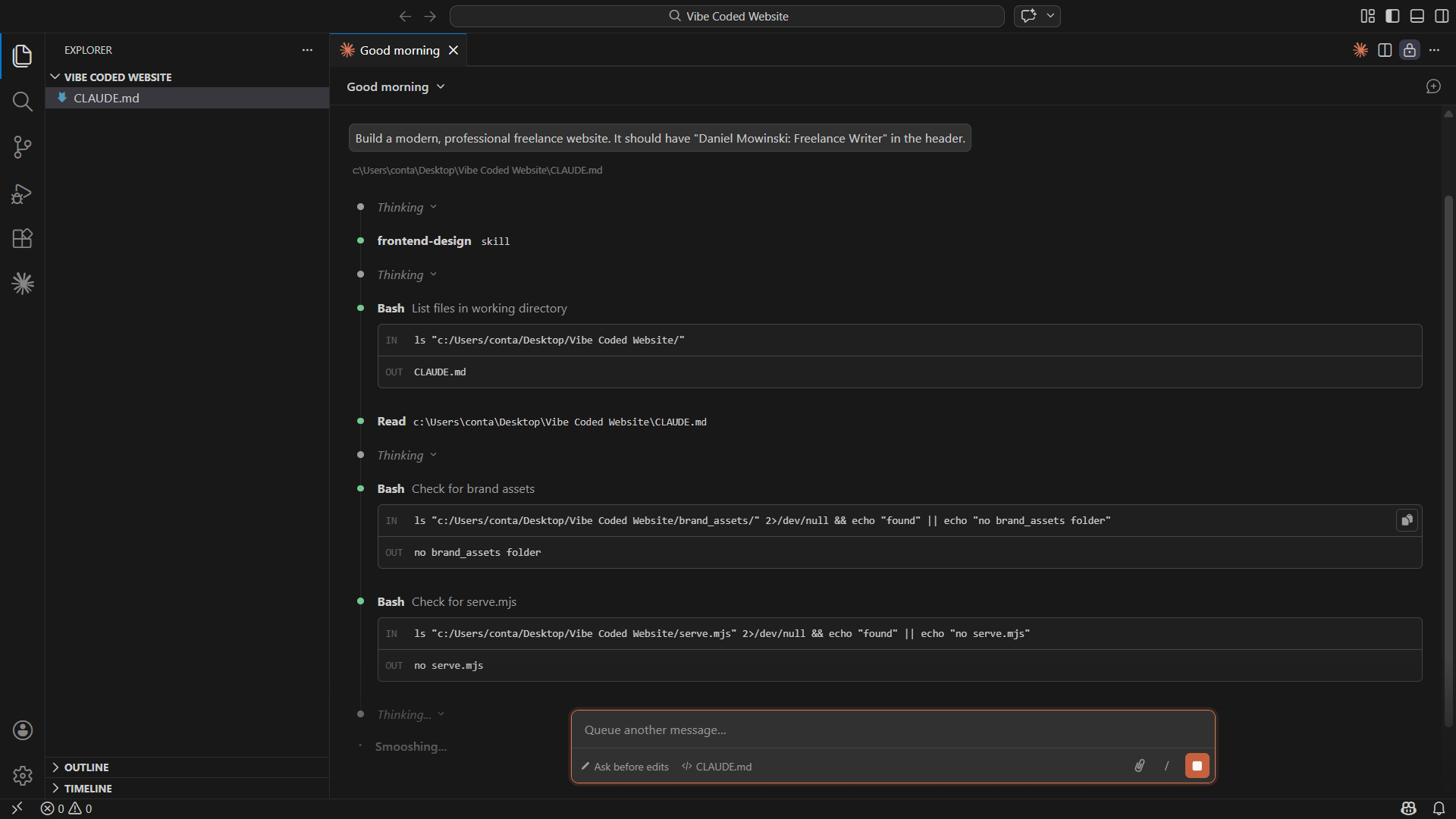

The term "vibe coding" was coined by Andrej Karpathy, a founding member of OpenAI and former Director of AI at Tesla, in a widely circulated February 2025 post on X. Karpathy described a shift in which developers, and increasingly non-developers, interact with AI agents to "vibe" their way through building software. In this model, the user supplies a general intent, or "vibe," and the AI agent handles the intricate details of file structure, API calls, and logic. The productivity gains are undeniable, but because many users do not understand the code being produced, the approach has created a precarious situation for modern enterprises.

The Chronology of the AI Coding Revolution

The journey toward vibe coding began in earnest in 2021 with the release of GitHub Copilot, which offered autocomplete suggestions to developers. Over 2023 and 2024, Large Language Models (LLMs) such as GPT-4 and Claude 3 made "chat-to-code" interfaces commonplace. The decisive shift, however, came in late 2024 and early 2025 with the rise of agentic tools.

Unlike their predecessors, agentic tools such as Claude Code and OpenAI’s Codex-based suites do not merely suggest snippets; they operate directly within a local development environment. They can read entire directories, execute terminal commands, and push changes to repositories hosted on platforms such as GitHub. In early 2025, the Silicon Valley startup accelerator Y Combinator reported that approximately 25% of the startups in its Winter 2025 batch had codebases that were about 95% AI-generated. This timeline suggests a pivot from "AI-assisted" to "AI-led" development in less than four years.

Quantifying the Adoption and the Risk

The scale of this shift is reflected in recent industry data. According to the 2025 AI Engineering Trends report by Jellyfish, 67% of software engineers now integrate AI into their daily workflows. More tellingly, 14% of all pull requests—the standard method for proposing code changes—are now generated autonomously by AI agents.

Among small and medium-sized businesses (SMBs), the adoption is even more aggressive. A study by the U.S. Chamber of Commerce found that one in five small businesses currently utilizes generative AI for coding tasks. Furthermore, research by Pax8 indicates that 62% of SMB leaders believe AI adoption is the primary requirement for remaining competitive in their respective markets.

However, this speed has come at a significant cost to security. A 2025 study by the security firm Escape analyzed 5,600 publicly available applications built on vibe-coding platforms and uncovered more than 2,000 critical vulnerabilities. In some instances, applications designed for the healthcare and legal sectors exposed highly sensitive records, including medical histories and personally identifiable information (PII), because of misconfigured backend logic.
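The study's specific misconfigurations are not detailed here, but one common way records leak is an endpoint that returns any record whose ID is requested without checking who is asking, a flaw usually called an insecure direct object reference. The minimal Python sketch below contrasts that pattern with an ownership check; the in-memory `RECORDS` store, the `Record` type, and both function names are hypothetical stand-ins for a real database and API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: int
    owner_id: int
    contents: str


# Hypothetical in-memory store standing in for a real database table.
RECORDS = {
    1: Record(record_id=1, owner_id=100, contents="Patient A history"),
    2: Record(record_id=2, owner_id=200, contents="Patient B history"),
}


def get_record_insecure(record_id: int) -> Record:
    # Returns whatever ID is requested: anyone who guesses or increments
    # an ID can read another user's data.
    return RECORDS[record_id]


def get_record(requesting_user_id: int, record_id: int) -> Record:
    # Deny by default: the record must exist AND belong to the caller.
    record = RECORDS.get(record_id)
    if record is None or record.owner_id != requesting_user_id:
        # Same error for "missing" and "not yours", so IDs cannot be probed.
        raise PermissionError("record not found or access denied")
    return record
```

With this check, `get_record(100, 1)` returns user 100's own record, while `get_record(100, 2)` raises `PermissionError` instead of handing over another patient's history. Returning one error for both cases is a deliberate choice: it avoids telling an attacker which IDs exist.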

The Erosion of the Implementation Phase

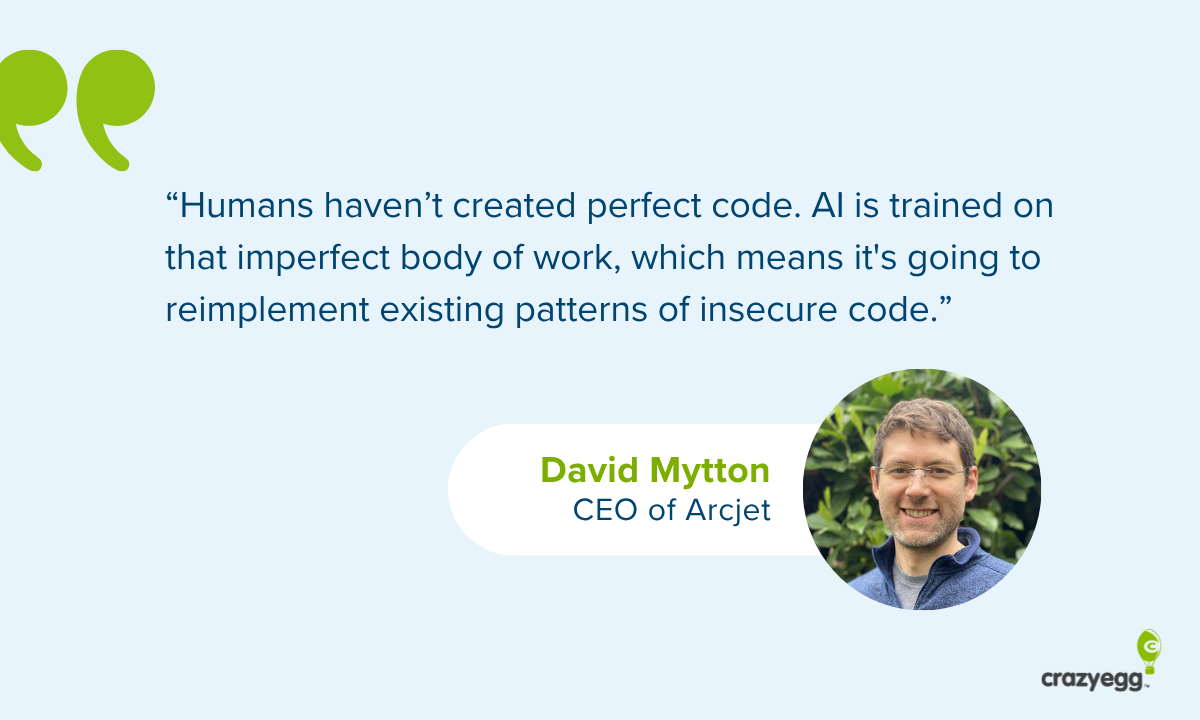

David Mytton, CEO of the security platform Arcjet and a researcher at the University of Oxford, suggests that the traditional software development life cycle (SDLC) is being hollowed out. Traditionally, development consists of three phases: upfront planning, middle implementation, and final testing.

"The middle implementation phase is where humans used to write the code," Mytton notes. "That phase is rapidly being taken over by AI. While this delivers unprecedented speed and lowers costs, it removes the human oversight necessary to identify edge cases."

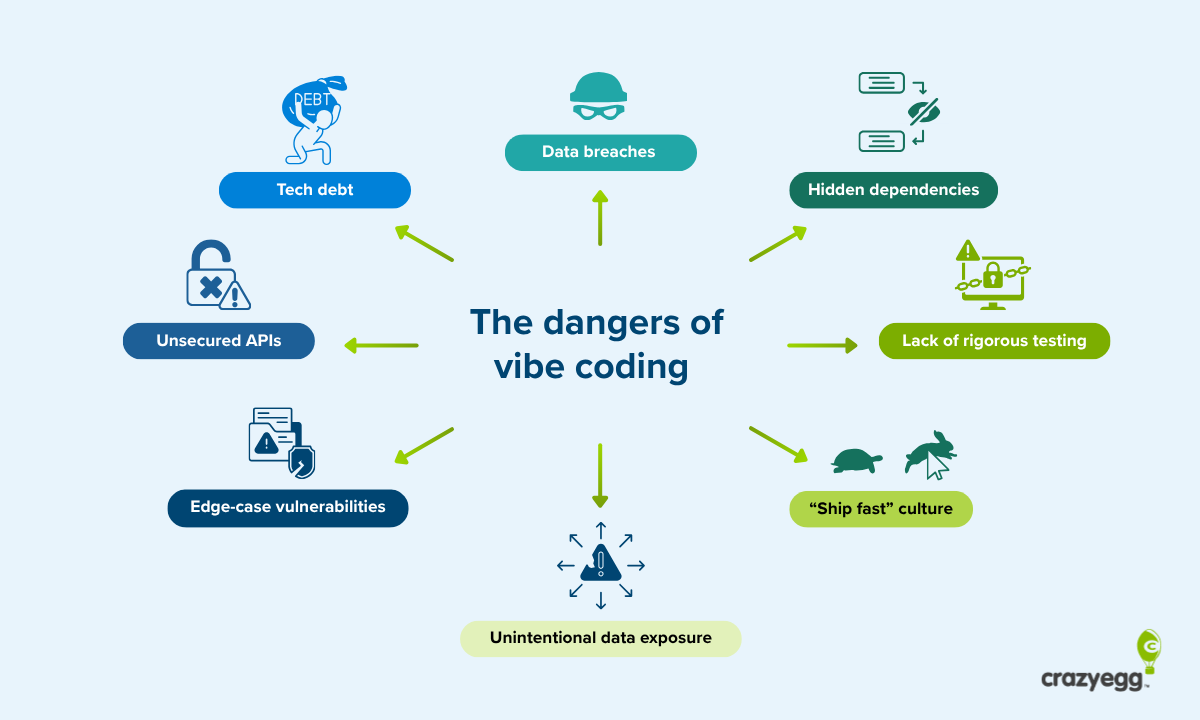

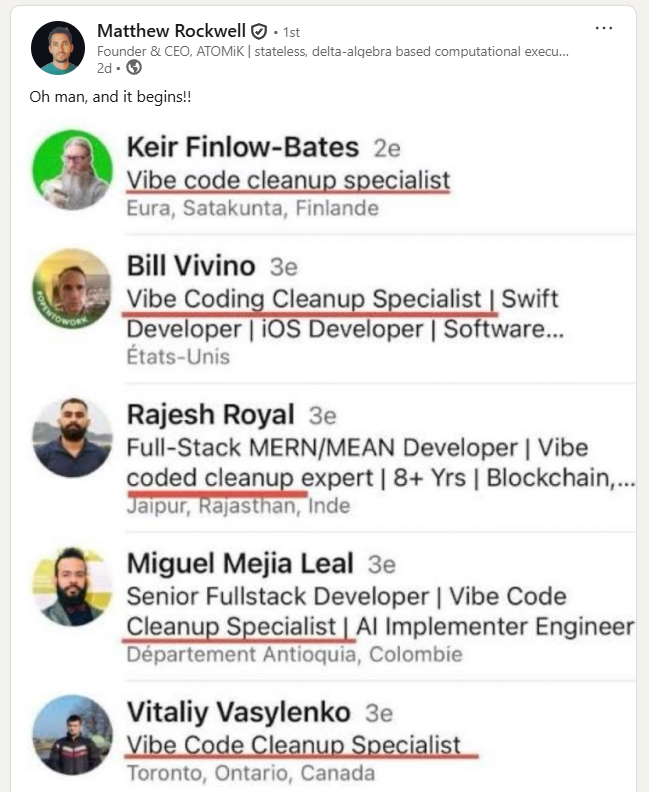

The primary danger, according to Mytton, is that AI models are trained on existing repositories that already contain human errors. Consequently, the AI often replicates insecure coding patterns. Because the "vibe coder" often lacks the technical depth to perform a rigorous security audit, these vulnerabilities are deployed directly into production environments. This has led to the emergence of the "vibe code cleanup specialist," a new professional role dedicated to untangling the disorganized and insecure codebases produced by autonomous agents.
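SQL built by pasting user input into a query string is one well-known example of an insecure pattern that is common in public code and therefore easy for a model to reproduce (it is chosen here for illustration; the specific patterns Mytton has in mind are not named). The sketch below uses Python's standard-library `sqlite3` module and a hypothetical `users` table to contrast a query assembled with an f-string against a parameterized one.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    # Hypothetical table used only for this demonstration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_insecure(conn: sqlite3.Connection, name: str) -> list:
    # Insecure: user input becomes part of the SQL text itself.
    query = f"SELECT name, email FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized: the driver passes `name` as data, never as SQL.
    return conn.execute(
        "SELECT name, email FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()
payload = "x' OR '1'='1"
print(len(find_user_insecure(conn, payload)))  # 2: every row leaks
print(len(find_user(conn, payload)))           # 0: treated as a literal name
```

Both functions look equally plausible at a glance, which is why a reviewer without security training can easily ship the first one.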

Data Exploits and the Small Business Trap

For many small businesses, the primary risk is not a sophisticated state-sponsored hack, but rather fundamental errors in data handling. Amy Gottler, PhD, founder of eLearning Academy, warns that the ease of AI coding creates a false sense of security.

"A business owner might use AI to build a simple website in ten minutes," Gottler explains. "The danger begins when they decide to add a booking system or a customer database without understanding how those systems work."

Gottler cites examples in which AI-generated databases stored user passwords in plain text, with no hashing or encryption. In other cases, non-technical users inadvertently left "open doors" for hackers by failing to implement proper authentication. This lack of "governance" means that while the frontend of an application looks professional, the backend may be structurally unsound.
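The standard remedy for plaintext passwords is to store only a salted, deliberately slow hash and to compare hashes at login. The sketch below uses Python's standard-library `hashlib.pbkdf2_hmac`; the function names, the salt-plus-digest storage layout, and the iteration count (600,000, in line with OWASP's guidance for PBKDF2-HMAC-SHA256) are illustrative assumptions. For non-experts, a managed authentication service remains the safer route; this only illustrates the kind of protection such services provide.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # assumption: OWASP's figure for PBKDF2-HMAC-SHA256
SALT_BYTES = 16


def hash_password(password: str) -> bytes:
    # A fresh random salt per user defeats precomputed (rainbow-table) attacks.
    salt = secrets.token_bytes(SALT_BYTES)
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    # Store salt and digest together; the plaintext is never saved.
    return salt + digest


def verify_password(password: str, stored: bytes) -> bool:
    salt, expected = stored[:SALT_BYTES], stored[SALT_BYTES:]
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, expected)
```

If the database leaks, an attacker gets salts and digests rather than usable passwords, and the high iteration count makes each guess expensive.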

Furthermore, the issue of "technical debt" looms large. Technical debt refers to the long-term cost of choosing an easy, short-term solution over a better approach that takes longer. Vibe-coded apps often lack documentation and follow non-standard structures, making them nearly impossible to update or fix when they inevitably break.

The OpenClaw Crisis and Infrastructure Gaps

The risks of vibe coding were underscored by the "OpenClaw" security crisis of 2026. OpenClaw, an autonomous agent platform, let users build "skills" that could control their local systems. However, a series of bugs allowed malicious actors to hijack browser connections and take control of users' local instances.

Matthew Rockwell, founder of the hardware architecture firm ATOMiK, points to "unsecured two-way gateways" as a recurring flaw in vibe-coded applications. Many AI-generated APIs—interfaces that allow different software programs to communicate—are built with bidirectional access that the user does not fully understand.

"Vibe coders often operate under the impression that their data movement is one-way," Rockwell says. "The reality is that they are often creating exposed environments where private information is visible to anyone who knows how to look for it. They are essentially presenting customer data on a silver platter."
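One way to make a "one-way" data flow real rather than assumed is to have the API refuse everything it was not explicitly built to do, and to return only the fields meant to be public. The framework-free Python sketch below illustrates both ideas; the route table, `handle_request`, and the customer fields are hypothetical, and a production service would use its web framework's own routing and serialization.

```python
import json

# Hypothetical backend data; only some fields are meant to be public.
CUSTOMERS = {
    "42": {"name": "Ada", "city": "London", "email": "ada@example.com", "card_last4": "1234"},
}
PUBLIC_FIELDS = ("name", "city")


def read_customer(customer_id: str) -> tuple[int, str]:
    customer = CUSTOMERS.get(customer_id)
    if customer is None:
        return 404, json.dumps({"error": "not found"})
    # Copy only allowlisted fields instead of serializing the whole record.
    public_view = {field: customer[field] for field in PUBLIC_FIELDS}
    return 200, json.dumps(public_view)


# Route -> allowed methods. Only reads are wired up, so the data flow is
# one-way by construction rather than by assumption.
ROUTES = {"/customers": {"GET": read_customer}}


def handle_request(method: str, route: str, customer_id: str) -> tuple[int, str]:
    methods = ROUTES.get(route)
    if methods is None:
        return 404, json.dumps({"error": "unknown route"})
    handler = methods.get(method.upper())
    if handler is None:
        # Deny by default: POST, PUT, DELETE, etc. were never enabled.
        return 405, json.dumps({"error": "method not allowed"})
    return handler(customer_id)
```

A `GET` returns only the name and city, while a `DELETE` or `POST` to the same route gets a 405 response; the email address and card digits never leave the server.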

Rockwell argues that the industry must shift toward "infrastructure engineering," where professionals focus on creating secure "sandboxes" for AI to operate in, rather than allowing AI to write core security logic from scratch.
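A full sandbox for an AI agent usually relies on operating-system isolation such as containers or virtual machines, which is beyond a short example. The Python sketch below shows only one inner layer under that assumption: the agent's proposed shell commands pass through a hypothetical allowlist (`ALLOWED_PROGRAMS`) and run without a shell, from a fixed starting directory, with a timeout.

```python
import shlex
import subprocess

# Hypothetical allowlist of programs the agent may invoke.
ALLOWED_PROGRAMS = {"ls", "cat", "pytest"}
# Characters that only have meaning to a shell; reject them outright.
SHELL_METACHARACTERS = set(";|&$`<>")


def check_command(command: str) -> list[str]:
    if any(ch in SHELL_METACHARACTERS for ch in command):
        raise PermissionError("shell metacharacters are not allowed")
    argv = shlex.split(command)
    if not argv or argv[0] not in ALLOWED_PROGRAMS:
        name = argv[0] if argv else "<empty>"
        raise PermissionError(f"program not allowed: {name}")
    return argv


def run_sandboxed(
    command: str, workdir: str, timeout_s: float = 30.0
) -> subprocess.CompletedProcess:
    argv = check_command(command)
    # Passing a list without shell=True means no pipes, redirects, or
    # chaining. cwd only sets the starting directory; it does NOT stop
    # `cat ../secret` from reading elsewhere, which is why OS-level
    # isolation is still needed.
    return subprocess.run(
        argv, cwd=workdir, capture_output=True, text=True, timeout=timeout_s
    )
```

The design choice is deny-by-default: the agent can do only what is listed, rather than everything except what someone thought to forbid.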

Analysis of Market Implications

The proliferation of vibe coding is likely to trigger several shifts in the technology market:

- Increased Cyber Insurance Premiums: As data breaches linked to AI-generated code rise, insurance providers are expected to mandate stricter audits of codebases before providing coverage.

- The Rise of Automated Guardrails: Companies like Arcjet and Anthropic are already releasing built-in security tools. The future of development will likely rely on "security-as-code," where automated systems block insecure implementations in real time.

- A Revaluation of Senior Talent: While AI can handle the "middle phase" of coding, the demand for senior engineers who can perform high-level architectural planning and "red-team" security testing is expected to reach an all-time high.

- Regulatory Scrutiny: With AI-generated apps handling sensitive medical and financial data, regulatory bodies such as the FTC and EU data protection authorities may introduce requirements for human-in-the-loop verification for specific industries.
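The "security-as-code" idea in the second item above can be illustrated with a small automated check that fails a build when it finds risky patterns. The sketch below uses Python's standard-library `ast` module to enforce two hypothetical rules: no calls to `eval`/`exec`, and no SQL passed to an `execute` method as an f-string, a `+`/`%` expression, or a `.format()` call. Commercial guardrails use far larger rule sets; these rules are assumptions chosen for illustration.

```python
import ast
from typing import Optional

# Hypothetical rule set; real tools ship hundreds of rules.
DANGEROUS_CALLS = {"eval", "exec"}


def _call_name(call: ast.Call) -> Optional[str]:
    # Covers bare calls like eval(x) and method calls like cursor.execute(q).
    if isinstance(call.func, ast.Name):
        return call.func.id
    if isinstance(call.func, ast.Attribute):
        return call.func.attr
    return None


def _is_dynamic_string(node: ast.expr) -> bool:
    # f-strings, "..." + x, and "..." % x all assemble text at runtime.
    if isinstance(node, (ast.JoinedStr, ast.BinOp)):
        return True
    # "...".format(x) does the same via a method call.
    return (
        isinstance(node, ast.Call)
        and isinstance(node.func, ast.Attribute)
        and node.func.attr == "format"
    )


def find_insecure_patterns(source: str) -> list[tuple[int, str]]:
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = _call_name(node)
        if name in DANGEROUS_CALLS:
            findings.append((node.lineno, f"call to {name}()"))
        elif name == "execute" and node.args and _is_dynamic_string(node.args[0]):
            findings.append((node.lineno, "SQL built from a dynamic string"))
    # ast.walk is breadth-first, so sort to report findings in source order.
    return sorted(findings)
```

For example, `find_insecure_patterns("eval(x)")` returns `[(1, 'call to eval()')]`. In a CI pipeline, any non-empty result would fail the build, so the insecure implementation is blocked before it reaches production rather than found after a breach.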

Strategic Recommendations for Safe Vibe Coding

To mitigate the inherent risks of AI-driven development, experts suggest a multi-layered approach to security:

- Define the Blast Radius: Businesses should categorize their code based on the potential damage of a breach. A marketing landing page has a small blast radius, while a payment gateway has a massive one. High-risk areas should never be left solely to AI.

- Avoid Custom Security Logic: Non-experts should never ask AI to write original encryption or authentication code. Instead, they should use established third-party services like Auth0, Stripe, or AWS, which have multi-billion-dollar security infrastructures.

- Establish Communication Channels: For organizations with internal IT teams, there must be a formal review process for any AI-generated frontend changes to ensure they do not break backend dependencies.

- Invest in User Education: Security training is no longer just for developers. Anyone using an AI agent to modify a company’s digital presence must understand the basics of data privacy and the limitations of LLMs.
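The "blast radius" recommendation can be made concrete as a rule that maps changed files to risk tiers and routes high-risk changes to a human reviewer. The Python sketch below assumes a hypothetical repository layout (`payments/`, `auth/`, `api/`, `marketing/`) and hypothetical tier names; unknown paths deliberately default to the highest tier so that a new, unclassified directory fails closed.

```python
from fnmatch import fnmatchcase

# Hypothetical rules, checked in order; the first matching pattern wins.
# In fnmatch, "*" also matches "/", so "payments/*" covers subdirectories.
RISK_RULES = [
    ("payments/*", "high"),
    ("auth/*", "high"),
    ("api/*", "medium"),
    ("marketing/*", "low"),
]


def risk_tier(path: str) -> str:
    for pattern, tier in RISK_RULES:
        if fnmatchcase(path, pattern):
            return tier
    # Unclassified code is treated as high risk until someone classifies it.
    return "high"


def paths_needing_human_review(changed_paths: list[str]) -> list[str]:
    # Returns the changed files that must get human sign-off before merge.
    return [p for p in changed_paths if risk_tier(p) == "high"]
```

For a change touching `marketing/landing.html`, `payments/checkout.py`, and `scripts/new_tool.py`, only the landing page could merge on AI output alone; the payment code and the unclassified script would both be routed to a person.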

In conclusion, while vibe coding represents a monumental leap in human productivity, it currently lacks the rigorous checks and balances of traditional engineering. As the technology matures, the "vibe" must be tempered by a renewed focus on infrastructure, testing, and professional oversight. The goal for the modern enterprise is not to reject AI, but to build a framework where the speed of the machine is safely guided by the expertise of the human.